Don’t Miss these 5 Data Science GitHub Projects and Reddit Discussions (April Edition)

Introduction

Data science is an ever-evolving field. As data scientists, we need to have our finger on the pulse of the latest algorithms and frameworks coming up in the community.

I’ve found GitHub to be an excellent source of knowledge in that regard. The platform helps me stay current with trending data science topics. I can also look up and download code from leading data scientists and companies – what more could a data scientist ask for? So, if you’re a:

- Data science enthusiast

- Machine learning practitioner

- Data science manager

- Deep learning expert

or any mix of the above, this article is for you. I’ve taken away the pain of having to browse through multiple repositories by picking the top data science ones here. This month’s collection has a heavy emphasis on Natural Language Processing (NLP).

I have also picked out five in-depth data science-related Reddit discussions for you. Picking the brains of data science experts is a rare opportunity, but Reddit allows us to dive into their thought process. I strongly recommend going through these discussions to improve your knowledge and industry understanding.


Let’s get into it!

Data Science GitHub Repositories

Sparse Transformer by OpenAI – A Superb NLP Framework

What a year this is turning out to be for OpenAI’s NLP research. They captured our attention with the release of GPT-2 in February (more on that later) and have now come up with an NLP framework that builds on top of the popular Transformer architecture.

The Sparse Transformer is a deep neural network that predicts the next item in a sequence. This includes text, images and even audio! The initial results have been record-breaking. The algorithm uses the attention mechanism (quite popular in deep learning) to extract patterns from sequences 30 times longer than what was previously possible.

Got your attention, didn’t it? This repository contains the sparse attention components of this framework. You can clone or download the repository and start working on an NLP sequence prediction problem right now. Just make sure you use Google Colab and the free GPU they offer.
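To get a feel for what "sparse attention" means before opening the repository, here is a minimal pure-Python sketch of a strided attention pattern: each position attends to a short local window plus every `stride`-th earlier position. The function names and toy sizes are illustrative assumptions, not the API of OpenAI's repository.

```python
def strided_attention_mask(seq_len, stride):
    """Build a causal mask where mask[i][j] is True if position i may attend to j.

    Illustrative sketch only: position i sees its `stride` most recent
    positions (local window) plus every earlier position whose distance
    from i is a multiple of `stride`.
    """
    mask = [[False] * seq_len for _ in range(seq_len)]
    for i in range(seq_len):
        for j in range(i + 1):  # causal: never look at future positions
            is_local = (i - j) < stride
            is_strided = (i - j) % stride == 0
            mask[i][j] = is_local or is_strided
    return mask


def count_connections(mask):
    """Count how many (query, key) pairs the mask allows."""
    return sum(sum(row) for row in mask)


if __name__ == "__main__":
    n, stride = 16, 4
    mask = strided_attention_mask(n, stride)
    dense = n * (n + 1) // 2  # connections in full causal attention
    print("dense connections: ", dense)
    print("sparse connections:", count_connections(mask))
    for row in mask:
        print("".join("x" if allowed else "." for allowed in row))
```

Because each position keeps only a handful of connections instead of all earlier ones, the cost grows much more slowly with sequence length, which is what makes far longer sequences practical.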


OpenAI’s GPT-2 in a Few Lines of Code

Ah yes. OpenAI’s GPT-2. I haven’t seen such hype around a data science release before. They only released a much smaller version of their original model (owing to fear of malicious misuse), but even that mini version has shown us how powerful GPT-2 is for NLP tasks.

There have been many attempts to replicate GPT-2’s approach, but most of them are too complex or long-winded. That’s why this repository caught my eye. It’s a simple Python package that lets us fine-tune GPT-2’s text-generating model on any new text and then produce samples with the gpt2.generate() command.

You can install gpt-2-simple directly via pip (you’ll also need TensorFlow installed):

pip3 install gpt_2_simple
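Once the package is installed, a typical session looks roughly like the sketch below. It follows the usage pattern shown in the package's README as I understand it; the wrapper function, the file path argument, the step count and the default model name are assumptions for illustration (the name of the small model has differed between package versions, for example "117M" in early releases and "124M" later), so check the README for your installed version.

```python
import os


def finetune_and_generate(text_path, model_name="124M", steps=100):
    """Fine-tune a small GPT-2 model on a plain-text file and return samples.

    Hypothetical wrapper for illustration; `text_path`, `model_name` and
    `steps` are placeholder choices, not recommendations.
    """
    # Imported lazily so this file can be loaded without TensorFlow installed.
    import gpt_2_simple as gpt2

    # Fetch the pretrained weights once; they are stored under ./models/.
    if not os.path.isdir(os.path.join("models", model_name)):
        gpt2.download_gpt2(model_name=model_name)

    sess = gpt2.start_tf_sess()

    # Continue training the pretrained model on your own text.
    gpt2.finetune(sess, text_path, model_name=model_name, steps=steps)

    # Return the generated samples as a list of strings instead of printing.
    return gpt2.generate(sess, return_as_list=True)
```

Calling `finetune_and_generate("my_corpus.txt")` downloads the model and trains it, so run it on a machine with a GPU (or on Google Colab) and expect it to take a while.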

NeuronBlocks – Impressive NLP Deep Learning Toolkit by Microsoft

Another NLP entry this month. It just goes to show the mind-boggling pace at which advancements in NLP are happening right now.

NeuronBlocks is an NLP toolkit developed by Microsoft that helps data science teams build end-to-end pipelines for neural networks. The idea behind NeuronBlocks is to reduce the cost it takes to build deep neural network models for NLP tasks.

There are two major components that make up NeuronBlocks (use the image below as a reference):

- BlockZoo: This contains popular neural network components

- ModelZoo: This is a suite of NLP models for performing various tasks

You know how costly applying deep learning solutions can get. So make sure you check out NeuronBlocks and see if it works for you or your organization. The full paper describing NeuronBlocks can be read here.

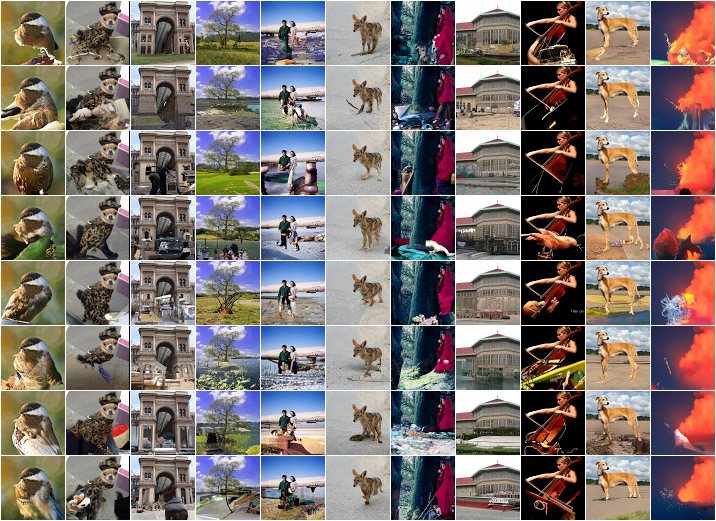

CenterNet – Computer Vision using Center Point Detection

I really like this approach to object detection. Generally, detection algorithms identify objects as axis-aligned boxes in the given image. These methods enumerate many candidate object locations and classify each one. This sounds fair – that’s how everyone does it, right?

Well, this approach, called CenterNet, models an object as a single point: the center of its bounding box, found using keypoint estimation. Other properties, such as the object’s size, are then regressed from that center point. The authors report that CenterNet is faster and more accurate than comparable bounding box detectors.
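The center-point idea can be sketched in a few lines of plain Python: treat the network output as a heatmap, keep the cells that are local maxima above a score threshold, and turn each surviving center plus a predicted size into a box. The heatmap format, the threshold value and both function names are assumptions made for illustration; this is not the code from the CenterNet repository.

```python
def find_center_peaks(heatmap, threshold=0.5):
    """Return (row, col, score) for cells that beat the threshold and are
    at least as large as all of their (up to 8) neighbours.

    Illustrative sketch: `heatmap` is a list of equal-length lists of floats.
    """
    rows, cols = len(heatmap), len(heatmap[0])
    peaks = []
    for r in range(rows):
        for c in range(cols):
            score = heatmap[r][c]
            if score < threshold:
                continue
            neighbours = [
                heatmap[rr][cc]
                for rr in range(max(0, r - 1), min(rows, r + 2))
                for cc in range(max(0, c - 1), min(cols, c + 2))
                if (rr, cc) != (r, c)
            ]
            if all(score >= n for n in neighbours):
                peaks.append((r, c, score))
    # Highest-scoring centers first.
    return sorted(peaks, key=lambda p: p[2], reverse=True)


def center_to_box(row, col, height, width):
    """Convert a center point and a predicted size into
    (x_min, y_min, x_max, y_max)."""
    return (col - width / 2, row - height / 2,
            col + width / 2, row + height / 2)
```

Each detected object is just one peak, so there is no need to score and then prune many overlapping candidate boxes.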

Try it out next time you’re working on an object detection problem – you’ll love it! You can read the paper explaining CenterNet here.

BentoML – Toolkit for Deploying Models!

Understanding and learning how to deploy machine learning models is a MUST for any data scientist. In fact, more and more recruiters are starting to ask deployment-related questions during data scientist interviews. If you don’t know what model deployment is, you need to brush up right now.

BentoML is a Python library that helps you package and deploy machine learning models. You can take your model from your notebook to a production API service in 5 minutes (approximately!). The BentoML service can easily be deployed on your favorite platforms, such as Kubernetes, Docker, Airflow, AWS, Azure, etc.

It’s a flexible library. It supports popular frameworks like TensorFlow, PyTorch, scikit-learn, XGBoost, etc. You can even deploy custom frameworks using BentoML. Sounds like too good an opportunity to pass up!

This GitHub repository contains the code to get you started, plus installation instructions and a couple of examples.

Data Science Reddit Discussions

What Role do Tools like Tableau and Alteryx Play in a Data Science Organization?

Are you working in a Business Intelligence/MIS/Reporting role? Do you often find yourself working with drag-and-drop tools like Tableau, Alteryx and Power BI? If you’re reading this article, I’m assuming you are interested in transitioning to data science.

This discussion thread, started by a slightly frustrated data analyst, dives into the role a data analyst can play in a data science project. The discussion focuses on the skills a data analyst/BI professional needs to pick up to stand any chance of switching to data science.

Hint: Learning how to code well is the #1 piece of advice.

Also, check out our comprehensive and example-filled article on the 11 steps you should follow to transition into data science.

Lessons Learned During Move from Master’s Degree to the Industry

The biggest gripe data science hiring managers have is the lack of industry experience candidates bring. Bridging the gap between academia and industry has proven elusive for most data science enthusiasts. MOOCs, books, articles – all of these are excellent sources of knowledge – but they don’t provide industry exposure.

This discussion, starting with the author’s post, is pure gold for us. I like that the author has posted an exhaustive description of his interview experience. The comments include on-point questions that probe for more information on this transition.

When ML and Data Science are the Death of a Good Company: A Cautionary Tale

The consensus these days is that you can use machine learning and artificial intelligence to improve your organization’s bottom line. That’s what management feeds leadership, and that brings in investment.

But what happens when management doesn’t know how to build AI and ML solutions? And doesn’t invest in first setting up the infrastructure before even thinking about machine learning? That part is often overlooked during discussions and is often fatal to a company.

This discussion is about how a company, chugging along using older programming languages and tools, suddenly decides to replace its old architecture with flashy data science scripts and tools. A cautionary tale and one you should pay heed to as you enter this industry.

Have we hit the Limits of Deep Reinforcement Learning?

I’ve seen this question being asked on multiple forums recently. It’s an understandable thought. Apart from a few breakthroughs by a tech giant every few months, we haven’t seen a lot of progress in deep reinforcement learning.

But is this true? Is this really the limit? We’ve barely started to scratch the surface, so are we already done? Most of us believe there’s a lot more to come. This discussion strikes the right balance between the technical aspects and the overall grand scheme of things.

You can apply the lessons learned from this discussion to deep learning as well. You’ll see the similarities when the talk turns to deep neural networks.

What do Data Scientists do on a Day-to-Day Basis?

Ever wondered what a data scientist spends most of their day on? Most aspiring professionals think they’ll be building model after model. That’s a trap you need to avoid at any cost.

I like the first comment in this discussion. The person equates being a data scientist to being a lawyer. That is, there are different kinds of roles depending on which domain you’re in. So there’s no straight answer to this question.

The other comments offer a nice perspective on what data scientists are doing these days. In short, there’s a broad range of tasks that will depend entirely on what kind of project you have and the size of your team. There’s some well-intentioned sarcasm as well – I always enjoy that!

End Notes

I loved putting together this month’s edition given the sheer scope of topics we have covered. Where computer vision techniques have hit a ceiling (relatively speaking), NLP continues to break through barricades. Sparse Transformer by OpenAI seems like a great NLP project to try out next.

What did you think of this month’s collection? Any data science libraries or discussions I missed out on? Hit me up in the comments section below and let’s discuss!