How Do Part-of-Speech Tagging, Dependency Parsing, and Constituency Parsing Aid in Understanding Text Data?

Overview

- Learn about Part-of-Speech (POS) Tagging

- Understand Dependency Parsing and Constituency Parsing

Introduction

Knowledge of languages is the doorway to wisdom.

– Roger Bacon

I was amazed that Roger Bacon said this in the 13th century, and it still holds true, doesn’t it? I am sure you will all agree with me.

Today, the way we understand languages has changed a lot since the 13th century. We now refer to it as linguistics and natural language processing. But its importance hasn’t diminished; instead, it has increased tremendously. Do you know why? Because its applications have skyrocketed, and one of them is the reason you landed on this article.

Each of these applications involves complex NLP techniques, and to understand them, one must have a good grasp of the basics of NLP. Therefore, before tackling complex topics, it is important to get the fundamentals right.

That’s why I have created this article, in which I cover some basic concepts of NLP: Part-of-Speech (POS) tagging, dependency parsing, and constituency parsing. We will understand these concepts and also implement them in Python. So let’s begin!


Part-of-Speech (POS) Tagging

Part-of-Speech (POS) tagging is a natural language processing technique that involves assigning specific grammatical categories or labels (such as nouns, verbs, adjectives, adverbs, pronouns, etc.) to individual words within a sentence. This process provides insights into the syntactic structure of the text, aiding in understanding word relationships, disambiguating word meanings, and facilitating various linguistic and computational analyses of textual data.

In our school days, all of us studied the parts of speech, which include nouns, pronouns, adjectives, verbs, etc. Words belonging to various parts of speech form a sentence, and knowing the part of speech of each word in a sentence is important for understanding it.

That’s why the concept of POS tagging was created. I’m sure that by now, you have already guessed what POS tagging is. Still, allow me to explain it.

Part-of-Speech (POS) tagging is the process of assigning labels, known as POS tags, to the words in a sentence; each tag tells us the part of speech of its word.

Broadly, there are two types of POS tags:

1. Universal POS Tags:

These tags are used in Universal Dependencies (UD, version 2), a project that is developing cross-linguistically consistent treebank annotation for many languages. These tags are based on the type of word, e.g., NOUN (common noun), ADJ (adjective), ADV (adverb).

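The 17 universal POS tags are ADJ (adjective), ADP (adposition), ADV (adverb), AUX (auxiliary), CCONJ (coordinating conjunction), DET (determiner), INTJ (interjection), NOUN (noun), NUM (numeral), PART (particle), PRON (pronoun), PROPN (proper noun), PUNCT (punctuation), SCONJ (subordinating conjunction), SYM (symbol), VERB (verb), and X (other).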

You can read more about each one of them here.

2. Detailed POS Tags:

These tags result from dividing the universal POS tags into finer-grained categories. For example, English uses NN for singular common nouns and NNS for plural common nouns, whereas the universal tag set uses NOUN for both. These tags are language-specific. You can take a look at the complete list here.
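spaCy ships a glossary that describes both levels of tags, which makes the universal/detailed split easy to inspect. A small sketch, assuming only that spaCy is installed (no trained model is needed); the tags listed are arbitrary examples:

```python
import spacy

# spacy.explain() looks up a tag or label in spaCy's built-in glossary;
# it needs only the spaCy package, not a trained pipeline.
universal_tags = ["NOUN", "PROPN", "VERB", "ADJ"]
detailed_tags = ["NN", "NNS", "NNP", "VBD", "JJ"]

for tag in universal_tags + detailed_tags:
    # explain() returns None for terms that are not in the glossary
    print(f"{tag:6} {spacy.explain(tag)}")
```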

Now you know what POS tags are and what POS tagging is, so let’s write Python code for POS tagging sentences. For this purpose, I have used spaCy here, but other libraries like NLTK and Stanza can also be used to do the same.

In the above code sample, I have loaded spaCy’s en_core_web_sm model and used it to get the POS tags. You can see that the pos_ attribute returns the universal POS tags, and tag_ returns the detailed POS tags for the words in the sentence.

Dependency Parsing

Dependency parsing is the process of analyzing the grammatical structure of a sentence based on the dependencies between the words in a sentence.

In dependency parsing, various tags represent the relationship between two words in a sentence. These tags are the dependency tags. For example, in the phrase ‘rainy weather,’ the word rainy modifies the meaning of the noun weather. Therefore, a dependency exists from weather to rainy, in which weather acts as the head and rainy acts as the dependent, or child. This dependency is represented by the amod tag, which stands for adjectival modifier.

Similarly, there are many dependencies among the words in a sentence, but note that a dependency involves only two words, one of which acts as the head and the other as the child. As of now, there are 37 universal dependency relations used in Universal Dependencies (version 2). You can take a look at all of them here. Apart from these, there also exist many language-specific tags. Note that spaCy’s English models use their own label scheme (for example, dobj and ROOT), which overlaps with, but is not identical to, the UD relations.

Now let’s use spaCy to find the dependencies in a sentence.

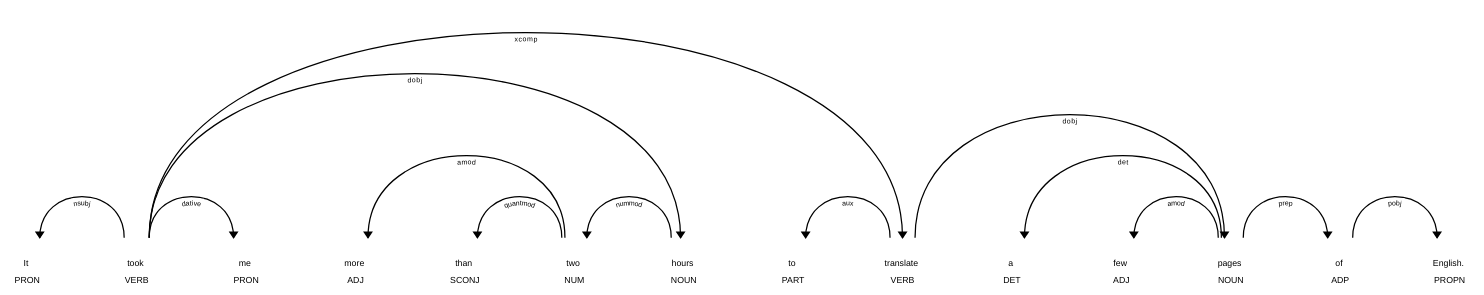

In the above code example, the dep_ attribute returns the dependency tag of a word, and head.text returns its head word. As you may have noticed in the above image, the word ‘took’ has the dependency tag ROOT. This tag is assigned to the word that acts as the head of many words in a sentence but is not a child of any other word. Generally, it is the main verb of the sentence, as ‘took’ is in this case.

Now you know what dependency tags are and what head, child, and root words are. But doesn’t parsing mean generating a parse tree?

Yes, we’re generating the tree here, but we’re not visualizing it. The tree generated by dependency parsing is known as a dependency tree. There are multiple ways of visualizing it, but for the sake of simplicity, we’ll use displaCy, spaCy’s built-in visualizer for dependency parses.

In the above image, the arrows represent the dependencies between words: the word at the arrowhead is the child, and the word at the tail of the arrow is the head. The root word can act as the head of multiple words in a sentence but is not a child of any other word. You can see above that the word ‘took’ has multiple outgoing arrows but no incoming ones; therefore, it is the root word. One interesting property of the root word is that if you trace the dependencies from child to head, you always reach the root word, no matter which word you start from.
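The root-tracing property can be checked without any library. This is a plain-Python sketch over a hand-written parse of “She quickly reads a book” (the tokens, head indices, and the helper path_to_root are illustrative, not parser output); it stores heads the way spaCy does, with the root pointing to itself:

```python
# Hand-written dependency parse (illustration only, not parser output).
# heads[i] is the index of token i's head; the root is its own head.
tokens = ["She", "quickly", "reads", "a", "book"]
heads = [2, 2, 2, 4, 2]  # She->reads, quickly->reads, reads=root, a->book, book->reads


def path_to_root(i, heads):
    """Follow head links from token i until reaching the token that is its own head."""
    path = [i]
    while heads[i] != i:
        i = heads[i]
        path.append(i)
    return path


for i, word in enumerate(tokens):
    print(" -> ".join(tokens[j] for j in path_to_root(i, heads)))
```

Every printed path ends at ‘reads’, the root. The loop is guaranteed to stop because a well-formed dependency tree contains no cycles.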

Now that you know about dependency parsing, let’s learn about another type of parsing known as constituency parsing.

Constituency Parsing

Constituency parsing is the process of analyzing a sentence by breaking it down into sub-phrases, also known as constituents. These sub-phrases belong to specific grammatical categories, such as NP (noun phrase) and VP (verb phrase).

Let’s understand it with the help of an example. Suppose I take the same sentence I used in the previous examples, i.e., “It took me more than two hours to translate a few pages of English.”, and perform constituency parsing on it. The constituency parse tree for this sentence is then given below.

In the above tree, the words of the sentence are written in purple, and the POS tags are written in red. Everything else, written in black, represents the constituents. You can clearly see how the whole sentence is divided into sub-phrases until only the words remain at the terminals. There are also different tags for denoting constituents, such as:

- VP for verb phrase

- NP for noun phrase

These are the constituent tags. You can read about different constituent tags here.

Now that you know what constituency parsing is, it’s time to code in Python. spaCy does not provide an official API for constituency parsing, so we will use the Berkeley Neural Parser, a Python implementation of the parsers described in “Constituency Parsing with a Self-Attentive Encoder” (ACL 2018).

You can also use the Stanford parser through Stanza or NLTK for this purpose, but here I have used the Berkeley Neural Parser. To use it, we first need to install it, which you can do by running the following command:

!pip install benepar

Then you have to download the benepar_en2 model.

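You might have noticed that I am using TensorFlow 1.x here; that is because the benepar version used in this article (the one that provides the benepar_en2 model) does not support TensorFlow 2.0. Newer benepar releases (0.2 and later) run on PyTorch instead and use the benepar_en3 model. Now, it’s time to do constituency parsing.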

Here, the _.parse_string extension attribute returns the parse tree as a bracketed string.
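Because the parse string is just bracketed text, it can be processed with ordinary string handling. A plain-Python sketch (the function phrase_constituents and the example tree are hypothetical illustrations, not part of benepar) that lists each phrase-level constituent together with the words it covers:

```python
import re


def phrase_constituents(parse):
    """Return (label, words) for each phrase-level node in a bracketed parse.

    Pre-terminal nodes such as (NN book) hold POS tags, so they are skipped.
    """
    tokens = re.findall(r"\(|\)|[^\s()]+", parse)
    stack = []    # open nodes: [label, words, has_child_node]
    phrases = []  # closed phrase nodes, in post-order
    i = 0
    while i < len(tokens):
        tok = tokens[i]
        if tok == "(":
            stack.append([tokens[i + 1], [], False])  # the label follows "("
            i += 2
            continue
        if tok == ")":
            label, words, has_child_node = stack.pop()
            if has_child_node:
                phrases.append((label, words))
            if stack:
                stack[-1][1].extend(words)  # hand the words up to the parent
                stack[-1][2] = True
        else:
            stack[-1][1].append(tok)  # a leaf word
        i += 1
    return phrases


# Hand-written example in the same bracketed format (not real parser output)
parse = "(S (NP (PRP She)) (VP (VBZ reads) (NP (DT a) (NN book))))"
for label, words in phrase_constituents(parse):
    print(label, " ".join(words))
```

The same function can be applied to the string returned by sent._.parse_string.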

End Notes

Now you know what POS tagging, dependency parsing, and constituency parsing are and how they help you understand text data: POS tags tell you the part of speech of the words in a sentence, dependency parsing tells you about the dependencies between the words in a sentence, and constituency parsing tells you about the sub-phrases, or constituents, of a sentence. You are now ready to move on to more complex parts of NLP. As your next steps, you can read the following articles on information extraction.

- How Search Engines like Google Retrieve Results: Introduction to Information Extraction using Python and spaCy

- Hands-on NLP Project: A Comprehensive Guide to Information Extraction using Python

In these articles, you’ll learn how to use POS tags and dependency tags to extract information from a corpus. Also, if you want to learn about spaCy, you can read this article: spaCy Tutorial to Learn and Master Natural Language Processing (NLP). Apart from these, if you want to learn natural language processing through a course, I highly recommend the following one, which includes everything from projects to one-on-one mentorship:

Natural Language Processing using Python

Frequently Asked Questions

A. Part-of-Speech (POS) tagging is a preprocessing step in natural language processing (NLP) that involves assigning a grammatical category or part-of-speech label (such as noun, verb, adjective, etc.) to each word in a sentence. It serves several purposes as a preprocessing step:

1. Syntactic Analysis: POS tagging helps in understanding the grammatical structure of a sentence. It provides information about the roles of words in forming phrases and sentences, aiding in syntactic analysis.

2. Feature Extraction: POS tags can be useful as features for various NLP tasks, such as text classification, named entity recognition, and machine translation. Different POS tags often convey different semantic or contextual information about words.

3. Disambiguation: Many words in natural language have multiple possible interpretations (polysemy). POS tagging helps disambiguate word senses based on their grammatical context.

4. Language Modeling: POS tagging is often used as a building block for language models, providing information about the relationships between words and their likely syntactic roles.

5. Rule-Based Processing: POS tags can be used in rule-based processing to identify patterns and grammatical structures in text.

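6. Lemmatization and Stemming: POS information is valuable for lemmatization, where words are reduced to their base forms, because the correct base form can depend on the part of speech; for example, “saw” becomes “see” as a verb but stays “saw” as a noun.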

7. Parsing and Grammar Checking: POS tagging aids in syntactic parsing and grammar checking by providing information about how words function within sentences.

In summary, POS tagging is a fundamental preprocessing step that helps enhance the accuracy and effectiveness of various NLP tasks by providing insights into the grammatical and syntactic structure of textual data.

A. In the sentence “She quickly reads a book,” POS tagging assigns tags like “PRON” (pronoun) to “She,” “ADV” (adverb) to “quickly,” “VERB” to “reads,” “DET” (determiner) to “a,” and “NOUN” to “book.” This tagging clarifies the roles and grammatical functions of words, aiding syntactic and semantic analysis in natural language processing tasks.

A. In sentiment analysis, POS tagging helps identify the words that carry the emotional tone of a sentence. Labeling words with their parts of speech brings out nuances that influence sentiment; for example, adjectives and adverbs often express opinions.

A. In text classification, POS tagging assigns parts of speech (like nouns, verbs, etc.) to the words in a text. These tags add linguistic context that can serve as extra features, which can improve how accurately algorithms understand and categorize text content.

If you found this article informative, share it with your friends. You can also post your queries in the comments below.