Top 4 Sentence Embedding Techniques using Python

Introduction

Humans have an unmatched ability to understand the nuances of language. The human brain easily picks up humor, sarcasm, negative sentiment, and much more in a given sentence. The only requirement is that we know the language the sentence is written in.

For instance, if someone commented on my article in Japanese, I certainly wouldn’t understand what the person was trying to say. This is the general rule, isn’t it? For effective communication, we need to interact with listeners in a language they understand best.

For a machine to process and understand any kind of text, we must represent the text in a form the machine can work with. What language do you think machines understand best? Yes, it is that of numbers. A machine can only work with numbers, no matter what data we provide to it: video, audio, image, or text. That is why representing text as numbers, or embedding text, as it is called, is one of the most actively researched topics.

In this article, I will cover the top 4 sentence embedding techniques with Python code. I limit the scope to an overview of each architecture and how to implement the technique in Python. As a running use case, we will find the sentences most similar to a given sentence and see how each technique handles it. I will begin with an overview of word and sentence embeddings.

If you want to start your journey in learning NLP, I recommend you go through this free course- Introduction to Natural Language Processing

What is Word Embedding?

The initial embedding techniques dealt only with words. Given a set of words, you would generate an embedding for each word in the set. The simplest method was one-hot encoding: each word is represented by a vector with a 1 at that word’s own position in the vocabulary and 0 everywhere else. While this was adequate for representing words in simple text-processing tasks, it didn’t really work on more complex ones, such as finding similar words, because every pair of distinct one-hot vectors is equally far apart.

For example, if we search for a query: Best Italian restaurant in Delhi, we would like to get search results corresponding to Italian food, restaurants in Delhi and best. However, if we get a result saying: Top Italian food in Delhi, our simple method would fail to detect the similarity between ‘Best’ and ‘Top’ or between ‘food’ and ‘restaurant’.
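To make this limitation concrete, here is a minimal sketch of one-hot encoding on the two queries above. The toy vocabulary and the helper names `one_hot` and `cosine_similarity` are assumptions made for illustration; they do not come from any library.

```python
import math

# Toy vocabulary built from the two example queries (lowercased).
vocab = ["best", "top", "italian", "restaurant", "food", "in", "delhi"]

def one_hot(word, vocab):
    """Return a vector with 1 at the word's index and 0 everywhere else."""
    return [1 if w == word else 0 for w in vocab]

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0
    return dot / (norm_u * norm_v)

best = one_hot("best", vocab)
top = one_hot("top", vocab)

print(best)  # [1, 0, 0, 0, 0, 0, 0]
print(top)   # [0, 1, 0, 0, 0, 0, 0]

# Two different words never share a non-zero position, so their similarity
# is always zero, even when the words are near-synonyms.
print(cosine_similarity(best, top))   # 0.0
print(cosine_similarity(best, best))  # 1.0
```

Every pair of distinct words gets the same score of zero, so ‘Best’ looks exactly as unrelated to ‘Top’ as it does to ‘Delhi’.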

This issue gave rise to what we now call word embeddings. A word embedding does not just assign the word an identifier; it captures semantic and syntactic information about the word in a dense vector representation. Some popular word embedding techniques include Word2Vec, GloVe, ELMo, and FastText.

The underlying concept is to use information from the words adjacent to a given word. There have been path-breaking innovations in word embedding techniques, with researchers finding better ways to represent more and more information about words, and scaling these ideas to represent not only words but entire sentences and paragraphs.

I recommend you go through this article to learn more- An Intuitive Understanding of Word Embeddings: From Count Vectors to Word2Vec

What is Sentence Embedding?

In NLP, sentence embedding refers to a numeric representation of a sentence in the form of a vector of real numbers, which encodes meaningful semantic information. It enables comparisons of sentence similarity by measuring the distance or similarity between these vectors. Techniques like Universal Sentence Encoder (USE) use deep learning models trained on large corpora to generate these embeddings, which find applications in tasks like text classification, clustering, and similarity matching.

What if, instead of dealing with individual words, we could work directly with individual sentences? In the case of large text, using only words would be very tedious and we would be limited by the information we can extract from the word embeddings.

Suppose we come across a sentence like ‘I don’t like crowded places’, and a few sentences later, we read ‘However, I like one of the world’s busiest cities, New York’. How can we make the machine connect ‘crowded places’ with ‘busy cities’?

Clearly, word embedding would fall short here, and thus, we use Sentence Embedding. Sentence embedding techniques represent entire sentences and their semantic information as vectors. This helps the machine in understanding the context, intention, and other nuances in the entire text.

Sentence Embedding Models

Sentence embedding models are designed to encapsulate the semantic essence of a sentence within a fixed-length vector. Unlike traditional Bag-of-Words (BoW) representations or one-hot encoding, sentence embeddings capture context, meaning, and relationships between words. This transformation is crucial for enabling machines to grasp the subtleties of human language.

Methods of Sentence Embedding

Several methods are employed to generate sentence embeddings:

- Averaging Word Embeddings: This approach involves taking the average of word embeddings within a sentence. While simple, it may not capture complex contextual nuances.

- Pre-trained Models like BERT: Models like BERT (Bidirectional Encoder Representations from Transformers) have revolutionized sentence embeddings. BERT-based models consider the context of each word in a sentence, resulting in rich and contextually aware embeddings.

- Neural Network-Based Approaches: Skip-Thought vectors and InferSent are examples of neural network-based sentence embedding models. Skip-Thought is trained to predict the surrounding sentences, while InferSent is trained with supervision on natural language inference data; both objectives encourage the encoder to capture sentence semantics.
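The first method in the list, averaging word embeddings, is simple enough to sketch in a few lines. The 3-dimensional vectors below are invented for illustration (real Word2Vec or GloVe vectors usually have a few hundred dimensions), and `average_embedding` is a hypothetical helper name.

```python
# Hypothetical 3-dimensional word vectors, made up for this example only.
word_vectors = {
    "i": [0.1, 0.3, 0.0],
    "ate": [0.7, 0.1, 0.2],
    "dinner": [0.8, 0.2, 0.1],
}

def average_embedding(tokens, word_vectors):
    """Average the vectors of all known tokens; unknown tokens are skipped."""
    known = [word_vectors[t] for t in tokens if t in word_vectors]
    if not known:
        raise ValueError("no token has a known embedding")
    dim = len(known[0])
    return [sum(vec[i] for vec in known) / len(known) for i in range(dim)]

# "quickly" is not in the toy vocabulary, so it does not affect the result.
sentence_vec = average_embedding(["i", "ate", "dinner", "quickly"], word_vectors)
print(sentence_vec)

# Averaging ignores word order: a shuffled sentence yields the same vector
# (up to floating-point rounding).
shuffled_vec = average_embedding(["dinner", "ate", "i"], word_vectors)
print(shuffled_vec)
```

The shuffled sentence produces the same vector, which is exactly why plain averaging can miss contextual nuances.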

Noteworthy Sentence Embedding Models

- BERT (Bidirectional Encoder Representations from Transformers): BERT has set a benchmark in sentence embeddings, offering pre-trained models for various NLP tasks. Its bidirectional attention and contextual understanding make it a prominent choice.

- RoBERTa: An evolution of BERT, RoBERTa keeps the same architecture but refines the training procedure, which gave it state-of-the-art performance on multiple NLP tasks at the time of its release.

- USE (Universal Sentence Encoder): Developed by Google, USE generates embeddings for text that can be used for various applications, including cross-lingual tasks.

Sentence Embedding Libraries

Just like word embedding, sentence embedding is a very popular research area, with many interesting techniques that help machines understand our language. This article covers the following four:

- Doc2Vec

- SentenceBERT

- InferSent

- Universal Sentence Encoder

We assume that you have prior knowledge of word embeddings and other fundamental NLP concepts. Before continuing, I recommend you read the following articles-

- Ultimate Guide to Understand and Implement Natural Language Processing (with codes in Python)

- An Essential Guide to Pretrained Word Embeddings for NLP Practitioners

Now let us begin!

We will first set up some basic libraries and define our list of sentences. The following steps will help you do so-

Step 1:

First, import the libraries and download NLTK’s ‘punkt’ tokenizer data.

Step 2:

Then, we define our list of sentences. You can use a larger list (keeping the text as a list of sentences makes it easier to process each one).

Step 3:

We will also keep a tokenized version of these sentences

Step 4:

Finally, we define a function which returns the cosine similarity between 2 vectors
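The four setup steps can be sketched as follows. The six sentences are the ones that appear in the reader output quoted at the end of this article. As an assumption to keep the sketch free of downloads, a small regular expression stands in for NLTK’s `word_tokenize`; the names `tokenize`, `tokenized_sent`, and `cosine` are illustrative.

```python
import math
import re

# Step 2: the list of sentences used throughout the examples.
sentences = [
    "I ate dinner.",
    "We had a three-course meal.",
    "Brad came to dinner with us.",
    "He loves fish tacos.",
    "In the end, we all felt like we ate too much.",
    "We all agreed; it was a magnificent evening.",
]

def tokenize(text):
    """Lowercase the text and split it into words and punctuation marks.

    Assumption: this regex is a stand-in for nltk.word_tokenize (Step 1),
    which needs the 'punkt' data to be downloaded first.
    """
    return re.findall(r"\w+(?:-\w+)*|[^\w\s]", text.lower())

# Step 3: keep a tokenized version of every sentence.
tokenized_sent = [tokenize(s) for s in sentences]
print(tokenized_sent[0])  # ['i', 'ate', 'dinner', '.']

# Step 4: cosine similarity between two vectors of equal length.
def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

print(cosine([1.0, 0.0], [0.0, 1.0]))  # 0.0
```

If NLTK is installed, `tokenize` can be replaced by `word_tokenize(text.lower())` after running `nltk.download('punkt')`.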

Let us start by exploring the Sentence Embedding techniques one by one.

Doc2Vec

An extension of Word2Vec, the Doc2Vec embedding is one of the most popular techniques out there. Introduced in 2014, it is an unsupervised algorithm and adds on to the Word2Vec model by introducing another ‘paragraph vector’. Also, there are 2 ways to add the paragraph vector to the model.

1.1) PV-DM (Distributed Memory version of Paragraph Vector): We assign a paragraph vector to each sentence while sharing word vectors among all sentences. Then we either average or concatenate the paragraph vector and the word vectors to get the representation used for prediction. If you notice, it is an extension of the Continuous Bag-of-Words (CBOW) type of Word2Vec, where we predict a word given the words around it. In PV-DM, the paragraph vector simply acts as one more context input when predicting the next word.

1.2) PV-DBOW (Distributed Bag of Words version of Paragraph Vector): Just like PV-DM, PV-DBOW is another extension, this time of the Skip-gram type. Here, we sample random words from the sentence and train the model to predict them from the paragraph vector alone (a classification task).

The authors of the paper recommend using both in combination, but state that PV-DM alone usually works well for most tasks.
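As a rough illustration of the PV-DM input step described above, the sketch below combines one paragraph vector with its context word vectors, either by averaging or by concatenating. The 2-dimensional values and the function name `pvdm_input` are hypothetical; a real model learns these vectors during training.

```python
def pvdm_input(paragraph_vec, context_word_vecs, mode="average"):
    """Combine a paragraph vector with context word vectors.

    In PV-DM the combined vector is what the model uses to predict
    the next word. All vectors must share the same dimension.
    """
    vectors = [list(paragraph_vec)] + [list(v) for v in context_word_vecs]
    if mode == "average":
        dim = len(paragraph_vec)
        return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]
    if mode == "concatenate":
        return [x for v in vectors for x in v]
    raise ValueError("mode must be 'average' or 'concatenate'")

# Hypothetical vectors: one paragraph vector and two context words.
paragraph_vec = [0.2, 0.4]
context_word_vecs = [[0.6, 0.0], [0.1, 0.8]]

averaged = pvdm_input(paragraph_vec, context_word_vecs, mode="average")
concatenated = pvdm_input(paragraph_vec, context_word_vecs, mode="concatenate")

print(len(averaged))      # 2: same size as a single vector
print(len(concatenated))  # 6: grows with the number of context words
print(concatenated)       # [0.2, 0.4, 0.6, 0.0, 0.1, 0.8]
```

Averaging keeps the input size fixed, while concatenation preserves word order at the cost of a larger input layer.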

Step 1:

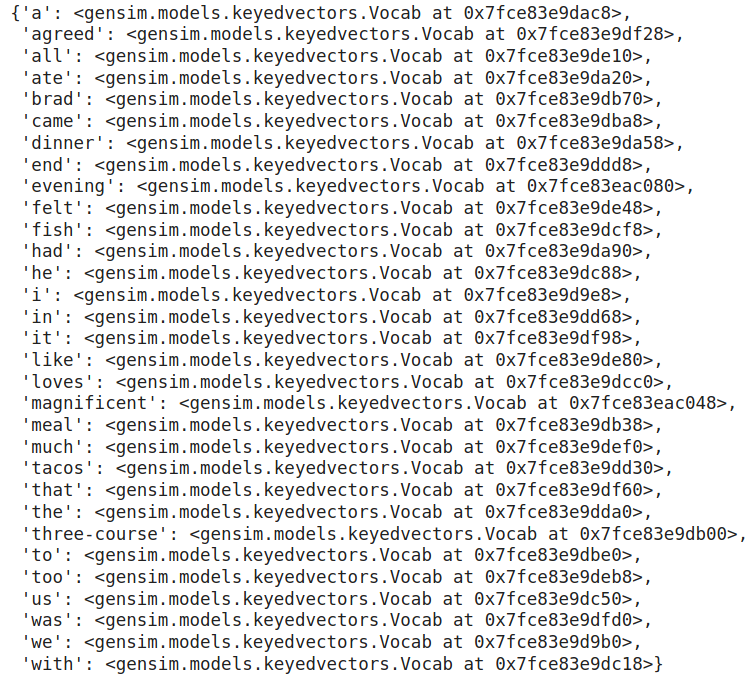

We will use Gensim to show an example of how to use Doc2Vec. We already have a list of sentences. We will first import the model and other libraries, and then build a tagged sentence corpus. Each sentence is represented as a TaggedDocument containing a list of the words in it and a tag associated with it.

Step 2:

We then train the model with the parameters:

Step 3:

We now take a new test sentence and find the top 5 most similar sentences from our data, displayed in order of decreasing similarity. The infer_vector method returns the inferred paragraph vector for the test sentence. The most_similar method returns the most similar sentences.
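The three Doc2Vec steps could look roughly like this with Gensim. This is a sketch under assumptions: it targets the Gensim 4.x API (older releases expose `model.docvecs` instead of `model.dv`), the hyperparameter values are illustrative rather than tuned, and the wrapper name `top_similar_doc2vec` is hypothetical.

```python
def top_similar_doc2vec(tokenized_sentences, query_tokens, topn=5):
    """Train a small Doc2Vec model and return (sentence_index, similarity) pairs."""
    # Imported here so that the function can be defined even if gensim
    # is not installed; calling it does require gensim 4.x.
    from gensim.models.doc2vec import Doc2Vec, TaggedDocument

    # Step 1: tag every tokenized sentence with its position in the list.
    tagged = [
        TaggedDocument(words=tokens, tags=[i])
        for i, tokens in enumerate(tokenized_sentences)
    ]

    # Step 2: train the model. These values are assumptions for a tiny corpus.
    model = Doc2Vec(vector_size=20, window=2, min_count=1, epochs=100)
    model.build_vocab(tagged)
    model.train(tagged, total_examples=model.corpus_count, epochs=model.epochs)

    # Step 3: infer a vector for the unseen query and rank the training
    # sentences by similarity, highest first.
    query_vec = model.infer_vector(query_tokens)
    return model.dv.most_similar(positive=[query_vec], topn=topn)

# Example call (requires gensim), with a hypothetical test sentence:
# results = top_similar_doc2vec(tokenized_sent, ["i", "had", "pizza", "and", "pasta"])
# for index, score in results:
#     print(index, score)
```

With a corpus this small the rankings vary from run to run, so treat the scores as a demonstration of the API rather than as meaningful similarities.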

SentenceBERT

Currently the leader of the pack, SentenceBERT was introduced in 2019 and quickly took pole position for sentence embeddings. At the heart of this BERT-based model, there are 4 key concepts:

- Attention

- Transformers

- BERT

- Siamese Network

Sentence-BERT uses a Siamese-network-like architecture that takes 2 sentences as input. Both sentences are passed through the same BERT model and a pooling layer to generate their embeddings. The embeddings for the pair are then used as inputs to calculate the cosine similarity.
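The pooling and comparison stages of this pipeline can be sketched without any model at all. In the example below, the token-level vectors are hypothetical stand-ins for BERT outputs, and mean pooling is assumed as the pooling strategy; `mean_pool` and `cosine_similarity` are illustrative helper names.

```python
import math

def mean_pool(token_vectors):
    """Average token vectors into one fixed-size sentence vector."""
    n = len(token_vectors)
    dim = len(token_vectors[0])
    return [sum(tok[i] for tok in token_vectors) / n for i in range(dim)]

def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# Hypothetical token-level outputs. In a Siamese setup, both sentences
# would be encoded by the same network with the same weights.
tokens_a = [[1.0, 0.0], [0.0, 1.0]]              # sentence A: 2 tokens
tokens_b = [[2.0, 0.0], [0.0, 2.0], [1.0, 1.0]]  # sentence B: 3 tokens

# Pooling turns sentences of different lengths into vectors of equal size.
embedding_a = mean_pool(tokens_a)
embedding_b = mean_pool(tokens_b)
print(len(embedding_a), len(embedding_b))  # 2 2

similarity = cosine_similarity(embedding_a, embedding_b)
print(round(similarity, 6))  # 1.0
```

Because both sentences end up as vectors of the same size, they can be compared directly no matter how many tokens each one had.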

We can install Sentence BERT using:

!pip install sentence-transformers

Step 1:

We will then load the pre-trained BERT model. Many other pre-trained models are available; the full list is in the sentence-transformers documentation.

Step 2:

We will then encode the provided sentences. We can also display the sentence vectors(just uncomment the code below)

Step 3:

Then we will define a test query and encode it as well:

Step 4:

We will then compute the cosine similarity using scipy. We will retrieve the similarity values between the sentences and our test query:

There you go, we have obtained the similarity between the sentences in our text and our test sentence. A crucial point to note is that SentenceBERT is pretty slow if you want to train it from scratch.
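One detail is easy to get wrong in Step 4: `scipy.spatial.distance.cosine` returns the cosine distance, which is 1 minus the cosine similarity, so a smaller value means a closer match. The dependency-free sketch below shows the relationship and ranks sentences by similarity; the 2-dimensional embeddings are made up, and the helper names are assumptions.

```python
import math

def cosine_similarity(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def cosine_distance(u, v):
    """The quantity scipy.spatial.distance.cosine computes: 1 - similarity."""
    return 1.0 - cosine_similarity(u, v)

def rank_by_similarity(query_vec, sentence_vecs):
    """Return (index, similarity) pairs, most similar sentence first."""
    scores = [(i, cosine_similarity(query_vec, v)) for i, v in enumerate(sentence_vecs)]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)

# Hypothetical sentence embeddings and query embedding.
sentence_vecs = [[1.0, 0.0], [0.6, 0.8], [0.0, 1.0]]
query_vec = [1.0, 0.0]

ranking = rank_by_similarity(query_vec, sentence_vecs)
print([index for index, _ in ranking])  # [0, 1, 2]

# The same ordering is reversed if distances are mistaken for similarities.
print([round(cosine_distance(query_vec, v), 6) for v in sentence_vecs])  # [0.0, 0.4, 1.0]
```

If you use the SciPy function, convert with `1 - cosine(u, v)` before sorting in decreasing order.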

InferSent

Presented by Facebook AI Research in 2017, InferSent is a supervised sentence embedding technique. The main feature of this model is that it is trained on Natural Language Inference (NLI) data, more specifically, the SNLI (Stanford Natural Language Inference) dataset. It consists of 570k human-generated English sentence pairs, manually labeled with one of three categories: entailment, contradiction, or neutral.

Just like SentenceBERT, we take a pair of sentences and encode them to generate the actual sentence embeddings. We then extract the relations between these embeddings using:

- concatenation

- element-wise product

- absolute element-wise difference.

The output vector of these operations is then fed to a classifier that assigns it to one of the 3 categories defined above. The paper proposes various encoder architectures, mainly built around GRUs, LSTMs, and BiLSTMs.
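The three operations listed above are easy to show on toy vectors. In this sketch, `u` and `v` stand for the embeddings of the two sentences in a pair; the values and the function name `nli_features` are assumptions, and the classifier itself is left out.

```python
def nli_features(u, v):
    """Build the classifier input from two sentence embeddings.

    The result concatenates u, v, the absolute element-wise difference
    |u - v|, and the element-wise product u * v.
    """
    if len(u) != len(v):
        raise ValueError("both embeddings must have the same dimension")
    abs_diff = [abs(a - b) for a, b in zip(u, v)]
    product = [a * b for a, b in zip(u, v)]
    return list(u) + list(v) + abs_diff + product

# Hypothetical 2-dimensional sentence embeddings.
u = [1.0, 2.0]
v = [3.0, 0.5]

features = nli_features(u, v)
print(features)       # [1.0, 2.0, 3.0, 0.5, 2.0, 1.5, 3.0, 1.0]
print(len(features))  # 8, i.e. 4 times the embedding dimension
```

The classifier therefore sees both sentences as well as two simple measures of how they differ and where they agree.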

Another important feature is that InferSent uses GloVe vectors for pre-trained word embeddings. A more recent version of InferSent, known as InferSent2 uses fastText.

Let us see how the sentence similarity task works using InferSent. We will use PyTorch for this, so make sure that you have a recent PyTorch version installed.

Step 1:

As mentioned above, there are 2 versions of InferSent. Version 1 uses GloVe while version 2 uses fastText vectors. You can choose to work with either model (I have used version 2). We therefore download the InferSent model and the pre-trained word vectors. For this, please first save the models.py file from the InferSent GitHub repository and store it in your working directory.

We also need to save the trained model and the pre-trained GloVe word vectors. According to the code below, our working directory should have an ‘encoders’ folder and a folder called ‘GloVe’. The encoders folder will hold our model while the GloVe folder should hold the word vectors. Note that the word vectors should match the model version: GloVe for version 1 and fastText for version 2.

Then we load our model and our word embeddings:

Step 2:

Then, we build the vocabulary from the list of sentences that we defined at the beginning:

Step 3:

Like before, we have the test query and we use InferSent to encode this test query and generate an embedding for it.

Step 4:

Finally, we compute the cosine similarity of this query with each sentence in our text:

Universal Sentence Encoder

One of the best-performing sentence embedding techniques right now is the Universal Sentence Encoder. It should come as no surprise to anybody that it was proposed by Google. The key feature here is that we can use it for multi-task learning.

This means that the sentence embeddings we generate can be used for multiple tasks like sentiment analysis, text classification, sentence similarity, etc., and the results of these tasks are then fed back to the model to get even better sentence vectors than before.

The most interesting part is that this encoder comes in two variants, and we can use either of the two:

- Transformer

- Deep Averaging Network(DAN)

Both of these models are capable of taking a word or a sentence as input and generating embeddings for the same. The following is the basic flow:

- Tokenize the sentences after converting them to lowercase

- Depending on the type of encoder, the sentence gets converted to a 512-dimensional vector

- If we use the transformer, it is similar to the encoder module of the transformer architecture and uses the self-attention mechanism.

- The DAN option computes the unigram and bigram embeddings first and then averages them to get a single embedding. This is then passed to a deep neural network to get a final sentence embedding of 512 dimensions.

- These sentence embeddings are then used for various unsupervised and supervised tasks like Skipthoughts, NLI, etc. The trained model is then again reused to generate a new 512 dimension sentence embedding.
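The DAN path in the flow above can be sketched on a toy scale: look up unigram and bigram embeddings, average them, and pass the average through a feed-forward layer. Everything here is an assumption made for illustration, including the 2-dimensional embeddings, the single tanh layer, and the identity weights; the real encoder uses learned parameters and produces 512 dimensions.

```python
import math

def bigrams(tokens):
    """Join each pair of neighbouring tokens into a single bigram key."""
    return [f"{a}_{b}" for a, b in zip(tokens, tokens[1:])]

def average(vectors):
    dim = len(vectors[0])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]

def dense_tanh(x, weights, bias):
    """One fully connected layer followed by a tanh activation."""
    return [
        math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(weights, bias)
    ]

# Hypothetical embeddings for the unigrams and the bigram of "fish tacos".
embeddings = {
    "fish": [1.0, 0.0],
    "tacos": [0.0, 1.0],
    "fish_tacos": [1.0, 1.0],
}
weights = [[1.0, 0.0], [0.0, 1.0]]  # identity matrix, for readability only
bias = [0.0, 0.0]

tokens = ["fish", "tacos"]
units = tokens + bigrams(tokens)                   # unigrams followed by bigrams
pooled = average([embeddings[u] for u in units])   # one vector per sentence
sentence_vec = dense_tanh(pooled, weights, bias)   # final sentence embedding

print(units)  # ['fish', 'tacos', 'fish_tacos']
print(len(sentence_vec))  # 2
```

Adding bigrams gives the averaged input a little information about word order, which plain unigram averaging discards.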

USE Embedding Process

To start using the USE embedding, we first need to install TensorFlow and TensorFlow hub:

Step 1: Firstly, we will import the following necessary libraries:

Step 2: The model is available to us via the TFHub. Let’s load the model:

Step 3: Then we will generate embeddings for our sentence list as well as for our query. This is as simple as just passing the sentences to the model:

Step 4: Finally, we will compute the similarity between our test query and the list of sentences:

Conclusion

To conclude, we saw the top 4 sentence embedding techniques in NLP and the basic code to use them for finding text similarity. I urge you to take up a larger dataset and try these models on it for other NLP tasks as well. Keep in mind that this is just basic code to calculate sentence similarity. For a proper model, you would need to preprocess the sentences first and then transform them into embeddings.

I have given only an overview of the architectures, and I can’t wait to explore how sentence embedding techniques will evolve to help machines understand our language better and better!

If you are interested to learn NLP, I recommend this course- Natural Language Processing (NLP) Using Python

Moreover, this article does not say that there are no other popular models. Some of the honorable mentions include FastSent, Skip-thought, Quick-thought, Word Movers Embedding, etc. If you have tried these out or any other model, please share it with us in the comments below!

Frequently Asked Questions

Q1. What are the methods of sentence embedding?

A. Sentence embedding methods include averaging word embeddings, using pre-trained models like BERT, and neural network-based approaches like Skip-Thought vectors.

Q2. What is an example of word embedding?

A. An example of word embedding is Word2Vec, which represents words as continuous vector values, capturing semantic relationships.

Q3. Which is the best sentence embedding model?

A. The best sentence embedding model can vary based on the task, but Transformer-based models such as BERT, RoBERTa, and T5 are often considered among the top choices.

Q4. What is the difference between sentence encoding and sentence embedding?

A. Sentence encoding focuses on representing a sentence in a fixed-size vector, while sentence embedding aims to capture contextual and semantic information in a continuous vector representation.

Hi, while running this code I am getting completely opposite similarities; my output for all four techniques looks strange. This is the output for the Universal Sentence Encoder, and I am using "from scipy.spatial.distance import cosine" to check the similarity:

Sentence = I ate dinner. ; similarity = 0.5313358306884766
Sentence = We had a three-course meal. ; similarity = 0.6435693204402924
Sentence = Brad came to dinner with us. ; similarity = 0.7966105192899704
Sentence = He loves fish tacos. ; similarity = 0.834845632314682
Sentence = In the end, we all felt like we ate too much. ; similarity = 0.8501257747411728
Sentence = We all agreed; it was a magnificent evening. ; similarity = 0.9415640830993652

"from scipy.spatial.distance import cosine" imports cosine distance rather than cosine similarity. Cosine distance is defined as 1 minus cosine similarity, so you can take "1-cosine(query_vec, model([sent])[0])" as the measure of similarity between two sentences.

One of the most informative introductions to sentence embedding available at this moment. Congratulations on writing such a clear and concise intro!

I think there is a typo in the command you used to install the InferSent model: you downloaded the fastText model (InferSent 2), while it should be InferSent 1 (for GloVe), since you installed the GloVe pre-trained vectors.

Hello, I have a question about the Universal Sentence Encoder. How would it deal with out-of-vocabulary words? Looking forward to hearing back from you.

Hello, thank you for this great article. I have one question, for the Universal Sentence Encoder, the code that you have provided; what is being implemented? Transformer or DAN?