GPT-3 THE NEXT BIG THING! Foundation of Future?

This article was published as a part of the Data Science Blogathon.

Introduction

Have you ever wished you could write just two lines of an essay or a journal and have the computer write the rest for you? If so, GPT-3 is the answer. Baffled? So are the people who have got their hands on GPT-3.

Every field in AI is advancing, and NLP and deep learning are among those making the fastest progress. The latest trendsetter in this field, and the talk of every social media discussion, is GPT-3. If the title puzzles you, it puzzles me too: GPT-3 itself produced the line “GPT-3 is going to be the next big thing after Bitcoin”. As the GPT family keeps advancing, its earlier members will be remembered for showing the way and may come to be seen as the foundation of the future.

So in this article, let’s find out whether it is worth the talk by understanding what it is and how powerful it is.

Table of Contents

- What is GPT-3?

- Data on GPT-3

- GPT-3 and Machine learning

- What can GPT-3 do?

- Challenges involved with GPT-3

- How good is GPT-3 compared to humans?

What is GPT-3?

GPT-3 stands for Generative Pre-trained Transformer 3. It is an autoregressive language model that uses deep learning to produce human-like text, and it is the third generation of the GPT series created by OpenAI, an artificial intelligence research laboratory based in San Francisco. At the time of its release it was considered the largest artificial neural network created to date. Models in this family work much like the autocomplete on your phone: given the text so far, they try to predict what comes next. GPT-3 is available in a private beta, and Microsoft has announced an agreement with OpenAI to license it.
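The autocomplete comparison can be made concrete with a toy sketch. This is not how GPT-3 works internally: GPT-3 is a Transformer network over sub-word tokens, whereas the code below only counts which word follows which. What it does share with GPT-3 is the autoregressive loop, in which each predicted word is appended to the input before the next prediction. The function names and the tiny corpus are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers


def autocomplete(model, prompt, max_new_words=5):
    """Extend the prompt one word at a time, feeding each prediction back in."""
    words = prompt.lower().split()
    if not words:
        return ""
    for _ in range(max_new_words):
        candidates = model.get(words[-1])
        if not candidates:
            break  # the last word was never followed by anything in training
        # Greedy choice: take the most frequent follower of the last word.
        next_word = candidates.most_common(1)[0][0]
        words.append(next_word)
    return " ".join(words)


# Hypothetical toy corpus, chosen only to keep the example small.
corpus = "the cat sat on the mat and the cat sat on the rug"
model = train_bigram_model(corpus)
print(autocomplete(model, "the cat", max_new_words=3))
```

A real language model replaces the word-pair counts with a learned probability distribution over the next token, conditioned on the whole preceding text rather than just the last word.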

Data on GPT-3

Data is the fuel of any machine learning or AI model. So what does GPT-3 feed on?

It is fed with a large share of the publicly available text on the internet. Most of it comes from the Common Crawl dataset: after filtering, Common Crawl alone makes up 60% of the training mix for GPT-3, with Wikipedia and other sources supplying the rest. The model has 175 billion parameters, and the raw Common Crawl text amounted to about 45TB of compressed data before filtering. A model is only as good as the data we feed it. GPT-3 has aced it in terms of size, but the quality will be known only once the model is in full-fledged use, and the bias in the data and in the model remains open to discussion.


But one thing is for sure: that is a whole lot of data for GPT-3 to learn from.
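To make the 60% figure concrete, here is a minimal sketch of what a weighted training mix means: each training document is drawn from a source with probability proportional to that source's weight. The percentages are the rounded ones reported in the GPT-3 paper (they sum to 101, so the code normalizes them). The function names and the sampling procedure are illustrative assumptions, not OpenAI's actual data pipeline.

```python
import random

# Rounded sampling weights (percent) as reported in the GPT-3 paper.
TRAINING_MIX_PERCENT = {
    "Common Crawl (filtered)": 60,
    "WebText2": 22,
    "Books1": 8,
    "Books2": 8,
    "Wikipedia": 3,
}


def normalized_weights(mix):
    """Rescale the rounded percentages so that they sum to exactly 1."""
    total = sum(mix.values())
    return {name: share / total for name, share in mix.items()}


def sample_sources(mix, n, seed=0):
    """Draw n source names with probability proportional to their weight."""
    rng = random.Random(seed)
    names = list(mix)
    return rng.choices(names, weights=[mix[name] for name in names], k=n)


weights = normalized_weights(TRAINING_MIX_PERCENT)
batch = sample_sources(TRAINING_MIX_PERCENT, n=10_000)
for name in TRAINING_MIX_PERCENT:
    sampled_share = batch.count(name) / len(batch)
    print(f"{name:25s} target {weights[name]:.1%}  sampled {sampled_share:.1%}")
```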

GPT-3 and Machine learning

OpenAI researchers and engineers have trained a large-scale unsupervised language model that generates coherent paragraphs of text. GPT-3 has demonstrated outstanding NLP capabilities, largely because of its very large number of parameters. The results of in-context learning show that larger models achieve higher accuracy. For comparison, its predecessor GPT-2 (released in February 2019) was trained on 40GB of text data and had 1.5 billion parameters. GPT-3 was evaluated under three conditions:

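- Zero-shot learning: The model is given only a natural-language description of the task, with no worked examples.

- One-shot learning: The model is given the task description plus a single worked example in the prompt.

- Few-shot learning: The model is given a small number of worked examples in the prompt. In all three settings the examples are supplied at inference time and the model's weights are not updated.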

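GPT-3 achieved good results in the zero-shot and one-shot settings, and in the few-shot setting it sometimes matched or surpassed fine-tuned models such as Google's BERT, even though GPT-3 itself was not fine-tuned. The figure from the paper, whose caption follows, shows the accuracy of the model as a function of parameter count and the number of in-context examples.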

Larger models make increasingly efficient use of in-context information. The steeper “in-context learning curves” for large models demonstrate improved ability to learn a task from contextual information.
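The three evaluation settings described above differ only in how many worked examples appear in the prompt. The sketch below builds the three prompt variants as plain strings, using the English-to-French illustration from the GPT-3 paper. The `build_prompt` helper and the exact prompt layout are assumptions made for illustration, and sending the prompt to a model is deliberately left out.

```python
def build_prompt(task_description, demonstrations, query):
    """Assemble a prompt: task description, solved examples, then the open query."""
    lines = [task_description]
    for source, target in demonstrations:
        lines.append(f"{source} => {target}")
    lines.append(f"{query} =>")  # left open for the model to complete
    return "\n".join(lines)


task = "Translate English to French:"
examples = [
    ("sea otter", "loutre de mer"),
    ("peppermint", "menthe poivrée"),
    ("plush giraffe", "girafe peluche"),
]

zero_shot = build_prompt(task, [], "cheese")            # no examples
one_shot = build_prompt(task, examples[:1], "cheese")   # one example
few_shot = build_prompt(task, examples, "cheese")       # several examples

print(few_shot)
```

In every variant the model only reads the prompt and continues it. Nothing is trained, which is what separates in-context learning from fine-tuning.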

What can GPT-3 do?

- It can produce output for anything that has a language structure.

- GPT-3 can write essays, poems, stories, and journals, or answer questions. All of these tasks look simple for GPT-3.

- It has been seen generating the code for a machine learning model given just the dataset name as input.

- GPT-3 has also been shown creating an app and writing the code for specific buttons.

- It can also produce SQL queries from plain-English input.

Well, those are only a few examples of what it can do. Social media platforms like Twitter and LinkedIn are filled with videos and discussions of its capabilities, and people are amazed. And why not!

Here is the link to view the capabilities of GPT-3.

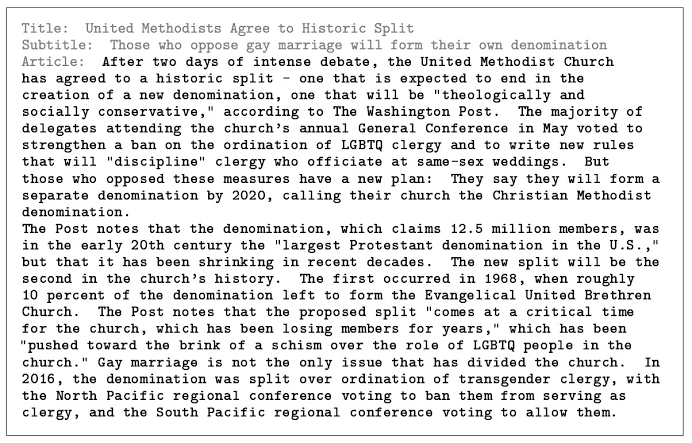

The screenshot above shows GPT-3 writing a full article from just a title.

Challenges Involved with GPT-3

After understanding all this, are you wondering why OpenAI has not open-sourced GPT-3 if it’s that great?

The answer is that every technology comes with boons and curses, and this case is no different.


- Malicious use of the technology, computational cost, and safety are the challenges OpenAI faces with GPT-3.

- The model makes it easier to produce disinformation, which would be difficult to prevent once the model is open-sourced. This was a major concern with its predecessors too.

- The underlying model is very large and very expensive to run, which makes it hard for anyone except larger companies to benefit from the technology.

- The OpenAI researchers confirm in their paper that the models exhibit bias, and they are addressing the issue through usage guidelines and safety measures.

- Trolling and spamming by bots is a serious ethical concern: humans need to rest, sleep, and eat, whereas a trolling or spamming bot can go on and on.
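A quick back-of-the-envelope calculation shows why running a model of this size is expensive. The sketch below estimates the memory needed just to hold the 175 billion parameters. The bytes-per-parameter values are assumptions about common storage formats, not something OpenAI states, and the estimate ignores activations and any other runtime overhead.

```python
PARAMETER_COUNT = 175_000_000_000  # 175 billion parameters, as stated in the article

# Assumed storage formats (bytes per parameter); not stated in the article.
BYTES_PER_PARAMETER = {"float32": 4, "float16": 2}


def weight_memory_gb(parameter_count, bytes_per_parameter):
    """Memory for the weights alone, in gigabytes (1 GB = 10**9 bytes)."""
    return parameter_count * bytes_per_parameter / 10**9


for precision, size in BYTES_PER_PARAMETER.items():
    print(f"{precision}: {weight_memory_gb(PARAMETER_COUNT, size):,.0f} GB")
# float32: 700 GB
# float16: 350 GB
```

Even at 16-bit precision the weights alone come to roughly 350 GB, far more than a single consumer GPU can hold, so serving the model means spreading it across many accelerators.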

How good is GPT-3 compared to humans?

Humans can learn from little data and a few simple steps, while AI systems like GPT-3 need a lot of data to train and predict. AI can outperform humans at specific tasks, but does that mean AI is better than humans? Definitely not. GPT-3 has performed well at specific tasks such as writing articles, and a survey was conducted to see whether people could tell if a piece was written by a human or by GPT-3.

The result was that its writing was about as good as a human's and nearly indistinguishable from it. Humans still excel at empathy, socializing, and carrying out complex tasks with creativity, areas where AI fails drastically. GPT-3 can pick up bias from its data and may end up writing articles or journals biased against a particular race or culture, which can be damaging to any institution that uses its output directly without review. It also lacks the logical thinking capabilities that humans possess.

End Notes

If you are thrilled by the power of GPT-3, you are not alone. We all are, and the hype reflects it. GPT-3 could transform many industries and make way for future generations of AI products, including the next generations of GPT. In fact, this entire blog could have been written by GPT-3.

Though it is exceptionally good at some tasks, there is a lot of room for improvement. Because it is computationally demanding, the tool may be very expensive, which could put it out of reach of smaller companies and individuals. The researchers are aware of model and data bias and acknowledge it in their paper.

If you want to get your hands on GPT-3 and test it, you can request access to the beta version of the API from OpenAI here. GPT-3 and its predecessors can be a foundation for better-performing GPT models and for the world of AI in the future.

I hope you liked this article. Do let me know your opinions and thoughts about GPT-3.

Thank you
