CatBoost: A machine learning library to handle categorical (CAT) data automatically

Introduction

How many of you have seen this error while building your machine learning models using “sklearn”?

I bet most of us! At least in the initial days.

This error occurs when dealing with categorical (string) variables. In sklearn, you are required to convert these categories into a numerical format.

In order to do this conversion, we use several pre-processing methods like “label encoding”, “one hot encoding” and others.
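To make the two pre-processing methods named above concrete, here is a minimal pure-Python sketch of label encoding and one-hot encoding. The `colours` list and both helper functions are made up for illustration; in practice you would normally use a library implementation.

```python
# Toy categorical column (made-up values, not taken from any dataset in this article).
colours = ["red", "green", "blue", "green"]


def label_encode(values):
    """Map each distinct category to an integer, assigned in sorted order."""
    mapping = {category: index for index, category in enumerate(sorted(set(values)))}
    return [mapping[v] for v in values], mapping


def one_hot_encode(values):
    """Turn each value into a 0/1 vector with one slot per distinct category."""
    categories = sorted(set(values))
    return [[1 if v == c else 0 for c in categories] for v in values], categories


labels, mapping = label_encode(colours)
one_hot, categories = one_hot_encode(colours)

print(mapping)     # category -> integer code
print(labels)      # one integer per row
print(categories)  # column order of the one-hot vectors
print(one_hot)     # one 0/1 vector per row
```

Label encoding keeps a single column but imposes an arbitrary order on the categories, while one-hot encoding avoids that order at the cost of one extra column per category.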

In this article, I will discuss “CatBoost”, a recently open-sourced library developed and contributed by Yandex. CatBoost can use categorical features directly and is scalable.

“This is the first Russian machine learning technology that’s an open source,” said Mikhail Bilenko, Yandex’s head of machine intelligence and research.

P.S. You can also read my earlier article, “How to deal with categorical variables?”.

Table of Contents

- What is CatBoost?

- Advantages of CatBoost library

- CatBoost in comparison to other boosting algorithms

- Installing CatBoost

- Solving ML challenge using CatBoost

- End Notes

1. What is CatBoost?

CatBoost is a recently open-sourced machine learning algorithm from Yandex. It can easily integrate with deep learning frameworks like Google’s TensorFlow and Apple’s Core ML. It can work with diverse data types to help solve a wide range of problems that businesses face today. On top of that, its developers report best-in-class accuracy on their benchmarks.

It is especially powerful in two ways:

- It yields state-of-the-art results without the extensive data training typically required by other machine learning methods, and

- It provides powerful out-of-the-box support for the more descriptive data formats that accompany many business problems.

The name “CatBoost” comes from two words: “Category” and “Boosting”.

As discussed, the library works well with multiple categories of data, such as audio, text and images, including historical data.

“Boost” comes from the gradient boosting machine learning algorithm, as this library is built on gradient boosting. Gradient boosting is a powerful machine learning algorithm that is widely applied to many types of business challenges, such as fraud detection, item recommendation and forecasting, and it performs well on them. It can also return very good results with relatively little data, unlike DL models, which need to learn from a massive amount of data.
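For readers who have not seen gradient boosting before, the idea can be sketched in a few lines of plain Python. This is a toy illustration for squared-error loss on one numeric feature, using single-split “stumps” as the weak learners. It is not how CatBoost itself is implemented, and the data and function names are made up for the example.

```python
def fit_stump(xs, residuals):
    """Pick the single threshold split that minimises squared error on the residuals."""
    best = None
    for threshold in sorted(set(xs))[:-1]:  # skip the largest value so both sides are non-empty
        left = [r for x, r in zip(xs, residuals) if x <= threshold]
        right = [r for x, r in zip(xs, residuals) if x > threshold]
        left_mean = sum(left) / len(left)
        right_mean = sum(right) / len(right)
        error = (sum((r - left_mean) ** 2 for r in left)
                 + sum((r - right_mean) ** 2 for r in right))
        if best is None or error < best[0]:
            best = (error, threshold, left_mean, right_mean)
    return best[1:]  # (threshold, left_mean, right_mean)


def predict_stump(stump, x):
    threshold, left_mean, right_mean = stump
    return left_mean if x <= threshold else right_mean


def gradient_boost(xs, ys, n_rounds=50, learning_rate=0.1):
    """Fit an additive model of stumps for squared-error loss."""
    base = sum(ys) / len(ys)  # start from the mean of the targets
    predictions = [base] * len(ys)
    stumps = []
    for _ in range(n_rounds):
        # For squared error, the negative gradient is proportional to the residual.
        residuals = [y - p for y, p in zip(ys, predictions)]
        stump = fit_stump(xs, residuals)
        stumps.append(stump)
        predictions = [p + learning_rate * predict_stump(stump, x)
                       for p, x in zip(predictions, xs)]
    return base, learning_rate, stumps


def predict(model, x):
    base, learning_rate, stumps = model
    return base + learning_rate * sum(predict_stump(s, x) for s in stumps)


def mse(ys, preds):
    return sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys)


# Made-up data with a jump between x = 3 and x = 4.
xs = [1, 2, 3, 4, 5, 6]
ys = [1.0, 1.2, 0.9, 3.1, 2.9, 3.0]

toy_model = gradient_boost(xs, ys)
baseline_mse = mse(ys, [sum(ys) / len(ys)] * len(ys))
boosted_mse = mse(ys, [predict(toy_model, x) for x in xs])
print(baseline_mse, boosted_mse)
```

Each round fits a small model to what the current ensemble still gets wrong and adds a scaled-down version of it, which is why the number of trees and the learning rate are usually tuned together.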

Here is a video message from Mikhail Bilenko, Yandex’s head of machine intelligence and research, and Anna Veronika Dorogush, head of Yandex machine learning systems.

2. Advantages of CatBoost Library

- Performance: CatBoost provides state of the art results and it is competitive with any leading machine learning algorithm on the performance front.

- Handling Categorical features automatically: We can use CatBoost without any explicit pre-processing to convert categories into numbers. CatBoost converts categorical values into numbers using various statistics on combinations of categorical features and combinations of categorical and numerical features. You can read more about it here.

- Robust: It reduces the need for extensive hyper-parameter tuning and lowers the chances of overfitting, which leads to more generalized models. That said, CatBoost still has multiple parameters you can tune, such as the number of trees, learning rate, regularization, tree depth, fold size, bagging temperature and others. You can read about all these parameters here.

- Easy-to-use: You can use CatBoost from the command line, or through a user-friendly API for both Python and R.
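The “Handling Categorical features automatically” point above mentions converting categories into numbers using statistics. One statistic of this kind is an ordered target statistic: each row is encoded using only the targets of rows that come before it in a random order, so a row never sees its own label. The sketch below is a simplified illustration under that assumption, with a made-up function name, `prior` value and data; it is not CatBoost’s actual implementation.

```python
import random


def ordered_target_statistics(categories, targets, prior=0.5, seed=0):
    """Encode each row from the targets of earlier rows (in a random order) with the same category."""
    order = list(range(len(categories)))
    random.Random(seed).shuffle(order)  # random permutation of the row indices

    sums, counts = {}, {}  # running target sum and row count per category
    encoded = [0.0] * len(categories)
    for i in order:
        category = categories[i]
        # Smoothed mean of the targets seen so far for this category.
        encoded[i] = (sums.get(category, 0.0) + prior) / (counts.get(category, 0) + 1)
        # Only now add the current row, so it cannot influence its own encoding.
        sums[category] = sums.get(category, 0.0) + targets[i]
        counts[category] = counts.get(category, 0) + 1
    return encoded


# Made-up binary-classification example.
categories = ["a", "b", "a", "a", "b"]
targets = [1, 0, 1, 0, 1]
encoded = ordered_target_statistics(categories, targets)
print(encoded)
```

The first row seen for each category gets the value `prior`, and later rows move towards the observed target rate for that category.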

3. CatBoost – Comparison to other boosting libraries

We have multiple boosting libraries like XGBoost, H2O and LightGBM, and all of these perform well on a variety of problems. The CatBoost developers have compared its performance with these competitors on standard ML datasets:

The comparison above shows the log-loss value on test data, and it is lowest for CatBoost in most cases. This suggests that CatBoost mostly performs better for both tuned and default models.
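Since the comparison is reported in log-loss, it may help to see how that metric is computed for binary classification. The following is a small pure-Python sketch; the function name and the example values are made up for illustration.

```python
import math


def log_loss(y_true, y_prob, eps=1e-15):
    """Average negative log-likelihood of the true 0/1 labels under the predicted probabilities."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(y_true)


# Confident, correct predictions give a low loss ...
confident = log_loss([1, 0, 1], [0.9, 0.1, 0.8])
# ... while always predicting 0.5 gives ln(2), regardless of the labels.
uninformed = log_loss([1, 0, 1], [0.5, 0.5, 0.5])
print(confident, uninformed)
```

Lower is better, and confident wrong predictions are penalised heavily, which is why small log-loss differences between libraries can still be meaningful.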

In addition to this, unlike XGBoost and LightGBM, CatBoost does not require converting the data set to any specific format.

4. Installing CatBoost

CatBoost is easy to install for both Python and R. You need the 64-bit versions of Python and R.

Below are the installation steps for Python and R:

4.1 Python Installation

pip install catboost

4.2 R Installation

install.packages('devtools')

devtools::install_github('catboost/catboost', subdir = 'catboost/R-package')

5. Solving ML challenge using CatBoost

The CatBoost library can be used to solve both classification and regression challenges. For classification, you can use “CatBoostClassifier” and for regression, “CatBoostRegressor”.


In this article, I’m solving the “Big Mart Sales” practice problem using CatBoost. It is a regression challenge, so we will use CatBoostRegressor. First, I will go through the basic steps (I’ll not perform feature engineering, just build a basic model).

import pandas as pd

import numpy as np

from catboost import CatBoostRegressor

#Read training and testing files

train = pd.read_csv("train.csv")

test = pd.read_csv("test.csv")

#Identify the datatype of variables

train.dtypes

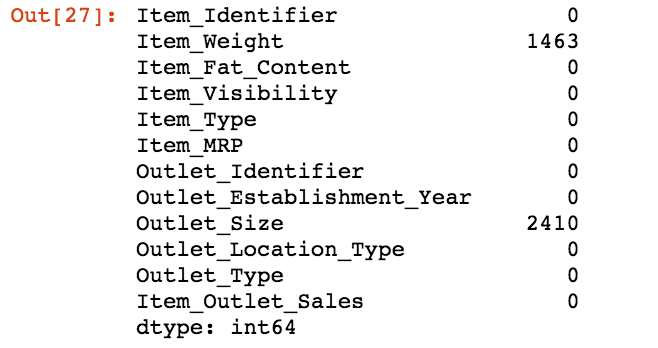

#Finding the missing values

train.isnull().sum()

#Imputing missing values for both train and test

train.fillna(-999, inplace=True)

test.fillna(-999, inplace=True)

#Creating a training set for modeling and validation set to check model performance

X = train.drop(['Item_Outlet_Sales'], axis=1)

y = train.Item_Outlet_Sales

from sklearn.model_selection import train_test_split

X_train, X_validation, y_train, y_validation = train_test_split(X, y, train_size=0.7, random_state=1234)

#Look at the data type of variables

X.dtypes

Now, we only need to identify which variables are categorical. We will not perform any preprocessing steps for them:

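#np.float was removed in NumPy 1.24; the built-in float gives the same comparison

#Every non-float column (including integer ones) is treated as categorical

categorical_features_indices = np.where(X.dtypes != float)[0]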
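The rule above marks every non-float column as categorical, which also picks up integer columns. As a hedged illustration of what that means in practice, the sketch below compares it with a stricter rule that only selects non-numeric columns. The toy frame and its column names are hypothetical stand-ins, not the actual Big Mart data.

```python
import pandas as pd
from pandas.api.types import is_float_dtype, is_numeric_dtype

# Toy frame with hypothetical column names, standing in for the training features.
X_demo = pd.DataFrame({
    "item_type": ["Dairy", "Snacks", "Dairy"],
    "item_weight": [9.3, 5.9, 17.5],
    "outlet_year": [1999, 2009, 1999],
    "outlet_size": ["Medium", "Small", "High"],
})

# Same idea as the article's rule: every non-float column counts as categorical.
non_float_idx = [i for i, dtype in enumerate(X_demo.dtypes) if not is_float_dtype(dtype)]

# Stricter alternative: only non-numeric (string) columns count as categorical.
non_numeric_idx = [i for i, dtype in enumerate(X_demo.dtypes) if not is_numeric_dtype(dtype)]

print(non_float_idx)    # includes the integer year column
print(non_numeric_idx)  # string columns only
```

Which rule is appropriate depends on the data: an integer column such as an establishment year can reasonably be treated either as a category or as a number.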

#importing library and building model

from catboost import CatBoostRegressor

model = CatBoostRegressor(iterations=50, depth=3, learning_rate=0.1, loss_function='RMSE')

model.fit(X_train, y_train, cat_features=categorical_features_indices, eval_set=(X_validation, y_validation), plot=True)

As you can see, a basic model gives a fair solution, and the training and testing errors are in sync. You can tune the model parameters and features to improve the solution.
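To check for yourself whether the training and validation errors are in sync, you can compute the RMSE on both sets and compare them. The helper below is a minimal pure-Python sketch with a made-up name and example values; the commented lines at the end assume the `model`, `X_train`, `X_validation`, `y_train` and `y_validation` objects from the code above.

```python
import math


def rmse(actual, predicted):
    """Root mean squared error between two equal-length sequences of numbers."""
    squared_errors = [(a - p) ** 2 for a, p in zip(actual, predicted)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))


# Small made-up example: errors of 3 and 4 give sqrt((9 + 16) / 2).
example_rmse = rmse([0.0, 0.0], [3.0, 4.0])
print(example_rmse)

# With the article's objects in scope, the comparison would look like:
# train_rmse = rmse(y_train, model.predict(X_train))
# validation_rmse = rmse(y_validation, model.predict(X_validation))
```

A validation RMSE that is much higher than the training RMSE would suggest overfitting, while similar values suggest the model generalizes about as well as it fits.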

Now, the next task is to predict the outcome for test data set.

submission = pd.DataFrame()

submission['Item_Identifier'] = test['Item_Identifier']

submission['Outlet_Identifier'] = test['Outlet_Identifier']

submission['Item_Outlet_Sales'] = model.predict(test)

submission.to_csv("Submission.csv", index=False)

That’s it! We have built our first model with CatBoost.

6. End Notes

In this article, we saw “CatBoost”, a recently open-sourced boosting library by Yandex that can provide state-of-the-art solutions for a variety of business problems.

One of the key features that excites me about this library is that it handles categorical values automatically using various statistical methods.

We have covered the basic details of this library and solved a regression challenge in this article. I’d also recommend using this library to solve a business problem and checking its performance against other state-of-the-art models.
