Keyword Extraction Methods from Documents in NLP

Introduction

Keyword extraction plays a vital role in distilling crucial information from paragraphs or documents. This automated method identifies the most relevant words and phrases within text, aiding in content summarization and issue identification, such as in meeting minutes (MOM). Imagine the need to analyze numerous product reviews online, potentially totaling hundreds of thousands. Keyword extraction becomes indispensable for uncovering key terms that define each review. This approach unveils the prominent topics in consumer discussions, saving valuable time for your team. In this blog, we’ll delve into the keyword extraction using NLP.

This article was published as a part of the Data Science Blogathon.

Table of contents

What is Keyword Extraction?

Keyword extraction is the process of automatically identifying important words or phrases in a text document. It condenses the main topics or themes discussed. Techniques include statistical analysis, NLP algorithms, and machine learning. Widely used in document summarization, SEO, and information retrieval, it aids in organizing and categorizing text data for various applications

How Does Keyword Extraction Work?

Keyword extraction is like finding the most important words or phrases in a piece of writing automatically. Here’s how it works:

- First, we prepare the text by breaking it into individual words and removing unimportant ones like “and” or “the”.

- We count how often each word appears in the text. If a word shows up a lot, it’s probably important.

- We also look at how rare the word is overall. If it’s rare in general but common in this text, it’s likely very important here.

- We combine these two things to figure out which words are most important in this text specifically.

- Then, we rank the words based on how important they are. The most important ones come out on top.

- Finally, we pick out the top words as our keywords.

So, keyword extraction is all about figuring out which words really matter in a piece of writing.

Rake_NLTK

RAKE (Rapid Automatic Keyword Extraction) is a well-known keyword extraction method that finds the most relevant words or phrases in a piece of text using a set of stopwords and phrase delimiters. Rake nltk is an expanded version of RAKE that is supported by NLTK. The steps for Rapid Automatic Keyword Extraction are as follows:

- Split the input text content by dotes

- Create a matrix of word co-occurrences

- Word scoring – That score can be calculated as the degree of a word in the matrix, as the word frequency, or as the degree of the word divided by its frequency

- keyphrases can also create by combining the keywords

- A keyword or keyphrase is chosen if and only if its score belongs to the top T scores where T is the number of keywords you want to extract

Python Implementation of Keyword Extraction using Rake Algorithm

For Installation

pip3 install rake-nltkFor Extracting the Keywords

Please read this official document to learn more about the RAKE algorithm.

Spacy

SpaCy, a newer Python NLP library compared to NLTK or Scikit-Learn, aims to simplify deep learning for text data analysis. Here, we outline the steps for extracting keywords from text using spaCy.

- Split the input text content by tokens

- Extract the hot words from the token list.

- Set the hot words as the words with pos tag “PROPN“, “ADJ“, or “NOUN“. (POS tag list is customizable)

- Find the most common T number of hot words from the list

- Print the results

Python Implementation of Keyword Extraction Using Spacy

For Installation

pip3 install spacyFor extracting the keywords

import spacy

from collections import Counter

from string import punctuation

nlp = spacy.load("en_core_web_sm")

def get_hotwords(text):

result = []

pos_tag = ['PROPN', 'ADJ', 'NOUN']

doc = nlp(text.lower())

for token in doc:

if(token.text in nlp.Defaults.stop_words or token.text in punctuation):

continue

if(token.pos_ in pos_tag):

result.append(token.text)

return result

new_text = """

When it comes to evaluating the performance of keyword extractors, you can use some of the standard metrics in machine learning: accuracy, precision, recall, and F1 score. However, these metrics don’t reflect partial matches. they only consider the perfect match between an extracted segment and the correct prediction for that tag.

Fortunately, there are some other metrics capable of capturing partial matches. An example of this is ROUGE.

"""

output = set(get_hotwords(new_text))

most_common_list = Counter(output).most_common(10)

for item in most_common_list:

print(item[0])Output

accuracy

precision

capable

partial

prediction

score

correct

extractors

matches

perfectTextrank

Textrank, a Python tool for keyword extraction and text summarization, analyzes word relationships by examining their sequential occurrences. The algorithm employs the PageRank algorithm to rank the most significant terms in the text. Textrank seamlessly integrates with the Spacy pipeline and executes the following key steps for keyword extraction:

- Step 1: Textrank constructs a word network (word graph) to identify relevant terms based on word co-occurrence. Words frequently found together in the text are linked, with stronger connections for higher frequency pairs.

- Step 2: The Pagerank algorithm assesses word relevance within the network. The top third of terms are retained as significant. When relevant terms appear consecutively in the text, they are grouped together in a keywords table.

Textrank, implemented in Python, offers swift and precise phrase extraction and extractive summarization, making it a valuable addition to spaCy workflows. This graph-based method is language-agnostic and does not rely on domain-specific knowledge. For keyword extraction, we’ll utilize PyTextRank, a Python version of TextRank integrated as a spaCy pipeline plugin. To delve deeper into Textrank, you can refer to the base paper linked here.

Python Implementation of Keyword Extraction Using Textrank

For Installation

pip3 install pytextrank

spacy download en_core_web_smFor Extracting the Keywords

import spacy

import pytextrank

# example text

text = "Compatibility of systems of linear constraints over the set of natural numbers. Criteria of compatibility of a system of linear Diophantine equations, strict inequations, and nonstrict inequations are considered. Upper bounds for components of a minimal set of solutions and algorithms of construction of minimal generating sets of solutions for all types of systems are given. These criteria and the corresponding algorithms for constructing a minimal supporting set of solutions can be used in solving all the considered types systems and systems of mixed types."

# load a spaCy model, depending on language, scale, etc.

nlp = spacy.load("en_core_web_sm")

# add PyTextRank to the spaCy pipeline

nlp.add_pipe("textrank")

doc = nlp(text)

# examine the top-ranked phrases in the document

for phrase in doc._.phrases[:10]:

print(phrase.text)Output

mixed types

minimal generating sets

systems

nonstrict inequations

strict inequations

natural numbers

linear Diophantine equations

solutions

linear constraints

a minimal supporting setWord Cloud

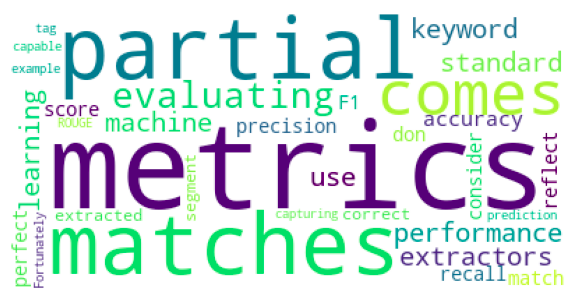

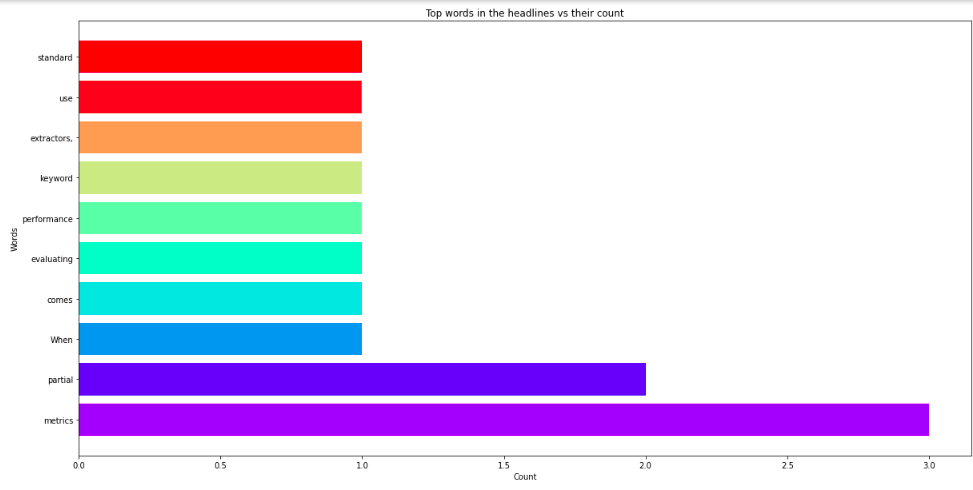

The magnitude of each word represents its frequency or relevance in a word cloud, which is a data visualization tool for visualizing text data. A word cloud can be used to emphasise important textual data points. Data from social networking websites are frequently analyzed using word clouds.

The greater and bolder a term appears in the word cloud, the more times it appears in a source of textual data (such as a speech, blog post, or database) (Also known as a tag cloud or a text cloud). A word cloud is a collection of words shown in different sizes. The more frequently a term appears in a document and the more important it is, the larger and bolder it is. These are great ways for extracting the most important parts of textual data, such as blog posts, and databases.

Python Implementation of Keyword Extraction Using Wordcloud

For installation

pip3 install wordcloud

pip3 install matplotlibFor extracting the keywords and showing their relevancy using Wordcloud

import collections

import numpy as np

import pandas as pd

import matplotlib.cm as cm

import matplotlib.pyplot as plt

from matplotlib import rcParams

from wordcloud import WordCloud, STOPWORDS

all_headlines = """

When it comes to evaluating the performance of keyword extractors, you can use some of the standard metrics in machine learning: accuracy, precision, recall, and F1 score. However, these metrics don’t reflect partial matches; they only consider the perfect match between an extracted segment and the correct prediction for that tag.

Fortunately, there are some other metrics capable of capturing partial matches. An example of this is ROUGE.

"""

stopwords = STOPWORDS

wordcloud = WordCloud(stopwords=stopwords, background_color="white", max_words=1000).generate(all_headlines)

rcParams['figure.figsize'] = 10, 20

plt.imshow(wordcloud)

plt.axis("off")

plt.show()

filtered_words = [word for word in all_headlines.split() if word not in stopwords]

counted_words = collections.Counter(filtered_words)

words = []

counts = []

for letter, count in counted_words.most_common(10):

words.append(letter)

counts.append(count)

colors = cm.rainbow(np.linspace(0, 1, 10))

rcParams['figure.figsize'] = 20, 10

plt.title('Top words in the headlines vs their count')

plt.xlabel('Count')

plt.ylabel('Words')

plt.barh(words, counts, color=colors)

plt.show()Output

KeyBert

KeyBERT is a straightforward and user-friendly keyword extraction technique that leverages BERT embeddings to identify the most similar keywords and keyphrases within a given document. It relies on BERT embeddings and employs basic cosine similarity to pinpoint sub-documents within the text that closely resemble the document as a whole.

To create a document-level representation, BERT is utilized for extracting document embeddings. Subsequently, word embeddings for N-gram words/phrases are extracted. Finally, cosine similarity is applied to identify words/phrases that closely resemble the document, allowing for the identification of terms that best encapsulate the entire document.

KeyBert utilizes huggingface transformer-based pre-trained models to generate embeddings, with the default choice being the all-MiniLM-L6-v2 model.

Python Implementation of Keyword Extraction Using KeyBert

For installation

pip3 install keybertFor extracting the keywords and showing their relevancy using KeyBert

from keybert import KeyBERT

doc = """

Supervised learning is the machine learning task of learning a function that

maps an input to an output based on example input-output pairs. It infers a

function from labeled training data consisting of a set of training examples.

In supervised learning, each example is a pair consisting of an input object

(typically a vector) and a desired output value (also called the supervisory signal).

A supervised learning algorithm analyzes the training data and produces an inferred function,

which can be used for mapping new examples. An optimal scenario will allow for the

algorithm to correctly determine the class labels for unseen instances. This requires

the learning algorithm to generalize from the training data to unseen situations in a

'reasonable' way (see inductive bias)."""

kw_model = KeyBERT()

keywords = kw_model.extract_keywords(doc)

print(keywords)Output

[('supervised', 0.6676), ('labeled', 0.4896), ('learning', 0.4813), ('training', 0.4134), ('labels', 0.3947)]Yet Another Keyword Extractor (Yake)

For automatic keyword extraction in Yake, text features are exploited in an unsupervised manner. YAKE is a basic unsupervised automatic keyword extraction method that identifies the most relevant keywords in a text by using text statistical data from single texts. This technique does not rely on dictionaries, external corpora, text size, language, or domain, and it does not require training on a specific set of documents. The Yake algorithm’s major characteristics are as follows:

- Unsupervised approach

- Corpus-Independent

- Domain and Language Independent

- Single-Document

Python Implementation of Keyword Extraction Using Yake

For Installation

pip3 install yakeFor Extracting the Keywords and Showing their Relevancy Using Yake

import yake

doc = """

Supervised learning is the machine learning task of learning a function that

maps an input to an output based on example input-output pairs. It infers a

function from labeled training data consisting of a set of training examples.

In supervised learning, each example is a pair consisting of an input object

(typically a vector) and a desired output value (also called the supervisory signal).

A supervised learning algorithm analyzes the training data and produces an inferred function,

which can be used for mapping new examples. An optimal scenario will allow for the

algorithm to correctly determine the class labels for unseen instances. This requires

the learning algorithm to generalize from the training data to unseen situations in a

'reasonable' way (see inductive bias)."""

kw_extractor = yake.KeywordExtractor()

keywords = kw_extractor.extract_keywords(doc)

for kw in keywords:

print(kw)Output

('machine learning task', 0.022703501568910843)

('Supervised learning', 0.06742808121232775)

('learning', 0.07245709008069999)

('training data', 0.07557730010583494)

('maps an input', 0.07860851277995791)

('output based', 0.08846540097554569)

('input-output pairs', 0.08846540097554569)

('machine learning', 0.09853013116161088)

('learning task', 0.09853013116161088)

('training', 0.10592640317285314)

('function', 0.11237403107652318)

('training data consisting', 0.12165867444610523)

('learning algorithm', 0.1280547892393491)

('Supervised', 0.12900350398758118)

('supervised learning algorithm', 0.13060566752120165)

('data', 0.1454043828185849)

('labeled training data', 0.15052764655360493)

('algorithm', 0.15633092600586776)

('input', 0.17662443762709562)

('pair consisting', 0.19020472807220248)MonkeyLearn API

MonkeyLearn is a user-friendly text analysis tool with a pre-trained keyword extractor that you can use to extract important phrases from your data using MonkeyLearn’s API. APIs are available in all major programming languages, and developers can extract keywords with just a few lines of code and obtain a JSON file with the extracted keywords. MonkeyLearn also has a free word cloud generator that works as a simple ‘keyword extractor,’ allowing you to construct tag clouds of your most important terms. Once you’ve created a Monkeylearn account, you’ll be given an API key and a Model ID for extracting keywords from the text.

Check out the official Monkeylearn API docs for additional information.

Advantages of keyword extraction automation

- Product descriptions, customer feedback, and other sources can all be used to extract keywords.

- Determine which terms are most frequently used by customers.

- Monitoring of brand, product, and service references in real-time

- It is possible to automate and speed up data extraction and entry.

Python Implementation of Keyword Extraction using MonkeyLearn API

For Installation

pip3 install monkeylearnFor Extracting the Keywords Using Monkeylearn API

from monkeylearn import MonkeyLearn

ml = MonkeyLearn('your_api_key')

my_text = """

When it comes to evaluating the performance of keyword extractors, you can use some of the standard metrics in machine learning: accuracy, precision, recall, and F1 score. However, these metrics don’t reflect partial matches; they only consider the perfect match between an extracted segment and the correct prediction for that tag.

"""

data = [my_text]

model_id = 'your_model_id'

result = ml.extractors.extract(model_id, data)

dataDict = result.body

for item in dataDict[0]['extractions'][:10]:

print(item['parsed_value'])Output

performance of keyword

standard metric

f1 score

partial match

correct prediction

extracted segment

machine learning

keyword extractor

perfect match

metricTextrazor API

Another API for extracting keywords and other useful elements from unstructured text is Textrazor. The Textrazor API can be accessed using a variety of computer languages, including Python, Java, PHP, and others. You will receive the API key for extracting keywords from the text once you have made an account with Textrazor. Visit the official website for additional information.

Textrazor is a good choice for developers that need speedy extraction tools with comprehensive customization options. It’s a keyword extraction service that may be used locally or in the cloud. The TextRazor API may be used to extract meaning from text and can be easily connected with our necessary programming language. We can design custom extractors and extract synonyms and relationships between entities in addition to extracting keywords and entities in 12 different languages.

Python Implementation of Keyword Extraction Using Textrazor API

For Installation

pip3 install textrazorFor Extracting the Keywords with Relevance_score and Confidence_score from a Webpage Using Textrazor API

import textrazor

textrazor.api_key = "your_api_key"

client = textrazor.TextRazor(extractors=["entities", "topics"])

response = client.analyze_url("https://www.textrazor.com/docs/python")

for entity in response.entities():

print(entity.id, entity.relevance_score, entity.confidence_score)Output

Document 0.1468 2.734

Debugging 0.4502 6.739

Application software 0.256 1.335

High availability 0.4024 5.342

Best practice 0.3448 1.911

Box 0.03577 0.9762

Application software 0.256 1.343

Experiment 0.2456 4.424

Deprecation 0.1894 2.876

Object (grammar) 0.2584 1.039

False positives and false negatives 0.09726 2.222

System 0.3509 1.251

Algorithm 0.3629 17.14

Document 0.1705 2.741

Accuracy and precision 0.4276 2.089

Concatenation 0.4086 3.503

Twitter 0.536 6.974

News 0.2727 1.43

System 0.3509 1.251

Document 0.1705 2.691

Application programming interface key 0.1133 1.795

...

...

...Key Takeaway

Keyword extraction is an automated method of extracting the most relevant words and phrases from text input. Important points to remember are given below.

- Keyword extraction is commonly used when we need to extract key information from a batch of documents.

- In this article, I have tried to expose you to some of the most popular tools for automatic keyword extraction tasks in NLP.

- Rake NLTK, Spacy, Textrank, Word cloud, KeyBert, and Yake are the tools and MonkeyLearn and Textrazor are the APIs that I mentioned here.

- Each of these tools has its own advantaged and specific use cases.

- These are the most effective keyword extraction techniques currently in use in the data science field.

Conclusion

The goal of keyword extraction is to find phrases that best describe the content of a document automatically. Key phrases, key terms, key segments, or simply keywords are the terminologies used to define the terms that indicate the most relevant information contained in the document. My Github page contains the entire codebase for keyword extraction methods. If you have any problems when using these tools, please let us know in the comments section below.

Happy coding🤗

Read the latest articles on our blog.

Frequently Asked Questions

A. Keyword extraction in NLP involves techniques like TF-IDF, TextRank, or BERT embeddings. These methods analyze text data to identify and rank the most important words or phrases that capture the document’s essence.

A. NLP aids in information extraction by parsing and analyzing textual data to identify structured information like entities, relationships, and facts. Named Entity Recognition (NER) and relation extraction are common NLP techniques used for this purpose.

A. Keyword extraction methods include TF-IDF (Term Frequency-Inverse Document Frequency), TextRank, LDA (Latent Dirichlet Allocation), and BERT embeddings. These techniques automatically identify and rank keywords or key phrases from text data.

Here is the Example for KeyPhrase Extraction :

Original Text: “Learning a new language is rewarding. Immersion programs offer intensive practice and cultural exposure. Regular practice and patience are key.”

Key Phrases Extracted:

Learning a new language

Immersion programs: intensive practice, cultural exposure

Regular practice

Patience

The media shown in this article is not owned by Analytics Vidhya and are used at the Author’s discretion.

Thanks to sharing information about the keyboard extractor.

Which of the keyword extraction techniques works the best for extracting the product type from product title in ecommerce data? eg. "Adidas womens Hoops 2.0 Basketball Shoe" should return "shoe" or even better "Basketball Shoe".

Nice post! Thanks for sharing the post about the keywords extraction method using documents in NLP. Is there any other method?

Nice Blog. Could you please share github link. Though its mentioned in blog, hyperlink is missing.