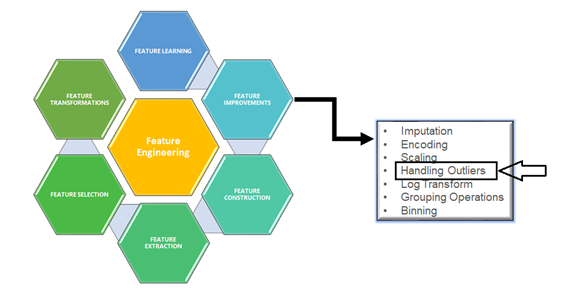

Before we start the discussion of outliers, we should understand exactly what feature improvement means within feature engineering.

Family of Feature Engineering

The feature engineering family has many key members; here, let's discuss outliers. It is one of the more interesting topics, and it is easy to understand in layman's terms.

In the picture of the apples, can you spot the odd one out? Hopefully, yes!

But spotting it in a long feature/column from a .csv file can be really challenging for the naked eye.

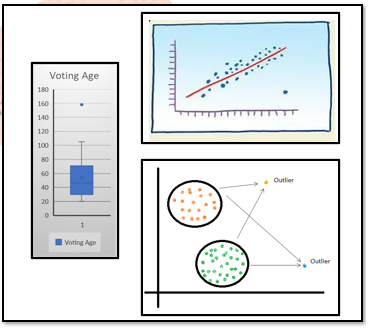

First and foremost, the best way to find outliers in a feature is to visualize it.

Visualization of outlier

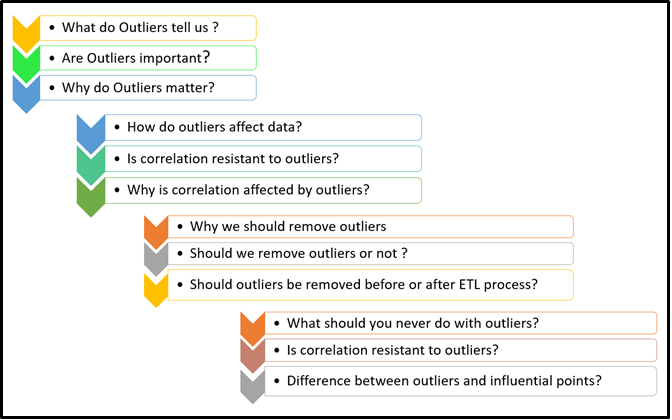

Of course! The quick reasons are below.

That's fine, but if you are a real lover of data analytics, data mining, and data science, you might have more questions about outliers.

Let’s have a quick discussion on those.

So far we have discussed what outliers are, how they affect a given dataset, and whether or not we can drop them. Now let's see how to find them in a given dataset. Are you ready?

We will look at simple methods first: univariate and multivariate analysis.

Let's see some sample code. I am using titanic.csv as a sample dataset, and here I am analyzing the age column.

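    import matplotlib.pyplot as plt
    import seaborn as sns
    plt.figure(figsize=(5, 5))
    sns.boxplot(y='age', data=df_titanic)  # df_titanic: DataFrame loaded from titanic.csv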

You can see the outliers as dots in the top portion of the box plot, above the upper whisker.

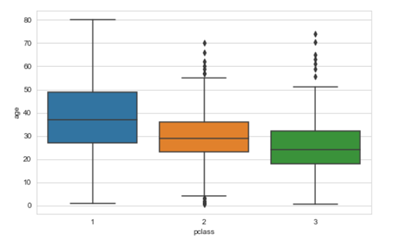

    plt.figure(figsize=(8, 5))
    sns.boxplot(x='pclass', y='age', data=df_titanic)  # age distribution per passenger class

Histograms and scatter plots are also useful visualization techniques for identifying outliers.
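To make the histogram idea concrete without any plotting library, here is a minimal sketch that bins values and prints a text histogram. The `ages` list is made up for illustration (it is not taken from titanic.csv), and `histogram_counts` is a hypothetical helper name. An outlier shows up as a lone bar separated from the bulk of the data by empty bins.

```python
from collections import Counter

def histogram_counts(values, bin_width):
    """Count values per bin; each key is the lower edge of a bin."""
    counts = Counter((v // bin_width) * bin_width for v in values)
    first, last = min(counts), max(counts)
    # Keep empty bins so that gaps in the distribution stay visible.
    return {start: counts.get(start, 0)
            for start in range(first, last + bin_width, bin_width)}

# Hypothetical ages, made up for illustration only.
ages = [22, 25, 27, 29, 31, 33, 35, 36, 38, 41, 44, 47, 52, 95]
bin_width = 10
counts = histogram_counts(ages, bin_width)

for start, count in counts.items():
    print(f"{start:>3}-{start + bin_width - 1:<3} {'#' * count}")
```

In this made-up sample, the bins from 60 to 89 are empty and the single value in the 90-99 bin stands apart, which is the visual cue a plotted histogram would give as well.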

On top of this, we can find outliers mathematically using two methods: the Z-score and the interquartile range (IQR) score.

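Z-score method: the data is rescaled so that its mean is 0 and its standard deviation (SD) is 1, as in a standard normal distribution. The z-score of a value x is z = (x - mean) / SD, that is, how many standard deviations x lies from the mean; values whose z-score exceeds a chosen threshold (3 in the example below) are treated as outliers.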

Consider the ages of a group of kids, collected during stage one of the data science life cycle. Before going into further analysis, the data scientist wants to remove outliers. The code and output below show the essence of finding outliers with the Z-score method.

    import numpy as np

    kids_age = [1, 2, 4, 8, 3, 8, 11, 15, 12, 6, 6, 3, 6, 7, 12, 9, 5, 5, 7, 10, 10, 11, 13, 14, 14]

    # np.std defaults to the population standard deviation (ddof=0)
    mean = np.mean(kids_age)
    std = np.std(kids_age)
    print("Mean of the kid's age in the given series :", mean)
    print("STD Deviation of kid's age in the given series :", std)

    threshold = 3  # flag values more than 3 standard deviations above the mean
    outlier = []
    for i in kids_age:
        z = (i - mean) / std  # z-score of this value
        if z > threshold:
            outlier.append(i)
    print('Outlier in the dataset is (Teen agers):', outlier)

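Output reported for this example:

Mean of the kid’s age in the given series: 2.6666666666666665

STD Deviation of kid’s age in the given series: 3.3598941782277745

Outlier in the dataset is (Teenagers): [15]

Note that these figures do not match the kids_age list shown above. For that list the mean is 8.08 and the standard deviation is about 3.94, so the age 15 has a z-score of about 1.76 and is not flagged at a threshold of 3. The reported output appears to come from a different, smaller sample.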
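As a cross-check, the same idea can be sketched with only the standard library. This is a minimal sketch rather than the article's own code: `zscore_outliers` is a hypothetical helper, it flags values on both sides of the mean by using the absolute z-score, and the `sample` list is made up for illustration. It uses the population standard deviation, which is also what `np.std` computes by default.

```python
from statistics import fmean, pstdev

def zscore_outliers(values, threshold=3.0):
    """Return the values whose absolute z-score exceeds the threshold."""
    mean = fmean(values)
    std = pstdev(values)  # population SD (divides by n)
    if std == 0:
        return []  # all values identical, nothing can be an outlier
    return [v for v in values if abs((v - mean) / std) > threshold]

# The list of ages from the article.
kids_age = [1, 2, 4, 8, 3, 8, 11, 15, 12, 6, 6, 3, 6, 7, 12,
            9, 5, 5, 7, 10, 10, 11, 13, 14, 14]

# Hypothetical sample with one extreme value, made up for illustration.
sample = [10, 11, 12, 11, 10, 12, 11, 10, 12, 11, 10, 12, 11, 50]

print(zscore_outliers(kids_age))  # [] : no age is 3 SDs away from the mean
print(zscore_outliers(sample))    # [50]
```

With a threshold of 3, nothing in `kids_age` is flagged, because the largest absolute z-score in that list is below 2. Lowering the threshold (2 and 2.5 are also commonly used) makes the rule more aggressive.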

IQR score method: the data is divided into quartiles (Q1, Q2, and Q3). Please refer to the picture "Outliers Scaling" above. The interquartile range is IQR = Q3 - Q1, and any value below Q1 - 1.5 * IQR or above Q3 + 1.5 * IQR is treated as an outlier.

Let's take a series of junior boxing weight categories as the dataset and figure out the outliers.

    import numpy as np
    import seaborn as sns

    jr_boxing_weight_categories = [25, 30, 35, 40, 45, 50, 45, 35, 50, 60, 120, 150]

    # NumPy 1.22+ calls this argument `method`; older versions use `interpolation`
    Q1 = np.percentile(jr_boxing_weight_categories, 25, method='midpoint')
    Q2 = np.percentile(jr_boxing_weight_categories, 50, method='midpoint')
    Q3 = np.percentile(jr_boxing_weight_categories, 75, method='midpoint')

    IQR = Q3 - Q1
    print('Interquartile range is', IQR)

    # Anything outside [low_lim, up_lim] is treated as an outlier
    low_lim = Q1 - 1.5 * IQR
    up_lim = Q3 + 1.5 * IQR
    print('low_limit is', low_lim)
    print('up_limit is', up_lim)

    outlier = []
    for x in jr_boxing_weight_categories:
        if x > up_lim or x < low_lim:
            outlier.append(x)
    print('the outlier in the dataset is', outlier)

Interquartile range is 20.0

low_limit is 5.0

up_limit is 85.0

the outlier in the dataset is [120, 150]

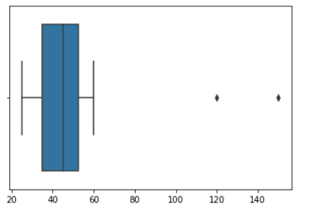

    sns.boxplot(x=jr_boxing_weight_categories)

Looking at the box plot, we can see where the outliers sit.
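The IQR rule can also be sketched with the standard library alone. This is a minimal sketch under one stated assumption: `statistics.quantiles` with `method="inclusive"` interpolates linearly between data points instead of taking the midpoint, so its Q3 for this series is 52.5 rather than 55. The helper name `iqr_limits` is hypothetical.

```python
from statistics import quantiles

def iqr_limits(values, k=1.5):
    """Return (lower, upper) limits of the IQR rule: Q1 - k*IQR and Q3 + k*IQR."""
    q1, _q2, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr

# The junior boxing weight series from the article.
weights = [25, 30, 35, 40, 45, 50, 45, 35, 50, 60, 120, 150]

low, high = iqr_limits(weights)
outliers = [w for w in weights if w < low or w > high]

print(low, high)   # 8.75 78.75
print(outliers)    # [120, 150]
```

The limits differ from the 5.0 and 85.0 obtained with the midpoint rule, yet the same two weights are flagged. Quartile conventions vary between libraries, so it is worth stating which one was used when reporting limits.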

So far, we have discussed what outliers are, what they look like, whether they are good or bad for a dataset, and how to find them using matplotlib/seaborn visualizations and statistical methods.

Now, let's conclude with correcting or removing outliers and making an appropriate decision. We can use the same Z-score and IQR score limits as conditions to correct or remove outliers as needed. As mentioned earlier, outliers are not necessarily errors; they are values that are unusual compared with the rest of the data.

I hope this article helps you understand outliers from every angle. I will come back with another topic shortly. Until then, bye for now! Thanks for reading! Cheers!
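The two options, removing and correcting, can be sketched as follows. This is a minimal illustration and not a recommendation for any particular dataset: the helper names `drop_outliers` and `cap_outliers` are hypothetical, capping (clipping values to the limits) is only one possible way to "correct" an outlier, and the limits 5.0 and 85.0 are the ones printed by the IQR example for the boxing weight series.

```python
def drop_outliers(values, low, high):
    """Remove every value that falls outside [low, high]."""
    return [v for v in values if low <= v <= high]

def cap_outliers(values, low, high):
    """Keep every value, but clip those outside [low, high] to the nearest limit."""
    return [min(max(v, low), high) for v in values]

# The junior boxing weight series and the IQR limits from the article.
weights = [25, 30, 35, 40, 45, 50, 45, 35, 50, 60, 120, 150]
low_lim, up_lim = 5.0, 85.0

dropped = drop_outliers(weights, low_lim, up_lim)
capped = cap_outliers(weights, low_lim, up_lim)

print(dropped)  # [25, 30, 35, 40, 45, 50, 45, 35, 50, 60]
print(capped)   # [25, 30, 35, 40, 45, 50, 45, 35, 50, 60, 85.0, 85.0]
```

Dropping shrinks the dataset, while capping keeps the row count and only limits the influence of the extreme values. Which one is appropriate depends on whether the unusual values are genuine observations worth keeping.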

The media shown in this article are not owned by Analytics Vidhya and are used at the Author’s discretion.
