Scraping Data Using Octoparse for Product Assessment

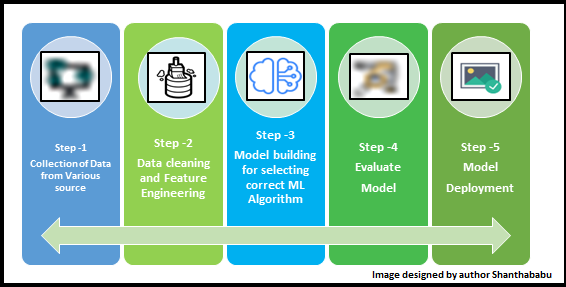

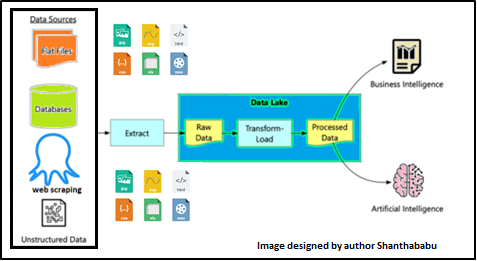

In today’s data-driven world, it is crucial to have access to reliable and relevant data for informed decision-making. Often, data from external sources is obtained through processes like pulling or pushing from data providers and subsequently stored in a data lake. This marks the beginning of a data preparation journey where various techniques are applied to clean, transform, and apply business rules to the data. Ultimately, this prepared data serves as the foundation for Business Intelligence (BI) or AI applications, tailored to meet individual business requirements. Join me as we dive into the world of data scraping with Octoparse and discover its potential in enhancing data-driven insights.

This article was published as a part of the Data Science Blogathon.

Table of contents

- Web Scraping and Analytics

- Data Providers

- What is Web-Scraping and Why?

- Why Web-Scraping?

- Web Scraping Process

- Features

- Features

- Open Target Webpage

- Creating Workflow and New-Task

- Scraping the Content from the Identified Web-page

- Customizing and Validating the Data using the Preview Feature

- Extract the Data using Workflow

- Save Configuration, and Run the Workflow

- Schedule-task

- Data Extraction – Process starts

- Data ready to Export

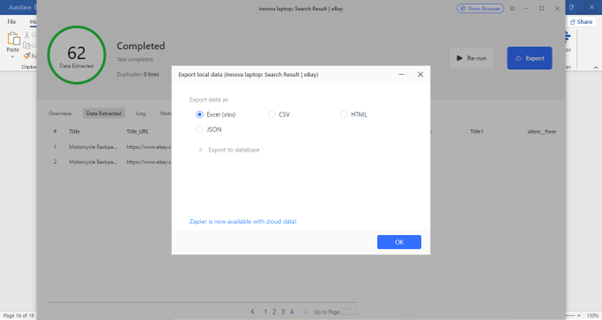

- Choose the Data Format for Further Usage

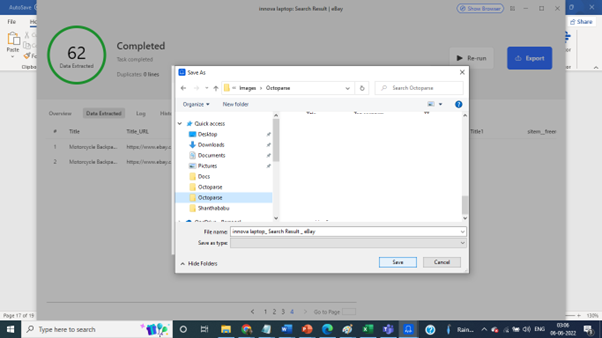

- Saving the Extracted Data

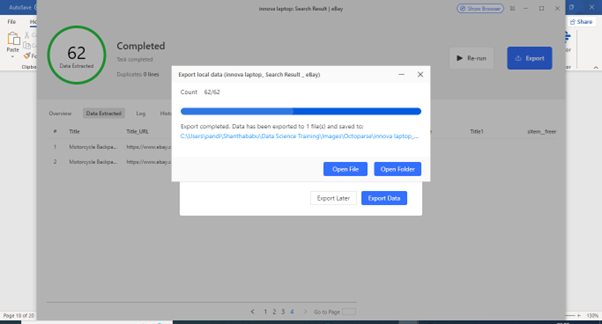

- Extracted Data is Ready in the Specified-format

- Conclusion

- Frequently Asked Questions

Web Scraping and Analytics

Yes! In some cases, we have to grab the data from an external source using web-scraping techniques and then work that data hard to uncover the insights it holds.

At the same time, we should not forget to look for relationships and correlations between features, and to explore further opportunities by applying mathematics, statistics, and visualisation techniques. On top of that, we select and apply machine learning algorithms for prediction, classification, or clustering to improve business opportunities and prospects. It is a tremendous journey.

Excellent data collection from the right source is critical to the success of a data platform project. In this article, let’s try to understand the process of gathering data using scraping techniques with zero code.

Before getting into this, let’s get a better understanding of a few things.

Data Providers

As I mentioned earlier, the data for Data Science and Data Analytics (DS/DA) can come from any source. Here, our focus is on web-scraping processes.

What is Web-Scraping and Why?

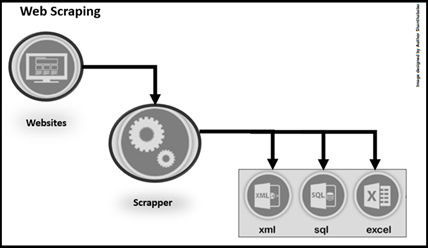

Web-Scraping is the process of extracting data, in varying volumes and in a specific format, from one or more websites so that it can be sliced and diced for Data Analytics and Data Science. The output file format depends on the business requirements: it could be .csv, JSON, .xlsx, .xml, etc. Sometimes we can store the data directly in a database.

Why Web-Scraping?

Web-Scraping is critical to the process: it allows quick and economical extraction of data from different sources. Diverse data processing techniques can then be applied to gather insights that help us understand the business better and keep track of a company’s brand and reputation, all while staying within legal limits.

Web Scraping Process

Request vs. Response

The first step is to send a request to the target website for the contents of a particular URL. The website responds with the data in the format that the program or script asked for.
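For readers curious about what a no-code tool does under the hood at this step, here is a minimal Python sketch of the request side using only the standard library. The URL, the user-agent string, and the helper names `build_request` and `fetch_html` are assumptions made up for illustration; they are not part of Octoparse.

```python
from urllib.request import Request, urlopen

# Hypothetical values for illustration only; use a page you are allowed to scrape.
TARGET_URL = "https://example.com/products?q=laptop"
USER_AGENT = "my-scraper-demo/0.1"


def build_request(url: str) -> Request:
    """Describe the HTTP GET request without sending it."""
    return Request(url, headers={"User-Agent": USER_AGENT, "Accept": "text/html"})


def fetch_html(url: str, timeout: float = 10.0) -> str:
    """Send the request and return the response body decoded as text."""
    with urlopen(build_request(url), timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


request = build_request(TARGET_URL)
print(request.get_method(), request.full_url)
# To actually fetch the page (needs network access): html = fetch_html(TARGET_URL)
```

The request is built separately from the fetch so that the headers and URL can be inspected before anything is sent over the network.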

Parsing and Extraction

Parsing is usually done programmatically (in Java, .NET, Python, etc.). It is the structured process of taking content in the form of text, such as the HTML of a page, and producing structured output from which the required data can be extracted.
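As a rough illustration of parsing and extraction, the sketch below feeds a small, made-up HTML snippet to Python's built-in `html.parser` and collects product titles. The markup, the `item-title` class name, and the `TitleParser` class are hypothetical; a real page will have its own structure.

```python
from html.parser import HTMLParser


class TitleParser(HTMLParser):
    """Collects the text inside every <h3 class="item-title"> element."""

    def __init__(self):
        super().__init__()
        self.titles = []
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value) pairs for the opening tag.
        if tag == "h3" and ("class", "item-title") in attrs:
            self._in_title = True
            self.titles.append("")

    def handle_data(self, data):
        if self._in_title:
            self.titles[-1] += data

    def handle_endtag(self, tag):
        if tag == "h3":
            self._in_title = False


# Made-up markup standing in for a downloaded listing page.
SAMPLE_HTML = """
<ul>
  <li><h3 class="item-title">Wireless Mouse</h3><span class="price">$12.99</span></li>
  <li><h3 class="item-title">USB-C Cable</h3><span class="price">$7.50</span></li>
</ul>
"""

parser = TitleParser()
parser.feed(SAMPLE_HTML)
titles = [title.strip() for title in parser.titles]
print(titles)
```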

Data Downloading

The last part of scraping is downloading and saving the data in CSV or JSON format, or in a database. We can then use this file as input for Data Analytics and Data Science.
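The saving step can be sketched in a few lines of Python with the standard `csv` and `json` modules. The records, field names, and file names below are invented for illustration, and the files are written to a temporary directory so the sketch does not touch your working folder.

```python
import csv
import json
import tempfile
from pathlib import Path

# Hypothetical scraped records; a real run would produce these from the parsing step.
records = [
    {"title": "Wireless Mouse", "price": 12.99},
    {"title": "USB-C Cable", "price": 7.50},
]

out_dir = Path(tempfile.mkdtemp())
csv_path = out_dir / "products.csv"
json_path = out_dir / "products.json"

# CSV: one header row followed by one row per record.
with csv_path.open("w", newline="", encoding="utf-8") as f:
    writer = csv.DictWriter(f, fieldnames=["title", "price"])
    writer.writeheader()
    writer.writerows(records)

# JSON: the same records as a list of objects.
with json_path.open("w", encoding="utf-8") as f:
    json.dump(records, f, indent=2)

print("Saved", csv_path.name, "and", json_path.name)
```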

There are many Web-Scraping tools and software packages available in the market. Let’s review a few of them.

ProWebScraper

ProWebScraper is one of the most powerful web-scraping tools, and it is web-based. It is known for its customer service and cost-effectiveness. It helps us extract data from websites and is designed to make the scraping process completely uncomplicated. It requires no coding: just point and click on the required item, and the output is extracted into our target dataset.

Features

- Completely effortless exercise

- It can be used by anyone who knows how to browse the web

- You can extract the desired data in a few seconds with simple clicks.

- It can scrape Texts, Table data, Links, Images, Numbers and Key-Value Pairs.

- It can scrape multiple pages.

- It can be scheduled based on the demand (Hourly, Daily, Weekly, etc.)

- Highly scalable: it can run multiple scrapers simultaneously across thousands of pages.

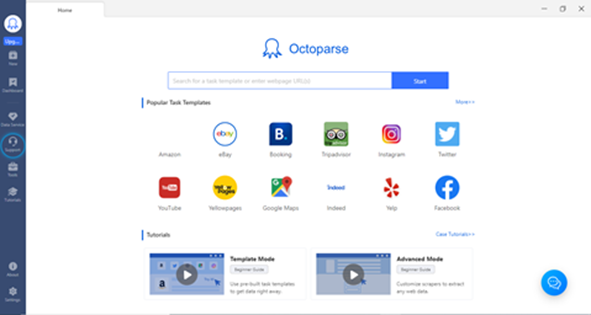

Let’s focus on Octoparse.

The web data extraction tool Octoparse stands out from other tools in the market. You can extract the required data without coding, build scrapers through a modern visual interface, and automatically scrape data from websites, with the added benefit of a SaaS web-data platform.

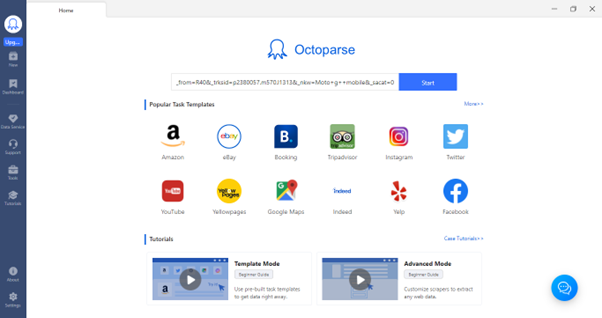

Octoparse provides ready-to-use scraping templates for different purposes, including Amazon, eBay, Twitter, Instagram, Facebook, BestBuy and many more. It lets us tailor the scraper to our specific requirements.

Compared with other tools available in the market, it is especially beneficial at the organisational level, where web-scraping demands are massive. We can use it across multiple industries such as e-commerce, travel, investment, social media, cryptocurrency, marketing, and real estate.

Features

- Users with or without coding experience can easily use it to extract information from websites.

- The zero-code experience is fantastic.

- Indeed, it makes life easier and faster to get data from websites without code and with simple configurations.

- It can scrape the data from Text, Table, Web-Links, Listing-pages and images.

- It can download the data in CSV and Excel formats from multiple pages.

- It can be scheduled based on the demand (Hourly, Daily, Weekly, etc.)

- Excellent API integration feature, which delivers the data automatically to our systems.

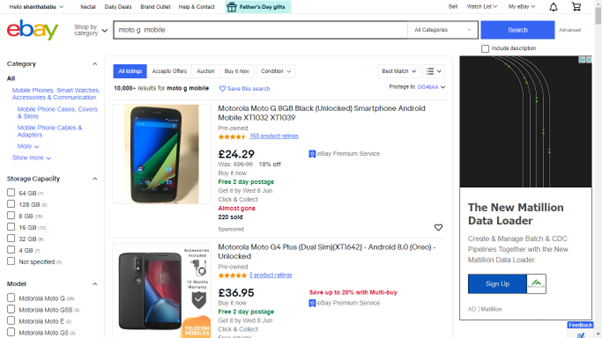

Now it is time to scrape eBay product information using Octoparse.

To get product information from eBay, let’s open eBay, search for or select a product, and copy the URL.

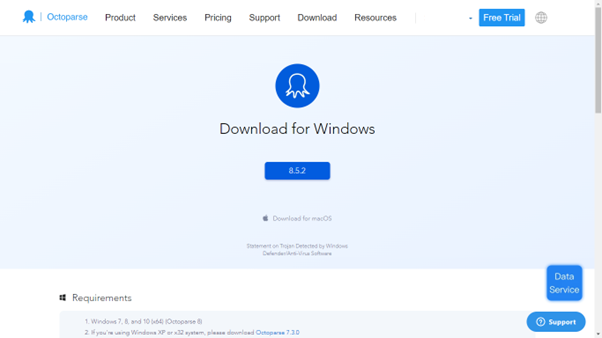

Before starting, download Octoparse version 8.5.2, which is used for this demo (https://www.octoparse.com/download/windows).

We can complete the entire process in a few steps:

- Open the target webpage

- Creating a workflow

- Scraping the content from the specified web pages

- Customizing and validating the data using the preview feature

- Extract the data using workflow

- Scheduling

Open Target Webpage

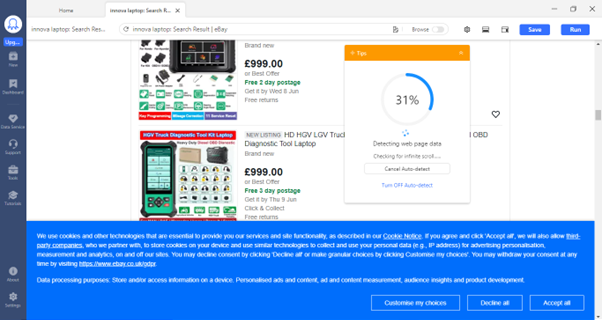

Let’s log in to Octoparse, paste the URL, and hit the Start button. Octoparse starts auto-detection and pulls the details for you in a separate window.

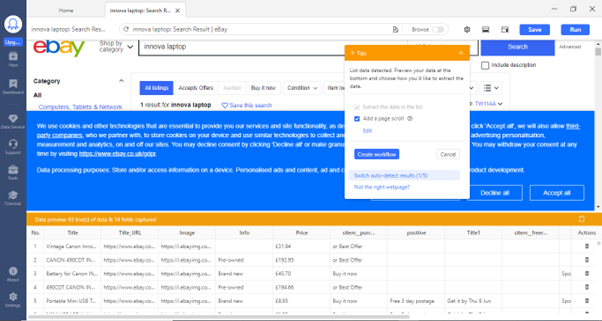

Creating Workflow and New-Task

Wait until the search reaches 100% so that you will get data for your needs.

During the detection, Octoparse will select the critical elements for your convenience and save our time.

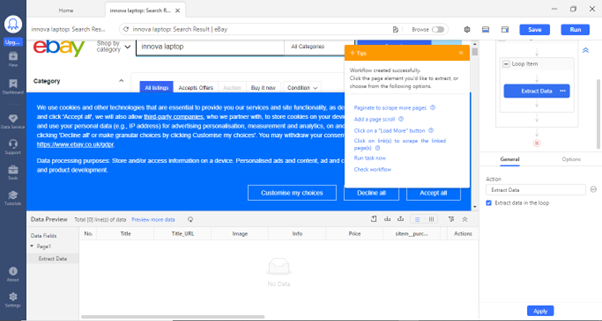

After your verification on the page, click on Create Workflow.

Note: To remove the cookies, please turn off the browser tag.

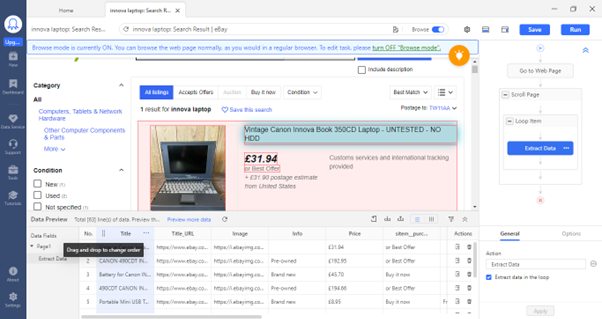

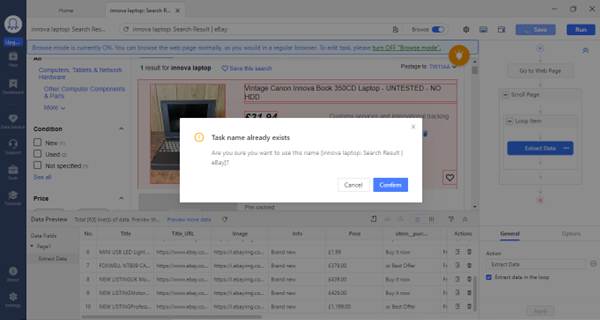

Scraping the Content from the Identified Web-page

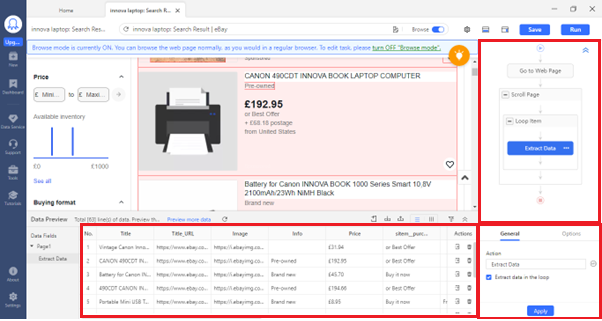

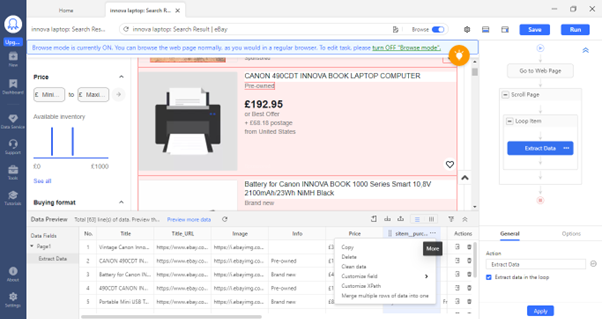

Once we confirm the detection, the workflow template is ready for configuration, with a data preview at the bottom. There you can configure the columns as needed (copy, delete, customize a column, etc.).

Customizing and Validating the Data using the Preview Feature

You can add your custom field(s) in the Data preview window, import and export the data, and remove duplicates.

You can configure the list of columns as required. Once done, you can preview an individual line item by clicking on the right side of the pane.
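To make the idea of removing duplicates concrete, here is a small Python sketch of one common approach: keep the first row seen for each combination of key columns. It only illustrates the concept and is not how Octoparse implements the feature; the rows, column names, and the `remove_duplicates` helper are hypothetical.

```python
def remove_duplicates(rows, key_fields):
    """Return rows with later duplicates dropped, keeping first-seen order."""
    seen = set()
    unique_rows = []
    for row in rows:
        # Two rows count as duplicates when all key fields match.
        key = tuple(row.get(field) for field in key_fields)
        if key not in seen:
            seen.add(key)
            unique_rows.append(row)
    return unique_rows


# Made-up preview rows; the third row repeats the first.
rows = [
    {"title": "Wireless Mouse", "price": "$12.99"},
    {"title": "USB-C Cable", "price": "$7.50"},
    {"title": "Wireless Mouse", "price": "$12.99"},
]

unique_rows = remove_duplicates(rows, key_fields=("title", "price"))
print(len(rows), "->", len(unique_rows))
```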

Extract the Data using Workflow

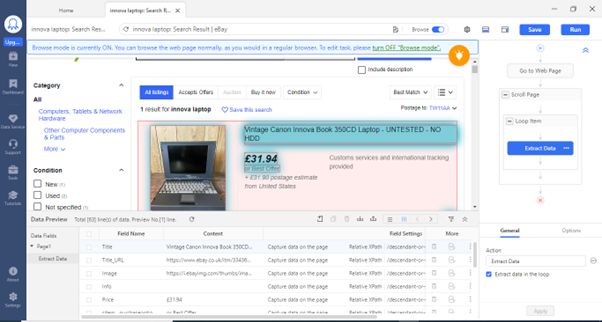

In the Workflow window, clicking each step (Go to Web Page, Scroll Page, Loop Item, Extract Data) moves the built-in browser to the corresponding point in the process, and you can add new steps.

We can configure the timeout, whether the output is in JSON format, what happens before and after the action is performed, and how often the action should run. Once the required configuration is done, we can run the task and extract the data.

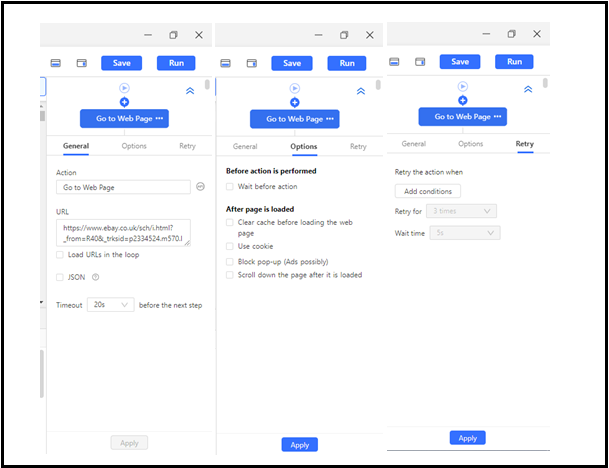

Save Configuration, and Run the Workflow

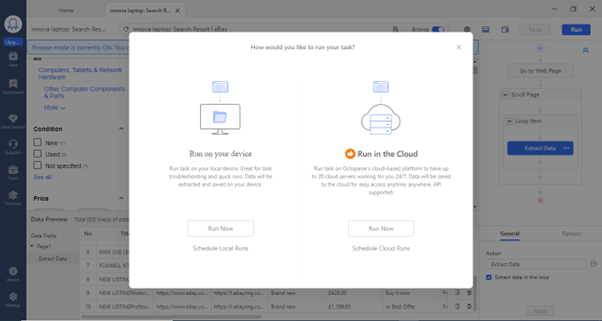

Schedule-task

You can run it on your device or in the cloud.

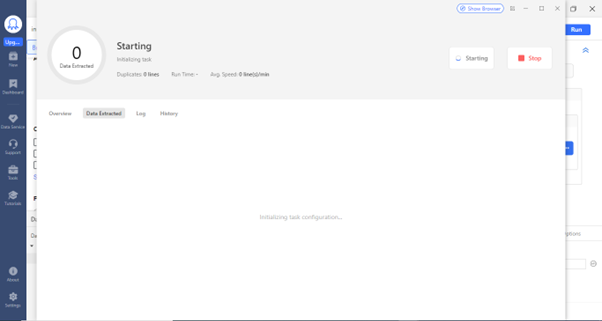

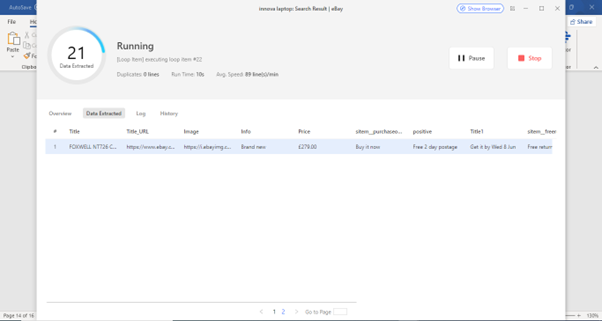

Data Extraction – Process starts

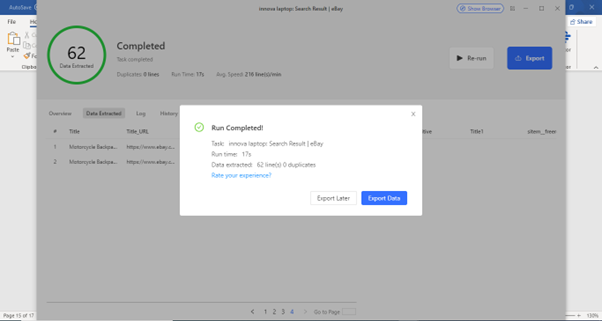

Data ready to Export

Choose the Data Format for Further Usage

Saving the Extracted Data

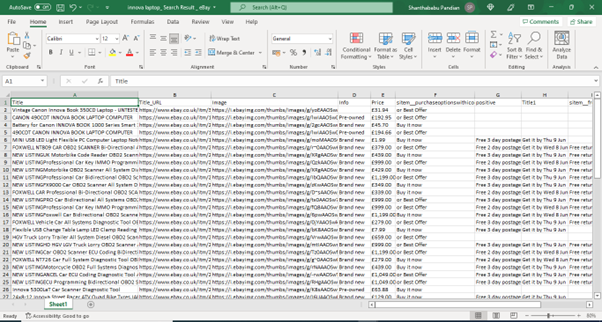

Extracted Data is Ready in the Specified-format

The data is now ready for further use in either Data Analytics or Data Science.

What is next? Without a doubt, load the data into a Jupyter notebook and start exploring it with an extensive EDA (exploratory data analysis) process.
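As a starting point for that EDA step, the sketch below reads a small CSV export and computes a few summary statistics on the price column. In a notebook you would more likely reach for pandas; the standard library is used here only to keep the sketch dependency-free. The sample data, the `title` and `price` column names, and the `parse_price` helper are assumptions, so adjust them to match your actual export.

```python
import csv
import io
import statistics

# Hypothetical export; in practice, open the CSV file saved from Octoparse instead.
SAMPLE_EXPORT = """title,price
Wireless Mouse,$12.99
USB-C Cable,$7.50
Mechanical Keyboard,$24.00
"""


def parse_price(text: str) -> float:
    """Turn a string such as '$1,299.00' into a float."""
    return float(text.replace("$", "").replace(",", "").strip())


rows = list(csv.DictReader(io.StringIO(SAMPLE_EXPORT)))
prices = [parse_price(row["price"]) for row in rows]

# A first look at the numeric column: size, range, and central tendency.
summary = {
    "count": len(prices),
    "min": min(prices),
    "max": max(prices),
    "mean": round(statistics.mean(prices), 2),
}
print(summary)
```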

Conclusion

So far, we have explored various aspects, and I am sure you now understand data sources, data processing, the Data Science/ML lifecycle, what Web-Scraping is, the process involved in it, the tools in the market and their key features, along with detailed steps to extract product data from eBay using Octoparse. Here is a quick summary of this article and the key takeaways:

- Importance of Data Source

- Data Science Lifecycle

- What is Web Scraping and Why

- The process involved in Web Scraping

- Top Web Scraping tools and their overview

- Octoparse Use case – Data Extraction from eBay

- Data Extraction using Octoparse – detailed steps (Zero Code)

I have enjoyed using this web-scraping tool and am impressed with its features; you can try it to extract data for free for your Data Science and Analytics practice projects.

Frequently Asked Questions

A. Octoparse is a web scraping tool used for extracting data from websites. It allows users to automate the extraction process, collect data from various sources, and save it in structured formats for analysis.

A. Octoparse is a reliable web scraping tool offering a user-friendly interface, robust features, and good customer support. It is widely used and recommended by users for its effectiveness in data extraction.

A. Octoparse offers both free and paid versions. The free version provides limited functionality and is suitable for basic scraping needs. The paid version, Octoparse Professional, offers advanced features and is available as a subscription-based service.

A. Octoparse offers a paid version, Octoparse Professional, which provides additional features and capabilities beyond what is available in the free version. The paid version provides more flexibility and power for complex scraping tasks.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.