Top 50 Data Warehouse Interview Questions and Answers

Introduction

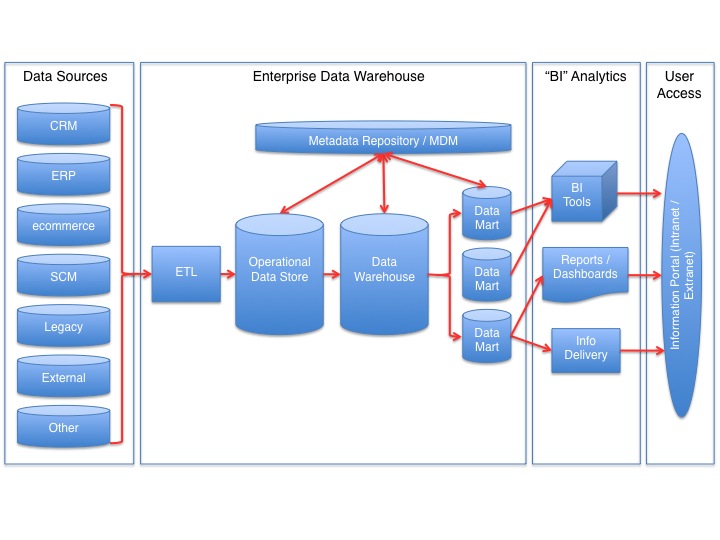

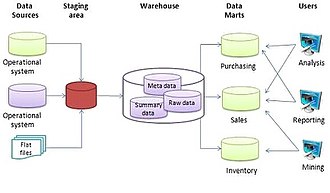

Before diving into the interview questions, let’s begin by understanding the data warehouse’s overview. A data warehouse is a system to collect and manage extensive data from multiple sources, such as transactional systems, log files, and external data sources. The data is then organized and structured for seamless retrieval, querying, and analysis. Its main objective is to act as a central repository for an organization’s historical data, supporting reporting, business intelligence, and data mining.

Data warehouses can handle vast amounts of data and accommodate complex queries and analyses. They adopt a multidimensional model architecture, ensuring rapid and efficient querying and data aggregation. Data is often stored in a denormalized format to optimize queries further, prioritizing faster retrieval while consuming more storage space. Additionally, data warehouses commonly include ETL (Extract, Transform, Load) processes, which are responsible for extracting data from various sources, transforming it into a suitable format for warehouse loading, and ultimately loading it for storage and analysis.

This article was published as a part of the Data Science Blogathon.

Table of contents

Beginner Level Data Warehouse Interview Questions

Q1. What is a data warehouse, and how is it different from a database?

This is one of the most commonly asked data warehouse interview questions. A data warehouse and a database are both systems used for storing and managing data, but they are used for different purposes and have some key differences.

- A database is a system used for storing and managing data in an organized, structured way. Databases are used for transactional systems, where data is constantly added, updated, and deleted in real time. They are optimized for transactional processing and support many data types, such as text, numbers, and images.

- On the other hand, a data warehouse is a system used specifically for storing and managing large amounts of historical data. The primary purpose of a data warehouse is to provide a central repository for an organization’s historical data, which can be used for reporting, business intelligence, and data mining. Data warehouses are designed to handle large amounts of data and support complex queries and data analysis. They use a specific architecture, called a multidimensional model, that allows for fast and efficient querying and aggregation of data.

In summary, a database is optimized for transactional processing, while a data warehouse is optimized for analytical processing. While databases have the most recent data, data warehouses have a more extended history of data.

Q2. Name some popular data warehousing tools and technologies.

Some popular data warehousing tools and technologies include Microsoft SQL Server, Oracle Exadata, Amazon Redshift, Google BigQuery, Snowflake, and Apache Hive. These platforms provide robust capabilities for data integration, transformation, storage, and analytical processing to support data warehousing requirements.

Q3. Explain the concept of ETL (Extract, Transform, Load) in data warehousing.

Next on the list of intermediate-level data warehouse interview questions is your experience with ETL. ETL stands for Extract, Transform, Load. It is a process that moves data from various sources, such as transactional systems, log files, and external data sources, into a data warehouse for reporting, business intelligence, and data mining.The process of ETL typically involves the following steps:

- Extract: The first step is to extract data from the various sources that need to be loaded into the data warehouse. This can include pulling data from databases, flat files, or APIs.

- Transform: Once the data is extracted, it needs to be transformed into a format that can be loaded into the data warehouse. This can include cleaning and standardizing the data, removing duplicates, and changing data types.

- Load: The final step is to load the transformed data into the data warehouse. This can include loading the data into a staging area, which can be further changed and cleaned before being loaded into the final data warehouse.

ETL processes can be complex and time-consuming, especially when dealing with large amounts of data and multiple sources. To make it more efficient, companies often use ETL tools, such as Informatica, DataStage, and Talend, that automate and manage the ETL process. These tools allow for scheduling, monitoring, and controlling ETL jobs.

In my experience, the ETL process is a crucial part of data warehousing; it helps to ensure that the data stored in the data warehouse is accurate, consistent, and up-to-date.

Q4. What is the purpose of a dimensional model in a data warehouse?

The dimensional model in a data warehouse organizes data into easily accessible structures, such as star schema or snowflake schema. Its purpose is to simplify complex queries, improve query performance, and provide a user-friendly, intuitive way to analyze data. Dimensional models typically consist of fact tables containing measures and dimension tables with descriptive attributes.

Q5. What are the various techniques for data summarization in a data warehouse?

Data summarization techniques in data warehouse interview questions is yet another important aspect every applicant is assessed on. Data summarization techniques in a data warehouse include:

- Roll-up: aggregating data from multiple dimensions to a higher level of granularity.

- Drill-down: breaking down data to a lower level of granularity.

- Drill-through: navigating from a higher-level summary to detailed data.

- Pivot: transforming data from a vertical to a horizontal representation.

- Cube: pre-calculating and storing summarized data in a multidimensional structure.

- Grouping Sets: aggregating data based on multiple groupings in a single query.

- Materialized Views: storing pre-calculated data in a separate database object for improved query performance.

- Sampling: summarizing data by analyzing a subset of the data instead of the entire dataset.

source: wikimedia.org

Q6. What is the role of a data mart in a data warehouse environment?

A data mart is a subset of a data warehouse designed to serve a specific business department or function. The role of a data mart is to provide focused and optimized access to detailed data for a particular line of business, such as sales, marketing, or finance.Data marts are created to address the needs of a specific department or business function, allowing them to have a dedicated, customized data repository tailored to their particular requirements. This approach can improve the performance of data analysis and reporting, reduce data redundancy, and enhance data security by limiting access to sensitive data.

By providing a subset of the data from the data warehouse that is relevant to a particular department, data marts can also reduce the complexity of the data warehouse and improve its scalability. Additionally, the appropriate department can develop and maintain data marts, giving them more control over their data and its usage.

Q7. What is a fact table and a dimension table in a data warehouse?

In a data warehouse, a fact table stores quantitative and numerical data, such as sales revenue or quantities sold. It typically contains foreign keys referencing various dimension tables. Dimension tables, on the other hand, hold descriptive attributes related to each dimension, like customer names or product categories. Fact and dimension tables together form the foundation of a star schema or snowflake schema.

Q8. Differentiate between snowflake schema and star schema.

Both snowflake and star schemas are dimensional data models used in data warehousing. In a star schema, dimensions are denormalized and connected directly to the fact table, forming a star-like structure. In contrast, the snowflake schema normalizes dimension tables into multiple related tables. While star schema offers simpler queries, the snowflake schema optimizes storage but may require more complex joins.

Q9. What are the main challenges faced while implementing a data warehouse?

Implementing a data warehouse comes with several challenges, including data integration from diverse sources, ensuring data quality and consistency, dealing with large data volumes, optimizing query performance, managing metadata, and maintaining security and compliance standards.

Q10. How do you handle data quality issues in a data warehouse?

Handling data quality issues involves performing data profiling to identify discrepancies, cleansing and standardizing data, setting up validation rules during the ETL process, implementing data quality checks, and establishing data governance practices to monitor and improve data quality continuously.

Q11. What are the primary differences between a data warehouse and a data lake?

A data warehouse is a structured and centralized repository designed for historical data analysis, using ETL processes to clean, transform, and organize data. In contrast, a data lake is a vast storage repository that stores raw and unstructured data, accommodating both structured and unstructured data types. Data lakes do not require upfront schema definitions, enabling data exploration and flexible analysis.

Moderate Level Data Warehouse Interview Questions

Q12. Discuss the benefits and drawbacks of using an on-premise data warehouse versus a cloud-based one.

On-premise data warehouses offer more control over data and security but require substantial upfront investment and maintenance costs. Cloud-based warehouses provide scalability, flexibility, and cost-effectiveness, but reliance on the internet and potential data security concerns are drawbacks.

Q13. Explain slowly changing dimensions (SCD) and provide examples of different types.

Slowly Changing Dimensions (SCD) refer to data attributes that change over time and require historical tracking. Examples include SCD Type 1 (overwrite old values), SCD Type 2 (add new rows with historical changes), and SCD Type 3 (maintain both old and new values in the same row).

Q14. How do you manage data integrity in a data warehouse?

Managing data integrity is something every candidate is asked when it comes to data warehouse interview questions. Data integrity ensures that data in a data warehouse is accurate, consistent, and complete. It is an essential aspect of data warehousing, ensuring that the data can be trusted and used for reporting, business intelligence, and data mining.Here are a few ways to handle and manage data integrity in a data warehouse:

- Data Validation: Data validation is checking the data for accuracy and completeness before loading it into the data warehouse. This can include checking for missing or invalid data, and that data conforms to specific business rules or constraints.

- Data Quality: Data quality ensures that the data is accurate and consistent. This can include removing duplicates, standardizing data, and ensuring that data conforms to specific standards.

- Data Reconciliation: Data reconciliation compares data in the data warehouse with data in the source systems. This can help to identify any discrepancies or errors in the data, which can then be corrected.

- Data Auditing: Data auditing is the process of tracking and logging changes made to the data in the data warehouse. This can include keeping a record of who made the changes, when the changes were made, and what the differences were.

- Data Governance: Data Governance is the overall management of the availability, usability, integrity, and security of the data used in an organization. It includes policies, standards, and procedures that ensure the appropriate management of data throughout its lifecycle.

- Security: Data security is protecting data from unauthorized access or changes. This can include implementing access controls and encryption to protect data from hackers or internal threats.

By implementing these best practices, you can ensure that the data in your data warehouse is accurate, consistent, and complete. This will help to ensure that the data can be trusted and used for reporting, business intelligence, and data mining.

Q15. How can you optimize the performance of a data warehouse?

Performance optimization involves using indexing, data partitioning, materialized views, query optimization, and hardware improvements like SSD storage or parallel processing to reduce query response times and enhance overall system efficiency.

Q16. What is data partitioning, and how does it improve query performance?

Data partitioning involves dividing large tables into smaller, manageable segments based on a defined criterion (e.g., date range). It enhances query performance by restricting the amount of data scanned during query execution, thereby minimizing processing time.

Q17. Describe the concept of conformed dimensions and their importance in data warehousing.

Conformed dimensions are dimensions that are consistent and standardized across multiple data marts or subject areas. Using conformed dimensions ensures uniformity in data and facilitates data integration, enabling users to analyze data across different business processes seamlessly.

Q18. What is a data mart, and how is it different from a data warehouse?

A data mart is a smaller, specialized subset of a data warehouse, focusing on specific business functions or user groups. Unlike data warehouses, data marts are designed for easy accessibility and are built using a dimensional modeling approach.

Q19. How do you handle incremental data loading in a data warehouse?

Incremental data loading involves adding only the new or modified data since the last update. It can be achieved through change data capture (CDC) techniques, using timestamp or flag columns, or by tracking new records through data comparison.

Q20. Discuss the role of metadata in data warehousing.

Metadata provides information about data sources, structures, relationships, and transformations in the data warehouse. It aids in data understanding, lineage, and governance, simplifies data integration, and assists users in locating relevant data for analysis.

Q21. How do you design a data warehouse schema for an extensive, complex data set?

Designing a data warehouse schema for an extensive, complex data set can be challenging. However, by following a structured and systematic approach, you can ensure that the schema is well-designed and able to handle the complexity of the data set. Here are a few key steps you can take when designing a data warehouse schema for an extensive, complex data set:

- Understand the requirements: Before designing the schema, you must clearly understand the data warehouse’s requirements and objectives. This includes understanding the types of data stored in the warehouse, the queries and analyses performed on the data, and the performance requirements.

- Normalize the data: The first step in designing the schema is to normalize the data. This involves breaking down the data into its most minor logical components so that it can be stored in a structured and organized manner. By normalizing the data, you can ensure that it is consistent, reliable, and easy to maintain.

- Choose a schema design: Several schema designs can be used for a data warehouse, such as star schema, snowflake schema, and fact constellation schema. Each schema has advantages and disadvantages, so choosing a design that best fits your data and requirements is essential.

- Define the dimensions and facts: Once you have chosen a schema design, the next step is to define the dimensions and facts of the data. Dimensions are the characteristics of the data that you want to analyze, such as time, location, and product. Facts are the measurements or metrics you want to track, such as sales or revenue.

- Optimize for performance: After the schema is designed, it is essential to optimize it for performance. This can include using indexes, partitioning the data, and denormalizing the data to minimize the number of joins required for queries.

- Test the schema: Before implementing it, it is essential to ensure that it meets the requirements and performs well. This can include running sample queries and analyzing the performance of the schema.

- Document the schema: Finally, it is essential to document the schema, including the design decisions made and the reasoning behind them, so that others can understand and maintain the schema in the future.

It’s important to note that the above steps are a general guide, and the specifics of the design will depend on the data set and the organization’s requirements.

Q22. Explain the concept of late arriving data (LAD) in data warehousing and how it can be handled.

Late arriving data refers to data that arrives after the expected load time due to delays or system issues. It can be handled by implementing processes like data staging, time window checks, data deduplication, and timestamp-based logic to update or insert the data correctly.

Q23. How does the choice of data modeling technique (e.g., dimensional, relational) impact the performance of a data warehouse?

The choice of data modeling technique directly impacts query performance. Dimensional modeling (star or snowflake) simplifies queries, leading to faster response times, whereas relational modeling may involve complex joins, slowing down analytical queries. Dimensional models are generally preferred for data warehousing due to their query optimization benefits.

Advanced Level Data Warehouse Interview Questions

Q24. Compare and contrast different data warehouse architectures (e.g., Kimball, Inmon, Data Vault).

Kimball follows a dimensional modeling approach with a star or snowflake schema, optimized for ease of use and query performance. Inmon uses a normalized schema for consistency but may require complex joins. Data Vault focuses on scalability and flexibility, using hubs, links, and satellites to enable easier data integration and accommodate changes.

Q25. Explain the concept of slowly changing facts (SCF) and provide examples of different types.

Slowly Changing Facts (SCF) refer to measures or numerical data that change over time. Examples include Type 1 (overwrite old values), Type 2 (add new rows with historical changes), and Type 3 (maintain both old and new values in the same row).

Q26. What are the critical considerations for scaling a data warehouse to handle large volumes of data?

Critical considerations for scaling a data warehouse include selecting the right hardware and storage infrastructure, optimizing data partitioning and indexing, using distributed processing techniques, and adopting cloud-based solutions for elastic scalability.

Q27. Describe the role of surrogate key generation in data warehousing and various methods for generating them.

Surrogate keys serve as unique identifiers for records in dimension tables, reducing complexities of using natural keys. Various methods for generating surrogate keys include sequential integers, UUIDs, hash functions, or using database sequences.

Q28. How can you implement real-time data integration in a data warehouse?

Real-time data integration involves capturing and processing data as it is generated. Techniques like change data capture (CDC), event-driven ETL workflows, and streaming technologies (e.g., Apache Kafka) can be used to continuously update the data warehouse with real-time data.

Q29. Discuss the role of data lineage in ensuring data accuracy and compliance in a data warehouse.

Data lineage provides a complete historical record of data’s origins and transformations, enhancing data traceability and quality assurance. It is crucial for auditing, compliance, and identifying the source of data issues in the data warehouse.

Q30. What is the role of materialized views in a data warehouse, and how do they impact performance?

Materialized views are precomputed query results stored as physical tables, speeding up query performance by reducing computation time. They act as summaries or aggregates of data, making complex queries more efficient in a data warehouse environment.

Q31. Explain the concept of data virtualization and its use in data warehousing.

Data virtualization allows access to data from various sources without physical data movement. It provides a unified view of data, simplifying data integration, reducing data duplication, and enabling real-time data access in a data warehouse.

Q32. How can you ensure data security and privacy in a data warehouse?

Data security and privacy can be ensured through encryption, role-based access controls, data masking, implementing firewalls, regular security audits, and compliance with data protection regulations like GDPR or HIPAA.

Q33. Describe the process of data profiling and its importance in data warehousing.

Data profiling involves analyzing data to understand its structure, patterns, completeness, and quality. It helps identify anomalies, data errors, and inconsistencies, enabling better data cleansing, transformation, and improved decision-making in a data warehouse.

Q34. Explain the concept of data sharding and its use in distributing data across multiple servers in a data warehouse environment.

Data sharding involves horizontally partitioning large datasets into smaller, manageable segments called shards, which are distributed across multiple servers. This approach improves data distribution, query performance, and scalability in data warehouses.

Q35. How can you implement data masking and obfuscation techniques to protect sensitive data in a data warehouse?

Data masking involves replacing sensitive data with realistic but fictitious data to protect its confidentiality. Techniques like encryption, pseudonymization, or tokenization can be used to ensure data privacy in the data warehouse, while still enabling analytical processes.

Q36. Discuss the concept of surrogate key vaulting and its importance in maintaining historical data accuracy during ETL processes.

Surrogate key vaulting involves storing the history of surrogate key values alongside their respective records. It ensures historical accuracy during ETL processes, allowing proper associations between facts and dimensions across different points in time within the data warehouse.

Coding Data Warehouse Interview Questions

Q37. Write a SQL query to retrieve the top 10 customers with the highest total purchase amount from a sales fact table.

SELECT customer_id, SUM(purchase_amount) AS total_purchase_amount

FROM sales_fact_table

GROUP BY customer_id

ORDER BY total_purchase_amount DESC

LIMIT 10;Q38. Given a dimension table containing product categories, write a SQL query to count the number of products in each category.

SELECT category_name, COUNT(*) AS product_count

FROM product_dimension_table

GROUP BY category_name;Q39. Implement a Python script to perform an incremental load of data from a source database to a data warehouse.

# Assuming you have a source connection and a data warehouse connection

import pandas as pd

source_data = pd.read_sql_query('SELECT * FROM source_table WHERE last_updated > ?', source_connection, params=[last_load_time])

data_warehouse_connection.execute('DELETE FROM target_table WHERE last_updated > ?', (last_load_time, ))

source_data.to_sql('target_table', data_warehouse_connection, if_exists='append', index=False)Q40. Write a SQL query to calculate the average sales amount for each quarter from a sales fact table.

SELECT DATEPART(QUARTER, sale_date) AS quarter, AVG(sales_amount) AS average_sales_amount

FROM sales_fact_table

GROUP BY DATEPART(QUARTER, sale_date);Q41. Create a stored procedure to handle the insertion of new data into a slowly changing dimension table.

CREATE PROCEDURE sp_InsertSCDType2

@product_id INT,

@product_name VARCHAR(100),

@current_date DATE

AS

BEGIN

IF EXISTS (SELECT * FROM product_dimension_table WHERE product_id = @product_id)

BEGIN

-- Insert new row with end date for existing record

UPDATE product_dimension_table

SET end_date = DATEADD(DAY, -1, @current_date)

WHERE product_id = @product_id AND end_date IS NULL;

END

-- Insert new row with start date for the new record

INSERT INTO product_dimension_table (product_id, product_name, start_date)

VALUES (@product_id, @product_name, @current_date);

ENDQ42. Implement a data transformation process in Python to cleanse and format data before loading it into a data warehouse.

import pandas as pd

# Assuming you have a DataFrame called 'raw_data' containing the data to be transformed

# Cleanse and format data (e.g., remove nulls, fill missing values, convert date formats, etc.)

clean_data = raw_data.dropna().apply(lambda x: x.strip() if isinstance(x, str) else x)

# Load the cleaned data into the data warehouse

clean_data.to_sql('target_table', data_warehouse_connection, if_exists='replace', index=False)

Write a SQL query to find the total revenue generated by each customer in the last six months.

SELECT customer_id, SUM(revenue) AS total_revenue

FROM sales_fact_table

WHERE sale_date >= DATEADD(MONTH, -6, GETDATE())

GROUP BY customer_id;Q44. Create a function in your preferred programming language to generate surrogate keys for a given dimension table.

# Assuming you have a function to generate surrogate keys called 'generate_surrogate_key'

# This function takes a string value from the dimension table as input and returns a unique integer surrogate key

def generate_surrogate_key(value):

# Implementation of the surrogate key generation logic

# (e.g., hash the value, map it to an integer range, etc.)

return hash(value) % (10**9) # Modulo to limit the surrogate key to 9 digits

# Example usage

surrogate_key = generate_surrogate_key('ProductA')Q45. Write a SQL query to find the top-selling products in each category based on the quantity sold.

SELECT category_name, product_name, SUM(quantity_sold) AS total_quantity_sold

FROM sales_fact_table sf

JOIN product_dimension_table pd ON sf.product_id = pd.product_id

GROUP BY category_name, product_name

ORDER BY category_name, total_quantity_sold DESC;

To save storage space, implement a process to archive and compress old data in a data warehouse.

-- Create an archive table for old data

CREATE TABLE archived_sales_fact_table AS

SELECT *

FROM sales_fact_table

WHERE sale_date < DATEADD(YEAR, -2, GETDATE());

-- Delete old data from the original table

DELETE FROM sales_fact_table

WHERE sale_date < DATEADD(YEAR, -2, GETDATE());Q47. Write a SQL query to calculate the year-over-year percentage growth in revenue for each product category from a sales fact table.

SELECT category_name,

YEAR(sale_date) AS sales_year,

SUM(revenue) AS total_revenue,

LAG(SUM(revenue)) OVER(PARTITION BY category_name ORDER BY YEAR(sale_date)) AS previous_year_revenue,

(SUM(revenue) - LAG(SUM(revenue)) OVER(PARTITION BY category_name ORDER BY YEAR(sale_date))) / LAG(SUM(revenue)) OVER(PARTITION BY category_name ORDER BY YEAR(sale_date)) * 100 AS yoy_growth

FROM sales_fact_table

GROUP BY category_name, YEAR(sale_date);Q48. Create a Python script to automatically refresh materialized views in a data warehouse at regular intervals.

import time

while True:

# Run the script to refresh materialized views

# You can use the appropriate SQL commands to refresh the views

# Example: data_warehouse_connection.execute('REFRESH MATERIALIZED VIEW mv_name;')

time.sleep(3600) # Wait for an hour before refreshing the views againQ49. Implement a data validation process in your preferred programming language to identify and log data inconsistencies during ETL operations.

import logging

# Assuming you have a DataFrame called 'transformed_data' after ETL operations

# Check for data inconsistencies (e.g., negative values in revenue, missing values, etc.)

if transformed_data['revenue'].lt(0).any():

logging.warning("Negative values found in revenue column.")

if transformed_data.isnull().any().any():

logging.error("Missing values found in the transformed data.")

# Log other data validation checks as neededQ50. Create a function in your preferred programming language to calculate the moving average of numerical time-series data stored in a data warehouse.

import pandas as pd

def calculate_moving_average(data, window_size):

return data.rolling(window=window_size).mean()

# Example usage

# Assuming you have a DataFrame called 'time_series_data' with a 'value' column representing numerical time-series data

moving_average = calculate_moving_average(time_series_data['value'], window_size=3)Conclusion

In this article, we have discussed the various data warehouse interview questions that can be asked in any AI-based company or for the data scientist role. Apart from the only simple questions, we have discussed the answers to fundamental questions comprehensively, which will help in any interviews. The summary of the article is as follows:

- The key differences between the database and data warehouse. How to segregate the data from the data warehouse.

- We designed a data warehouse schema for the extensive and complex dataset.

- Discussed the ETL (Extract-Transform-Load) process in the data warehouse.

- How to manage the data integrity in a data warehouse.

- Various techniques for data summarization in a data warehouse.

- Role of data mart in a data warehouse environment.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.