A few days back, 2 of the top 10 articles in the content feed reader I use were about deep learning.

That is when I decided I needed a better understanding of what deep learning is. I probably first noticed the term sometime late last year, and it has kept showing up around me since then. I wanted to make sure this was a global phenomenon and not just me being served specific content based on my searches or past history. So, I pulled up Google Trends for deep learning. This is what it showed:

Clearly, I was catching up with a new trend. So, let me summarize my findings and views on the topic, based on what I have read in the last few days.

P.S. You might have figured out by now that I am not an expert in deep learning (phew!). But I am more motivated than most people to learn about it. I hope to provide a meaningful summary for starters and a few thought-provoking questions for the experts. If you have any questions or opinions on the topic, please add them in the comments below.

What is Deep Learning?

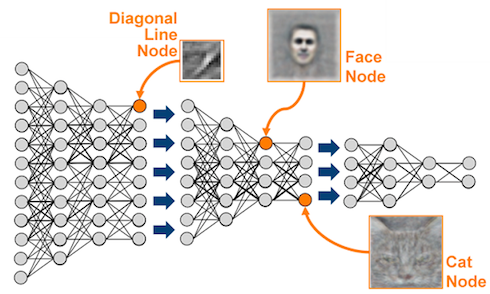

Deep learning is probably one of the hottest topics in machine learning today, and it has shown significant improvement over some of its counterparts. It is a class of machine learning algorithms that use multi-layered neural networks to achieve these remarkable outcomes. The networks can be trained with or without labelled data, and some of the most talked-about results come from unsupervised learning. Here is a simple illustration from Analytic Store’s blog:

A large number of pixels are fed to the network as input, after which the network learns and evolves to recognize higher level features like faces and cats.
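To make the idea of "multi-layered" a little more concrete, here is a minimal sketch of how an input passes through a stack of layers. It is only an illustration under my own assumptions: the layer sizes, the sigmoid activation and the helper names (`make_layer`, `forward`) are hypothetical, and the weights are random, so this network has not learned anything yet.

```python
import math
import random


def make_layer(n_inputs, n_outputs, rng):
    """Create one fully connected layer with small random weights and zero biases."""
    weights = [[rng.uniform(-0.5, 0.5) for _ in range(n_inputs)]
               for _ in range(n_outputs)]
    biases = [0.0] * n_outputs
    return weights, biases


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def forward(pixels, layers):
    """Pass the input through every layer in turn and return all activations."""
    activations = [pixels]
    for weights, biases in layers:
        previous = activations[-1]
        current = [
            sigmoid(sum(w * a for w, a in zip(row, previous)) + b)
            for row, b in zip(weights, biases)
        ]
        activations.append(current)
    return activations


rng = random.Random(0)

# Hypothetical sizes: 16 "pixels" in, two hidden layers, 2 outputs.
layer_sizes = [16, 8, 4, 2]
layers = [make_layer(n_in, n_out, rng)
          for n_in, n_out in zip(layer_sizes, layer_sizes[1:])]

pixels = [rng.random() for _ in range(layer_sizes[0])]
activations = forward(pixels, layers)

for depth, values in enumerate(activations):
    print(f"layer {depth}: {len(values)} values")
```

Each layer takes the previous layer's output as its input, which is what lets the later layers respond to combinations of simpler patterns. Training, which is what actually makes the layers useful, is left out of this sketch.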

Here are a few achievements driving attention to this area:

- Amazing accuracy on quite a few Kaggle competitions – Dogs vs. Cats image recognition (98.9% accuracy), Saving the whales problem (98% accuracy)

- Ability to learn and identify cats by using YouTube videos without any supervision

The following events suggest that companies are on the lookout for people with knowledge of deep learning:

- Google’s acquisition of DeepMind Technologies

- Google hiring Geoffrey Hinton, one of the thought leaders in this space (you can check out his course on neural networks on Coursera)

- Facebook hiring Yann LeCun, a former postdoctoral researcher in Geoffrey Hinton’s lab, to lead its AI lab

- Baidu hiring Andrew Ng, another pioneer in the field (and co-founder of Coursera).

Applications of deep learning:

If you have not figured it out already, deep learning finds applications in the following areas:

- Image recognition (e.g. Tagging faces in photos)

- Voice recognition (e.g. Voice based search, Siri)

- Pattern detection (e.g. Handwriting recognition)

But neural networks have been around for decades. What is rekindling this interest now?

Yes, neural networks have been around for ages. Interest in them peaked in the 1980s and 90s and then died off because of their inherent problems and their black-box nature.

There are a few reasons why this is happening now, the biggest being the drop in computational costs. Even so, the classification of cats through unsupervised learning on YouTube videos was achieved by deploying 16,000 processor cores in a Google lab! The cost of deploying these algorithms is not small, even by today’s standards.

Resources in deep learning:

Here is a list of some good resources to start reading / following, if you are interested in this area:

- www.deeplearning.net along with its tutorials

- Geoffrey Hinton’s neural networks course on Coursera

- Google+ community

- Yann LeCun’s overview of deep learning with Marc’Aurelio Ranzato

Questions in my mind:

Some of the questions that remain in my mind are:

- This looks like a huge black box to deal with (something like a scaled-up version of random forests), which might do well in data science competitions but fail to deliver any business understanding. For example, the model might classify a particular person as a likely defaulter, but would not explain why. Will it be useful and impactful for the larger community, or will it stay within the labs of data giants working on huge data sets?

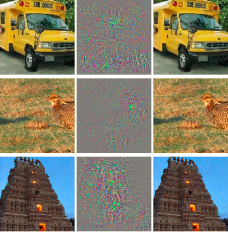

- The methods have shown some flaws which are difficult to explain. According to a recent study, algorithms were able to classify the images on the left in the accompanying picture, but were not able to classify the images on the right, which may seem very similar to human eyes. (Source: KDNuggets, original case study)

- Overfitting / Choosing the right algorithm – Given the nature of these algorithms, you are building multiple middle (hidden) layers in your architecture. These would work well on problems with infinite (or very large) degrees of freedom. However, if you have limited degrees of freedom, you might end up with an overfitted model, in which case it is probably time to go back to traditional methods.
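On the overfitting question, one rough way to get a feel for the risk is to count how many trainable numbers a network has and compare that with the number of training rows. The sketch below does this for a fully connected network. The layer sizes, the row count and the helper name `count_parameters` are my own hypothetical choices, and the comparison is only a crude warning sign, not an established rule.

```python
def count_parameters(layer_sizes):
    """Count the weights and biases in a fully connected network.

    Each layer has (inputs + 1 bias) * outputs trainable numbers.
    """
    return sum((n_in + 1) * n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))


# Hypothetical example: 100 input features, two hidden layers of 50, 2 outputs.
layer_sizes = [100, 50, 50, 2]
n_training_rows = 500  # assumed size of a small business data set

n_parameters = count_parameters(layer_sizes)
parameters_per_row = n_parameters / n_training_rows

print(f"trainable parameters: {n_parameters}")
print(f"parameters per training row: {parameters_per_row:.1f}")

if n_parameters > n_training_rows:
    print("More parameters than rows: watch closely for overfitting.")
```

When the network has many more parameters than there are rows to learn from, it has plenty of room to memorise the training data, which is the situation where a simpler traditional model is worth trying first.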

End notes:

I have to admit, I started my research from a place where these algorithms looked more like buzz. But given the attention from data giants, the success in some of the Kaggle competitions and the falling cost of computation, I am starting to believe that the hotness of the field is justified.

Whether it is actually justified or not, only time will tell. In the meantime, I’ll continue with my research and keep you posted on how things are panning out at my end. And to gain expertise in working with neural networks, try out our deep learning practice problem – Identify the Digits.

What do you think about deep learning? Do you think it will change the way people look at machine learning today? Or do you think this might just be another hype? How would people solve some of the challenges I have mentioned in the post? Do let me know your thoughts through the comments below.

Thank you Kunal for Sharing and the links related to deep learning. Very interesting. There are so many new stuffs to read, understand and apply.

Thumbs up AV team ! Am in the learning journey of machine learning with Coursera. I heard the word Deep Learning few times in recent time, but thought it could be deepest version of machine learning. Now am little clear from your blog. To conclude i think deep learning is not hype and it will change the way people look at machine learning today.

Great job Kunal. AV is a great source for learning stuff. Keep up the good work. BTW, please send me a note on my gmail. I still plan to meet you in person. Will be in Bombay in August. Srikar

Done!