Introduction

This is a great time to be a data scientist – all the top tech giants are integrating machine learning into their flagship products and the demand for such professionals is at an all-time high. And it’s only going to get better!

Apple has been a major advocate of machine learning, and has packed its products with features like Face ID, augmented reality, Animoji, and healthcare sensors. While watching Apple’s keynote event yesterday, I couldn’t help but marvel at the new chip technology they have developed to harness the power of machine learning algorithms.

In this article, we’ll check out some of the ways Apple has used machine learning to enrich the user experience. And believe me, some of the numbers you’ll see will blow your mind.

And if you’re already itching to get started with building your first ML models on an iPhone using Apple’s CoreML, check out this excellent article.

The A12 Chip

![](https://cdn.vox-cdn.com/uploads/chorus_image/image/61360847/Screen_Shot_2018_09_12_at_2.36.27_PM.1536774839.png)

Source: The Verge

Designed in-house by Apple’s engineers, the A12 chip features an even more advanced neural engine than last year’s (the neural engine made its official debut inside the A11 chip). The A11 chip powers the iPhone X, 8, and 8 Plus, so you can imagine why the A12 has created quite a stir in the machine learning community.

The A12 uses features as small as 7 nanometers, compared to 10 nanometers in the A11, which helps explain the jump in speed. And did you really think Apple would let the event slide without mentioning battery life? The A12 chip has a smart compute system that automatically recognizes which tasks should run on the CPU, which ones should be sent to the GPU, and which ones should be delegated to the neural engine.

So what’s the deal with the neural engine?

Source: Apple Insider

The neural engine’s key functions are two-fold:

- To run the facial recognition algorithm quickly enough to authenticate Face ID. This algorithm uses neural networks to map certain facial features/points and has of course been trained on millions of images to avoid making a mistake in the real product. It’s critical that the algorithm takes physical objects like glasses and people’s hair into account, which Apple says it will do this year with even more accuracy.

- To track facial movement for Animoji. Similar to the description above, the algorithm maps certain facial features and converts them into an animal emoji face in real time.

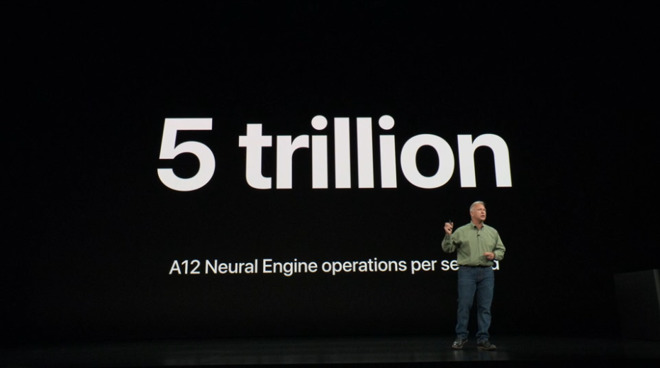

This year’s engine has eight cores, which is how the chip can perform 5 trillion operations per second. Last year’s version had two cores and topped out at 600 billion operations per second. It’s a nice microcosm of how rapidly technology is evolving in front of our eyes.
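To put those two figures side by side, here is a quick back-of-the-envelope calculation. It only uses the numbers quoted above; the variable and function names are my own, and the per-core comparison assumes throughput is split evenly across cores, which is a simplification.

```python
# Figures quoted in the article (operations per second and core counts).
A11_OPS_PER_SEC = 600e9   # 600 billion
A12_OPS_PER_SEC = 5e12    # 5 trillion
A11_CORES = 2
A12_CORES = 8


def speedup(new: float, old: float) -> float:
    """Return how many times larger `new` is than `old`."""
    return new / old


# Overall throughput ratio between the two neural engines.
overall = speedup(A12_OPS_PER_SEC, A11_OPS_PER_SEC)

# Per-core ratio, assuming work is spread evenly across cores.
per_core = speedup(A12_OPS_PER_SEC / A12_CORES, A11_OPS_PER_SEC / A11_CORES)

print(f"Overall throughput: {overall:.1f}x")   # 8.3x
print(f"Per-core throughput: {per_core:.1f}x") # 2.1x
```

So the core count went up 4x, but the quoted throughput went up by roughly 8.3x, which suggests each core is also doing about twice the work.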

And the neural engine can do even more...

It will help iPhone users take better pictures (how much better can you get every year?!). When you press the shutter button, the neural network identifies the kind of scene in the frame and makes a clear distinction between any object in the image and the background. So next time you take a photograph, just remember how quick the neural network must be to do all this in a matter of milliseconds.

You can learn all about object detection and computer vision algorithms in our ‘Computer Vision using Deep Learning’ course! It’s a comprehensive offering and an invaluable addition to your machine learning skillset.

The Apple Watch

The Apple Watch Series 4 feels more like a health monitoring device than at any point since its debut four years back. Of course, all the excitement is around the watch’s design and how it’s 35% bigger than last year’s product. But let’s step out of that limelight and look at one of the more intriguing features – the new health sensors.

The Watch comes with an electrocardiogram (ECG) sensor. Why is this important, you ask? Well, for starters, it’s the first smartwatch to pack in this feature. But more importantly, the sensor measures not just your heart’s rate, but also its rhythm. This helps monitor any irregular rhythm, and the Watch immediately alerts you in case of any impending danger. According to Apple, the ECG feature has been cleared by the FDA, and the president of the American Heart Association spoke in its support at the event.
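The difference between rate and rhythm is easy to see with a toy example. The sketch below is not Apple’s algorithm (which has not been published in detail); it simply assumes we have a list of heartbeat timestamps in seconds and uses the coefficient of variation of the beat-to-beat intervals as a crude, hypothetical irregularity score. All function names and sample values are made up for illustration.

```python
from statistics import mean, pstdev


def beat_intervals(beat_times):
    """Seconds between consecutive beats."""
    return [later - earlier for earlier, later in zip(beat_times, beat_times[1:])]


def heart_rate_bpm(beat_times):
    """Average heart rate in beats per minute."""
    return 60.0 / mean(beat_intervals(beat_times))


def rhythm_variability(beat_times):
    """Coefficient of variation of the intervals (0 means perfectly regular)."""
    intervals = beat_intervals(beat_times)
    return pstdev(intervals) / mean(intervals)


# Two made-up recordings with five beats-to-beat intervals over four seconds each.
steady = [0.0, 0.8, 1.6, 2.4, 3.2, 4.0]
uneven = [0.0, 0.5, 1.6, 2.0, 3.3, 4.0]

for name, beats in [("steady", steady), ("uneven", uneven)]:
    print(f"{name}: {heart_rate_bpm(beats):.0f} bpm, "
          f"variability {rhythm_variability(beats):.2f}")
```

Both recordings work out to the same average rate of 75 bpm, but only the second one has widely varying gaps between beats. A rate-only sensor would treat them as identical, which is why measuring rhythm matters.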

Further, the Series 4 watches come with an improved accelerometer and gyroscope. These help the Watch detect whether the wearer has fallen over. Once a person has fallen over and shown no sign of movement for 60 seconds, the device sends out an emergency call to up to five (pre-defined) emergency contacts simultaneously.
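The alerting rule described above is essentially a small timer. Here is a minimal sketch of that logic, using only the 60-second window and five-contact limit mentioned in the paragraph. The `FallMonitor` class and its method names are hypothetical, and the hard part (actually detecting a fall from accelerometer and gyroscope data) is left out entirely.

```python
from dataclasses import dataclass
from typing import List, Optional

STILLNESS_WINDOW_S = 60  # seconds without movement before alerting (from the article)
MAX_CONTACTS = 5         # maximum number of emergency contacts (from the article)


@dataclass
class FallMonitor:
    """Hypothetical tracker for the 'fall, then no movement' rule."""
    contacts: List[str]
    fall_time: Optional[float] = None  # when the last unresolved fall happened

    def on_fall_detected(self, now: float) -> None:
        # Start the stillness timer.
        self.fall_time = now

    def on_movement(self, now: float) -> None:
        # Any movement cancels the pending alert.
        self.fall_time = None

    def tick(self, now: float) -> List[str]:
        """Return the contacts to alert right now (empty if none)."""
        if self.fall_time is None:
            return []
        if now - self.fall_time < STILLNESS_WINDOW_S:
            return []
        self.fall_time = None  # alert once per fall
        return self.contacts[:MAX_CONTACTS]


monitor = FallMonitor(contacts=["a", "b", "c", "d", "e", "f"])
monitor.on_fall_detected(now=0)
print(monitor.tick(now=30))  # []
print(monitor.tick(now=60))  # ['a', 'b', 'c', 'd', 'e']
```

Keeping the timer separate from the sensor processing means the same rule can be tested with plain timestamps, without any hardware in the loop.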

I’m sure you must have guessed by now what’s behind all these updates. Yes, it’s machine learning. Healthcare, as I mentioned in this article, is ripe for disruption by machine learning. There are billions of data points at play, and combining ML with domain expertise is where the jackpot lies. I’m glad to see companies like Apple utilizing it, albeit in their own products.

End Notes

The competition between the likes of Apple, Google, and others is heating up, and artificial intelligence and machine learning could be the key to winning the battle. Hardware is critical here – as it gets significant upgrades each year, more and more complex algorithms can be built in.

Fascinated by all this and looking for a way to get started with data science? Try out our ‘Introduction to Data Science’ course today! We will help you take your first steps into this awesome new world.

You couldn’t have picked a better time to get into data science, honestly. A quick glance at Apple’s official job postings shows more than 400 openings for machine learning-related positions. The question then remains whether there are enough experienced people to meet that demand.

You can view the entire Apple event here.