This article was published as a part of the Data Science Blogathon.

Introduction

This post gives a brief overview of low-code and no-code machine learning libraries and platforms and how useful they can be in your career. Machine learning is tough to learn, especially when it comes to data preprocessing, algorithms, and training models. That has changed with the growth in technology and the availability of both low-code and no-code machine learning platforms and libraries: there are now fewer barriers to using and applying ML models in applications.

These libraries give the user ready-made functions that take care of preprocessing and quickly run ML models, so there are fewer lines of code to write. No-code platforms offer drag and drop, which is an easy way to run an ML model but lacks flexibility. Low-code ML, on the other hand, offers both flexibility and ready-to-use code. Without further ado, let us dive in and look at these libraries and what they can do.

Low Code Libraries

PyCaret

I have been using PyCaret for the last four months, and I must admit this library is unique. With it, training, testing, and deploying an ML model is simple. The project also provides tutorial notebooks for regression, classification, clustering, and NLP, and its documentation is good.

PyCaret is an open-source, low-code machine learning library in Python that automates machine learning workflows. It is an end-to-end machine learning and model management tool that speeds up the experiment cycle exponentially and makes you more productive. — https://www.pycaret.org.

PyCaret Functions

- compare_models() – trains all models available in the library with default hyperparameters and evaluates their performance using cross-validation. The regression metrics are MAE, MSE, RMSE, R2, RMSLE, and MAPE. The classification metrics are Accuracy, AUC, Recall, Precision, F1, Kappa, and MCC.

- create_model() – trains a single model, which we specify, using default hyperparameters and evaluates its performance.

- tune_model() – takes a trained model as the estimator and tunes its hyperparameters. It uses random grid search with pre-defined tuning grids that are customizable.

- ensemble_model() – accepts a trained model, builds an ensemble from it, and displays a table with k-fold cross-validated scores of the evaluation metrics.

- plot_model() – plots diagnostics used to evaluate the performance of a trained ML model.
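The functions above can be chained into a short workflow. The following is a minimal sketch rather than an official recipe: it assumes PyCaret is installed (pip install pycaret), uses the classification module, and expects a pandas DataFrame with a label column. The function name run_pycaret_workflow, the "rf" model ID, and the "auc" plot are illustrative choices, and argument names may differ between PyCaret versions.

```python
def run_pycaret_workflow(data, target, seed=123):
    """Train, tune, and ensemble a classifier with PyCaret (illustrative sketch).

    data is a pandas DataFrame and target is the name of the label column.
    """
    # Imported inside the function so that defining it does not require
    # PyCaret to be installed; calling it does.
    from pycaret.classification import (
        setup, compare_models, create_model,
        tune_model, ensemble_model, plot_model,
    )

    # Initialise the experiment: preprocessing and the train/test split happen here.
    setup(data=data, target=target, session_id=seed)

    # Train every available model with default hyperparameters and rank them
    # by their cross-validated metrics.
    best = compare_models()

    # Train one specific model; "rf" (random forest) is an arbitrary choice.
    rf = create_model("rf")

    # Random search over PyCaret's pre-defined tuning grid.
    tuned_rf = tune_model(rf)

    # Build an ensemble from the tuned model (bagging is the default method).
    bagged_rf = ensemble_model(tuned_rf)

    # Draw a diagnostic plot for the final model.
    plot_model(bagged_rf, plot="auc")

    return best, bagged_rf
```

With a DataFrame df whose label column is called, say, "target", the call would be run_pycaret_workflow(df, "target"). For a regression problem, the same functions can be imported from pycaret.regression instead.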

Features of the latest version include:

- Hyperparameter tuning on GPU for several models: LightGBM, XGBoost, and CatBoost.

- An updated deploy_model function that supports deployment on GCP as well as Microsoft Azure.

- The plot_model function now includes a ‘scale’ parameter, which you can use to control the resolution and generate high-quality plots.

- The Boruta algorithm is now included for enhanced feature selection.

PyCaret provides well-designed tutorials. To learn more, visit https://www.pycaret.org.

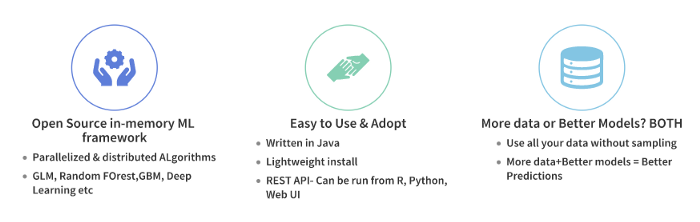

H2O AutoML

Image by H2O AutoML

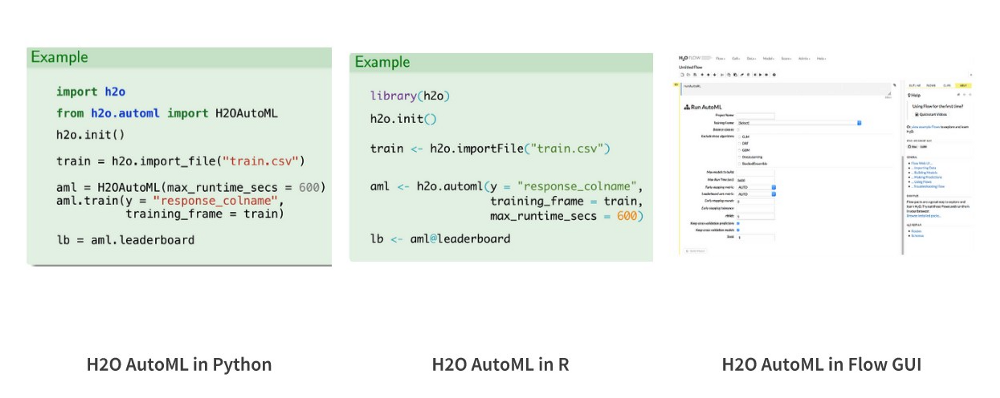

For beginners, H2O AutoML helps automate preprocessing, training, validation, and fine-tuning of models. It also assists advanced users with data engineering and with stacking different models. For these reasons, even Kaggle competitors use H2O AutoML. Compared with PyCaret, you need to write more code with H2O AutoML unless you use its web interface. Even so, training a model with it is still easy: all you need is a few lines of code in R or Python.
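To give a feel for what those few lines look like in Python, here is a minimal sketch. It assumes the h2o package is installed and that the data lives in a CSV file with a categorical target column. The function name run_h2o_automl and the default values for max_models and seed are illustrative choices, not recommendations.

```python
def run_h2o_automl(csv_path, target, max_models=10, seed=1):
    """Run H2O AutoML on a CSV file and return the leaderboard and best model."""
    # Imported here so that defining the function does not require h2o;
    # calling it does.
    import h2o
    from h2o.automl import H2OAutoML

    # Start (or connect to) a local H2O cluster.
    h2o.init()

    # Load the data into an H2OFrame.
    frame = h2o.import_file(csv_path)

    # Treat the target as categorical so AutoML runs classification.
    # Skip this line for a regression target.
    frame[target] = frame[target].asfactor()

    # Every column except the target is used as a predictor.
    predictors = [col for col in frame.columns if col != target]

    # Train up to max_models base models; stacked ensembles are built on top.
    aml = H2OAutoML(max_models=max_models, seed=seed)
    aml.train(x=predictors, y=target, training_frame=frame)

    # The leaderboard ranks all trained models; aml.leader is the best one.
    return aml.leaderboard, aml.leader
```

The returned leaderboard can be printed to compare the trained models, and the leader model can be used for predictions on new H2OFrame data.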

To learn more, see the H2O AutoML documentation.

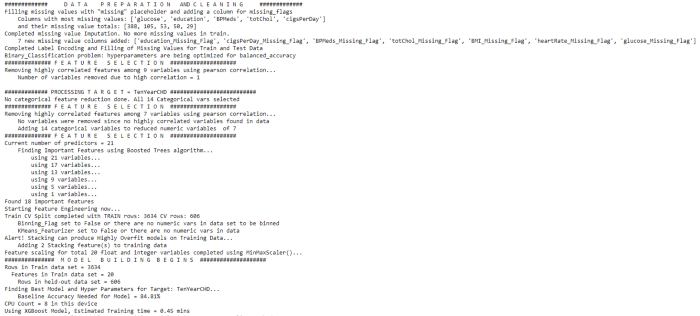

Auto-ViML

Auto_ViML, short for “Automatic Variant Interpretable Machine Learning” (pronounced “Auto_Vimal”), is another low-code library, developed to serve as an AutoML pipeline that can contribute effectively to modern data workflows. It accepts any dataset in the form of a pandas DataFrame. It performs data cleaning and categorical feature transformation; for example, it identifies missing values and leaves it to the model to decide how to use them. It also performs feature selection automatically to produce the simplest model, with the fewest features, that still performs well.

Its verbose output provides a great deal of understanding and model interpretability. Install Auto_ViML with ‘pip install autoviml’. It automatically produces model performance results as graphs, and it can handle text, date-time, numeric, boolean, factor, and categorical variables all in one model. Users can also perform feature engineering with the featuretools library.

Features of the latest version include:

- SMOTE for imbalanced datasets: set Imbalanced_Flag = True in the input.

- It detects text variables automatically and does NLP processing on them.

- It also detects date-time variables automatically and adds extra features.

- Now users can perform feature engineering with the available featuretools library.
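The points above can be sketched as a single call. This is a hedged example: the import path and the positional arguments (train, target, test) follow the project README as I recall it, so check them against the version you have installed. The function name run_auto_viml is hypothetical, and passing an empty string for the test set is an assumption about how to signal that no test data is available.

```python
def run_auto_viml(train, target, test=""):
    """Fit Auto_ViML on a pandas DataFrame (illustrative sketch).

    train is a pandas DataFrame, target is the label column name, and
    test is an optional DataFrame (an empty string means "no test set").
    """
    # Imported here so that defining the function does not require autoviml;
    # calling it does.
    from autoviml.Auto_ViML import Auto_ViML

    # Imbalanced_Flag=True asks Auto_ViML to apply SMOTE to an imbalanced
    # dataset; verbose=1 prints more detail about what it is doing.
    model, features, train_modified, test_modified = Auto_ViML(
        train,
        target,
        test,
        Imbalanced_Flag=True,
        verbose=1,
    )

    # features is the reduced list of columns that Auto_ViML selected.
    return model, features, train_modified, test_modified
```

The returned features list is a quick way to see which columns survived the automatic feature selection.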

For more information about Auto-ViML, see its GitHub repository. To get the more stable and full version, install it with this command: pip install git+https://github.com/AutoViML/Auto_ViML.git

Image by author

No Code ML Platforms

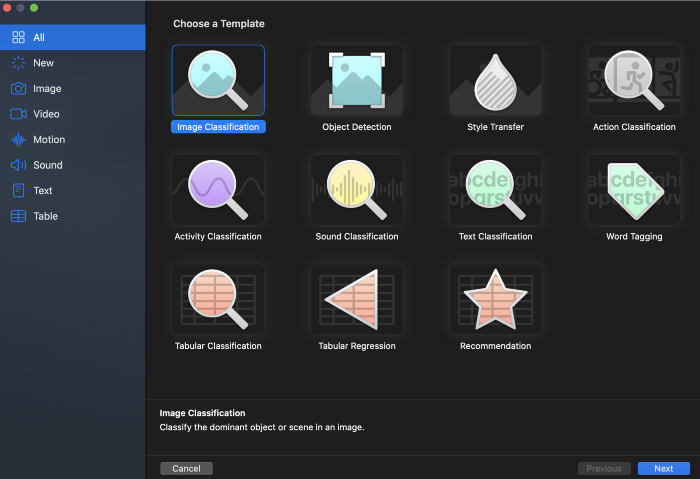

Create ML

Create ML is a no-code, drag-and-drop tool developed by Apple for Mac users. It is a standalone macOS application that comes with a set of pre-trained model templates, so you can build custom models with the help of transfer learning. It covers a variety of model types, such as image classification, style transfer, sound classification, text classification, and recommendation systems: you select the model type, add data and parameters, and start training.

Before training, you can set the iteration count and fine-tune the metrics. For models like style transfer, it provides real-time results on validation data. Finally, it generates a Core ML model, which you can test and deploy in iOS applications.

Features of Create ML

- By using an easy-to-use app interface, build and train powerful models.

- Using different datasets, train multiple models in a single project.

- Using Continuity with the iPhone camera and the microphone on your Mac, you can preview model performance.

- To control model training, you can use options such as pause, save, resume, and extend.

- By using CPU and GPU, train models at blazing speed.

- For better model training performance, use an external GPU.

To learn more about this tool, see Apple’s Create ML page.

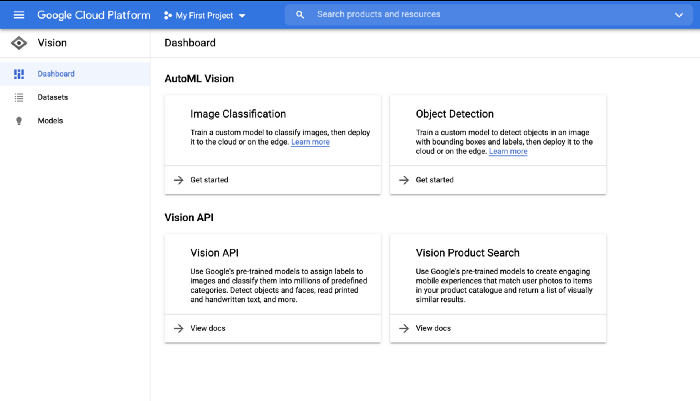

Google Cloud AutoML

With Apple leading the way through Create ML, Google can’t afford to be left behind. AutoML works much like Create ML, but in the cloud. At present, Google AutoML offers Natural Language, AutoML Translation, Video Intelligence, and Vision in its package of ML products. It helps developers with limited ML expertise build models specific to their use case. Users can create custom models that fit their business needs and integrate them into websites and applications. Because it offers out-of-the-box support for thoroughly tested deep learning models, there is no need to know transfer learning or how to build neural networks.

After training is complete, you can validate the model and export it in .tflite, .pb, and other formats. Anyone interested in ML could give Google AutoML a try. The trial version offers $300 in free credit to spend over three months, along with access to all Cloud Platform products, including Firebase and the Google Maps API. To learn more, see the Google Cloud AutoML product pages.

RunwayML

RunwayML is another great ML platform, designed for creators and makers. It provides an attractive visual interface for training models, ranging from object detection, text generation, and image generation (GANs) to motion capture, without writing code. You can browse models ranging from image super-resolution to background removal and style transfer. Exporting a model from the application is not free, but you can leverage the power of pre-trained GANs to synthesize new images from prototypes. One of the highlights is its Generative Engine, which synthesizes pictures as you type sentences. RunwayML is available for Mac and Windows and can also be used in the browser.

Image by Author

To learn more, see the RunwayML website.

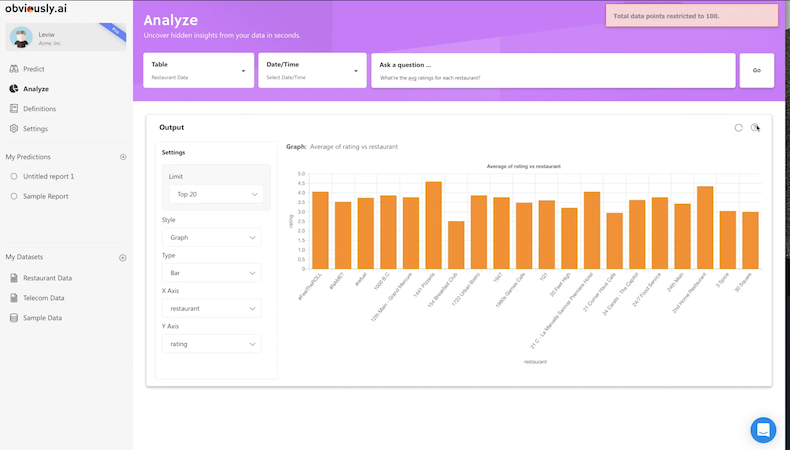

Obviously AI

Obviously AI uses state-of-the-art NLP to perform tedious tasks on user-supplied CSV data. The idea is to upload a dataset, choose the prediction column, and evaluate the results by entering questions in natural language. The platform trains the machine learning model, choosing a suitable algorithm on the user’s behalf. So, with a few clicks, you can get a prediction report, whether for predicting inventory demand or forecasting revenue. It is most useful for start-ups and medium-sized companies looking to step into the field of artificial intelligence without a data science team. It lets you integrate data from other sources such as BigQuery, Salesforce, MySQL, and Redshift. So, without knowing how regression and text classification work, a user can leverage this platform to perform predictive analysis of data. Obviously.ai offers a free plan for those who want to try it out.

Image by author

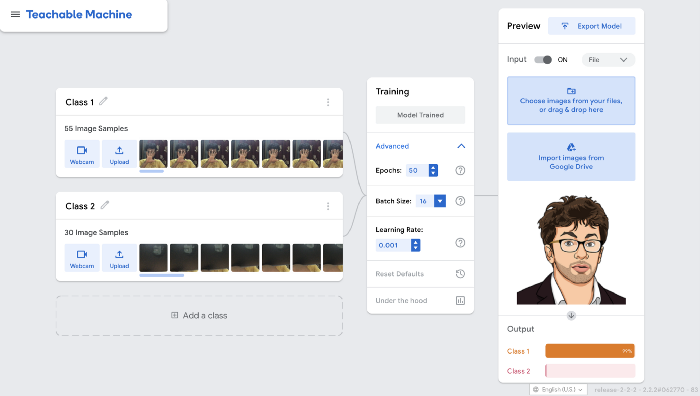

Teachable Machine

Now we look at another Google no-code ML platform. Whereas AutoML is aimed at developers, Teachable Machine lets you quickly train models to recognize sounds, images, and poses right from the browser. To teach a model, users can simply drag and drop files or use the webcam to create a quick-and-dirty dataset of sounds or images.

It uses the TensorFlow.js library in the browser and ensures that training data stays on the device. It is a big step by Google for people who are interested in practicing machine learning without coding knowledge. Users can export the model in TensorFlow.js or tflite formats and use it in apps or websites. Using ONNX, the user can also convert the model into different formats. Here, I managed to train a simple image classification model in less than a minute. To learn more, see the Teachable Machine website.
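Once a model has been exported in tflite format, it can be loaded outside the browser. The sketch below shows one way to do this in Python, assuming TensorFlow 2.x and NumPy are installed. The function names, the model path, and the label list are hypothetical, and any pixel scaling that the exported model expects is left to the caller.

```python
def top_label(scores, labels):
    """Return the (label, score) pair with the highest score."""
    best_index = max(range(len(scores)), key=lambda i: scores[i])
    return labels[best_index], scores[best_index]


def classify_with_tflite(model_path, image, labels):
    """Run one image through an exported .tflite model (illustrative sketch).

    image is a NumPy array already resized (and scaled, if needed) to match
    the model's input; labels is the list of class names in output order.
    """
    # Imported here so that defining the function does not require
    # TensorFlow or NumPy; calling it does.
    import numpy as np
    import tensorflow as tf

    # Load the model and allocate memory for its tensors.
    interpreter = tf.lite.Interpreter(model_path=model_path)
    interpreter.allocate_tensors()
    input_info = interpreter.get_input_details()[0]
    output_info = interpreter.get_output_details()[0]

    # Add a batch dimension and cast to the dtype the model expects.
    batch = np.expand_dims(image, axis=0).astype(input_info["dtype"])
    interpreter.set_tensor(input_info["index"], batch)
    interpreter.invoke()

    # The first (and only) row of the output holds one score per class.
    scores = interpreter.get_tensor(output_info["index"])[0]
    return top_label(list(scores), labels)
```

The small top_label helper is kept separate so that the ranking logic can be checked without a model file, for example top_label([0.1, 0.7, 0.2], ["cat", "dog", "bird"]).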

Image by author

Conclusion

Many other open-source libraries and platforms have been released as well. They are helpful for those venturing into the complex world of machine learning for the first time. Use these tools efficiently, with your business needs in mind. These platforms bridge the gap between data scientists and non-ML practitioners, and they make machine learning a lot more enjoyable. As we witness more automation, I think these platforms are here to stay. SnapML is another excellent no-code tool/library; it lets you train and upload custom models that can be used in Snap Lenses.

That’s it for the day. I hope you’ve found this post valuable. Thank you.

Good information sir

Thanks for the fantastic write-up on AutoML libraries Pavan Kalyan! I would also mention a new entrant in the field called “Lobe.AI” by Microsoft which has a very good UI and more. You should check it out and perhaps add to this article someday.