Introduction

Multicollinearity is a topic in machine learning that you should be aware of. I have spent the past few years diving into statistical concepts that matter to anyone working in data science, and I have seen many data science professionals who are still unfamiliar with multicollinearity.

This is especially important for people who come from a non-mathematical background or who have limited knowledge of statistical concepts. It is not just another topic to learn: it matters when you are trying to crack data science interviews and when you are drawing insights from the data to which ML algorithms are applied.

So, in this article, we will see what multicollinearity is, why it is a problem, what causes it, what goes wrong in a model where it exists, and finally some techniques to remove it.

Before diving deeper, I highly recommend having a basic understanding of regression models and some statistical terms.

Table of Contents

1. What is Multicollinearity?

2. The problem with having multicollinearity

3. What causes multicollinearity?

4. How to detect multicollinearity by using VIF?

5. Test for detection for multicollinearity

6. Solutions for multicollinearity

7. Implementation of VIF using Python

What is Multicollinearity?

Multicollinearity occurs when two or more independent variables (also known as predictors) are highly correlated with one another in a regression model.

This means that an independent variable can be predicted from another independent variable in a regression model. Examples include height and weight, student consumption and father income, age and experience, and the mileage and price of a car.

Let us take a simple example from everyday life. Assume that we want to fit a regression model that uses pocket money and father income as independent features to predict the dependent variable, student consumption. Here we cannot find the exact, individual effect of each independent variable on the dependent variable, because the two independent variables are highly correlated: as father income increases, pocket money also increases, and as father income decreases, pocket money also decreases.

This is the multicollinearity problem!
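To make the example concrete, here is a small sketch with synthetic data. The numbers (the income scale, the 5% pocket-money share, the noise level) are invented purely for illustration and do not come from any real dataset.

```python
import numpy as np

# Synthetic data: every number below is an assumption made for illustration.
rng = np.random.default_rng(0)
n = 200
father_income = rng.normal(50_000, 10_000, size=n)
# Assumption: pocket money is roughly 5% of father income plus a little noise.
pocket_money = 0.05 * father_income + rng.normal(0, 100, size=n)

# Pearson correlation between the two independent variables.
corr = np.corrcoef(father_income, pocket_money)[0, 1]
print(f"Correlation between father income and pocket money: {corr:.3f}")
```

With data built this way the two predictors move almost in lockstep, which is exactly the situation described above.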

The problem with having multicollinearity

To fulfill our research objective for a regression model, we have to fix the problem of multicollinearity, which is also important for our predictions.

Let us say we have the following linear equation:

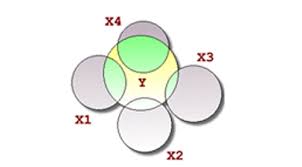

Y=a0+a1*X1+a2*X2

Here X1 and X2 are the independent variables. The interpretation of a1 is that if we shift X1 by 1 unit, then Y shifts by a1 units, keeping X2 and other things constant. Similarly, if we shift X2 by 1 unit, then Y shifts by a2 units, keeping X1 and other factors constant.

But where multicollinearity exists, our independent variables are highly correlated, so if we change X1 then X2 also changes, and we are not able to see their individual effects on Y, which is our research objective for a regression model.

“This makes the effects of X1 on Y difficult to differentiate from the effects of X2 on Y.”

Note:

Multicollinearity may not affect the accuracy of the model much, but we might lose reliability in determining the effects of individual independent features on the dependent feature, and that can be a problem when we want to interpret the model.
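The note above can be sketched with a small simulation. The data are synthetic, and the true coefficients (1, 2, 3) and noise levels are assumptions chosen only for illustration: the same model is refitted on many fresh samples in which X2 is almost a copy of X1, and the spread of the estimated coefficients is compared with the spread of a prediction.

```python
import numpy as np

def fit_ols(X, y):
    """Least-squares fit with an intercept; returns [a0, a1, a2]."""
    design = np.column_stack([np.ones(len(X)), X])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return coef

rng = np.random.default_rng(42)
x_new = np.array([1.0, 1.0, 1.0])  # intercept term, X1 = 1, X2 = 1
coefs, preds = [], []

for _ in range(200):
    x1 = rng.normal(size=100)
    x2 = x1 + rng.normal(scale=0.01, size=100)  # X2 is almost a copy of X1
    y = 1.0 + 2.0 * x1 + 3.0 * x2 + rng.normal(scale=1.0, size=100)
    coef = fit_ols(np.column_stack([x1, x2]), y)
    coefs.append(coef)
    preds.append(coef @ x_new)

coefs = np.array(coefs)
preds = np.array(preds)
print("Std of a1 estimates:", coefs[:, 1].std())
print("Std of a2 estimates:", coefs[:, 2].std())
print("Std of the prediction at X1 = X2 = 1:", preds.std())
```

In a setup like this, the individual coefficients swing widely from sample to sample while the prediction stays comparatively stable, which is why interpretation suffers more than accuracy.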

What causes multicollinearity?

Multicollinearity might occur due to the following reasons:

1. Multicollinearity could exist because of problems in the dataset at the time of its creation. These problems could come from poorly designed experiments, highly observational data, or the inability to manipulate the data. (This is known as data-related multicollinearity.)

For example, consider determining the electricity consumption of a household from the household income and the number of electrical appliances. Here, we know that the number of electrical appliances in a household will increase with household income. However, this relationship cannot be removed from the dataset.

2. Multicollinearity could also occur when new variables are created that depend on other variables, typically during data preprocessing or feature engineering, when we build a new feature from existing features. (This is known as structure-related multicollinearity.)

For example, creating a variable for BMI from the height and weight variables would include redundant information in the model, since BMI depends on the height and the weight itself.

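3. Multicollinearity could also occur when the same information is included more than once in the dataset. For example, including one variable for the weight of a person in kilograms and another for the weight in grams or some other unit.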

4. To apply machine learning algorithms, we encode categorical features as numerical features, since ML algorithms only understand numbers, not text. For this task, we use dummy variables. Incorrect use of dummy variables can also cause multicollinearity. (This is known as the dummy variable trap.)

For example, consider a dataset containing a marital status variable with two unique values: ‘married’ and ‘single’. Creating dummy variables for both of them would include redundant information. We can make do with a single variable containing 0/1 for ‘married’/‘single’ status.

5. Insufficient data in some cases can also cause multicollinearity problems.
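The dummy variable trap from point 4 can be sketched with pandas. The toy data and the column name `marital_status` are hypothetical; `pd.get_dummies` with `drop_first=True` is one common way to avoid the redundant column.

```python
import pandas as pd

# Hypothetical toy data; the column name "marital_status" is an assumption.
df = pd.DataFrame(
    {"marital_status": ["married", "single", "single", "married", "single"]}
)

# One dummy per category: the two columns always sum to 1, so each one is
# perfectly predictable from the other (the dummy variable trap).
all_dummies = pd.get_dummies(df["marital_status"], dtype=int)
print(all_dummies)

# Dropping the first category keeps the same information in a single column.
safe_dummies = pd.get_dummies(df["marital_status"], drop_first=True, dtype=int)
print(safe_dummies)
```

Here the remaining column is 1 for ‘single’ and 0 for ‘married’; which category is dropped does not matter for the redundancy argument.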

Detecting Multicollinearity using VIF

Let’s try detecting multicollinearity in a dataset to give you a flavor of what can go wrong.

Although a correlation matrix and scatter plots can also be used to find multicollinearity, they only show the bivariate relationships between the independent variables. VIF is preferred because it can show the correlation of a variable with a group of other variables.

“VIF determines the strength of the correlation between the independent variables. It is computed by taking a variable and regressing it against every other variable.”

or

VIF score of an independent variable represents how well the variable is explained by other independent variables.

The R^2 value is determined to find out how well an independent variable is described by the other independent variables. A high value of R^2 means that the variable is highly correlated with the other variables. This is captured by the VIF, which is defined below:

VIF=1/(1-R^2)

So, the closer the R^2 value to 1, the higher the value of VIF and the higher the multicollinearity with the particular independent variable.
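The point about bivariate relationships can be illustrated with a sketch on synthetic data. The variables `x1`, `x2`, `x3` and the noise scale are assumptions made for illustration: `x3` is built from both `x1` and `x2`, so no single pairwise correlation looks extreme, yet the R^2 of `x3` on the other two is close to 1.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 1000
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)
x3 = x1 + x2 + rng.normal(scale=0.1, size=n)  # x3 depends on x1 AND x2

X = np.column_stack([x1, x2, x3])
corr = np.corrcoef(X, rowvar=False)
print("Pairwise correlations:\n", corr.round(2))

# Regress x3 on an intercept, x1 and x2, then compute R^2 and VIF.
design = np.column_stack([np.ones(n), x1, x2])
coef, *_ = np.linalg.lstsq(design, x3, rcond=None)
residuals = x3 - design @ coef
r2 = 1.0 - residuals.var() / x3.var()
vif = 1.0 / (1.0 - r2)
print(f"R^2 of x3 on (x1, x2): {r2:.4f}, VIF: {vif:.1f}")
```

A correlation matrix alone would understate the problem here, while the VIF of `x3` flags it clearly.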

- VIF starts at 1 (when R^2 = 0, VIF = 1, the minimum value for VIF) and has no upper limit.

- VIF = 1, no correlation between the independent variable and the other variables.

- VIF exceeding 5 or 10 indicates high multicollinearity between this independent variable and the others.

- Some researchers treat VIF > 5 as a serious issue for the model, while others use VIF > 10; the threshold varies from person to person.
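As a quick worked example of the formula VIF = 1/(1 - R^2), the sketch below evaluates it for a few R^2 values. The helper name `vif_from_r2` is just an illustrative choice.

```python
def vif_from_r2(r_squared):
    """VIF = 1 / (1 - R^2) for a single independent variable."""
    if not 0.0 <= r_squared < 1.0:
        raise ValueError("R^2 must be in the interval [0, 1)")
    return 1.0 / (1.0 - r_squared)

for r2 in [0.0, 0.5, 0.8, 0.9, 0.99]:
    print(f"R^2 = {r2:.2f} -> VIF = {vif_from_r2(r2):.1f}")
```

In other words, the common cut-offs of 5 and 10 correspond to R^2 values of 0.8 and 0.9 respectively.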

I have a dataset for Boston house price prediction with the following attributes (in order). MEDV is the dependent variable and the rest are the independent features:

– CRIM per capita crime rate by town

– ZN proportion of residential land zoned for lots over 25,000 sq. ft.

– INDUS proportion of non-retail business acres per town

– CHAS Charles River dummy variable (= 1 if tract bounds river; 0 otherwise)

– NOX nitric oxides concentration (parts per 10 million)

– RM average number of rooms per dwelling

– AGE proportion of owner-occupied units built before 1940

– DIS weighted distances to five Boston employment centers

– RAD index of accessibility to radial highways

– TAX full-value property-tax rate per $10,000

– PTRATIO pupil-teacher ratio by town

– B 1000(Bk – 0.63)^2 where Bk is the proportion of blacks by town

– LSTAT % lower status of the population

– MEDV Median value of owner-occupied homes in $1000’s

Test for detection of Multicollinearity

1. Variance Inflation Factor (VIF)

– If VIF = 1: no multicollinearity

– If 1 < VIF <= 5: low multicollinearity or moderately correlated

– If VIF > 5: high multicollinearity or highly correlated

2. Tolerance (reciprocal of VIF)

– If VIF is high, then tolerance will be low, i.e., high multicollinearity.

– If VIF is low, then tolerance will be high, i.e., low multicollinearity.
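The two tests above can be put into a small helper. This is only a sketch: the function names and the sample VIF values are hypothetical, and the cut-off of 5 is the one used in the list above (some people prefer 10).

```python
def tolerance(vif):
    """Tolerance is the reciprocal of VIF (equivalently, 1 - R^2)."""
    return 1.0 / vif

def multicollinearity_level(vif):
    """Label a VIF value using the cut-off of 5 from the list above."""
    if vif <= 1.0:
        return "none"
    if vif <= 5.0:
        return "low to moderate"
    return "high"

# Hypothetical VIF values, chosen only to exercise each branch.
for name, vif in {"feature_a": 1.0, "feature_b": 3.2, "feature_c": 12.5}.items():
    print(f"{name}: VIF = {vif}, tolerance = {tolerance(vif):.2f}, "
          f"level = {multicollinearity_level(vif)}")
```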

Solutions for Multicollinearity

1. Drop the variables causing the problem.

– If using a large number of X-variables, a stepwise regression could be used to determine which of the variables to drop.

– Removing collinear X-variables is the simplest method of solving the multicollinearity problem.

2. If all the X-variables are retained, then avoid making inferences about the individual parameters. Also, restrict inferences about the mean value of Y to values of X that lie in the experimental region.

3. Re-code the form of the independent variables.

For example, if x1 and x2 are collinear, you might try using x1 and the ratio x2/x1 instead.

4. Ridge and Lasso regression: these are alternative estimation procedures to ordinary least squares. They penalize the duplicate information and shrink the parameters of a regression model, or (in the case of Lasso) drop them to zero.

5. Standardize the variables, i.e., subtract the mean value to work with the variables in deviation form (xi = Xi - mean(X)).

6. An increase in sample size may sometimes solve the problem of multicollinearity.
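A minimal sketch of the ridge idea from point 4, using the closed-form solution on synthetic data. The data, the true coefficients (2 and 3), and the penalty `alpha = 1.0` are assumptions for illustration; in practice a library estimator such as scikit-learn's `Ridge` would normally be used, and the penalty would be tuned.

```python
import numpy as np

def ridge_fit(X, y, alpha):
    """Closed-form ridge on centered data: solve (X'X + alpha*I) b = X'y.

    With alpha = 0 this reduces to ordinary least squares.
    """
    Xc = X - X.mean(axis=0)
    yc = y - y.mean()
    k = X.shape[1]
    return np.linalg.solve(Xc.T @ Xc + alpha * np.eye(k), Xc.T @ yc)

rng = np.random.default_rng(1)
x1 = rng.normal(size=100)
x2 = x1 + rng.normal(scale=0.01, size=100)  # nearly collinear with x1
X = np.column_stack([x1, x2])
y = 2.0 * x1 + 3.0 * x2 + rng.normal(size=100)

ols_coef = ridge_fit(X, y, alpha=0.0)
ridge_coef = ridge_fit(X, y, alpha=1.0)
print("OLS coefficients:  ", ols_coef)
print("Ridge coefficients:", ridge_coef)
```

The penalty pulls the two coefficients toward each other and toward zero, at the cost of a small bias; their sum, which is what the data can actually pin down, stays close to the true combined effect.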

Implementation of VIF using Python

Python Code:

import numpy as np
import pandas as pd
import statsmodels.api as sm
# Note: load_boston was removed in scikit-learn 1.2, so this import needs an older version.
from sklearn.datasets import load_boston

boston = load_boston()
print(boston.DESCR)  # See the description of the complete dataset

X = boston["data"]  # Independent variables
Y = boston["target"]  # Dependent variable
names = list(boston["feature_names"])  # Names of all the attributes
df = pd.DataFrame(X, columns=names)  # Make the pandas dataframe for data analysis
print(df.head())  # See the first 5 samples from the dataframe

for index in range(0, len(names)):
    y = df.loc[:, df.columns == names[index]]
    x = df.loc[:, df.columns != names[index]]
    x = sm.add_constant(x)  # Include an intercept so that R^2 is the usual centered R^2
    model = sm.OLS(y, x)  # Ordinary least squares: regress one feature on all the others
    results = model.fit()
    rsq = results.rsquared
    vif = round(1 / (1 - rsq), 2)
    print("R Square value of {} column is {} keeping all other columns as independent features".format(
        names[index], round(rsq, 2)))
    print("Variance Inflation Factor of {} column is {}\n".format(names[index], vif))

The media shown in this article are not owned by Analytics Vidhya and are used at the Author’s discretion.

Hi. Thanks for the article and code. This is an excellent explanation and I had no idea of VIF. Thanks for the code, too. That's the hard part.

Many thanks for detailed explanation on Multicollinearity. If I am not wrong, multi-collinearity is not a problem in tree based methods like DT, RF & Boosting etc. and does so ML methods and only serious problem with regression methods either linear or logistic etc. thanks for the post anyway

Hi Since I am not from the mathematics department but I read ur theoretical part and the way u explain is really well and anyone from non mathematics can easily understand. Thanks 😊