Introduction

Instead of starting with the definition of statistics, I wish to start with the quote of Karl Pearson’s definition on Statistics, “Statistics is the

grammar of science”.

Recently everyone is talking about Data. After hearing the word “Data” the basic questions that arise in our minds are,

What is Data?

How Data is collected?

How Data can be analyzed?

How Data is interpreted?

To answer all these questions, the term “Statistics” is used. Statistics is the basic and important tool to deal with the data. Now coming to the definition of statistics, it involves the collection, descriptive, analysis and concludes the data.

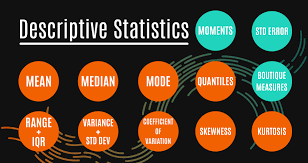

There are two types of Statistics, Descriptive and Inferential Statistics.

In Descriptive Statistics, from the given observation the data is summarized. The summarization takes place by considering the sample from the population using the mean or standard deviation.

There are four different categories in Descriptive Statistics. They are,

- Measure of frequency

- Measure of dispersion

- Measure of central tendency

- Measure of position.

Based on the number of times a particular data has occurred defines the measure of frequency. The Measure of dispersion can be defined based on the Range, Variance, Standard Deviation, etc., The mean, median, mode, skewness of the respective data comes under the measure of central tendency. Finally, based on the percentile and quartile the position is measured.

Then looking into Inferential Statistics, once the data is collected, tabulated, and analyzed the summary or the inference is derived by using inferential statistics. The inferences are drawn based upon sampling variation and observational error.

Based on the information and conclusion derived from the sample the inferential statistics help us to predict and estimate results for the population.

STATISTICAL ANALYSIS of DATA

Statistics is used in a variety of sectors in our day-to-day life for analyzing the right data. Based on the interpretation the development steps are taken in both private and public sectors.

Before getting into Analyzing the data, there are few things to remember.

Define your question, Collect the right data, Understand the data, Cleaning the data, Analyze the data and finally interpret the results for the questions.

What is defining the question? For an organization, the betterment steps are taken from the past data analysis. For better steps, there will be a few objectives to be answered perfectly to give a good interpretation. The question should give the potential solution for the problem. For that framing, a relevant question is more important. Based on the questions only, the data will be collected. So defining the question is plays a major role.

For example, In a company, if the employee attrition is high. The solution for reducing the employee moving from the company should be drawn for that the basic variables like the employee experience, their satisfactory level, their promotion, duration of working hours, etc., to be determined such that the problem can be resolved to give a potential solution.

How to collect the right data? The data collection has two classifications. One is primary data and another is secondary data. In primary data, the data will be collected through questionnaires, by sending emails or approaching each person. For example census. Whereas, in secondary data, it’s the data that is already available in the secondary source like agency or Database.

Now before collecting the new data, identify the existing data that is available from the database. Apart from that collect the relevant data to satisfy the objective. Then organize the existing data with the new data to proceed with the analysis. For example: Taking the same case of employee attrition, the data to be collected are experience in the company, working hours, educational qualification, distance from home, traveling hours, promotion, age of the employee, increment or hike, etc., these data are important to be collected to find the reason for employee attrition. There may be few variables that already available in the database and any new variables that are needed can be added.

Why do we need to Understanding the data? Once the data is collected there may be many variables that are related directly or indirectly to the objective. For that, we first need to study about all the variables whether it is nominal or ordinal. Preparing the data for analysis is done after understanding the data. While understanding we get to know about the data types, rows, and columns, missing in the data, finding the independent and dependent variables, etc.,

There may be few variables that may not be related to the question that the organization has and those variables can be used in future for future analysis. For finding those kinds of variables understanding the data is more important. Taking the same employee attrition example there may be data related to the family such as family members, years of experience in a previous company, social status, etc., and every variable is to be understood such that to split the data in such a way for answering the question.

How Cleaning the data is done? Data cleaning is the process of modifying the data, removing the duplicate variables, creating dummy variables if needed. Deleting the unwanted columns that are not related to the question. If the data cleaning is not proper it may lead to a lower accuracy of the model and may tend to misleading conclusions.

Once the data cleaning is done, the right data to answer the question is ready. Data manipulation is done in many ways like plotting the data, creating pivot tables for the variables, correlation, regression, detecting outliers process may take place. During the stage of manipulation, it may be needed to proceed with an existing dataset or remove some dataset or there may be a need to add few more data to answer the question. After all these stages, the required data will be ready for analysis.

How to analyze the data? When starting to talk about analysis the main thing is that model selection. Selecting the model plays an important role to analyze the data and answering the objective. Defining the dependent and independent variables is the important stage when analyzing the data. Currently, machine learning techniques are used for data analysis such that predictions and interpretations can be done easily. But still, some objectives can be answered directly while doing data visualization and basic statistical analysis. The tools used for analyzing the data are, Python, Excel, R programming, SPSS, STATA, etc.,

Correlation is used to find the relationship or association between two or more variables. Correlation lies between values -1 to +1. The interpretation is that, if the correlation is +1 then it is strongly positively correlated, -1 then it is strongly negatively correlated and 0 implies no correlation exists. Correlation works in both the case of quantitative and qualitative data.

Coming to regression, this analysis is used when we need to find the dependencies of one variable on the other. The regression value lies between 0 and 1. If the regression value is 1 then it is a perfect fit and 0 then it is not a good fit. The predictive model can be done by using regression analysis. This also uses both quantitative and qualitative data. There are two types of regression analysis. Linear Regression and Multiple Linear Regression.

In Linear Regression, it has one dependent variable and one independent variable. For example, if the price is low the sales will be high. In the case of the multiple linear regression model, it has one independent variable and several dependent variables. For example, the price of the house depends on the number of rooms in the house, area of each room, number of car parking, facilities, location, etc.,

In the Case of Survival analysis, if the data is concerning the time of occurrence of an event, then survival analysis can be applied. The event will have the outcome as 0 or 1. For example, the patient survival from a heart attack can be denoted the 0 or 1. 0 denotes the person not survived and 1 denotes he/she survived. This can be predicted having the variables such as age, smoker or non-smoker, urban or rural living person, having blood pressure or not. Based on all the factors considering the person surviving status can be estimated. Currently, Survival Analysis can be applied in the case of COVID patients.

Finally coming to the part of Machine Learning techniques, such as the Random Forest, Decision Tree, KNN, etc., can be applied in the case of prediction and classification technique. In the example of employee attrition, taking the objective as either the male or female employee who can leave the company can be determined by using classification technique. Several models can be developed and based on the accuracy of the models, which model can predict the future employee attrition can be determined. If higher the accuracy then that particular model can be used to predict the future data.

Interpreting the result: After analyzing the data, it’s time to interpret the result. While interpreting the result check whether the analysis answered all the questions that were framed, does the data collected helped in the analysis, and from the interpretation is there a positive result for the betterment of the objective. By considering our example of employee attrition the analysis part should suggest some better steps or improvements to reduce the employee attrition from the company.

These are the basic important thing to be done and noticed while doing statistical data analysis.

Finally, I wish to quote the words of Seth Godin – “Data is not useful until it becomes information”

Hope you all found some basic ideas about statistics and data analysis using statistics.

The media shown in this article are not owned by Analytics Vidhya and is used at the Author’s discretion.

So nice to know .I dont know this .Thank you for sharing to ...non computer IT savvy like me.Rosita