This article was published as a part of the Data Science Blogathon

Wait! What does the term ‘AI’ actually stand for? Artificial Intelligence, isn’t it? At least that is what the world has been crazy for.

But what if we discover that we are moving towards something known as ‘Augmented’ Intelligence? In this blog, we walk together to learn about this interesting new concept and how we can use it to create the products and platforms that we have always dreamt of.

(We will be referring to Augmented Intelligence as Au-I, a self-made abbreviation)

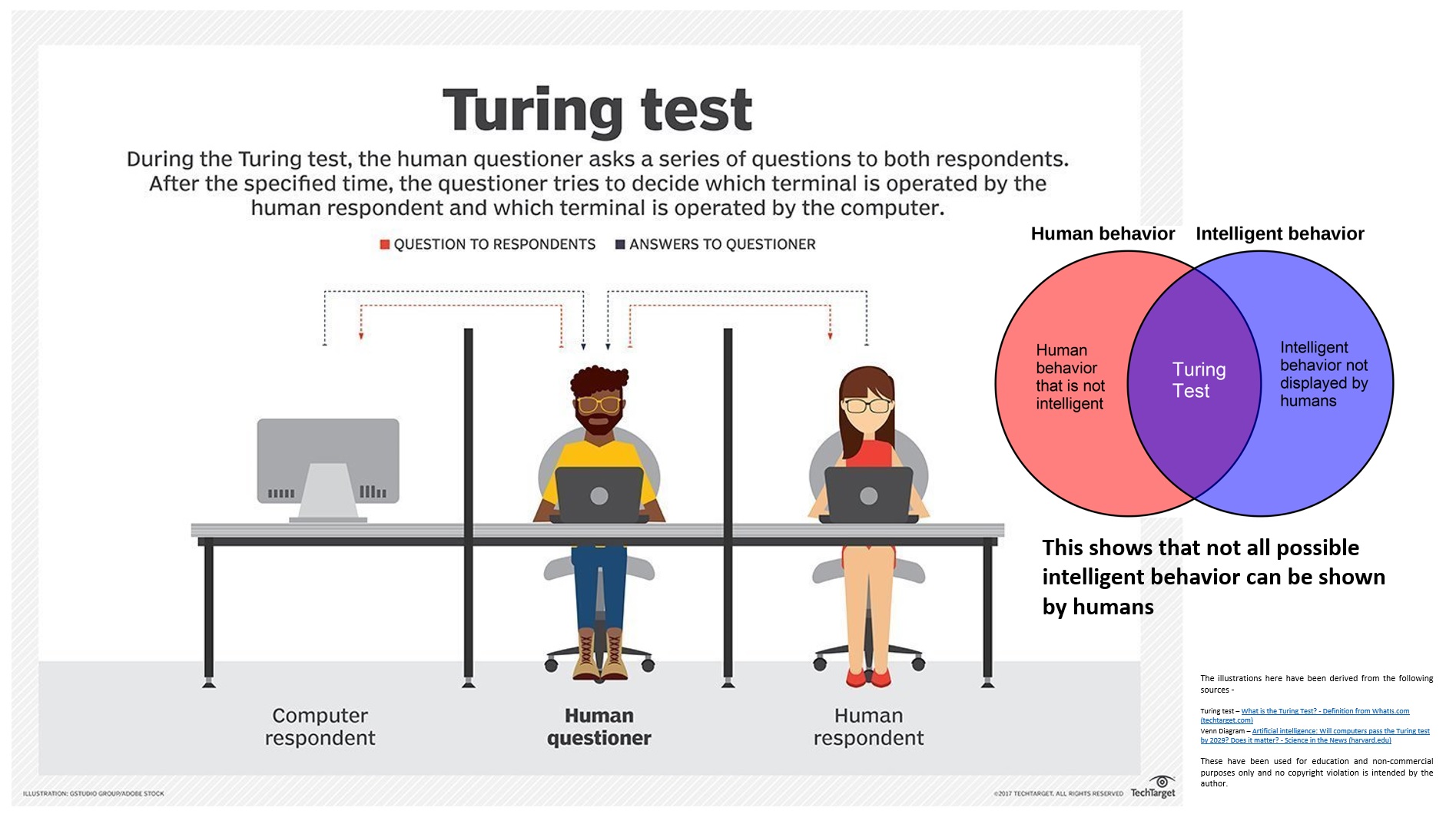

For a long time, the notion of machines that could think and act like humans was a fantasy. As computers arrived in the mainframe era, this somehow began to look possible. Alan Turing, one of the greatest computer scientists, believed that computers could one day become indistinguishable from humans in the way they communicate and show their intelligence, and so he came up with the Turing Test in 1950. In this test, a human evaluator puts questions to a machine and a human without knowing which is which. If, based on the answers received, the evaluator fails to clearly distinguish between the computer and the human, the computer is said to have passed the Turing Test. Sounds like an interesting AI chatbot game, right?

But it was not until 1956 that the term ‘Artificial Intelligence’ originated, as the name of a summer conference held at Dartmouth College and organized by computer scientist John McCarthy.

Anddddd… now, we have the term ‘AI’ on the lips of every other student entering college. The boom of this technology has happened for very real reasons. From healthcare to energy to astronomy, hardly any field is left that has not embraced and made use of AI.

Now hold on! Looking to the future, what if AI gets even more intelligent and powerful? What if computers become truly indistinguishable from humans and surpass us? The fear is real, but perhaps the scenario isn’t.

Augmented Intelligence comes in when humans remember that Artificial Intelligence is their brainchild and not vice versa.

The motive of Artificial Intelligence, and of any digital technology for that matter, is after all to help and assist humans, not to replace them. And that’s where the concept of Augmented Intelligence comes in. To ‘augment’ means to enhance or add to something, and through Augmented Intelligence we look to combine the intellect of humans with the trained intelligence of machines to make the system better for our own purposes. In a way, we restrict the system from surpassing us, if that is ever possible.

And now you may be wondering what exactly this new concept is, how it is different, and what we need to do.

Defined simply, Augmented Intelligence is the synergistic use of a machine’s ability to process data and perform tasks along with the intellectual abilities of a human. Note that we defined it without using the term Artificial Intelligence. We may also see Au-I as an alternative form of Artificial Intelligence where humans and machines work together rather than machines doing all the work (this is easier to understand!).

It has been overwhelming to see the increasing ability of machines to think and work like humans, especially in tasks like language, voice, and image recognition. Their computing power enables them to process information from all around the world in an astonishingly short time. The notion of Artificial Intelligence has largely been based on these abilities.

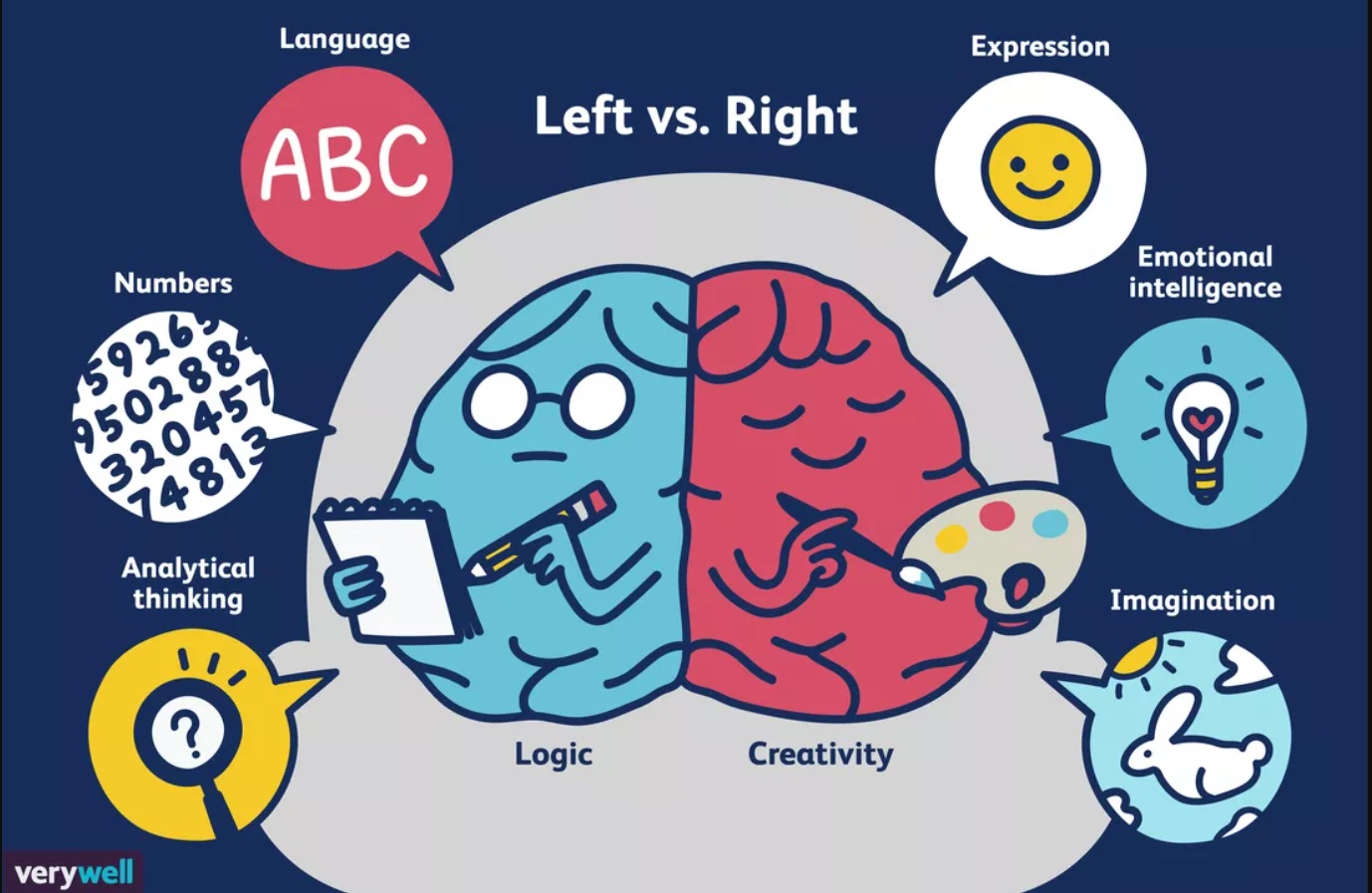

Okay, but have you ever noticed that all this is just the capacity of the left side of the human brain? This side is popularly associated with mathematics, language, and analytical abilities, much like the AI models we use at present.

We are yet to tap the abilities of the right side of the human brain, which deals with creativity and imagination, and this looks possible only through human-machine collaboration. A world of myriad possibilities awaits when full use of the human brain is made possible, even if through a collective approach.

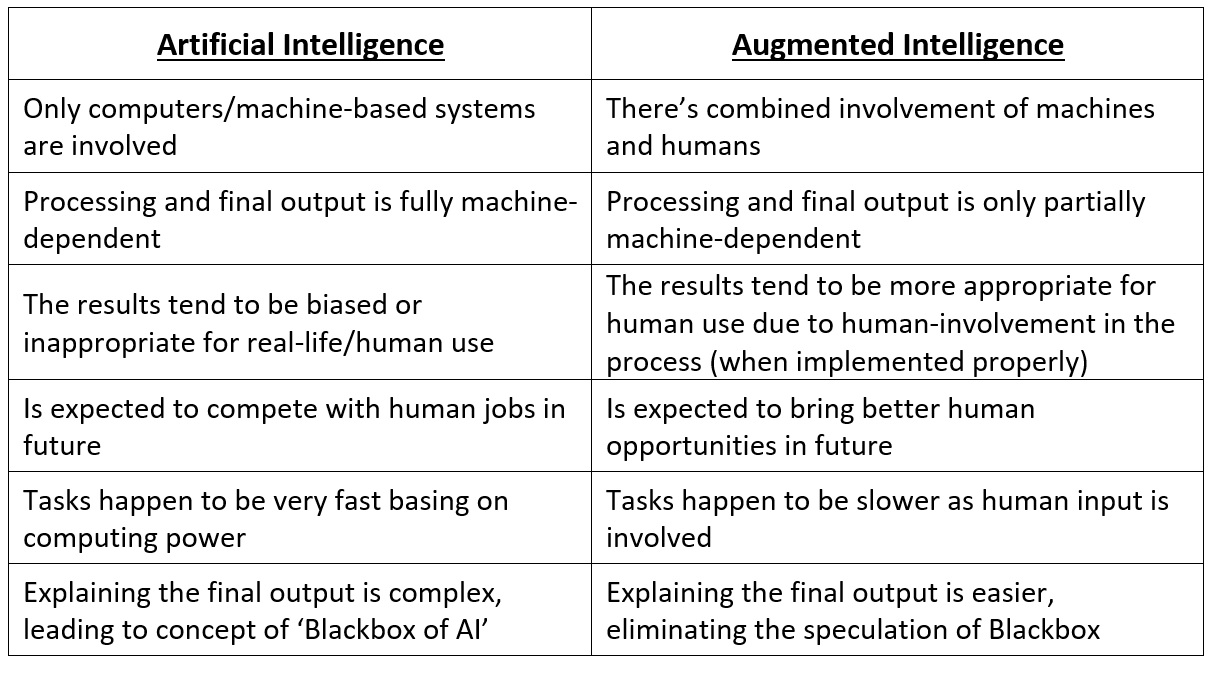

If you are keen to know the differences between AI and Au-I, here’s a quick comparison table that makes things lucid –

Given that Au-I is an interesting combination of the abilities of humans and machines, you must be curious to know where it has been used so far. Are there any examples?

The answer is, of course, yes! But before we look into it, it’s important to understand that Au-I is often not very separate from AI. While processing data and developing ML models, there is often an interplay of humans and machines. Choosing the algorithms, tuning the parameters, and correcting the discrepancies are mostly done by humans. It is only in the case of deep learning, neural networks, and auto-ML that the gear shifts towards the computers. Here are some examples of Au-I where the computer’s artificial intelligence has been positively enriched (augmented) by consistent human inputs to give enhanced outputs and results.

1. Healthcare

The use of prediction and recommendation systems in healthcare, where human input is considered before the final decision is made, is a good example of Augmented Intelligence. A human touch reduces the rate of discrepancies and makes the results properly explainable. The knowledge and experience of doctors and medical practitioners are also utilized effectively in this case.

An interesting study carried out by a team from Harvard Medical School at the Camelyon Grand Challenge showed that an algorithm could accurately detect breast cancer 92% of the time. A human pathologist achieved an accuracy of 96%. But when the pathologist worked together with the computer’s algorithm, the results were 99% accurate. (Read more about their study here and here)
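
To put these numbers in perspective, it helps to look at error rates instead of accuracies. The snippet below is only a small worked calculation on the rounded figures quoted above; the function names are made up for illustration and nothing here comes from the study itself.

```python
# Accuracies as quoted in the paragraph above (rounded figures).
accuracy = {
    "algorithm alone": 0.92,
    "pathologist alone": 0.96,
    "pathologist + algorithm": 0.99,
}


def error_rate(acc: float) -> float:
    """The error rate is the complement of the accuracy."""
    return 1.0 - acc


def relative_error_reduction(baseline_acc: float, improved_acc: float) -> float:
    """Fraction of the baseline's errors that the improved setup removes."""
    baseline_err = error_rate(baseline_acc)
    return (baseline_err - error_rate(improved_acc)) / baseline_err


combined = accuracy["pathologist + algorithm"]
reduction_vs_human = relative_error_reduction(accuracy["pathologist alone"], combined)
reduction_vs_algo = relative_error_reduction(accuracy["algorithm alone"], combined)

print(f"Errors removed relative to the pathologist alone: {reduction_vs_human:.1%}")
print(f"Errors removed relative to the algorithm alone:   {reduction_vs_algo:.1%}")
```

Seen this way, a jump from 96% to 99% is not a small gain: on these rounded figures, the human-machine pair makes roughly a quarter of the mistakes the pathologist makes alone.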

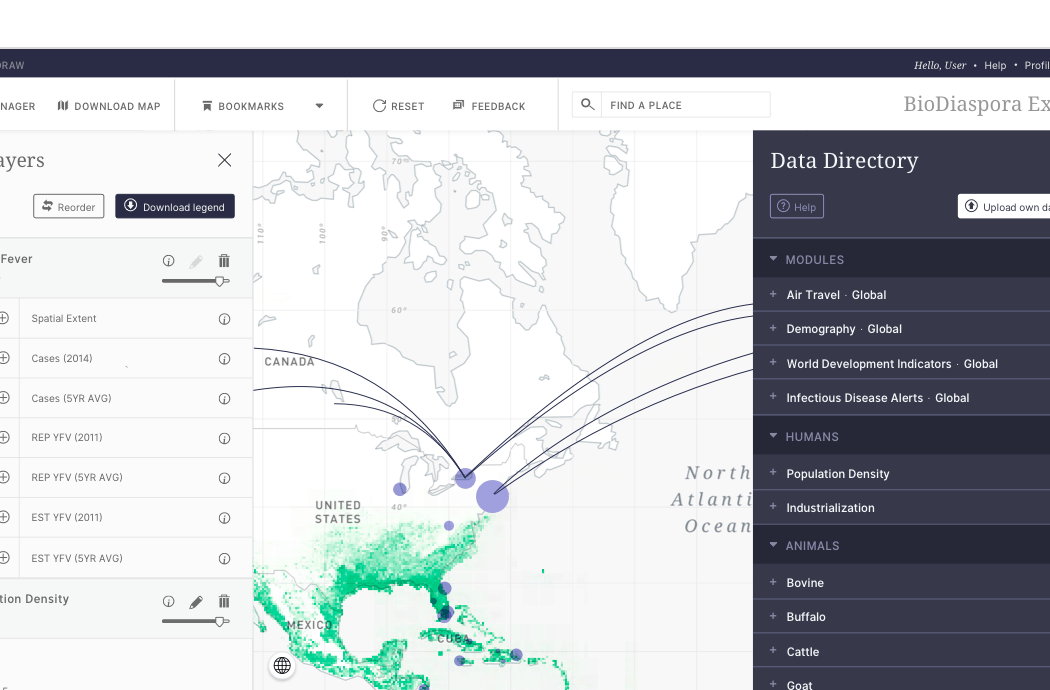

Augmented Intelligence found its use during the COVID-19 pandemic as well. BlueDot is an AI company from Canada that specializes in infectious disease epidemiology. Even before the first warnings were issued by the WHO and the CDC, BlueDot predicted the 2019-nCoV outbreak and sent alerts to its customers on December 31, 2019. The company had previously been successful in predicting the spread of the Zika virus as well. The work published by authors from BlueDot demonstrates the wide variety and veracity of data that can be aggregated to make very accurate epidemiologic predictions.

(Source – BetaKit)

Another popular example is Google Flu Trends, which used search engine queries to improve flu epidemic tracking. The model itself didn’t perform well, but other researchers were able to combine Google’s data with other social media data to predict the spread much more accurately.

Dermatology is a discipline of clinical science where the detection of skin diseases has suffered from low accuracy. The involvement of AI and Deep Learning can help change the scenario in a very positive way. Additionally, the interplay of humans and algorithms has great benefits. A study by Liu et al. compares the diagnostic capability of Deep Learning systems with that of dermatologists, primary care physicians (PCPs), and nurse practitioners. Based on this study, an article by the Stanford School of Medicine emphasizes how Au-I can be used effectively for this and what the future direction should be.

2. Educational Sciences

Education is a core aspect of everyone’s life, and the world has been looking for bold new ways to improve education systems so as to produce better individuals. This has become a necessity as the world struggles to provide quality education through digital tools amidst the pandemic.

Educational Data Mining (EDM) is the discipline where Machine Learning and Analytics are used to generate insights and trends relating to the behavior and learning of students. The use of Augmented Intelligence can be quite fruitful in this regard, with a shift towards open-ended processes. A study at the University of Eastern Finland uses a clustering algorithm named Neural N-Tree that enables the end-users of EDM to analyze educational data in a continuous and iterative process. It is through the interactions between the model and the end-users that the predictive accuracy increases. This is made possible not by conventional parameter tuning but by adjusting the model itself. For users who don’t possess the required skill set, the method might be challenging, but it is definitely beneficial for skilled people.

An interesting study by Stanford University and Snapchat brings out a beautiful use case of Augmented Intelligence through human-machine collaboration. The study involves a system in which human input is used to enhance the object detection/annotation ability of existing computer vision algorithms.

The experiments bring in state-of-the-art concepts like crowd engineering: a set of constraints such as utility, precision, and budget is defined, and the involvement of humans is decided and implemented based on them. The optimization is done using a Markov Decision Process. The results are satisfying from both the computer engineering and the crowd engineering perspectives.

They find that, with the use of human inputs, it is indeed feasible to enhance the capability of existing scene and object detectors based on CV and AI algorithms. Read their full study here.
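
To get a feel for the budget-versus-precision trade-off described in this study, here is a toy sketch. It does not reproduce the study's Markov Decision Process. It swaps in a much simpler greedy rule (send the least confident detections to humans first, until the budget runs out), and every name and number in it (`Detection`, `select_for_human_review`, the confidences and costs) is a hypothetical chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str
    confidence: float   # detector's own confidence in [0, 1] (made-up values)
    review_cost: float  # hypothetical cost of asking a human to verify it


def select_for_human_review(detections, budget, precision_target=0.9):
    """Greedy stand-in for the MDP: pick the least confident detections
    for human verification while the review budget allows it."""
    # Detections at or above the precision target are trusted as they are.
    uncertain = [d for d in detections if d.confidence < precision_target]
    uncertain.sort(key=lambda d: d.confidence)

    chosen, spent = [], 0.0
    for d in uncertain:
        if spent + d.review_cost <= budget:
            chosen.append(d)
            spent += d.review_cost
    return chosen, spent


detections = [
    Detection("dog", 0.97, 1.0),
    Detection("bicycle", 0.55, 1.0),
    Detection("traffic light", 0.80, 2.0),
    Detection("person", 0.30, 1.0),
]

chosen, spent = select_for_human_review(detections, budget=2.0)
print([d.label for d in chosen], spent)
```

With a budget of 2.0, the two least confident detections are sent to humans, while the costlier "traffic light" check is skipped. A real system would also weigh how useful each answer is, which is where a formal optimization like the one in the study comes in.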

So, having looked at some of the use cases, you are fascinated by the wonders of Augmented Intelligence. Now it is essential to figure out how we can use it to overcome the shortcomings of Artificial Intelligence. This is definitely possible through crowd engineering and the human-in-the-loop approach.

Suppose you are managing a team and you ask them to send emails to your clients. Either they get the inputs from you and work on their own (taking feedback from you when they make mistakes), or they leave it to you to finally edit and send each email. As a manager with a tight schedule, you have to be selective about what to review and what to ignore before the email finally goes out.

Compare this analogy with building an AI model. This day-to-day office story aptly explains the thin line between AI and Au-I and how we should approach it.

If you are an AI/ML engineer or an application developer and wish to get an edge through Au-I, you need to devise an effective and feasible way to integrate inputs and feedback from the human crowd. The input from humans may be incorrect, time-consuming, or confusing. Their involvement has to be optimized, and therein lies the real spice of Au-I. Though still a nascent concept in computer science, Augmented Intelligence will rapidly find its way into mainstream development as we envision a tech-strong society of tomorrow.
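
As a rough illustration of this idea, here is a minimal human-in-the-loop sketch. It assumes a model that reports a confidence score and a small crowd whose answers may be noisy. The function names and the thresholds (`confidence_threshold`, `min_agreement`) are hypothetical choices, not part of any particular library or study.

```python
from collections import Counter


def aggregate_crowd_labels(labels, min_agreement=0.6):
    """Majority vote over crowd answers; returns None when the crowd
    does not agree strongly enough to be trusted."""
    if not labels:
        return None
    winner, votes = Counter(labels).most_common(1)[0]
    return winner if votes / len(labels) >= min_agreement else None


def final_label(model_label, model_confidence, crowd_labels,
                confidence_threshold=0.8):
    """Trust the model when it is confident; otherwise defer to the crowd,
    falling back to the model if the crowd is inconclusive."""
    if model_confidence >= confidence_threshold:
        return model_label, "model"
    crowd_label = aggregate_crowd_labels(crowd_labels)
    if crowd_label is not None:
        return crowd_label, "crowd"
    return model_label, "model (crowd inconclusive)"


# Confident model: no human effort is spent.
print(final_label("spam", 0.95, ["ham", "ham", "ham"]))
# Unsure model, crowd mostly agrees: the crowd's answer wins.
print(final_label("spam", 0.55, ["ham", "ham", "spam"]))
# Unsure model, split crowd: keep the model's answer but flag it.
print(final_label("spam", 0.55, ["ham", "spam"]))
```

Just like the busy manager in the email story, the system spends human attention only where the machine is unsure, and it does not blindly trust the humans either.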

About the Author

Hello, this is Jyotisman Rath from Bhubaneswar, India. A thing that always excites me is solving new and interesting challenges. Alongside pursuing an I-MTech in Chemical Engineering, I have a great interest in the field of AI/ML and Data Science. I look to integrate these with my research interests and real-world scenarios, and I believe technology is much more than just coding.

Would love to see you on LinkedIn and Instagram. For any queries, mail me here.

The media shown in this article are not owned by Analytics Vidhya and are used at the Author’s discretion.
