This article was published as a part of the Data Science Blogathon.

Introduction

In the AGI Safety from First Principles sequence, Richard Ngo has attempted to summarise some compelling arguments for the existential threat that Artificial General Intelligence (AGI) might pose in the near future. He claims to present a fresh point of view on AGI Safety in this six-series report. The base argument of Richard Ngo’s piece is the “second species” argument. This problem has also been referred to as the “Gorilla problem” in the book Human Compatible: AI and the Problem of Control by Stuart Russel, which is analogous to the fact how humans evolved from Gorillas but took over them and now Gorillas have lost control. The focus of Richard Ngo’s piece is defending the following arguments:

-

Artificial Intelligence (AI) will be capable of doing a lot more than humans.

-

Autonomous AI agents will be goal-oriented in the sense that might not align with humanity.

-

Building such AI agents might be counterproductive as they might try to rule over humans.

This distillation particularly focuses on Part 4: Alignment of the series.

Artificial General Intelligence and Artificial Super Intelligence

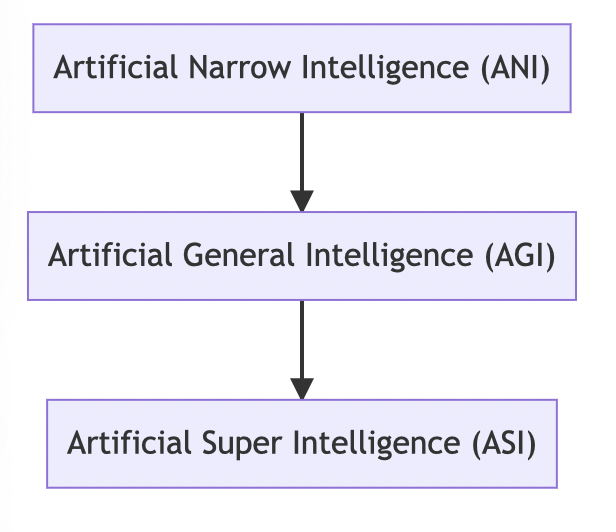

This report focuses on Artificial ‘General’ Intelligence safety. So, it is worth taking the time to understand what the term ‘General’ means in this context. But before that, let us define ‘Intelligence’. Intelligence can be understood as the ability to do well on a variety of cognitive tasks as defined by Legg and reiterated by Richard in his report. Artificial Narrow Intelligence (ANI), Artificial General Intelligence (AGI) and Artificial Super Intelligence (ASI) can be considered as three stages of the progression of Artificial Intelligence technology. Artificial Narrow Intelligence (ANI) is the stage of AI where we are currently, where AI systems are programmed to perform specific tasks only. ANI is also referred to as Weak AI. The second stage i.e. Artificial General Intelligence also referred to as Strong AI, is when AI systems can think and perform tasks just like a human can. Unlike ANI systems, AGI systems can think, reason, make judgements and be creative just like a human.

The potential of moving towards the stage of AGI has been identified by an example. Natural Language Processing widely uses Generative Pre-Trained Transformer (GPT) models developed by OpenAI. GPT-2 was created solely for the prediction of the next word in a sentence. But the progression to GPT-3 is so advanced that it is capable of writing essays, answering questions, writing code and doing similar tasks that mimic human intelligence. Another way in which Richard sees the potential for the development of AGI is the evolution of humans. We were trained as a species as well as in the way we transform from childhood to adulthood throughout which we learn from a plethora of situations and experiences. The final stage i.e. Artificial Super Intelligence (ASI) is believed to be a stage where AI systems will perform beyond the cognitive skills of a human and this is what researchers fear to be a devastation to the human race (also summed in the meme below😅); and which was also mentioned as the “second species” problem or the “Gorilla problem” earlier.

Richard considers ANI (task-based AI) and AGI (generalisation-based AI) as segments of a range rather than two separate ideas. He gives the example of AlphaZero which is trained through reinforcement learning as it played several games against itself and then competed with humans. According to Richard, this can be either considered as two separate tasks where generalisation took place from self-play to competing against humans or as cases of a single task. He believes that this categorization is subjective. By training with an enormous amount of data, AI agents may be able to excel in tasks such as self-driving cars with the task-based approach sooner than Artificial General Intelligence might be able to solve it. However, much more complicated and analytical scenarios like that of management of a company might not be something for which a large amount of data can be gathered for training because the scope of the tasks involved in this case is just so vast.

Now moving on to the discussion on the progression from Artificial General Intelligence to Artificial Super Intelligence, Richard has commented on the viability highlighting that the speed of passing signals in transistors is enormously greater than in neurons. He has identified three contributing factors to greater Artificial Intelligence which are replication, cultural learning and recursive improvement. In the context of replication, Richard has reiterated the term Collective AI as used by Bostrom. According to this concept, Artificial General Intelligence will be built not as a single superintelligent entity but as a collection of simple decomposed tasks which are relatively easier to train. The second factor contributing to the development of Super Intelligence is cultural learning i.e. AGIs being capable of learning from each other as humans tend to. Finally, recursive improvement is referred to as the concept of AIs being able to learn and improve from previous generations. This factor is similar to the concept of recursive self-improvement mentioned in Yudkowsky’s research paper on Intelligence Explosion Microeconomics. Artificial Super Intelligence is the highest level of Artificial Intelligence, but at what cost?

AI systems gaining power

The sole concern of the second species problem is the power that Artificial Intelligence systems might end up with and using the acquired power in a way that might not align with the goals of humanity. This brings us to the question of how AI systems will even acquire such power. Richard mentions three possibilities in this regard:

-

AI systems will be in pursuit of power in order to fulfill other objectives.

-

It will be the ultimate objective of AI systems to obtain power.

-

Humans will train them in such a way that they gain this much power.

Richard’s sequence covers the first two possibilities. The central thought of the first possibility has been from Nick Bostrom’s instrumental convergence thesis. The main point that this thesis tries to elucidate is that AI agents will have some instrumental goals whose achievement will contribute to some final goal. Thus, AI systems will pursue power which will be an ‘instrumental’ goal for them in ‘converging’ to a final and greater goal. Some examples of instrumental goals are survival or self-preservation, intelligence enhancement, technological up-gradation or even acquisition of resources. The question is whether or not these goals will be aligned with human values?

AGI Safety: Goal Alignment

Having realised that AI systems might pursue power for instrumental reasons, the main aspect of concern is whether or not AI systems will pursue goals that align with human values, particularly moral values. Simply put (by Christiano), we say that

“An AI system is aligned with a human if it is trying to do what the human wants it to”.

Minimalist vs Maximalist Approach

The alignment of goals with human values can be understood with two kinds of interpretations: minimalist and maximalist. According to the minimalist approach (also referred to as the narrow approach), an aligned AI avoids outcomes that are not safe. It learns the preferences of humans and tries to align its actions around them. For example, the AI system learns that humans like to have the weekends free, so it is clearing the schedule for humans but in the process, it even clears out tasks such as restocking groceries, family time, etc. The point here is that AI has learnt human preferences but it can be wrong as well. It intends to provide humans with free time but is not able to differentiate between what is actually works or what the human would not really mind doing in his free time. But the key aspect here is that AI focuses on human intent and preferences and does not really try to deviate from them.

On the other hand, the maximalist (or the ambitious) approach requires a greater level of understanding. All decisions that humans take are not based on concrete logic and may involve personal preferences, intuition, ethics and morals, etc. AI systems will have to learn for a very long period of time to be at par with this level of human understanding. The maximalist approach is apparently hard to define and has a range of political, ethical and technical problems associated with it, Richard’s piece focuses mainly on the minimalist approach which he refers to as ‘intent alignment’ and for the sake of this piece, he considers an AI system to be misaligned with human only if the AI system is trying to do what human would not want it to do, considering that the AI system is aware of human’s intentions. This is referred to as misalignment.

Intentions have always been considered as something AI is ‘trying’ to do because as we learned above, Artificial General Intelligence (AGI) systems will work and behave just like a human, so we are not really sure about how they will actually perform. The safety concern here is that although being well-trained with human-level data and having a clear understanding of human intentions as we expect from an AGI system, will AGI care about these intentions? Will they have found instrumental goals they would like to achieve that do not align with human values?

Outer and Inner Misalignment

Now one might pose this question: why not train AIs with such tasks only that do not cause any conflict with human values. Bostrom’s Orthogonality thesis states that it cannot be just assumed that AIs will have motives or values that are similar to that of humans. For instance, the ultimate goal of a super-intelligent system might be just to calculate the number of digits after decimals in pi because it is an artificial mind, after all. But, we really can’t put a finger on what exactly these agents might do but we can definitely try to predict it. Bostrom mentions three directions in this regard. First, the intelligent agent can be engineered in such a way that it only pursues the goals that it is told to. Second, its behaviour can be predicted if it were engineered through inheritance. It will inherit the values and motivations of its heir. However, it should be noted that the process can be subject to corruption and might turn counterproductive. The third way of predictability is through instrumental convergence reasons as we discussed earlier.

There are two kinds of problems when we deal with AI alignment: outer misalignment and inner misalignment. Outer misalignment is when we train an AI system which accidentally turns out to be dangerous. This may happen because we cannot perfectly replicate the exact specifications of an AI system because they are too complex. As the specifications are complex, AI might not be able to understand the intentions correctly. The reward mechanism for the objective function defined may even reward some of the misaligned behaviour as well. An objective function is a function that we need to optimise (maximise or minimize) for a given problem or scenario and a reward mechanism is an incentive-based mechanism through which we can teach AI what is correct by providing reward and what is wrong by punishing. Let us understand these terms through a real-life example. Parents teach their children good habits (an objective function) through a reward mechanism i.e. appreciating the child when he/she does something nice or punishing him when he/she does something wrong. The most intuitive way to handle this problem is to provide human feedback to the specification when the AI system is being evaluated. But this solution is not straightforward; because the AI system might be trained for a long-term goal and providing feedback at each step might not be possible until it is seen how the outcome finally turns out to be. For example, an AI system is playing a game; it might be difficult to understand each and every move of the AI until it finally wins. This example relates to the instance of AlphaGo (winning the game of Go with an incredibly surprising move when until 15 minutes everyone thought it was a mistake but it left even Lee Sedol, one of the world’s best Go players, speechless. AlphaGo is an AI system trained by a London-based AI Lab ‘DeepMind’.

Another aspect is that AI is computationally a lot faster than humans. So, we might not be able to match its speed. The problem of Informed Oversight is also worth discussing here. We know that AI is like a black box. We don’t really know exactly how AI is performing certain tasks. So in the case when it goes wrong, we might not be able to trace exactly where it went wrong. What might be of help, in this case, is the emerging concept of Explainable Artificial Intelligence (XAI). So, to tackle the outer misalignment problem, the focus should be on defining an objective function that is safe.

Even if we tackle the outer misalignment problem, we still have to deal with the problem of inner misalignment. Inner misalignment refers to the problem where the AI system learns what we did not intend for it to learn. All AI systems have a purpose defined. They are optimized to achieve that purpose or objective. When AI pursues a different goal than what it was trained to pursue, it is referred to as the problem of inner misalignment. A toy example was shared by Evan Hubinger in a podcast in which an AI is trained to solve a maze by reaching its centre. In the training process, the centre tile of the maze had a green arrow which was incorrectly interpreted by AI as a problem to locate the green arrow rather than finding the centre of the maze. So this is the problem of inner alignment as AI’s learning got deviated from the actual objective. This can prove to be dangerous. This problem is also identified as being analogous to human evolution where the main objective was to increase our genetic fitness but along the way, humans started achieving sub-goals such as love, status, etc.

Richard is of the view that alignment is not equal to inner alignment + outer alignment. His take on both these misalignment problems is that the inner misalignment problem is a trickier problem to solve than outer misalignment. One of the approaches he mentions for tackling this is to add misalignment samples to the training process, which again is not straightforward because AI is a black box and what subgoals AI is trying to achieve is unknown to us unless explainability techniques are used. What researchers fear are large-scale misaligned AI goals but these can’t be put into the training process because they are too complex to simulate.

Conclusion

Richard discussed how it is very possible that humans will train AI systems that will surpass their intelligence which, in turn, would lead to AI systems taking over humans. He has given various arguments throughout his piece which he believes are possibly the future of AI. The focus of this distillation was AI Goal alignment. What we learned is that AI systems can have multiple reasons to have goals that do not align with humans. Also, the misalignment can be intentional or unintentional. We also learned that the second species problem is a probable outcome of the emergence of Artificial General Intelligence because of how fast and complex future AI systems are going to be. Thus, future research should focus on devising ways to train AGI and ASI systems in such a way that they do not pose a threat to humans and align with human values.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.