This article was published as a part of the Data Science Blogathon

Introduction

It’s pretty simple!

Today we will learn to create an AI chatbot from scratch using Intent matching and NLP algorithms.

Let’s see what we are gonna do:

* Prepare our dataset with questions(keywords) and respective intents.

* Prepare a JSON file containing replies for each intent.

* Transform our data into Tf-Idf Vectors.

* Use Deep Neural Network to classify the User’s question into one of the intents the model was trained on.

* Save our model for deploying it in the future.

* Load our saved model and use it to generate replies to the user’s queries.

Preparing our Dataset:

* We need some questions or keywords and the respective intents to create a chatbot using an Intent matching algorithm.

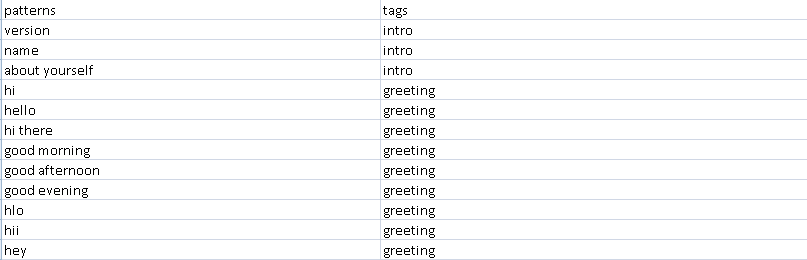

* Here we will create a CSV file containing keywords and their respective intents in the following format ( You can use your own format, All we need is some data with questions or keywords(patterns) and the respective intents(tags) as target variable).

* The more unique the keywords or questions under each intent, The more accurate the replies will be.

* Save your data as “training_data.csv”.

Preparing JSON file with replies:

* Now we have created our data, let’s create replies for each intent.

* In case you don’t know what a JSON file is, Just think of python dictionaries. JSON files work exactly the same.

* All we need is some replies under every intent which will be displayed to the user.

* Here is an example containing the replies under each intent in JSON format.

* Save your replies as “responses.json”.

{ "greeting": ["Hello! How can I help you?", "Hi! Ask me anything.", "Hi! I'm ready to answer all your questions."] }

What is Tf-Idf?

* Term frequency-inverse document frequency.

* It is like a bag of words (vectors with a count of occurrences of each word in a document), But which assigns a weight for each word which becomes very low for frequently occurring words like ‘is’, ‘are’, ‘was’, ‘the’ etc… instead of the count of occurrences.

Formula:

* tf(t,d) = count of t in d / number of words in d

* idf(t) = log(N/(df + 1))

* tf-idf(t, d) = tf(t, d) * log(N/(df + 1))

where, t -> term, d -> document, N -> no.of.documents the word is present.

Now, We have both training data and responses and we know what is Tf-Idf vectors. So let’s start building our chatbot.

Creating and training our model:

* Let’s import necessary modules.

# importing modules import pandas as pd from sklearn.feature_extraction.text import TfidfVectorizer from sklearn.preprocessing import LabelEncoder from tensorflow.keras import Sequential from tensorflow.keras.layers import Dense from tensorflow.keras.models import save_model

* Let’s load our data.

# importing training data

training_data = pd.read_csv("training_data.csv")

* Let’s process our data

(we will convert all the data into lowercase and then transform it into tf-idf vectors). TfidfVectorizer from Scikit-learn will make this easy.

# preprocessing training data training_data["patterns"] = training_data["patterns"].str.lower() vectorizer = TfidfVectorizer(ngram_range=(1, 2), stop_words="english") training_data_tfidf = vectorizer.fit_transform(training_data["patterns"]).toarray()

Here, ngram_range specifies how to transform the data, ngram_range of (1, 2) will have both monograms and bigrams in the Tf-Idf vectors. stop_words specifies the language from which the stop words to be removed.

* Let’s do one-hot encoding

on the Target variable (Intents). Label encoder will do this for you.

# preprocessing target variable(tags)

le = LabelEncoder()

training_data_tags_le = pd.DataFrame({"tags": le.fit_transform(training_data["tags"])})

training_data_tags_dummy_encoded = pd.get_dummies(training_data_tags_le["tags"]).to_numpy()

* Let’s define our model architecture.

# creating DNN chatbot = Sequential() chatbot.add(Dense(10, input_shape=(len(training_data_tfidf[0]),))) chatbot.add(Dense(8)) chatbot.add(Dense(8)) chatbot.add(Dense(6)) chatbot.add(Dense(len(training_data_tags_dummy_encoded[0]), activation="softmax")) chatbot.compile(optimizer="rmsprop", loss="categorical_crossentropy", metrics=["accuracy"])

These parameters worked fine for my data. You can use different parameters which suit your data.

We are doing multi-class classification. Hence, We must use “categorical_crossentropy” loss. Also, We want probabilities of different intents in our output layer. Hence, We must use the “softmax” activation function in our output layer.

* Let’s train our model

with the Tf-Idf vectors of the training data and respective one-hot encoded intents (target variable).

# fitting DNN chatbot.fit(training_data_tfidf, training_data_tags_dummy_encoded, epochs=50, batch_size=32)

I have trained the model for 50 epochs and a batch size of 32. You can change these values according to your dataset.

* Let’s save our model to deploying it in the future.

# saving model file save_model(chatbot, "chatbot")

Congrats!! You have successfully created an AI chatbot from scratch and saved it.

Now, you might be thinking about how to generate replies for questions, You will learn it too.

Now, Consider a new python script “chatbot_main.py” in which we are going to make our chatbot give replies to the users.

* Let’s import necessary modules.

# importing modules import pandas as pd from sklearn.feature_extraction.text import TfidfVectorizer from sklearn.preprocessing import LabelEncoder import numpy as np from tensorflow.keras.models import load_model import json import random

* Let’s load data, model, and responses

# importing training data

training_data = pd.read_csv("training_data.csv")

# loading model

chatbot = load_model("chatbot")

# loading responses

responses = json.load(open("responses.json", "r"))

* Let’s fit the Tf-Idf Vectorizer to our old training data in order to transform new data from the users.

# fitting TfIdfVectorizer with training data to preprocess inputs training_data["patterns"] = training_data["patterns"].str.lower() vectorizer = TfidfVectorizer(ngram_range=(1, 2), stop_words="english") vectorizer.fit(training_data["patterns"])

* Let’s fit the Label encoder to our old target variables in training data to inverse transform the newly predicted targets from our model.

# fitting LabelEncoder with target variable(tags) for inverse transformation of predictions le = LabelEncoder() le.fit(training_data["tags"])

* Let’s define a function that gets a string as an argument and transform it using TfIdfVectorizer which we fit to our training data and then passes the Tf-Idf vector to our model to make a prediction and then it selects the class with highest probability from the predictions and then inverse transform it to get the corresponding intent.

# transforming input and predicting intent

def predict_tag(inp_str):

inp_data_tfidf = vectorizer.transform([inp_str.lower()]).toarray()

predicted_proba = edubot_v2.predict(inp_data_tfidf)

encoded_label = [np.argmax(predicted_proba)]

predicted_tag = le.inverse_transform(encoded_label)[0]

return predicted_tag

* Let’s create a loop by which we can enter our question and get a reply from our chatbot.

# defining chat function

def start_chat():

print("--------------- AI Chat bot ---------------")

print("Ask any queries...")

print("I will try to understand you and reply...")

print("Type EXIT to quit...")

while True:

inp = input("Ask anything... : ")

if inp == "EXIT":

break

else:

if inp:

tag = predict_tag(inp)

response = random.choice(responses[tag])

print("Response... : ", response)

else:

pass

# calling chat function to start chatting start_chat()

Congrats!! You have successfully created an AI chatbot from scratch, saved it, and made the script reply to the user’s questions. You have learned and done so much!!

Thank you!!

About me:

I’m Balamurugan, a First-year student pursuing B.Tech (Computer Science and Engineering) in SASTRA Deemed to be University enthusiastic about data science, deep learning, NLP, and computer vision.

The media shown in this article are not owned by Analytics Vidhya and are used at the Author’s discretion.

Hi, There seems to be a typo in the following line: predicted_proba = edubot_v2.predict(inp_data_tfidf) I guess, the model object variable is 'chatbot'. Please help clarify and it would be great to share a RAW code link to github or something as it will help us test the code quickly. Regards, Acku