This article was published as a part of the Data Science Blogathon

Introduction:

In Machine Learning, one of the main types of learning includes Supervised Learning. Where we already have the correct output and set of features associated with that output. We use some algorithms and try to train them with the existing data and then try to predict the output of new data with only features associated with them. This is like a teacher, where the teacher teaches students about something and tells them what is correct and then when they give exams they need to know what they have learnt and provide with correct answers. KNN is used for both classifications as well as regression tasks in Machine learning.

About KNN:

- KNN tries to find similarities between predictors and values that are within the dataset.

- KNN uses a non-parametric method as there is not a particular finding of parameters to a particular functional form.

- It does not make any type of assumptions about the features and output of the dataset.

- KNN is also called a lazy classifier as it memorizes the training data and not exactly learn and fix the weights. Hence most of the computing work occurs during the classification rather than training time.

- KNN usually works by just trying to see to which class is the new feature near to and it just puts it to the class closest to that point.

Working of KNN Algorithm:

- Initially, we select a value for K in our KNN algorithm.

- Now we go for a distance measure. Let’s consider Eucleadean distance here. Find the euclidean distance of k neighbours.

- Now we check all the neighbours to the new point we have given and see which is nearest to our point. We only check for k-nearest here.

- Now we see to which class there is the highest number obtained. The max number is chosen and we assign our new point to that class.

- In this way, we use the KNN algorithm.

The ideal value of K in KNN:

Here we usually go for an odd number of K as it’s better during voting to see to which numbered class has more votes given and thus we can assign our new class to that.

If we go for too small a value of k, there is a good chance we may have overfitting of data, that’s is the algorithm may perform reasonably well of training but not well on testing data. And, we also may encounter noise if we just use the small value of k, if we have large data.

One way to determine k is to see the error plot for k and run a loop to a set of values, the k associated with the lowest error can be used for our problem. I will be performing these steps during our implementation of Heart disease data.

Pros and Cons of KNN algorithm:

Pros:

- We can implement the algorithm with ease.

- It is very effective against noisy data by averaging k-nearest neighbours.

- Works well in case of large data.

- The decision boundaries that are formed can be of arbitrary shapes.

Cons:

- Curse of dimensionality: Domination of distances by irrelevant attributes.

- Finding the correct value of k may be time expensive sometimes.

- Very high computation cost due to its distance measure.

Implementation of K-Nearest Neighbour on Heart disease dataset.

I have used the Heart disease UCI dataset for this task, which is available here:

1. Importing all Libraries:

import pandas as pd import numpy as np import seaborn as sns import matplotlib.pyplot as plt from sklearn.neighbors import KNeighborsClassifier from sklearn.metrics import accuracy_score

We can see here we have imported KNeighorsClassifier for our classification task. We import this from sklearn library. Sklearn has almost all the machine learning classifiers defined and we can call them and use them for our problem.

2. Read the heart disease dataset:

df = pd.read_csv('heart.csv')

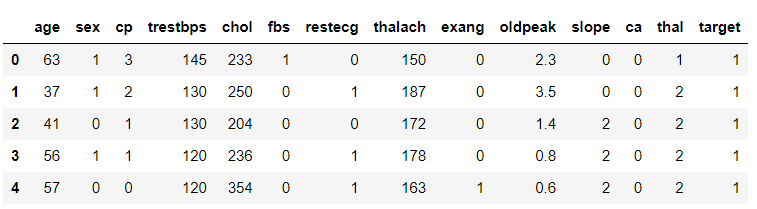

df.head()

As we can see target tells us if the person is suffering from heart disease or not.

sns.countplot(df['target'])

.png)

We will proceed with this as there isn’t much unbalance in target data.

3. Performing KNN by splitting to train and test set:

x= df.iloc[:,0:13].values

y= df['target'].values

from sklearn.model_selection import train_test_split

x_train, x_test, y_train, y_test= train_test_split(x, y, test_size= 0.25, random_state=0)

from sklearn.preprocessing import StandardScaler

st_x= StandardScaler()

x_train= st_x.fit_transform(x_train)

x_test= st_x.transform(x_test)

This step is common for all ML tasks and here I have just split the dataset and scaled it for further processing.

4. Checking for the best value of k:

error = []

# Calculating error for K values between 1 and 30

for i in range(1, 30):

knn = KNeighborsClassifier(n_neighbors=i)

knn.fit(x_train, y_train)

pred_i = knn.predict(x_test)

error.append(np.mean(pred_i != y_test))

plt.figure(figsize=(12, 6))

plt.plot(range(1, 30), error, color='red', linestyle='dashed', marker='o',

markerfacecolor='blue', markersize=10)

plt.title('Error Rate K Value')

plt.xlabel('K Value')

plt.ylabel('Mean Error')

print("Minimum error:-",min(error),"at K =",error.index(min(error))+1)

# Output => Minimum error:- 0.13157894736842105 at K = 7

.png)

5. Apply K-NN Algorithm:

classifier= KNeighborsClassifier(n_neighbors=7) classifier.fit(x_train, y_train) y_pred= classifier.predict(x_test) from sklearn.metrics import confusion_matrix cm= confusion_matrix(y_test, y_pred)

# Output =>array([[26, 7],

[ 3, 40]], dtype=int64)

This way we can see our confusion matrix. Here I specified the k value as 7 as we got the lowest mean error at 7.

6. Accuracy:

accuracy_score(y_test, y_pred)

# Output => 0.868421052631579

We got 86% accuracy on 25% of the dataset and this is a good sign. We could improve them by performing more hyperparameter tuning.

References:

1. https://www.dataminingbook.com/book/python-edition

2. https://www.kaggle.com/ronitf/heart-disease-uci

3. Image: https://unsplash.com/photos/KgLtFCgfC28

Applications of KNN:

Now we know about KNN and how to implement them. Let’s see some scenarios where KNN is used.

1. Music Recommendation System: Probably any recommendation system. But, in the case of music systems, we have a large amount of music coming and there is a high chance that we are getting the same music with different versions being recommended, These could be analyzed using KNN. We could even use it to see which music is of the person’s liking.

2. Outlier Detection: KNN has the ability to identify outliers.

3. Similar documents can be identified using KNN Algorithm.

Conclusion

You can find the complete code here:

https://github.com/Siddharth1698/Machine-Learning-Codes/tree/main/knn_heart_disease

Feel free to connect with me on:

1. https://www.linkedin.com/in/siddharth-m-426a9614a/

2. https://github.com/Siddharth1698