This article was published as a part of the Data Science Blogathon

Introduction

Docker is a platform that deals with building, running, managing, and distributing applications by using containers. Don’t worry if you are hearing the term ‘container’ in this context for the first time. You could imagine it as a software unit that contains the application code, required libraries, and other dependencies needed for the application that you want to build.

When do we need Docker?

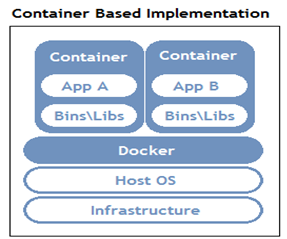

Let us suppose that we have two different Python applications that we want to host on a single server (either a physical machine or a virtual machine). Let us further assume that these two Python applications need different versions of Python and different libraries and dependencies. Running different versions of Python side by side on the same machine, each with its own set of libraries, is hard to manage because the dependencies can conflict. The traditional alternatives are two different physical machines or a single physical machine that is powerful enough to host two virtual machines. Both solutions incur a substantial cost.

To avoid this cost and still have an efficient solution, we can use Docker. The machine on which Docker is installed and run is known as the ‘Host’.

https://www.aquasec.com/cloud-native-academy/docker-container/

To deploy the applications on this host, we can create Docker containers that contain everything needed to run the applications. These containers do not carry a full operating system of their own. Instead, the kernel of the host’s operating system is shared across all the containers that we create, while each container keeps its own filesystem, libraries, and processes. This keeps the containers isolated from each other.

Some Docker Terminologies

Docker Engine

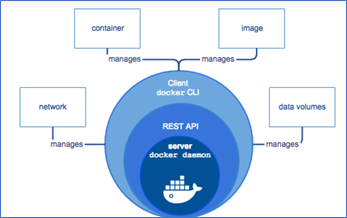

Docker Engine is an open-source containerization technology for building and containerizing applications. It works as a client-server application and has a Server, a REST API, and a Client as its core components.

Docker Daemon

The Server runs the Docker Daemon, a process that manages Docker images, containers, networks, and storage volumes. Users can interact with it using the Docker Client.

Docker Engine REST API

An API that applications use to interact with the Docker Daemon. It can be accessed through any HTTP client.
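As a rough illustration of what access through an HTTP client can look like, the Python sketch below sends GET requests to the Docker Engine API using only the standard library. It assumes a Linux or macOS host where the daemon listens on the default Unix socket /var/run/docker.sock. The names UnixSocketHTTPConnection and docker_get are hypothetical helpers made up for this example and are not part of Docker.

```python
import http.client
import json
import socket

# Assumption: the Docker Daemon listens on its default Unix socket.
DOCKER_SOCKET = "/var/run/docker.sock"


class UnixSocketHTTPConnection(http.client.HTTPConnection):
    """HTTP connection that talks over a Unix domain socket instead of TCP."""

    def __init__(self, socket_path, timeout=5):
        # The host name is only used for the Host header; no TCP connection is made.
        super().__init__("localhost", timeout=timeout)
        self.socket_path = socket_path

    def connect(self):
        sock = socket.socket(socket.AF_UNIX, socket.SOCK_STREAM)
        sock.settimeout(self.timeout)
        try:
            sock.connect(self.socket_path)
        except OSError:
            sock.close()
            raise
        self.sock = sock


def docker_get(path, socket_path=DOCKER_SOCKET):
    """Send a GET request to the Docker Engine API; return (status, parsed JSON)."""
    conn = UnixSocketHTTPConnection(socket_path)
    try:
        conn.request("GET", path)
        response = conn.getresponse()
        body = response.read().decode("utf-8")
        return response.status, json.loads(body)
    finally:
        conn.close()
```

With a daemon running, docker_get("/version") should return the daemon's version details, and docker_get("/containers/json?all=true") should return a list of containers, roughly the information that docker ps -a prints.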

https://www.aquasec.com/cloud-native-academy/docker-container/docker-architecture/

Docker CLI

Users can interact with the Docker Daemon using Docker commands through this command-line interface client.

Docker Image

A Docker image is a template that consists of the application and all the other dependencies needed for running the application on Docker.

Docker Container

A running instance of a Docker image is known as a Docker container.

Docker Hub

Docker Hub is an online repository where ready-to-use Docker images are available.

Installing Docker

Docker Desktop can be installed for your Mac or Windows environment from the following link:

https://docs.docker.com/desktop/

Docker CLI Commands

docker create

This command creates a new container from the specified image without starting it.

$ docker create ubuntu

The above command creates a container using the latest Ubuntu image.

docker start

This command starts a stopped container. The command below starts the container whose container ID begins with 70257.

$ docker start 70257

docker stop

This command stops a running container. The command below stops the container whose container ID begins with 70257.

$ docker stop 70257

docker run

This command is used to create a container and then start it.

$ docker run ubuntu

The above command creates a container from the latest Ubuntu image and then starts it. If we want to interact with the created container, we can add the ‘-it’ option after docker run:

$ docker run -it ubuntu

This provides us with the terminal to interact with the created Ubuntu container.

root@e4e633428474:/#

docker rm

This command is used to delete a container.

$ docker rm 70257

The above command deletes the container whose container ID begins with 70257. The container must be stopped before it can be removed.
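The commands covered so far form a simple lifecycle: create, start, stop, remove. The Python sketch below chains them through the docker CLI using subprocess. It assumes the docker executable is on the PATH. run_docker and container_lifecycle are hypothetical helper names chosen for this example.

```python
import subprocess


def run_docker(*args):
    """Run `docker <args>` and return its trimmed standard output."""
    result = subprocess.run(
        ["docker", *args], check=True, capture_output=True, text=True
    )
    return result.stdout.strip()


def container_lifecycle(image, run=run_docker):
    """Create, start, stop, and remove one container; return its ID."""
    # On success, docker create prints the new container's ID on standard output.
    container_id = run("create", image)
    run("start", container_id)
    run("stop", container_id)
    run("rm", container_id)
    return container_id
```

Calling container_lifecycle("ubuntu") on a machine with Docker installed would walk one Ubuntu container through all four steps. The run parameter exists only so the sequence can be exercised with a stand-in function when Docker is not available.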

docker ps

This command lists all the containers currently running on the Docker host.

$ docker ps

To view all the containers irrespective of their running status, we can add ‘-a’ to the above command.

$ docker ps -a

docker images

This command enables viewing all the Docker images present on the Docker Host.

$ docker images

Build a Docker container with your ML model

Step 1: Create a Dockerfile

Creating a Docker container begins by creating a Dockerfile. A Dockerfile, simply put, is a text file that has all the instructions needed to build the image from which the container is created.

A Docker image has a base image on top of which layers are added, with each layer adding some files or dependencies.

FROM continuumio/anaconda3:4.4.0
COPY . /usr/app/
EXPOSE 5000
WORKDIR /usr/app/
RUN pip install -r requirements.txt
CMD python flask_api.py

In the code above, we started with a base image of Anaconda. The COPY command copies all the files from the current directory into the specified directory of the image. The EXPOSE command documents that the application inside the container listens on the specified port (5000 here) at runtime; it does not by itself publish the port to the host. The WORKDIR command sets the working directory for the instructions that follow it and for the running container. The RUN command installs all the required Python dependencies listed in requirements.txt. The last command, CMD, runs the flask_api.py file when the container starts. This file can load a trained ML model which is used to make predictions on new data. It serves all API requests and hence contains a function that can be called through an API endpoint.
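The article does not show flask_api.py itself, so the following is only a minimal sketch of what such a file might look like, assuming Flask is listed in requirements.txt. The /predict route, the JSON field name features, and the averaging predict function are all hypothetical stand-ins; a real file would load and call a trained model instead.

```python
from flask import Flask, jsonify, request

app = Flask(__name__)


def predict(features):
    """Hypothetical stand-in for a trained model: returns the mean of the inputs.

    A real flask_api.py would load a trained model and call it here.
    """
    return sum(features) / len(features)


@app.route("/predict", methods=["POST"])
def predict_endpoint():
    # Expect a JSON body such as {"features": [1.0, 2.0, 3.0]}.
    payload = request.get_json(silent=True) or {}
    features = payload.get("features")
    if not isinstance(features, list) or not features:
        return jsonify(error="expected a non-empty 'features' list"), 400
    return jsonify(prediction=predict(features))


def main():
    # Bind to 0.0.0.0 so the server is reachable from outside the container;
    # port 5000 matches the EXPOSE line in the Dockerfile.
    app.run(host="0.0.0.0", port=5000)

# When the file is run as a script (as the Dockerfile's CMD does), main()
# would be called under the usual `if __name__ == "__main__":` guard.
```

Keeping the prediction logic in its own function, separate from the route handler, makes it easy to swap the placeholder for a real model later without touching the API code.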

Step 2: Build an image with the Dockerfile

To build a Docker image from the created Dockerfile, we run the docker build command, which builds the image as per the instructions given in the Dockerfile.

$ docker build -t IMAGE_NAME:TAG .

In the above command, the image name is given by the user along with a tag, and the trailing ‘.’ tells Docker to use the current directory as the build context. Tagging an image is equivalent to giving it an alias, which helps in distinguishing different versions of the image.
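To make the IMAGE_NAME:TAG convention concrete, here is a small Python sketch that splits an image reference into its name and tag. It relies on the fact that Docker uses the tag latest when none is given. split_image_reference is a hypothetical helper written for this example, and it deliberately ignores digest references of the form name@sha256:...

```python
def split_image_reference(reference):
    """Split 'name[:tag]' into (name, tag), defaulting the tag to 'latest'.

    Simplification: digests such as 'name@sha256:...' are not handled.
    """
    last_slash = reference.rfind("/")
    last_colon = reference.rfind(":")
    # A colon that appears before the last slash belongs to a registry
    # host:port prefix, not to a tag.
    if last_colon > last_slash:
        return reference[:last_colon], reference[last_colon + 1:]
    return reference, "latest"
```

For example, the base image in the Dockerfile, continuumio/anaconda3:4.4.0, splits into the name continuumio/anaconda3 and the tag 4.4.0, while a bare ubuntu resolves to the tag latest.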

We can test the image we built on our local machine at this point by running a container from it. To reach the API on port 5000 from the host, add ‘-p 5000:5000’ after docker run in the command below:

$ docker run IMAGE_NAME:TAG
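Once the container is running, the API can be exercised from the host with a small client. The Python sketch below uses only the standard library. It assumes that port 5000 of the container was published to the host and that the app accepts a JSON POST on a /predict endpoint; that endpoint and the features field are hypothetical, since the article does not specify the API.

```python
import json
import urllib.request

# Assumptions: port 5000 is published to the host, and the app exposes a
# hypothetical POST /predict endpoint that accepts a JSON body.
BASE_URL = "http://localhost:5000"


def build_predict_request(features, base_url=BASE_URL):
    """Build (but do not send) a JSON POST request for the prediction endpoint."""
    body = json.dumps({"features": features}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/predict",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def request_prediction(features, base_url=BASE_URL, timeout=5):
    """Send the request to the running container and return the parsed JSON reply."""
    req = build_predict_request(features, base_url)
    with urllib.request.urlopen(req, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Calling request_prediction([1.0, 2.0, 3.0]) while the container is up would send the features to the model and return whatever JSON the API responds with.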

About Author

Nibedita completed her master’s in Chemical Engineering from IIT Kharagpur in 2014 and is currently working as a Senior Consultant at AbsolutData Analytics. In her current capacity, she works on building AI/ML-based solutions for clients from an array of industries.