This article was published as a part of the Data Science Blogathon

Overview

In this article, we will learn how to create a CI/CD pipeline using Google Cloud services: Cloud Source Repositories, Container Registry, Cloud Build, and Google Kubernetes Engine.

Prerequisites

- Basic Google Cloud knowledge and an account to follow along with the hands-on part

- Basic Git commands

- Blog: for an understanding of Docker and containers, and for context on the application that we are going to use

Introduction

Once we have developed and deployed an application or product, it needs to be continuously updated based on user feedback or the addition of new features. This process should be automated; without automation, we have to run the same development and deployment steps and commands again for every change to the application.

With a Continuous Integration and Continuous Delivery (CI/CD) pipeline, we can automate the complete workflow of building, testing, packaging, and deploying. The pipeline is triggered whenever the application changes, that is, whenever there is a new commit to the existing code repository.

You can check out my previous blog if you want a CI/CD pipeline using AWS cloud.

If you don’t have a GCP account, create one; Google provides $300 in free credits for new users. Go to the following link to get started.

Project Setup

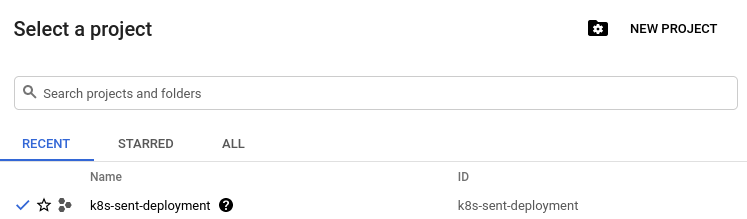

Once the account is created, the home page shows an option to create a project. Go ahead and create a new project.

Source: Author

I have created a project named k8s-sent-deployment, which I will use to set up the complete pipeline.

Let’s learn about GCP services and create our CI/CD pipeline.

Container Registry

Container Registry is used to store Docker container images, similar to Docker Hub, AWS ECR, and other private container registries.

Benefits of Container Registry

- Secure, Private Docker Registry

- Build and deploy automatically

- In-depth vulnerability scanning

- Lock down risky images

- Native Docker support

- Fast, high-availability access

For more details, you can check this link.

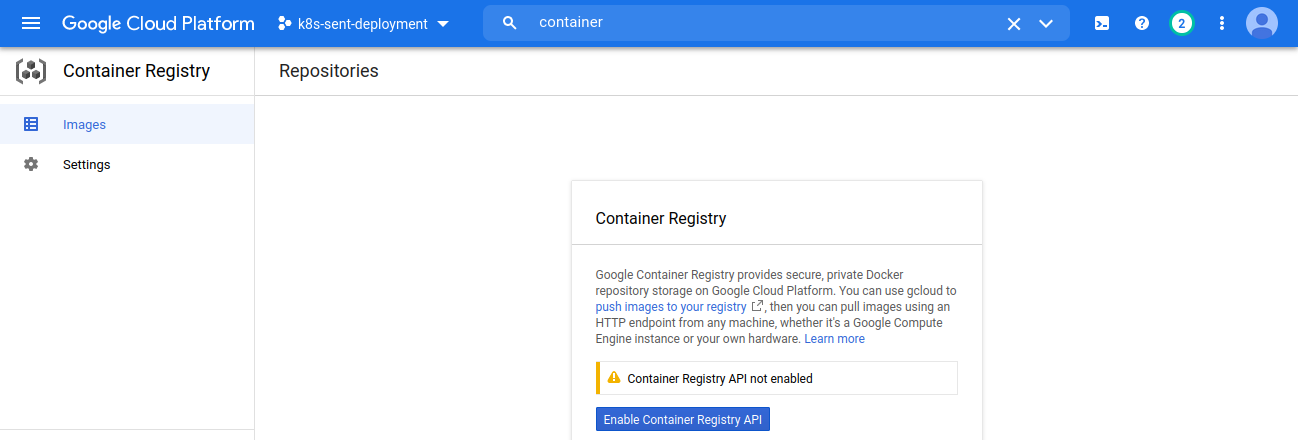

To use the service, we need to activate its API: search for Container Registry in the search bar, and on the next page click the Enable Container Registry API button.

Source: Author

Google Source Repositories

It is a version control service, similar to GitHub or Bitbucket, used to store, manage, and track code.

For more details refer to the Google Source Repositories page.

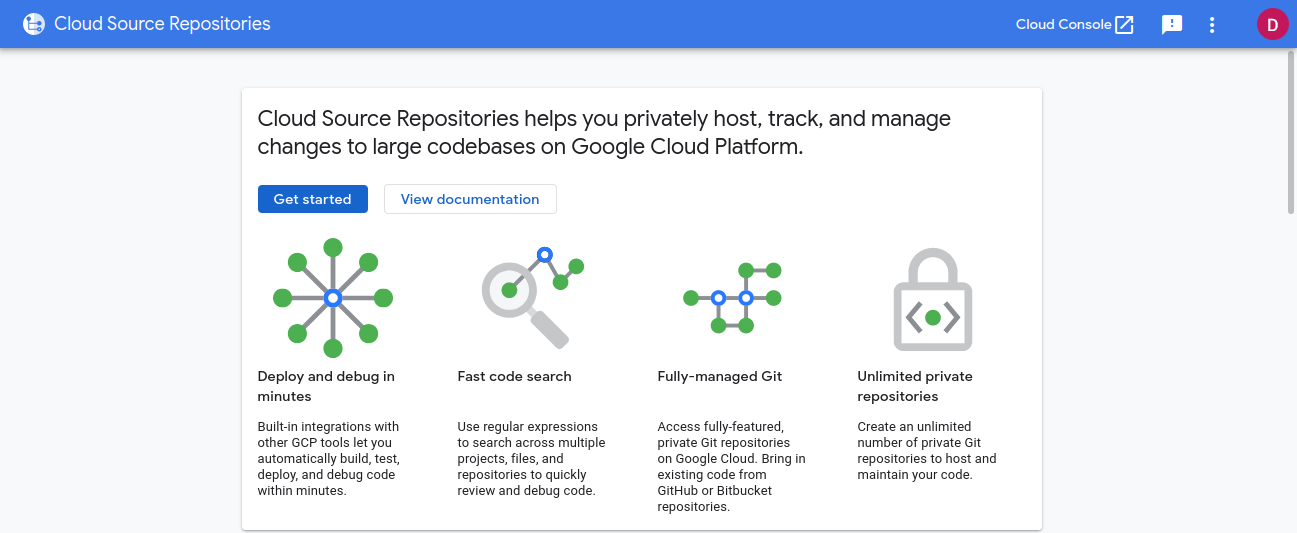

Now, to use the service, we need to activate its API: search for Cloud Source Repositories API, and on the landing page click Enable.

Once the API is enabled, click the Go to Cloud Source Repositories button and then click Get Started.

Source: Author

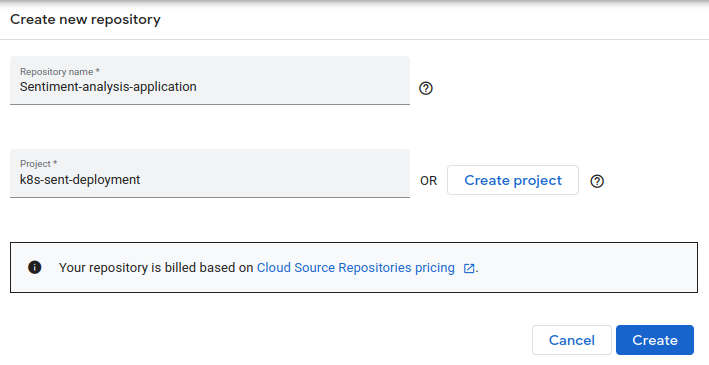

There is an option to either create a new repository or connect an external repository such as GitHub or Bitbucket. For this article, I will create a new repository. If you want to use an external repository, skip the following section.

Now, select Create a new repository, enter a repository name, select the project that we created earlier, and then click Create.

Source: Author

You can either download the Sentiment Analysis application code from my GitHub account or use your own application. The flow will be the same, with a few small changes.

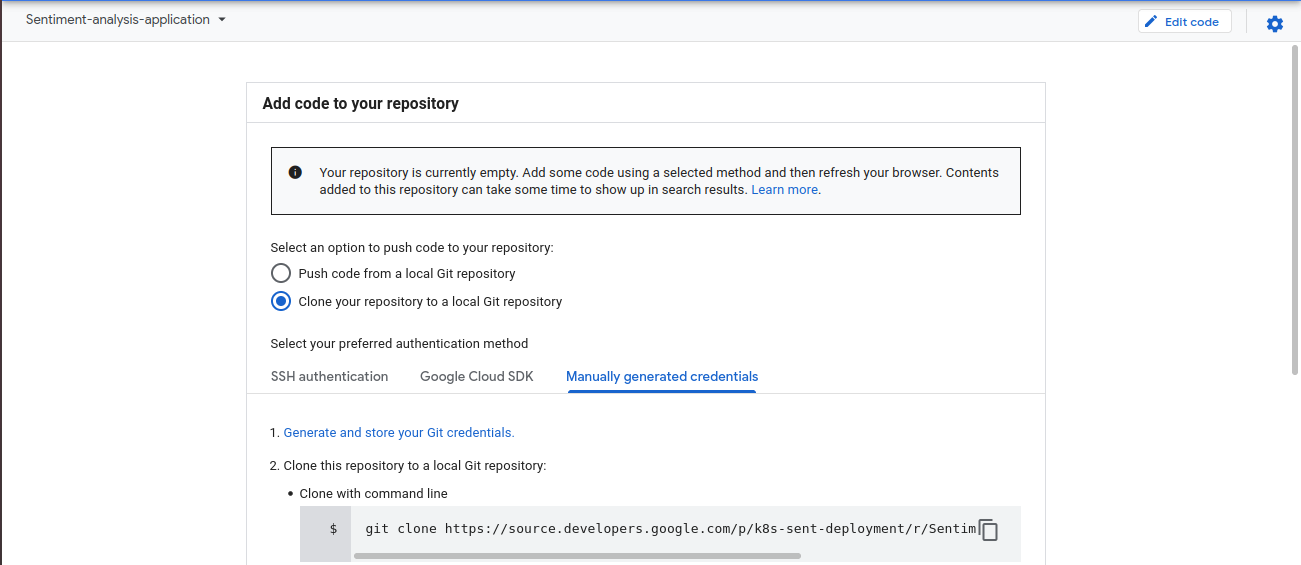

Once the Cloud Source Repository is created, you will land on a page titled Add code to your repository. Select Clone repository to a local Git repository and follow the steps listed under Manually generated credentials.

Source: Author

Step 1 is to Generate and store Git credentials. Click the link and it will display a command; copy it and run it in your local terminal or command prompt. Now we can access our Cloud Source Repository.

Then follow the next steps: create a folder on your local system, open the terminal or command prompt, and run the git clone command to clone the repository. Copy the application code files into the cloned folder, and then run the commit and push commands to update the Google Cloud Source Repository.

Now our source repository is ready to use.

Google Kubernetes Engine (GKE)

Kubernetes, also known as K8s, is an open-source system for automating deployment, scaling, and management of containerized applications.

GKE is a managed Kubernetes service that runs on Google’s infrastructure. Some of its benefits are:

- Auto Scale

- Auto Upgrade

- Auto Repair

- Logging and Monitoring

- Load Balancing

For more details, you can refer to the GKE documentation.

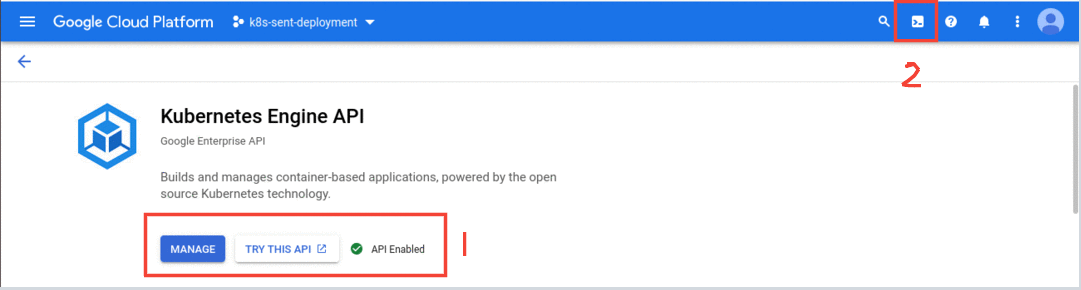

Now to use the service, we need to enable the API. Search for Kubernetes Engine API in the search bar and enable the API.

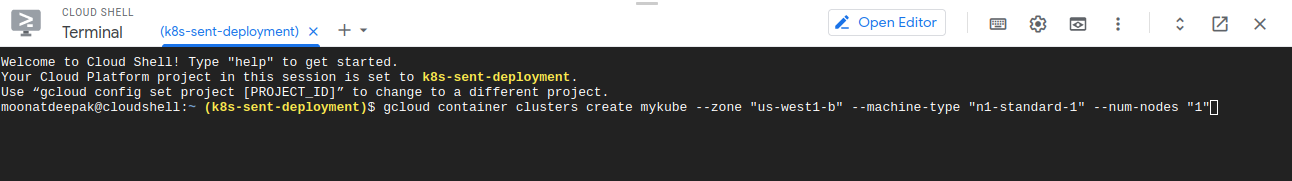

Once the API is enabled, we need to create a cluster. To do that, open Cloud Shell; you will find the Activate Cloud Shell icon at the top right of the console.

Source: Author

Now, to create a cluster, enter the following command in Cloud Shell:

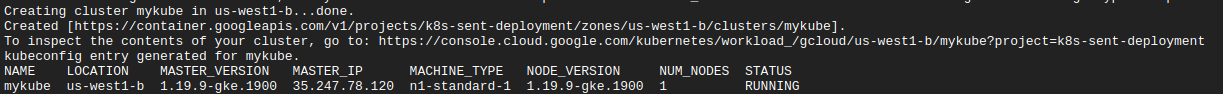

    gcloud container clusters create mykube --zone "us-west1-b" --machine-type "n1-standard-1" --num-nodes "1"

It will create a Kubernetes cluster named ‘mykube’ with one compute node of the n1-standard-1 machine type.

Source: Author

Now we have to create the following configuration files for our pipeline:

Dockerfile: A simple file that consists of instructions to build a Docker image. Each instruction in a Dockerfile is a command or operation, for example which operating system to use, which dependencies to install, or how to compile the code, and each such instruction acts as a layer. To learn more about Docker, containers, and how to create a Dockerfile, check this blog.

    FROM python:3.8-slim-buster
    WORKDIR /app
    COPY . /app
    RUN pip install -r requirements.txt
    EXPOSE 5000
    CMD ["python3","app.py"]

Deployment YAML: To run an application we need to create a Deployment object and we can do that using a YAML file

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: sentiment
    spec:
      replicas: 2
      selector:
        matchLabels:
          app: sentimentanalysis
      template:
        metadata:
          labels:
            app: sentimentanalysis
        spec:
          containers:
          - name: nlp-app
            image: gcr.io/k8s-sent-deployment/myapp:v1
            ports:
            - containerPort: 5000

- apiVersion: Version of the Kubernetes API

- kind: Kind of object to create, here it’s Deployment

- metadata: Data about the object that identifies it

- spec: Specification of the object. It includes the replicas (number of Pods), the labels, the container image (which we will build from the Dockerfile and push to Google Container Registry), and the container port number

Service YAML: To expose an application running on a set of Pods as a network service we need a Service YAML file.

    apiVersion: v1
    kind: Service
    metadata:
      name: sentimentanalysis
    spec:
      type: LoadBalancer
      selector:
        app: sentimentanalysis
      ports:
      - port: 80
        targetPort: 5000

In this file, we have specified kind: Service and, in the spec, type: LoadBalancer to automatically distribute the load. The app label in the selector is the same as in the Deployment YAML file, which is how the Service finds the Pods. The port mapping forwards port 80 of the Service to container port 5000.

For more details on Deployment and Service, check out the links in the Reference section.
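
To make the link between the two manifests concrete, here is a minimal Python sketch of the matching rule described above: a Service sends traffic to Pods whose labels contain every key/value pair of its selector. The helper names `selector_matches` and `forwarded_ports` are hypothetical and exist only for this illustration; they are not part of Kubernetes or any client library.

```python
def selector_matches(selector, labels):
    """Return True when every key/value pair of the selector is in the labels."""
    # An empty selector is treated as "no match" here; Kubernetes does not
    # assign Pods automatically to a Service defined without a selector.
    return bool(selector) and all(labels.get(k) == v for k, v in selector.items())


def forwarded_ports(service_spec):
    """List (service port, container target port) pairs from a Service spec."""
    # When targetPort is omitted, Kubernetes defaults it to the value of port.
    return [(p["port"], p.get("targetPort", p["port"])) for p in service_spec["ports"]]


# Values copied from the Deployment and Service manifests, written as Python dicts.
pod_labels = {"app": "sentimentanalysis"}
service_spec = {
    "type": "LoadBalancer",
    "selector": {"app": "sentimentanalysis"},
    "ports": [{"port": 80, "targetPort": 5000}],
}

print(selector_matches(service_spec["selector"], pod_labels))  # True
print(forwarded_ports(service_spec))  # [(80, 5000)]
```

If the label in the Deployment template were changed without updating the Service selector, `selector_matches` would return False, which corresponds to a Service that has no Pods behind it.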

Cloud Build

Cloud Build is a service that executes your builds on Google Cloud Platform’s infrastructure.

Cloud Build can import source code from a variety of repositories or cloud storage spaces, execute a build to your specifications, and produce artifacts such as Docker containers or Java archives. (Source: Google Cloud Build Doc)

It executes the commands in steps and is similar to executing commands in a script.

    steps:
    # Build the image
    - name: 'gcr.io/cloud-builders/docker'
      args: ['build', '-t', 'gcr.io/$PROJECT_ID/myapp:v1', '.']
      timeout: 180s
    # Push the image
    - name: 'gcr.io/cloud-builders/docker'
      args: ['push', 'gcr.io/$PROJECT_ID/myapp:v1']
    # Deploy container image to GKE
    - name: "gcr.io/cloud-builders/gke-deploy"
      args:
      - run
      - --filename=K8s_configs/
      - --image=gcr.io/$PROJECT_ID/myapp:v1
      - --location=us-west1-b
      - --cluster=mykube

In the first step, we build the Docker image, and in the next step, we push the image to Google Container Registry. The final step deploys the application on the Kubernetes cluster: filename is the directory that holds the Deployment and Service YAML files, and image, location, and cluster specify the image to deploy and the cluster that we created earlier.
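
As a rough mental model of this step-by-step execution, the following Python sketch runs a list of steps in order and stops at the first failure. It assumes Cloud Build’s default behaviour, where steps run one after another and a failing step ends the build. `run_steps` and the lambda stand-ins are hypothetical names for illustration; real steps run containers, not Python functions.

```python
def run_steps(steps):
    """Run (name, action) pairs in order and stop at the first failure.

    Each action is a callable that returns True on success. The result is the
    list of completed step names and the name of the failed step (or None).
    """
    completed = []
    for name, action in steps:
        if not action():
            return completed, name
        completed.append(name)
    return completed, None


# Stand-ins for the three steps in the build config.
pipeline = [
    ("build image", lambda: True),
    ("push image", lambda: False),  # simulate a failed push
    ("deploy to GKE", lambda: True),
]

completed, failed = run_steps(pipeline)
print(completed, failed)
```

With the simulated push failure, only the build step completes and the deploy step never runs, which is why a broken image never reaches the cluster.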

Note: $PROJECT_ID is a built-in substitution; Cloud Build replaces it with the ID of the project in which the build runs.
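
The effect of this substitution can be imitated in a few lines of Python. This is only a sketch that uses the standard library’s `string.Template` to mimic the replacement, not how Cloud Build implements it; `expand_substitutions` is a hypothetical helper name.

```python
from string import Template


def expand_substitutions(args, substitutions):
    """Replace $NAME placeholders in each argument with the given values."""
    return [Template(arg).substitute(substitutions) for arg in args]


# Arguments of the build step from the Cloud Build config file.
build_args = ["build", "-t", "gcr.io/$PROJECT_ID/myapp:v1", "."]

expanded = expand_substitutions(build_args, {"PROJECT_ID": "k8s-sent-deployment"})
print(expanded)
# ['build', '-t', 'gcr.io/k8s-sent-deployment/myapp:v1', '.']
```

Note that the expanded image name is the same one referenced in the Deployment YAML file, so the image that the pipeline pushes is the image that the cluster pulls.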

Now we have everything to build our pipeline, so let’s start building one.

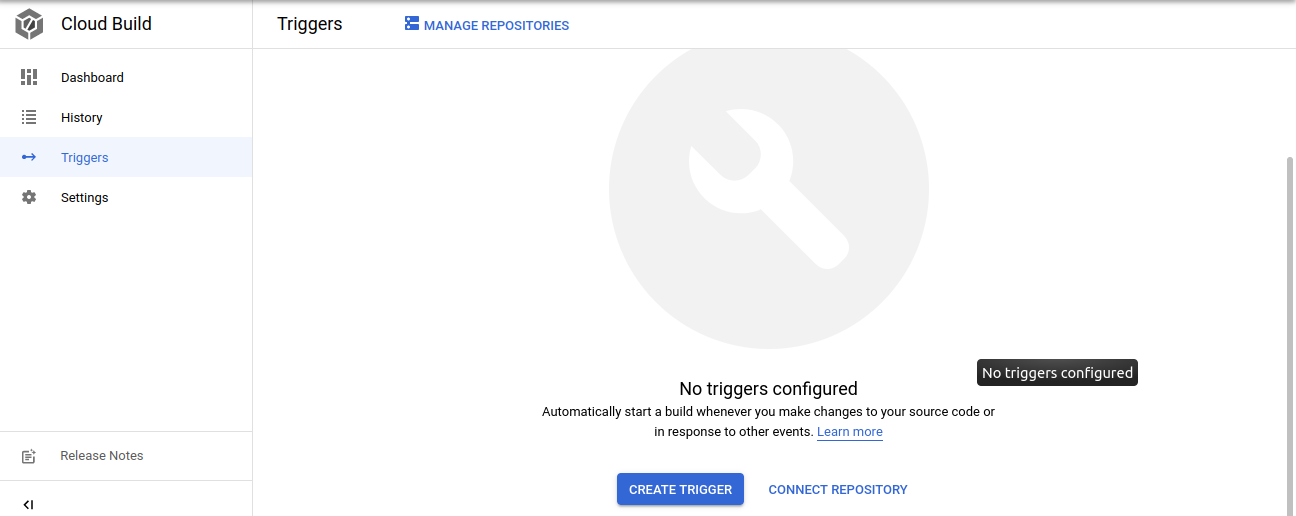

First, we need to create a Trigger so that the pipeline runs whenever new changes are made in the code repository. If you are using GitHub or Bitbucket as the source repository, follow this link to connect to your repository.

To create a Trigger, search for Cloud Build in the console and click CREATE TRIGGERS.

Source: Author

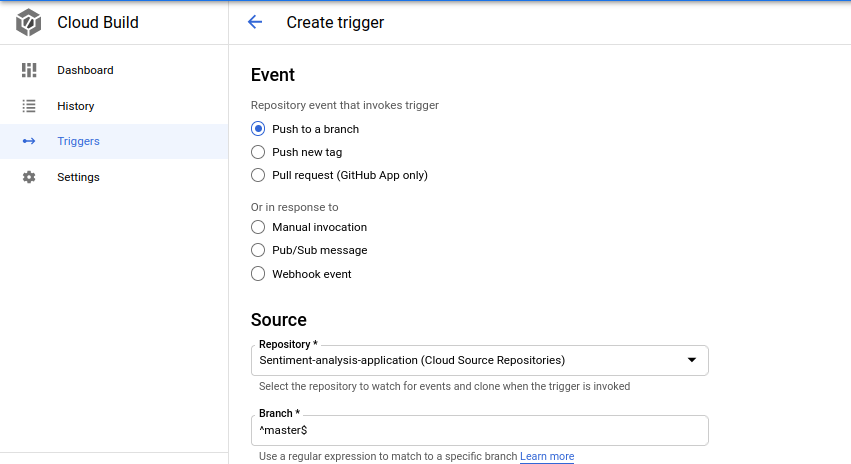

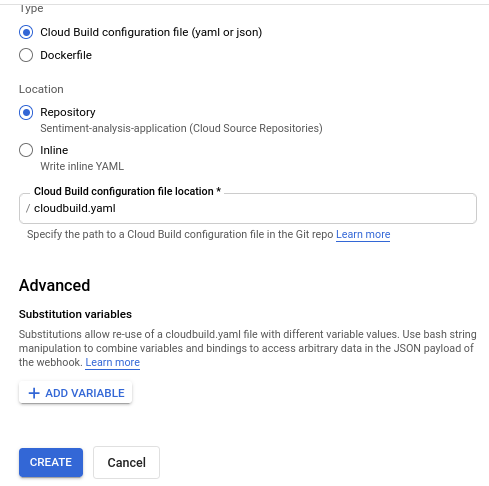

On the next page, select the event that should trigger the pipeline and provide the repository and branch to monitor for changes. For the type, select Cloud Build config file, provide the file location, and then click CREATE.

Source: Author

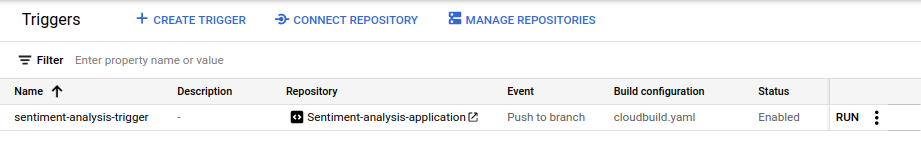

Once the Trigger is set up, we can either run the pipeline manually or make some changes in the code repository, in which case the pipeline will trigger automatically.

Source: Author

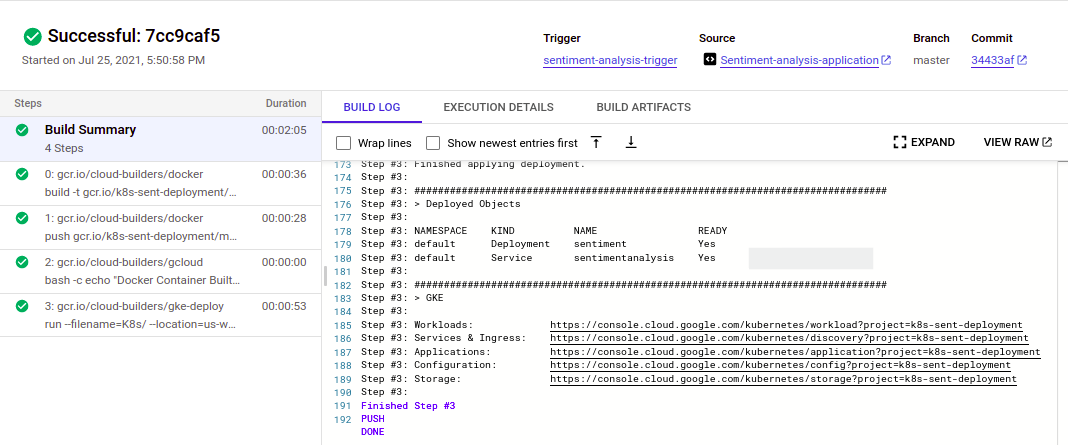

Let’s first check the manual process: click RUN and then click RUN TRIGGER. This starts the pipeline, which follows the steps in the Cloud Build YAML file. It will take 3-4 minutes to complete the build. Once the build is completed, we can check Container Registry for the Docker image and Kubernetes Engine to confirm that the Workloads and Services are up and running. If the build fails, we can look into the logs, find where the error is, and try to resolve it.

In my case, it failed once due to a permission issue. If you face such a container permission issue, add an IAM policy binding using the IAM console or through Cloud Shell:

gcloud projects add-iam-policy-binding k8s-sent-deployment --member=serviceAccount:@cloudbuild.gserviceaccount.com --role=roles/container.developer

Make sure to use the correct Cloud Build service account: your project number goes in front of @cloudbuild.gserviceaccount.com.
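
The member string is easy to get wrong, so here is a small Python sketch that assembles it. It assumes the project uses a Cloud Build service account of the form PROJECT_NUMBER@cloudbuild.gserviceaccount.com; the project number `123456789012` is a made-up placeholder, and both function names are hypothetical.

```python
def cloud_build_member(project_number):
    """Build the IAM member string for the Cloud Build service account."""
    # The account is keyed by the numeric project number, not the project ID.
    if not str(project_number).isdigit():
        raise ValueError("expected the numeric project number, not the project ID")
    return f"serviceAccount:{project_number}@cloudbuild.gserviceaccount.com"


def iam_binding_command(project_id, project_number, role="roles/container.developer"):
    """Return the gcloud command from the article as a list of arguments."""
    return [
        "gcloud", "projects", "add-iam-policy-binding", project_id,
        f"--member={cloud_build_member(project_number)}",
        f"--role={role}",
    ]


# 123456789012 is a placeholder; use the project number shown on your dashboard.
command = iam_binding_command("k8s-sent-deployment", "123456789012")
print(" ".join(command))
```

Passing the project ID (for example k8s-sent-deployment) where the number belongs raises a ValueError instead of producing a binding for an account that does not exist.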

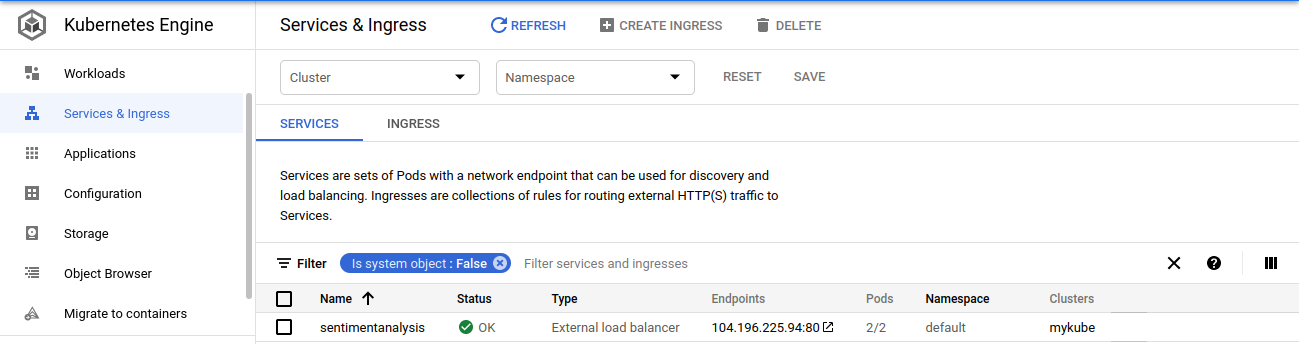

- Workloads are deployable units of computing that can be created and managed in a cluster

- Services are sets of Pods with a network endpoint that can be used for discovery and load balancing

- Ingresses are collections of rules for routing external HTTP(S) traffic to Services

Source: Author

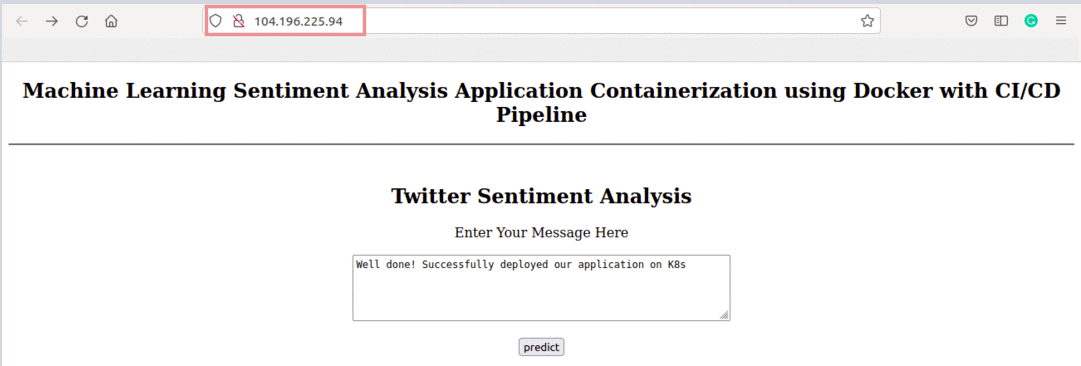

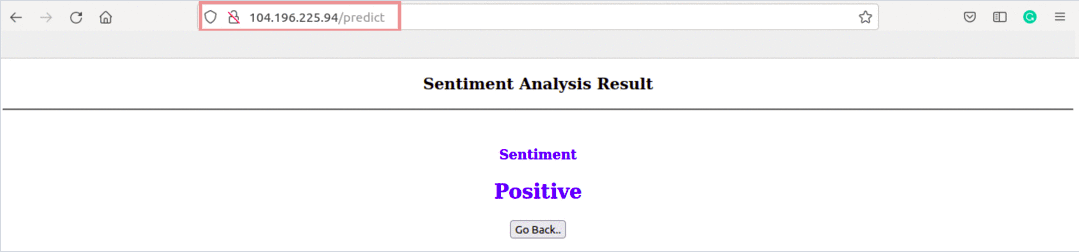

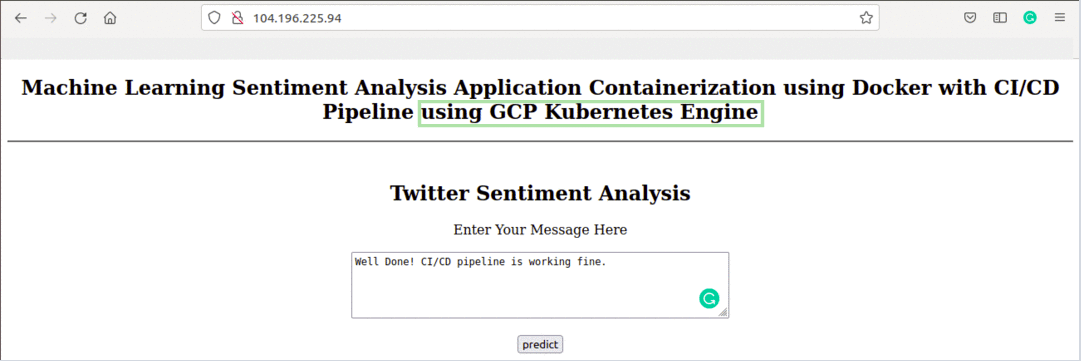

Now we can go to the endpoint and check our application.

Source: Author

Great, our Flask application is running fine.

Now, let’s make some changes in the code repository, and the build will trigger automatically.

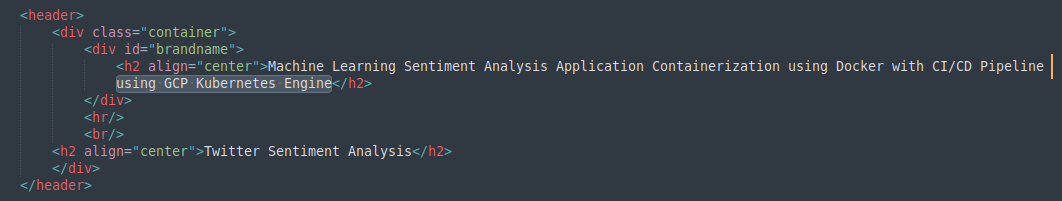

I have added the line ‘using GCP Kubernetes Engine’ to home.html in the template directory and pushed the changes to the source repository.

Source: Author

Once the build is complete, refresh the endpoint link and check for the changes.

Source: Author
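
Instead of refreshing the page by hand, the same check can be scripted. The sketch below uses only the Python standard library; `page_is_healthy` and `check_endpoint` are hypothetical helper names, and EXTERNAL_IP stands for whatever address your Service shows in the console.

```python
import urllib.request


def page_is_healthy(status, body, expected_text=None):
    """Decide whether a response looks like the deployed application."""
    if status != 200:
        return False
    return expected_text is None or expected_text in body


def check_endpoint(url, expected_text=None, timeout=10):
    """Fetch `url` and report whether it returned the expected page."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            body = response.read().decode("utf-8", errors="replace")
            return page_is_healthy(response.status, body, expected_text)
    except OSError:
        # URLError (including HTTPError), timeouts, and refused connections
        # are all subclasses of OSError.
        return False


# Example (replace EXTERNAL_IP with the address shown for the Service):
# check_endpoint("http://EXTERNAL_IP/", "using GCP Kubernetes Engine")
```

Running the check with the newly added line as `expected_text` confirms that the rebuilt image, and not the previous one, is the version being served.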

Well done! We have successfully created a CI/CD pipeline using Google Cloud Services.

Don’t Forget

To clean up the resources, you can delete the Kubernetes Cluster, Service, and the CloudBuild Trigger created, the container images in Container Registry, and artifacts stored in Cloud Storage.

References

https://kubernetes.io/docs/tasks/run-application/run-stateless-application-deployment/

https://kubernetes.io/docs/concepts/services-networking/service/

https://maximbetin.medium.com/continuous-integration-continuous-delivery-with-gcp-b5649f428234

https://cloud.google.com/kubernetes-engine/docs/troubleshooting

About Author

Machine Learning Engineer, Solving challenging business problems through Data, Machine Learning, and Cloud.

Connect @ Linkedin
