This article was published as a part of the Data Science Blogathon

What is Linear Regression?

Regression is a statistical term for describing models that estimate the relationships among variables.

A linear regression model studies the relationship between a single dependent variable Y and one or more independent variables X.

If there is only one independent variable, it is called simple linear regression; if there is more than one, it is called multiple linear regression.

Simple linear regression therefore models the relationship between the dependent variable and a single independent variable.

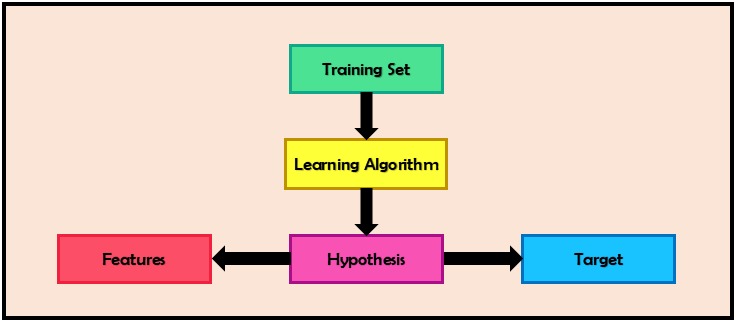

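The basic procedure for predicting values is the same for most supervised machine learning models: learn a hypothesis from the training data, then use it to predict outputs for new inputs.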

Hypothesis

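For simple linear regression, the hypothesis is a straight line:

$$h_\theta(x) = \theta_0 + \theta_1 x$$

Here (θ₀) and (θ₁) are also called regression coefficients.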

Below is the graph showing the sample dataset and hypothesis.

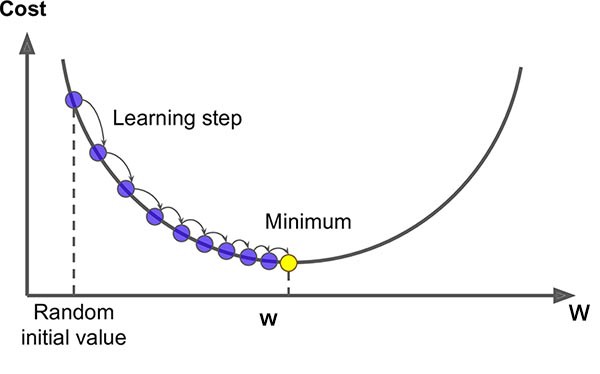
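As a minimal sketch, assuming the usual straight-line form of the hypothesis, h(x) = θ₀ + θ₁x, a prediction can be computed as follows. The function name `predict` and the coefficient values are made up for illustration.

```python
def predict(theta0, theta1, x):
    """Simple linear regression hypothesis: h(x) = theta0 + theta1 * x."""
    return theta0 + theta1 * x


# Hypothetical coefficients: intercept 1.0 and slope 2.0
predictions = [predict(1.0, 2.0, x) for x in [0.0, 1.0, 2.5]]
print(predictions)  # [1.0, 3.0, 6.0]
```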

Gradient Descent

- Pick random values of (θ₀) and (θ₁).

- Keep simultaneously updating the values of (θ₀) and (θ₁) until convergence.

- If the cost function no longer decreases, we have reached a local minimum.

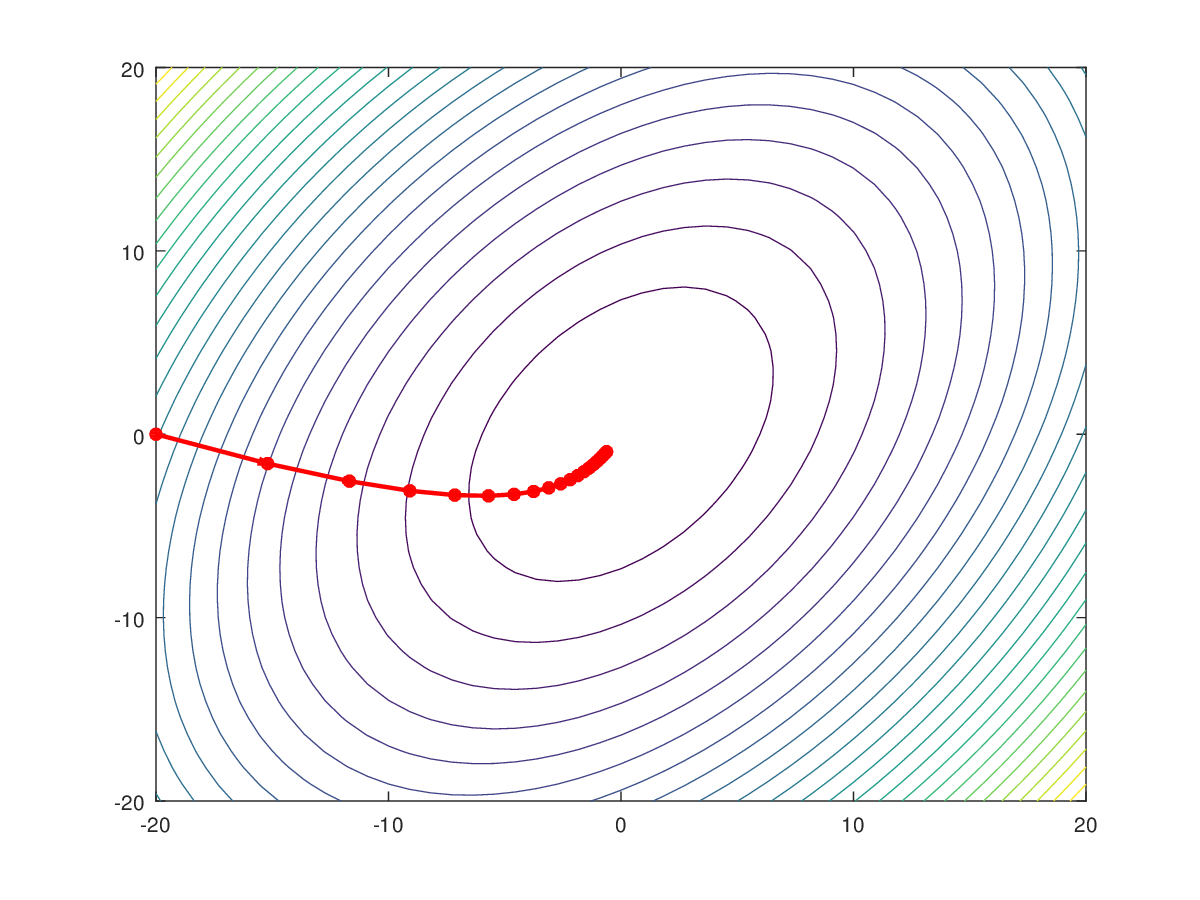
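The steps above can be sketched in plain Python. This is only an illustration under some assumptions: the cost is taken to be the half mean squared error, the starting values are zeros rather than random (so the run is reproducible), and the function name, learning rate, iteration count and toy data are all hypothetical.

```python
def gradient_descent(xs, ys, alpha=0.05, iterations=5000):
    """Fit h(x) = theta0 + theta1 * x by batch gradient descent."""
    m = len(xs)
    theta0, theta1 = 0.0, 0.0  # zeros instead of random values, for reproducibility
    for _ in range(iterations):
        # Prediction error for every training example
        errors = [theta0 + theta1 * x - y for x, y in zip(xs, ys)]
        # Partial derivatives of the half mean squared error cost
        grad0 = sum(errors) / m
        grad1 = sum(e * x for e, x in zip(errors, xs)) / m
        # Simultaneous update: both gradients use the old theta values
        theta0, theta1 = theta0 - alpha * grad0, theta1 - alpha * grad1
    return theta0, theta1


# Toy data generated from y = 1 + 2x
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.0, 5.0, 7.0, 9.0, 11.0]
theta0, theta1 = gradient_descent(xs, ys)
print(round(theta0, 3), round(theta1, 3))
```

A fixed iteration count is used here for simplicity; stopping once the cost changes by less than a small tolerance would be closer to the "until convergence" step.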

Plotting

As we can see here, the rate of change of (θ₀) and (θ₁) depends on the learning rate (α).

- If the value of (α) is too low, the model will converge slowly and take a long time to train.

- If the value of (α) is too high, (θ₀) and (θ₁) may overshoot the optimal value, and hence the accuracy of the model will decrease.

- With a high value of (α), it is also possible that (θ₀) and (θ₁) keep bouncing between two values and never reach the optimal value.

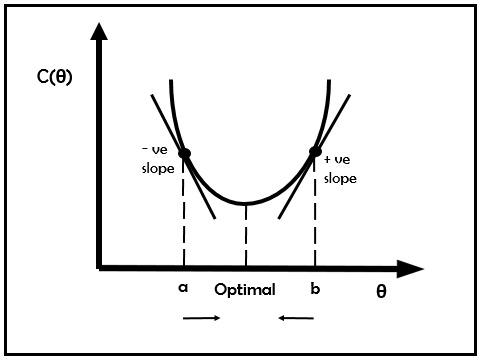

The plot of the cost function (C) versus the number of iterations taken to reach the minimum should look like the graph shown here. In this example, the model takes around 500 iterations to reach the approximate minimum, after which the graph flattens out.

If the plot does not look like this, for example if the cost rises or oscillates, (α) should be reduced.
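To make the effect of (α) concrete, the sketch below records the cost at every iteration for three learning rates on a toy dataset. The cost is assumed to be the half mean squared error, and the helper names, data and (α) values are hypothetical choices for illustration.

```python
def cost(theta0, theta1, xs, ys):
    """Half mean squared error, an assumed form of the cost function."""
    m = len(xs)
    return sum((theta0 + theta1 * x - y) ** 2 for x, y in zip(xs, ys)) / (2 * m)


def cost_history(xs, ys, alpha, iterations=50):
    """Run gradient descent from zeros and record the cost before each update."""
    m = len(xs)
    theta0, theta1 = 0.0, 0.0
    history = []
    for _ in range(iterations):
        history.append(cost(theta0, theta1, xs, ys))
        errors = [theta0 + theta1 * x - y for x, y in zip(xs, ys)]
        grad0 = sum(errors) / m
        grad1 = sum(e * x for e, x in zip(errors, xs)) / m
        theta0, theta1 = theta0 - alpha * grad0, theta1 - alpha * grad1
    return history


# Toy data generated from y = 1 + 2x
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [3.0, 5.0, 7.0, 9.0, 11.0]

histories = {alpha: cost_history(xs, ys, alpha) for alpha in (0.001, 0.05, 0.5)}
for alpha, history in histories.items():
    print(f"alpha={alpha}: first cost {history[0]:.3g}, last cost {history[-1]:.3g}")
```

On this data the smallest rate reduces the cost only slowly, the middle rate gets close to the minimum within the 50 iterations, and the largest rate makes the cost grow instead of shrink. Each `history` list can be passed to a plotting library to draw the cost-versus-iterations curve.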

To improve the model, we can make 2 modifications.

- Learn with a larger number of features (Implement Multilinear Regression).

- Solve for (θ₀) and (θ₁) without using an iterative algorithm like gradient descent.

Multi Linear Regression

In MLR, we will have multiple independent features (x) and a single dependent feature (y). Now, instead of considering a vector of (m) data entries, we need to consider the (n × m) matrix X, where n is the total number of independent features.

So let us extend these observations to gain insights on Linear regression with multiple features or Multi-Linear Regression.

First, our hypothesis now covers n features instead of just 1:

$$h_\theta(x) = \theta_0 + \theta_1 x_1 + \theta_2 x_2 + \dots + \theta_n x_n$$

Before fitting with gradient descent, however, the most important thing in Multiple Linear Regression is to make sure that all the features are on the same scale.

We do not want a model where one feature varies from 1 to 10000 and another ranges from 0.01 to 0.1.

Hence it is important to scale the features before fitting any hypothesis.

Feature Scaling

There are many ways to achieve feature scaling. The method I am going to discuss here is Mean Normalisation.

As the name suggests, after mean normalisation each feature has a mean of approximately 0.

The formula to calculate normalized values is

$$x_1 := \frac{x_1 - \mu_1}{S_1}$$

where (μ₁) is the average value of (x₁) in the training set and (S₁) is the range of its values (maximum minus minimum).

(S₁) can also be chosen as the standard deviation of (x₁). The values will still be normalized, but their range will change.

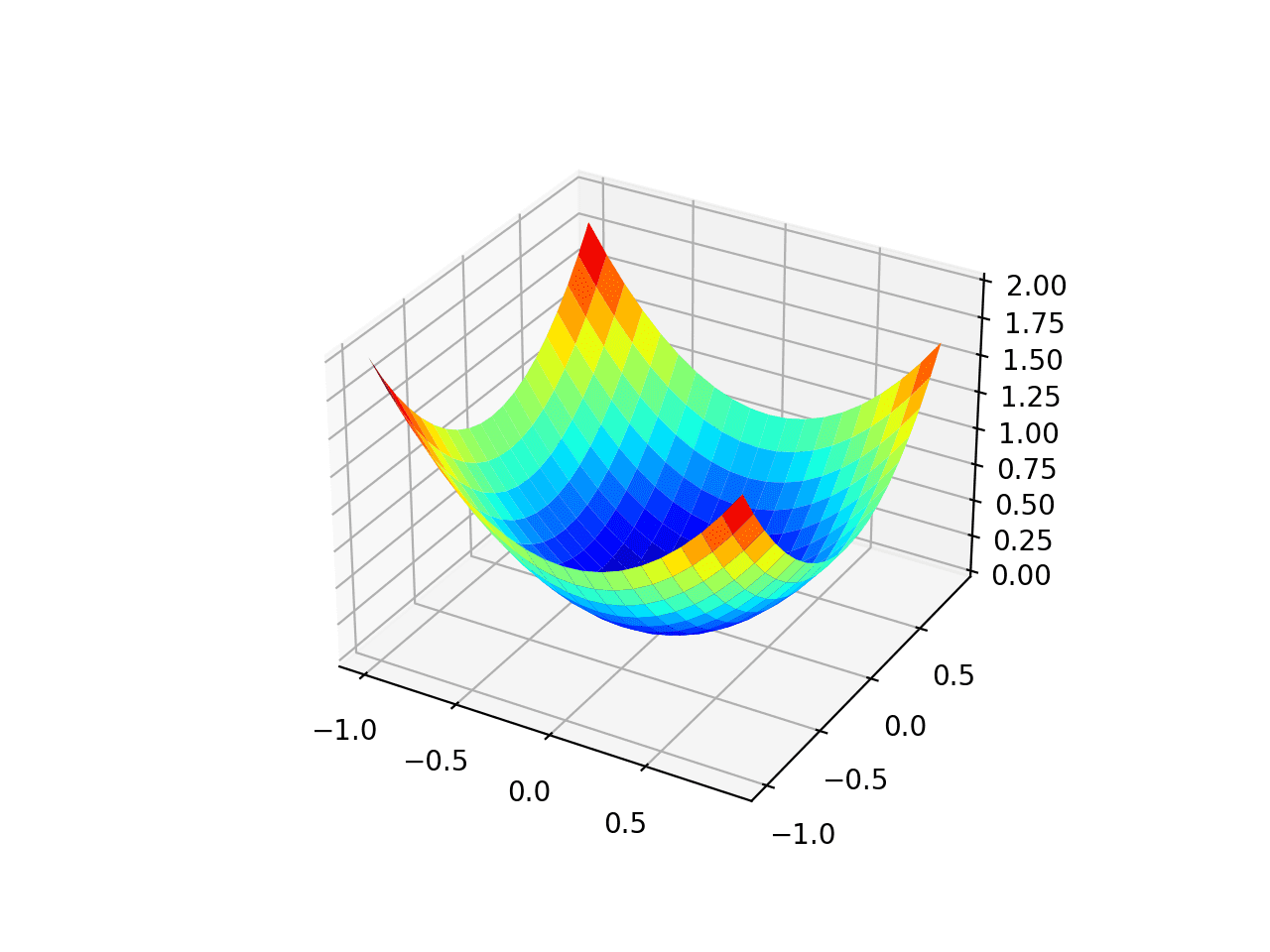
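As a small illustration of mean normalisation, the sketch below subtracts the mean of a feature and divides by either its range or its standard deviation. The function name `mean_normalise`, the `use_std` flag and the feature values are hypothetical.

```python
def mean_normalise(values, use_std=False):
    """Return (value - mean) / S, where S is the range or the standard deviation."""
    m = len(values)
    mu = sum(values) / m
    if use_std:
        # Population standard deviation of the feature
        s = (sum((v - mu) ** 2 for v in values) / m) ** 0.5
    else:
        # Range of the feature: maximum minus minimum
        s = max(values) - min(values)
    # Note: a constant feature has S = 0 and would need special handling
    return [(v - mu) / s for v in values]


# Hypothetical feature, for example house sizes
sizes = [1000.0, 1500.0, 2000.0, 2500.0, 3000.0]
scaled_by_range = mean_normalise(sizes)
scaled_by_std = mean_normalise(sizes, use_std=True)
print(scaled_by_range)  # [-0.5, -0.25, 0.0, 0.25, 0.5]
```

Both variants centre the feature on 0. Dividing by the range keeps the values within an interval of width 1, while dividing by the standard deviation gives a different spread.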

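In MLR, our cost function becomes

$$C(\theta) = \frac{1}{2m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2$$

and the gradient descent simultaneous update changes to

$$\theta_j := \theta_j - \alpha \frac{1}{m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right) x_j^{(i)} \quad \text{for } j = 0, 1, \dots, n$$

where (m) is the number of data entries and $x_0^{(i)} = 1$ for every entry.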
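A vectorised sketch of gradient descent for several features is shown below, assuming NumPy is available and assuming the standard half mean squared error cost. Note that the matrix here is laid out with one row per data entry and one column per feature; the function name, learning rate and toy data are hypothetical.

```python
import numpy as np


def gradient_descent_multi(X, y, alpha=0.1, iterations=2000):
    """Batch gradient descent for linear regression with several features."""
    m = X.shape[0]
    # Prepend a column of ones so that theta[0] acts as the intercept
    Xb = np.column_stack([np.ones(m), X])
    theta = np.zeros(Xb.shape[1])
    for _ in range(iterations):
        errors = Xb @ theta - y          # h(x) - y for every data entry
        gradient = Xb.T @ errors / m     # one partial derivative per theta_j
        theta = theta - alpha * gradient # simultaneous update of all theta_j
    return theta


# Hypothetical, already scaled features; targets follow y = 4 + 3*x1 - 2*x2
X = np.array([[-1.0, 0.5], [-0.5, -1.0], [0.0, 1.0], [0.5, -0.5], [1.0, 0.0]])
y = 4 + 3 * X[:, 0] - 2 * X[:, 1]
theta = gradient_descent_multi(X, y)
print(np.round(theta, 3))
```

Writing the update as a matrix product updates every coefficient from the same set of errors, which is exactly the simultaneous update described in the text.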

Normal Equation

We know that batch gradient descent is an iterative algorithm that uses the entire training set at each iteration.

It works better for larger values of n and is preferred over the normal equation for large datasets.

The Normal Equation, in contrast, uses vectors and matrices to solve directly for the values of (θ) that minimise the cost function.

In our hypothesis, both (θ) and (x) are indexed from 0 to n, so we collect each of them into a vector and the hypothesis becomes

$$h_\theta(x) = \theta^T x$$

The normal equation finds the values of (θ) that minimise the cost function while skipping the steps gradient descent needs, such as choosing a proper learning rate (α), visualising contour or 3D plots, and scaling the features. The optimal values of (θ) can be calculated as

$$\theta = (X^T X)^{-1} X^T y$$
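The closed-form solution can be sketched with NumPy, assuming the standard normal equation θ = (XᵀX)⁻¹Xᵀy. The function name and the unscaled toy data are hypothetical, and the sketch solves the linear system rather than forming the inverse explicitly, which is usually the more numerically stable choice.

```python
import numpy as np


def normal_equation(X, y):
    """Solve (Xb^T Xb) theta = Xb^T y for theta, with an intercept column added."""
    m = X.shape[0]
    Xb = np.column_stack([np.ones(m), X])  # x0 = 1 for every data entry
    return np.linalg.solve(Xb.T @ Xb, Xb.T @ y)


# Hypothetical unscaled features: size and number of rooms
X = np.array([
    [1000.0, 2.0],
    [1500.0, 3.0],
    [2000.0, 3.0],
    [2500.0, 4.0],
    [3000.0, 5.0],
])
# Targets follow y = 50 + 0.1*size + 20*rooms
y = 50 + 0.1 * X[:, 0] + 20 * X[:, 1]

theta = normal_equation(X, y)
print(np.round(theta, 4))
```

No learning rate, iteration count or feature scaling is involved, even though the two features differ in scale by roughly three orders of magnitude.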

Key features of Normal Equation

- No need to choose learning rate (α)

- No iterations

- Feature scaling is not important

- Slow if there are a large number of features (n is large).

- Need to compute the inverse of (XᵀX), which has cubic time complexity, O(n³).

Gradient descent works better for larger values of n and is preferred over the normal equation for large datasets.

We can also extend the concept of multilinear regression to form the basis for polynomial regression.
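One way to see this extension, as a rough sketch: treat the powers of a single input as separate features and fit them with the same linear machinery. The example assumes NumPy and uses its least-squares solver; the quadratic toy data is hypothetical.

```python
import numpy as np

# Toy data generated from y = 1 + 2x + 3x^2
x = np.array([-2.0, -1.0, 0.0, 1.0, 2.0])
y = 1 + 2 * x + 3 * x ** 2

# Treat 1, x and x^2 as three columns of an ordinary linear regression
X_poly = np.column_stack([np.ones_like(x), x, x ** 2])

# Least-squares fit; the model is still linear in the coefficients
theta, *_ = np.linalg.lstsq(X_poly, y, rcond=None)
print(np.round(theta, 4))
```

The fitted curve is non-linear in x, but the hypothesis remains linear in (θ), which is why the multilinear regression tools carry over unchanged.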

Conclusion

In conclusion, for our model to perform accurately using gradient descent, the values of (θ₀), (θ₁), and (α) play a major role. Other techniques exist, such as the normal equation, which is comparatively easier to implement and finds the correct values of (θ₀) and (θ₁) directly, but with a larger number of features gradient descent is the more practical choice.

Hope this article provided some new insights and an idea of how a simple-looking model like linear regression is supported by pure mathematical concepts!

Keep Learning, Keep exploring!