This article was published as a part of the Data Science Blogathon.

Introduction to Artificial Neural Network

Artificial neural networks (ANNs), or simply neural networks (NNs), are powerful machine learning techniques that are very good at processing information, detecting patterns, and approximating complex processes.

Neural networks excel at large and highly complex machine learning tasks, such as powering speech recognition services (e.g., Apple’s Siri), recommending the best videos to watch to millions of users every day (e.g., YouTube), and classifying billions of images (e.g., Google Images).

In 1958, psychologist Frank Rosenblatt introduced the perceptron, one of the earliest artificial neural networks. It was designed to model the ability of the human brain to process visual data and to recognize objects. Before we move forward to see how neural networks function, it helps to understand how they are inspired by the brain.

Biological Inspiration

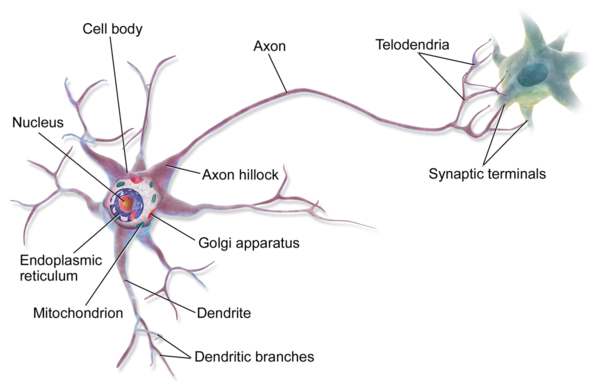

Image Source: Wikipedia/wiki/Neuron

Artificial neural networks are loosely inspired by how animals’ brains work. The brain is a complex network of neurons that pass information to one another and process sensory inputs into thoughts and actions. Each neuron receives its electrical inputs through its dendrites, which are cell fibers that propagate the electrical signal from the synapses (the junctions with preceding neurons) to the soma (the neuron’s main body). If the accumulated electrical stimulation exceeds a specific threshold, the cell is activated and the electrical impulse is propagated further to the next neurons through the cell’s axon (the neuron’s output cable). Each neuron can therefore be seen as a very simple signal processing unit which, once connected with many others, can give rise to our thoughts and actions.

2. Key Components of the Neural Network Architecture

An artificial neural network is made of individual units called neurons. These neurons are designed to loosely mimic the behavior of the neurons in the human brain. The key components of a neuron are as follows.

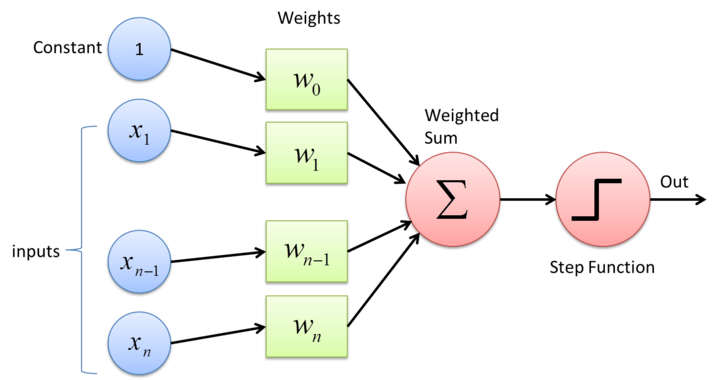

Image Source: https://dylanrae.ca/ML-details.html

1. Input:

Inputs are the features that are fed into the model for learning purposes. In image classification, for example, the input can be an array of the pixel values of an image.

2. Weights:

A weight is a parameter that decides how much influence an input will have on the output. Inputs that are less important are scaled down, and important inputs are scaled up. For example, in a sentiment analysis model, a negative word would have a stronger impact on the decision than a neutral word.

3. Bias:

The role of the bias is to control the activation of a node. Depending on its value, the bias can delay the activation or bring it forward.

4. Transfer Function:

The role of the transfer function is to combine the weighted inputs (and the bias) into a single value, so that the activation function can be applied to it.

5. Activation Function:

An activation function decides whether a neuron should be activated or not. It applies a mathematical operation to the combined input and activates the neuron if that input is important for the prediction.
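To make the five components concrete, here is a minimal sketch that maps each of them to one line of Python. The values, the function names `transfer` and `activation`, and the choice of a simple step as the activation are assumptions made for illustration only.

```python
import numpy as np

def transfer(inputs, weights, bias):
    """Transfer function: combine the weighted inputs and the bias into one value."""
    return np.dot(inputs, weights) + bias

def activation(z):
    """Activation function: a simple step that fires (1) when z is positive."""
    return 1 if z > 0 else 0

# Hypothetical values, chosen only for illustration.
inputs = np.array([1.0, 0.5, -1.0])   # 1. Input: three feature values
weights = np.array([0.2, 0.8, 0.1])   # 2. Weights: how strongly each input counts
bias = -0.3                           # 3. Bias: an offset that delays the activation

z = transfer(inputs, weights, bias)   # 4. Transfer function
output = activation(z)                # 5. Activation function
print(z, output)
```

With these numbers the combined value `z` is approximately 0.2, so the neuron is activated. A more negative bias (for example -1.0) would keep the same neuron silent.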

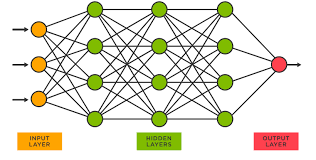

We form a layer of neurons when we have multiple neurons stacked together. Similarly, we can have a multi-layer neural network when we have multiple layers stacked next to each other.

The main components of this type of structure are as follows:

Image Source: https://www.tibco.com/reference-center/what-is-a-neural-network

- Input layer: The input layer is the first layer in a neural network. The task of this layer is to bring the data into the system for processing by the following layers of artificial neurons.

- Hidden layers: The hidden layers come after the input layer. They are designed to non-linearly transform the inputs that enter the network, and each of them can produce an output specific to a required task. For example, different hidden layers may learn to identify human eyes and ears respectively.

- Output layer: The output of the hidden layers is fed into the output layer, which produces the final prediction of the network.
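The three kinds of layers can be sketched as a single forward pass in NumPy. The layer sizes, the random (untrained) weights, and the use of a sigmoid activation are assumptions for illustration; a real network would learn its weights during training.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the sketch is reproducible

def sigmoid(z):
    """Squash any value into the range between 0 and 1."""
    return 1.0 / (1.0 + np.exp(-z))

# Hypothetical layer sizes: 4 input features, 3 hidden neurons, 1 output neuron.
n_inputs, n_hidden, n_outputs = 4, 3, 1

# Each layer is a weight matrix plus a bias vector (random, i.e. untrained).
W_hidden = rng.random((n_inputs, n_hidden))
b_hidden = rng.random(n_hidden)
W_output = rng.random((n_hidden, n_outputs))
b_output = rng.random(n_outputs)

x = rng.random(n_inputs)                             # input layer: the raw features
hidden = sigmoid(x @ W_hidden + b_hidden)            # hidden layer: non-linear transform
prediction = sigmoid(hidden @ W_output + b_output)   # output layer: final result

print(hidden.shape, prediction.shape)
```

Each column of a weight matrix holds the weights of one neuron, so a whole layer is evaluated with one matrix product.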

3. Mathematical Model

Taking inspiration from biological neurons, each artificial neuron takes several inputs (each a number), computes their weighted sum, and finally applies an activation function to obtain the output signal, which is fed to the following neurons in the network.

3.1 Inputs, Weights, and Bias

Once the neuron has received all its inputs, it sums them up in a weighted manner: each input is scaled up or down by the weight associated with it. These weights are adjusted during the training phase of the network so that the neuron reacts to the correct features. Another parameter that is trained and included in the summation is the neuron’s bias. It is added to the weighted sum as an offset, shifting the value passed to the activation function toward the positive or negative side.
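The effect of the bias is easiest to see when everything else stays fixed. In the sketch below, the inputs, the weights, and the three bias values are hypothetical, and a step with a threshold of 0 stands in for the activation function.

```python
import numpy as np

def step(z):
    """Fire (1) when the weighted sum plus bias is positive, else stay silent (0)."""
    return 1 if z > 0 else 0

# Hypothetical inputs and weights, kept fixed while only the bias changes.
x = np.array([0.5, 0.5])
w = np.array([0.6, 0.4])
weighted_sum = np.dot(x, w)   # 0.5*0.6 + 0.5*0.4

for b in (-1.0, 0.0, 1.0):
    z = weighted_sum + b
    print(f"bias={b:+.1f}  z={z:+.2f}  output={step(z)}")
```

With a bias of -1.0 the same weighted sum is pushed below zero and the neuron stays off, while a bias of 0.0 or +1.0 leaves it on.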

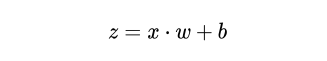

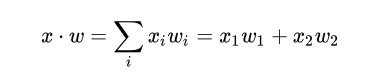

3.2 Mathematical Equation

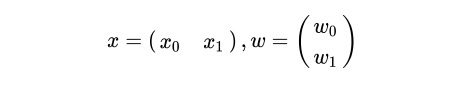

Let us suppose that we have a neuron that takes two input values, x1 and x2. These inputs are weighted by the factors w1 and w2, respectively. The weighted inputs are summed together with an optional bias, b. See the following image for a representation of the input vector, X, and the weight vector, W.

We have expressed the input values as a row (horizontal) vector, X, and the weights as a column (vertical) vector, W.

Image Source: Author

The operation of the weighted sum of inputs and bias can be described by the following equation.

Image Source: Author

The dot product of the two vectors gives the weighted sum.

Image Source: Author

Now that we have scaled the inputs by their weights and summed them together with the bias into the result, Z, we apply the activation function to Z to get the neuron’s output.
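As a worked example of the weighted sum, the sketch below computes Z twice: once term by term and once as a dot product. The numeric values of x1, x2, w1, w2, and b are hypothetical.

```python
import numpy as np

# Hypothetical values for the two inputs, their weights, and the bias.
X = np.array([[2.0, 3.0]])      # row vector of inputs x1, x2 (shape 1x2)
W = np.array([[0.5], [-1.0]])   # column vector of weights w1, w2 (shape 2x1)
b = 1.0

# Weighted sum written out term by term: x1*w1 + x2*w2 + b
Z_manual = X[0, 0] * W[0, 0] + X[0, 1] * W[1, 0] + b

# The same weighted sum as a dot product of the two vectors, plus the bias.
Z = np.dot(X, W) + b            # shape (1, 1)

print(Z_manual, Z)
```

Both forms evaluate to -1 here (2 × 0.5 + 3 × (-1) + 1). The dot product form is the one that scales to many inputs without writing out every term.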

3.3 Why Do We Need an Activation Function?

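A neuron without an activation function essentially acts as a linear model and can only look for linear patterns in the data. Stacking such neurons does not help, because a combination of linear functions is itself linear, so the whole network would collapse into a single linear model. This would make the neural network simpler, but it would not be able to detect complex patterns in the data.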
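This can be checked numerically. The sketch below, using hypothetical layer sizes and random weights, stacks two layers without an activation function and shows that one merged linear layer gives the same result. It then places a non-linearity between the layers (ReLU, a common choice) to show that the shortcut stops working.

```python
import numpy as np

rng = np.random.default_rng(1)

# Two stacked layers WITHOUT an activation function (hypothetical sizes).
W1, b1 = rng.normal(size=(3, 4)), rng.normal(size=4)
W2, b2 = rng.normal(size=(4, 2)), rng.normal(size=2)

x = rng.normal(size=(5, 3))            # a batch of 5 inputs with 3 features

two_layers = (x @ W1 + b1) @ W2 + b2   # layer 1 followed by layer 2

# The same mapping as ONE linear layer with merged weights and bias.
W_merged = W1 @ W2
b_merged = b1 @ W2 + b2
one_layer = x @ W_merged + b_merged

print(np.allclose(two_layers, one_layer))

# With a non-linearity between the layers, the merged layer no longer matches.
with_relu = np.maximum(0, x @ W1 + b1) @ W2 + b2
print(np.allclose(with_relu, one_layer))
```

The first check should print True, since the two linear layers are algebraically identical to the merged one. The second is expected to print False, because the non-linearity changes the mapping.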

3.4 Most Commonly Used Activation Functions

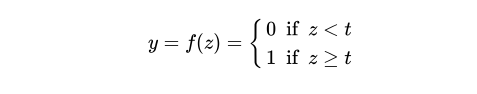

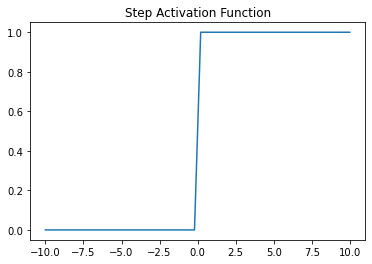

1. Step Function: The step function is one of the simplest activation functions. We select a threshold value, and if the weighted sum of the inputs, Z, is greater than the threshold, the neuron is activated.

The mathematical equation:

Image Source: Author

The plot of Step Function:

Image Source: Author

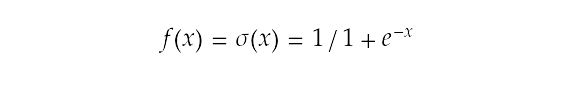

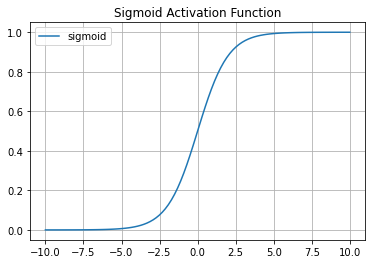

2. Sigmoid Function: The sigmoid activation function is a widely used activation function in neural networks. The curve of the sigmoid function is S-shaped.

The sigmoid function maps any input to a value between 0 and 1.

The mathematical equation:

Image Source: Author

The plot of the sigmoid function:

Image Source: Author

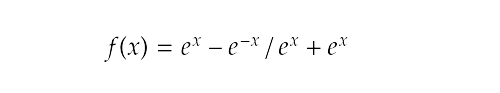

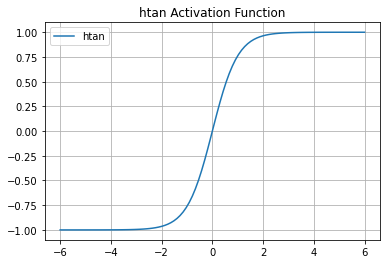

3. Tanh: The hyperbolic tangent (tanh) activation function is similar to the sigmoid function, i.e., its curve is also S-shaped, but its output ranges from -1 to 1.

The mathematical equation:

Image Source: Author

The plot of the tanh function:

Image Source: Author

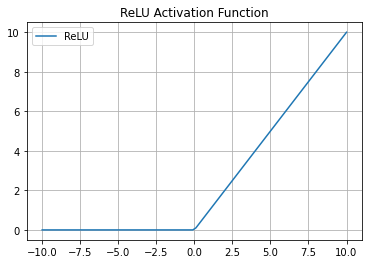

4. ReLU: Piecewise function that, if the input is z, the output will be z if z is positive, otherwise, it outputs zero.

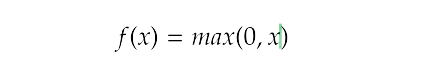

The Mathematical equation

Image Source: Author

The plot of ReLU function:

Image Source: Author
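The output ranges described above can be verified with a few lines of NumPy. This is a minimal sketch: the sample values of z and the default threshold of 0 for the step function are assumptions, and `np.tanh` is NumPy's built-in hyperbolic tangent.

```python
import numpy as np

def step(z, threshold=0.0):
    """1 where z exceeds the threshold, 0 elsewhere."""
    return np.where(z > threshold, 1.0, 0.0)

def sigmoid(z):
    """S-shaped curve with outputs between 0 and 1."""
    return 1.0 / (1.0 + np.exp(-z))

def relu(z):
    """z for positive inputs, 0 otherwise."""
    return np.maximum(0.0, z)

z = np.linspace(-5.0, 5.0, 11)   # sample weighted sums from -5 to 5

for name, fn in [("step", step), ("sigmoid", sigmoid), ("tanh", np.tanh), ("relu", relu)]:
    out = fn(z)
    print(f"{name:8s} min={out.min():.3f} max={out.max():.3f}")
```

The printed minimum and maximum stay within [0, 1] for the step and sigmoid functions and within [-1, 1] for tanh. ReLU is bounded below by 0 but grows with its input.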

4. Implementation of a Neuron in Python

We will implement a single neuron from scratch using NumPy, which handles the vector and matrix operations.

    import numpy as np  # NumPy provides the dot product and element-wise math

    np.random.seed(42)  # Fix the seed so the random numbers are reproducible

    class neuron(object):
        def __init__(self, num_inputs, activation):
            super().__init__()
            # Initialize the weight vector (one weight per input) and the bias
            self.W = np.random.rand(num_inputs)
            self.b = np.random.rand(1)
            # The activation function
            self.activation_function = activation

        def output(self, x):
            # Weighted sum of the inputs plus the bias, then the activation
            z = np.dot(x, self.W) + self.b
            return self.activation_function(z)

    # Input size of the neuron
    input_size = 4

    # Step function (threshold at 0), applied element-wise
    def step(x):
        return np.where(x > 0, 1, 0)

    # Sigmoid function
    def sigmoid(x):
        return 1 / (1 + np.exp(-x))

    # Tanh function
    def tanh(x):
        return (np.exp(x) - np.exp(-x)) / (np.exp(x) + np.exp(-x))

    # ReLU function, applied element-wise
    def relu(x):
        return np.maximum(0, x)

    # Instantiate the perceptron. The step function is used here; to try
    # another activation, comment out this line and uncomment one below.
    perceptron = neuron(num_inputs=input_size, activation=step)
    # perceptron = neuron(num_inputs=input_size, activation=sigmoid)
    # perceptron = neuron(num_inputs=input_size, activation=tanh)
    # perceptron = neuron(num_inputs=input_size, activation=relu)

    print("Neuron's random weights = {} , and random bias = {}".format(perceptron.W, perceptron.b))
    # Output: Neuron's random weights = [0.37454012 0.95071431 0.73199394 0.59865848] , and random bias = [0.15601864]

    # Prepare the input to be fed to the neuron
    x = np.random.rand(input_size).reshape(1, input_size)
    print("Input to the Neuron : {}".format(x))

    # Print the output of the neuron
    y = perceptron.output(x)
    print("Neuron's output value for given `x` : {}".format(y))

Conclusion

In this article, I have tried to explain artificial neural networks. We have seen how neural networks are inspired by the human brain, and we have learned about the components of a neuron, the layers of a multi-layer neural network, and the activation functions commonly used in neural networks.