Overview of MLOps With Open Source Tools

This article was published as a part of the Data Science Blogathon.

Overview

The core of a data science project is data and its use in building predictive models. Everyone is excited about, and focused on, building an ML model that gives a near-perfect result mimicking the real-world business scenario. In trying to achieve this outcome, one tends to ignore the other aspects of a data science project, especially the operational ones. Because ML projects are iterative in nature, tracking all the factors, configurations, and results can become a daunting task in itself.

As data science teams grow and become more distributed, effective collaboration between teams becomes critical. The open-source tools that we will explore in this blog, namely DVC Studio and MLflow, help address some of these challenges by automatically tracking the changes and results from every iteration. In addition, both tools provide an interactive UI where the results are neatly showcased, and the UI is customizable.

Any Prerequisites?

All we need is a basic understanding of machine learning and Python, and an account with a version control service such as GitHub. In this blog, Kaggle’s South Africa Heart Disease dataset will be used for running our experiments. Our target variable will be CHD (coronary heart disease).

Note: For the sake of simplicity, we will use the same dataset and build the same model for both the DVC Studio and MLflow use cases. Our aim is to understand the features of both tools, not to fine-tune the model.

DVC Studio

DVC is an open-source tool/library that can be plugged into version control platforms such as GitHub, GitLab, and Bitbucket to import ML projects for experimenting and tracking. DVC Studio provides a UI to track the experiments and metrics. To know more about DVC, explore its features.

Installation & setup: Running pip install dvc should be sufficient for installation. For more information on installing the Windows version, please refer to the DVC installation guide. You can download/clone the code from GitHub for quick reference.

We will load the dataset, split it, and then carry out the model building step. All the code/files can be found under the src folder; the code walkthrough for this section is skipped to keep the blog relatively short. You can access the complete code and walkthrough from the blog.
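For DVC to track results, the model building step needs to leave its metrics in a file. The snippet below is only a minimal sketch of that idea using the standard library, not the code from the repository: the helper name write_metrics, the file name scores.json, and the temporary output folder are assumptions made for illustration, and the metric values are copied from the metrics table shown later in this blog.

```python
import json
import tempfile
from pathlib import Path


def write_metrics(metrics: dict, path: Path) -> Path:
    """Write a flat dict of metric name -> value to a JSON file."""
    path.parent.mkdir(parents=True, exist_ok=True)
    with path.open("w", encoding="utf-8") as fh:
        json.dump(metrics, fh, indent=2, sort_keys=True)
    return path


# Illustrative values only; a real run would compute these from the model.
example_metrics = {
    "roc_auc": 0.72764,
    "test_score": 65.51724,
    "train_score": 74.27746,
}

# A temporary directory stands in for the project's reports folder (assumption).
out_dir = Path(tempfile.mkdtemp())
metrics_file = write_metrics(example_metrics, out_dir / "scores.json")

# Read the file back to confirm it round-trips.
loaded = json.loads(metrics_file.read_text(encoding="utf-8"))
print(loaded)
```

Keeping the metrics in a small, flat JSON file makes it easy for a tracking tool to compare one iteration against the next.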

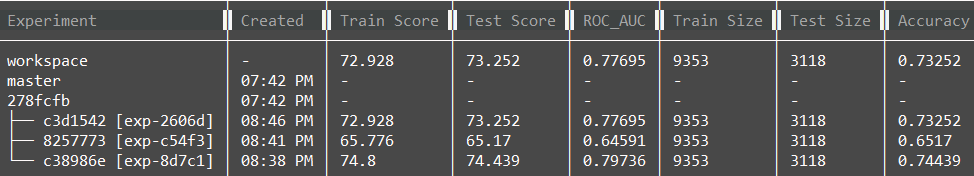

Before we move further, let’s take a glimpse of how the experiments and KPIs are tracked in DVC.

As you would have noticed above, the output is in the console and is definitely not interactive. What about the plots that are so crucial for ML models? Is there a way to filter results on a defined threshold, for example, to view only the experiments with an accuracy higher than 0.7? The only way is to write a piece of code to filter that specific data. That’s where DVC Studio makes things simple and interactive. We will look into the UI in the next sections.

DVC Studio: Set up DVC Studio by following the steps below.

Step 1: Navigate to the URL https://studio.iterative.ai and sign in with your GitHub account. You will be able to see Add a view at the top right of the screen.
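To make the point about hand-written filtering concrete, here is a minimal sketch of what such a piece of code could look like. The experiment names, the record layout, and the accuracy values are hypothetical and exist only for illustration; they are not results from this project.

```python
from typing import Dict, List


def filter_by_metric(
    experiments: List[Dict], metric: str, threshold: float
) -> List[Dict]:
    """Return the experiments whose given metric is strictly above the threshold."""
    selected = []
    for exp in experiments:
        value = exp.get("metrics", {}).get(metric)
        # Skip experiments that did not record this metric at all.
        if value is not None and value > threshold:
            selected.append(exp)
    return selected


# Hypothetical experiment records (names and values are made up).
experiments = [
    {"name": "exp-a", "metrics": {"accuracy": 0.62}},
    {"name": "exp-b", "metrics": {"accuracy": 0.74}},
    {"name": "exp-c", "metrics": {"accuracy": 0.71}},
    {"name": "exp-d", "metrics": {}},
]

above = filter_by_metric(experiments, "accuracy", 0.7)
selected_names = [exp["name"] for exp in above]
print(selected_names)
```

Every new question (a different metric, a different threshold, a sort order) means editing and rerunning code like this, which is exactly the overhead an interactive UI removes.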

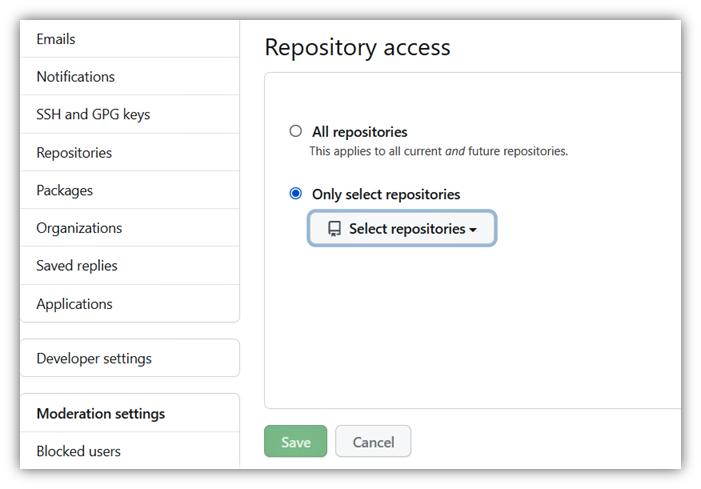

Step 2: Map the GitHub repository to DVC Studio by clicking on Configure Git Integration Settings.

Step 3: Once step 2 is completed, it will open the Git Integrations section. Select the repository and provide access.

Step 4: Once mapped, the repo will be available for the creation of a view as below.

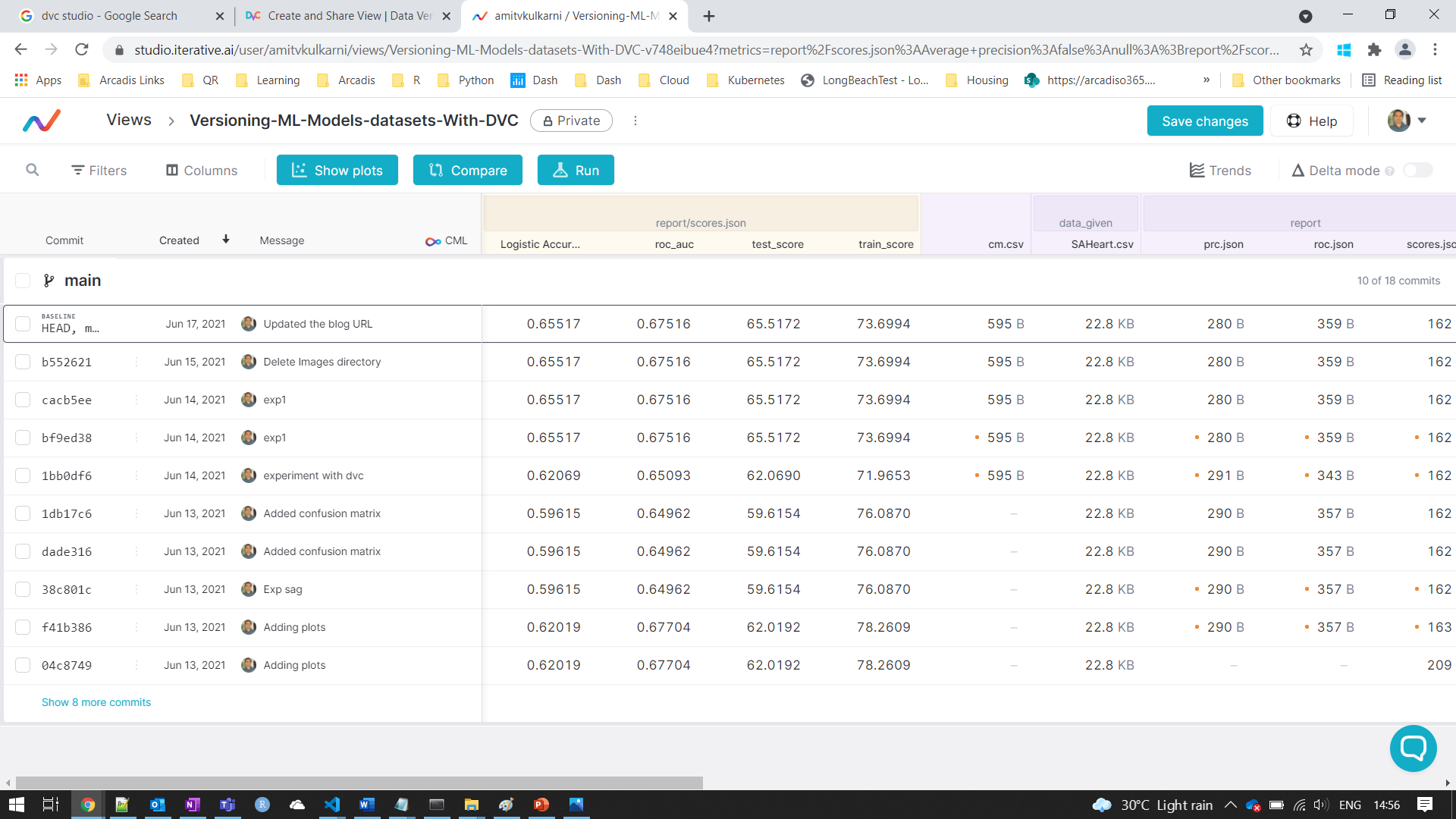

Step 5: Once the above steps are completed, click on the repo and open the tracker.

DVC Studio experiment tracker UI

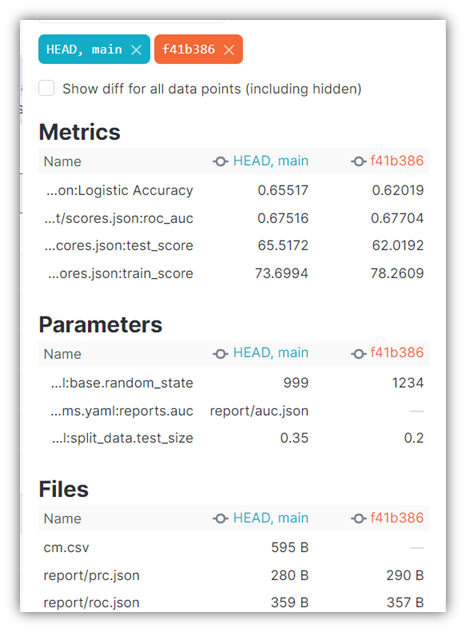

Model Comparison: Select the models of your choice and click on Compare to view the results.

Model Comparison

Run the experiments: There are two ways to run the experiments.

1. Make all the changes and check the code into the GitHub repository. DVC Studio automatically pulls the metrics into the studio for tracking.

2. The other way is to make changes in the DVC Studio UI, run the experiments, and then push them to GitHub.

MLflow

MLflow is an open-source tool for tracking ML experiments. Similar to DVC Studio, it helps with collaboration and with carrying out a varied range of experiments and analyses. To know more about MLflow, explore its features.

Setting Up Work Environment:

You can download/clone the code repository from GitHub. Install mlflow and the other libraries needed for model building, and set up the config file to improve code readability. The files can be found under the MLflow folder.

Monitoring Model Metrics: The previous and current metrics are tracked and listed as in the snapshot below. As the number of iterations increases, keeping track of the changes becomes challenging; rather than focusing on improving model performance, we end up spending a lot of time tracking the changes and the resulting metrics.

| Path | Metric | Old | New | Change |
|---|---|---|---|---|
| reportscores.json | Logistic Accuracy | 0.62069 | 0.65517 | 0.03448 |
| reportscores.json | roc_auc | 0.65093 | 0.72764 | 0.07671 |
| reportscores.json | test_score | 62.06897 | 65.51724 | 3.44828 |
| reportscores.json | train_score | 71.96532 | 74.27746 | 2.31214 |
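The Change column in the snapshot is simply the new value minus the old one. The sketch below reproduces that arithmetic by hand for two of the rows, using the values from the snapshot; the function name metrics_diff and the dictionary layout are assumptions for illustration and are not part of either tool.

```python
from typing import Dict, List, Tuple


def metrics_diff(
    old: Dict[str, float], new: Dict[str, float], ndigits: int = 5
) -> List[Tuple[str, float, float, float]]:
    """Return (metric, old, new, change) rows for metrics present in both runs."""
    rows = []
    for name in sorted(old.keys() & new.keys()):
        change = round(new[name] - old[name], ndigits)
        rows.append((name, old[name], new[name], change))
    return rows


# Values copied from the snapshot above.
old_run = {"Logistic Accuracy": 0.62069, "roc_auc": 0.65093}
new_run = {"Logistic Accuracy": 0.65517, "roc_auc": 0.72764}

diff_rows = metrics_diff(old_run, new_run)
for metric, old_value, new_value, change in diff_rows:
    print(f"{metric:<20} {old_value:>10.5f} {new_value:>10.5f} {change:>10.5f}")
```

Doing this for two runs is easy; doing it across dozens of iterations is where a dedicated tracker starts to pay off.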

MLflow provides a UI that lets us track everything in one place. Once we have run our experiment (classification.py), the metrics are tracked and can be displayed in the UI with the command below.

    mlflow ui
    ## Here is the output
    INFO:waitress:Serving on http://127.0.0.1:5000

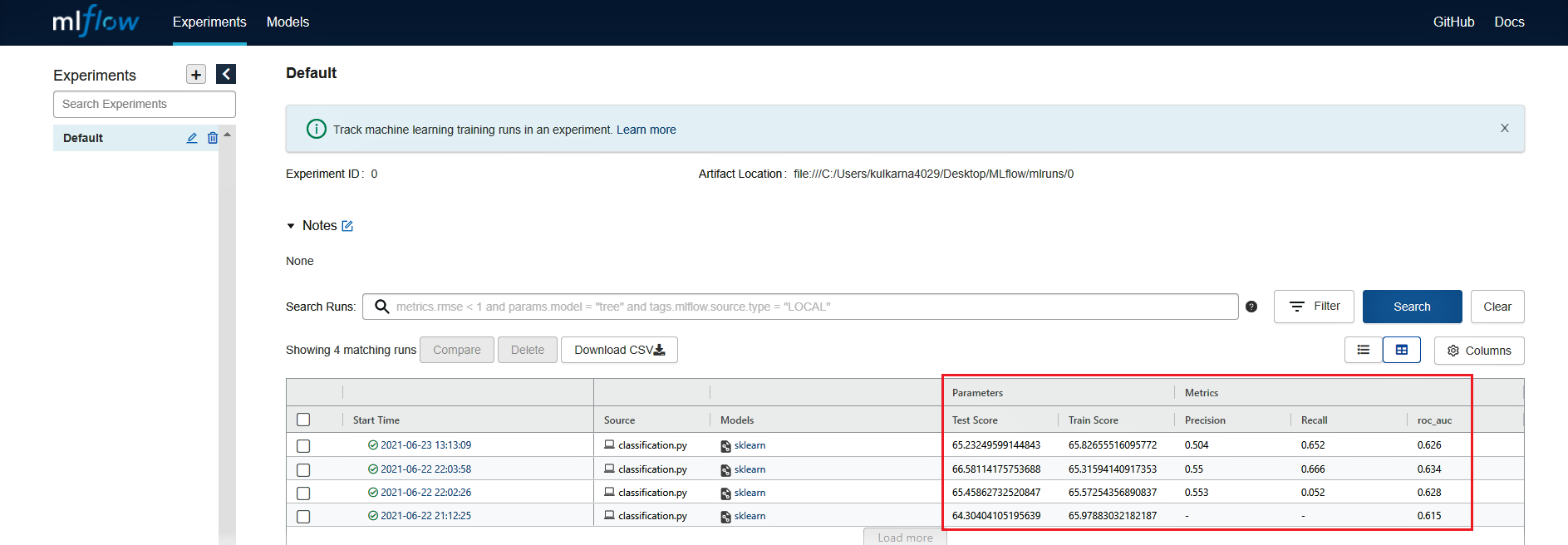

The URL points to localhost; opening it in a browser lets us view the results in the UI. The UI is user-friendly, and one can easily navigate and explore the relevant metrics. The section highlighted in the red box below shows the tracking of the metrics that are of interest to us.

MLflow experiment tracker UI
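For the metrics to appear in the UI, the experiment script has to log them. The following is a minimal sketch of how that logging could look with MLflow's tracking API; it is not the code from classification.py. The experiment name, the parameter, the toy labels, and the helper names accuracy and log_run are all assumptions made for illustration.

```python
from typing import Dict, Sequence


def accuracy(y_true: Sequence[int], y_pred: Sequence[int]) -> float:
    """Fraction of predictions that match the true labels."""
    if len(y_true) != len(y_pred) or len(y_true) == 0:
        raise ValueError("y_true and y_pred must be non-empty and equal in length")
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def log_run(
    params: Dict[str, str],
    metrics: Dict[str, float],
    experiment_name: str = "chd-classification",  # hypothetical name
) -> None:
    """Record one run's parameters and metrics with MLflow."""
    # Imported here so the helper above can be used without MLflow installed.
    import mlflow

    mlflow.set_experiment(experiment_name)
    with mlflow.start_run():
        mlflow.log_params(params)
        mlflow.log_metrics(metrics)


# Toy labels standing in for real model predictions (made up for illustration).
y_true = [1, 0, 1, 1, 0]
y_pred = [1, 0, 0, 1, 0]

params = {"model": "LogisticRegression"}  # illustrative parameter
metrics = {"accuracy": accuracy(y_true, y_pred)}

# Uncomment in an environment where MLflow is installed:
# log_run(params, metrics)
```

After a call to log_run, running mlflow ui from the same working directory should list the run along with its logged parameters and metrics.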

Closing Note

In this blog, we looked at an overview of MLOps and implemented it with two open-source tools, DVC Studio and MLflow. These MLOps tools make tracking changes and model performance hassle-free, so that we can focus more on domain-specific tuning and on improving the model.

MLOps will continue to evolve, with more features being added to the tools, making it that much easier for data science teams to manage the operational side of machine learning projects.

If you liked this blog, here are more articles on MLOps. Keep experimenting!

Tracking ML experiments with DVC