Ever wonder how chatbots understand your questions or how apps like Siri and voice search can decipher your spoken requests? The secret weapon behind these impressive feats is a type of artificial intelligence called Recurrent Neural Networks (RNNs).

Unlike standard neural networks that excel at tasks like image recognition, RNNs boast a unique superpower – memory! This internal memory allows them to analyze sequential data, where the order of information is crucial. Imagine having a conversation – you need to remember what was said earlier to understand the current flow. Similarly, RNNs can analyze sequences like speech or text, making them well suited to tasks like machine translation and voice recognition. Although RNNs have been around since the 1980s, advances such as Long Short-Term Memory (LSTM) and the explosion of big data have unleashed their true potential.

This article was published as a part of the Data Science Blogathon.

Recurrent neural networks loosely imitate the way the human brain handles sequences. Across data science, artificial intelligence, machine learning, and deep learning, they allow computer programs to recognize patterns and solve common problems.

RNNs are a type of neural network used to model sequence data. They are derived from feedforward networks and, like a human brain, carry context forward from one step to the next. Simply put, recurrent neural networks can predict sequential data in a way that many other algorithms can’t.

In a standard neural network, all inputs and outputs are independent of one another. In some cases, however, such as predicting the next word of a sentence, the previous words are needed, so they must be remembered. RNNs were created to solve this problem with the help of a hidden layer. The most important component of an RNN is the hidden state, which remembers specific information about a sequence.

RNNs have a memory that stores information about what has been computed so far. They use the same parameters for every input, because they perform the same task on each input or hidden state to produce the output.
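The idea of a hidden state updated with the same parameters at every step can be sketched in a few lines. This is a minimal illustration, assuming a single hidden unit with a tanh activation; the function name `rnn_forward` and the parameter values are hypothetical and not taken from any library.

```python
import math

def rnn_forward(inputs, w_x, w_h, b, h0=0.0):
    """Run a single-unit RNN over a sequence and return every hidden state."""
    h = h0
    states = []
    for x in inputs:
        # The same three parameters (w_x, w_h, b) are reused at every step;
        # only the hidden state h changes as the sequence is read.
        h = math.tanh(w_x * x + w_h * h + b)
        states.append(h)
    return states

# Hypothetical parameter values, chosen only for illustration.
states = rnn_forward([1.0, 0.0, 0.0], w_x=0.8, w_h=0.5, b=0.0)
print(states)
```

Only the first input is non-zero, yet the later hidden states are non-zero too: the first input is still "remembered" through the hidden state, fading a little at each step.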


Recurrent neural networks (RNNs) set themselves apart from other neural networks with their unique capabilities:


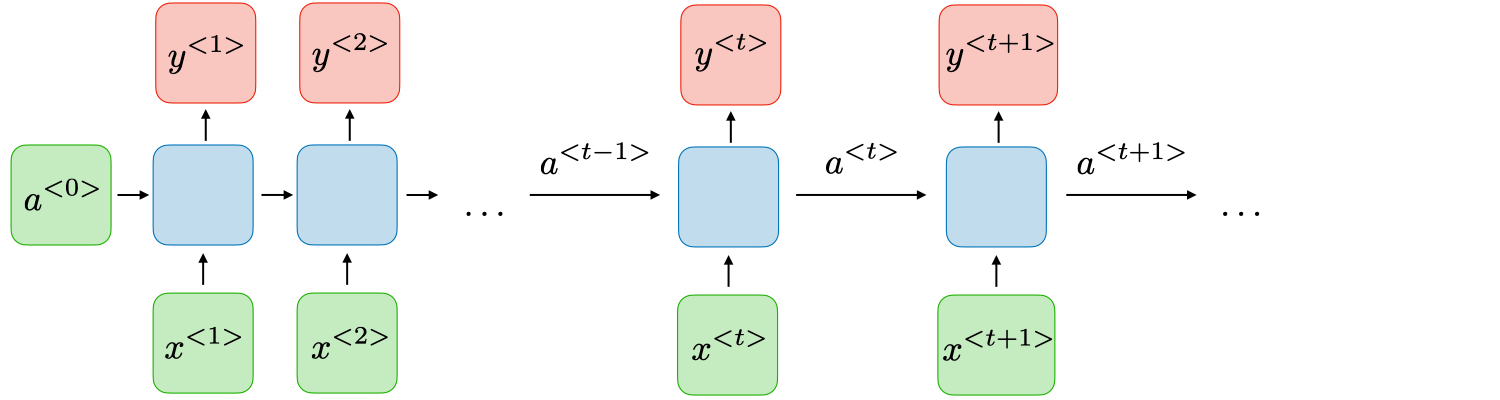

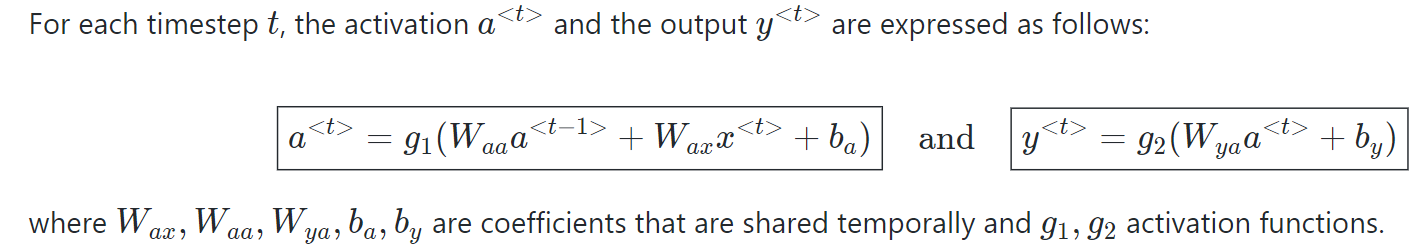

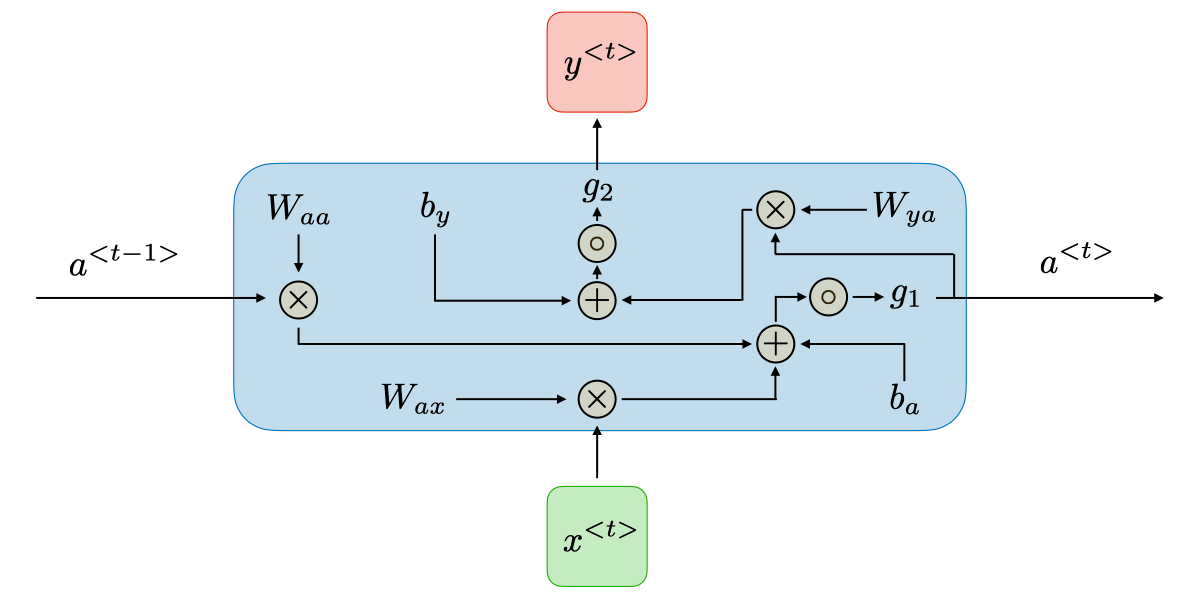

RNNs are a type of neural network that has hidden states and allows past outputs to be used as inputs. They usually go like this:

Here’s a breakdown of its key components:


RNN architecture can vary depending on the problem you’re trying to solve, from networks with a single input and output to those with many inputs and outputs, with variations in between.

Below are some examples of RNN architectures that can help you better understand this.

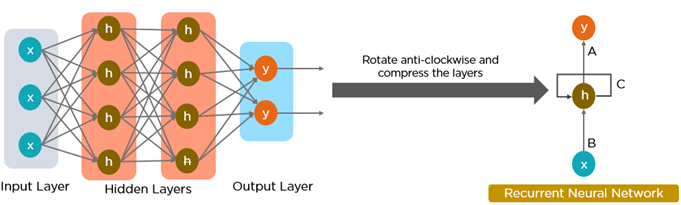
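To make the difference between these input/output arrangements concrete, here is a small sketch built on one shared recurrent step. The labels "many-to-one", "many-to-many", and "one-to-many" are commonly used names for such layouts; the function names and weight values below are assumptions made for illustration only.

```python
import math

def step(x, h, w_x=0.8, w_h=0.5, b=0.0):
    """One recurrent update for a single hidden unit (illustrative weights)."""
    return math.tanh(w_x * x + w_h * h + b)

def many_to_one(inputs, w_y=1.0):
    """Read the whole sequence, emit one output from the final hidden state."""
    h = 0.0
    for x in inputs:
        h = step(x, h)
    return w_y * h

def many_to_many(inputs, w_y=1.0):
    """Emit one output per input step."""
    h = 0.0
    outputs = []
    for x in inputs:
        h = step(x, h)
        outputs.append(w_y * h)
    return outputs

def one_to_many(x, n_steps, w_y=1.0):
    """Feed a single input once, then let the hidden state drive later steps."""
    h = step(x, 0.0)
    outputs = [w_y * h]
    for _ in range(n_steps - 1):
        h = step(0.0, h)
        outputs.append(w_y * h)
    return outputs

sequence = [0.2, -0.1, 0.7]
print(many_to_one(sequence))    # a single number
print(many_to_many(sequence))   # three numbers, one per step
print(one_to_many(0.5, 4))      # four numbers from one input
```

The recurrent step is identical in all three cases; what changes is only where inputs are fed in and where outputs are read out.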

The information in recurrent neural networks cycles through a loop to the middle hidden layer.

The input layer x receives and processes the neural network’s input before passing it on to the middle layer.

Multiple hidden layers can be found in the middle layer h, each with its own activation functions, weights, and biases. In an ordinary feed-forward network, the parameters of the different hidden layers are not affected by the preceding layer, i.e. the network has no memory. A recurrent neural network is what you use when that memory is needed.

The recurrent neural network standardizes the activation functions, weights, and biases so that each hidden layer has the same parameters. Rather than constructing numerous hidden layers, it creates only one and loops over it as many times as necessary.
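Written as equations, this single looped layer is often expressed in the following form. The symbols are assumptions introduced here for illustration: $x_t$ is the input at time step $t$, $h_t$ the hidden state, $y_t$ the output, and $W_{xh}$, $W_{hh}$, $W_{hy}$, $b_h$, $b_y$ the weights and biases shared across all time steps. The choice of $\tanh$ is one common option, not the only one.

$$
h_t = \tanh\left(W_{xh}\, x_t + W_{hh}\, h_{t-1} + b_h\right), \qquad y_t = W_{hy}\, h_t + b_y
$$

Because the same $W_{xh}$, $W_{hh}$, and $W_{hy}$ appear at every $t$, the number of parameters does not grow with the length of the sequence.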

A neuron’s activation function dictates whether it should be turned on or off. Nonlinear functions usually transform a neuron’s output to a number between 0 and 1 or -1 and 1.

The following are some of the most commonly utilized functions:
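As a rough illustration, three widely used activations are sigmoid, tanh, and ReLU. Sigmoid maps its input into the range 0 to 1 and tanh into the range -1 to 1; ReLU is included as a common alternative that is not bounded above. The sketch below uses only Python's standard `math` module.

```python
import math

def sigmoid(z):
    """Squash z into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def tanh(z):
    """Squash z into the open interval (-1, 1)."""
    return math.tanh(z)

def relu(z):
    """Pass positive values through unchanged and clamp negatives to zero."""
    return max(0.0, z)

for z in (-2.0, 0.0, 2.0):
    print(z, sigmoid(z), tanh(z), relu(z))
```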

Advantages of RNNs:

Disadvantages of RNNs:

The information flow between an RNN and a feed-forward neural network is depicted in the two figures below.

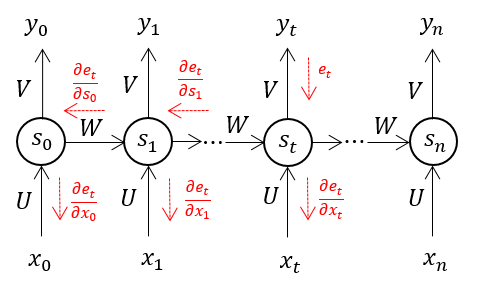

When we apply a Backpropagation algorithm to a Recurrent Neural Network with time series data as its input, we call it backpropagation through time.

In a standard RNN, one input enters the network at each time step and one output is produced. Backpropagation through time, on the other hand, works on the whole unrolled sequence: the error at a given time step depends on both the current input and the inputs from earlier time steps, so the gradient has to flow backward through all of them.

Once the network has processed a set of time steps and produced an output, that output is used to calculate and accumulate the errors. The network is then rolled back up, and the weights are recalculated and adjusted to account for the errors.
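The procedure can be sketched for the smallest possible case: a single-unit RNN whose hidden state is also its output, trained with a squared-error loss. All names (`forward`, `loss`, `bptt`), the data, and the learning rate are hypothetical choices for illustration; real frameworks compute these gradients automatically.

```python
import math

def forward(xs, w_x, w_h):
    """Unroll a single-unit RNN; hs[0] is the initial state, hs[t] follows xs[t-1]."""
    hs = [0.0]
    for x in xs:
        hs.append(math.tanh(w_x * x + w_h * hs[-1]))
    return hs

def loss(xs, ys, w_x, w_h):
    """Half the sum of squared errors between hidden states and targets."""
    hs = forward(xs, w_x, w_h)
    return 0.5 * sum((h - y) ** 2 for h, y in zip(hs[1:], ys))

def bptt(xs, ys, w_x, w_h):
    """Return (dL/dw_x, dL/dw_h) by walking the unrolled steps in reverse."""
    hs = forward(xs, w_x, w_h)
    grad_w_x = grad_w_h = 0.0
    dh_next = 0.0  # gradient arriving from later time steps
    for t in range(len(xs), 0, -1):
        dh = (hs[t] - ys[t - 1]) + dh_next  # error at step t plus future error
        dz = dh * (1.0 - hs[t] ** 2)        # back through tanh
        grad_w_x += dz * xs[t - 1]          # shared weights: gradients accumulate
        grad_w_h += dz * hs[t - 1]
        dh_next = dz * w_h                  # pass the error one step back in time
    return grad_w_x, grad_w_h

xs, ys = [0.5, -0.3, 0.8], [0.2, 0.0, 0.4]
w_x, w_h = 0.7, 0.4
gx, gh = bptt(xs, ys, w_x, w_h)

# One gradient-descent update with a hypothetical learning rate of 0.1.
w_x_new, w_h_new = w_x - 0.1 * gx, w_h - 0.1 * gh
print(loss(xs, ys, w_x, w_h), "->", loss(xs, ys, w_x_new, w_h_new))
```

Because the weights are shared across time steps, each step adds its contribution to the same two gradients rather than to separate per-layer gradients.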

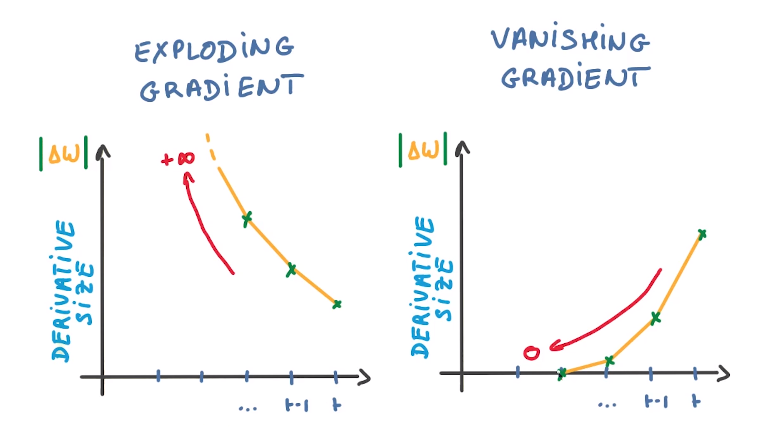

There are two key challenges that RNNs have had to overcome, but in order to comprehend them, one must first grasp what a gradient is.

A gradient is a partial derivative with respect to a function’s inputs. If you’re not sure what that implies, consider this: a gradient quantifies how much the output of a function changes when its inputs are changed slightly.

A gradient can also be thought of as the slope of a function. The higher the gradient, the steeper the slope and the faster a model can learn. If the slope is zero, on the other hand, the model stops learning. In training, the gradient measures how the error changes in response to a change in each of the weights.
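The "small change in input, resulting change in output" reading of a gradient can be checked numerically. The sketch below estimates the slope of a made-up error curve with a central difference; both `numerical_gradient` and `error` are hypothetical helpers written for this illustration.

```python
def numerical_gradient(f, w, eps=1e-6):
    """Central-difference estimate of df/dw at the point w."""
    return (f(w + eps) - f(w - eps)) / (2 * eps)

def error(w):
    """Hypothetical error curve with its minimum at w = 3."""
    return (w - 3.0) ** 2

print(numerical_gradient(error, 0.0))  # steep slope far from the minimum
print(numerical_gradient(error, 2.9))  # gentle slope close to it
print(numerical_gradient(error, 3.0))  # approximately zero: nothing left to learn
```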

To overcome issues like vanishing and exploding gradients, which hinder learning on long sequences, researchers have introduced new, more advanced RNN architectures.
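Why long sequences cause trouble can be seen in a deliberately stripped-down case. Assume a linear recurrence `h_t = w * h_{t-1}` with no activation function (a simplification, not a full RNN): by the chain rule, the gradient of the last state with respect to the first is `w` multiplied by itself once per time step. The helper name below is hypothetical.

```python
def gradient_through_time(recurrent_weight, n_steps):
    """d h_T / d h_0 for the linear recurrence h_t = recurrent_weight * h_{t-1}."""
    grad = 1.0
    for _ in range(n_steps):
        grad *= recurrent_weight  # chain rule: one factor per time step
    return grad

for w in (0.5, 1.0, 1.5):
    print(w, gradient_through_time(w, 50))
```

With a weight below 1 the gradient shrinks toward zero over 50 steps (vanishing), and with a weight above 1 it grows very large (exploding); only a weight of exactly 1 keeps it stable in this toy setting.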

Recurrent neural networks (RNNs) shine in tasks involving sequential data, where order and context are crucial. Let’s explore some real-world use cases. Using RNN models and sequence datasets, you can tackle a variety of problems, including:

Import the required libraries:

```python
import numpy as np
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras import layers
```

Here’s a simple Sequential model that processes integer sequences, embeds each integer into a 64-dimensional vector, and then uses an LSTM layer to handle the sequence of vectors.

```python
model = keras.Sequential()
# Map each integer (vocabulary of 1000) to a 64-dimensional vector.
model.add(layers.Embedding(input_dim=1000, output_dim=64))
# LSTM layer with 128 internal units processes the sequence of vectors.
model.add(layers.LSTM(128))
# Final Dense layer with 10 output units.
model.add(layers.Dense(10))
model.summary()
```

Output:

```
Model: "sequential"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
embedding (Embedding)        (None, None, 64)          64000
_________________________________________________________________
lstm (LSTM)                  (None, 128)               98816
_________________________________________________________________
dense (Dense)                (None, 10)                1290
=================================================================
Total params: 164,106
Trainable params: 164,106
Non-trainable params: 0
```
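The parameter counts in the summary can be reproduced by hand, which helps show where an LSTM's weights come from. The helper functions below are hypothetical and written only for this check; they use the standard counting rules (an embedding table, four LSTM transforms each with input weights, recurrent weights and a bias, and a dense layer with weights plus bias).

```python
def embedding_params(vocab_size, embed_dim):
    """One embed_dim-sized vector per vocabulary entry."""
    return vocab_size * embed_dim

def lstm_params(input_dim, units):
    """Three gates plus the cell candidate: each has input weights,
    recurrent weights, and a bias."""
    return 4 * ((input_dim + units) * units + units)

def dense_params(input_dim, units):
    """Weight matrix plus one bias per output unit."""
    return input_dim * units + units

counts = [embedding_params(1000, 64), lstm_params(64, 128), dense_params(128, 10)]
print(counts, sum(counts))  # [64000, 98816, 1290] 164106
```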

Recurrent Neural Networks (RNNs) are a powerful and versatile tool with a wide range of applications. They are commonly used in language modeling and text generation, as well as in voice recognition systems. One of their key advantages is the ability to process sequential data and carry context from earlier steps to later ones. When paired with Convolutional Neural Networks (CNNs), they can generate captions for unlabeled images, demonstrating a powerful synergy between the two types of neural networks.

However, traditional RNNs struggle to learn long-range dependencies, that is, relationships between data points that are far apart in the sequence. This limitation stems from the vanishing gradient problem. To address it, a specialized type of RNN called the Long Short-Term Memory (LSTM) network was developed, and it will be explored further in future articles. RNNs, with their ability to process sequential data, have revolutionized various fields, and their impact continues to grow with ongoing research and advancements.

A. Recurrent Neural Networks (RNNs) are a type of artificial neural network designed to process sequential data, such as time series or natural language. They have feedback connections that allow them to retain information from previous time steps, enabling them to capture temporal dependencies. This makes RNNs well-suited for tasks like language modeling, speech recognition, and sequential data analysis.

A. A recurrent neural network (RNN) works by processing sequential data step-by-step. It maintains a hidden state that acts as a memory, which is updated at each time step using the input data and the previous hidden state. The hidden state allows the network to capture information from past inputs, making it suitable for sequential tasks. RNNs use the same set of weights across all time steps, allowing them to share information throughout the sequence. However, traditional RNNs suffer from vanishing and exploding gradient problems, which can hinder their ability to capture long-term dependencies.

A. RNNs and CNNs are both neural networks, but for different jobs. RNNs excel at sequential data like text or speech, using internal memory to understand context. Imagine them remembering past words in a sentence. CNNs, on the other hand, are masters of spatial data like images. They analyze the arrangement of pixels, like identifying patterns in a photograph. So, RNNs for remembering sequences and CNNs for recognizing patterns in space.

The media shown in this article are not owned by Analytics Vidhya and are used at the Author’s discretion.
