This article was published as a part of the Data Science Blogathon.

Introduction

Batch processing began with mainframe computers and punch cards, long before cloud services such as Microsoft Azure Batch, a compute management platform, existed. It is still used in business, engineering, science, and other fields that require a large number of automated tasks, such as processing accounts and payroll, calculating portfolio risks, designing new products, rendering animated films, testing software, searching for energy, forecasting the weather, and discovering new cures for diseases. Previously, only a few people had access to the computing power needed for these workloads.



What is Azure Batch?

Azure Batch is a cloud computing service provided by Microsoft Azure. It enables large-scale parallel and high-performance computing (HPC) workloads by dynamically provisioning and managing clusters of virtual machines (VMs). Azure Batch allows users to schedule and execute computational tasks across multiple VMs, distributing the workload and maximizing efficiency. It is commonly used for tasks like simulations, rendering, data processing, and other computationally intensive workloads that require significant processing power.

Use Azure Batch to run large parallel and high-performance computing (HPC) batch jobs in Azure. Azure Batch creates and manages a pool of compute nodes (VMs), installs applications, and schedules jobs to run on the nodes. There is no cluster or job scheduler software to install, manage, or scale. Instead, you configure, manage, and monitor your jobs using Batch APIs and tools, command-line scripts, or the Azure portal.

Developers can use Batch as a platform service to build SaaS services or client applications that require large-scale execution. For example, you can use Batch to create a service that runs a Monte Carlo risk simulation for a financial services organization or a service that processes many photos.
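To make the Monte Carlo example concrete, here is a minimal sketch of how such a simulation splits into independent tasks. It runs locally, with a thread pool standing in for Batch compute nodes; the return model, the 3% loss threshold, and the names `simulate_chunk` and `estimate_loss_probability` are assumptions made for illustration, not part of any Batch API.

```python
import random
from concurrent.futures import ThreadPoolExecutor  # local stand-in for Batch compute nodes


def simulate_chunk(seed: int, n_paths: int) -> int:
    """One independent 'task': count simulated daily returns worse than -3%."""
    rng = random.Random(seed)  # a per-task seed keeps every task reproducible
    losses = 0
    for _ in range(n_paths):
        # Hypothetical model: daily return drawn from a normal distribution.
        daily_return = rng.gauss(0.0005, 0.02)
        if daily_return < -0.03:
            losses += 1
    return losses


def estimate_loss_probability(n_tasks: int, paths_per_task: int) -> float:
    """Fan the work out as independent tasks, then combine the partial counts."""
    with ThreadPoolExecutor(max_workers=4) as pool:
        partials = list(
            pool.map(simulate_chunk, range(n_tasks), [paths_per_task] * n_tasks)
        )
    return sum(partials) / (n_tasks * paths_per_task)
```

Because no task depends on another, the same split could in principle be handed to a Batch pool, with each call to `simulate_chunk` becoming one task.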

Features of Azure Batch

Azure Batch supports multiple features, some of which are listed below:

- Select your operating system and tools

Select the operating system and development tools you need to run large-scale jobs in Batch. Batch provides unified job management and scheduling regardless of whether you are using Windows Server or Linux compute nodes, while still allowing you to take advantage of the unique features of each environment. On Windows, use your current Windows code, including Microsoft .NET, to run large-scale computing jobs in Azure. On Linux, choose from popular distributions like CentOS, Ubuntu, and SUSE Linux Enterprise Server, or use Docker containers to lift and shift your applications. Batch provides SDKs and supports various programming languages, such as Python and Java.

- Cloud-enable cluster applications

Batch runs the applications that you already use on workstations and clusters. It is easy to cloud-enable your executable files and scripts so that they scale out. Batch provides a queue for the work you want to do and then runs your applications. You describe the data that must be transferred to the cloud for processing, how the data should be distributed, what parameters to use for each task, and the command to start the process. Think of it as an assembly line with many applications. You can use Batch to share data between stages and to manage execution as a whole.
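The idea of describing parameters and a start command for each unit of work can be sketched as follows. This is only an illustration in plain Python: `TaskSpec`, `build_tasks`, the `--input` and `--quality` flags, and the program name are hypothetical, and a real Batch task would carry more settings.

```python
import shlex
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskSpec:
    """Hypothetical description of one unit of work."""
    task_id: str
    input_file: str
    command_line: str


def build_tasks(input_files: list[str], program: str, quality: int) -> list[TaskSpec]:
    """Create one task per input file, each with its own command line."""
    specs = []
    for index, name in enumerate(input_files):
        # shlex.quote protects file names that contain spaces or shell characters.
        command = f"{program} --input {shlex.quote(name)} --quality {quality}"
        specs.append(
            TaskSpec(task_id=f"task-{index:04d}", input_file=name, command_line=command)
        )
    return specs


tasks = build_tasks(["a.png", "my photo.png"], "resize.py", 80)
```

Generating the command lines from data keeps the queue of work easy to inspect before anything is submitted.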

- Imagine running at a 100x scale

Today you may use a workstation or a small cluster, or wait in a queue to run your jobs. What if you could access 16 or even 100,000 cores whenever you wanted them and only pay for what you used? You can do so using Batch. Avoid waiting, which can limit your creativity. What would you be able to achieve in Azure that you can’t do today?

- Tell Batch what to do

Batch is built on a large-scale job scheduling engine that is available to you as a managed service. Use the scheduler in your application to dispatch work. Batch can also work with cluster job schedulers or behind the scenes of your software as a service (SaaS). You don’t need to write your own work queue, dispatcher, or monitor. Batch provides this as a service.

- Let Batch take care of the scale for you

When you’re ready to run a job, Batch starts a pool of compute virtual machines for you, installs applications and stages data, runs jobs with as many tasks as you have, detects failures, requeues work, and shrinks the pool once the work is done. You control the scale to meet deadlines, manage costs, and run at the right size for your application.
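The scaling decision can be pictured with a small calculation. The sketch below is an assumption about how one might size a pool from the amount of queued work; the function name and parameters are hypothetical, and this is not the formula language that Batch itself uses for automatic scaling.

```python
import math


def target_node_count(
    pending_tasks: int, slots_per_node: int, max_nodes: int, min_nodes: int = 0
) -> int:
    """Pick a pool size that covers the pending tasks without exceeding a budget cap."""
    if slots_per_node < 1:
        raise ValueError("slots_per_node must be at least 1")
    needed = math.ceil(pending_tasks / slots_per_node)  # nodes needed to run everything at once
    return max(min_nodes, min(max_nodes, needed))       # clamp between the floor and the cap
```

With four task slots per node and a cap of ten nodes, nine pending tasks would call for three nodes, while an empty queue lets the pool shrink to its minimum.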

- Deliver solutions as a service

Batch processes jobs on demand, not on a set schedule, so your clients can run jobs in the cloud when they need to. Control who has access to Batch and how many resources they can use, and verify that requirements such as encryption are met. Rich monitoring lets you see what’s going on and uncover problems, and detailed reporting lets you track usage.

How to create a batch account?

Follow these instructions to create a sample Batch account for testing purposes. You must have a Batch account to create pools and jobs. You can also connect a Batch account to an Azure storage account. A storage account is not required for this quick start but is useful for deploying applications and storing input and output data for most real-world tasks.

- In the Azure portal, select Create a resource.

- Type “batch service” in the search box and select Batch Service.

- Select Create.

- Select Create New under Resource Group and name your resource group.

- Enter a name for the Batch account. This name must be unique within the Azure location you selected. It can only contain lowercase letters and numbers and must be between 3 and 24 characters long.

- Under Storage Account, choose to select a storage account, and then pick an existing storage account or create a new one.

- Leave the other options alone. To create a batch account, click Review + Create, then click Create.

- When the Deployment success message appears, navigate to the Batch account you created.
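The naming rule in the steps above (lowercase letters and numbers only, 3 to 24 characters) is easy to check before you open the portal. The helper below is a small sketch with a hypothetical name; it cannot check uniqueness within the Azure location, which only Azure can verify.

```python
import re

# Lowercase letters and digits only, 3 to 24 characters long.
_ACCOUNT_NAME = re.compile(r"[a-z0-9]{3,24}")


def is_valid_batch_account_name(name: str) -> bool:
    """Return True if the name satisfies the character and length rule."""
    return _ACCOUNT_NAME.fullmatch(name) is not None
```

For example, `mybatch01` passes, while `MyBatch`, `my-batch`, and `ab` do not.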

Microsoft Azure Batch Services Work Tools

Azure Batch is largely a non-visual tool: it has no graphical interface for designing workloads. After creating a Batch account in Azure, you can start creating compute pools.

- To begin with, Azure Batch assumes that there is data somewhere that needs to be processed. This data is typically stored in Azure Blob Storage or Azure Data Lake Store. So your first step should be to ensure that your data is uploaded to one of these storage services.

- Once that’s done, you can create a compute pool: a collection of one or more compute nodes to which you assign work collectively. When configuring a compute pool, you will be asked for the name of the pool and the type of nodes it should contain (which OS, which software to install, which Azure Storage account to link, etc.).

- As a result, all compute nodes inside a compute pool are identical. These are Azure VMs tailored to your requirements: they can be Linux or Windows nodes, they can be created from a standard Azure VM image, a custom image, or a Docker container configuration, and they can be dedicated or low-priority nodes.
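The pool settings described above can be collected in a single definition. The sketch below builds a plain dictionary whose field names are modeled on the Batch REST API pool body as I understand it; treat the field names as an assumption to verify against the official reference. The image values are left as placeholders, and `build_pool_definition`, the pool ID, and the VM size are hypothetical.

```python
import json


def build_pool_definition(
    pool_id: str,
    vm_size: str,
    image_reference: dict,
    node_agent_sku_id: str,
    dedicated_nodes: int = 0,
    low_priority_nodes: int = 0,
) -> dict:
    """Return a pool description; every node created from it is configured identically."""
    if dedicated_nodes < 0 or low_priority_nodes < 0:
        raise ValueError("node counts cannot be negative")
    return {
        "id": pool_id,
        "vmSize": vm_size,
        "virtualMachineConfiguration": {
            "imageReference": image_reference,
            "nodeAgentSKUId": node_agent_sku_id,
        },
        "targetDedicatedNodes": dedicated_nodes,
        "targetLowPriorityNodes": low_priority_nodes,
    }


# Placeholder values for illustration only; look up the images available to your account.
pool = build_pool_definition(
    pool_id="etl-pool",
    vm_size="Standard_D2s_v3",
    image_reference={"publisher": "<publisher>", "offer": "<offer>",
                     "sku": "<sku>", "version": "latest"},
    node_agent_sku_id="<node-agent-sku>",
    dedicated_nodes=2,
    low_priority_nodes=8,
)
print(json.dumps(pool, indent=2))
```

Keeping the definition as data makes it clear why all nodes in a pool are identical: they are all created from the same description.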

Jobs and Tasks

Once we have the compute pool and nodes in place, we can assign work to them. The work is organized into jobs and tasks:

- A job is a set of tasks that can be parallelized. Example jobs include running 10 different simulations of a model, or running 1,000 iterations of a transformation script (where each transformation is a task).

- A task refers to a single run. In the above scenario, this would be one simulation run, one ETL run, or one AI run. Each task can include downloading input files from Azure Storage (such as CSV or Parquet files), executing transformation or AI programs (for example, in Python or R), and returning the results to Azure Storage.

After you submit the tasks, Azure Batch dynamically assigns the work to different nodes. Each node can take on one or more tasks (depending on the VM’s number of cores). After all tasks are completed, the job is marked as complete, and the compute nodes are ready to start the next job.
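To see how the number of nodes and the tasks per node affect the time a job takes, here is a toy simulation. It assumes a simple rule (each task goes to whichever slot frees up first) and is not a description of how Batch actually schedules work; `simulate_job` and its parameters are hypothetical.

```python
import heapq


def simulate_job(task_durations: list[float], node_count: int, slots_per_node: int) -> float:
    """Return the time at which the last task finishes, i.e. when the job is complete."""
    slots = node_count * slots_per_node
    if slots < 1:
        raise ValueError("the pool needs at least one task slot")
    free_at = [0.0] * slots          # time at which each slot becomes free
    heapq.heapify(free_at)
    for duration in task_durations:
        start = heapq.heappop(free_at)            # earliest available slot
        heapq.heappush(free_at, start + duration)  # slot is busy until the task ends
    return max(free_at)
```

Eight one-hour tasks on two nodes with two slots each finish after two hours in this model; doubling the pool to four nodes halves that to one hour.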

How to work with Microsoft Azure Batch Services?

Azure Batch requires the use of an application or service manager to set up pools, assign jobs, and monitor them if necessary. This service manager can be the portal interface, but we recommend using the available Python or R tools. In practice, you will use a Python (or R) script to create pools, jobs, and tasks.

In R, a parallel backend package (such as doAzureParallel) is a fantastic tool that allows you to delegate work to Azure Batch from a foreach loop. The R code within the foreach loop is executed directly on the Azure Batch nodes, and the results are automatically combined and returned to the R user. The Azure Batch library in Python is just as powerful but works differently. This module makes it easy to use Python to create pools and add jobs and tasks. However, it is up to the user to combine the results.
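Since combining results is left to the user on the Python side, a small helper is usually needed. The sketch below assumes each task wrote a CSV file and that the contents have already been downloaded into a dictionary keyed by task ID; `combine_task_outputs` and the column names are hypothetical.

```python
import csv
import io


def combine_task_outputs(outputs: dict[str, str]) -> list[dict[str, str]]:
    """Merge per-task CSV text into one list of rows, tagging each row with its task ID."""
    rows = []
    for task_id in sorted(outputs):  # sorted for a stable, repeatable order
        reader = csv.DictReader(io.StringIO(outputs[task_id]))
        for row in reader:
            rows.append({"task_id": task_id, **row})
    return rows


# Example with two small, made-up task outputs.
combined = combine_task_outputs({
    "task-1": "metric,value\nrmse,0.5\n",
    "task-0": "metric,value\nrmse,0.7\n",
})
```

Tagging every row with its task ID keeps the origin of each result traceable after the merge.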

How does Microsoft Azure Batch Services work?

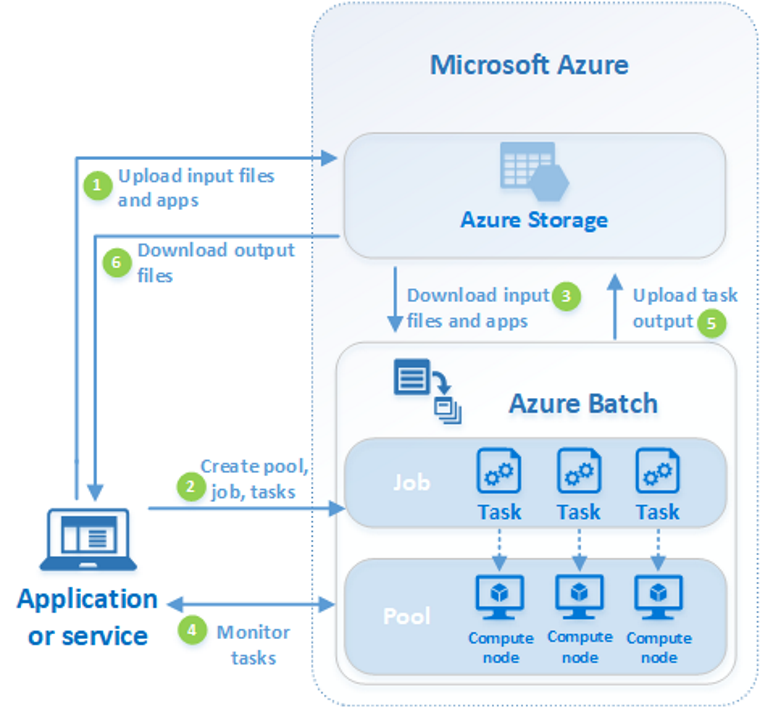

A typical Batch scenario involves scaling out intrinsically parallel work, such as image rendering for 3D scenes, across a pool of compute nodes. This pool can serve as your “render farm,” supplying tens, hundreds, or even thousands of cores to your rendering project. The diagram illustrates the steps of a typical Batch workflow, in which a client application or hosted service uses Batch to run parallel workloads.

Source: https://azure.microsoft.com/en-in/pricing

Steps to Implement

Description of the Steps

- Upload the input files and the applications used to process those files to your Azure Storage account. Any data your application processes, such as financial modeling data or video files to be transcoded, can be used as input files. Scripts or applications that process the data, such as a media transcoder, may be included in the application files.

- In your Batch account, create a pool of compute nodes, a job to run the workload on the pool, and tasks within the job. Compute nodes are virtual machines (VMs) that execute your tasks. Pool parameters such as the number and size of nodes, a Windows or Linux virtual machine image, and the application to install when nodes join the pool must be specified. When you add tasks to a job, the Batch service schedules them to run on the compute nodes in the pool. Each task runs the application you uploaded to process the input files.

- Download the input files and applications to Batch. Before each task executes, it can download the input data it will process to its assigned node. If the application has not already been installed on the pool nodes, it can be downloaded at this point instead. The task runs on the assigned node after the downloads from Azure Storage are complete.

- Monitor task execution. As the tasks run, query Batch to keep track of the progress of the job and its tasks. Your client application or service communicates with Batch over HTTPS. Because you may be monitoring thousands of tasks running on thousands of compute nodes, be sure to query the Batch service efficiently.

- Upload task output. As the tasks complete, they can upload their results to Azure Storage.

- Download output files. When your monitoring detects that the tasks in your job have been completed, the output data can be downloaded by your client application or service for further processing.
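The monitoring step above comes down to summarizing task states and deciding when it is safe to download the output. The sketch below assumes the task states have already been fetched as a list of strings such as "active", "running", and "completed"; `summarize_tasks` is a hypothetical helper, not a Batch API call.

```python
from collections import Counter


def summarize_tasks(states: list[str]) -> dict:
    """Summarize task states and report whether every task in the job has completed."""
    counts = Counter(states)
    total = len(states)
    done = counts.get("completed", 0)
    return {
        "counts": dict(counts),
        "percent_complete": 100.0 * done / total if total else 0.0,
        # Output should only be downloaded once all tasks are done.
        "finished": total > 0 and done == total,
    }


summary = summarize_tasks(["completed", "running", "completed", "active"])
```

A client would typically call a helper like this on each polling cycle and start downloading output files once `finished` becomes true.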

Frequently Asked Questions

Q. What is the difference between Azure Batch and Control-M?

A. Azure Batch is a cloud computing service by Microsoft Azure that enables parallel processing and execution of large-scale computational workloads across multiple virtual machines. On the other hand, Control-M is an enterprise workload automation solution by BMC Software. It helps schedule, manage, and monitor various types of workloads, including batch processing, data pipelines, and job scheduling across hybrid and multi-cloud environments. While both serve workload management purposes, they differ in their focus, features, and underlying infrastructure.

Q. What is Azure Batch used for?

A. Azure Batch is used for executing large-scale and high-performance computing (HPC) workloads. It provides the ability to distribute computational tasks across multiple virtual machines (VMs), enabling efficient parallel processing. Azure Batch is commonly employed for tasks like simulations, rendering, data processing, and scientific computations. It allows users to scale resources dynamically, reducing processing time and costs while tackling complex computational workloads.

Conclusion

Azure Batch is a cloud platform for executing large parallel jobs. The limits of on-premises computing capacity, as well as the expensive infrastructure that large workloads require, can be overcome with Azure Batch. Using multiple compute nodes to perform work in parallel results in faster and more efficient execution of tasks, while you pay only for what you use.

- Azure Batch requires the use of an application or service manager to set up pools, assign jobs, and monitor if necessary. This service manager can be a portal interface, but we recommend using the available Python or R tools.

- To begin with, Azure Batch assumes that there is data somewhere that needs to be processed. This data is typically stored in Azure Blob Storage or Azure Data Lake Store. So your first step should be to ensure that your data is uploaded to one of these storage services.

- Developers can use Batch as a platform service to build SaaS services or client applications that require large-scale execution. For example, you can use Batch to create a service that runs a Monte Carlo risk simulation for a financial services organization or a service that processes many photos.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.