Deep learning has revolutionized computer vision and paved the way for numerous breakthroughs in recent years. One of the key breakthroughs in deep learning is the ResNet architecture, introduced in 2015 by Microsoft Research. In this article, we will discuss the ResNet architecture and its significance in the field of computer vision. You will also learn how residual blocks are built and how ResNet uses stride-2 convolutions to downsample feature maps.

Learning Objectives

This article was published as a part of the Data Science Blogathon.

ResNet, short for Residual Network, is a type of convolutional neural network (CNN). It was designed to make very deep networks trainable: as plain networks grow deeper, problems such as vanishing gradients and degrading accuracy become a major hindrance. The ResNet architecture lets the network learn many layers of features while remaining easy to optimize, so that adding depth does not make training worse.

Here are the key features of the ResNet (Residual Network) architecture:

Paper link: "Deep Residual Learning for Image Recognition" by He et al. (arXiv:1512.03385)

One might expect deep neural networks to become more accurate as the number of layers increases. In practice, beyond a certain depth, the accuracy of a plain network saturates and then decreases instead of increasing.

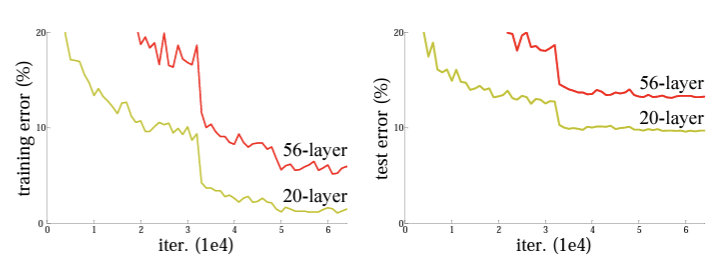

Source: arxiv.org

Increasing the depth of a plain network increases the training error, and with it the test error. In the figure, the 20-layer network has a lower training error than the 56-layer network. Because the deeper network is worse even on the training data, this is not overfitting: the deeper network is simply harder to optimize. This degradation shows that adding layers to a plain network does not, by itself, improve performance.

Adding more layers to a suitably deep model leads to higher training error. The paper shows how an architectural change, residual learning, tackles this degradation problem.

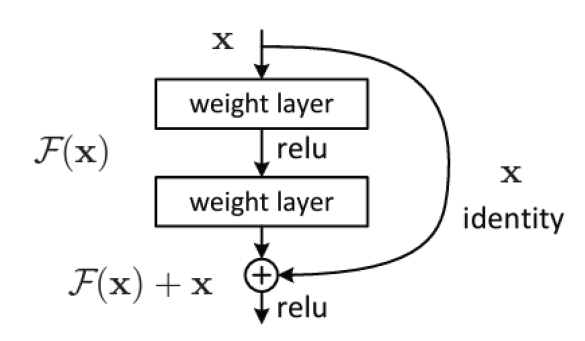

A residual network adds identity mappings, called skip (or shortcut) connections, between layers. An identity mapping returns its input unchanged. Because the skip connection passes the input directly to the output, a block can represent the identity function simply by driving its stacked layers toward zero.
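A minimal sketch of why the skip connection makes the identity easy to represent. It uses plain Python lists in place of tensors and a hypothetical two-layer fully connected branch in place of the convolutions of a real ResNet: with all weights set to zero, a plain block outputs zeros, while a residual block returns its input unchanged.

```python
# Vectors are Python lists; weight matrices are lists of rows.
# Fully connected layers stand in for the convolutions of a real ResNet.

def matvec(w, x):
    """Multiply matrix w (list of rows) by vector x."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in w]

def relu(v):
    return [max(0.0, vi) for vi in v]

def plain_block(x, w1, w2):
    """Two stacked layers without a shortcut: W2 * relu(W1 * x)."""
    return matvec(w2, relu(matvec(w1, x)))

def residual_block(x, w1, w2):
    """Same layers plus an identity shortcut: F(x) + x.
    The paper applies a ReLU after the addition; it is omitted here
    so the identity also holds for negative inputs."""
    f = plain_block(x, w1, w2)
    return [fi + xi for fi, xi in zip(f, x)]

x = [1.5, -2.0, 3.0]
zeros = [[0.0] * 3 for _ in range(3)]

print(plain_block(x, zeros, zeros))     # [0.0, 0.0, 0.0]
print(residual_block(x, zeros, zeros))  # [1.5, -2.0, 3.0]
```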

The paper presents a deep convolutional neural network architecture that addresses this degradation problem and enables the training of very deep networks. It showed that deep residual networks could be trained effectively, achieving improved accuracy on several benchmark datasets compared to previous state-of-the-art models.

High-level Contributions of the Current Approach

The authors proposed a method to approximate the residual function and add that to the input. A residual block consists of two or more convolutional layers, where the output of the block is obtained by adding the input of the block to the output of the last layer in the block. This allows the network to learn residual representations, meaning that it learns the difference between the input and the desired output instead of trying to approximate the output directly.
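To make the two-convolution structure concrete, here is a sketch of a basic residual block on a one-dimensional signal in plain Python. It assumes a single channel, 3-tap kernels with zero padding, and no batch normalization, all simplifications of the 2D, multi-channel 3×3 convolutions in the paper; the kernel values are hypothetical. As in the paper, the ReLU is applied after the addition.

```python
# Sketch of a "basic" residual block on a 1D, single-channel signal.
# Simplifications (assumptions): 1D instead of 2D, one channel,
# 3-tap kernels, and no batch normalization.

def conv1d_same(x, kernel):
    """1D cross-correlation with zero padding, so the output has len(x) values."""
    pad = len(kernel) // 2
    padded = [0.0] * pad + list(x) + [0.0] * pad
    return [sum(k * padded[i + j] for j, k in enumerate(kernel)) for i in range(len(x))]

def relu(v):
    return [max(0.0, vi) for vi in v]

def basic_residual_block(x, k1, k2):
    """conv -> ReLU -> conv gives F(x); the block returns ReLU(F(x) + x)."""
    f = conv1d_same(relu(conv1d_same(x, k1)), k2)
    return relu([fi + xi for fi, xi in zip(f, x)])

x = [1.0, 2.0, 3.0, 4.0]
smooth = [0.25, 0.5, 0.25]  # hypothetical first-layer kernel

# With a zero second kernel, F(x) = 0 and the block passes x through (x >= 0 here).
print(basic_residual_block(x, smooth, [0.0, 0.0, 0.0]))  # [1.0, 2.0, 3.0, 4.0]
```

The layers only have to produce the correction F(x) on top of the input; when no correction is needed, zero weights suffice.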

Mathematical Formulation

The authors hypothesized that it is easier to optimize the residual mapping than the original, unreferenced mapping.
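In the paper's notation, let H(x) be the desired mapping of a few stacked layers and σ the ReLU. The reformulation can be written as follows; the two-layer form of F is the example the paper gives, with biases omitted.

```latex
\begin{aligned}
\mathcal{F}(\mathbf{x}) &:= \mathcal{H}(\mathbf{x}) - \mathbf{x}
  && \text{(residual learned by the stacked layers)} \\
\mathbf{y} &= \mathcal{F}(\mathbf{x}, \{W_i\}) + \mathbf{x}
  && \text{(output of a residual block)} \\
\mathcal{F}(\mathbf{x}, \{W_i\}) &= W_2\,\sigma(W_1 \mathbf{x})
  && \text{(two-layer example, biases omitted)}
\end{aligned}
```

If the optimal mapping is close to the identity, the layers only need to push F toward zero, which the authors argue is easier than fitting an identity with a stack of nonlinear layers.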

The aim is therefore to learn the residual function F(x) rather than the full mapping. When the input and output of a block have different dimensions, the identity shortcut cannot be added directly, so the authors introduced zero padding and linear projection to handle the mismatch.

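Zero Padding: the shortcut still performs an identity mapping, and the extra channels needed for the increased dimensions are filled with zeros. This option adds no parameters.

Linear Projection: the shortcut applies a linear projection W_s to match dimensions, giving y = F(x, {W_i}) + W_s x. In practice, the projection is implemented with 1×1 convolutions.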
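Both shortcut types can be sketched in plain Python on a feature map stored as a list of channels, each channel a 2D list. The sketch assumes the shortcut crosses a point where the spatial size halves, so both shortcuts are applied with a stride of 2, as the paper does for dotted shortcuts; the 2-to-4 channel sizes and the projection weights are hypothetical.

```python
# Feature maps are lists of channels; each channel is a 2D list (rows of floats).

def subsample(channel, stride=2):
    """Keep every `stride`-th row and column (spatial downsampling)."""
    return [row[::stride] for row in channel[::stride]]

def zero_pad_shortcut(fmap, out_channels, stride=2):
    """Identity shortcut with stride; the extra channels are all zeros (no parameters)."""
    out = [subsample(ch, stride) for ch in fmap]
    h, w = len(out[0]), len(out[0][0])
    out += [[[0.0] * w for _ in range(h)] for _ in range(out_channels - len(fmap))]
    return out

def projection_shortcut(fmap, w_s, stride=2):
    """1x1 convolution with stride: W_s mixes the channels at each kept position."""
    sub = [subsample(ch, stride) for ch in fmap]
    h, w = len(sub[0]), len(sub[0][0])
    return [
        [[sum(w_s[o][c] * sub[c][i][j] for c in range(len(sub))) for j in range(w)]
         for i in range(h)]
        for o in range(len(w_s))
    ]

# Hypothetical 2-channel, 4x4 input mapped to 4 channels at 2x2.
ch0 = [[float(4 * r + c + 1) for c in range(4)] for r in range(4)]  # values 1..16
ch1 = [[1.0] * 4 for _ in range(4)]
fmap = [ch0, ch1]
w_s = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]  # hypothetical 4x2 projection

print(zero_pad_shortcut(fmap, 4)[0])      # [[1.0, 3.0], [9.0, 11.0]]
print(projection_shortcut(fmap, w_s)[3])  # [[0.0, 1.0], [4.0, 5.0]]
```

Zero padding keeps the shortcut parameter-free, while the projection spends C_out × C_in extra weights to learn how channels are combined.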

Architecture Diagram:

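The paper's plain baseline is inspired by VGGNet and uses mostly 3×3 filters, following two design rules: (i) for the same output feature map size, the layers have the same number of filters; and (ii) if the feature map size is halved, the number of filters is doubled so that the time complexity per layer is preserved. Downsampling is performed directly by convolutional layers with a stride of 2. The residual network is obtained by inserting shortcut connections into this plain network.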
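The effect of stride-2 downsampling can be checked with the standard output-size formula for convolution and pooling, out = floor((in + 2·padding − kernel) / stride) + 1. The sketch assumes a 224×224 input and a stem of a 7×7 convolution (stride 2, padding 3) followed by 3×3 max pooling (stride 2, padding 1); the padding values are an assumption matching common implementations. Each later stage halves the feature map with a stride-2 3×3 convolution and doubles the filters, following the two design rules.

```python
def conv_out(size, kernel, stride, padding):
    """Spatial output size of a convolution or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1

def resnet_stage_shapes(input_size=224, base_filters=64, num_stages=4):
    """Return (feature map size, filters) for each stage.
    The first stage keeps the resolution produced by the stem."""
    size = conv_out(input_size, kernel=7, stride=2, padding=3)  # 7x7 conv, stride 2
    size = conv_out(size, kernel=3, stride=2, padding=1)        # 3x3 max pool, stride 2
    shapes = [(size, base_filters)]
    filters = base_filters
    for _ in range(num_stages - 1):
        size = conv_out(size, kernel=3, stride=2, padding=1)    # first conv of the stage, stride 2
        filters *= 2                                            # halve the map, double the filters
        shapes.append((size, filters))
    return shapes

print(resnet_stage_shapes())  # [(56, 64), (28, 128), (14, 256), (7, 512)]
```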

The network ends with a global average pooling layer and a 1000-way fully connected layer with softmax. In the diagram, the dotted shortcuts mark where the feature map size and number of channels change from one residual block to the next. Because an identity shortcut cannot be added across such a change, these shortcuts use zero padding or a linear projection (1×1 convolutions), applied with a stride of 2.
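The classification head can be sketched in plain Python: global average pooling collapses each channel of the final feature map to a single number, and a fully connected layer followed by softmax turns those numbers into class probabilities. The sketch uses a tiny hypothetical map with 2 channels and a 3-class head with hand-picked weights instead of the paper's 1000 classes and trained weights.

```python
import math

def global_average_pool(fmap):
    """Average each channel (a 2D list) over all of its spatial positions."""
    return [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in fmap]

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def classify(fmap, weights, bias):
    """Global average pooling -> fully connected layer -> softmax."""
    pooled = global_average_pool(fmap)
    logits = [sum(w * p for w, p in zip(row, pooled)) + b
              for row, b in zip(weights, bias)]
    return softmax(logits)

# Hypothetical final feature map: 2 channels of size 2x2, and a 3-class head.
fmap = [[[1.0, 3.0], [5.0, 7.0]], [[0.0, 0.0], [2.0, 2.0]]]
weights = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
bias = [0.0, 0.0, 0.0]

print(global_average_pool(fmap))  # [4.0, 1.0]
probs = classify(fmap, weights, bias)
print(max(range(3), key=lambda k: probs[k]))  # 0 (the class with the largest logit)
```

Because pooling averages over all positions, the head has no weights tied to the spatial size, which keeps the fully connected layer small.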

Dataset Used: ImageNet

ResNet was evaluated on the ImageNet 2012 classification dataset, which consists of 1000 classes, with about 1.28 million training images, 50k validation images, and 100k test images.

Identity vs. Projection Shortcuts

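The authors compared three shortcut options: (A) zero-padding shortcuts for increasing dimensions, with all shortcuts parameter-free; (B) projection shortcuts for increasing dimensions, with identity shortcuts elsewhere; and (C) projection shortcuts everywhere.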

Accuracy improved from A to B to C, but only marginally. The small differences indicate that projection shortcuts are not essential for addressing the degradation problem.

CIFAR-10 Dataset

ResNet was also evaluated on the CIFAR-10 dataset, which consists of 50k training images and 10k test images in 10 classes.

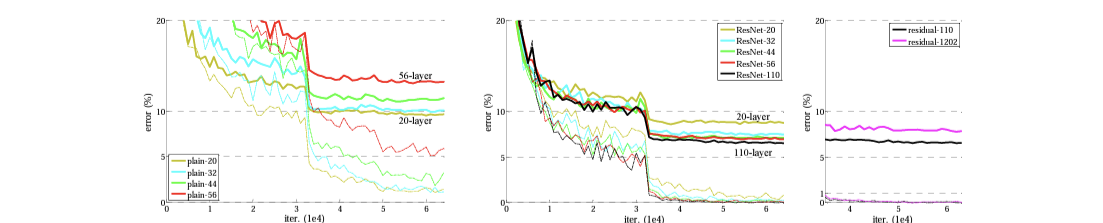

The 110-layer residual network has a number of parameters of the same order as earlier networks with only 19 layers, yet the much deeper network outperformed them. However, with 1202 layers, the error increased compared to the 110-layer network.
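The CIFAR-10 depths follow from the paper's CIFAR design: a first 3×3 convolution, then three stages of 2n layers of 3×3 convolutions on 32×32, 16×16, and 8×8 feature maps with 16, 32, and 64 filters, and a final fully connected layer, for 6n + 2 weighted layers in total. The short sketch below computes the depth for a few values of n.

```python
def cifar_resnet_depth(n):
    """Weighted layers in the paper's CIFAR-10 ResNet:
    1 first conv + 3 stages * 2n convs + 1 fully connected layer."""
    return 1 + 3 * (2 * n) + 1

for n in (3, 9, 18, 200):
    print(n, cifar_resnet_depth(n))
# 3 20
# 9 56
# 18 110
# 200 1202
```

So n = 3 and n = 9 give the 20- and 56-layer networks, n = 18 gives 110 layers, and n = 200 gives the 1202-layer network.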

ImageNet Dataset results

Source: arxiv.org

Training of 50-, 101-, and 152-layer ResNets on the ImageNet dataset

CIFAR-10 Dataset results

On CIFAR-10, the 1202-layer network had a higher test error than the 110-layer network, even though both reached a similar, near-zero training error. The authors attributed this to overfitting: a network this large is unnecessarily big for a small dataset like CIFAR-10.

Comparison of Current Approach with Previous

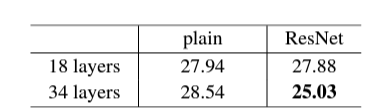

The table shows the training error with different layers.

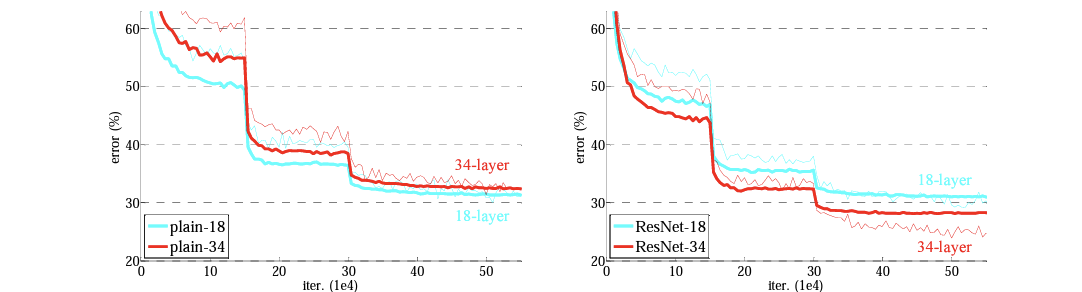

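Adding residual connections to the 18-layer network gives only a marginal improvement. Adding them to the 34-layer network, however, gives a large improvement, and the 34-layer ResNet outperforms the 18-layer ResNet, reversing the degradation seen with plain networks.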

The ResNet architecture, which incorporates residual connections, significantly outperformed prior state-of-the-art models on image recognition tasks such as ImageNet. The authors demonstrate that residual connections ease the optimization of very deep networks and allow them to be trained effectively. ResNet also achieves better results with fewer parameters and lower computational cost than VGG-style networks. Residual connections are a general and effective approach for enabling deeper networks, and ResNet has become a standard baseline for image recognition tasks.

We hope you enjoyed the article and now understand the ResNet architecture, how its residual blocks work, and how stride-2 convolutions are used to downsample feature maps.

The number of layers in ResNet varies; common versions have 18, 34, 50, 101, or 152 layers. Deeper ResNets often perform better but need more computing power. The key idea is that skip connections make deep networks easier to train.
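Where these depths come from can be checked with a little arithmetic. Using the per-stage block counts from the paper's architecture table, ResNet-18 and ResNet-34 stack basic blocks of two 3×3 convolutions, while ResNet-50, 101, and 152 stack bottleneck blocks of three convolutions (1×1, 3×3, 1×1). Adding the initial 7×7 convolution and the final fully connected layer gives the number in the name; projection shortcuts are not counted.

```python
# Blocks per stage (conv2_x .. conv5_x) and convolutions per block for each variant.
RESNET_CONFIGS = {
    18: ([2, 2, 2, 2], 2),    # basic blocks: two 3x3 convs
    34: ([3, 4, 6, 3], 2),
    50: ([3, 4, 6, 3], 3),    # bottleneck blocks: 1x1, 3x3, 1x1 convs
    101: ([3, 4, 23, 3], 3),
    152: ([3, 8, 36, 3], 3),
}

def resnet_depth(blocks_per_stage, convs_per_block):
    """Stem 7x7 conv + convs inside the residual blocks + final fully connected layer."""
    return 1 + sum(blocks_per_stage) * convs_per_block + 1

for name, (blocks, convs) in RESNET_CONFIGS.items():
    print(name, resnet_depth(blocks, convs))
```

Each printed depth matches the variant's name, which shows that the deeper variants grow mainly by adding blocks to the third stage.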

ResNet is a type of neural network that uses “skip connections” to overcome the vanishing gradient problem in deep networks. This allows for training much deeper networks, leading to improved performance in tasks like image recognition.
