I really like working on unsupervised learning problems. They offer a completely different challenge to a supervised learning problem – there’s much more room for experimenting with the data that I have. It’s no wonder that so many developments and breakthroughs in the machine learning space are happening in the unsupervised learning domain.

And one of the most popular techniques in unsupervised learning is clustering. It’s a concept we typically learn early on in our machine learning journey and it’s simple enough to grasp. I’m sure you’ve come across or even worked on projects like customer segmentation, market basket analysis, etc.

But here’s the thing – clustering has many layers. It isn’t limited to the basic algorithms we learned earlier. It is a powerful unsupervised learning technique that we can apply to a wide range of real-world problems.

Gaussian Mixture Models are one such clustering algorithm that I want to talk about in this article.

Gaussian Mixture Models (GMMs)

The Gaussian Mixture Model (GMM) is a probabilistic model used for clustering and density estimation. It assumes that the data is generated from a mixture of several Gaussian components, each representing a distinct cluster. GMM assigns probabilities to data points, allowing them to belong to multiple clusters simultaneously. The model is widely used in machine learning and pattern recognition applications. The key points are:

- Assumption: GMMs assume data is generated from a mixture of a fixed number of Gaussian (normal) distributions.

- Components: Each component in a GMM is a Gaussian distribution with its own parameters (mean and covariance).

- Clustering: GMM groups data points based on their probability of belonging to a particular Gaussian component.

- Parameter Estimation: The model parameters (mixing weights, means, covariances) are estimated by maximum likelihood, most commonly with the Expectation-Maximization (EM) algorithm.

- Example with 3 Components (GD1, GD2, GD3):

  - Each has its own mean (μ₁, μ₂, μ₃) and variance (σ₁², σ₂², σ₃²).

  - For any data point, GMM calculates the probability of it belonging to GD1, GD2, or GD3.

- EM Algorithm Behavior:

  - Iteratively updates parameters to increase the data likelihood.

  - Does not require explicit derivative calculations.

Wait, probability?

You read that right! Gaussian Mixture Models are probabilistic models and use the soft clustering approach for distributing the points in different clusters. I’ll take another example that will make it easier to understand.

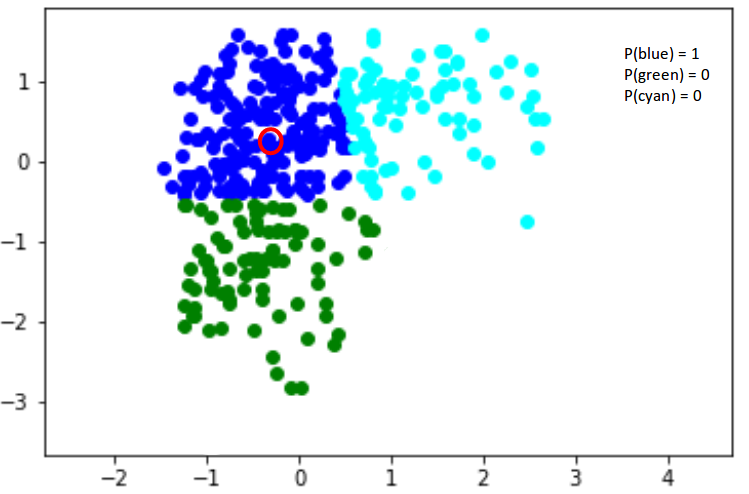

Here, we have three clusters that are denoted by three colors – Blue, Green, and Cyan. Let’s take the data point highlighted in red. The probability of this point being a part of the blue cluster is 1, while the probability of it being a part of the green or cyan clusters is 0.

These probabilities are computed using Bayes’ theorem, which relates the prior and posterior probabilities of the cluster assignments given the data. An important decision in GMMs is choosing the appropriate number of components, which can be done using techniques like the Bayesian Information Criterion (BIC) or cross-validation.
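As a rough sketch of the BIC approach, the snippet below fits scikit-learn's `GaussianMixture` with 1 to 6 components and keeps the count with the lowest BIC. The data is synthetic (three blobs I made up for illustration, not the dataset used later in this article), and the candidate range is an arbitrary assumption.

```python
import numpy as np
from sklearn.mixture import GaussianMixture

rng = np.random.default_rng(42)

# Synthetic stand-in data: three well-separated 2D blobs (assumed for illustration).
centers = np.array([[0.0, 0.0], [6.0, 6.0], [0.0, 8.0]])
X = np.vstack([rng.normal(c, 0.7, size=(200, 2)) for c in centers])

# Fit one model per candidate number of components and record its BIC.
candidates = range(1, 7)
bic_scores = [
    GaussianMixture(n_components=k, random_state=0).fit(X).bic(X)
    for k in candidates
]

# Lower BIC is better: it rewards fit but penalises extra parameters.
best_k = list(candidates)[int(np.argmin(bic_scores))]
print("BIC per k:", np.round(bic_scores, 1))
print("Selected number of components:", best_k)
```

BIC is only a guide. On real data it is worth plotting the scores and checking whether the selected clusters make sense.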

Now, consider another point – somewhere in between the blue and cyan (highlighted in the below figure). The probability that this point is a part of cluster green is 0, right? The probability that this belongs to blue and cyan is 0.2 and 0.8 respectively. These coefficients represent the responsibilities or soft assignments of the data point to the different Gaussian components in the mixture.

Gaussian Mixture Models use the soft clustering technique for assigning data points to Gaussian distributions, leveraging Bayes’ theorem to compute the posterior probabilities. I’m sure you’re wondering what these distributions are so let me explain that in the next section.
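To make the soft assignment concrete, here is a minimal sketch of the Bayes' theorem calculation for two one-dimensional clusters. The priors, means, and standard deviations are hypothetical values chosen for illustration, and `soft_assignment` is my own helper name, not a library function.

```python
import numpy as np

def gaussian_pdf(x, mean, std):
    """Density of a one-dimensional Gaussian at x."""
    return np.exp(-0.5 * ((x - mean) / std) ** 2) / (std * np.sqrt(2 * np.pi))

# Hypothetical one-dimensional clusters, chosen only for illustration.
priors = np.array([0.5, 0.5])   # prior probability of each cluster
means = np.array([0.0, 4.0])
stds = np.array([1.0, 1.0])

def soft_assignment(x):
    """Posterior probability of each cluster for point x (Bayes' theorem)."""
    joint = priors * gaussian_pdf(x, means, stds)  # prior * likelihood
    return joint / joint.sum()                     # normalise so the values sum to 1

print(soft_assignment(0.0))  # deep inside the first cluster
print(soft_assignment(2.5))  # between the two, closer to the second
```

A point at the centre of a cluster gets a probability close to 1 for that cluster, while a point between two clusters splits its probability between them, much like the 0.2 and 0.8 example above.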

The Gaussian Distribution

I’m sure you’re familiar with Gaussian Distributions (or the Normal Distribution). It has a bell-shaped curve, with the data points symmetrically distributed around the mean value.

The image below shows a few Gaussian distributions with different means (μ) and variances (σ²). Remember that the higher the σ value, the greater the spread:

Source: Wikipedia

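In a one-dimensional space, the probability density function of a Gaussian distribution is given by:

$$f(x \mid \mu, \sigma^2) = \frac{1}{\sqrt{2\pi\sigma^2}} \exp\left(-\frac{(x-\mu)^2}{2\sigma^2}\right)$$

where μ is the mean and σ² is the variance.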

But this would only be true for a single variable. In the case of two variables, instead of a 2D bell-shaped curve, we will have a 3D bell curve as shown below:

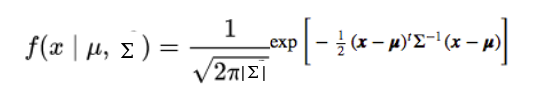

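The probability density function would be given by:

$$f(x \mid \mu, \Sigma) = \frac{1}{2\pi\sqrt{|\Sigma|}} \exp\left(-\frac{1}{2}(x-\mu)^T \Sigma^{-1} (x-\mu)\right)$$

where x is the input vector, μ is the 2D mean vector, and Σ is the 2×2 covariance matrix. The covariance would now define the shape of this curve. We can generalize the same for d dimensions, where the normalizing factor 2π becomes (2π)^(d/2).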

Thus, this multivariate Gaussian model would have x and μ as vectors of length d, and Σ would be a d x d covariance matrix.
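Here is a small NumPy sketch of the d-dimensional density, in case you'd like to see it as code. The function name and the example mean and covariance are my own assumptions for illustration.

```python
import numpy as np

def multivariate_gaussian_pdf(x, mean, cov):
    """Density of a d-dimensional Gaussian at a single point x."""
    d = mean.shape[0]
    diff = x - mean
    # Solve cov @ z = diff rather than forming the explicit inverse.
    mahalanobis_sq = diff @ np.linalg.solve(cov, diff)
    norm_const = np.sqrt((2 * np.pi) ** d * np.linalg.det(cov))
    return np.exp(-0.5 * mahalanobis_sq) / norm_const

# Hypothetical 2D example: correlated features give an elliptical shape.
mean = np.array([0.0, 0.0])
cov = np.array([[2.0, 0.8],
                [0.8, 1.0]])

peak = multivariate_gaussian_pdf(mean, mean, cov)                   # density at the mean
off_peak = multivariate_gaussian_pdf(np.array([1.0, -1.0]), mean, cov)
print(peak, off_peak)
```

The density is highest at the mean and falls off at a rate set by the covariance matrix, which is what lets a Gaussian describe stretched, tilted clusters.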

Hence, for a dataset with d features, we would have a mixture of k Gaussian distributions (where k is equivalent to the number of clusters), each having its own mean vector and covariance matrix. But wait – how are the mean and covariance values for each Gaussian assigned?

These values are determined using a technique called expectation maximization (EM). We need to understand this technique before we dive deeper into the working of Gaussian Mixture Models.

Characteristics of the Normal or Gaussian Distribution

A normal (Gaussian) distribution has the following properties:

- It’s bell-shaped with most values around the average.

- It has only one peak or mode.

- It stretches out forever in both directions.

- Its mean, median, and mode are the same.

- Its spread is measured by its standard deviation.

- The total area under its curve equals 1.
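A few of these properties are easy to check numerically. The sketch below evaluates the density on a fine grid for an arbitrary mean and standard deviation (values I picked for illustration) and checks the area, the location of the peak, and the symmetry.

```python
import numpy as np

def gaussian_pdf(x, mean, std):
    """Density of a one-dimensional Gaussian at x."""
    return np.exp(-0.5 * ((x - mean) / std) ** 2) / (std * np.sqrt(2 * np.pi))

mean, std = 2.0, 1.5  # arbitrary illustrative values

# Grid centred on the mean, wide enough that the tails are negligible.
x = np.linspace(mean - 10 * std, mean + 10 * std, 20_001)
density = gaussian_pdf(x, mean, std)

area = np.sum(density) * (x[1] - x[0])                # Riemann-sum approximation of the area
mode = x[np.argmax(density)]                          # location of the single peak
is_symmetric = np.allclose(density, density[::-1])    # mirror image around the mean

print(f"area = {area:.6f}, mode = {mode:.3f}, symmetric = {is_symmetric}")
```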

What is Expectation-Maximization?

Excellent question!

Expectation-Maximization (EM) is a statistical algorithm for finding the right model parameters. We typically use EM when the data has missing values, or in other words, when the data is incomplete.

These missing variables are called latent variables. We consider the target (or cluster number) to be unknown when we’re working on an unsupervised learning problem.

It’s difficult to determine the right model parameters due to these missing variables. Think of it this way – if you knew which data point belongs to which cluster, you would easily be able to determine the mean vector and covariance matrix.

Since we do not have the values for the latent variables, expectation-maximization tries to use the existing data to determine the optimum values for these variables and then finds the model parameters. Based on these model parameters, we go back and update the values for the latent variable, and so on.

Broadly, the Expectation-Maximization algorithm has two steps:

- E-step: In this step, the available data is used to estimate (guess) the values of the missing variables

- M-step: Based on the estimated values generated in the E-step, the complete data is used to update the parameters

Expectation-Maximization is the base of many algorithms, including Gaussian Mixture Models. So how does GMM use the concept of EM and how can we apply it for a given set of points? Let’s find out!

Expectation-Maximization in Gaussian Mixture Models

Let’s understand this using another example. I want you to visualize the idea in your mind as you read along. This will help you better understand what we’re talking about.

Let’s say we need to assign k clusters. This means that there are k Gaussian distributions, with mean and covariance values μ₁, μ₂, …, μₖ and Σ₁, Σ₂, …, Σₖ. Additionally, there is another parameter for each distribution that captures the share of points it accounts for. In other words, the density (mixing weight) of the i-th distribution is represented by Πᵢ, capturing the relative sizes of the different subpopulations. These weights sum to 1.

Now, we need to find the values for these parameters to define the Gaussian distributions. We already decided on the number of clusters and randomly assigned the values for the mean, covariance, and density. Next, we’ll perform the expectation step (E-step) and the maximization step (M-step) iteratively!

In the E-step, we compute the probability of each data point belonging to each of the k Gaussian components, given the current parameter values. Then, in the M-step, we re-estimate the parameters (means, covariances, and component weights) to maximize the likelihood of the data, using the responsibilities computed in the E-step. This optimization process continues until convergence or a maximum number of iterations is reached. Advanced techniques like variational inference can also be used for parameter estimation in complex GMM scenarios.

E-step

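For each point $x_i$, calculate the probability that it belongs to each cluster/distribution $c_1, c_2, \dots, c_k$. This is done using the formula below:

$$r_{ic} = \frac{\Pi_c \, \mathcal{N}(x_i \mid \mu_c, \Sigma_c)}{\sum_{c'=1}^{k} \Pi_{c'} \, \mathcal{N}(x_i \mid \mu_{c'}, \Sigma_{c'})}$$

This value will be high when the point is assigned to the right cluster and lower otherwise.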
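The E-step can be sketched in a few lines of NumPy. This is a minimal illustration, not a production implementation: the function names are my own, the example parameters are made up, and it works with raw probabilities rather than log-probabilities, so it can underflow for points far from every component.

```python
import numpy as np

def gaussian_density(X, mean, cov):
    """Multivariate Gaussian density evaluated at every row of X."""
    d = X.shape[1]
    diff = X - mean
    mahalanobis_sq = np.sum((diff @ np.linalg.inv(cov)) * diff, axis=1)
    return np.exp(-0.5 * mahalanobis_sq) / np.sqrt((2 * np.pi) ** d * np.linalg.det(cov))

def e_step(X, weights, means, covs):
    """Return an (n_points, k) matrix of probabilities, one row per point."""
    weighted = np.column_stack([
        w * gaussian_density(X, m, c) for w, m, c in zip(weights, means, covs)
    ])
    # Divide by the row total so each point's probabilities sum to 1.
    return weighted / weighted.sum(axis=1, keepdims=True)

# Hypothetical current parameters for k = 2 components in 2D.
weights = np.array([0.5, 0.5])
means = np.array([[0.0, 0.0], [5.0, 5.0]])
covs = np.array([np.eye(2), np.eye(2)])

X = np.array([[0.1, -0.2], [4.9, 5.2], [2.5, 2.5]])
resp = e_step(X, weights, means, covs)
print(resp.round(3))
```

The first two points sit next to one of the means and are assigned almost entirely to it, while the third point is equidistant from both and is split evenly.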

M-step

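After the E-step, we go back and update the Π, μ and Σ values. Writing $r_{ic}$ for the probability computed in the E-step that point $x_i$ belongs to cluster $c$, and $N$ for the total number of points, these are updated in the following manner:

- The new density is defined by the ratio of the effective number of points in the cluster (the sum of the probabilities assigned to it) to the total number of points:

$$\Pi_c = \frac{N_c}{N}, \qquad N_c = \sum_{i=1}^{N} r_{ic}$$

- The mean and the covariance matrix are updated based on the values assigned to the distribution, in proportion with the probability values for the data point. Hence, a data point that has a higher probability of being a part of that distribution will contribute a larger portion:

$$\mu_c = \frac{1}{N_c} \sum_{i=1}^{N} r_{ic} \, x_i, \qquad \Sigma_c = \frac{1}{N_c} \sum_{i=1}^{N} r_{ic} \, (x_i - \mu_c)(x_i - \mu_c)^T$$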
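Here is a matching NumPy sketch of the M-step updates. The function name `m_step` is my own, and in the example I pass in hard 0/1 probabilities purely so the result is easy to verify by hand. In a real run these would be the soft values from the E-step.

```python
import numpy as np

def m_step(X, resp):
    """Update weights, means, and covariances from an (n_points, k) probability matrix."""
    n, _ = X.shape
    nc = resp.sum(axis=0)                    # effective number of points per cluster
    weights = nc / n                         # new density (mixing weight) of each cluster
    means = (resp.T @ X) / nc[:, None]       # probability-weighted average of the points
    covs = []
    for c in range(resp.shape[1]):
        diff = X - means[c]
        # Probability-weighted outer products of the deviations from the new mean.
        covs.append((resp[:, c, None] * diff).T @ diff / nc[c])
    return weights, means, np.array(covs)

# Toy data: two points near the origin, three points further away.
X = np.array([[0.0, 0.0], [2.0, 0.0], [10.0, 10.0], [12.0, 14.0], [14.0, 12.0]])
resp = np.array([[1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [0.0, 1.0]])

weights, means, covs = m_step(X, resp)
print(weights)   # share of points per cluster
print(means)     # one mean vector per cluster
```

With hard assignments like these, the update reduces to the per-cluster sample mean and (biased) sample covariance, which is a handy sanity check.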

Based on the updated values generated from this step, we calculate the new probabilities for each data point and update the values iteratively. This process is repeated in order to maximize the log-likelihood function. Effectively, we can say that k-means only considers the mean to update the centroid, while GMM takes into account the mean as well as the variance of the data!
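To see the whole loop in action, here is a compact one-dimensional sketch that alternates the two steps and records the log-likelihood after each pass. It is an illustration under my own assumptions: the data is synthetic, the function name `em_1d` is made up, and the means are initialised by spreading them evenly between the smallest and largest value rather than randomly, which keeps the example deterministic.

```python
import numpy as np

def em_1d(x, k, n_iter=100):
    """Fit a 1D Gaussian mixture with EM; returns parameters and the log-likelihood trace."""
    weights = np.full(k, 1.0 / k)
    means = np.linspace(x.min(), x.max(), k)   # simple deterministic initialisation
    variances = np.full(k, x.var())
    log_likelihoods = []
    for _ in range(n_iter):
        # E-step: probability of each point under each weighted component.
        dens = np.exp(-0.5 * (x[:, None] - means) ** 2 / variances) / np.sqrt(2 * np.pi * variances)
        weighted = weights * dens
        total = weighted.sum(axis=1, keepdims=True)
        log_likelihoods.append(np.log(total).sum())
        resp = weighted / total
        # M-step: re-estimate weights, means, and variances from those probabilities.
        nc = resp.sum(axis=0)
        weights = nc / len(x)
        means = (resp * x[:, None]).sum(axis=0) / nc
        variances = (resp * (x[:, None] - means) ** 2).sum(axis=0) / nc
    return weights, means, variances, log_likelihoods

# Synthetic data: 300 points around -3 and 700 points around 4.
rng = np.random.default_rng(1)
x = np.concatenate([rng.normal(-3.0, 1.0, 300), rng.normal(4.0, 1.5, 700)])

weights, means, variances, log_likelihoods = em_1d(x, k=2)
print("weights:", weights.round(3))
print("means:", means.round(3))
print("variances:", variances.round(3))
```

The log-likelihood never goes down from one iteration to the next, which is the property that makes EM a sensible way to maximize it. Keep in mind that EM finds a local optimum, so a different initialisation can lead to a different answer on harder data.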

Implementing Gaussian Mixture Models in Python

It’s time to dive into the code! This is one of my favorite parts of any article so let’s get going straightaway.

We’ll start by loading the data. This is a temporary file that I have created – you can download the data from this link.

Python Code:

```python
import pandas as pd
import matplotlib.pyplot as plt

# Load the dataset (two columns: Weight and Height)
data = pd.read_csv('Clustering_gmm.csv')

# Scatter plot of the raw data
plt.figure(figsize=(7, 7))
plt.scatter(data["Weight"], data["Height"])
plt.xlabel('Weight')
plt.ylabel('Height')
plt.title('Data Distribution')
plt.show()
```

That’s what our data looks like. Let’s build a k-means model on this data first:

```python
# training k-means model
from sklearn.cluster import KMeans

kmeans = KMeans(n_clusters=4)
kmeans.fit(data)

# predictions from k-means
pred = kmeans.predict(data)
frame = data.copy()  # work on a copy so the original data stays unchanged
frame['cluster'] = pred
frame.columns = ['Weight', 'Height', 'cluster']

# plotting results, one colour per cluster
color = ['blue', 'green', 'cyan', 'black']
for k in range(0, 4):
    subset = frame[frame["cluster"] == k]
    plt.scatter(subset["Weight"], subset["Height"], c=color[k])
plt.show()
```

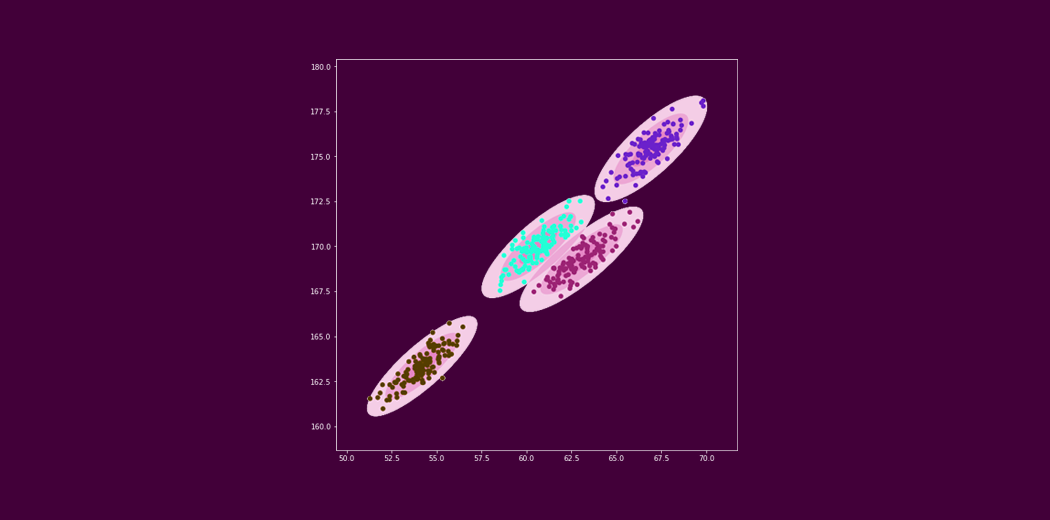

That’s not quite right. The k-means model failed to identify the right clusters. Look closely at the clusters in the center – k-means has tried to build a circular cluster even though the data distribution is elliptical (remember the drawbacks we discussed earlier?).

Let’s now build a Gaussian Mixture Model on the same data and see if we can improve on k-means:

```python
import pandas as pd
import matplotlib.pyplot as plt
from sklearn.mixture import GaussianMixture

data = pd.read_csv('Clustering_gmm.csv')

# training gaussian mixture model
gmm = GaussianMixture(n_components=4)
gmm.fit(data)

# predictions from gmm
labels = gmm.predict(data)
frame = data.copy()  # work on a copy so the original data stays unchanged
frame['cluster'] = labels
frame.columns = ['Weight', 'Height', 'cluster']

# plotting results, one colour per cluster
color = ['blue', 'green', 'cyan', 'black']
for k in range(0, 4):
    subset = frame[frame["cluster"] == k]
    plt.scatter(subset["Weight"], subset["Height"], c=color[k])
plt.show()
```

Excellent! Those are exactly the clusters we were hoping for. Gaussian Mixture Models have blown k-means out of the water here.
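The plot above uses hard labels from `predict`, but the soft assignments we talked about earlier are available through `predict_proba`. Here is a short sketch on synthetic data (two overlapping blobs that I generated for illustration, not the article's CSV). The 0.9 cut-off for calling a point "ambiguous" is an arbitrary choice of mine.

```python
import numpy as np
from sklearn.mixture import GaussianMixture

rng = np.random.default_rng(0)

# Synthetic stand-in data: two overlapping 2D clusters (assumed for illustration).
X = np.vstack([
    rng.normal([0.0, 0.0], 1.0, size=(300, 2)),
    rng.normal([3.0, 0.0], 1.0, size=(300, 2)),
])

gmm = GaussianMixture(n_components=2, random_state=0).fit(X)

hard_labels = gmm.predict(X)         # one cluster index per point
soft_labels = gmm.predict_proba(X)   # one probability per point per cluster

# Points whose largest probability is below 0.9 lie in the overlap region.
uncertain = soft_labels.max(axis=1) < 0.9

print(soft_labels[:3].round(3))
print(f"{uncertain.sum()} of {len(X)} points are ambiguous")
```

Looking at the ambiguous points is often more informative than the hard labels alone, since it shows where the clusters genuinely overlap.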

End Notes

This was a beginner’s guide to Gaussian Mixture Models. My aim here was to introduce you to this powerful clustering technique and showcase how effective and efficient it can be as compared to your traditional algorithms.

I encourage you to take up a clustering project and try out GMMs there. That’s the best way to learn and ingrain a concept – and trust me, you’ll realise the full extent of how useful this algorithm is.

Frequently Asked Questions

A. The Gaussian Mixture Model (GMM) is a probabilistic model used for clustering and density estimation. It assumes that the data points are generated from a mixture of several Gaussian distributions, each representing a cluster. GMM estimates the parameters of these Gaussians to identify the underlying clusters and their corresponding probabilities, allowing it to handle complex data distributions and overlapping clusters.

A. Gaussian Mixture Models (GMMs) are used for various tasks:

1. Clustering: GMM identifies underlying clusters in data, accommodating non-spherical clusters and overlapping patterns.

2. Density Estimation: GMM estimates the underlying probability density function of data.

3. Anomaly Detection: It can detect anomalies as data points with low probability under the fitted GMM.

4. Feature Extraction: The component membership probabilities produced by a GMM can serve as a compact, lower-dimensional representation of each data point.

A. Gaussian mixture models (GMMs) are useful for clustering multimodal data, including data with overlapping clusters. They are best suited for continuous data but can also be used with discrete data. Consider using a GMM if your data has multiple peaks in its value distribution. The number of components still has to be specified, so if you’re unsure of the number of clusters present, fit models with different numbers of components and compare them using a criterion such as BIC.

Why do the clusters formed by k-means and GMM look similar to me? I don't see how k-means is making circular clusters. Can you please explain?

Look closely at the two clusters in the center (blue and black). The GMM model is able to separate the points correctly. The k-means model, on the other hand, has divided the points in such a manner that half of the blue points are from one cluster while the rest are from another cluster.