This article was published as a part of the Data Science Blogathon

Introduction

We have all heard about ResNets for image recognition, and many of us feel that ResNets can be intimidating at first. The architecture of a ResNet looks huge and complicated, but once you understand the core concept behind it, you can do wonders with it.

In this blog we are going to look at the following points:

- What are ResNets?

- What are the different types of ResNets?

- How to code a ResNet in TensorFlow?

What are ResNets and their Types?

ResNet is short for Residual Network. It is a special type of Convolutional Neural Network (CNN) that is used for tasks like image recognition. ResNet was first introduced in 2015 by Kaiming He, Xiangyu Zhang, Shaoqing Ren, and Jian Sun in their paper “Deep Residual Learning for Image Recognition”.

Different types of ResNets can be built based on the depth of the network, such as ResNet-50 or ResNet-152. The number at the end of the name specifies the number of layers in the network, that is, how deep the network is. We can design a ResNet of any depth using the basic building blocks that we will look at below:

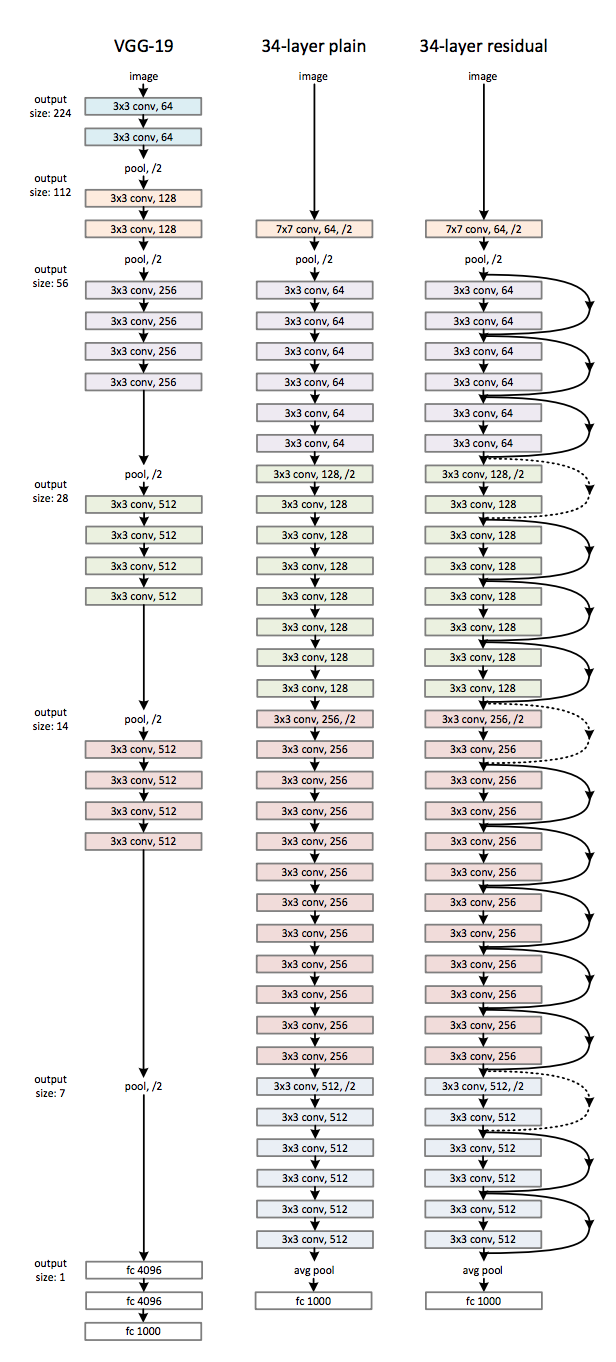
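The link between the name and the depth can be checked with a little arithmetic. The sketch below assumes the stage layouts from the original ResNet paper (two 3x3 convolutions per block for ResNet-18/34, three convolutions per bottleneck block for ResNet-50 and deeper) and counts only the stem convolution, the block convolutions, and the final fully connected layer; the 1x1 shortcut convolutions are not counted. The helper name `resnet_depth` is made up for illustration.

```python
# Illustrative sketch: count the weighted layers implied by a ResNet name.
# `resnet_depth` is a hypothetical helper, not part of any library.

def resnet_depth(blocks_per_stage, convs_per_block):
    """Return 1 (stem conv) + all block convs + 1 (final dense layer)."""
    return 1 + convs_per_block * sum(blocks_per_stage) + 1

# name -> (blocks per stage, convolutions per block)
CONFIGS = {
    "ResNet-18": ([2, 2, 2, 2], 2),
    "ResNet-34": ([3, 4, 6, 3], 2),
    "ResNet-50": ([3, 4, 6, 3], 3),
    "ResNet-101": ([3, 4, 23, 3], 3),
    "ResNet-152": ([3, 8, 36, 3], 3),
}

for name, (blocks, convs) in CONFIGS.items():
    print(name, "->", resnet_depth(blocks, convs), "layers")
```

For ResNet-34 this gives 1 + 2 * (3 + 4 + 6 + 3) + 1 = 34, which matches the number in the name.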

A ResNet can be seen as an upgraded version of the VGG architecture, the difference between them being the skip connections used in ResNets. In the figure below, we can see the architecture of VGG as well as the 34-layer ResNet.

Now you might be wondering why we use a skip connection and what purpose it serves. In earlier CNN architectures, it was observed that the performance of the model started dropping as more and more layers were added to the network. This is commonly attributed to the vanishing gradient problem, which becomes more and more significant as we go deeper into a network. The solution to this problem was the skip connection. To know more about skip connections and the math behind them, you can refer to this paper.

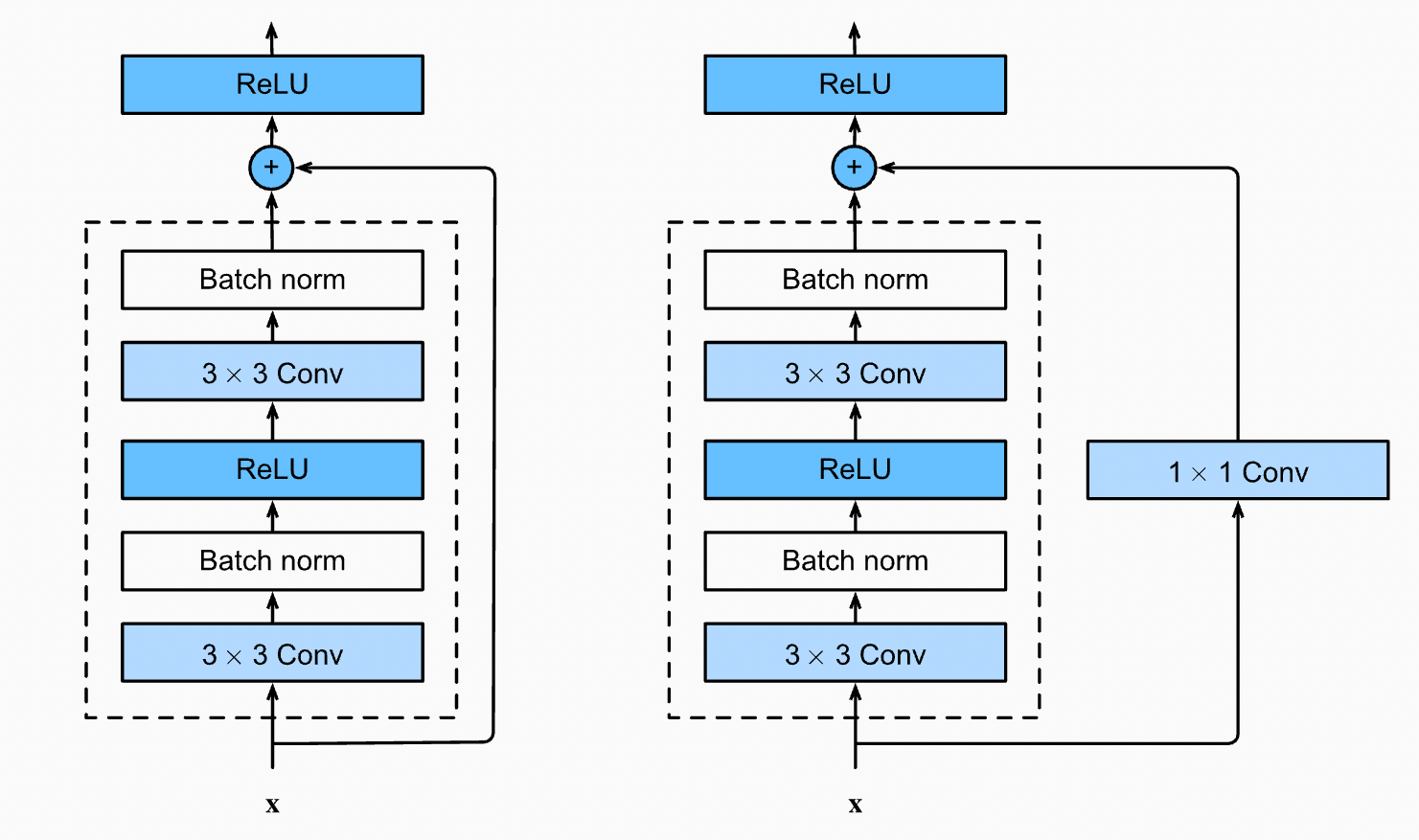

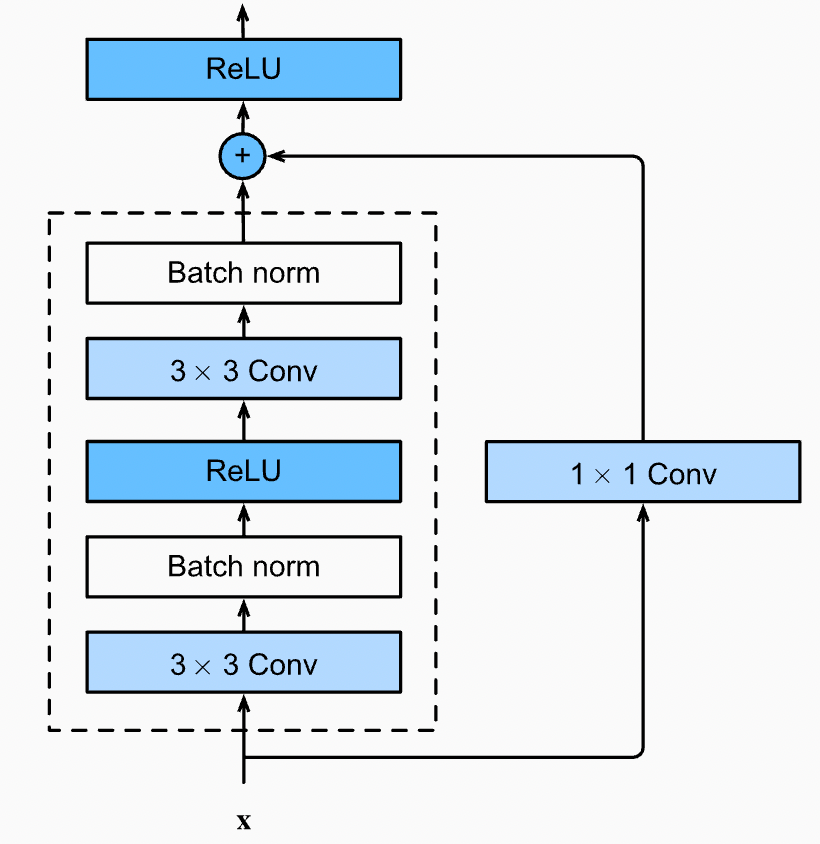

Figure 2 shows how a skip connection works: the input bypasses a few layers and is then added to their output. This lets the network effectively skip layers that are hurting the performance of the model, which allows us to go deeper without facing the vanishing gradient problem.

Figure 2 also shows two types of skip connections: the block on the left is called an Identity block, and the block on the right is called a Bottleneck / Convolutional block. The difference between the two is that the Identity block adds the residue directly to the output, whereas the Convolutional block performs a convolution followed by Batch Normalisation on the residue before adding it to the output.
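To make the idea concrete, here is a toy sketch of a skip connection on plain Python lists. The `layers` argument is a hypothetical stand-in for the convolution and batch-normalisation stack F(x); it is not a real Keras layer, and the numbers are chosen only for illustration.

```python
# Toy illustration of a skip connection: output = relu(F(x) + x).

def relu(values):
    return [max(0.0, v) for v in values]

def residual_block(x, layers):
    """Apply the stacked `layers` function F, add the input back, then ReLU."""
    fx = layers(x)                            # output of the skipped layers
    summed = [a + b for a, b in zip(fx, x)]   # add the skip connection
    return relu(summed)

x = [1.0, 2.0, 3.0]

# If the stacked layers learn to output zeros, the block is an identity map.
zero_layers = lambda v: [0.0 for _ in v]
print(residual_block(x, zero_layers))   # [1.0, 2.0, 3.0]

# Otherwise the layers only have to learn a correction on top of x.
half_layers = lambda v: [0.5 * a for a in v]
print(residual_block(x, half_layers))   # [1.5, 3.0, 4.5]
```

The first call shows why extra layers need not hurt: when F contributes nothing, the (non-negative) input passes through unchanged.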

Identity Block Structure and Code

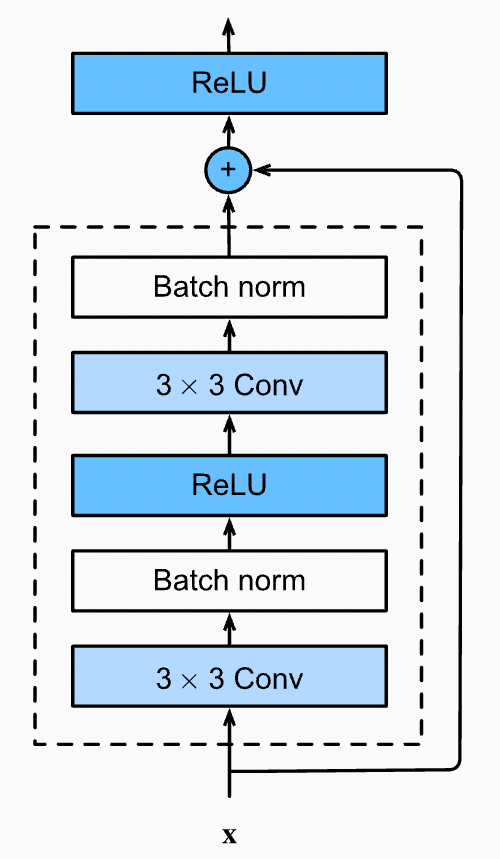

Now, let’s understand this identity block. Every identity block has the following architecture/algorithm (refer to Fig 3.):

Algorithm for Identity Block

X_skip = Input

Convolutional Layer (3X3) (Padding=’same’) (Filters = f) →(Input)

Batch Normalisation →(Input)

Relu Activation →(Input)

Convolutional Layer (3X3) (Padding = ‘same’) (Filters = f) →(Input)

Batch Normalisation →(Input)

Add (Input + X_skip)

Relu Activation

You might wonder why we use padding = ‘same’ for all the convolution layers. The reason is that we have to maintain the shape of our input until we add it to the residue. If the shape of the input changes, the Add layer cannot sum the two tensors and Keras will raise an error about incompatible shapes.

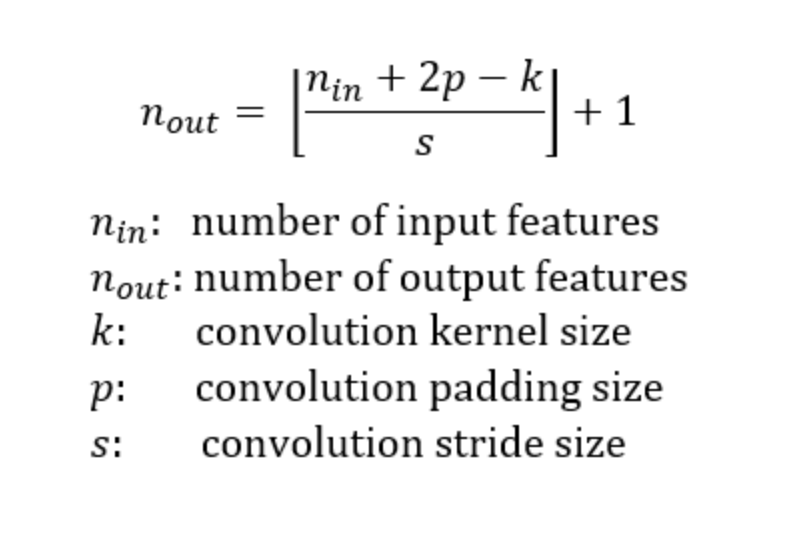

For example, consider an input of size (24 * 24), so the shape of our residue is also (24 * 24). When we apply a (3, 3) kernel to this input without padding, the output shape is (22 * 22), but the shape of our residue is still (24 * 24), which makes it impossible to add them. Note that layers with a (1, 1) conv filter don’t require padding, as a (1 * 1) kernel does not alter the shape of the input. See the formula in Fig 4. for reference.

Fig 4. The formula for Output Size after a Convolution
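The example above can be cross-checked in a few lines of Python. This is a minimal sketch that assumes the standard output-size formula for a convolution, floor((n + 2p - f) / s) + 1, where n is the input size, f the kernel size, p the padding on each side, and s the stride. The function name `conv_output_size` is made up for illustration.

```python
# Minimal sketch of the convolution output-size formula.
# n: input size, f: kernel size, p: padding per side, s: stride.

def conv_output_size(n, f, p=0, s=1):
    return (n + 2 * p - f) // s + 1

print(conv_output_size(24, 3))        # 22: a 3x3 kernel without padding shrinks the input
print(conv_output_size(24, 3, p=1))   # 24: one pixel of padding keeps the shape
print(conv_output_size(24, 1))        # 24: a 1x1 kernel never changes the shape
```

With a 3x3 kernel and stride 1, padding = ‘same’ corresponds to p = 1, which is why the output stays at 24.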

Code for Identity Block

Now let’s code this block in TensorFlow with the help of Keras. To execute this code you will need the following imports:

    import tensorflow as tf
    import numpy as np
    import matplotlib.pyplot as plt

Moving on to the code, the code for the identity block is as shown below:

    def identity_block(x, filter):
        # copy tensor to variable called x_skip
        x_skip = x
        # Layer 1
        x = tf.keras.layers.Conv2D(filter, (3,3), padding = 'same')(x)
        x = tf.keras.layers.BatchNormalization(axis=3)(x)
        x = tf.keras.layers.Activation('relu')(x)
        # Layer 2
        x = tf.keras.layers.Conv2D(filter, (3,3), padding = 'same')(x)
        x = tf.keras.layers.BatchNormalization(axis=3)(x)
        # Add Residue
        x = tf.keras.layers.Add()([x, x_skip])
        x = tf.keras.layers.Activation('relu')(x)
        return x

Code for Identity Block of 34-Layer ResNet

Convolutional Block Structure and Code

Now that we have coded the identity block, let us move on to the convolutional block. The architecture/algorithm for the Convolutional Block is as follows (refer to Fig 5.):

Fig 5. Convolutional Block in a ResNet

Algorithm for Convolutional Block

X_skip = Input

Convolutional Layer (3X3) (Strides = 2) (Filters = f) (Padding = ‘same’) →(Input)

Batch Normalisation →(Input)

Relu Activation →(Input)

Convolutional Layer (3X3) (Filters = f) (Padding = ‘same’) →(Input)

Batch Normalisation →(Input)

Convolutional Layer (1X1) (Filters = f) (Strides = 2) →(X_skip)

Add (Input + X_skip)

Relu Activation

A few points to note about this convolutional block: the residue is not added directly to the output but is first passed through a Convolution Layer, and the strides in these layers are used to reduce the size of the image. As with the Identity block, we have to make sure that the shapes of the Input and the Residue are the same, so let us confirm this with an example. Refer to Fig 4. to cross-check the calculations.

Input Shape = (24, 24), Residue Shape = (24, 24)

After ConvInput1 → Input Shape = (12, 12) *stride = 2

After ConvInput2 → Input Shape = (12, 12)

After ConvResidue1 → Residue Shape = (12, 12) *stride = 2

As we can see, the Input Shape and the Residue Shape end up with the same dimensions, so we can now start coding this block.
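A quick way to cross-check the shapes of the two branches is to apply the Keras padding rules directly. The sketch below assumes the usual conventions: with padding = ‘same’ the output size is ceil(n / stride), and with the default padding = ‘valid’ it is floor((n - kernel) / stride) + 1. The helper names are hypothetical.

```python
import math

# Output size along one spatial dimension under the two Keras padding modes.

def same_padding_size(n, stride):
    return math.ceil(n / stride)

def valid_padding_size(n, kernel, stride):
    return (n - kernel) // stride + 1

n = 24

# Main branch: 3x3 conv with stride 2 ('same'), then 3x3 conv with stride 1 ('same').
main = same_padding_size(n, stride=2)
main = same_padding_size(main, stride=1)

# Skip branch: 1x1 conv with stride 2 and default 'valid' padding.
skip = valid_padding_size(n, kernel=1, stride=2)

print(main, skip)   # 12 12
```

The two expressions also agree for odd input sizes, so the Add layer receives matching shapes whatever the input resolution.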

Code for Convolutional Block

    def convolutional_block(x, filter):
        # copy tensor to variable called x_skip
        x_skip = x
        # Layer 1
        x = tf.keras.layers.Conv2D(filter, (3,3), padding = 'same', strides = (2,2))(x)
        x = tf.keras.layers.BatchNormalization(axis=3)(x)
        x = tf.keras.layers.Activation('relu')(x)
        # Layer 2
        x = tf.keras.layers.Conv2D(filter, (3,3), padding = 'same')(x)
        x = tf.keras.layers.BatchNormalization(axis=3)(x)
        # Processing Residue with conv(1,1)
        x_skip = tf.keras.layers.Conv2D(filter, (1,1), strides = (2,2))(x_skip)
        # Add Residue
        x = tf.keras.layers.Add()([x, x_skip])
        x = tf.keras.layers.Activation('relu')(x)
        return x

Code for Convolutional Block in 34-Layer ResNet

ResNet-34 Structure and Code

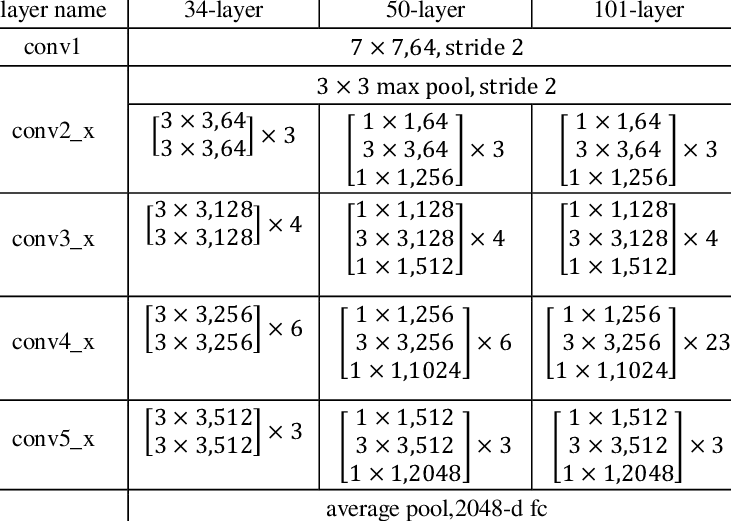

Fig 6. 34-Layer, 50-Layer, 101-Layer ResNet Architecture

Now let us follow the architecture in Fig 6. and build a ResNet-34 model. While coding it, we have to keep in mind that every block in the ResNet except the conv2 block starts with a Convolutional Block, which is followed by Identity Blocks. For example, in the architecture shown in Fig 6. the conv3 block has 4 sub-blocks, so the 1st sub-block is a Convolutional Block and it is followed by 3 Identity Blocks. For reference, you can go back and look at Fig 1., where the solid black lines represent an Identity Block and the dotted black lines represent a Convolutional Block.
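The rule described above can be written out as a small planning function before touching any Keras code. This is only a sketch: `resnet34_plan` is a hypothetical helper, and it assumes the sub-block counts 3, 4, 6, 3 with 64 filters in the first stage and a doubling of the filters at each later stage.

```python
# Sketch: list the block type and filter count of every sub-block in ResNet-34.

def resnet34_plan(block_layers=(3, 4, 6, 3), base_filters=64):
    plan = []
    filters = base_filters
    for stage, n_blocks in enumerate(block_layers):
        if stage == 0:
            # conv2: identity blocks only, no downsampling needed
            plan += [("identity", filters)] * n_blocks
        else:
            # conv3 to conv5: one convolutional block, then identity blocks
            filters *= 2
            plan.append(("convolutional", filters))
            plan += [("identity", filters)] * (n_blocks - 1)
    return plan

plan = resnet34_plan()
for index, (kind, filters) in enumerate(plan, start=1):
    print(index, kind, filters)
```

The plan has 16 sub-blocks in total, of which 3 are Convolutional Blocks (one at the start of conv3, conv4 and conv5).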

We will now combine the Identity and Convolutional Blocks that we coded earlier to build the ResNet-34. So, now let’s code this.

    def ResNet34(shape = (32, 32, 3), classes = 10):
        # Step 1 (Setup Input Layer)
        x_input = tf.keras.layers.Input(shape)
        x = tf.keras.layers.ZeroPadding2D((3, 3))(x_input)
        # Step 2 (Initial Conv layer along with maxPool)
        x = tf.keras.layers.Conv2D(64, kernel_size=7, strides=2, padding='same')(x)
        x = tf.keras.layers.BatchNormalization()(x)
        x = tf.keras.layers.Activation('relu')(x)
        x = tf.keras.layers.MaxPool2D(pool_size=3, strides=2, padding='same')(x)
        # Define the number of sub-blocks per block and the initial filter size
        block_layers = [3, 4, 6, 3]
        filter_size = 64
        # Step 3 Add the ResNet Blocks
        for i in range(4):
            if i == 0:
                # The first block (conv2) needs no Convolutional block
                for j in range(block_layers[i]):
                    x = identity_block(x, filter_size)
            else:
                # One Convolutional block followed by Identity blocks
                # The filter size doubles with every block
                filter_size = filter_size*2
                x = convolutional_block(x, filter_size)
                for j in range(block_layers[i] - 1):
                    x = identity_block(x, filter_size)
        # Step 4 End Dense Network
        x = tf.keras.layers.AveragePooling2D((2,2), padding = 'same')(x)
        x = tf.keras.layers.Flatten()(x)
        x = tf.keras.layers.Dense(512, activation = 'relu')(x)
        x = tf.keras.layers.Dense(classes, activation = 'softmax')(x)
        model = tf.keras.models.Model(inputs = x_input, outputs = x, name = "ResNet34")
        return model

Code for ResNet34 Model

You can now put the code together and run it. Also, have a look at the model summary by calling ‘model.summary()’, which shows the details of all the layers in our architecture. You can also try building different types of ResNets using these basics now!
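The summary also lists a parameter count for each layer, and a few of those numbers are easy to verify by hand. The sketch below assumes the Keras defaults (a bias term in Conv2D and Dense, and four values per channel in BatchNormalization: gamma, beta, moving mean and moving variance). The helper names are hypothetical.

```python
# Sketch: expected parameter counts for a few layers of the model above.

def conv2d_params(kernel, in_channels, filters, use_bias=True):
    weights = kernel * kernel * in_channels * filters
    return weights + (filters if use_bias else 0)

def batchnorm_params(channels):
    return 4 * channels

def dense_params(inputs, units):
    return inputs * units + units

print(conv2d_params(7, 3, 64))    # 9472: first 7x7 conv on an RGB image
print(batchnorm_params(64))       # 256: batch normalisation after it
print(conv2d_params(3, 64, 64))   # 36928: a 3x3 conv inside the first identity block
print(dense_params(512, 10))      # 5130: final softmax layer for 10 classes
```

If a number in your own summary differs, the layer's kernel size, input channels, or filter count is probably not what you intended.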

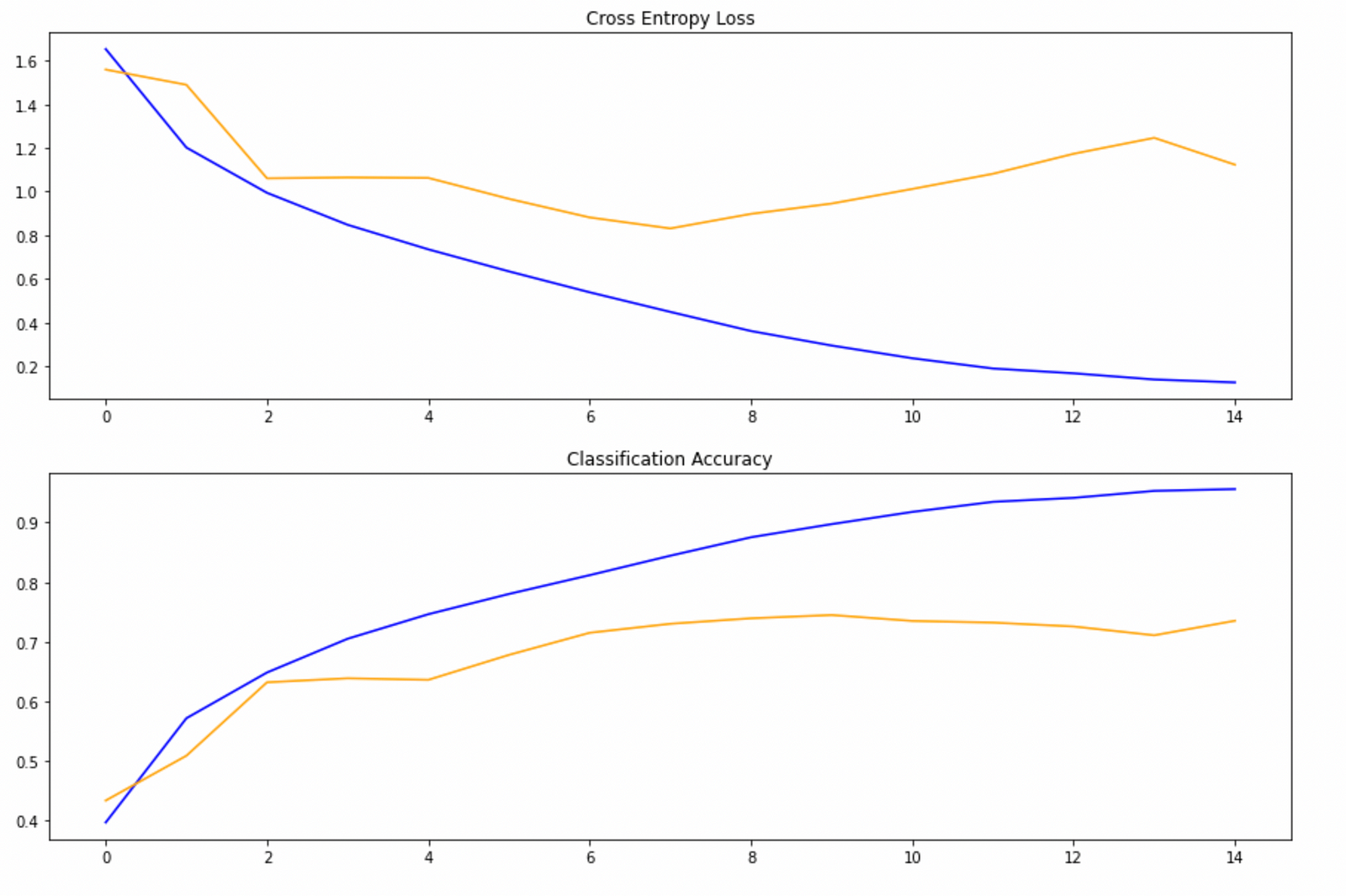

I trained this model on the CIFAR-10 dataset for 15 epochs without any image augmentation, and the results I obtained are as follows. If you are interested in the code I used, you can visit this Jupyter Notebook on Kaggle.

Conclusion

In this blog, we looked at what ResNets are, what the building blocks of a ResNet are, and how to code a ResNet from scratch. I hope you enjoyed reading it and that it helped you understand how ResNets work. If you enjoyed this blog, do share it with your mates. If you have any doubts or face difficulties implementing the code, you can drop a comment below or contact me by mail. Do connect with me on LinkedIn. Happy learning to everyone!

Email ID: [email protected] || LinkedIn
