This article was published as a part of the Data Science Blogathon

Table of Contents

- Introduction

- CurlWget Extension

- Uploading data on Colab from any Website

- Dealing with various file formats in Colaboratory

- Save and reuse files without wasting the internet

- If files are shared with you on gdrive

- Conclusion

- About the Author

Introduction

Google Colaboratory (Colab) is one of the most widely used web-based Jupyter notebook environments for running machine learning and deep learning models on CPUs, GPUs, and TPUs.

If you are new to data science and deep learning, consider migrating from Jupyter notebooks running on your local device to Google Colab notebooks.

There are many advantages to using Colab, and plenty of tricks for customizing a notebook to your needs. But Colab notebooks have one significant disadvantage compared with local Jupyter notebooks.

Because Google Colaboratory is a web-based notebook environment, you need to upload data from your local device to the server, and uploading data to Colab is relatively slow.

In data science, datasets typically range from 100 MB to a few GB, and uploading files of that size to Google Colaboratory takes a long time.

In this blog, I will share a practical solution to this problem that lets you load even a 10 GB dataset into Colab in a fraction of the time a manual upload takes.

To do so, you need the CurlWget extension, which is the key to solving this problem. It makes it easy to load data into Colab directly from anywhere you can download it in the browser.

CurlWget Extension

Since Google Colab is hosted on Linux-based servers, we can use basic Linux commands there. CurlWget is a small browser plugin that builds a ‘curl’ or ‘wget’ command-line string for a download, which you can copy and paste into a console-only session such as Google Colab.

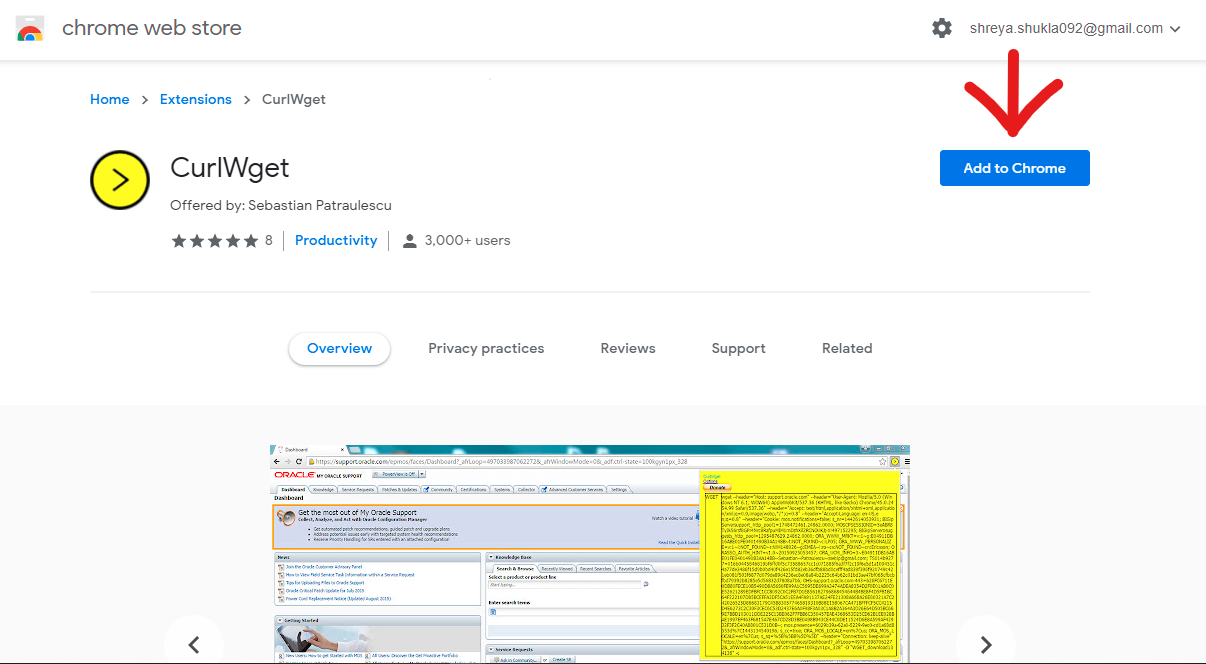

1. Click here to navigate to the extension page and add the extension to Chrome.

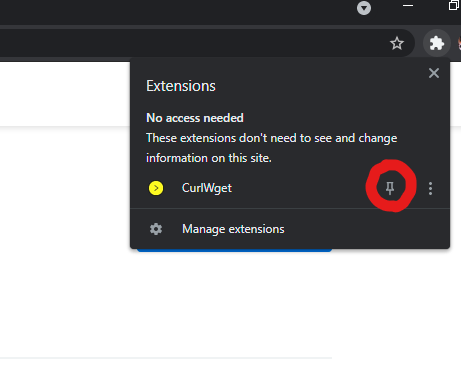

2. Pin the extension so that it is accessible from the toolbar in the later steps.

3. You are now ready for the next steps.

Without the extension, you would first have to download the dataset to your local system and then upload it to Colab, which costs a lot of time. Setting up data this way takes time and energy before any model building can start.

Uploading data on Colab from any Website

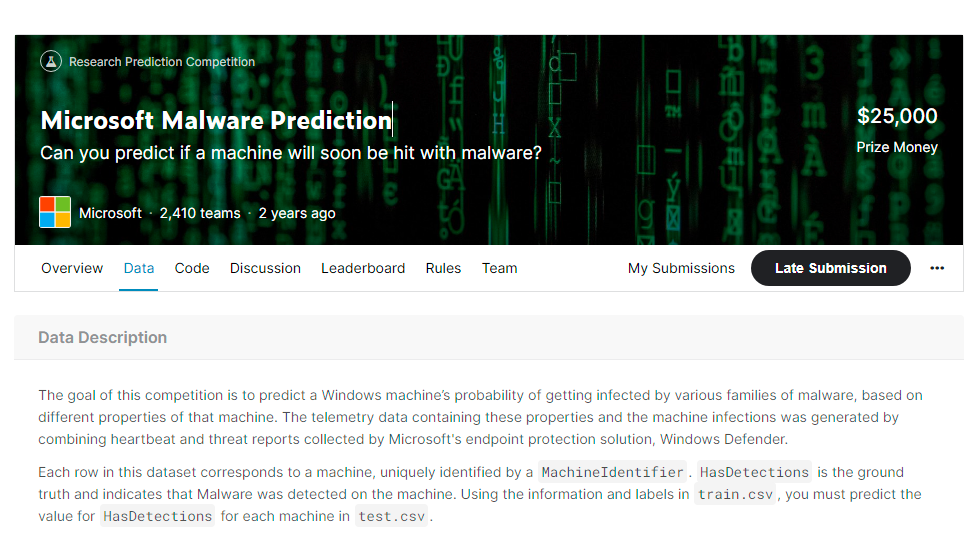

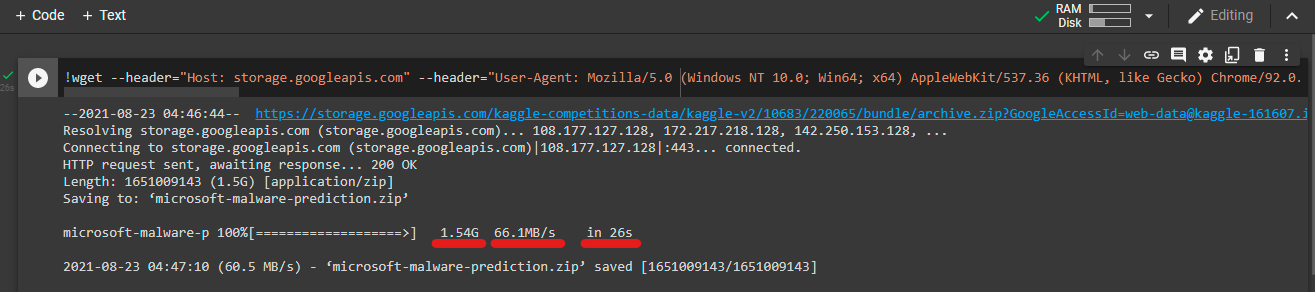

1. I will be using the Microsoft Malware dataset, which is 1.5 GB in size; if you want to know more about the dataset, click here. You will be taken directly to the data page; scroll down and click on ‘Download All’ to get the complete dataset. You can also choose any other dataset you want to load and follow along.

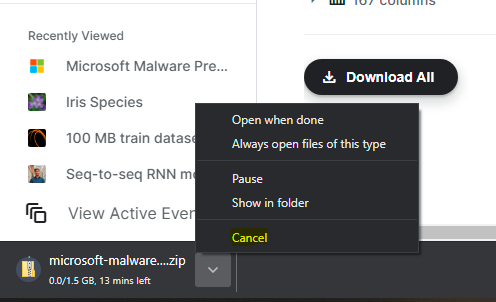

2. You will see the download start; cancel it. Yes, you read that right: cancel the download. The extension only needs the download request, not the downloaded file.

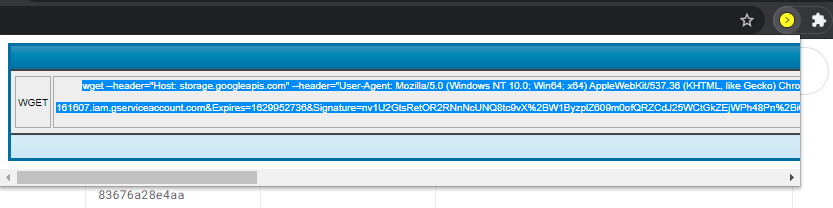

3. After canceling the download, click on the CurlWget extension you pinned earlier. It will show the generated command. Click inside the grey box to select all the text automatically, then copy it by pressing Ctrl+C.

4. Go to your Colab notebook, add an empty code cell, type ‘!’, paste the text you copied from the extension immediately after it (with no space between the ‘!’ and the pasted text), and run the cell.

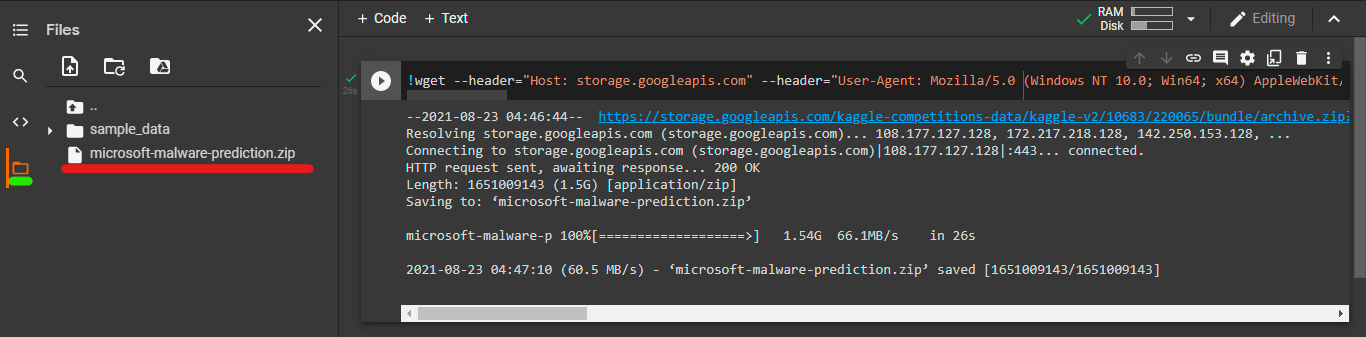

5. That’s it: the file/folder has been downloaded directly to the disk storage of Google Colab, in just 26 seconds in this example.
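The command pasted in step 4 tells Colab's server to fetch the file itself, using the URL, cookies, and headers captured from your browser. As a rough illustration of the same idea in Python, the sketch below streams a URL straight to disk with the standard library. It is not what CurlWget generates; the function name `download_to_disk` and its parameters are hypothetical, and any headers you pass would have to come from your own download request.

```python
import shutil
import urllib.request
from pathlib import Path


def download_to_disk(url, dest, headers=None, chunk_size=1024 * 1024):
    """Stream `url` into the file `dest` without holding it all in memory.

    `headers` is an optional dict of request headers (for example the
    Cookie and User-Agent values captured for the download request).
    Returns the number of bytes written.
    """
    request = urllib.request.Request(url, headers=headers or {})
    dest = Path(dest)
    dest.parent.mkdir(parents=True, exist_ok=True)
    # Copy the response to disk chunk by chunk.
    with urllib.request.urlopen(request) as response, open(dest, "wb") as out:
        shutil.copyfileobj(response, out, length=chunk_size)
    return dest.stat().st_size
```

In a Colab cell this might be called as `download_to_disk(url, "/content/data.zip", headers={"Cookie": cookie_value})`, where `url` and `cookie_value` stand in for values from your own request. For most cases the pasted ‘wget’ command remains the simpler option.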

Datasets that are gigabytes in size can load at speeds of a few hundred MB/s and consume only a few MB of your own internet data, because the transfer happens between the website and Colab’s servers. You can load data of any size, as long as you do not exceed the limited disk storage provided by Colab.
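Since the disk provided by Colab is limited, it can help to check the free space before starting a large download. The following is a minimal sketch using Python's standard library; the helper names and the 10% safety margin are illustrative assumptions, not Colab settings.

```python
import shutil


def free_space_gb(path="/"):
    """Return the free disk space, in GB, on the filesystem containing `path`."""
    return shutil.disk_usage(path).free / 1e9


def fits_on_disk(size_bytes, path="/", safety_margin=0.1):
    """Return True if `size_bytes` fits while keeping `safety_margin` of the free space unused."""
    free = shutil.disk_usage(path).free
    return size_bytes <= free * (1 - safety_margin)
```

For example, `fits_on_disk(1.5e9, "/content")` would check whether a 1.5 GB download fits on the disk that holds Colab's working directory. Remember that a zip archive also needs room for its extracted contents.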

Dealing with various file formats in Colaboratory

There are different methods for loading data from other sources and in various file types, but this method works the same way for any data that can be downloaded through a browser. Now let’s see how to read and use the file you loaded.

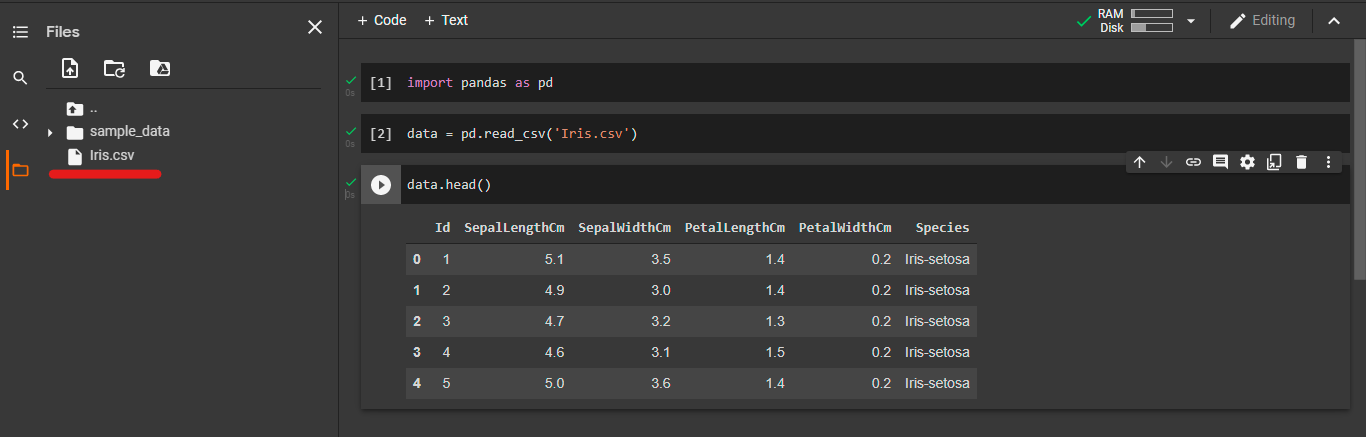

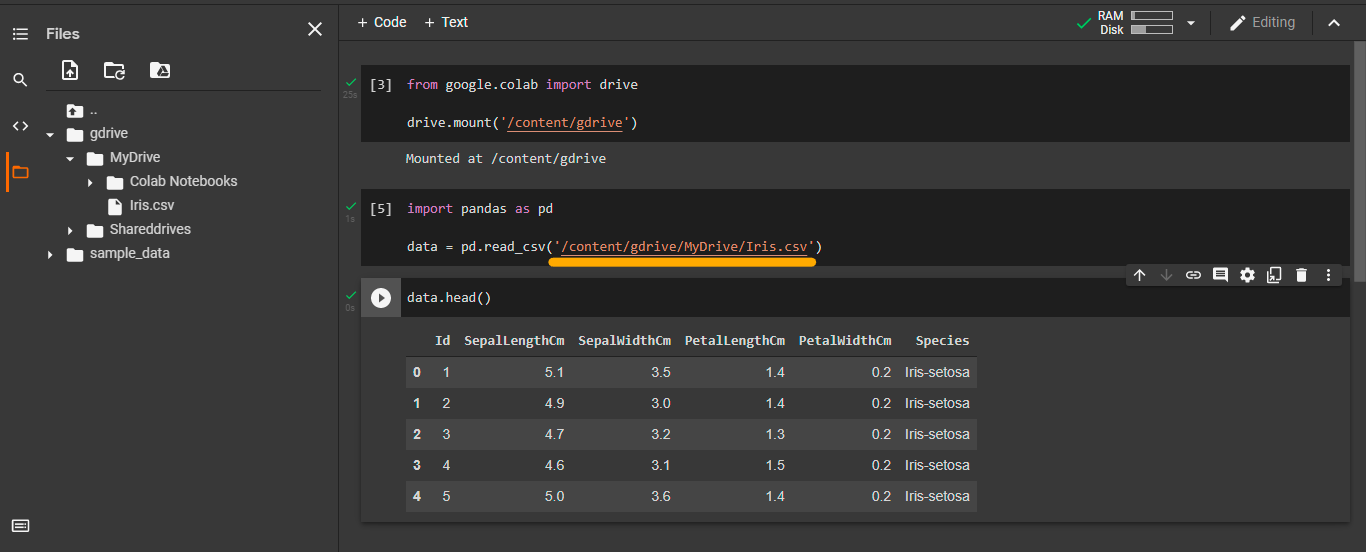

import pandas as pd

data = pd.read_csv('filename.csv')

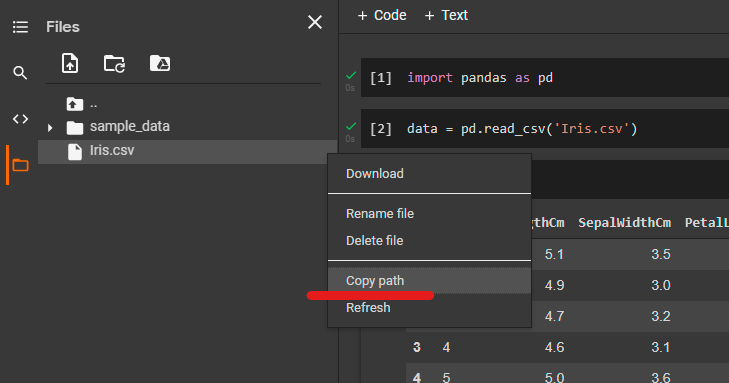

1. If the file is in CSV format, you can read it directly using pandas, as shown above.

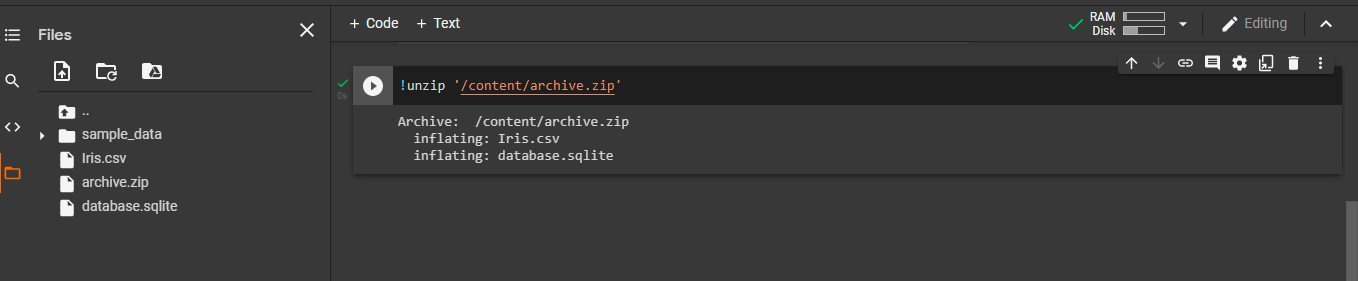

!unzip 'filepath'

2. If the file is in zip format, you first have to unzip it. Use the command above, replacing 'filepath' with the path of the zip file.

You can copy the path of a file from the Files panel: hover over the file, click the three dots that appear, choose ‘Copy path’, and paste it with Ctrl+V wherever you need it.

Similarly, you can extract tar and rar archives with the tar and unrar commands, respectively.
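If you prefer to stay in Python rather than use shell commands, zip and tar archives can also be extracted with the standard library. The sketch below is one way to do it; `extract_archive` is a hypothetical helper name, and rar files are not covered because the standard library cannot read them.

```python
import shutil
from pathlib import Path


def extract_archive(archive_path, dest_dir):
    """Unpack a zip or tar archive into `dest_dir` and return the extracted files."""
    dest = Path(dest_dir)
    dest.mkdir(parents=True, exist_ok=True)
    # The archive format is inferred from the file extension (.zip, .tar, .tar.gz, ...).
    shutil.unpack_archive(str(archive_path), str(dest))
    return sorted(p for p in dest.rglob("*") if p.is_file())
```

The returned list makes it easy to find the extracted CSV and pass its path to `pd.read_csv`.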

Save and reuse files without wasting the internet

In general, when we work on machine learning and deep learning models, we have to preprocess the raw data before using it in the model, and we often need to save the preprocessed data for later use. One way to save it is to download it from Colab to your local computer, but downloading from Google Colab is also very slow and consumes a lot of internet data. To avoid this problem, mount Google Drive (gdrive) in Colab and transfer the file directly to it, so that you can use it whenever you need it.

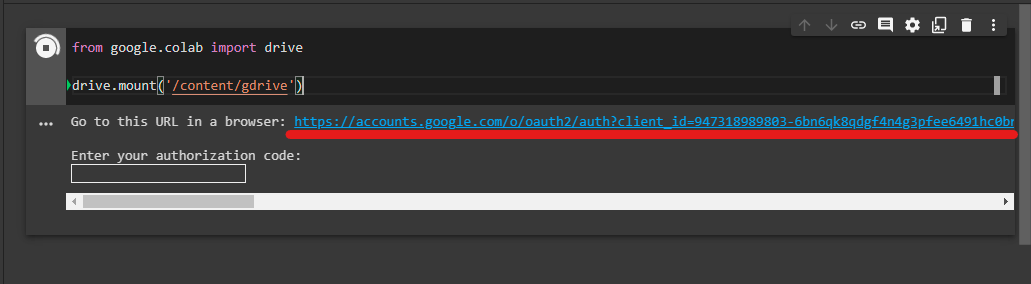

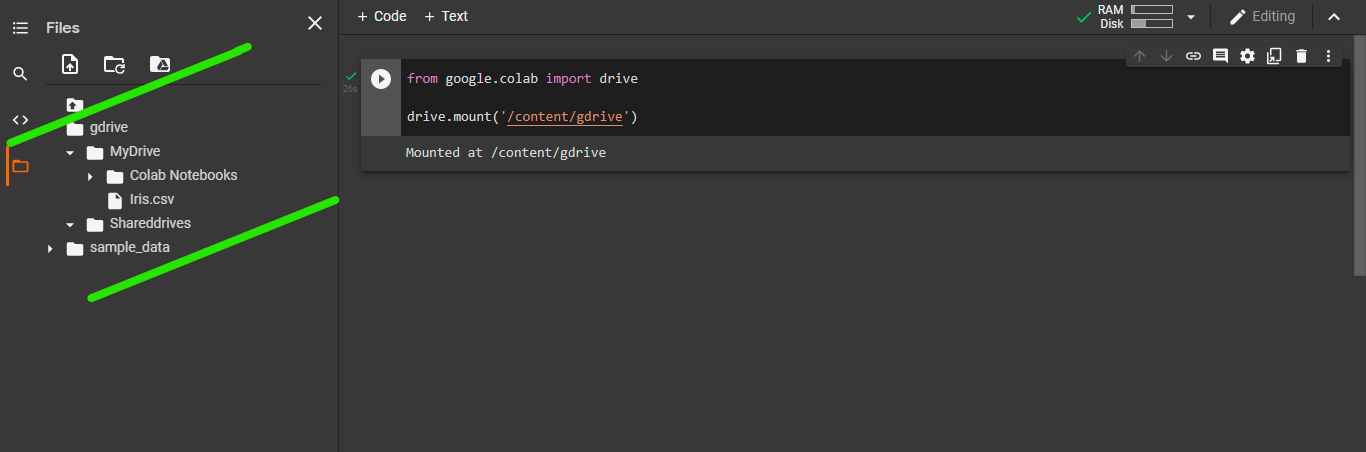

from google.colab import drive

drive.mount('/content/gdrive')

1. Use the code above to mount gdrive.

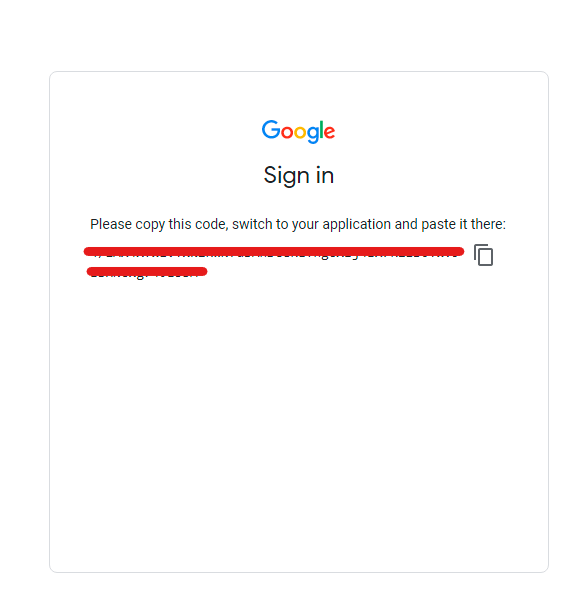

2. Colab requires you to authenticate; click on the link provided under the code cell. It will direct you to an authorization code; copy it, paste it into the box, and press Enter.

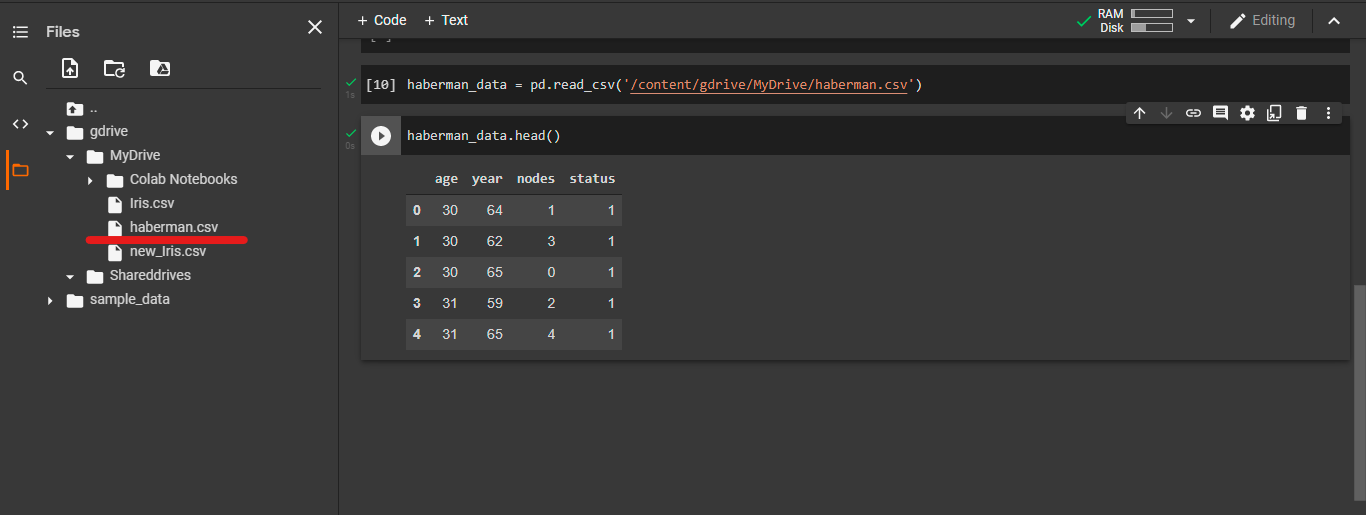

3. All the files and folders of your gdrive are now accessible from Colab under the mount point.

4. Click on the folder icon; you will see all the files present in your gdrive.

5. Copy the path of the file you want to use and read it with the appropriate library.

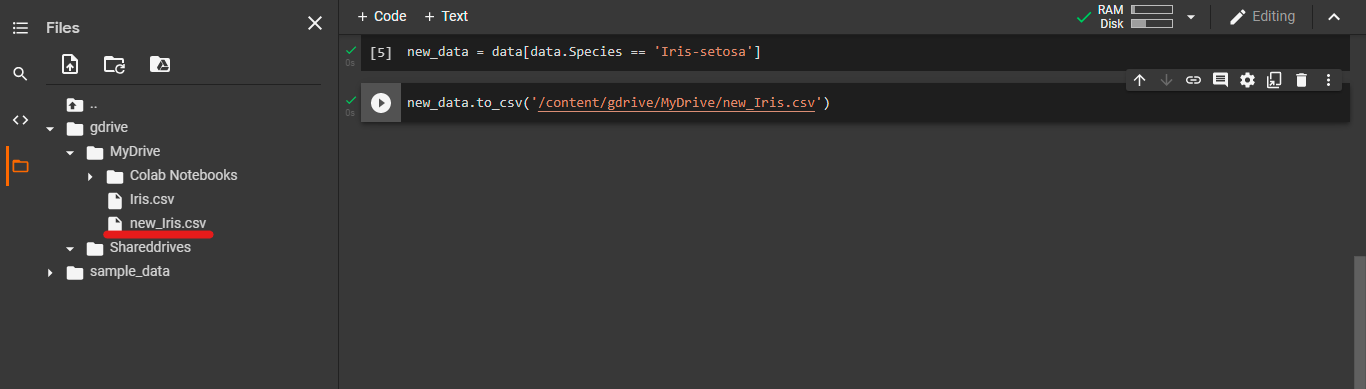

After you change a data file or create new files for your project, save them directly to gdrive from Colab.

1. Use a library appropriate to your file type to write the file to disk.

2. Choose the path of the gdrive folder where you want to save the file, append the file name to it, and run the cell. The file is written directly to gdrive.

Note: If you have already mounted gdrive in Google Colab and you make changes in gdrive, they are reflected in Colab automatically; you do not need to mount again.

Likewise, changes you make to gdrive from Colab are synced to gdrive automatically.
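If the preprocessed file already exists on Colab's disk, copying it into the mounted gdrive folder is enough to save it. The sketch below shows this with the standard library; `save_to_drive` is a hypothetical helper, and the example paths assume gdrive is mounted at '/content/gdrive' as in the code above.

```python
import shutil
from pathlib import Path


def save_to_drive(local_file, drive_dir):
    """Copy `local_file` into `drive_dir` (created if missing) and return the new path."""
    target_dir = Path(drive_dir)
    target_dir.mkdir(parents=True, exist_ok=True)
    # copy2 also preserves the file's modification time.
    return Path(shutil.copy2(local_file, target_dir))
```

A call such as `save_to_drive("/content/clean.csv", "/content/gdrive/MyDrive/project")` would then place the file in a `project` folder on gdrive. The `MyDrive` folder name is an assumption; copy the actual path from the Files panel to be sure.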

If files are shared with you on gdrive

If a colleague or friend has shared a Google Drive link containing data files required for your project, you can use those files directly in Google Colab without downloading them to your local system.

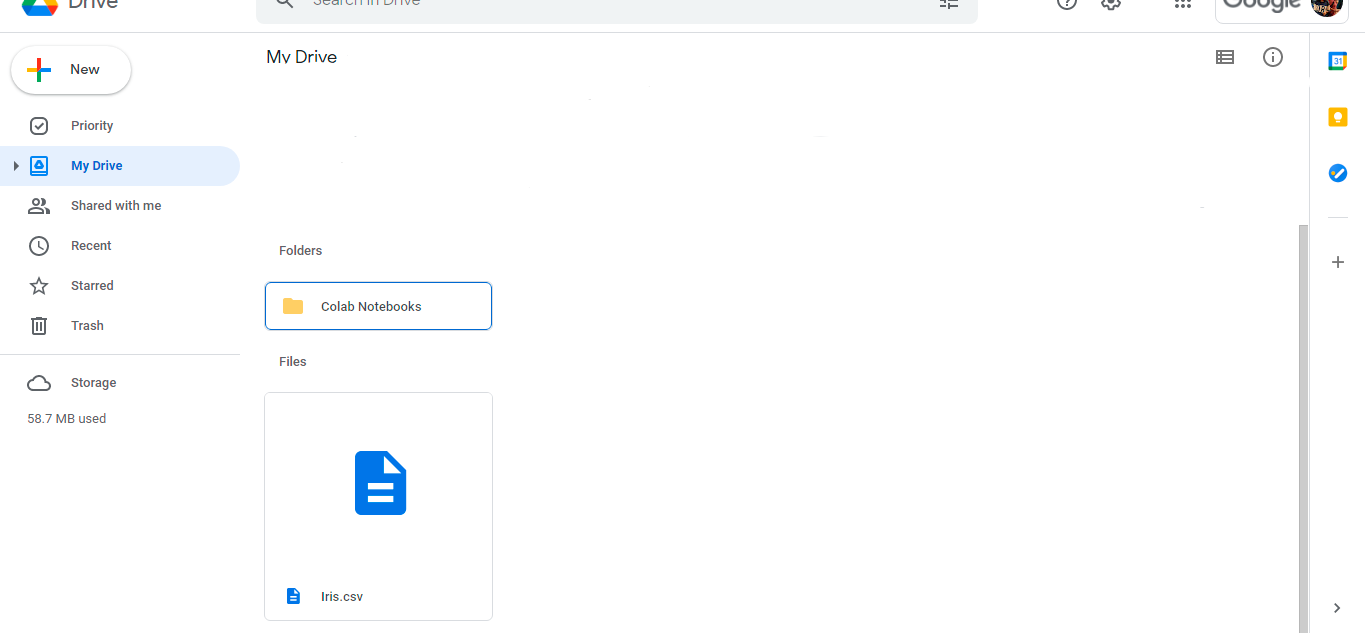

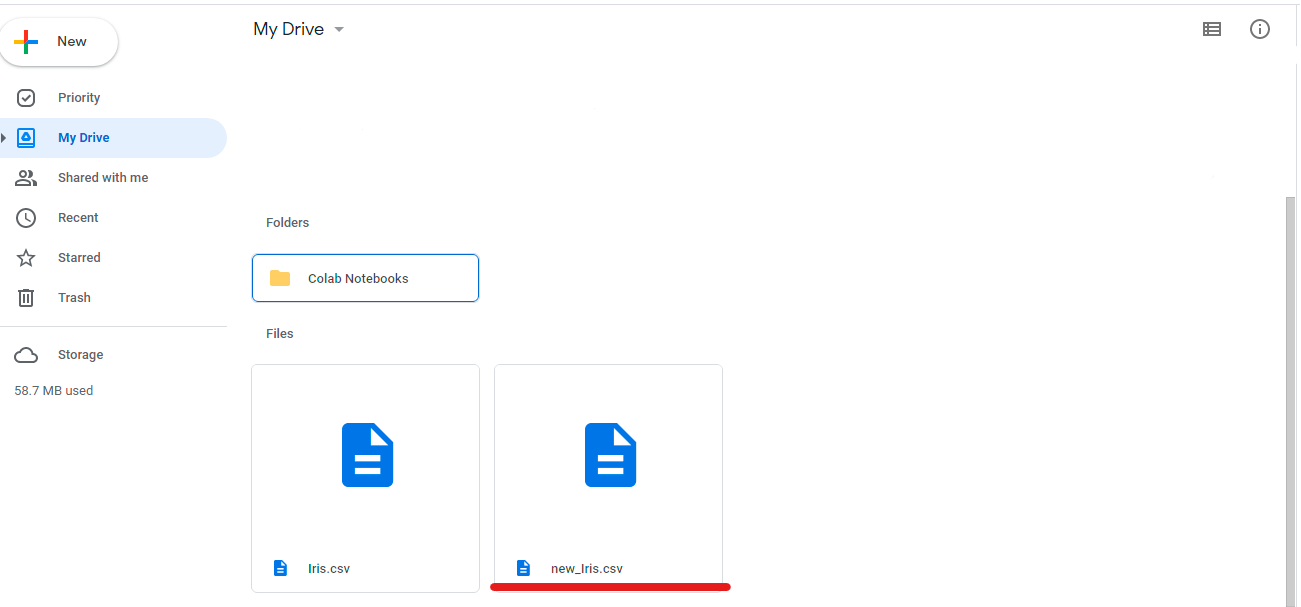

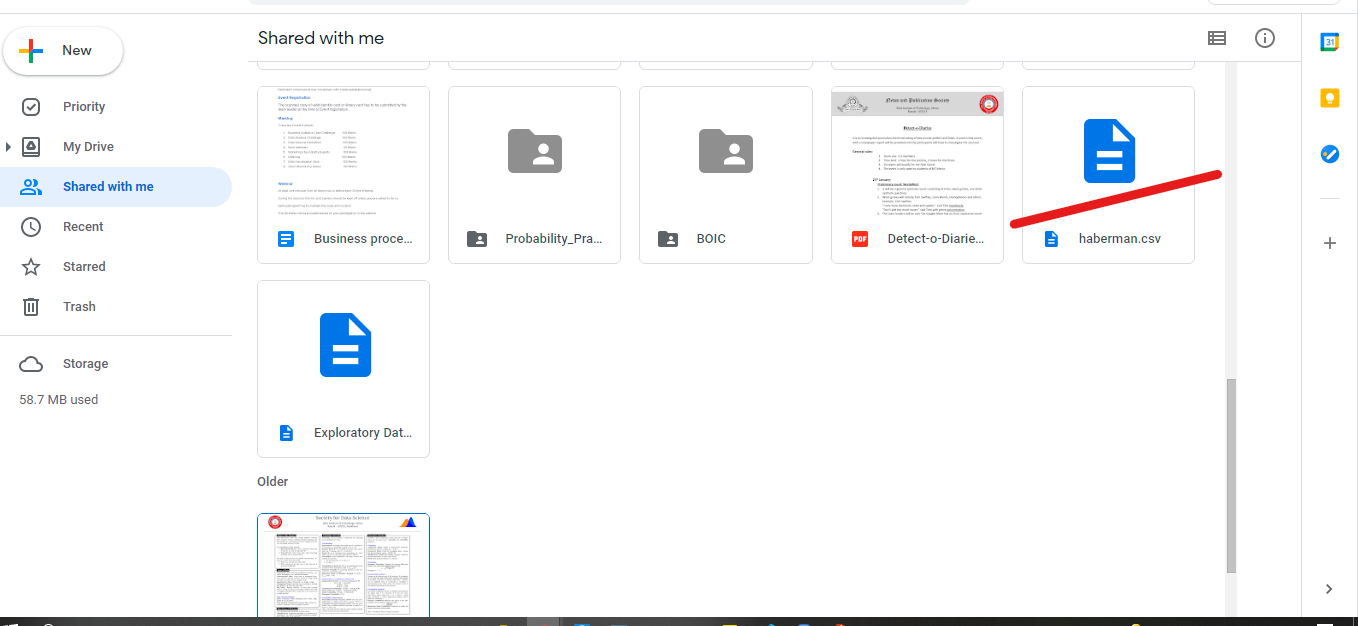

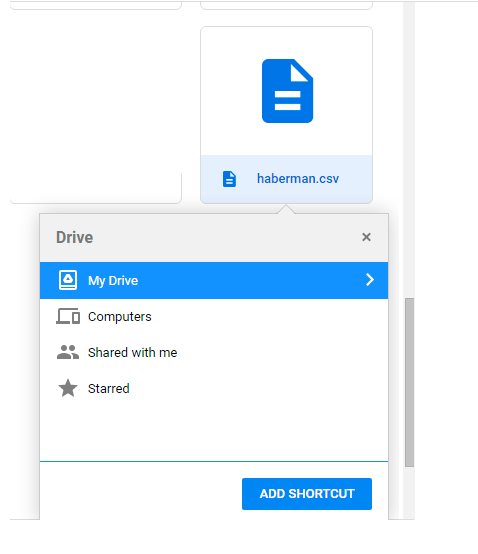

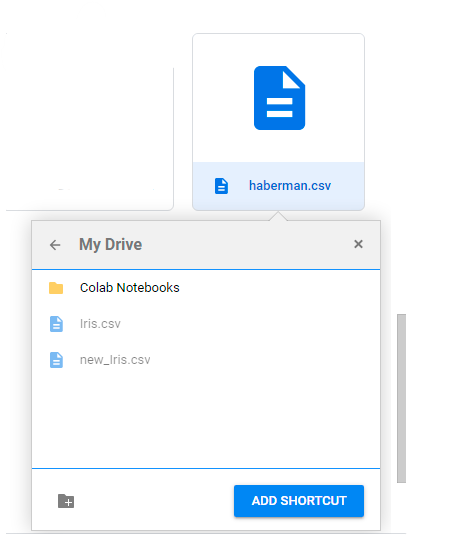

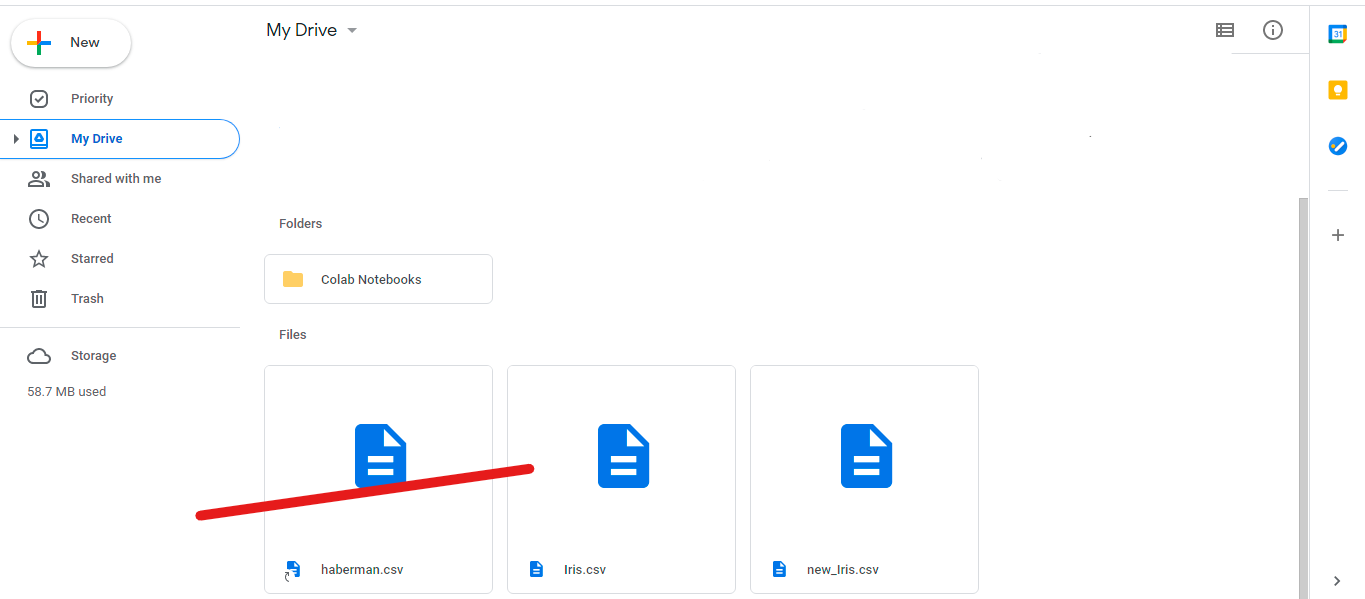

As you might have noticed, mounting Drive in Google Colab only exposes files and folders from the My Drive folder. To use files/folders from Shared with me, you must first add them to your My Drive. Follow the steps below to add a Shared with me file to the My Drive folder.

1. Select the file/folder and press Shift+Z on the keyboard.

2. Select the folder in your My Drive where you want the shortcut to your file/folder to appear and click on ADD SHORTCUT.

3. The file/folder is now added to your My Drive; you can also see the change in Google Colab.
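To confirm from a notebook that the shared files are now visible, you can list what sits under the mounted folder. This is a minimal sketch, assuming the shortcut appears as a regular folder under the mount point; `list_files` is a hypothetical helper name.

```python
from pathlib import Path


def list_files(root, pattern="*"):
    """Return (relative path, size in bytes) for every file under `root` matching `pattern`."""
    root = Path(root)
    return sorted(
        (p.relative_to(root).as_posix(), p.stat().st_size)
        for p in root.rglob(pattern)
        if p.is_file()
    )
```

For instance, `list_files("/content/gdrive/MyDrive", "*.csv")` would list the CSV files reachable from My Drive along with their sizes, where the path is again an assumption based on the mount point used earlier.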

Conclusion

You may have encountered various methods for getting data into Google Colab, such as API links, URLs, or simply uploading files. The method described here works for any data, in any format, that can be downloaded to your local system through the browser. Instead of making data upload to Colab a cumbersome process, use CurlWget. Once the data is loaded through CurlWget, store the preprocessed data on gdrive from Colab to avoid preprocessing it again and again.

I hope you found this blog helpful, and may it make it easier for you to deal with large datasets.

About the Author

I am Shreya Shukla, currently in my 3rd year at BIT, Mesra. Connect with me on LinkedIn, and do leave a message if you are interested in more hacks like this or if you want to learn about data science and machine learning.

The media shown in this article on CurlWget Extension are not owned by Analytics Vidhya and are used at the Author’s discretion.