How to Use Yolo v5 Object Detection Algorithm for Custom Object Detection

This article was published as a part of the Data Science Blogathon

Introduction

In this article, I will explain how to use the YOLOv5 algorithm to detect and classify more than 60 types of road traffic signs. We will start from the very basics and cover each step: dataset preparation, training, and testing. We will use a Windows 10 machine throughout.

Let’s talk more about YOLO and its Architecture.

YOLO is an acronym for You Only Look Once. We are using version 5, which Ultralytics released in June 2020 and which was among the most advanced object detection algorithms available at the time of writing. It is a convolutional neural network (CNN) that detects objects in real time with high accuracy. The approach uses a single neural network to process the entire image: it divides the image into regions and predicts bounding boxes and class probabilities for each region. These bounding boxes are weighted by the predicted probabilities. The method “looks only once” at the image in the sense that it makes its predictions after a single forward pass through the network. It then returns the detected objects after non-max suppression, which removes overlapping duplicate boxes so that each object is reported only once.
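To make the non-max suppression step concrete, here is a minimal sketch in plain Python. It is a simplified, class-agnostic version written for illustration, not the implementation used inside YOLOv5; the function names and the 0.45 overlap threshold are assumptions chosen for the example.

```python
from typing import List, Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2) corner coordinates


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(boxes: List[Box], scores: List[float],
                        iou_threshold: float = 0.45) -> List[int]:
    """Greedy NMS: return the indices of the boxes to keep, best score first."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep: List[int] = []
    for i in order:
        # Keep a box only if it does not overlap too much with a better one.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


# Two heavily overlapping boxes around one sign, plus a separate box.
boxes = [(10, 10, 110, 110), (12, 12, 112, 112), (200, 200, 260, 260)]
scores = [0.9, 0.8, 0.7]
print(non_max_suppression(boxes, scores))
```

In this example the second box overlaps the first almost completely, so only the higher-scoring one survives together with the separate third box.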

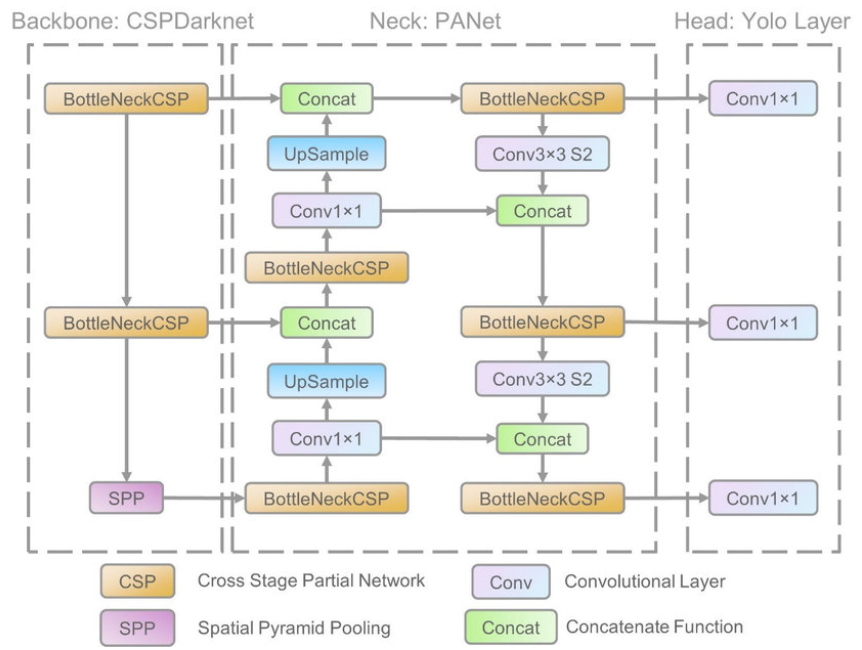

Its architecture consists of three main parts:

1. Backbone: The model backbone is mainly used to extract key features from an input image. YOLO v5 uses CSP (Cross Stage Partial) networks as its backbone to extract rich, informative features from the input image.

2. Neck: The Model Neck is mostly used to create feature pyramids. Feature pyramids aid models in generalizing successfully when it comes to object scaling. It aids in the identification of the same object in various sizes and scales.

Feature pyramids also help models perform well on previously unseen data. Several feature pyramid techniques exist, such as FPN, BiFPN, and PANet.

PANet is used as a neck in YOLO v5 to get feature pyramids.

3. Head: The model Head is mostly responsible for the final detection step. It uses anchor boxes to construct final output vectors with class probabilities, objectness scores, and bounding boxes.
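The size of the head's output vectors can be worked out with a little arithmetic. The sketch below assumes the common YOLOv5 setup of a 640x640 input, three detection scales with strides 8, 16, and 32, and three anchor boxes per grid cell; these defaults and the function name are assumptions for illustration, so check your own model configuration before relying on the numbers.

```python
def head_output_shapes(num_classes, image_size=640,
                       strides=(8, 16, 32), anchors_per_scale=3):
    """Return one (anchors, grid_h, grid_w, values) tuple per detection scale."""
    # Each anchor predicts 4 box values + 1 objectness score + class scores.
    values_per_anchor = 5 + num_classes
    shapes = []
    for stride in strides:
        grid = image_size // stride  # number of grid cells along each side
        shapes.append((anchors_per_scale, grid, grid, values_per_anchor))
    return shapes


shapes = head_output_shapes(num_classes=77)
total_predictions = sum(a * h * w for a, h, w, _ in shapes)

for shape in shapes:
    print(shape)
print("candidate boxes before non-max suppression:", total_predictions)
```

Under these assumptions, a 77-class model produces 82 values per anchor, and the network proposes many thousands of candidate boxes per image, which is why the filtering step after the head matters.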

Advantages & Disadvantages of Yolo v5

- It is about 89% smaller than YOLOv4 (27 MB vs 244 MB)

- It is about 180% faster than YOLOv4 (140 FPS vs 50 FPS)

- It is roughly as accurate as YOLOv4 on the same task (0.895 mAP vs 0.892 mAP)

- The main drawback is that no official paper was released for YOLOv5, unlike earlier YOLO versions. YOLO v5 is also still under active development and receives frequent updates from Ultralytics, so some settings may change in the future.
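The size and speed percentages in the list above follow directly from the quoted figures. As a quick check, here is a small sketch that recomputes them; the helper names are made up for this example.

```python
def percent_smaller(new_size, old_size):
    """How much smaller new_size is than old_size, as a percentage."""
    return (old_size - new_size) / old_size * 100


def percent_faster(new_fps, old_fps):
    """How much faster new_fps is than old_fps, as a percentage."""
    return (new_fps - old_fps) / old_fps * 100


# Figures quoted above: 27 MB vs 244 MB, and 140 FPS vs 50 FPS.
print(f"size reduction: {percent_smaller(27, 244):.1f}%")
print(f"speed increase: {percent_faster(140, 50):.1f}%")
```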

Table of Contents

1. Setting up the virtual environment in Windows 10.

2. Cloning the GitHub repository of Yolo v5.

3. Preparation & Pre-Processing of Dataset.

4. Training the model.

5. Prediction & Live Testing.

Let’s get started, 🤗

Creating a Virtual Environment

We will first set up the virtual environment by running the following commands in your Windows command prompt.

1. Install virtualenv (optional: the next step uses the venv module that ships with Python 3, so this package is only needed if you prefer the virtualenv tool)

$ pip install virtualenv

2. Create an environment (run the following command to create the virtual environment)

$ py -m venv YoloV5_VirEnv

3. Activate it (run the following command to activate the environment)

$ YoloV5_VirEnv\Scripts\activate

You can deactivate it later (run the following command if you want to leave the environment)

$ deactivate
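If you are not sure whether the environment is currently active, a short Python check can confirm it before you install anything. This is a minimal sketch that relies on the standard library only; it compares sys.prefix with sys.base_prefix, which differ when Python runs inside an environment created with venv (or a recent virtualenv).

```python
import sys


def in_virtual_environment() -> bool:
    """True when the running interpreter belongs to a virtual environment."""
    return sys.prefix != sys.base_prefix


print("Virtual environment active:", in_virtual_environment())
print("Interpreter in use:", sys.executable)
```

When the environment is active, the interpreter path printed on the second line should point inside the YoloV5_VirEnv folder.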

Setting Up YOLO

After activation of your virtual environment, clone this GitHub repository which is created and maintained by Ultralytics.

$ git clone https://github.com/ultralytics/yolov5

$ cd yolov5


Installing necessary libraries: First, we will install all the libraries the repository requires, including OpenCV and Pillow for image processing, PyTorch for building and training the model, and NumPy for matrix operations.

$ pip install -r requirements.txt
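After the installation finishes, you may want to confirm that the main libraries can be found before starting a long training run. The sketch below uses only the standard library and does not import the packages themselves. The list of module names is an assumption based on the libraries mentioned in this article (plus PyYAML for reading data.yaml), not a full copy of requirements.txt.

```python
import importlib.util


def missing_modules(module_names):
    """Return the names that cannot be located in the current environment."""
    return [name for name in module_names
            if importlib.util.find_spec(name) is None]


# Import names, which can differ from the pip package names
# (for example, opencv-python is imported as cv2 and Pillow as PIL).
REQUIRED = ["torch", "cv2", "PIL", "numpy", "yaml"]

missing = missing_modules(REQUIRED)
if missing:
    print("Missing modules:", ", ".join(missing))
else:
    print("All listed modules were found.")
```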

Preparation of Dataset

Download the complete labeled dataset from this link.

Then extract the zip file and move it into the yolov5/ directory.

Create a file named data.yaml inside your yolov5/ directory and paste the code below into it. This file contains your class labels and the paths to the training and testing datasets (the test split is used for validation).

train: 1144images_dataset/train

val: 1144images_dataset/test

nc: 77

names: ['200m',

'50-100m',

'Ahead-Left',

'Ahead-Right',

'Axle-load-limit',

'Barrier Ahead',

'Bullock Cart Prohibited',

'Cart Prohobited',

'Cattle',

'Compulsory Ahead',

'Compulsory Keep Left',

'Compulsory Left Turn',

'Compulsory Right Turn',

'Cross Road',

'Cycle Crossing',

'Compulsory Cycle Track',

'Cycle Prohibited',

'Dangerous Dip',

'Falling Rocks',

'Ferry',

'Gap in median',

'Give way',

'Hand cart prohibited',

'Height limit',

'Horn prohibited',

'Humpy Road',

'Left hair pin bend',

'Left hand curve',

'Left Reverse Bend',

'Left turn prohibited',

'Length limit',

'Load limit 5T',

'Loose Gravel',

'Major road ahead',

'Men at work',

'Motor vehicles prohibited',

'Nrrow bridge',

'Narrow road ahead',

'Straight prohibited',

'No parking',

'No stoping',

'One way sign',

'Overtaking prohibited',

'Pedestrian crossing',

'Pedestrian prohibited',

'Restriction ends sign',

'Right hair pin bend',

'Right hand curve',

'Right Reverse Bend',

'Right turn prohibited',

'Road wideness ahead',

'Roundabout',

'School ahead',

'Side road left',

'Side road right',

'Slippery road',

'Compulsory sound horn',

'Speed limit',

'Staggred intersection',

'Steep ascent',

'Steep descent',

'Stop',

'Tonga prohibited',

'Truck prohibited',

'Compulsory turn left ahead',

'Compulsory right turn ahead',

'T-intersection',

'U-turn prohibited',

'Vehicle prohibited in both directions',

'Width limit',

'Y-intersection',

'Sign_C',

'Sign_T',

'Sign_S',

'No entry',

'Compulsory Keep Right',

'Parking',

]

We will use a total of 77 different classes, which matches the nc value in the file above.
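A common mistake when editing a long class list by hand is ending up with an nc value that no longer matches the number of names. The following sketch checks a configuration that has already been loaded into a dictionary; the function name and the example values are hypothetical, and only the four keys used in this article are checked.

```python
def check_dataset_config(config):
    """Return a list of problems found in a data.yaml style dictionary."""
    problems = []
    for key in ("train", "val", "nc", "names"):
        if key not in config:
            problems.append(f"missing key: {key}")
    if problems:
        return problems

    names = config["names"]
    if config["nc"] != len(names):
        problems.append(f"nc is {config['nc']} but names has {len(names)} entries")

    duplicates = sorted({name for name in names if names.count(name) > 1})
    if duplicates:
        problems.append("duplicate class names: " + ", ".join(duplicates))
    return problems


# Small made-up configuration with a deliberate mismatch.
example = {
    "train": "1144images_dataset/train",
    "val": "1144images_dataset/test",
    "nc": 3,
    "names": ["Stop", "No entry"],
}
print(check_dataset_config(example))
```

To check the real file, you could load it first with PyYAML (yaml.safe_load on the opened data.yaml) and pass the resulting dictionary to the function.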

Training of Model using Yolo v5

Now run the command below to train the model on your dataset. You can change the batch size depending on your PC’s specifications. Training time will depend on your PC’s performance; if you do not have a capable GPU, consider using Google Colab.

You can also train other versions of the YOLOv5 algorithm, which you can find here. Each requires a different amount of computational power and offers a different trade-off between FPS (frames per second) and accuracy.

In this article, we will use the YOLOv5s version because it is one of the smallest and fastest variants.

$ python train.py --data data.yaml --cfg yolov5s.yaml --batch-size 8 --name Model
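If you prefer to start training from a script or a notebook cell, the same command can be assembled in Python. This is a minimal sketch that uses only the flags shown in the command above; the helper names are made up, and it assumes you run it from inside the yolov5/ directory.

```python
import subprocess
import sys


def build_train_command(data="data.yaml", cfg="yolov5s.yaml",
                        batch_size=8, name="Model"):
    """Assemble the train.py command line as a list of arguments."""
    return [
        sys.executable, "train.py",  # use the interpreter of the active environment
        "--data", data,
        "--cfg", cfg,
        "--batch-size", str(batch_size),
        "--name", name,
    ]


def run_training(**options):
    """Start training and raise an error if train.py exits with a failure."""
    return subprocess.run(build_train_command(**options), check=True)


# Print the command instead of running it, so it can be inspected first.
print(" ".join(build_train_command(batch_size=4)))
```

Calling run_training(batch_size=4) would then launch the training with a smaller batch size, which can help if your GPU runs out of memory.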

Now, inside runs/train/Model/, you will find your final trained model.

runs/train/Model/
    weights/
        best.pt
        last.pt
    227359 events.out.tfevents.1638984167.LAPTOP-7CJ5UG09.6292.0
    hyp.yaml
    opt.yaml
    results.txt
    results.png
    train_batch0.jpg
    train_batch1.jpg
    train_batch2.jpg

best.pt contains your final model for final Detection & Classification.

results.txt contains a summary of the accuracy and losses achieved at each epoch.

The other images contain plots and diagrams that are useful for further analysis.
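As a quick way to try the trained weights from Python, they can be loaded through PyTorch Hub. The sketch below is one possible approach, not the testing method used later in this article: it assumes PyTorch is installed, that the run was named Model as above, and that an internet connection is available the first time, because torch.hub fetches the ultralytics/yolov5 code. The helper names are hypothetical.

```python
from pathlib import Path


def best_weights_path(run_name="Model", runs_dir="runs/train"):
    """Location of best.pt for a given training run name."""
    return Path(runs_dir) / run_name / "weights" / "best.pt"


def load_trained_model(weights):
    """Load custom YOLOv5 weights through PyTorch Hub."""
    import torch  # imported here so the path helper works without PyTorch

    return torch.hub.load("ultralytics/yolov5", "custom", path=str(weights))


print(best_weights_path())

# Example usage (not executed here, since it needs the weights and PyTorch):
# model = load_trained_model(best_weights_path())
# results = model("some_image.jpg")  # hypothetical image file
# results.print()                    # summary of detected classes
```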

Testing of Model Yolo v5

Move out of your yolov5/ directory and clone the repository below. This repo contains the code for testing the model.

$ git clone https://github.com/aryan0141/RealTime_Detection-And-Classification-of-TrafficSigns

$ cd RealTime_Detection-And-Classification-of-TrafficSigns

Directory Structure

RealTime_Detection-And-Classification-of-TrafficSigns/

Codes

Model

Results

Sample Dataset

Test

classes.txt

Documentation.pdf

README.md

requirements.txt

vidd1.mp4

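Now copy the model that we trained above (the weights/ folder from runs/train/Model/) into the Model/ directory of this repository, so that it matches the ../Model/weights/best.pt path used in the commands below.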

Note: I have already included a trained model here in Model/ directory, but you can also replace it with your trained model.

Move into the directory that contains the code.

$ cd Codes/

Put your videos or images in the Test/ directory. I have also included some sample videos and images for your reference.

For testing Images

$ python detect.py --source ../Test/test1.jpeg --weights ../Model/weights/best.pt

For testing Videos

$ python detect.py --source ../Test/vidd1.mp4 --weights ../Model/weights/best.pt

For Webcam

$ python detect.py --source 0 --weights ../Model/weights/best.pt

Your final images and videos are stored in the Results/ directory.


The frames per second (FPS) you get depends on the GPU you use. I got around 50 FPS on an Nvidia MX350 graphics card with 2 GB of memory.
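To measure the FPS on your own hardware, you can time how long a batch of frames takes to process. Here is a minimal sketch using only the standard library; process_frame stands in for whatever detection call you use and is a placeholder, not a function from the repositories above.

```python
import time


def measure_fps(process_frame, frames):
    """Run process_frame on every frame and return frames per second."""
    count = 0
    start = time.perf_counter()
    for frame in frames:
        process_frame(frame)
        count += 1
    elapsed = time.perf_counter() - start
    if count == 0 or elapsed <= 0:
        return 0.0
    return count / elapsed


def fake_detector(frame):
    """Placeholder that pretends each frame takes about 2 ms to process."""
    time.sleep(0.002)


fps = measure_fps(fake_detector, range(20))
print(f"approximate FPS: {fps:.1f}")
```

With a real model, the first few frames are often slower while the GPU warms up, so it can help to skip them or average over a longer clip.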

This completes today’s discussion of the project built around the YOLO v5 algorithm, and with it we have put together an exciting data science project.

Here is my LinkedIn profile in case you want to connect with me. I’ll be happy to connect with you.

GitHub

Here is my GitHub profile, where you can find the complete code used in this article.

End Note

Thanks for reading!

Do check out my other blogs as well.

I hope you enjoyed the article. If you liked it, share it with your friends. Is something missing, or do you want to share your thoughts? Feel free to comment below and I’ll get back to you.
