The sigmoid function is a fundamental component of artificial neural networks and is crucial in many machine-learning applications. This blog post dives deep into the sigmoid function and explores its properties, applications, and implementation in code.

Source: Pixabay

First, let’s start with the basics. The sigmoid function is a mathematical function that maps any real input value to a value strictly between 0 and 1, which makes it useful for binary classification and logistic regression problems. Its graph is often described as an “S” shape: for large negative inputs the output stays close to 0, it rises steeply around an input of 0 (where the output is 0.5), and for large positive inputs it levels off close to 1.

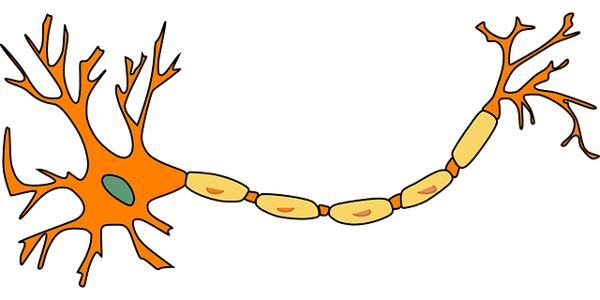

In artificial neural networks, the sigmoid function is commonly used as the activation function of neurons. It introduces non-linearity into the model, allowing the neural network to learn more complex decision boundaries. The function is particularly useful in feedforward neural networks, which are used in applications such as image recognition, natural language processing, and speech recognition.

Learning Objectives:

In this blog post, we will discuss the properties of the sigmoid function, its use in artificial neural networks, and how to implement it in code. We will also explore different applications of the sigmoid function and its limitations. So, let’s get started and explore the exciting world of the sigmoid function in artificial neural networks.

This article was published as a part of the Data Science Blogathon.

The sigmoid function is defined mathematically as sigmoid(x) = 1/(1 + e^(-x)), where x is the input value and e is Euler’s number, a mathematical constant approximately equal to 2.718. The domain of the function is (-infinity, +infinity), and its range is the open interval (0, 1): the output gets arbitrarily close to 0 and 1 but never reaches either value.
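As a quick worked check of the formula, the following plain-Python sketch (standard `math` module only; the helper name `sigmoid` is our own) evaluates it at a few points. Note that `math.exp` raises `OverflowError` once its argument exceeds roughly 709, so this scalar version is only meant for moderate inputs.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid: 1 / (1 + e^(-x)).

    Scalar sketch only: math.exp(-x) overflows for x below roughly -709.
    """
    return 1.0 / (1.0 + math.exp(-x))


# The midpoint of the S curve: e^0 = 1, so sigmoid(0) = 1 / 2
print(sigmoid(0.0))  # 0.5

# Large positive inputs approach 1, large negative inputs approach 0
for x in (-6.0, -2.0, 2.0, 6.0):
    print(f"sigmoid({x:+.0f}) = {sigmoid(x):.4f}")

# Symmetry around the midpoint: sigmoid(-x) = 1 - sigmoid(x)
print(math.isclose(sigmoid(-2.0), 1.0 - sigmoid(2.0)))  # True
```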

One of the key properties of the sigmoid function is its “S” shape. As the input value increases, the output starts close to 0 and increases slowly, rises steeply around x = 0, and then levels off as it approaches 1. This property makes it a valuable function for modeling decision boundaries in binary classification problems.

Another important property of the sigmoid is its derivative, which is used when training neural networks. The derivative is f(x)(1 - f(x)), where f(x) is the output of the sigmoid function. This form is convenient during training: backpropagation needs the derivative to compute the gradients that adjust the weights and biases of the neurons, and because the derivative is expressed in terms of the sigmoid’s own output, which is already available from the forward pass, it is cheap to compute.
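The derivative formula can be checked numerically. This NumPy sketch (function names and the step size `h` are illustrative choices) compares f(x)(1 - f(x)) with a central finite difference and shows that the slope is largest at x = 0, where it equals 0.5 * 0.5 = 0.25.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def sigmoid_derivative(x):
    # f'(x) = f(x) * (1 - f(x)): only needs the sigmoid output itself
    s = sigmoid(x)
    return s * (1.0 - s)


x = np.linspace(-6.0, 6.0, 13)  # -6, -5, ..., 6

# Central finite difference as an independent estimate of the slope
h = 1e-5
numerical = (sigmoid(x + h) - sigmoid(x - h)) / (2.0 * h)
print(np.max(np.abs(numerical - sigmoid_derivative(x))))  # a value close to zero

# The slope peaks at x = 0, where f(0) = 0.5 and f'(0) = 0.25
print(x[np.argmax(sigmoid_derivative(x))], sigmoid_derivative(0.0))
```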

It’s also worth mentioning that the sigmoid function has some limitations. For example, its output is always between 0 and 1, which is a problem when the network’s output needs to be greater than 1 or less than 0. Other activation functions, such as ReLU (whose output ranges from 0 to +infinity) or tanh (whose output ranges from -1 to 1), can be used in such cases.
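A quick NumPy comparison on a handful of sample inputs (chosen only for illustration) makes the difference in output ranges visible: only ReLU can exceed 1, and only tanh can go below 0.

```python
import numpy as np

x = np.array([-3.0, -1.0, 0.0, 1.0, 3.0])

sigmoid_out = 1.0 / (1.0 + np.exp(-x))  # outputs in (0, 1)
tanh_out = np.tanh(x)                   # outputs in (-1, 1)
relu_out = np.maximum(0.0, x)           # outputs in [0, +infinity)

for name, values in [("sigmoid", sigmoid_out), ("tanh", tanh_out), ("relu", relu_out)]:
    print(f"{name:>7}: min={values.min():.4f}, max={values.max():.4f}")
```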

Visualizing the sigmoid function using graphs can help to understand its properties better. Its graph will show the “S” shape of the function and how the output value changes as the input value changes.

Python Code:
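A minimal NumPy sketch, assuming NumPy is installed (the function name `sigmoid` and the grid of inputs are illustrative choices), evaluates the sigmoid over an evenly spaced range of inputs so the S shape can be inspected.

```python
import numpy as np


def sigmoid(x):
    """Element-wise logistic sigmoid for scalars or NumPy arrays."""
    return 1.0 / (1.0 + np.exp(-np.asarray(x, dtype=float)))


# Evaluate on an evenly spaced grid; these (x, y) pairs are the points
# a plot of the S curve would draw.
x = np.linspace(-8.0, 8.0, 9)  # -8, -6, ..., 8
y = sigmoid(x)

for xi, yi in zip(x, y):
    print(f"x = {xi:+5.1f}  sigmoid(x) = {yi:.4f}")
```

If Matplotlib is available, passing the same `x` and `y` arrays to `matplotlib.pyplot.plot` (with a denser grid for a smoother curve) draws the graph described above.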

The sigmoid function is commonly used as an activation function in artificial neural networks. In feedforward neural networks, the sigmoid function is applied to each neuron’s weighted sum of inputs to produce the neuron’s output, which introduces non-linearity into the model. This non-linearity is important because it allows the neural network to learn more complex decision boundaries, which can improve its performance on specific tasks.
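To make this concrete, here is a minimal NumPy sketch of one fully connected layer of sigmoid neurons. The layer sizes, the random weights, and the names `dense_sigmoid_layer`, `W`, and `b` are hypothetical, chosen only for illustration.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

# Hypothetical layer sizes: 4 inputs feeding 3 neurons
n_inputs, n_neurons = 4, 3
W = rng.normal(size=(n_neurons, n_inputs))  # one row of weights per neuron
b = np.zeros(n_neurons)                     # one bias per neuron


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def dense_sigmoid_layer(x, W, b):
    # Each neuron computes a weighted sum z = W @ x + b,
    # then the sigmoid squashes it into (0, 1).
    z = W @ x + b
    return sigmoid(z)


x = np.array([0.5, -1.2, 3.0, 0.0])
a = dense_sigmoid_layer(x, W, b)
print(a)  # three activations, each between 0 and 1
```

Without the sigmoid, stacking such layers would only compose linear maps, which collapse into a single linear map; the non-linear squashing is what lets deeper networks represent curved decision boundaries.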

Advantages:

Disadvantages:

Here is a comparison table of sigmoid, ReLU, and tanh activation functions in terms of performance and optimization:


In the previous section, we saw how to implement the sigmoid function in Python using NumPy. However, when working with deep learning frameworks like TensorFlow and PyTorch, it’s often more convenient to use built-in functions to calculate the sigmoid function.

Here is an example of how to implement the sigmoid function in TensorFlow:

```python
import tensorflow as tf

# Define a tensor
x = tf.constant([-1.0, 0.0, 1.0])

# Apply the sigmoid function element-wise
y = tf.math.sigmoid(x)
print(y)
```

The output of the above code will be 0.26894143, 0.5, and 0.7310586, which are the sigmoid values of -1.0, 0.0, and 1.0, respectively.

Here is an example of how to implement the sigmoid function in PyTorch:

```python
import torch

# Define a tensor
x = torch.tensor([-1.0, 0.0, 1.0])

# Apply the sigmoid function element-wise
y = torch.sigmoid(x)
print(y)
```

The output of the above code will be 0.2689, 0.5000, and 0.7311, which are the sigmoid values of -1.0, 0.0, and 1.0, respectively.

It’s worth mentioning that when working with deep learning frameworks, you must be mindful of the data types and shapes of the tensors you’re working with. For example, with a large dataset it is more efficient to apply the sigmoid to a whole batch of inputs at once: `tf.math.sigmoid` and `torch.sigmoid` operate element-wise on tensors of any shape, so a single call computes the sigmoid for every value in the batch. Additionally, it’s essential to consider the memory and computational requirements of the sigmoid function when working with large models.
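The same batching idea can be sketched in NumPy, used here as a stand-in for a framework tensor. The batch shape (64 examples by 10 features) and the `float32` data type are hypothetical choices; the sketch checks that one vectorized call preserves both and matches a slow element-by-element loop.

```python
import numpy as np


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


# A hypothetical batch: 64 examples with 10 features each, stored as float32
rng = np.random.default_rng(seed=42)
batch = rng.normal(size=(64, 10)).astype(np.float32)

# One vectorized call processes the whole batch at once
vectorized = sigmoid(batch)

# An explicit element-by-element loop gives the same values, much more slowly
looped = np.empty_like(batch)
for i in range(batch.shape[0]):
    for j in range(batch.shape[1]):
        looped[i, j] = sigmoid(batch[i, j])

print(vectorized.shape, vectorized.dtype)  # (64, 10) float32
print(np.allclose(vectorized, looped))     # True
```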

In terms of best practices, ensuring that your implementation of the sigmoid function is efficient and numerically stable is essential. This means that you should avoid using explicit loops to calculate the sigmoid and instead use vectorized operations or built-in functions. Additionally, you should be mindful of the range of the input values and make sure that the sigmoid function is not producing NaN or Inf values.
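One common way to keep the sigmoid numerically stable is to pick, for each input, the algebraically equivalent form that cannot overflow. The NumPy sketch below (function names are our own) contrasts two naive forms with a stable one: 1/(1 + e^(-x)) overflows inside `np.exp` for large negative x, while e^x/(1 + e^x) produces inf/inf = NaN for large positive x.

```python
import numpy as np


def naive_sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def stable_sigmoid(x):
    """Numerically stable logistic sigmoid.

    Uses 1 / (1 + e^(-x)) for x >= 0 and e^x / (1 + e^x) for x < 0,
    both written in terms of z = e^(-|x|), which lies in (0, 1],
    so np.exp never overflows.
    """
    x = np.asarray(x, dtype=np.float64)
    z = np.exp(-np.abs(x))
    return np.where(x >= 0, 1.0 / (1.0 + z), z / (1.0 + z))


x = np.array([-1000.0, -5.0, 0.0, 5.0, 1000.0])

# Naive form 1: np.exp(1000) overflows (warning suppressed here),
# although 1 / (1 + inf) still rounds to 0.0
with np.errstate(over="ignore"):
    print(naive_sigmoid(x))

# Naive form 2: for x = 1000 this computes inf / inf, which is NaN
with np.errstate(over="ignore", invalid="ignore"):
    print(np.exp(x) / (1.0 + np.exp(x)))

print(stable_sigmoid(x))  # finite everywhere, no NaN or Inf
```

SciPy users can also call `scipy.special.expit`, which computes the same logistic function.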


The sigmoid function is a mathematical function that takes an input value and outputs a value between 0 and 1. It is shaped like an S curve, with a steep slope in the middle and flat slopes at the top and bottom.

The derivative of a function tells us how much the function changes in response to a change in its input. For the sigmoid, the derivative can be written in terms of the function itself:

d/dx sigmoid(x) = sigmoid(x) * (1 - sigmoid(x))
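The formula follows from the chain rule. Writing σ(x) for sigmoid(x), the derivation runs:

```latex
\begin{aligned}
\sigma(x) &= \frac{1}{1 + e^{-x}} = \left(1 + e^{-x}\right)^{-1} \\
\frac{d}{dx}\,\sigma(x) &= -\left(1 + e^{-x}\right)^{-2} \cdot \left(-e^{-x}\right)
  = \frac{e^{-x}}{\left(1 + e^{-x}\right)^{2}} \\
&= \frac{1}{1 + e^{-x}} \cdot \frac{e^{-x}}{1 + e^{-x}}
  = \sigma(x) \cdot \frac{\left(1 + e^{-x}\right) - 1}{1 + e^{-x}} \\
&= \sigma(x) \left(1 - \frac{1}{1 + e^{-x}}\right)
  = \sigma(x)\,\bigl(1 - \sigma(x)\bigr)
\end{aligned}
```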

Here are the main reasons it is important:

In conclusion, the sigmoid function is an essential component of artificial neural networks, particularly for binary classification and logistic regression. It introduces non-linearity into the model, which enables the neural network to learn more complex decision boundaries. The sigmoid function is available as a built-in in deep learning frameworks such as TensorFlow and PyTorch, and it is easy to implement directly with NumPy.

Key Takeaways:

In machine learning, “sigmoid” and “logistic function” usually refer to the same function, 1/(1 + e^(-x)), which outputs values between 0 and 1. Strictly speaking, “sigmoid” is a broader term for any S-shaped curve, and some sigmoid functions, such as tanh, output values between -1 and 1 instead.
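The link between the two curves can be checked numerically: tanh is a shifted and rescaled logistic function, tanh(x) = 2 * logistic(2x) - 1, which is why its outputs lie in (-1, 1) rather than (0, 1). A small NumPy sketch (the helper name `logistic` is our own):

```python
import numpy as np


def logistic(x):
    return 1.0 / (1.0 + np.exp(-x))


x = np.linspace(-4.0, 4.0, 17)

# tanh is a rescaled, shifted logistic: tanh(x) = 2 * logistic(2x) - 1
print(np.allclose(np.tanh(x), 2.0 * logistic(2.0 * x) - 1.0))  # True

# Output ranges: logistic stays positive, tanh goes negative
print(logistic(x).min() > 0.0, np.tanh(x).min() < 0.0)  # True True
```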

No, the sigmoid function is a transformation function. It takes a number as input and outputs a new number between 0 and 1. Loss functions, on the other hand, compare the model’s predictions to the actual data to see how well the model is performing.

In short, transformation functions change inputs, while loss functions measure performance.
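The distinction can be sketched in a few lines of NumPy. Binary cross-entropy is used here as an example loss (an assumption, since no specific loss is named above), and the logits, labels, and the clipping constant `eps` are hypothetical values chosen for illustration: the sigmoid transforms raw scores into probabilities, and the loss then compares those probabilities with the true labels.

```python
import numpy as np


def sigmoid(z):
    # Transformation: maps raw scores (logits) to probabilities in (0, 1)
    return 1.0 / (1.0 + np.exp(-z))


def binary_cross_entropy(y_true, y_prob, eps=1e-12):
    # Loss: compares predicted probabilities with the true 0/1 labels.
    # eps keeps log() away from 0; its value is an illustrative choice.
    y_prob = np.clip(y_prob, eps, 1.0 - eps)
    return -np.mean(y_true * np.log(y_prob) + (1.0 - y_true) * np.log(1.0 - y_prob))


logits = np.array([2.0, -1.0, 0.5])  # hypothetical model outputs
labels = np.array([1.0, 0.0, 0.0])   # hypothetical ground truth

probs = sigmoid(logits)                     # transformation step
loss = binary_cross_entropy(labels, probs)  # performance measurement
print(probs, loss)
```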

There are a number of other activation functions that can be used instead of the sigmoid function, such as the tanh function, the ReLU function, and the leaky ReLU function. These activation functions have different advantages and disadvantages, so the best choice for a particular application will depend on the specific problem being solved.


The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.
