Most Essential 2023 Interview Questions on Data Engineering

Introduction

Data engineering is the field of study that deals with the design, construction, deployment, and maintenance of data processing systems. The goal of this domain is to collect, store, and process data efficiently and efficiently so that it can be used to support business decisions and power data-driven applications. This includes designing and implementing data pipelines, building data storage solutions, and building data processing systems to process big data. Data engineers work closely with data scientists, analysts, and stakeholders to ensure that data systems meet organizational needs and support the generation of valuable insights.

Today’s article will cover questions and topics relevant to data engineering that you might expect to come across in your following interview. Learning objectives for today would be

- To understand the nuances of data engineering

- The features and functioning of Hadoop

- Understanding MapReduce in Hadoop

- Understanding the Snowflake Schema

- The difference between structured and unstructured data and how to convert from one to another

This article was published as a part of the Data Science Blogathon.

Table of Contents

- Data Engineering Beginner-Level Interview Questions

1.1. What is data engineering?

1.2. What is the difference between structured and unstructured data?

1.3. What are the features of Hadoop?

1.4. What are the various aspects of Hadoop? - Data Engineering Intermediate-Level Interview Questions

2.1. Explain MapReduce in Hadoop.

2.2. What is Name Node? How does a Name Node communicate with Data Node?

2.3. Explain the Snowflake Schema in brief.

2.4. What is Hadoop Streaming?

2.5. What are the Skewed Table and SerDe in the Hive? - Data Engineering Expert-Level Interview Questions

3.1. What is orchestration?

3.2. What are the different data validation approaches?

3.3. Explain the use of the Hive in the Hadoop ecosystem.

3.4. How does a data warehouse differ from an operational database?

3.5. How to reform unstructured data into structured data?

3.6. What is the difference between Data Architect and Data Engineer? - Conclusion

Data Engineering Beginner-Level Interview Questions

Q1. What is Data Engineering?

Data Engineering is designing, constructing, and maintaining the architecture and infrastructure for storing, processing, and analyzing large and complex data sets to support data-driven decision-making. It involves using various tools, technologies, and techniques to manage data, ensure data quality and integrity, and make data available for analysis and visualization. Data engineering is a crucial aspect of the data science workflow and provides the foundation for data-driven insights and discoveries.

Q2. What is the Difference Between Structured and Unstructured Data?

Data in the real world comes mainly in two different forms – structured and unstructured data. Structured data refers to those data that possess a definite format – often arranged in tabular format with names and values distinctly mentioned in it. Examples of the same include data or spreadsheets, CSVs, etc. However, most real-world data is unstructured, meaning they do not possess a pre-defined structure or organization. Examples include text, audio, video, or image data. Structural data is easier to process using computational tools, while unstructured data requires complex processes like NLP, text mining, or image processing to make sense out of the data. Thus there is a constant attempt to transform unstructured data into structured data, as w will see in the proceeding questions and concepts.

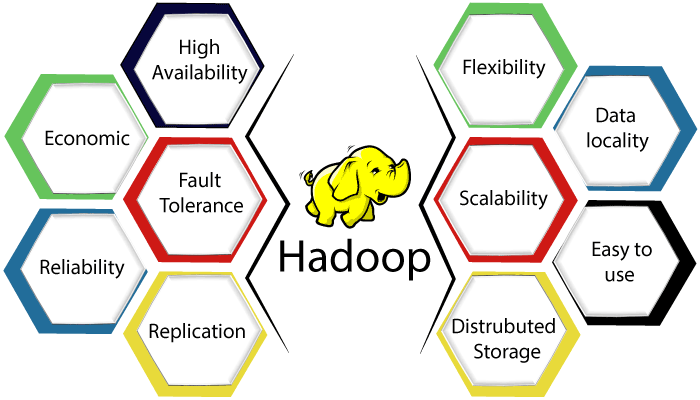

Q3. What are the Features of Hadoop?

Apache Hadoop is an open-source framework for storing and processing big data. Some of its key features include:

- Distributed Processing: Hadoop enables the distribution of large data sets across multiple commodity servers, allowing for parallel data processing.

- Scalability: Hadoop can easily handle petabyte-scale data and can be scaled horizontally by adding more nodes to the cluster.

- Fault Tolerance: Hadoop has built-in redundancy so that if a node fails, its tasks can be automatically re-assigned to other nodes in the cluster, ensuring high availability.

- Cost-Effective: Hadoop uses commodity hardware, making it a cost-effective solution for storing and processing big data.

- Ecosystem: Hadoop has a large and growing ecosystem of related projects, including MapReduce, HDFS, Hive, Pig, Spark, and others, that provide additional functionality and tools for data processing and analysis.

- Open-source: Hadoop is open-source software, allowing a large and active community of developers to contribute to and support its development.

Q4. What are the Components of Hadoop?

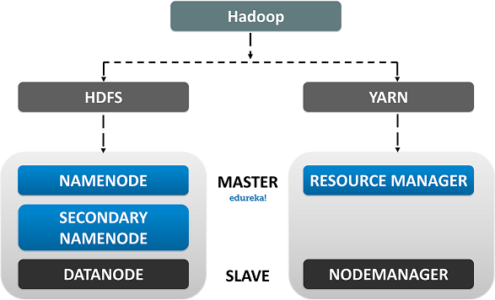

Apache Hadoop has two main components:

- Hadoop Distributed File System (HDFS): A scalable, tolerant to a fault, and distributed file system that stores large data sets.

- MapReduce: A programming model for processing large data sets that divides the processing into two stages: “Map” and “Reduce.” The map stage processes individual data units and generates intermediate results, while the Reduce stage aggregates the intermediate results into the final output.

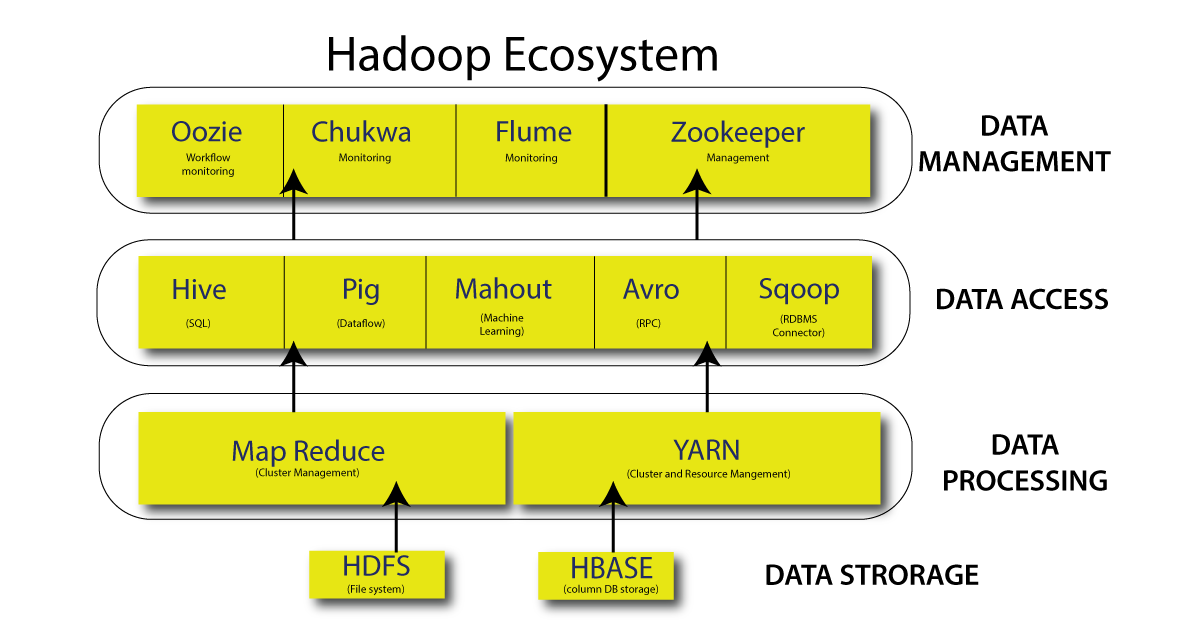

In addition to these two core components, the Hadoop ecosystem also includes several other sub-projects, including:

- Hive: A data warehousing and SQL-like query language for big data.

- Pig: A high-level platform for creating MapReduce programs with large data sets.

- Spark: An open-source, distributed computing system that can quickly process large amounts of data.

- HBase: A non-relational, column-oriented database for storing and retrieving large amounts of structured data.

- Yet Another Resource Negotiator (YARN): A resource management system that coordinates the allocation of computing resources within a Hadoop cluster.

These components, along with others, comprise the comprehensive Hadoop ecosystem and provide a complete solution for storing, processing, and analyzing large data sets.

Data Engineering Intermediate-Level Interview Questions

Q1. Explain MapReduce in Hadoop.

MapReduce is a programming model for processing large datasets in a parallel and distributed manner. It is commonly used for big data processing in Hadoop.MapReduce consists of two main stages:

- Map: This stage takes input data in the form of (key, value) pairs and processes it to generate intermediate (key, value) pairs.

- Reduce: This stage takes the intermediate (key, value) pairs produced by the Map stage and aggregates the values associated with each key to produce the final output.

Hadoop’s implementation of MapReduce uses a cluster of computers to distribute the processing across many nodes, allowing for the efficient processing of large datasets. The output of the Reduce stage is written to HDFS (Hadoop Distributed File System) for persistence.

Q2. What is Name Node? How Does a Name Node Communicate with Data Node?

The NameNode is a central component of the Hadoop Distributed File System (HDFS). Architecture. Acts as the master node and manages metadata for all files stored in the HDFS cluster. Metadata information includes block locations, the number of replicas, and their locations.The NameNode communicates with the DataNodes (worker nodes) in the HDFS cluster to manage data storage and retrieval processes. The NameNode periodically sends heartbeats to the DataNodes to check their status and track block information. DataNodes send block reports containing information about the blocks they contain to the NameNode.

In the event of a DataNode failure, the NameNode can use metadata information to identify replicas of lost data blocks and initiate the recovery process by copying them to new DataNodes.

NameNodes and DataNodes communicate with each other to manage data storage and retrieval within the HDFS cluster, with the NameNode acting as a central coordination point.

Q3. Explain the Snowflake Schema in Brief.

A Snowflake schema is a type of dimensional data modeling technique used in data warehousing. It is named for its snowflake-like structure with multiple dimensions radiating out from a central fact table.In the Snowflake schema, each dimension is represented by a separate table, and relationships between dimensions are stored in fact tables. This design allows for a more granular dimension table and reduces data redundancy as each size can be normalized and stored in that table.

A key benefit of the Snowflake schema is that it separates dimensional data from fact data, reducing the amount of data that needs to be scanned during query processing and allowing for more efficient queries and data aggregation. It also makes it easier to add new dimensions and attributes to your data model because you can add new dimensions and attributes to dimension tables without affecting the existing structure of your data model.

Overall, Snowflake schemas are valuable tools for designing and organizing data in data warehouse systems, enabling efficient querying and analysis of data.

Q4. What is Hadoop Streaming?

Hadoop Streaming is a utility provided with Apache Hadoop that allows users to write MapReduce programs in any programming language that can read from standard input and write to standard output.Hadoop Streaming allows you to write MapReduce programs in the language of your choice instead of being restricted to using Java, the default programming language for Hadoop MapReduce. This makes it easier for developers to leverage existing code and functionality to process large datasets on Hadoop clusters.

Hadoop Streaming communicates with the MapReduce framework by sending input data to the standard input of the map program and receiving output data from the standard output of the reduce program. This allows Hadoop streaming to be used with many programming languages, such as Python, Perl, Ruby, and more. Hadoop streaming is a flexible and convenient way to write MapReduce programs, making it easy for developers to get started with Hadoop and start processing big data. It is also useful for testing and prototyping, allowing developers to quickly try out different algorithms and processing approaches before committing to a full-fledged MapReduce implementation.

Q5. What are the Skewed Table and SerDe in the Hive?

A Hive table Hive is a table where some keys have a disproportionate number of records compared to others. This can lead to performance issues during query processing as the data is not evenly distributed across the nodes in the Hadoop cluster, and some nodes become bottlenecks.Connect SerDe in Hive archives to serializer/deserializer. This library allows Hive tHivead to write data to and from various formats, including text files, sequence files, and more. Hive uses SerDe to parse and serialize data in tables so that it can be processed efficiently. When Hive detects a bent table, a DBA or data engineer can use the SerDe to modify the data structure to optimize the table’s performance. For example, a SerDe can be used to split a curved key into multiple partitions, effectively balancing the load across the nodes in the cluster.

In summary, skewed tables in Hive can degrade performance, and SerDe can be used to optimize skewed table performance by modifying the data structure.

Data Engineering Expert-Level Interview Questions

Q1. What is Orchestration?

Orchestration is the automation of tasks and processes in a specific order to achieve a desired result. Commonly used in IT and DevOps, it refers to coordinating and managing interdependent components, systems, and tools to achieve a common goal.Orchestration in IT can include tasks such as resource provisioning, application deployment and management, infrastructure scaling, and service health monitoring and management. By automating these tasks, orchestration helps organizations streamline IT operations, reduce manual errors, and improve system reliability and efficiency.

Various tools and frameworks can be used for orchestration, including Ansible, Puppet, and Chef. These tools provide an integrated platform for automating and managing tasks and processes across multiple systems and technologies.

In summary, orchestration is a key component of modern IT and DevOps practices, providing organizations with the ability to automate and manage systems and processes in a reliable, efficient, and scalable manner.

Q2. What are the Different Data Validation Approaches?

Data validation is checking the accuracy, completeness, and consistency of data. There are several approaches to data validation, including:

- Syntax validation: This checks the format and structure of data to ensure that it adheres to a predefined set of rules or standards.

- Semantic validation: This checks the meaning of data, ensuring that it is meaningful and conforms to a set of business rules and standards.

- Domain validation: This checks if the data falls within a specific range of values or meets a particular set of conditions.

- Cross-field validation: This checks if the data in different fields is consistent and makes sense in the context of the entire record.

- Database constraint validation: This checks if the data meets the constraints set in the database, such as unique values, null values, and others.

- User-defined validation: This allows organizations to define custom validation rules specific to their needs and use cases.

- External reference validation: Checks if the data matches external sources, such as reference data from a separate database or external API.

Q3. Explain the Use of the Hive in the Hadoop Ecosystem.

Hive is a data warehousing and SQL-like query language component of the Hadoop ecosystem. It provides an easy-to-use interface for querying and analyzing large amounts of data stored in Hadoop’s distributed file system ‘HDFS’. Hive allows users to write SQL-like queries in her language called HiveQL to perform various data analysis tasks such as: B. Filtering, Aggregating, and Grouping Data.Hive translates these HiveQL queries into a series of MapReduce jobs running on a Hadoop cluster, providing high scalability and concurrency. This allows Hive to efficiently and efficiently process massive amounts of data. They can be terabytes or even petabytes in size. Hive also provides a metadata store called Hive Metastore. It allows users to define, manage, and access the structure and format of their data. A Hive metastore serves as a central repository for metadata. B. Schemas and data types for tables and columns. This makes the long-term management and maintenance of large datasets easier.

Hive is widely used in organizations to process and analyze big data, especially for business intelligence and data warehouse applications. It provides a powerful and flexible toolset for querying and analyzing large datasets in Hadoop. Its SQL-like interface makes it accessible to many users, including business analysts and data scientists.

Q4. How does a Data Warehouse Differ from an Operational Aatabase?

Data warehouses and operational databases have different purposes and designs, and architectural characteristics.A data warehouse is a central repository of structured data designed specifically for business intelligence and data analysis. Data in a data warehouse is typically transformed, cleaned, optimized for fast and efficient querying and exploration, and stored in large, scalable systems such as relational databases and big data platforms such as Hadoop.

On the other hand, operational databases support an organization’s day-to-day operations, such as: B. Transaction Processing and Recordkeeping. It is designed for online transaction processing (OLTP), requiring fast and efficient data updates, inserts, and deletes. Operational databases are typically small, stored in relational or NoSQL databases, and support real-time, low-latency data access.

One of the main differences between data warehouses and operational databases is the focus on write and read operations. Data warehouses are optimized for read-intensive functions such as reporting and analytics, while active databases are optimized for write-intensive functions such as transaction processing.

Another difference is in the structure of the data. Data in data warehouses are often denormalized and organized into star or snowflake schemas. In contrast, data in operational databases are typically stored in a normalized format to minimize data duplication and improve data integrity.

Q5. How to Transform Unstructured Data into Structured Data?

Transforming unstructured data into structured data involves several steps and techniques. These include:

- Data extraction: The first step is to extract the data from its unstructured sources, such as a text document, an email, a web page, or a social media post. This involves using web scraping or natural language processing (NLP) techniques to extract relevant data from the unstructured source.

- Data standardization: Once the data has been extracted, the next step is standardizing it into a standard format. This involves converting data into a uniform structure, such as converting dates into a standardized date format, currency into a standardized currency format, and text into a consistent case.

- Data normalization: The next step is to normalize the data, which involves organizing the data into a series of related tables and enforcing relationships between these tables. This helps to minimize data duplication and improve data integrity.

- Data enrichment: This step involves adding additional information, such as geographic information, demographic information, or market data.

- Data validation: The final step is to validate the data, which involves checking the data for completeness, accuracy, and consistency. This step helps to identify and correct any errors or inconsistencies in the data.

These steps can be performed using various data management tools, such as data integration tools, data quality tools, or big data platforms like Hadoop. The specific approach and tools used will depend on the size and complexity of the data, as well as the desired outcome.

In summary, transforming unstructured data into structured data involves several steps, including data extraction, standardization, normalization, enrichment, and validation. These steps can be performed using various data management tools, and the specific approach will depend on the size and complexity of the data, as well as the desired outcome.

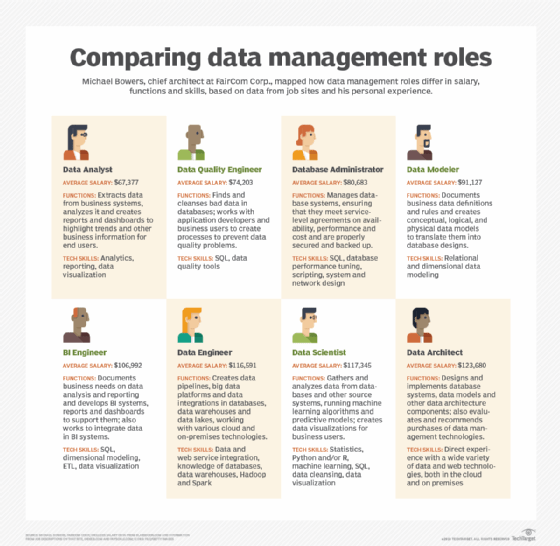

Q6. What is the Difference Between Data Architects and Data Engineers?

Data architect and data engineer are two different but related roles in the field of data management. Both departments are involved in the design, development, and maintenance of data systems but have different focuses and responsibilities.Data architects are responsible for creating an organization’s overall data architecture. We work with stakeholders to understand their data needs and develop a comprehensive data strategy that aligns with organizational goals and objectives. He also oversees the design and implementation of data systems, including data warehousing, big data platforms, and data integration systems. They ensure that data systems are scalable, secure, and capable of supporting an organization’s business intelligence and analytics needs.

On the other hand, data engineers are responsible for building and maintaining the underlying infrastructure of data systems. Design, build, and maintain data pipelines and workflows that enable data flow from source systems to data warehouses or big data platforms. We are also working to improve our data collection processes, data quality, and data security. Work with data scientists and analysts to ensure that data systems are optimized for performance and scalability.

Conclusion

Well, I hope you were able to understand today’s reading! If you were able to answer all the questions, then bravo! You are on the right track toward your preparation; if not, there’s no need to be concerned. The real value of today’s blog would come up if you can absorb these concepts and then apply them to the questions you would be facing in your interviews.

To summarize for you, the key takeaways of today’s articles would be:

- To understand data engineering and the difference between structured and unstructured data

- The features and components of Hadoop

- MapReduce in Hadoop

- The Snowflake Schema

- Hadoop Streaming

- Skewed Table and SerDe in Hadoop

- The concept of orchestration

- The different approaches to data validation

- The use of Hive in Hadoop

- The differences between a data warehouse and an operational database

- The process of transforming unstructured data into structured data

- And finally, the difference between a data engineer and a data architect

If you go through these thoroughly, I can ensure that you have covered the length and breadth of data engineering. The next time you face similar questions, you can confidently answer them! I hope you found this blog helpful and that I successfully added value to your knowledge. Good luck with your interview preparation process and your future endeavors!

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.