Embark on a journey through the evolution of artificial intelligence and the astounding strides made in Natural Language Processing (NLP). In a mere blink, AI has surged, shaping our world. Fine-tuning large language models (LLMs) has transformed NLP and changed how we interact with technology. Rewind to 2017, a pivotal moment marked by the paper ‘Attention Is All You Need,’ which introduced the groundbreaking ‘Transformer’ architecture. This architecture now forms the cornerstone of NLP and is a core ingredient in nearly every Large Language Model recipe, including the renowned ChatGPT.

Imagine generating coherent, context-rich text effortlessly – that’s the magic of models like GPT-4. They power chatbots, translation, and content generation, and their strength stems from both their architecture and the interplay of pretraining and fine-tuning. This article explores how to leverage Large Language Models for specific tasks by combining pre-training and fine-tuning. Join us in demystifying these transformative techniques!

Getting Started with LLMs

LLM stands for Large Language Model. LLMs are deep learning models designed to process and generate human language and to perform various tasks such as sentiment analysis, language modeling (next-word prediction), text generation, text summarization, and much more. They are trained on a huge amount of text data.

We use applications based on these LLMs daily without even realizing it. Google uses BERT (Bidirectional Encoder Representations from Transformers) for various applications such as query completion, understanding the context of queries, outputting more relevant and accurate search results, language translation, and more.

These models are built on deep neural networks and mechanisms such as self-attention. They are trained on vast amounts of text data to learn the language’s patterns, structures, and semantics.

Since these models are trained on extensive datasets, training them takes a lot of time and resources, so for most projects it does not make sense to train them from scratch.

There are techniques by which we can adapt these pre-trained models to a specific task. So let’s discuss them in detail.

Large Language Model Lifecycle

Before delving into LLM fine-tuning, it’s crucial to comprehend the LLM lifecycle and its functioning.

- Vision & Scope: Begin by defining the project’s vision. Decide whether your LLM will be a universal tool or target a specific task, like named entity recognition. Clear objectives save time and resources.

- Model Selection: Choose between training a model from scratch or modifying an existing one. Adapting a pre-existing model is often more efficient, but some situations may call for training a new model.

- Model Performance and Adjustment: After preparing your model, assess its performance. If it’s unsatisfactory, explore prompt engineering or further fine-tuning. Ensure the model’s outputs align with human preferences.

- Evaluation & Iteration: Regularly conduct evaluations using metrics and benchmarks. Iterate between prompt engineering, fine-tuning, and evaluation until achieving the desired outcomes.

- Deployment: Once the model performs as expected, deploy it. Optimize for computational efficiency and user experience at this juncture.

Overview of Different Ways to Build LLM Applications

We often see exciting LLM applications in day-to-day life. Are you curious to know how to build LLM applications? Here are the three ways to build them:

- Training LLMs from Scratch

- Finetuning Large Language Models

- Prompting

Training LLMs from Scratch

People often confuse these two terms: training and finetuning LLMs. Both techniques work similarly, i.e., they update the model’s parameters, but their training objectives are different.

Training LLMs from scratch is also known as pretraining. Pretraining is the technique in which a large language model is trained on a vast amount of unlabeled text. But the question is, ‘How can we train a model on unlabeled data and then expect the model to predict the data accurately?’. Here comes the concept of ‘Self-Supervised Learning’. In self-supervised learning, the labels come from the text itself: part of the text is hidden, and the model tries to predict it from the rest. In next-word prediction, for instance, the model predicts each word with the help of the preceding words. E.g., suppose we have a sentence: ‘I am a data scientist’.

The model can create its own labeled data from this sentence, like:

| Text | Label |
| --- | --- |
| I | am |
| I am | a |
| I am a | data |
| I am a data | scientist |

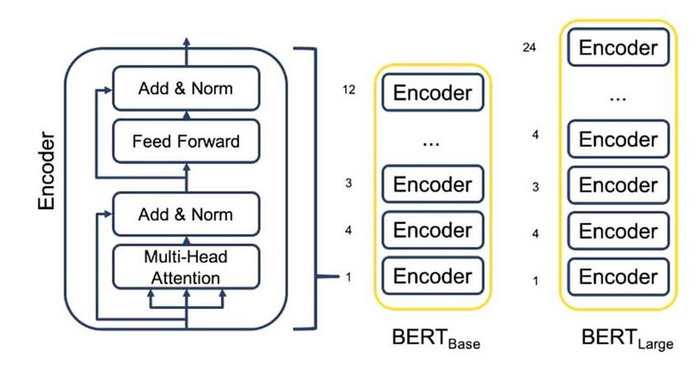
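The pairs in the table above can be generated mechanically. The snippet below is a minimal sketch of that idea, assuming simple whitespace splitting instead of a real tokenizer; `next_word_pairs` is a hypothetical helper name, not part of any library.

```python
def next_word_pairs(sentence):
    """Turn one sentence into (context, next word) training pairs."""
    # Assumption: words are separated by whitespace; real LLMs use subword tokenizers.
    words = sentence.split()
    return [(" ".join(words[:i]), words[i]) for i in range(1, len(words))]


pairs = next_word_pairs("I am a data scientist")
for text, label in pairs:
    print(f"{text} -> {label}")
```

Every prefix of the sentence becomes an input and the word that follows it becomes the label, so no human annotation is needed.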

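Building training pairs like these from raw text is known as next-word prediction, the objective used by autoregressive models such as GPT. BERT takes a related but different approach: it is an MLM (Masked Language Model), which hides a word anywhere in the sentence and learns to predict that masked word. We can think of MLM as a `fill in the blank` concept, in which the model predicts what word can fit in the blank.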

There are different pretraining objectives, but in this article we focus on BERT, the MLM. BERT can look at both the preceding and the succeeding words to understand the context of the sentence and predict the masked word.
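To illustrate the `fill in the blank` idea, here is a small sketch of how one masked training example could be built. It assumes whitespace-separated words and a hand-picked position; `mask_word` is a hypothetical helper, and in real BERT pretraining the masked positions are chosen at random over subword tokens rather than by hand.

```python
def mask_word(sentence, position, mask_token="[MASK]"):
    """Hide the word at `position` and return (masked sentence, hidden word)."""
    words = sentence.split()
    label = words[position]          # the word the model must recover
    words[position] = mask_token     # replace it with the mask token
    return " ".join(words), label


masked, label = mask_word("I am a data scientist", position=3)
print(masked)  # I am a [MASK] scientist
print(label)   # data
```

Unlike next-word prediction, the model here sees words on both sides of the blank.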

So, as a high-level overview, pretraining is a technique in which the model learns to predict missing or upcoming words in the text.

Finetuning Large Language Models

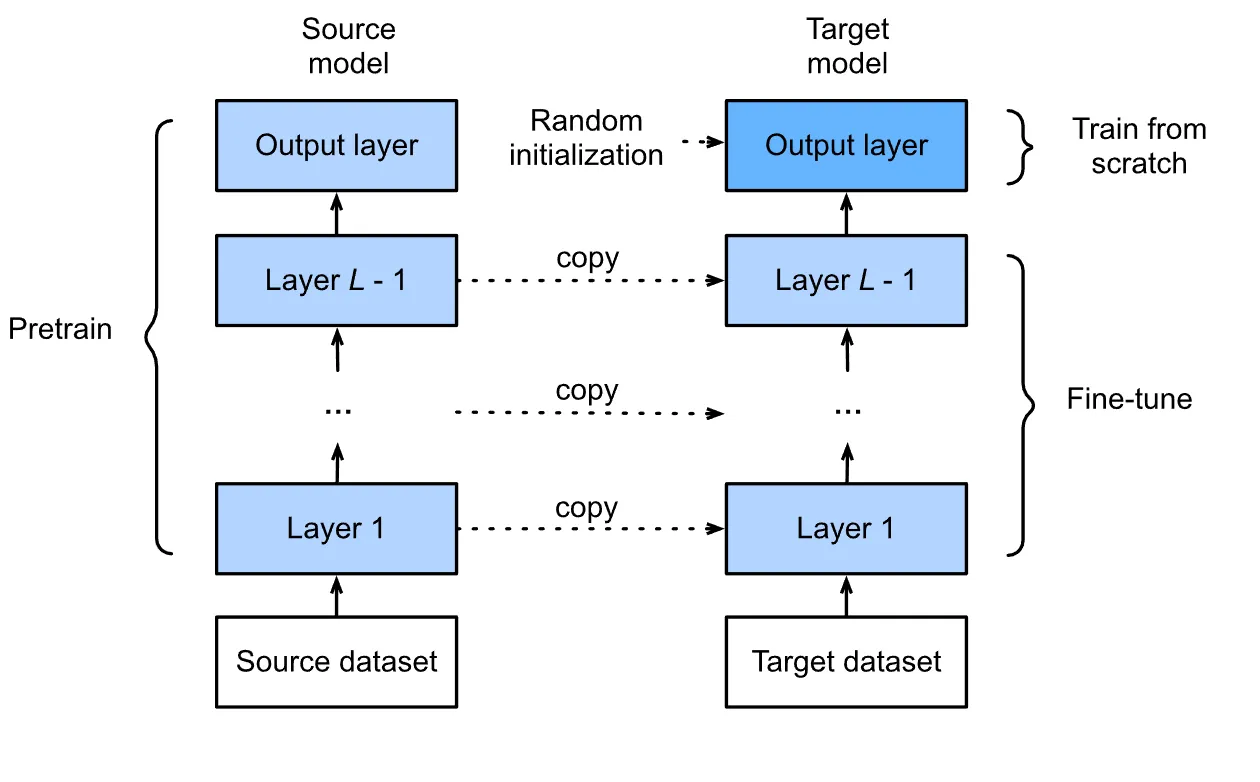

Finetuning is tweaking the model’s parameters to make it suitable for performing a specific task. After the model is pre-trained, it is then fine-tuned, or in simple words, trained to perform a specific task such as sentiment analysis, text generation, finding document similarity, etc. We do not have to train the model again on a large text; rather, we use the trained model to perform a task we want to perform. We will discuss how to fine-tune a Large Language Model in detail later in this article.

Prompting

Prompting is the easiest of the three techniques, but it can be a bit tricky. It involves giving the model a context (the prompt) based on which the model performs tasks. Think of it as teaching a child a chapter from their book in detail, being very explicit in the explanation, and then asking them to solve a problem related to that chapter.

- In the context of LLMs, take ChatGPT as an example: we set a context and ask the model to follow the instructions to solve the problem given.

- Suppose you want ChatGPT to ask you some interview questions on Transformers only. For a better experience and accurate output, you need to set a proper context and give a detailed task description.

Example: I am a Data Scientist with two years of experience and am currently preparing for a job interview at so-and-so company. I love problem-solving and am currently working with state-of-the-art NLP models. I am up to date with the latest trends and technologies. Ask me very tough questions on the Transformer model that the interviewer of this company can ask based on the company’s previous experience. Ask me ten questions, and also give me the answers to the questions.

The more detailed and specific your prompt, the better the results tend to be. The most fun part is that you can generate the prompt from the model itself and then add a personal touch or the information needed.
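One way to keep prompts detailed and consistent is to assemble them from named parts. The sketch below is only an illustration: `build_prompt` and its parameters are hypothetical names, and the resulting string would still need to be sent to whichever chat model you use.

```python
def build_prompt(background, task, num_questions, extra_instructions=""):
    """Assemble a detailed prompt from context, task, and output requirements."""
    parts = [
        f"Context: {background}",
        f"Task: {task}",
        f"Ask me {num_questions} questions, and also give me the answers.",
    ]
    if extra_instructions:
        parts.append(extra_instructions)
    return "\n".join(parts)


prompt = build_prompt(
    background="I am a Data Scientist with two years of experience, preparing for a job interview.",
    task="Ask me very tough questions on the Transformer model.",
    num_questions=10,
)
print(prompt)
```

Separating the context from the task makes it easy to reuse the same background with different requests.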

Understand Different Finetuning Techniques

There are different ways to fine-tune a model, and the right approach depends on the specific problem you want to solve.

Conventionally, there are three ways of finetuning an LLM. Let’s discuss each of them.

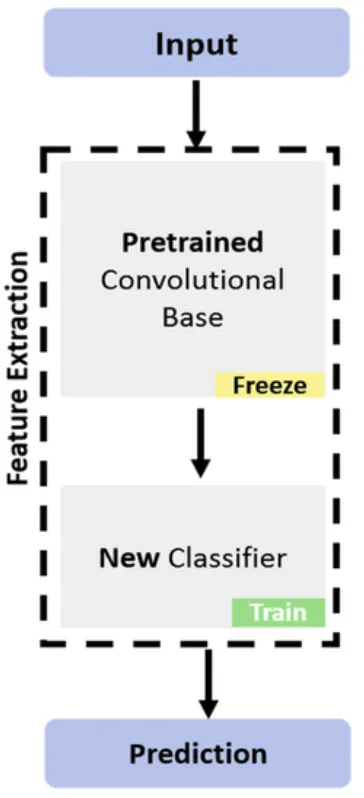

Feature Extraction

People use this technique to extract features (embeddings) from a given text, but why do we want to extract embeddings from a given text? The answer is straightforward. Because computers do not comprehend raw text, there needs to be a numerical representation of the text that we can use to carry out various tasks. Once we extract the embeddings, we can use them for tasks like sentiment analysis, identifying document similarity, and more. In feature extraction, we lock the backbone layers of the model, meaning we do not update the parameters of those layers; only the parameters of the classifier layers get updated. The classifier layers are the fully connected layers on top of the backbone.
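The freezing step can be sketched in a few lines of PyTorch. This is a toy illustration, not the tutorial’s actual model: the `backbone` here is a small randomly initialized stand-in for a pre-trained network, and the layer sizes and learning rate are arbitrary assumptions.

```python
import torch
from torch import nn

# Stand-in for a pre-trained backbone (assumption: a real one would be loaded from a checkpoint).
backbone = nn.Sequential(nn.Linear(16, 32), nn.ReLU(), nn.Linear(32, 32), nn.ReLU())
# Fully connected classifier head for two classes.
classifier = nn.Linear(32, 2)

# Lock the backbone: its parameters receive no gradient updates.
for param in backbone.parameters():
    param.requires_grad = False

model = nn.Sequential(backbone, classifier)

# Only the classifier's parameters are handed to the optimizer.
optimizer = torch.optim.AdamW(classifier.parameters(), lr=1e-3)

# One training step on random data, just to show the mechanics.
inputs = torch.randn(8, 16)
targets = torch.randint(0, 2, (8,))
loss = nn.functional.cross_entropy(model(inputs), targets)
loss.backward()
optimizer.step()

trainable = sum(p.numel() for p in model.parameters() if p.requires_grad)
total = sum(p.numel() for p in model.parameters())
print(f"trainable parameters: {trainable} of {total}")
```

Only the small classifier head is trained, which is why feature extraction is much cheaper than updating the whole network.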

Full Model Finetuning

As the name suggests, in this technique we train every layer of the model on the custom dataset for a specific number of epochs. We adjust the parameters of all the layers in the model according to the new custom dataset. This can improve the model’s accuracy on the data and the specific task we want to perform. It is computationally expensive and takes a lot of time, considering that Large Language Models can have billions of parameters.
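A back-of-the-envelope calculation shows why updating every parameter is costly. The sketch below is a rough rule of thumb, not a measurement: it assumes 32-bit floats and an Adam-style optimizer (4 bytes for each weight, 4 for its gradient, and 8 for the optimizer’s two moment estimates) and ignores activation memory entirely. The function name and the two model sizes are illustrative.

```python
# Assumed memory per parameter: weight + gradient + two Adam moment estimates, all fp32.
BYTES_PER_PARAM = 4 + 4 + 8


def full_finetune_memory_gb(num_params):
    """Rough memory needed for weights, gradients, and optimizer state, in GB."""
    return num_params * BYTES_PER_PARAM / 1e9


for name, n in [("110M-parameter model", 110_000_000), ("7B-parameter model", 7_000_000_000)]:
    print(f"{name}: ~{full_finetune_memory_gb(n):.1f} GB")
```

Under these assumptions the cost grows linearly with the parameter count, which is the motivation for techniques that train only a small part of the network.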

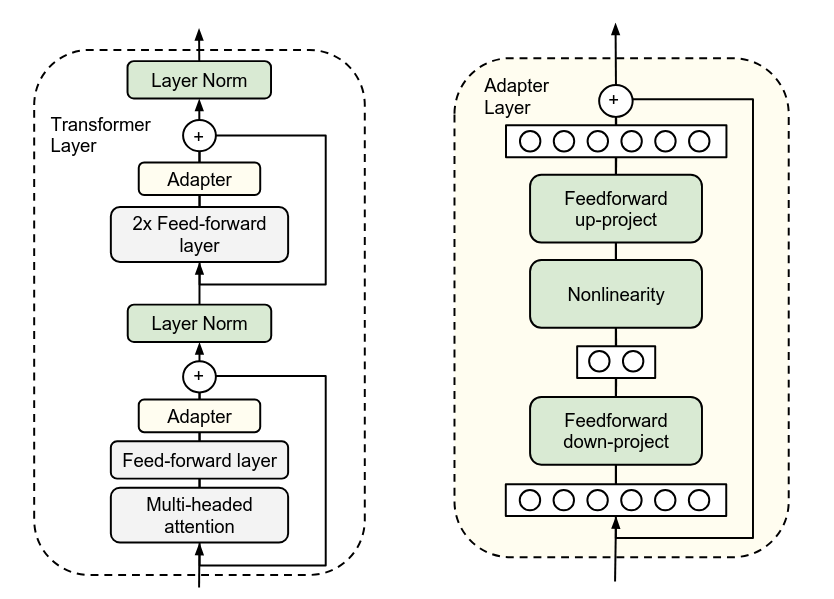

Adapter-Based Finetuning

Adapter-based finetuning is a comparatively new concept in which an additional, randomly initialized layer or module is added to the network and then trained for a specific task. In this technique, the pre-trained model’s original parameters are frozen, i.e., they are not changed or tuned. Only the adapter layer’s parameters are trained. This technique helps in tuning the model in a computationally efficient manner.
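The following PyTorch sketch shows one common shape an adapter can take: a small bottleneck with a residual connection, placed after a frozen layer. It is an illustration under assumptions, not a specific library’s implementation; the `Adapter` class, the layer sizes, and the single stand-in `pretrained_layer` are all hypothetical.

```python
import torch
from torch import nn


class Adapter(nn.Module):
    """Small bottleneck module added on top of a frozen layer's output."""

    def __init__(self, hidden_size, bottleneck_size):
        super().__init__()
        self.down = nn.Linear(hidden_size, bottleneck_size)
        self.up = nn.Linear(bottleneck_size, hidden_size)

    def forward(self, hidden_states):
        # Residual connection: the adapter only learns a correction to the frozen output.
        return hidden_states + self.up(torch.relu(self.down(hidden_states)))


# Stand-in for one pre-trained layer (assumption: a real model has many such layers).
pretrained_layer = nn.Linear(64, 64)
for param in pretrained_layer.parameters():
    param.requires_grad = False  # original parameters stay untouched

adapter = Adapter(hidden_size=64, bottleneck_size=8)  # randomly initialized, trainable
model = nn.Sequential(pretrained_layer, adapter)

output = model(torch.randn(4, 64))
trainable = sum(p.numel() for p in model.parameters() if p.requires_grad)
frozen = sum(p.numel() for p in model.parameters() if not p.requires_grad)
print(f"trainable: {trainable}, frozen: {frozen}")
```

The adapter keeps the output shape of the layer it wraps, so it can be inserted without changing the rest of the network, and only its few parameters need gradients.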

Implementation: Finetuning BERT on a Downstream Task

Now that we know the finetuning techniques, let’s perform sentiment analysis on the IMDB movie reviews using BERT. BERT is an encoder-only large language model built from stacked transformer layers. It was developed by Google and has proven to perform very well on various tasks. BERT comes in different sizes and has inspired many variants, such as BERT-base-uncased, BERT Large, RoBERTa, LegalBERT, and many more.

BERT Model to Perform Sentiment Analysis

Let’s use the BERT model to perform sentiment analysis on IMDB movie reviews. Google Colab is recommended because it offers free access to a GPU.

Setup and Installation

Since BERT (Bidirectional Encoder Representations from Transformers) is based on Transformers, the first step would be to install Transformers in our environment.

!pip install transformers
!pip install datasets

Importing Libraries

Let’s import the libraries we need: `torch` as the deep learning backend, the tokenizer, model, and training classes from Transformers, `load_dataset` to download the data (which already comes split into train and test sets), and scikit-learn functions to compute the evaluation metrics.

import torch
from transformers import AutoTokenizer, AutoModelForSequenceClassification, Trainer, TrainingArguments
from datasets import load_dataset
from sklearn.metrics import accuracy_score, precision_recall_fscore_support

Loading the Dataset

We will now load the IMDB dataset for fine-tuning our model:

# Load the IMDB dataset
dataset = load_dataset("imdb")

Loading the Model

We will now load our pre-trained model from a checkpoint. The checkpoint used here is DistilBERT, a smaller, faster distilled variant of BERT:

# Define the model checkpoint
model_checkpoint = "distilbert-base-uncased"

# Load the tokenizer and model from the checkpoint
tokenizer = AutoTokenizer.from_pretrained(model_checkpoint)
model = AutoModelForSequenceClassification.from_pretrained(model_checkpoint, num_labels=2)

Tokenization

This section tokenizes our data: the code prepares the text in the IMDB dataset for input into the model by converting each review into token IDs, padding short reviews, and truncating long ones.
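To make padding and truncation concrete before calling the real tokenizer, here is a toy sketch in plain Python. It is only an illustration: `pad_or_truncate` is a hypothetical helper, the token IDs are made up, and a real tokenizer handles special tokens more carefully when it truncates.

```python
def pad_or_truncate(token_ids, max_length, pad_id=0):
    """Return (input_ids, attention_mask), both exactly max_length long."""
    ids = token_ids[:max_length]        # truncation: drop anything past max_length
    mask = [1] * len(ids)               # 1 marks a real token
    padding = max_length - len(ids)     # how many pad tokens are needed
    return ids + [pad_id] * padding, mask + [0] * padding


short_ids, short_mask = pad_or_truncate([101, 2023, 102], max_length=5)
long_ids, long_mask = pad_or_truncate([101, 2023, 3185, 2001, 2307, 102], max_length=5)
print(short_ids, short_mask)
print(long_ids, long_mask)
```

The attention mask tells the model which positions are real tokens and which are padding. The actual tokenization for this tutorial uses the tokenizer loaded from the checkpoint: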

# Tokenization function
def tokenize(batch):
    # Pad every review to the model's maximum length so all examples share one shape
    return tokenizer(batch["text"], padding="max_length", truncation=True)

# Apply tokenization to the dataset
encoded_dataset = dataset.map(tokenize, batched=True)

Defining Evaluation Metrics

The code in this section defines a function to calculate evaluation metrics for the model’s predictions. It computes accuracy, precision, recall, and F1 score by comparing the predicted labels to the true labels, and returns the results as a dictionary of these metrics.
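As a sanity check on what these four numbers mean, the sketch below computes them by hand for a tiny set of made-up labels, treating class 1 as the positive class. `binary_metrics` is a hypothetical helper written for illustration only.

```python
def binary_metrics(labels, preds):
    """Compute accuracy, precision, recall, and F1 for binary labels (positive class = 1)."""
    tp = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 1)  # true positives
    fp = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 1)  # false positives
    fn = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 0)  # false negatives
    correct = sum(1 for y, p in zip(labels, preds) if y == p)

    accuracy = correct / len(labels)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "f1": f1, "precision": precision, "recall": recall}


labels = [1, 0, 1, 1, 0, 0, 1, 0]  # made-up true labels
preds = [1, 0, 0, 1, 1, 0, 1, 0]   # made-up predictions
metrics = binary_metrics(labels, preds)
print(metrics)
```

The function used for training relies on scikit-learn for the same calculations: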

# Define the evaluation metrics
def compute_metrics(pred):
    labels = pred.label_ids
    preds = pred.predictions.argmax(-1)
    precision, recall, f1, _ = precision_recall_fscore_support(labels, preds, average='binary')
    acc = accuracy_score(labels, preds)
    return {"accuracy": acc, "f1": f1, "precision": precision, "recall": recall}

Setting Training Arguments

The code in this section sets up training parameters for the model. It defines where to save results, the evaluation strategy, learning rate, batch sizes, the number of training epochs, and the weight decay for regularization. These settings are used to configure the training process managed by the Trainer class.
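To get a feel for what these settings imply, the sketch below estimates the number of optimizer steps from the batch size (16) and epoch count (3) used in this tutorial and the 25,000 reviews in the IMDB training split. It assumes a single device, no gradient accumulation, and that the final partial batch is kept.

```python
import math

train_examples = 25_000  # size of the IMDB training split
batch_size = 16          # per_device_train_batch_size
epochs = 3               # num_train_epochs

# Assumption: one device, no gradient accumulation, last partial batch kept.
steps_per_epoch = math.ceil(train_examples / batch_size)
total_steps = steps_per_epoch * epochs
print(steps_per_epoch, total_steps)
```

Halving the batch size would roughly double the number of steps per epoch. The configuration itself looks like this: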

# Define the training arguments
training_args = TrainingArguments(
    output_dir="./results",
    eval_strategy="epoch",  # called evaluation_strategy in transformers releases before 4.41
    learning_rate=2e-5,
    per_device_train_batch_size=16,
    per_device_eval_batch_size=16,
    num_train_epochs=3,
    weight_decay=0.01,
    logging_dir='./logs',
)

Training and Evaluation

The code below initializes the Trainer class to manage the training and evaluation of the model. It links the model, training arguments, tokenized training data, evaluation data, and the function to compute metrics. The trainer.train() method starts the training process, and trainer.evaluate() assesses the model’s performance on the test dataset.

# Initialize the Trainer
trainer = Trainer(
    model=model,
    args=training_args,
    train_dataset=encoded_dataset["train"],
    eval_dataset=encoded_dataset["test"],
    compute_metrics=compute_metrics,
)

# Train the model
trainer.train()

# Evaluate the model
trainer.evaluate()

And there you have it. You can use your trained model to run inference on any data or text you choose. Here’s the link to my Colab notebook.
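At inference time, a sequence classification model returns one raw score (logit) per class, and these still need to be turned into a label. The sketch below shows that last step in plain Python; the logits are made up, `logits_to_prediction` is a hypothetical helper, and the mapping assumes the IMDB convention of 0 for negative and 1 for positive reviews.

```python
import math

ID2LABEL = {0: "negative", 1: "positive"}  # IMDB convention: 0 = negative, 1 = positive


def logits_to_prediction(logits):
    """Convert raw class scores into (label, probability) using softmax."""
    shifted = [x - max(logits) for x in logits]  # subtract the max for numerical stability
    exps = [math.exp(x) for x in shifted]
    probs = [e / sum(exps) for e in exps]
    best = probs.index(max(probs))               # index of the most likely class
    return ID2LABEL[best], probs[best]


label, prob = logits_to_prediction([-1.2, 2.3])  # made-up logits for one review
print(f"{label} ({prob:.2f})")
```

With a trained model, the logits would come from passing a tokenized review through the model instead of being typed in by hand.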

Conclusion

This article explored the world of finetuning Large Language Models and their significant impact on natural language processing. We discussed the pretraining process, where LLMs are trained on large amounts of unlabeled text using self-supervised learning. We also delved into finetuning, which involves adapting a pre-trained model for specific tasks, and prompting, where models are provided with context to generate relevant outputs. Additionally, we examined different LLM finetuning techniques, such as feature extraction, full model finetuning, and adapter-based finetuning. Large Language Models have revolutionized NLP and continue to drive advancements in various applications.

Frequently Asked Questions

A. LLMs employ self-supervised learning techniques such as masked language modeling and next-word prediction, where they predict hidden or upcoming words from the surrounding context, effectively creating labeled data from unlabeled text.

A. Finetuning allows LLMs to adapt to specific tasks by adjusting their parameters, making them suitable for sentiment analysis, text generation, or document similarity tasks. It builds upon the knowledge the model acquired during pretraining.

A. Prompting involves providing context or instructions to LLMs to generate relevant outputs. Users can guide the model to answer questions, generate text, or perform specific tasks based on the given context by setting a specific prompt.
