What are Generative Adversarial Networks (GANs), and what are they used for?

A generative adversarial network (GAN) is a class of machine learning framework that, given a training set, learns to generate new data with the same statistics as that set. It does so with an architecture that pits two neural networks against each other to produce new, synthetic instances of data that closely resemble the real data. GANs were introduced in 2014 by Ian Goodfellow and his colleagues.

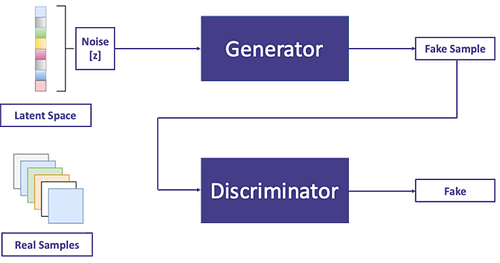

GANs are usually trained to generate images from random noise. A GAN has two parts: the generator, which produces new sample images, and the discriminator, which classifies images as real or fake. For example, we can train a GAN to generate images that look like the hand-written digits in the MNIST dataset. Beyond this, GANs are widely used for voice, image, and video generation.

- Generator: a model that generates new, plausible examples from the problem domain.

- Discriminator: a model that classifies the given examples as real (from the domain) or fake (generated).
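To make the interplay between the two parts concrete, here is a minimal sketch of a GAN on one-dimensional data, using only the Python standard library. Everything in it is an assumption made for illustration: the "dataset" is samples from a normal distribution with mean 4, the generator is a single affine map `a * z + b`, the discriminator is a one-feature logistic classifier, and the learning rate and step count are arbitrary. Real GANs use deep networks and a framework with automatic differentiation; the gradients below are simply written out by hand for this toy model.

```python
import math
import random

REAL_MEAN = 4.0  # assumed toy "dataset": samples drawn from N(4, 1)


def sigmoid(s):
    return 1.0 / (1.0 + math.exp(-s))


def generator(z, a, b):
    """Map a noise value z to a fake sample."""
    return a * z + b


def discriminator(x, w, c):
    """Estimated probability that x comes from the real data."""
    return sigmoid(w * x + c)


def train(steps=200, batch=64, lr=0.05, seed=0):
    rng = random.Random(seed)
    a, b = 1.0, 0.0  # generator parameters
    w, c = 0.0, 0.0  # discriminator parameters
    for _ in range(steps):
        real = [rng.gauss(REAL_MEAN, 1.0) for _ in range(batch)]
        noise = [rng.gauss(0.0, 1.0) for _ in range(batch)]
        fake = [generator(z, a, b) for z in noise]

        # Discriminator step: minimise -log D(real) - log(1 - D(fake)).
        gw = gc = 0.0
        for x in real:
            d = discriminator(x, w, c)
            gw += (d - 1.0) * x
            gc += d - 1.0
        for x in fake:
            d = discriminator(x, w, c)
            gw += d * x
            gc += d
        w -= lr * gw / batch
        c -= lr * gc / batch

        # Generator step: minimise -log D(fake), the non-saturating loss.
        ga = gb = 0.0
        for z, x in zip(noise, fake):
            d = discriminator(x, w, c)
            dx = -(1.0 - d) * w  # derivative of the loss w.r.t. the fake sample
            ga += dx * z
            gb += dx
        a -= lr * ga / batch
        b -= lr * gb / batch
    return a, b, w, c


a, b, w, c = train()
print(f"generator offset b = {b:.2f} (real mean is {REAL_MEAN})")
```

In this toy run the generator's offset `b` drifts from 0 toward the real mean, because that is the direction in which the discriminator is fooled more often. Plain GAN training like this can oscillate rather than settle, which is one reason training stability remains an active research topic.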

What makes Generative Adversarial Networks (GANs) so popular?

There are a variety of reasons why GANs are so exciting. One is that GANs were the first generative algorithms to give convincingly good results. They have also opened up many new directions for research, and GANs are considered among the most prominent research topics in machine learning of the last several years. They started a revolution in deep learning that has produced some major technological breakthroughs in the history of computer science and artificial intelligence.

There is an array of further reasons for the excitement over GANs, which we discuss below:

- First, GANs learn in an unsupervised way, so they do not need labeled data. This makes them powerful and approachable, because the tedious work of labeling and annotating the data is not required.

- Second, they offer a generative model that, coupled with an adversarial network, learns to produce progressively more realistic, high-quality natural images. Besides generating very high-quality synthetic data, this framework can also enhance the resolution of photos, generate images from input text, convert images from one domain to another, change the appearance of a face in an image, and more.

- Third, when you do not have ample data for the problem you are working on, you can use adversarial networks to “generate” more data instead of resorting to tricks like data augmentation. In addition, many tasks intrinsically require realistic samples from some distribution, and GANs have proved very useful in such cases.

- Most generative models, and GANs in particular, allow machine learning to work with multi-modal outputs: in many tasks a single input may correspond to many different correct outputs, each of which is acceptable.

- Another reason for the popularity of GANs is the power of adversarial training, which tends to produce sharp, distinct outputs rather than the blurry averages that a mean squared error (MSE) loss produces. This has led to applications such as super-resolution GANs, which perform better than models trained with MSE and various other popular loss functions.

- Last but not least, the sheer volume of research on GANs has grabbed the attention of many industries. We will see some of the major technological breakthroughs in the history of GANs that made them prominent.
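The points above about multi-modal outputs and blurry MSE averages can be illustrated with a tiny numerical sketch. The setup is an assumption chosen for clarity: one input has two equally valid outputs, -1.0 and +1.0, and we search for the single prediction that minimises MSE. The `random.choice` call at the end is only an idealised stand-in for a trained generator, not a GAN.

```python
import random


def mse(prediction, targets):
    """Mean squared error of one fixed prediction against all targets."""
    return sum((prediction - t) ** 2 for t in targets) / len(targets)


# Assumed toy task: the same input has two equally valid outputs.
targets = [-1.0, 1.0] * 50

# Scan candidate predictions in [-1.5, 1.5] for the MSE-optimal one.
candidates = [i / 100 for i in range(-150, 151)]
best = min(candidates, key=lambda p: mse(p, targets))

print(best)                # 0.0, the average of the two modes
print(mse(best, targets))  # 1.0
print(mse(1.0, targets))   # 2.0, a valid output scores worse under MSE

# Idealised stand-in for a generator: it commits to one of the valid modes.
sample = random.choice([-1.0, 1.0])
```

The MSE-optimal answer, 0.0, is not one of the acceptable outputs; for images, this averaging is what shows up as blur. A model that samples from the output distribution, as a GAN generator is trained to do, can instead commit to one sharp answer.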

What does the Media say?

What might the future hold concerning GANs?

Generative adversarial networks (GANs) have improved over the years, and despite all the hurdles of this past decade of research, they generate content that is becoming increasingly difficult to distinguish from real content. Comparing image generation in 2014 with today, few expected the quality to become this good. If progress continues at this pace, GANs will remain a very important research topic, provided the research community continues to accept GANs and their applications.

We do not yet know “what GANs can do for us”, as we are still talking about “what we can do for GANs” to make them more stable. Still, the future of GANs looks bright: before long, we could see machine-generated code, music, videos, and even essays and blogs. However, I can assure you that this blog post wasn’t written by a GAN (or was it?).

- The results of GANs in text and audio applications have not been as compelling as in images and videos; the most success has come in the visual domain. GANs have shown very impressive results on tasks such as transforming low-resolution images into high-resolution ones, which was difficult with conventional methods such as plain CNNs and was previously considered quite challenging. Architectures such as SRGAN and pix2pix have shown the potential of GANs here, and others such as StackGAN have proved useful for text-to-image synthesis, so it is not surprising to see GANs taking hold of the future.

- GANs have been used to produce data in the medical field, on which other AI models can train to find treatments for rare diseases that have not received much attention to date. For example, researchers have proposed an adversarial autoencoder (AAE) model that is trained on available biochemical data for existing drugs and then identifies and generates new candidate compounds as possible treatments for currently incurable diseases. Many researchers at prestigious universities and labs are actively working on GAN-based drug discovery programs in cancer, dermatological diseases, fibrosis, Parkinson’s, Alzheimer’s, ALS, diabetes, sarcopenia, and aging.

- One interesting application is expected in dentistry, where researchers are reportedly manufacturing dental crowns with the help of GANs. This would speed up the whole process for the patient, because a procedure that used to take weeks could be done with high precision in just a few hours.

- GANs are also being used to improve augmented reality (AR) scenes. For example, incomplete environment maps can be completed using the generative capabilities of GANs, which learn the statistical structure of the world. Other AR-related use cases involve environment texturing, such as enabling lighting and reflections.

- Another use case is generating training data for low-data regimes. With GANs it is possible to mix two sources of data to produce more realistic and useful training data. For instance, a research team at Apple showed that a large amount of unlabeled data can be fed to a GAN-powered refiner, which is trained to make base labeled synthetic data look more realistic. This technique can reduce the cost of building supervised datasets and help with a variety of machine learning tasks that were not addressed before.

- Many interesting research topics involving GANs remain open, including improving disentanglement, applying contrastive learning, and training more stable GANs. Tools that help practitioners and researchers move easily from proofs of concept to real-world applications will inspire more creative uses of GANs in the future.

- Differentially private GANs are an important research topic wherever data privacy is a concern. There are also great opportunities in training more efficient models for fast rendering and in supporting multimodal data, as required by hard problems like self-driving vehicles.
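As a rough idea of what differentially private training involves, one common recipe (in the style of DP-SGD) clips each example's gradient to a fixed norm and adds Gaussian noise before averaging. In a GAN this is often applied to the discriminator, since it is the network that sees the real data. The sketch below shows only that single step; the function name and parameters are hypothetical, and it does no privacy accounting, so it is an illustration and not a private training procedure.

```python
import math
import random


def private_mean_gradient(per_example_grads, clip_norm, noise_multiplier, rng):
    """Clip each gradient to clip_norm, sum, add Gaussian noise, then average."""
    dim = len(per_example_grads[0])
    total = [0.0] * dim
    for grad in per_example_grads:
        norm = math.sqrt(sum(g * g for g in grad))
        # Scale down only gradients whose norm exceeds the clipping bound.
        scale = min(1.0, clip_norm / norm) if norm > 0 else 1.0
        for i, g in enumerate(grad):
            total[i] += g * scale
    sigma = noise_multiplier * clip_norm  # noise scale tied to the clip bound
    n = len(per_example_grads)
    return [(t + rng.gauss(0.0, sigma)) / n for t in total]


rng = random.Random(0)
grads = [[3.0, 4.0], [0.3, 0.4]]  # made-up per-example gradients

# With no noise, only the clipping is visible: [3, 4] is rescaled to norm 1.
clipped_only = private_mean_gradient(grads, clip_norm=1.0, noise_multiplier=0.0, rng=rng)

# With noise, each coordinate is perturbed before averaging.
noisy = private_mean_gradient(grads, clip_norm=1.0, noise_multiplier=1.0, rng=rng)
```

Clipping bounds how much any single training example can influence an update, and the noise masks what influence remains. The price is a noisier training signal, which is part of why efficient private GANs are still an open problem.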

Last Words: Last year alone we saw several interesting developments involving GANs, including advancements in deep learning techniques, GANs being used in commercial applications, a maturing training process, and many more.

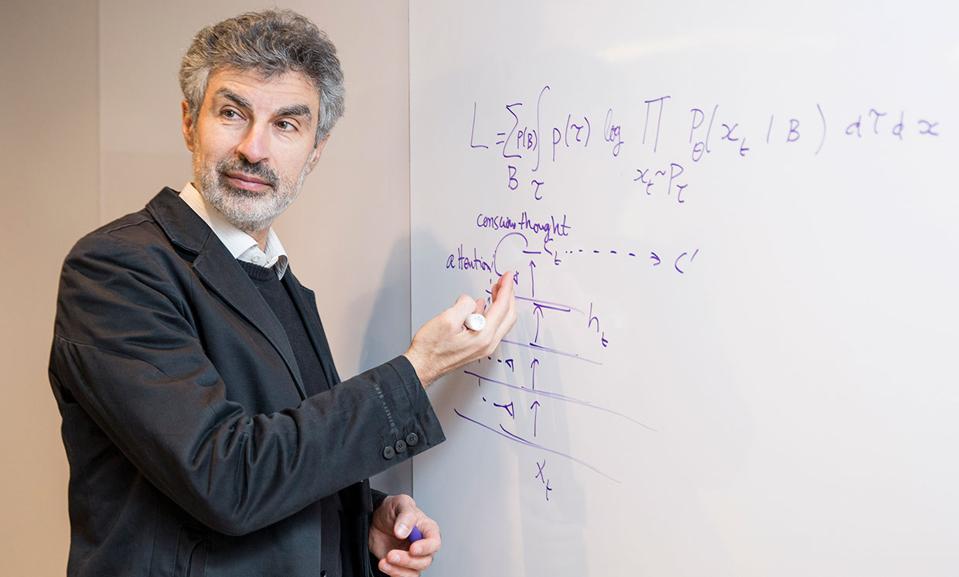

Facebook AI research director Yann LeCun has called GANs the most interesting idea of the decade in machine learning. Their evolution has been somewhat long and winding, and it very much continues to this day. GANs have their deficiencies, but they remain one of the most versatile neural network architectures in use today and continue to evolve and grow with time.

If you are reading this blog, I am sure that we share similar interests and work in similar industries. I have worked on various projects involving ML/AI/CV and have written blogs on various platforms to share my knowledge. Let’s connect on my social media platforms:

Github

Quora

DataScience+

ModularMachineLearning

The media shown in this article are not owned by Analytics Vidhya and are used at the Author’s discretion.