This article explores few-shot learning, a paradigm in which models make predictions from only a handful of labeled samples, emphasizing generalization over memorization. We begin by distinguishing it from conventional supervised learning and explaining its core principle of “learning to learn.” We then define key terms such as support set and meta-learning and show why they matter in few-shot scenarios.

This article was published as a part of the Data Science Blogathon.

Few-shot learning is the problem of making predictions based on a limited number of samples. It differs from standard supervised learning: the goal is not to have the model recognize the images in the training set and then generalize to the test set. Instead, the goal is to learn to learn. “Learning to learn” sounds abstract, so think of it this way.

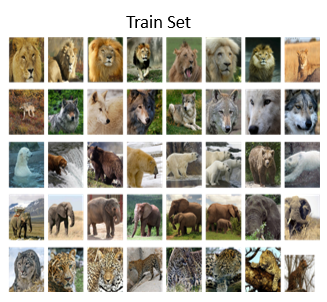

I train the model on a big training set. The goal of training is not to know what an elephant is and what a tiger is. Instead, the goal is to know the similarities and differences between objects.

After training, you can show two images to the model and ask whether they show the same kind of animal. The model has learned the similarities and differences between objects, so it can tell that the contents of the two images are the same kind of object. Take a look at our training data again.

The training data has 5 classes that do not include the squirrel class. Thus, the model is unable to recognize squirrels. When the model sees the two images, it does not know they are squirrels. However, the model knows they look alike. The model can tell you with high confidence that they are the same kind of objects.

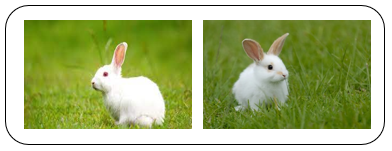

Likewise, the model has never seen a rabbit during training, so it does not know that the two images show rabbits. But the model knows the similarities and differences between things. It knows that the contents of the two images are very alike, so it can tell that they are the same kind of object.

Then I show the above two images to the model. Although the model has never seen a pangolin or a dog, it knows the two animals look quite different. The model believes they are different objects.


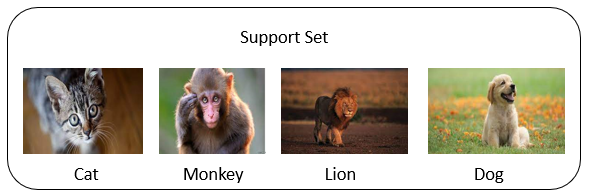

“Support set” is a term from meta-learning. It refers to the small set of labeled images provided at test time. Note the differences between the training set and the support set. The training set is big: every class has many samples, enough to train a deep neural network. In contrast, the support set is small: every class has at most a few samples. With only one or a few samples per class, it is impossible to train a deep neural network, so the support set can only provide additional information at test time. Here is the basic idea of few-shot learning.

We train a big model using a big training set. But rather than training the model to recognize specific objects in the training set, such as tigers and elephants, we train it to know the similarities and differences between objects.

You may have heard of meta-learning. Few-shot learning is a kind of meta-learning. Meta-learning is different from traditional supervised learning. Traditional supervised learning asks the model to recognize the training data and then generalize to unseen test data. In contrast, the goal of meta-learning is to learn to learn.

You bring a kid to the zoo. He is excited to see a fluffy animal in the water that he has never seen before, and he asks you, “What’s this?” Although he has never seen this animal before, he is a smart kid and can learn by himself. Now, you give the kid a set of cards. On every card, there is an animal and its name. The kid has never seen the animal in the water, and he has never seen the animals on the cards, either. But the kid is so smart that by taking a look at all the cards, he can name the animal in the water: it is the one that looks most similar to the animal on one of the cards. Teaching the kid to learn by himself is called meta-learning.

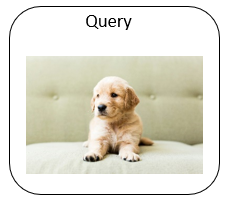

Before going to the zoo, the kid was already able to learn by himself. He knew the similarities and differences between animals. In meta-learning, the unknown animal is called a query. You give him a set of cards and let him learn by himself. The set of cards is the support set. Learning to learn by himself is called meta-learning. If there is only one card for every species, the kid learns to recognize the animal using only one card. This is called one-shot learning.

Here I compare traditional supervised learning with few-shot learning. In traditional supervised learning, we train a model using a big training set.

After the model is trained, we can use it to make predictions. We show a test sample to the model and it recognizes it. The test sample itself is new, but it belongs to a class the model saw during training. Few-shot learning is a different problem. Not only has the query sample never been seen before, it also comes from a class that was not in the training set. This is the main difference from traditional supervised learning.

Let’s look at a few important terms.

A support set is described as k-way n-shot: k-way means the support set has k classes, and n-shot means every class has n samples.
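To make the k-way n-shot terminology concrete, here is a minimal sketch that draws a support set with k classes and n samples per class from a labeled pool. The function name `sample_support_set`, the toy dataset, and the file names are hypothetical and only serve as illustration.

```python
import random

def sample_support_set(dataset, k, n, seed=None):
    """Draw a k-way n-shot support set.

    dataset: dict mapping a class label to a list of samples.
    Returns a dict with k labels, each mapped to n samples.
    """
    rng = random.Random(seed)
    # Only classes with at least n samples can contribute n shots.
    eligible = [label for label, items in dataset.items() if len(items) >= n]
    if len(eligible) < k:
        raise ValueError("not enough classes with n samples each")
    chosen = rng.sample(eligible, k)
    return {label: rng.sample(dataset[label], n) for label in chosen}

# Hypothetical toy pool of labeled image file names.
toy_dataset = {
    "fox": ["fox_1.png", "fox_2.png", "fox_3.png"],
    "otter": ["otter_1.png", "otter_2.png", "otter_3.png"],
    "hamster": ["hamster_1.png", "hamster_2.png"],
    "beaver": ["beaver_1.png", "beaver_2.png", "beaver_3.png"],
}

# A 3-way 2-shot support set: 3 classes, 2 samples per class.
support = sample_support_set(toy_dataset, k=3, n=2, seed=0)
for label, samples in support.items():
    print(label, samples)
```

Setting `n=1` gives a one-shot support set with a single sample per class.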

When performing few-shot learning, the prediction accuracy depends on the number of ways and the number of shots.

As the number of ways increases, the prediction accuracy drops.

You may ask why this happens.

Let’s look at the same example. Suppose the kid is given 3 cards and asked to choose one out of the three. This is 3-way 1-shot learning. What if the kid were given 6 cards? This would be 6-way 1-shot learning. Which one do you think is easier, 3-way or 6-way?

Obviously, 3-way is easier than 6-way. Choosing one out of 3 is easier than choosing one out of six.

Thus, 3-way has higher accuracy than 6-way.
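One way to see why more ways is harder is to look at the random-guessing baseline: a model that picks one of the k classes uniformly at random is right 1/k of the time. The short sketch below computes that floor; the helper name `chance_accuracy` is hypothetical, and a trained model should of course do better than chance.

```python
from fractions import Fraction

def chance_accuracy(num_ways):
    """Accuracy of guessing uniformly at random among num_ways classes."""
    if num_ways < 1:
        raise ValueError("num_ways must be at least 1")
    return Fraction(1, num_ways)

for k in (3, 6):
    print(f"{k}-way: random guessing is right {chance_accuracy(k)} of the time")
```

The baseline halves when going from 3-way to 6-way, so the same model has less room for error on the 6-way task.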

As the number of shots increases, the prediction accuracy improves. The phenomenon is easy to interpret. With more samples, the prediction becomes easier. Thus, 2-shot is easier than 1-shot.
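With more than one shot per class, the similarity scores of the individual support samples have to be combined into one score per class. The article does not say how. One simple option, assumed here purely for illustration, is to average the scores within each class. The scores below are made up.

```python
from collections import defaultdict

def class_scores(sample_scores):
    """Average per-sample similarity scores within each class.

    sample_scores: list of (label, similarity) pairs, one per support sample.
    Returns a dict mapping each label to its mean similarity.
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for label, score in sample_scores:
        totals[label] += score
        counts[label] += 1
    return {label: totals[label] / counts[label] for label in totals}

# Hypothetical 2-way 2-shot scores between one query and four support samples.
two_shot = [("cat", 0.7), ("cat", 0.5), ("dog", 0.9), ("dog", 0.8)]

averaged = class_scores(two_shot)
prediction = max(averaged, key=averaged.get)
print(averaged, prediction)
```

Averaging over several shots makes the class score less sensitive to a single unusual support sample, which is one intuition for why more shots help.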

The basic idea of few-shot learning is to train a function that predicts similarity.

After training, the learned similarity function can be used for making predictions for unseen queries. We can use the similarity function to compare the query with every sample in the support set and calculate the similarity scores. Then, find the sample with the highest similarity score and use it as the prediction.
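The prediction step described above can be sketched as follows. In practice the similarity function is learned by a neural network. As a stand-in, this sketch assumes every image has already been turned into a feature vector and uses plain cosine similarity. The vectors and the names `cosine_similarity` and `predict` are hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def predict(query, support):
    """Return the label and score of the support sample most similar to the query.

    support: list of (label, vector) pairs, one per support sample.
    """
    scored = [(cosine_similarity(query, vector), label) for label, vector in support]
    best_score, best_label = max(scored)
    return best_label, best_score

# Hypothetical 2-dimensional feature vectors for a 2-way 1-shot support set.
support = [("cat", [1.0, 0.0]), ("dog", [0.0, 1.0])]
label, score = predict([0.1, 0.9], support)
print(label, round(score, 3))
```

Swapping `cosine_similarity` for a learned similarity model leaves the rest of the procedure unchanged: score the query against every support sample and take the best match.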

Now I demonstrate how few-shot learning makes a prediction.

Given the query image, I want to know what the image is.

We can compare the query with every sample in the support set.

Comparing the query with the cat, the similarity function outputs a similarity score of 0.6.

The similarity score between the query and the monkey is 0.4.

Similarly, for the lion it is 0.2, and for the dog it is 0.9.

Among those similarity scores, 0.9 is the biggest. Thus, the model predicts the query is a dog. One-shot learning can be performed in this way. Given a support set, we can compute the similarity between the query and every sample in the support set to find the most similar sample.
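The worked example above boils down to taking the class with the highest score. A minimal sketch using the four scores from the text (the variable names are illustrative):

```python
# Similarity scores between the query and each support sample, as given in the text.
scores = {"cat": 0.6, "monkey": 0.4, "lion": 0.2, "dog": 0.9}

# The prediction is the class whose support sample is most similar to the query.
prediction = max(scores, key=scores.get)
print(prediction, scores[prediction])
```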

Few-shot learning has a wide range of applications in data science, including computer vision and robotics. It can be used for character recognition, image recognition, and classification. It also performs well on some NLP tasks such as translation, text classification, and sentiment analysis. In robotics, it can be used to train robots with only a small number of training examples.

If you do research on meta-learning, then you will need datasets for evaluating your model.

Here I introduce 2 datasets that are most widely used in research papers.

Omniglot is the most frequently used dataset. It is a dataset of handwritten characters.

Another commonly used dataset is Mini-ImageNet.

In conclusion, Few-shot learning emerges as a promising frontier in data science, offering a paradigm shift from traditional supervised learning approaches. Its emphasis on generalization from minimal data samples opens avenues for tackling real-world challenges where data scarcity prevails. By equipping models to discern underlying similarities and differences, Few-shot learning holds the key to enhanced adaptability and robustness. As we witness its applications across diverse domains, from computer vision to robotics, the transformative potential of Few-shot learning becomes increasingly evident. With ongoing research and innovation, Few-shot learning stands poised to revolutionize data-driven decision-making, paving the way for more agile and efficient machine learning systems.

A. Few-shot learning refers to a machine learning paradigm where a model is trained to make accurate predictions with only a small number of examples per class. This approach enables the model to generalize well to new, unseen data despite having limited training data. It’s particularly useful for tasks where collecting abundant labeled data is challenging or time-consuming.

A. Zero-shot learning is a concept where a model can generalize to new classes it has never seen during training. For instance, if a model is trained to recognize various dog breeds but hasn’t encountered a specific breed like “Xoloitzcuintli,” it can still make accurate predictions about it by leveraging shared attributes and information from related classes. This ability to infer without explicit training on a class characterizes the essence of zero-shot learning.

A. Few-shot learning finds applications across various domains such as computer vision, natural language processing, and robotics, enabling tasks like character recognition, image classification, and even training robots with minimal training sets.

A. Unlike traditional supervised learning, Few-shot learning focuses on training models to generalize from minimal examples rather than memorizing specific training instances, emphasizing “learning to learn” over mere recognition.

A. Prediction accuracy in Few-shot learning depends on the number of ways (classes) and shots (samples). As the number of ways increases, accuracy tends to drop due to increased complexity.

The media shown in this article are not owned by Analytics Vidhya and are used at the Author’s discretion.

