In machine learning, model accuracy and robustness are often enhanced through ensemble techniques, which combine predictions from multiple algorithms to achieve improved results. Among these, stacking is an advanced and flexible approach, often yielding better performance by leveraging diverse algorithms in unison. Stacking combines the strengths of different base learners, feeding their predictions into a meta-learner to make a final prediction that surpasses individual models’ capabilities. This article delves into ensemble stacking, exploring its theoretical foundations and guiding you through practical applications in machine learning and deep learning contexts. Whether you’re a beginner or an experienced practitioner, understanding stacking can elevate your approach to predictive modelling.

Overview:

- Learn how combining multiple models through ensemble methods enhances predictive accuracy by reducing bias and variance.

- Explore how bagging and boosting improve model stability and accuracy by leveraging multiple weak learners.

- Discover stacking’s unique approach of integrating diverse models with a meta-learner for optimal predictive results.

- Follow a practical example using Sklearn to build a stacked model that combines various classifiers.

- See how stacking multiple neural networks with a custom function boosts performance in deep learning models.

This article was published as a part of the Data Science Blogathon


What are Ensemble Techniques?

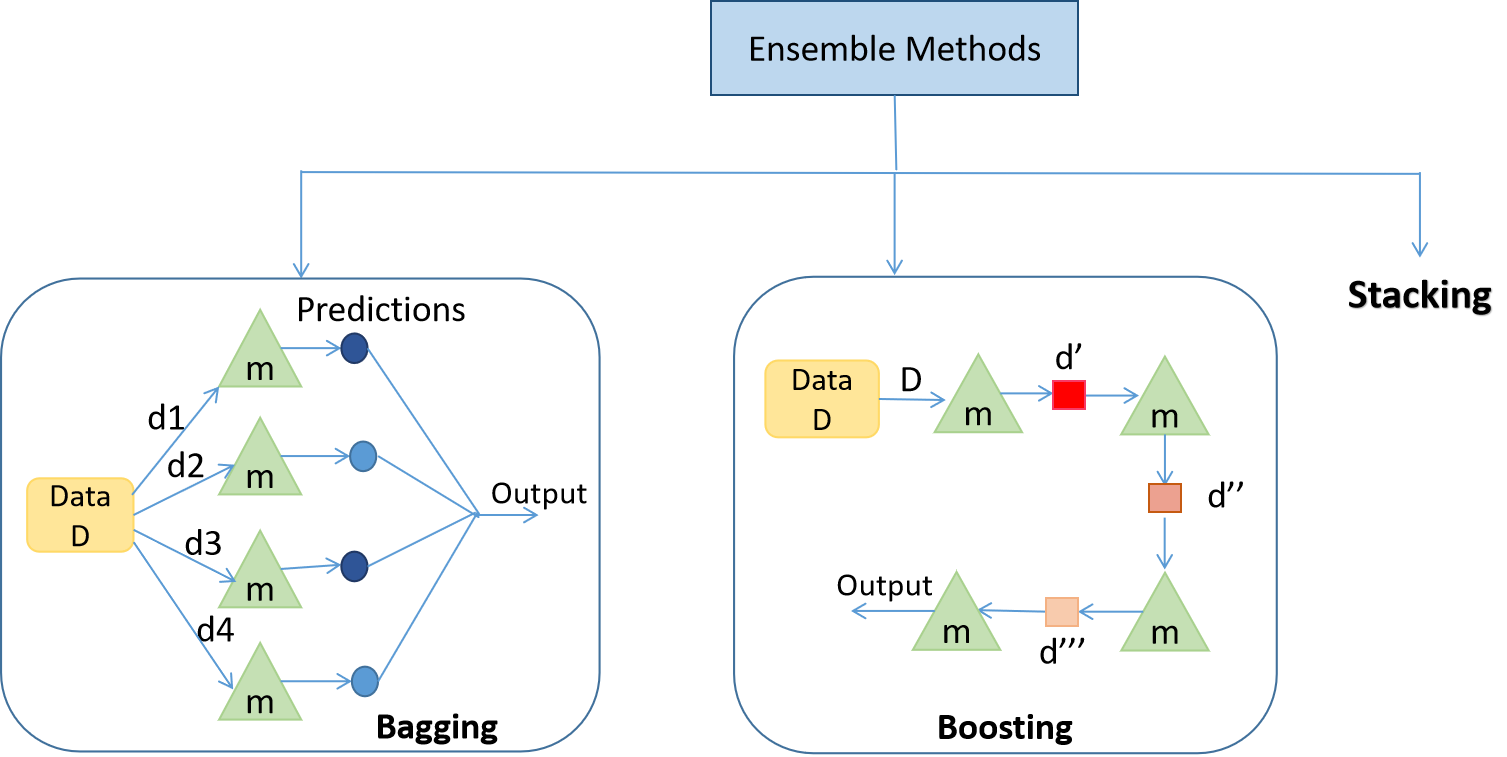

Ensemble techniques use multiple learning algorithms or models to produce one optimal predictive model. The resulting model typically performs better than any of the base learners taken alone. Other applications of ensemble learning include feature selection and data fusion. Ensemble techniques can be primarily classified into Bagging, Boosting, and Stacking.

What is Bagging?

Bagging is mainly applied in supervised learning problems. It involves two steps: bootstrapping and aggregation. Bootstrapping is a random sampling method in which samples are drawn from the data with replacement. As shown in Fig 1, the first step in bagging is bootstrapping, where a random sample of the data is fed to each base learner and the base learning algorithm is run on its sample. In aggregation, the outputs from the base learners are combined. The goal is to increase accuracy while substantially reducing variance. An example is Random Forest, where the predictions from the decision trees (the base learners) are produced in parallel. In regression problems, these predictions are averaged to give the final prediction; in classification problems, the mode is selected as the predicted class.
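
The two steps can be sketched in a few lines. This is a minimal illustration rather than how Random Forest is implemented internally: the synthetic dataset, the number of learners, and all variable names are assumptions chosen for the example.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.tree import DecisionTreeClassifier

# Synthetic binary-classification data (illustrative only).
X, y = make_classification(n_samples=300, n_features=8, random_state=0)

rng = np.random.default_rng(0)
n_learners = 25  # odd, so a majority vote over 0/1 labels cannot tie
trees = []

# Bootstrapping: each base learner is fit on a sample drawn with replacement.
for _ in range(n_learners):
    idx = rng.integers(0, len(X), size=len(X))
    trees.append(DecisionTreeClassifier(random_state=0).fit(X[idx], y[idx]))

# Aggregation: collect every tree's vote and keep the majority class.
votes = np.array([tree.predict(X) for tree in trees])  # (n_learners, n_samples)
bagged_pred = (votes.mean(axis=0) >= 0.5).astype(int)  # mode for 0/1 labels

bagged_accuracy = (bagged_pred == y).mean()
print("Training accuracy of the bagged trees:", bagged_accuracy)
```

For a regression problem, the last step would average the trees' outputs instead of taking the majority vote.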

What is Boosting?

Boosting is an ensemble method in which each predictor learns from the mistakes of the preceding predictor to make better predictions. The technique combines several weak base learners arranged sequentially (Fig 1), so that each weak learner learns from the previous weak learner's errors. In this way, one strong learner is formed from many weak ones. Examples include XGBoost and AdaBoost.
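
To make the sequential idea concrete, here is a rough sketch in the style of gradient boosting for squared error, where each new stump is fit to the residuals left by the ensemble so far. It is not the exact procedure used by AdaBoost or XGBoost; the toy data, learning rate, and number of rounds are assumptions for illustration.

```python
import numpy as np
from sklearn.tree import DecisionTreeRegressor

# Toy regression data: a noisy sine wave (illustrative only).
rng = np.random.default_rng(0)
X = rng.uniform(-3, 3, size=(200, 1))
y = np.sin(X[:, 0]) + rng.normal(0, 0.1, size=200)

learning_rate = 0.5
n_rounds = 20

prediction = np.full_like(y, y.mean())  # start from a constant model
mse_per_round = [np.mean((y - prediction) ** 2)]

for _ in range(n_rounds):
    residual = y - prediction  # mistakes of the ensemble so far
    stump = DecisionTreeRegressor(max_depth=1).fit(X, residual)
    prediction += learning_rate * stump.predict(X)  # correct part of the error
    mse_per_round.append(np.mean((y - prediction) ** 2))

print("MSE before boosting:", mse_per_round[0])
print("MSE after boosting: ", mse_per_round[-1])
```

Each stump on its own is a weak learner, but the training error shrinks as the corrections accumulate.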

Now that we have discussed ensembling and the two types of ensemble methods, it’s time to discuss Stacking!

What is Stacking?

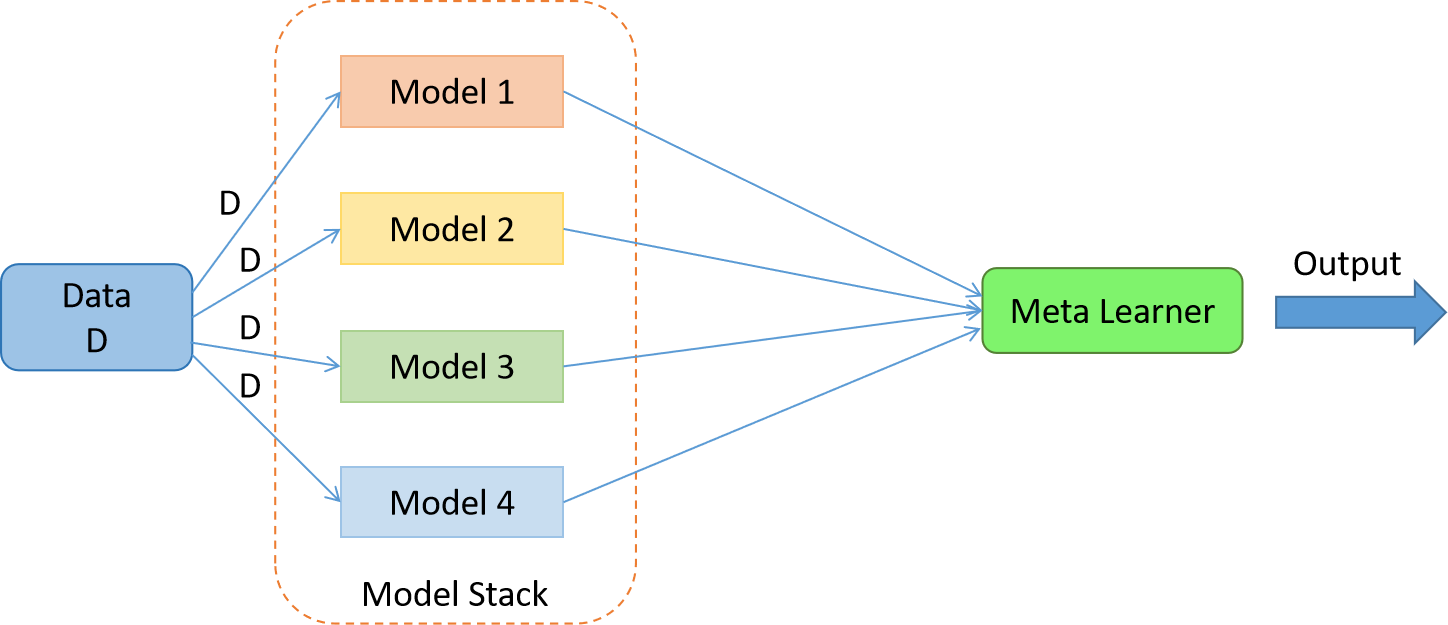

While bagging and boosting use homogeneous weak learners for the ensemble, stacking often considers heterogeneous weak learners, trains them in parallel, and combines them by training a meta-learner to output a prediction based on the different weak learners' predictions. The meta-learner takes the predictions as its features and the ground-truth values in data D as its target (Fig 2).

It attempts to learn how best to combine the input predictions to make a better output prediction. In an averaging ensemble, e.g. Random Forest, the model combines the predictions from multiple trained models. A limitation of this approach is that each model contributes the same amount to the ensemble prediction, irrespective of how well it performed. An alternative is a weighted average ensemble, which weights the contribution of each ensemble member by how much its predictions are trusted. The weighted average ensemble can improve on the simple model average ensemble.
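
The difference between the two averaging schemes is easy to see on a small example. The probabilities and trust weights below are made-up numbers, and the helper names are hypothetical; in practice the weights might be derived from validation accuracy.

```python
import numpy as np

# Hypothetical positive-class probabilities from three models for four samples.
probs = np.array([
    [0.9, 0.2, 0.6, 0.4],  # model 1
    [0.8, 0.4, 0.3, 0.7],  # model 2
    [0.6, 0.7, 0.5, 0.2],  # model 3
])

# Hypothetical trust scores, one per model.
weights = np.array([0.5, 0.3, 0.2])

def simple_average(probs):
    """Every model contributes equally."""
    return probs.mean(axis=0)

def weighted_average(probs, weights):
    """Each model contributes in proportion to its (normalized) weight."""
    weights = np.asarray(weights, dtype=float)
    weights = weights / weights.sum()
    return weights @ probs

avg = simple_average(probs)
wavg = weighted_average(probs, weights)
print("Simple average:  ", avg)
print("Weighted average:", wavg)
```

With these numbers, the weighted average follows model 1 more closely than the simple average does, because model 1 carries half of the total weight.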

A further generalization of this approach is to replace the linear weighted sum with a learning algorithm, such as Linear Regression (for regression problems) or Logistic Regression (for classification problems), that learns how to combine the predictions of the sub-models. This approach is called Stacking.

In stacking, an algorithm takes sub-model outputs as input and attempts to learn how to combine the input predictions best to make a better output prediction.
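
A from-scratch sketch shows what the meta-learner actually sees. It assumes a synthetic dataset and two arbitrary base learners, and it uses out-of-fold predictions so that the meta-learner is trained on predictions for rows the base learners had not seen; these choices are illustrative, not the only way to build a stack.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_predict, train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

# Synthetic data (illustrative only).
X, y = make_classification(n_samples=400, n_features=10, n_informative=5,
                           class_sep=2.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0)

base_learners = [DecisionTreeClassifier(random_state=0), KNeighborsClassifier()]

# Meta-features for training: out-of-fold positive-class probabilities,
# one column per base learner.
meta_train = np.column_stack([
    cross_val_predict(m, X_train, y_train, cv=5, method="predict_proba")[:, 1]
    for m in base_learners
])

# The meta-learner learns how to combine the base learners' predictions.
meta_learner = LogisticRegression().fit(meta_train, y_train)

# Refit each base learner on all training data, then build test meta-features.
meta_test = np.column_stack([
    m.fit(X_train, y_train).predict_proba(X_test)[:, 1] for m in base_learners
])

stacked_accuracy = meta_learner.score(meta_test, y_test)
print("Stacked accuracy on held-out data:", stacked_accuracy)
```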

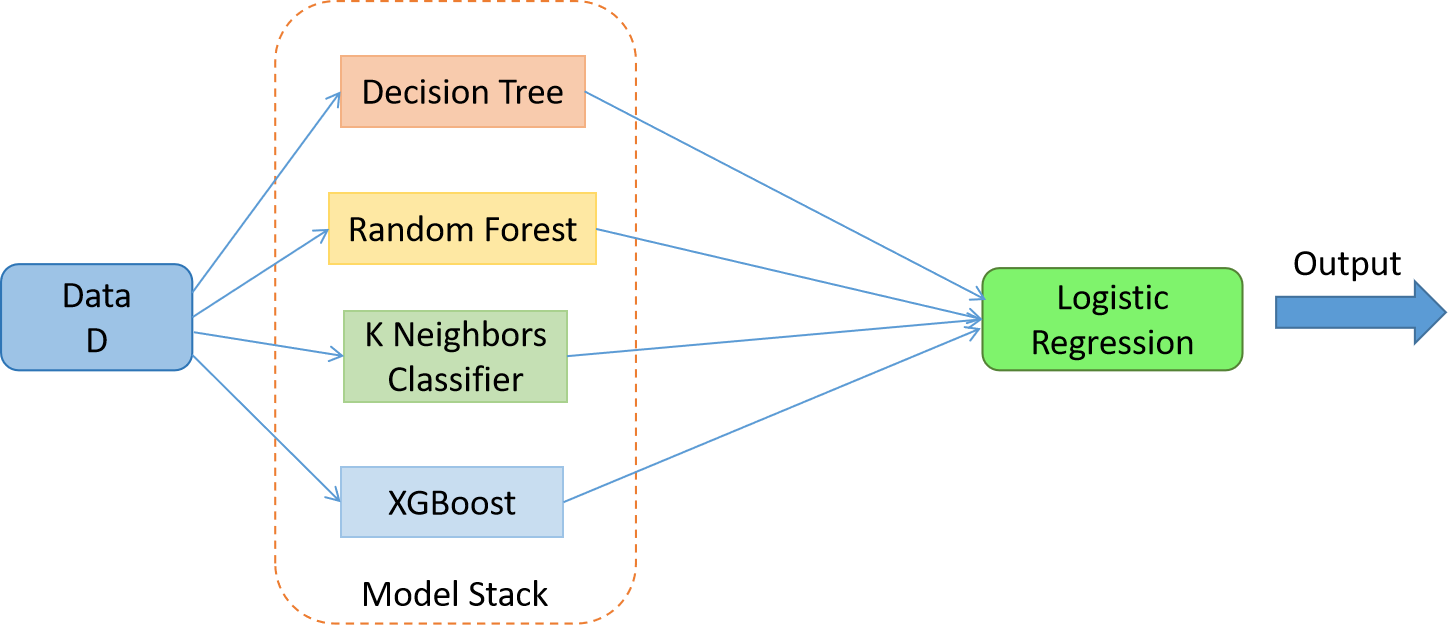

Stacking for Machine Learning

Fig 3. The stacked model with meta learner = Logistic Regression and the weak learners = Decision Tree, Random Forest, K Neighbors Classifier and XGBoost

Dataset – Sklearn Breast Cancer Dataset (Classification)

Note – The data preprocessing part isn’t included in the following code. Kindly go through the link for the full code.

Load the base learning algorithms that you want to stack –

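    from sklearn.tree import DecisionTreeClassifier
    from sklearn.ensemble import RandomForestClassifier
    from sklearn.neighbors import KNeighborsClassifier
    import xgboost

    dtc = DecisionTreeClassifier()
    rfc = RandomForestClassifier()
    knn = KNeighborsClassifier()
    xgb = xgboost.XGBClassifier()

Perform cross-validation and record the scores –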

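    from sklearn.model_selection import cross_val_score

    # X and y are the preprocessed features and labels
    clf = [dtc, rfc, knn, xgb]
    for algo in clf:
        score = cross_val_score(algo, X, y, cv=5, scoring='accuracy')
        print("The accuracy score of {} is:".format(algo), score.mean())

Perform stacking and cross-validation –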

    from sklearn.ensemble import StackingClassifier
    from sklearn.linear_model import LogisticRegression

    dtc = DecisionTreeClassifier()
    rfc = RandomForestClassifier()
    knn = KNeighborsClassifier()
    xgb = xgboost.XGBClassifier()

    clf = [('dtc', dtc), ('rfc', rfc), ('knn', knn), ('xgb', xgb)]  # list of (str, estimator)

    lr = LogisticRegression()  # meta-learner
    stack_model = StackingClassifier(estimators=clf, final_estimator=lr)
    score = cross_val_score(stack_model, X, y, cv=5, scoring='accuracy')
    print("The accuracy score of the stacked model is:", score.mean())

The stacked model gives an accuracy score of 0.969, higher than any of the base learning algorithms taken alone!
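
Since the preprocessing is not shown here, the following self-contained sketch may help as a starting point. It makes several assumptions that differ from the run above: it loads the dataset directly with load_breast_cancer, uses a single 70:30 split instead of cross-validation, and leaves out XGBoost so that only scikit-learn is required. Its accuracy will therefore not match the 0.969 reported above.

```python
from sklearn.datasets import load_breast_cancer
from sklearn.ensemble import RandomForestClassifier, StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.neighbors import KNeighborsClassifier
from sklearn.tree import DecisionTreeClassifier

X, y = load_breast_cancer(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=0)

estimators = [
    ("dtc", DecisionTreeClassifier(random_state=0)),
    ("rfc", RandomForestClassifier(n_estimators=50, random_state=0)),
    ("knn", KNeighborsClassifier()),
]

# cv=5 makes the meta-learner train on out-of-fold base predictions.
stack = StackingClassifier(estimators=estimators,
                           final_estimator=LogisticRegression(), cv=5)
stack.fit(X_train, y_train)

# transform() exposes the features the meta-learner receives:
# one positive-class probability per base learner for a binary problem.
meta_features = stack.transform(X_test)
test_accuracy = stack.score(X_test, y_test)

print("Meta-feature shape:", meta_features.shape)
print("Stacked test accuracy:", test_accuracy)
```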

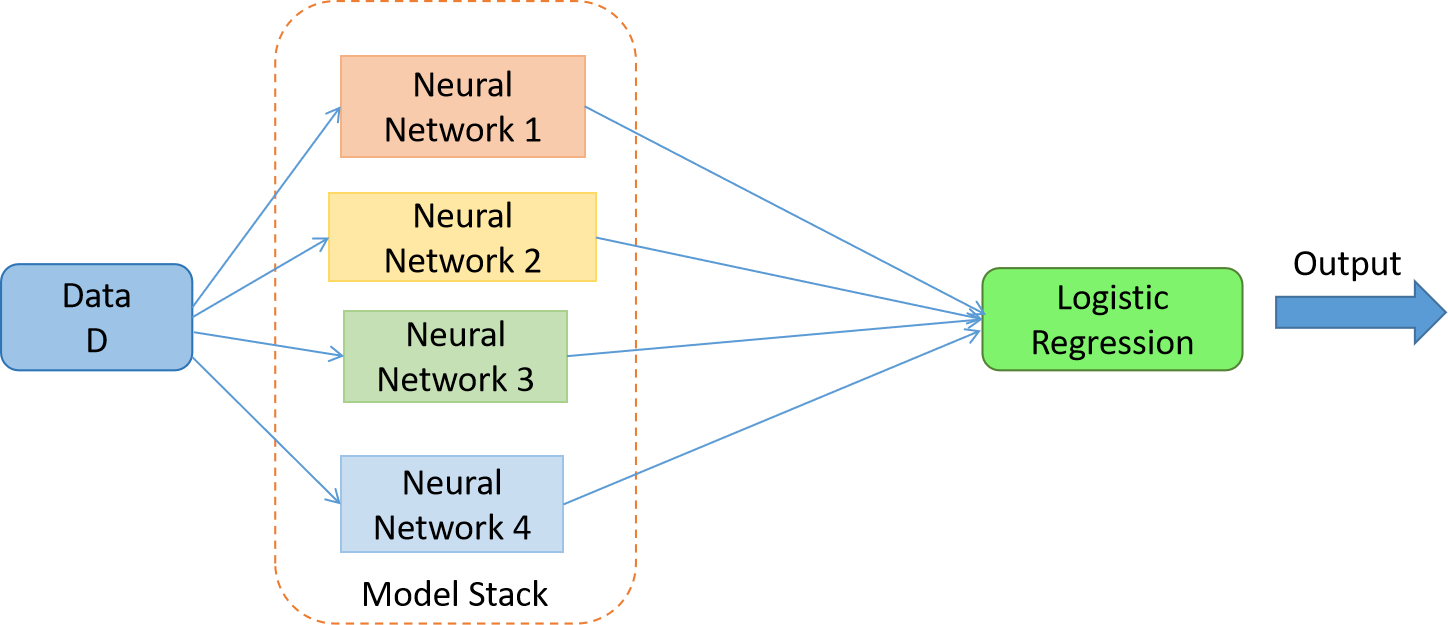

Stacking for Deep Learning

Dataset – Churn Modeling Dataset. Please go through the dataset to better understand the code below.

Fig 4. The stacked model with meta learner = Logistic Regression and weak learners = 4 Neural Networks

Note –

1. The data preprocessing part isn’t included in the following code. Kindly go through the link for the full code.

2. Keras doesn’t provide a function to create a stack of deep neural networks, so we create a function of our own.

3. The Churn Modeling dataset has 10,000 examples (rows). I split the data into training and testing sets with a ratio of 70:30.

Create neural network architecture –

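    # imports assume the Keras 2.x API that the rest of this code relies on
    from keras.models import Sequential
    from keras.layers import Dense, Dropout

    model1 = Sequential()
    model1.add(Dense(50, activation='relu', input_dim=11))
    model1.add(Dense(25, activation='relu'))
    model1.add(Dense(1, activation='sigmoid'))

I chose binary cross-entropy as the loss function, Adam as the optimizer, and the F1-score as the evaluation metric. Since the F1 score wasn’t available as a built-in Keras metric, we build our own –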

    from keras import backend as K

    def recall_m(y_true, y_pred):
        # recall = true positives / all actual positives
        true_positives = K.sum(K.round(K.clip(y_true * y_pred, 0, 1)))
        possible_positives = K.sum(K.round(K.clip(y_true, 0, 1)))
        recall = true_positives / (possible_positives + K.epsilon())
        return recall

    def precision_m(y_true, y_pred):
        # precision = true positives / all predicted positives
        true_positives = K.sum(K.round(K.clip(y_true * y_pred, 0, 1)))
        predicted_positives = K.sum(K.round(K.clip(y_pred, 0, 1)))
        precision = true_positives / (predicted_positives + K.epsilon())
        return precision

    def f1_m(y_true, y_pred):
        # F1 = harmonic mean of precision and recall
        precision = precision_m(y_true, y_pred)
        recall = recall_m(y_true, y_pred)
        return 2*((precision*recall)/(precision+recall+K.epsilon()))

Train the model and record the performance. I chose epochs = 100 –

    model1.compile(loss='binary_crossentropy', optimizer='adam', metrics=[f1_m])
    history = model1.fit(X_train, y_train, validation_data=(X_test, y_test), epochs=100)

Create 3 different neural network architectures and train them with the same settings –

    model2 = Sequential()
    model2.add(Dense(25, activation='relu', input_dim=11))
    model2.add(Dense(25, activation='relu'))
    model2.add(Dense(10, activation='relu'))
    model2.add(Dense(1, activation='sigmoid'))
    model2.compile(loss='binary_crossentropy', optimizer='adam', metrics=[f1_m])
    history2 = model2.fit(X_train, y_train, validation_data=(X_test, y_test), epochs=100)

    model3 = Sequential()
    model3.add(Dense(50, activation='relu', input_dim=11))
    model3.add(Dense(25, activation='relu'))
    model3.add(Dense(25, activation='relu'))
    model3.add(Dropout(0.1))
    model3.add(Dense(10, activation='relu'))
    model3.add(Dense(1, activation='sigmoid'))
    model3.compile(loss='binary_crossentropy', optimizer='adam', metrics=[f1_m])
    history3 = model3.fit(X_train, y_train, validation_data=(X_test, y_test), epochs=100)

    model4 = Sequential()
    model4.add(Dense(50, activation='relu', input_dim=11))
    model4.add(Dense(25, activation='relu'))
    model4.add(Dropout(0.1))
    model4.add(Dense(10, activation='relu'))
    model4.add(Dense(1, activation='sigmoid'))
    model4.compile(loss='binary_crossentropy', optimizer='adam', metrics=[f1_m])
    history4 = model4.fit(X_train, y_train, validation_data=(X_test, y_test), epochs=100)

Save the models

    model1.save('model1.h5')
    model2.save('model2.h5')
    model3.save('model3.h5')
    model4.save('model4.h5')

Load the models

    from keras.models import load_model

    # the custom metric must be passed so Keras can rebuild the saved models
    dependencies = {'f1_m': f1_m}

    # create a custom function to load the models
    def load_all_models(n_models):
        all_models = list()
        for i in range(n_models):
            # specify the filename (adjust the directory to wherever the .h5 files were saved)
            filename = '/content/model' + str(i + 1) + '.h5'
            # load the model
            model = load_model(filename, custom_objects=dependencies)
            # add the model to the list of weak learners
            all_models.append(model)
            print('>loaded %s' % filename)
        return all_models

    n_members = 4
    members = load_all_models(n_members)
    print('Loaded %d models' % len(members))

Perform stacking

We first train the meta-learner by providing examples from the test set to the weak learners, i.e. the 4 neural networks, and collecting their predictions. In this case, each model outputs one value for each example: a sigmoid probability that the customer exited (1) rather than stayed (0). Please go through the dataset for details. The 3,000 examples in the test set therefore result in four arrays (since there are 4 neural networks), each with the shape [3000, 1]. Note that the meta-learner is thus fit on the same test data that is later used to score it, so the reported score is likely optimistic; fitting it on a separate hold-out split would give a cleaner estimate.

We then combine these arrays into a three-dimensional array through the dstack() NumPy function, which stacks each new set of predictions along a third axis. For [3000, 1] inputs, this gives the shape [3000, 1, 4].

As input for the meta-learner, we require 3,000 examples with some number of features. Given that we have four models and each model makes 1 prediction for each example, we have 4 (1 x 4) features for each example provided to the meta-learner. We can transform the [3000, 1, 4] shaped predictions from the sub-models into a [3000, 4] shaped array to train the meta-learner, using the reshape() NumPy function to flatten the final two dimensions. The stacked_dataset() function implements this step.
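
The shape bookkeeping is easy to verify on small stand-in arrays. The sketch below replaces the four networks' outputs with random [6, 1] arrays (an assumption made purely to keep it runnable without the trained models) and prints the shapes that dstack() and reshape() produce.

```python
import numpy as np

n_rows, n_members = 6, 4
rng = np.random.default_rng(0)

# Stand-ins for model.predict(...): one sigmoid probability per row.
member_preds = [rng.random((n_rows, 1)) for _ in range(n_members)]

stackX = None
for yhat in member_preds:
    # dstack treats a 2-D (rows, 1) array as (rows, 1, 1) and joins on the last axis.
    stackX = yhat if stackX is None else np.dstack((stackX, yhat))

print(stackX.shape)  # (6, 1, 4)

# Flatten the last two dimensions: one column per ensemble member.
flat = stackX.reshape((stackX.shape[0], stackX.shape[1] * stackX.shape[2]))
print(flat.shape)  # (6, 4)
```

Column j of the flattened array holds the predictions of member j, which is exactly the feature layout the meta-learner needs.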

    from numpy import dstack

    # create stacked model input dataset as outputs from the ensemble
    def stacked_dataset(members, inputX):
        stackX = None
        for model in members:
            # make prediction
            yhat = model.predict(inputX, verbose=0)
            # stack predictions into [rows, probabilities, members]
            if stackX is None:
                stackX = yhat
            else:
                stackX = dstack((stackX, yhat))
        # flatten predictions to [rows, probabilities x members]
        stackX = stackX.reshape((stackX.shape[0], stackX.shape[1]*stackX.shape[2]))
        return stackX

We have created the dataset to train the meta-learner. We now fit the meta-learner on this data.

    from sklearn.linear_model import LogisticRegression

    # fit a model based on the outputs from the ensemble members
    def fit_stacked_model(members, inputX, inputy):
        # create dataset using ensemble
        stackedX = stacked_dataset(members, inputX)
        # fit the meta-learner
        model = LogisticRegression()  # meta-learner
        model.fit(stackedX, inputy)
        return model

    model = fit_stacked_model(members, X_test, y_test)

Make the predictions

    # make a prediction with the stacked model
    def stacked_prediction(members, model, inputX):
        # create dataset using ensemble
        stackedX = stacked_dataset(members, inputX)
        # make a prediction
        yhat = model.predict(stackedX)
        return yhat

    # evaluate model on test set
    yhat = stacked_prediction(members, model, X_test)
    score = f1_m(y_test/1.0, yhat/1.0)
    print('Stacked F Score:', score)

The stacked model gives an F-score of 0.6007 on test data, which is higher than any other neural network taken alone!
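
The arithmetic inside f1_m can be cross-checked without Keras. The sketch below mirrors the same rounding and clipping steps in NumPy; the labels and probabilities are made-up values, and f1_numpy and EPS are names introduced only for this illustration (EPS stands in for K.epsilon()).

```python
import numpy as np

EPS = 1e-7  # plays the role of K.epsilon()

def f1_numpy(y_true, y_pred):
    """NumPy mirror of f1_m: round predictions to 0/1, then compute F1."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    true_positives = np.sum(np.round(np.clip(y_true * y_pred, 0, 1)))
    possible_positives = np.sum(np.round(np.clip(y_true, 0, 1)))
    predicted_positives = np.sum(np.round(np.clip(y_pred, 0, 1)))
    precision = true_positives / (predicted_positives + EPS)
    recall = true_positives / (possible_positives + EPS)
    return 2 * (precision * recall) / (precision + recall + EPS)

y_true = [1, 0, 1, 1, 0, 1]
y_pred = [0.9, 0.6, 0.4, 0.8, 0.1, 0.3]  # rounds to [1, 1, 0, 1, 0, 0]

score = f1_numpy(y_true, y_pred)
print(round(score, 4))  # 0.5714
```

Here 2 of the 3 predicted positives are correct (precision 2/3) and 2 of the 4 actual positives are found (recall 1/2), which gives an F1 of 4/7.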

This shows that the stacked model can perform better than the individual models. It is one reason why stacking is a popular approach for winning hackathons and achieving state-of-the-art results.
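
One caveat about the score above: the meta-learner was fit on the same test set it was scored on. A minimal sketch of a cleaner protocol fits the meta-learner on one split and scores it on another. It uses simulated member probabilities, so the data, noise level, and variable names are all assumptions for illustration and not the churn models themselves.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import f1_score

rng = np.random.default_rng(0)
n_rows, n_members = 2000, 4

# Simulated labels and noisy "member" probabilities that lean towards the truth.
y = rng.integers(0, 2, size=n_rows)
noise = rng.normal(0, 0.25, size=(n_rows, n_members))
member_probs = np.clip(0.3 + 0.4 * y[:, None] + noise, 0, 1)

# First half: fit the meta-learner. Second half: used only for scoring.
half = n_rows // 2
meta_X_fit, meta_y_fit = member_probs[:half], y[:half]
meta_X_eval, meta_y_eval = member_probs[half:], y[half:]

meta_learner = LogisticRegression().fit(meta_X_fit, meta_y_fit)
holdout_f1 = f1_score(meta_y_eval, meta_learner.predict(meta_X_eval))

print("F1 on rows the meta-learner never saw:", holdout_f1)
```

Applied to the churn example, this would mean carving a validation split out of the data for fitting the meta-learner and keeping the test set for the final F-score only.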

Do check the GitHub repo for the code and the respective outputs.

Conclusion

Ensemble Stacking is a versatile and effective approach for improving the accuracy of machine learning and deep learning models. Combining different model predictions through stacking can lead to significantly better outcomes than using individual models. This technique has become a popular choice for practitioners aiming for state-of-the-art results, and it remains a favourite in competitive data science due to its potential to maximize predictive performance.

Frequently Asked Questions

Q1. What is stacking in machine learning?

A. Stacking is an ensemble learning technique that combines multiple machine learning models to improve predictive performance. Instead of averaging predictions, it uses a meta-learner to learn how best to integrate outputs from base models, allowing the final stacked model to make more accurate predictions.

Q2. How does stacking work?

A. In stacking, multiple base models (often diverse ones) generate predictions, which are then fed into a meta-learner. This meta-learner, typically a simple model like logistic regression, learns from the base model predictions to create a final, more robust output, optimizing prediction accuracy.

Q3. What is stacking used for?

A. Stacking is used to enhance model performance in classification and regression tasks by leveraging the strengths of multiple models. By combining diverse model predictions, stacking can improve accuracy and generalization, especially for complex datasets where single models may fall short.

Q4. What are the types of stacking?

A. The two main types of stacking are single-level stacking, where a single layer of base models feeds predictions into a meta-learner, and multi-level stacking (or stacking with layers), where additional meta-learners iteratively refine predictions from multiple layers of base models.

Q5. Why is stacking valuable?

A. Stacking is valuable because it combines the strengths of various models, addressing limitations like bias and variance in individual models. This approach can achieve higher accuracy, better generalization, and improved stability, making it effective for complex, high-dimensional, or noisy datasets.

Q6. What is the main advantage of stacking?

A. Stacking’s main advantage is its ability to yield more accurate predictions by combining complementary models. This reduces overfitting and underfitting while maximizing performance, as the meta-learner adapts to different patterns, enhancing the ensemble’s robustness and predictive power over single models.

The media shown in this article are not owned by Analytics Vidhya and are used at the Author’s discretion.
