This article was published as a part of the Data Science Blogathon.

Introduction

In this section, we will build a face detection algorithm using Caffe model, but only OpenCV is not involved this time. Instead, along with the computer vision techniques, deep learning skills will also be required, i.e. We will use the deep learning pre-trained model to detect the faces from the image of different angles, and the model that we will use is Caffe model.

Perks of Using the Deep Learning Model

We already have multiple options for detecting the face, so in this section, we will discuss the benefits of using the deep learning model for seeing the faces.

- Efficient processing: As we all know, deep learning models are best whenever we mention image processing, so for that reason, we are using the Caffe model, which is the pre-trained model.

- Accurate results: Whenever we use the deep learning model in image processing applications, we use neural networks, which will give better results when compared to the HAAR cascade classifier.

- Detecting multiple faces: In other methods, sometimes we are not able to see the multiple faces, but while using the ResNet-10 Architecture we can efficiently see the various faces through the network model (SSD).

Import the Libraries

We will first import the required libraries.

import os import cv2 import dlib from time import time import matplotlib.pyplot as plt

Deep Learning-based Face Detection

We have come across many algorithms that are used to detect faces both in real-time and in images. We use the deep learning approach that uses a ResNet-10 neural network architecture to see the multiple faces in the image, which is more accurate than the OpenCV HAAR cascade face detection method.

Loading the Deep Learning-based Face Detector

For loading the deep learning-based face detector, we have two options in hand,

- Caffe: The Caffe framework takes around 5.1 Mb as memory.

- Tensorflow: The TensorFlow framework will be taking around 2.7 MB of memory.

For loading the Caffe model we will use the cv2.dnn.readNetFromCaffe() and if we want to load the Tensorflow model, then cv2.dnn.readNetFromTensorflow() function will be used. Just make sure to use the appropriate arguments.

opencv_dnn_model = cv2.dnn.readNetFromCaffe(prototxt="models/deploy.prototxt",

caffeModel="models/res10_300x300_ssd_iter_140000_fp16.caffemodel")

opencv_dnn_model

Output:

Creating the Face Detection Function

So it’s time to make a face detection function which will be named as cvDnnDetectFaces()

Approach:

- The first step will be to retrieve the frame/image using the cv2.dnn.blobFromImage() function

- Then we will use the opencv_dnn_model.set input() function for the pre-processing image part that will make it ready to work as the input for the neural network.

- Then comes the opencv_dnn_model.forward() function that will give us the array that will hold the coordinates of the normalised bounding boxes and the confidence value of detection.

Note: The more the confidence value of detection, the more accurate the results will be.

def cvDnnDetectFaces(image, opencv_dnn_model, min_confidence=0.5, display = True):

image_height, image_width, _ = image.shape

output_image = image.copy()

preprocessed_image = cv2.dnn.blobFromImage(image, scalefactor=1.0, size=(300, 300),

mean=(104.0, 117.0, 123.0), swapRB=False, crop=False)

opencv_dnn_model.setInput(preprocessed_image)

start = time()

results = opencv_dnn_model.forward()

end = time()

for face in results[0][0]:

face_confidence = face[2]

if face_confidence > min_confidence:

bbox = face[3:]

x1 = int(bbox[0] * image_width)

y1 = int(bbox[1] * image_height)

x2 = int(bbox[2] * image_width)

y2 = int(bbox[3] * image_height)

cv2.rectangle(output_image, pt1=(x1, y1), pt2=(x2, y2), color=(0, 255, 0), thickness=image_width//200)

cv2.rectangle(output_image, pt1=(x1, y1-image_width//20), pt2=(x1+image_width//16, y1),

color=(0, 255, 0), thickness=-1)

cv2.putText(output_image, text=str(round(face_confidence, 1)), org=(x1, y1-25),

fontFace=cv2.FONT_HERSHEY_COMPLEX, fontScale=image_width//700,

color=(255,255,255), thickness=image_width//200)

if display:

cv2.putText(output_image, text='Time taken: '+str(round(end - start, 2))+' Seconds.', org=(10, 65),

fontFace=cv2.FONT_HERSHEY_COMPLEX, fontScale=image_width//700,

color=(0,0,255), thickness=image_width//500)

plt.figure(figsize=[20,20])

plt.subplot(121);plt.imshow(image[:,:,::-1]);plt.title("Original Image");plt.axis('off');

plt.subplot(122);plt.imshow(output_image[:,:,::-1]);plt.title("Output");plt.axis('off');

else:

return output_image, results

Code Breakdown:

- Here, the first step is to get the height and width of the image using the shape function.

- Now we will create a copy of the original image, and on this image only, we will draw the bounding boxes and write the confidence scores as well.

- Now we will create a blob from the image i.e. to make it ready in the input format for the neural network and perform the pre-processing on the image.

- Then just after that, we will resize the image to apply the mean subtraction on the channels of the image and convert the image from BGR to RGB format.

- With the help of the set input function, we will set up the input value for the architecture and get the current time from the system just before starting the face detection.

- Finally, we will initiate the face detection using the forward() function, and after that, note the current time after the face has been detected.

- As we are trying to detect the multiple faces then, here we will loop through each face that our model has seen

- Before drawing the bounding boxes and writing the confidence scores, we have to perform the following steps:

- The very first thing will be to get the confidence score and then check whether the confidence score is greater than the threshold value or not

- If yes, then we will get the coordinates of the face using indexing and then scale them just according to the original image.

- Based on our coordinates, we will draw the bounding box on the copy image based on those coordinates. Also, note that we are drawing the filled rectangle to make the confidence score visible to us.

- At last, we will write the confidence score on the image.

- So, by far, we have done all the image pre-processing steps, so now it’s time to display the image, and to do that, we have first to make sure that the display flag is True.

- If it’s true, we will use the put text method to print the time taken to detect the face on the image and then we will display the original and pre-processed image. Else it will merely return the output_image and results.

We are done with all the image pre-processing tasks, and we have also created a function. Now it’s time to display the visual results with the help of the function that we have created.

Read an Image to Detect the Face/Faces

image = cv2.imread('media/test.jpg')

cvDnnDetectFaces(image, opencv_dnn_model, display=True)

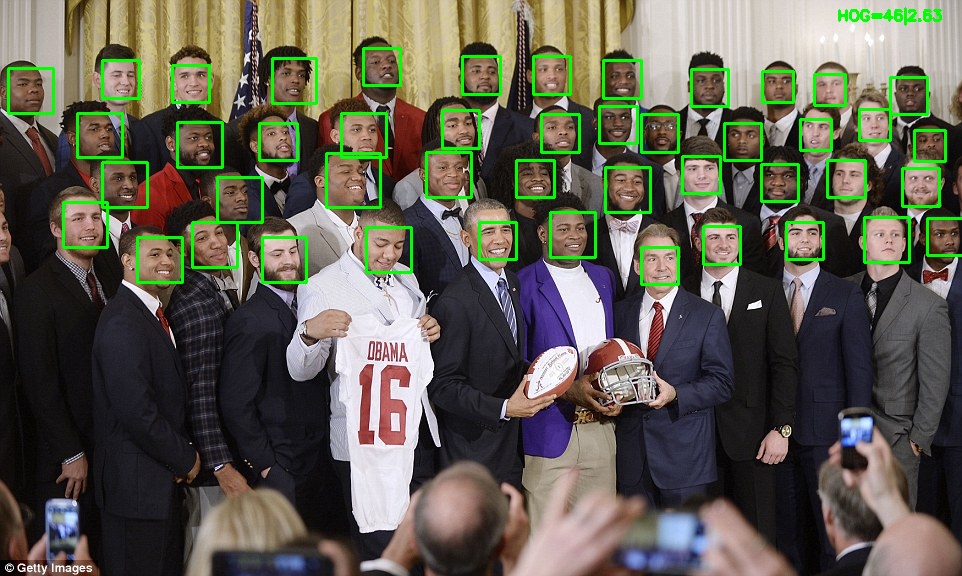

Output:

So from the above output, it is visible how well our model has performed to even detect the multiple faces in the image with a reasonable confidence score and show the time taken for the face to be detected.

Conclusion

Here we have come to the conclusion part of this article. So let’s discuss what we have learned so far in a nutshell or the key takeaways from the article:

- So the very first thing that we got to know about is an altogether new algorithm/architecture/model to detect the faces and their background, i.e. Deep learning-based face detection (Caffe/TensorFlow architecture).

- We have also learned about the main methods which are essential to perform the face detection using those model i.e. opencv_dnn_model.setInput() and opencv_dnn_model.forward().

- Then we learned how to detect the faces/face using the Caffe model and display the result in the form of bounding boxes and confidence scores.

Here’s the repo link to this article. I hope you liked my article on Face detection using the Caffe model. If you have any opinions or questions, then comment below.

Connect with me on LinkedIn for further discussion.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.

How to identify who are them using detected that with the database