This article was published as a part of the Data Science Blogathon.

Introduction

Kubernetes, popularly known as K8s, is a system for automating the deployment, scaling, and management of containerized applications.

An application’s containers are grouped into logical units, which can be easily managed and discovered. Kubernetes builds on 15 years of experience running production workloads at Google, combined with best-of-breed ideas and practices from the community.

Kubernetes is often adopted as part of a move to a microservices architecture, a step away from monolithic architectures toward services that are decoupled, isolated, and only as big as they need to be. Packaged as containers, these microservices can be launched in seconds and terminated after only minutes of use.

If the end result looks nearly identical to the user, why bother unbundling and containerizing your monolith? The payoff is in how the application is developed, maintained, and deployed. Microservices offer a number of advantages over monolithic architectures, such as simplified configuration management, more precise allocation of resources, and improved performance monitoring.

We will go over everything you need to know about preparing your applications for life inside cloud containers in this guide.

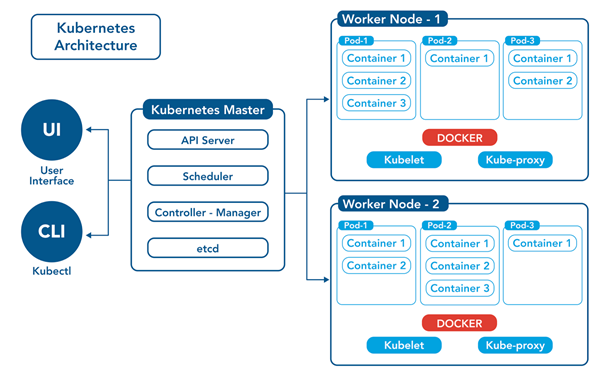

Essentials of Kubernetes Architecture

https://tinyurl.com/2tp33ydc

Containers: In general, a container is an isolated “micro-application” with all the necessary software packages and libraries.

Pods: Pods group together one or more containers and are a key part of the K8s architecture. If a pod fails, K8s can automatically replace it, add more CPU and memory, and even replicate it to scale out. Pods are assigned IP addresses. A “controller” manages a group of pods, which together form a scalable “workload.” The pods are connected via “services,” which represent the entirety of the workload. Even as pods are scaled or destroyed, a service keeps balancing web traffic across the pods that remain. It’s also important to note that storage volumes are attached to pods and are exposed to containers within those pods.

Controller: A controller is a control loop that compares the desired and actual states of the K8s cluster and works through the API server to create, update, and delete the resources it manages. The label selectors in the controller determine the set of pods that the controller may control. Examples of K8s controllers include the Replication Controller (scales pods), DaemonSet Controller (ensures each node gets one copy of a designated pod), Job Controller (runs a task until it completes), CronJob Controller (schedules jobs that run periodically), StatefulSet Controller (controls stateful applications), and Deployment Controller (controls stateless applications).
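To make the control-loop idea concrete, here is a minimal Python sketch of a single reconciliation pass. It is a toy model under stated assumptions, not the code Kubernetes runs: the `Pod` class, the `reconcile` function, and the `replica-N` naming scheme are all hypothetical, and the pod list stands in for what a real controller would read from and write to the API server.

```python
from dataclasses import dataclass, field


@dataclass
class Pod:
    name: str
    labels: dict = field(default_factory=dict)


def matches(selector: dict, pod: Pod) -> bool:
    """A pod matches when it carries every key/value pair in the selector."""
    return all(pod.labels.get(key) == value for key, value in selector.items())


def reconcile(desired_replicas: int, selector: dict, pods: list) -> list:
    """Run one pass of the loop and return the resulting list of pods."""
    # Actual state: only pods matching the label selector belong to this controller.
    owned = [pod for pod in pods if matches(selector, pod)]
    others = [pod for pod in pods if not matches(selector, pod)]

    # Too few pods: create replacements until the desired count is reached.
    # The names are illustrative; they only need to be unique in this sketch.
    taken_names = {pod.name for pod in pods}
    counter = 0
    while len(owned) < desired_replicas:
        candidate = f"replica-{counter}"
        counter += 1
        if candidate in taken_names:
            continue
        taken_names.add(candidate)
        owned.append(Pod(candidate, dict(selector)))

    # Too many pods: drop the surplus so actual matches desired.
    return others + owned[:desired_replicas]
```

Calling `reconcile(3, {"app": "web"}, pods)` on a cluster with one matching pod adds two more, and calling it again with a lower count removes the extras. Pods that do not match the selector are never touched.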

Node: A node is a physical or virtual server on which pods can be scheduled. Every node runs a container runtime, a kubelet, and kube-proxy (more on those three items to come). Node groups (also called autoscaling groups or node pools) can be scaled manually or automatically.

Volumes: K8s storage volumes allow long-term data storage that is accessible throughout the pod’s lifespan. A pod’s containers can share a storage volume. A node can also access a storage volume directly.

Services: A Kubernetes service is an abstraction that exposes a collection of cooperating pods as a single network endpoint.

In a service configuration file, a “label selector” defines a collection of pods that make up a single service. The service feature gives the service an IP address and a DNS name, and it round-robins traffic to addresses that match the label selector. This approach effectively allows the frontend to be “decoupled” (or abstracted) from the backend.
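The selector and round-robin behaviour described above can be sketched in a few lines of Python. This is an illustration only: the pod records, IP addresses, and function names are made up, and the way traffic is really distributed depends on how kube-proxy is configured in a given cluster.

```python
from itertools import cycle


def select_endpoints(selector: dict, pods: list) -> list:
    """Return the IPs of pods whose labels contain every selector pair."""
    return [
        pod["ip"]
        for pod in pods
        if all(pod["labels"].get(key) == value for key, value in selector.items())
    ]


# Hypothetical pods; in a real cluster this list comes from the API server.
pods = [
    {"ip": "10.0.0.1", "labels": {"app": "backend"}},
    {"ip": "10.0.0.2", "labels": {"app": "backend"}},
    {"ip": "10.0.0.3", "labels": {"app": "frontend"}},
]

# The "service": one stable entry point that rotates over matching pods.
backend = cycle(select_endpoints({"app": "backend"}, pods))
first_four = [next(backend) for _ in range(4)]
```

The frontend only ever talks to `backend`; which pod answers a given request is decided behind that single name, which is the decoupling the paragraph above describes.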

Kube-proxy: The Kube-proxy component is installed on each node and is responsible for maintaining network services on worker nodes. It is also in charge of maintaining network rules, allowing network communication between services and pods, and routing network traffic.

Kubelet: A kubelet runs on each node and sends information to Kubernetes about the state and health of containers.

Container Runtime: The container runtime is the software that allows containers to run. containerd, Docker, and CRI-O are popular container runtime examples.

Control Plane: The control plane (historically called the Kubernetes master) is the cluster’s main controlling unit. It manages the entire K8s cluster, including workloads, and serves as the cluster’s communication interface. The Kubernetes control plane is made up of several parts that together enable Kubernetes to run highly available applications. The following are the primary components of the Kubernetes control plane:

- Etcd: etcd is a key-value store that holds the cluster’s overall configuration data (for example, the state and details of each pod), and so represents the state of the cluster on the control plane nodes. Controllers, working through the API server, watch this state and make changes to bring the cluster in line with the desired state recorded in etcd.

- API Server: The API server exposes the Kubernetes API, exchanging JSON data over HTTP. It serves as Kubernetes’s internal and external interface. It processes and validates requests, updates the corresponding state objects in etcd, and lets users configure workloads across the cluster.

- Scheduler: The scheduler evaluates nodes to determine where an unscheduled pod should be placed, based on CPU and memory requests, policies, label affinities, and workload data locality.

- Controller Manager: The controller manager is a single process that oversees all of Kubernetes’ controllers. While the controllers are logically separate processes, they are compiled into a single binary and run as a single process to simplify things.
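The scheduler's job, as described in the list above, can be pictured as a filter step followed by a scoring step. The Python sketch below is a simplified illustration, not the real scheduler: the `Node` fields, the `schedule` function, and in particular the scoring rule (prefer the node left with the most spare CPU, then the most spare memory) are assumptions chosen for brevity, and policies, affinities, and data locality are left out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    free_cpu_millicores: int
    free_memory_mib: int


def schedule(cpu_request: int, memory_request: int, nodes: list) -> Optional[str]:
    """Pick a node for a pod, or return None if no node can hold it."""
    # Filter: keep only nodes with enough free CPU and memory for the requests.
    feasible = [
        node
        for node in nodes
        if node.free_cpu_millicores >= cpu_request
        and node.free_memory_mib >= memory_request
    ]
    if not feasible:
        return None  # the pod stays unscheduled

    # Score (hypothetical rule): most CPU left after placement, ties broken on memory.
    best = max(
        feasible,
        key=lambda node: (
            node.free_cpu_millicores - cpu_request,
            node.free_memory_mib - memory_request,
        ),
    )
    return best.name
```

With nodes offering (500 m, 1024 MiB), (2000 m, 4096 MiB), and (4000 m, 512 MiB), a pod requesting 1000 m of CPU and 1024 MiB of memory can only land on the second node: the first lacks CPU and the third lacks memory.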

Prerequisites

One or more machines running one of:

Minimal required memory & CPU (cores)

Cluster Setup

To manage your cluster you need to install kubeadm, kubelet, and kubectl.

Steps to follow

- Configure IP Tables

- Disable SWAP

- Install Docker & configure

- Install Kubeadm-Kubelet & Kubectl

- Create Default Audit Policy

- Install NFS Client Drivers
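Several of the steps above are host checks that can be scripted. As one small example, the Python sketch below checks whether swap is off by parsing the contents of `/proc/meminfo`, which on Linux reports a `SwapTotal` line. The function names are hypothetical, and the check assumes a Linux host; it verifies the state of swap but does not disable it.

```python
def swap_total_kib(meminfo_text: str) -> int:
    """Return the SwapTotal value (in kB) from /proc/meminfo-style text."""
    for line in meminfo_text.splitlines():
        if line.startswith("SwapTotal:"):
            # A line looks like: "SwapTotal:       2097148 kB"
            return int(line.split()[1])
    raise ValueError("SwapTotal entry not found")


def swap_is_disabled(meminfo_text: str) -> bool:
    """Swap counts as disabled when no swap space is configured at all."""
    return swap_total_kib(meminfo_text) == 0
```

On a Linux machine this could be called as `swap_is_disabled(open("/proc/meminfo").read())` before running the remaining setup steps.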

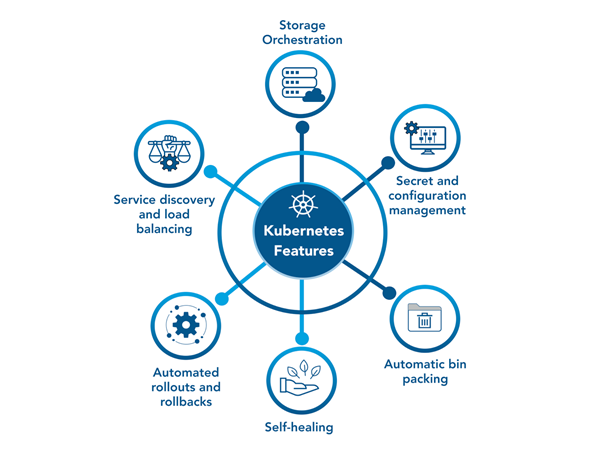

Key Features of Kubernetes

https://tinyurl.com/yc8rdrhh

Service Discovery and Load Balancing: Kubernetes assigns a single DNS name and an IP address to each group of pods. When there is a high volume of traffic, K8s automatically balances the load across a service that may include multiple pods.

Automated Rollouts and Rollbacks: K8s can deploy new pods and swap them with existing pods. It can also change configurations without affecting end users. Kubernetes also includes an automated rollback feature. If a task is not completed successfully, this rollback functionality can undo the changes.

Secret and Configuration Management: Configuration and secret information can be securely stored and updated without the need to rebuild images. Stack configuration does not necessitate the disclosure of secrets, reducing the risk of data compromise.

Storage Orchestration: K8s can be used to mount a variety of storage systems, including local storage, network storage, and public cloud providers.

Automatic Bin Packing: Kubernetes algorithms attempt to allocate containers efficiently based on configured CPU and RAM requirements. This function assists businesses in optimizing resource utilization.

Self-Healing: Kubernetes performs health checks on pods and restarts containers that fail. If a pod fails, K8s does not allow connections to it until its health checks pass again.
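The self-healing behaviour can be pictured with a short Python sketch. It is a deliberately simplified model: `PodState`, `run_health_checks`, and `routable` are hypothetical names, the `probe` callable stands in for a real health check, and a single probe is used where Kubernetes distinguishes between checks that trigger restarts and checks that gate traffic.

```python
from dataclasses import dataclass


@dataclass
class PodState:
    name: str
    ready: bool = False
    restarts: int = 0


def run_health_checks(pods: list, probe) -> None:
    """Apply one round of health checks; probe(name) returns True if healthy."""
    for pod in pods:
        if probe(pod.name):
            pod.ready = True
        else:
            # A failed check takes the pod out of rotation and counts a restart,
            # standing in for the kubelet restarting the failed container.
            pod.ready = False
            pod.restarts += 1


def routable(pods: list) -> list:
    """Only pods that passed their latest check may receive connections."""
    return [pod.name for pod in pods if pod.ready]
```

A pod that fails a round is excluded from `routable` until a later round passes, which mirrors the rule that connections are withheld until health checks succeed.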

Difference Between Kubernetes and Docker


Real-World Case Studies

Babylon

Challenge

Babylon’s products rely heavily on machine learning and artificial intelligence, and in 2019 the company did not have enough computing power to run a particular experiment. The company was also growing quickly (from 100 to 1,600 in three years) and planned to expand to other countries.

Solution

Babylon had moved its user-facing applications to a Kubernetes platform in 2018, so the infrastructure team turned to Kubeflow, a toolkit that enables machine learning on Kubernetes. “We designed a Kubernetes core server, we deployed Kubeflow, and we orchestrated the whole experiment, which turned out very well,” says AI Infrastructure Lead Jérémie Vallée. The team is developing a self-service platform for AI training on top of Kubernetes.

Impact

As opposed to waiting hours or days for access to computing resources, teams can access them instantly. It used to take 10 hours for a clinical validation to be completed; now it takes under 20 minutes. Additionally, Babylon’s cloud-native platform has enabled it to expand internationally.

Booking.com

Challenge

Booking.com implemented OpenShift in 2016, which allowed product developers to access infrastructure faster. Because Kubernetes was abstracted away from the developers, however, the infrastructure team became a “knowledge bottleneck” whenever challenges arose, and scaling that support became unsustainable.

Solution

After using OpenShift for a year, the platform team decided to build its own vanilla Kubernetes platform and to ask developers to learn some Kubernetes in order to use it. “This is not a magical platform,” says Ben Tyler, Principal Developer, B Platform Track. “You can’t use it just by following the instructions. Developers need to learn, and we’re going to do everything we can to help them learn.”

Impact

Kubernetes adoption has grown despite the learning curve. Before containerization, creating a new service could take developers hours, or weeks if they didn’t know Puppet. On the new platform, it takes as little as 10 minutes. Over 500 new services were built in the first eight months.

Spotify

Challenge

Launched in 2008, the audio-streaming platform has grown to over 200 million monthly active users worldwide. Jai Chakrabarti, Director of Engineering, Infrastructure, and Operations, says, “We aim to empower creators and enable an immersive listening experience for all of our consumers today and into the future.” Spotify, an early adopter of microservices and Docker, had containerized microservices running across its fleet of VMs using Helios, a homegrown container orchestration system. By late 2017, it was clear that “having a small team working on the features was just not as efficient as adopting something that was supported by a much larger community,” he says.

Solution

“We saw the incredible community that had grown up around Kubernetes and wanted to be a part of that,” Chakrabarti says. Kubernetes offered more features than Helios. Furthermore, “we wanted to benefit from increased velocity and lower costs, as well as align with the rest of the industry on best practices and tools.” At the same time, the team wished to contribute its knowledge and influence to the thriving Kubernetes community. The migration, which would take place while Helios kept running, was expected to go smoothly because “Kubernetes fits very nicely as a complement and now as a replacement to Helios,” according to Chakrabarti.

Impact

The team spent much of 2018 addressing the core technology issues required for a migration, which began late that year and is a major focus for 2019. “A small percentage of our fleet has been migrated to Kubernetes, and we’ve heard from our internal teams that they have less of a need to focus on manual capacity provisioning and more time to focus on delivering features for Spotify,” says Chakrabarti. According to Site Reliability Engineer James Wen, the largest service currently running on Kubernetes handles approximately 10 million requests per second as an aggregate service and benefits greatly from autoscaling. “Previously, teams would have to wait for an hour to create a new service and get approval,” he adds.

Adidas

Challenge

In recent years, the Adidas team was pleased with its technological choices—but access to all of the tools was a challenge. “Just to get a developer VM,” says Daniel Eichten, Senior Director of Platform Engineering, “you had to send a request form, give the purpose, give the title of the project, who’s responsible, give the internal cost center a call so that they can do recharges.” “In the best-case scenario, you received your machine within half an hour. Worst-case scenario is a half-week or even a week.”

Solution

To improve the process, “we started from the developer point of view,” and looked for ways to shorten the time it took to get a project up and running and into the Adidas infrastructure, says Senior Director of Platform Engineering Fernando Cornago. They found the solution with containerization, agile development, continuous delivery, and a cloud-native platform that includes Kubernetes and Prometheus.

Impact

Six months after the project began, the Adidas e-commerce site was running entirely on Kubernetes. The e-commerce site’s load time was cut in half. The frequency of releases increased from every 4-6 weeks to 3-4 times per day. Adidas now runs 40% of its most critical, impactful systems on its cloud-native platform, with 4,000 pods, 200 nodes, and 80,000 builds per month.

Benefits

- Using Kubernetes and its huge ecosystem can improve your productivity

- Kubernetes and a cloud-native tech stack attract talent

- Kubernetes checks the health of applications and corrects failures automatically

- Kubernetes is a future-proof solution

- Kubernetes helps your application run more reliably

- Kubernetes can be cheaper than its alternatives

- It was originally developed by Google, which brought years of valuable industry experience to the table.

- Largest community among container orchestration tools.

- Offers a variety of storage options, including on-premises SANs and public clouds.

- Adheres to the principles of immutable infrastructure.

- Control and automate deployments and updates

- Save money by optimizing infrastructural resources thanks to the more efficient use of hardware

- Scale resources and applications in real-time

- Solve many common problems derived from the proliferation of containers by organizing them in “pods”

Disadvantages

- Kubernetes can be overkill for simple applications

- The transition to Kubernetes can be cumbersome

- Limited functionality according to the availability in the Docker API.

- Highly complex installation and configuration process

- Not compatible with existing Docker CLI and Compose tools

- Complicated manual cluster deployment and automatic horizontal scaling setup

Kubernetes Ecosystem Glossary

Cluster

Is a set of machines individually referred to as nodes used to run containerized applications managed by Kubernetes.

Node

Is either a virtual or physical machine. A cluster consists of a master node and a number of worker nodes.

Cloud Container

Is an image that contains software and its dependencies.

Pod

Is a single container or a set of containers running on your Kubernetes cluster.

Deployment

Is an object that manages replicated applications represented by pods. Pods are deployed onto the nodes of a cluster.

ReplicaSet

Ensures that a specified number of pod replicas are running at one time.

Service

Describes how to access applications represented by a set of pods. Services typically describe ports and load balancers and can be used to control internal and external access to a cluster.

Containers: A standalone, executable package of software that includes all necessary code and dependencies.

Immutable Architecture: An infrastructure paradigm where servers are never modified, only replaced.

Infrastructure-as-Code: The practice of provisioning and managing data center resources using human-readable declarative definition files (e.g., YAML).

Microservices: A series of independently deployable software services that, together, make up an application.

Vertical Scaling: Where you allocate more CPU or memory to your individual machines or containers.

Horizontal Scaling: Where you add more machines or containers to your load-balanced computing resource pool.
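For horizontal scaling, the Kubernetes documentation for the Horizontal Pod Autoscaler gives a simple rule for choosing a replica count: multiply the current number of replicas by the ratio of the observed metric to the target metric, and round up. The Python sketch below works through that arithmetic. The function and parameter names are illustrative, and the real autoscaler adds tolerances and stabilization behaviour that are not modelled here.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float) -> int:
    """desired = ceil(current_replicas * current_metric / target_metric)."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    return math.ceil(current_replicas * (current_metric / target_metric))


# Four replicas averaging 90% CPU against a 60% target: scale out.
scale_out = desired_replicas(4, 90, 60)

# Five replicas averaging 30% CPU against a 60% target: scale in.
scale_in = desired_replicas(5, 30, 60)
```

In the first case the ratio is 1.5, so four replicas become six. In the second the ratio is 0.5, and rounding 2.5 up gives three replicas.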

Conclusion

Kubernetes is a sophisticated system. It has, however, proven to be the most resilient, scalable, and performant platform for orchestrating highly available container-based applications, supporting decoupled and diverse stateless and stateful workloads, and providing automated rollouts and rollbacks.

There is no simple answer to whether or not adopting Kubernetes is the best option for you. It is dependent on your specific requirements and priorities, and many technical reasons are not even mentioned here. If you are starting a new project, working in a startup that wants to scale and develop more than just a quick MVP, or need to upgrade a legacy application, Kubernetes may be a good choice because it provides a lot of flexibility, power, and scalability. However, it always necessitates a time investment because new skills must be learned, and new workflows must be established in your development team.

If done correctly, investing the time to learn and adopt Kubernetes will often pay off in the future due to improved service quality, increased productivity, and a more motivated workforce.

In any case, you should make an informed decision, and there are numerous compelling reasons to use or avoid Kubernetes. I hope this post has assisted you in making the best decision for you.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.