This article was published as a part of the Data Science Blogathon.

According to research by deeplearning.ai, only 2% of the companies using machine learning or deep learning have successfully deployed a model in production. The remaining 98% either abandon their ML implementation or rely on other companies for production. Deploying an ML model in production is not the same as deploying other software applications. It needs a specific life cycle, and this life cycle is called MLOps. A generic MLOps workflow can be followed to build, deploy, and monitor ML applications.

Let us have a detailed discussion on the fundamentals of MLOps.

The Software Development Life Cycle (SDLC) is a process used by the software industry to plan, design, develop, and test high-quality software. Its main goal is to deliver software that meets customers’ expectations, on time and within budget.

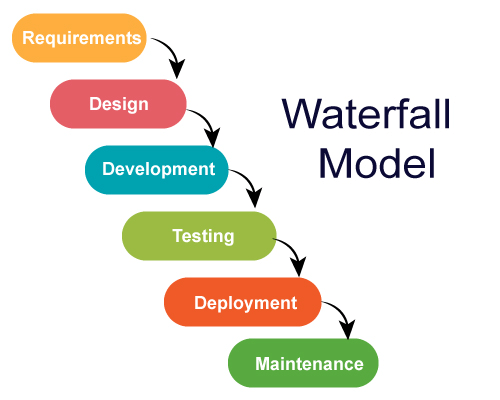

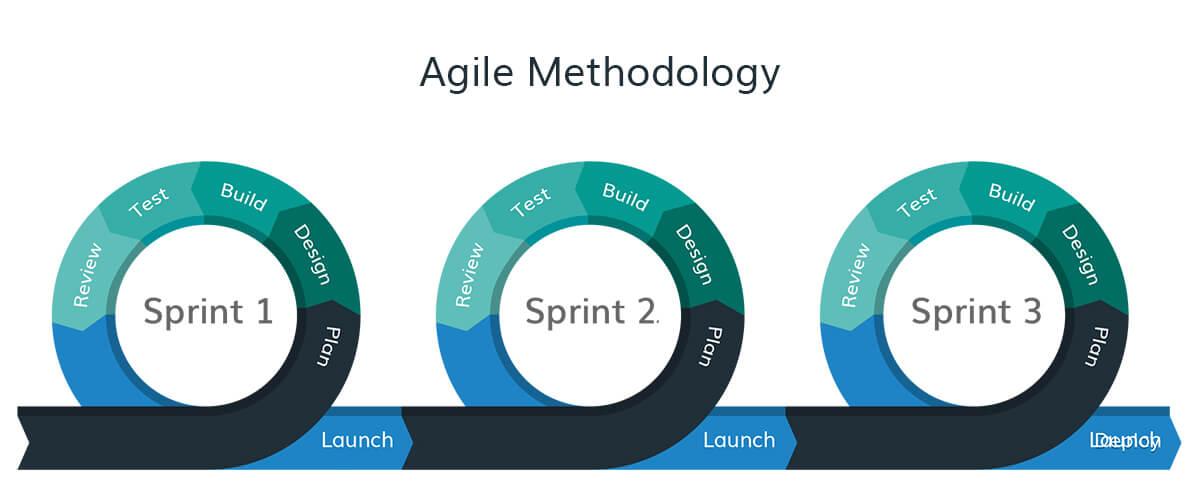

The traditional software development life cycle started with the waterfall model and, over many years, evolved into the agile model and DevOps. To understand MLOps, we must first be clear about these non-ML software development life cycles. They are described below.

The waterfall model is the oldest of these. It is a non-iterative method: every stage is planned in advance and executed one after another, from requirement gathering to design, development, and testing. We can’t move to the next phase without completing the current one. It is widely used for small projects where requirements are clearly defined. It is not suitable for large, dynamic projects where requirements change over time with user demand, which is why the industry moved to the agile methodology.

The agile methodology was a breakthrough in the IT industry, and most organizations adopted it. It is an iterative and incremental way of development. The solution is split into modules that are delivered periodically. Each iteration produces a working product, and further changes are added in subsequent iterations. Customers are involved in development and testing, so they can try the product and give feedback directly, and improvements are made throughout the project. Agile suits projects where requirements change from time to time.

The DevOps method extends agile development practices. It is a way of delivering software applications driven by continuous integration, continuous delivery, and continuous deployment. It involves collaboration, integration, and automation among software developers and IT operators to improve efficiency, speed, and quality. With the help of DevOps, it is easy to ship software to production and to keep it running reliably.

The main reason so few ML models reach production is that there is a fundamental difference between ML development and traditional software development.

Machine learning is not just code. It is code plus data.

Machine learning = Code + Data

A machine learning model is created by applying an algorithm (via code) to fit the data.

In traditional development, a version of the source code produces a version of the software. In machine learning, a version of the source code together with a version of the data produces a version of the ML model.
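To make this concrete, here is a minimal sketch of how a model version could be derived from both the code version and the data version. The `fingerprint` and `model_version` helpers and the sample byte strings are made up for illustration; real projects usually rely on a version control system and a data versioning tool instead.

```python
import hashlib


def fingerprint(content: bytes) -> str:
    """Return a short, stable SHA-256 fingerprint of some bytes."""
    return hashlib.sha256(content).hexdigest()[:12]


def model_version(code: bytes, data: bytes) -> str:
    """Derive a model version from both the code and the data versions."""
    combined = f"{fingerprint(code)}:{fingerprint(data)}".encode()
    return fingerprint(combined)


# Hypothetical training script and data set contents.
code_v1 = b"model = fit(algorithm='linear', data=train)"
data_v1 = b"x,y\n1,2\n2,4\n"
data_v2 = b"x,y\n1,2\n2,4\n3,6\n"  # same code, one new row of data

print(model_version(code_v1, data_v1))
print(model_version(code_v1, data_v2))  # differs although the code is unchanged
```

The second version differs from the first even though the code did not change, which is exactly what separates ML versioning from traditional software versioning.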

Code is crafted in the development environment, while data comes from multiple sources for training and testing. The data may change over time in terms of volume, velocity, veracity, and variety, an additional dimension that differentiates ML applications from traditional software. Big data sometimes brings entirely new challenges to the development process. As the data evolves, the code needs to evolve with it.

Volume: The size of the data.

Velocity: The speed at which the data enters the system.

Veracity: How accurate the data is.

Variety: The different formats of the data.
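The four dimensions above can be tracked per batch of incoming data. The sketch below is only illustrative: the record keys (`arrived_at`, `format`, `value`) are hypothetical, and veracity is approximated by the share of records without missing values, which is a crude proxy for accuracy.

```python
from datetime import datetime


def profile_batch(records):
    """Summarise a batch of records along the four dimensions.

    Each record is a dict with hypothetical keys: 'arrived_at' (datetime),
    'format' (str) and 'value' (None when the field is missing).
    """
    times = [r["arrived_at"] for r in records]
    # Fall back to 1 second so a single-instant batch does not divide by zero.
    span_seconds = (max(times) - min(times)).total_seconds() or 1.0
    complete = sum(1 for r in records if r["value"] is not None)
    return {
        "volume": len(records),                   # number of records
        "velocity": len(records) / span_seconds,  # records per second
        "veracity": complete / len(records),      # share of complete records
        "variety": sorted({r["format"] for r in records}),  # distinct formats
    }


batch = [
    {"arrived_at": datetime(2021, 1, 1, 0, 0, 0), "format": "csv", "value": 3.2},
    {"arrived_at": datetime(2021, 1, 1, 0, 0, 1), "format": "json", "value": None},
    {"arrived_at": datetime(2021, 1, 1, 0, 0, 2), "format": "csv", "value": 4.1},
    {"arrived_at": datetime(2021, 1, 1, 0, 0, 4), "format": "csv", "value": 5.0},
]
profile = profile_batch(batch)
print(profile)
```

Comparing such profiles between batches is one simple way to notice that the data has changed and that the code or model may need to follow.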

So, as time progresses, data and code end up moving in two different directions, even though they share one objective: building and maintaining a scalable ML system.

Data and code progress over time (Author’s own image)

This disconnect causes several challenges that must be solved before an ML model can be put into production.

To overcome these challenges, MLOps bridges data and code together as they progress over time.

MLOps – Binding data and code together (Image source: Author)

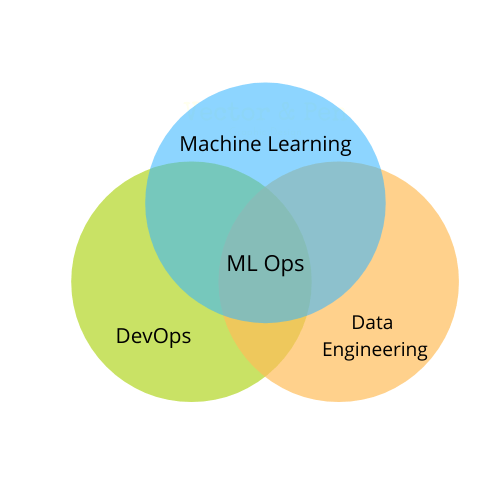

MLOps is a method that fuses ML with software development by integrating three domains (ML, DevOps, and data engineering) in a streamlined manner. It aims to build, deploy, and maintain ML systems in production reliably and efficiently.

MLOps Intersection

How does data engineering come into the picture in MLOps?

As the IT industry and the internet have grown, the amount of data generated per day keeps increasing. To handle the generation and storage of this data, companies and tech giants turned to big data technologies. Our data is stored somewhere in the world in databases, data warehouses, data lakes, and so on. Storing and retrieving data from these sources is not an easy job.

Building a machine learning model primarily requires data. Extracting specific data from these large sources can’t be done with a single line of code. So we need data engineering, which involves extracting, transforming, and loading (ETL) the data, as well as analyzing it at a large scale.

So, automating the incoming data pipeline that feeds model training is the foremost step in MLOps.
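As a rough illustration of the extract, transform, and load steps, here is a minimal sketch using only Python's standard library. The CSV content, the column names, and the `training_data` table are assumptions made up for the example; a real pipeline would read from a database, data warehouse, or data lake and would typically run on a scheduler.

```python
import csv
import io
import sqlite3

# Hypothetical raw export standing in for a real data source.
RAW_CSV = """user_id,age,country
1,34,IN
2,,US
3,29,in
"""


def extract(raw: str):
    """Extract: parse the raw CSV text into dictionaries."""
    return list(csv.DictReader(io.StringIO(raw)))


def transform(rows):
    """Transform: drop rows with a missing age and normalise country codes."""
    return [
        (int(r["user_id"]), int(r["age"]), r["country"].upper())
        for r in rows
        if r["age"]
    ]


def load(rows, conn):
    """Load: write the cleaned rows into a table used for training."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS training_data "
        "(user_id INTEGER, age INTEGER, country TEXT)"
    )
    conn.executemany("INSERT INTO training_data VALUES (?, ?, ?)", rows)
    conn.commit()


conn = sqlite3.connect(":memory:")
load(transform(extract(RAW_CSV)), conn)
rows = conn.execute("SELECT * FROM training_data ORDER BY user_id").fetchall()
print(rows)
```

Keeping each step in its own function makes it easier to automate and test the stages separately.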

Machine Learning

After we obtain the data from various sources, we need to build the ML models. Once the problem statement and business use cases are well understood, we organize and preprocess the data. We try different combinations of algorithms and tune their hyperparameters. This produces models that are trained to fit the data. We also test the trained models against evaluation metrics to measure their performance. Together, data pre-processing, model building, and testing form the machine learning pipeline.
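The stages of such a pipeline can be sketched in a few lines of plain Python. This is a toy example under stated assumptions: the data set is invented, the model is a simple k-nearest-neighbours classifier written by hand, and accuracy on a small validation set is the only metric. In practice a library such as scikit-learn would usually handle these steps.

```python
from collections import Counter


def scale(rows, lo, hi):
    """Pre-process: min-max scale each feature using training-set bounds."""
    return [
        tuple((x - l) / (h - l) for x, l, h in zip(row, lo, hi)) for row in rows
    ]


def knn_predict(train_x, train_y, point, k):
    """Predict by majority vote among the k nearest training points."""
    nearest = sorted(
        zip(train_x, train_y),
        key=lambda pair: sum((a - b) ** 2 for a, b in zip(pair[0], point)),
    )[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]


def accuracy(train_x, train_y, val_x, val_y, k):
    """Evaluate: share of validation points whose label is predicted correctly."""
    hits = sum(
        knn_predict(train_x, train_y, p, k) == y for p, y in zip(val_x, val_y)
    )
    return hits / len(val_y)


# Toy data set (made up for illustration): two features, two classes.
train_x = [(1, 10), (2, 12), (3, 11), (8, 30), (9, 32), (10, 31)]
train_y = ["low", "low", "low", "high", "high", "high"]
val_x = [(2, 11), (9, 31)]
val_y = ["low", "high"]

lo = [min(col) for col in zip(*train_x)]
hi = [max(col) for col in zip(*train_x)]
train_s, val_s = scale(train_x, lo, hi), scale(val_x, lo, hi)

# Try several hyperparameter values and keep the best-scoring one.
scores = {k: accuracy(train_s, train_y, val_s, val_y, k) for k in (1, 3, 5)}
best_k = max(scores, key=scores.get)
print(scores, best_k)
```

The scaling bounds are computed on the training data only and then reused for the validation data, so no information from the validation set leaks into pre-processing.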

DevOps

DevOps brings together the development, testing, and operational aspects of software development. Its primary aim is to automate the process through continuous integration of code and continuous delivery of the product to production.

These three domains (data engineering, the machine learning pipeline, and DevOps) combine to form MLOps.

So, with the help of MLOps, data scientists and machine learning engineers can extract the data, train the models, run experiments, validate the models, and finally deploy scalable applications to production smoothly.
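One place where validation and deployment meet is an automated check that decides whether a newly trained model may replace the one in production. The sketch below is a hypothetical example of such a gate; the function name, the scores, and the thresholds are illustrative assumptions, not recommended values.

```python
def should_deploy(candidate_score, production_score, min_score=0.80, min_gain=0.01):
    """Return True when the candidate model is good enough to replace production.

    The thresholds are illustrative assumptions, not recommended values.
    """
    meets_floor = candidate_score >= min_score
    beats_production = candidate_score >= production_score + min_gain
    return meets_floor and beats_production


# Clearly better than production and above the minimum score.
print(should_deploy(candidate_score=0.91, production_score=0.88))
# Only marginally better than production.
print(should_deploy(candidate_score=0.885, production_score=0.88))
# Better than production but below the minimum score.
print(should_deploy(candidate_score=0.75, production_score=0.70))
```

A check like this can run as one step of a continuous delivery pipeline, so that only models passing validation are promoted.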

Many cloud service providers have started offering services for ML operations. Some of the most used solutions on popular cloud platforms are listed below.

Amazon SageMaker – Helps across the ML pipeline, i.e. to build, train, deploy, and monitor machine learning models.

To know more about SageMaker, read my blog Introduction to Exciting AutoML services of AWS

Microsoft Azure MLOps Suite:

Azure Machine Learning – Build, train, evaluate models

Azure Pipelines – Automate ML

Azure Monitor – Tracking and monitoring

Azure Kubernetes Service – Managing containers

Google Cloud MLOps:

Dataflow – Used for ETL

BigQuery – Cloud data warehouse, which is used as the source for ML training

TFX (TensorFlow Extended) – Used to deploy models as part of production ML pipelines

To learn about building an ML pipeline in GCP, refer to this comprehensive guide:

Google Cloud Platform with ML Pipeline: A Step-to-Step Guide

Organizations choose the most suitable tools and services for their ML operations based on their use case. Budget is also a major factor in choosing a tool.

In this article, we have explored the domains behind MLOps and seen how organizations perform MLOps.

I hope you enjoyed the article and increased your knowledge.

Image Sources: The sources of the images are given below the respective image. Some images are created by the author for illustration.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.
