Introduction

Welcome to the September edition of our popular GitHub repositories and Reddit discussions series! GitHub repositories continue to change the way teams code and collaborate on projects. They’re a great source of knowledge for anyone willing to tap into their infinite potential.

As more and more professionals are vying to break into the machine learning field, everyone needs to keep themselves updated with the latest breakthroughs and frameworks. GitHub serves as a gateway to learn from the best in the business. And as always, Analytics Vidhya is at the forefront of bringing the best of the bunch straight to you.

This month’s GitHub collection is awe-inspiring. Ever wanted to convert a research paper into code? We have you covered. How about implementing the top object detection algorithms using a framework of your choosing? Sure, we have that as well. And the fun doesn’t stop there! Scroll down to check out this, and other top repositories, launched in September.

On the Reddit front, I have included the most thought-provoking discussions in this field. My suggestion is to not only read through these threads but also actively participate in them to enhance and supplement your existing knowledge.

We have also covered the top GitHub repositories and top Reddit discussions each month, from April onwards.

GitHub Repositories

Papers with Code

How many times have you come across research papers and wondered how to implement them on your own? I have personally struggled on multiple occasions to convert a paper into code. Well, the painstaking process of scouring the internet for specific pieces of code is over!

Hundreds of machine learning and deep learning research papers, along with their code, are included here. This repository is truly stunning in its scope and is a treasure trove of knowledge for a data scientist. New links are added weekly and the NIPS 2018 conference papers have been added as well!

If there’s one GitHub repository you bookmark, make sure it’s this one.

Object Detection using Deep Learning

Object detection is quickly becoming commonplace in the deep learning universe. And why wouldn’t it? It’s a fascinating concept with tons of real-life applications, ranging from games to surveillance. So how about a one-stop shop where you can find all the top object detection algorithms designed since 2014?

Yes, you landed in the right place. This repository, much like the one above, contains links to the full research papers and the accompanying object detection code to implement the approach mentioned in them. And the best part? The code is available for multiple frameworks! So whether you’re a TensorFlow, Keras, PyTorch, or Caffe user, this repository has something for everyone.

At the time of publishing this article, 43 different papers were listed here.

Train Imagenet Models in 18 Minutes

Yes, you really can train a model on the ImageNet dataset in under 18 minutes. The great Jeremy Howard and his team of students designed an algorithm that outperformed even Google, according to the popular DAWNBench benchmark. This benchmark measures the training time, cost and other aspects of deep learning models.

And now you can reproduce their results on your own machine! You need to have Python 3.6 (or higher) to get started. Go ahead and dive right in.
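To get a feel for what a training-time and cost benchmark records, here is a minimal sketch. It assumes the time is taken at the first checkpoint where a target accuracy is reached, and that cost is simply machine-hours multiplied by an hourly price. The function names and every number below are made up for illustration; they are not taken from the repository or from any leaderboard.

```python
def time_to_accuracy(history, target):
    """Return the elapsed seconds at the first checkpoint that meets the target.

    `history` is a list of (elapsed_seconds, accuracy) pairs in training order.
    Returns None if the target accuracy is never reached.
    """
    for elapsed_seconds, accuracy in history:
        if accuracy >= target:
            return elapsed_seconds
    return None


def training_cost(elapsed_seconds, machines, hourly_rate_usd):
    """Estimate cost as machine-hours times a flat hourly rate (an assumption)."""
    return elapsed_seconds / 3600 * machines * hourly_rate_usd


# Hypothetical checkpoints: (seconds since start, validation accuracy)
history = [(300, 0.71), (600, 0.86), (900, 0.92), (1200, 0.93)]

seconds = time_to_accuracy(history, target=0.93)
cost = training_cost(seconds, machines=4, hourly_rate_usd=3.0)
print(f"Reached target after {seconds / 60:.0f} minutes, estimated cost ${cost:.2f}")
```

Swapping in your own checkpoint log and instance pricing lets you compare runs on the same two axes.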

Pypeline – Creating Concurrent Data Pipelines

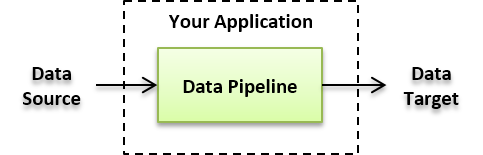

Source: North Concepts

Data engineering is a critical function in any machine learning project. Most aspiring data scientists these days tend to skip over this part, preferring to focus on the model building side of things. Not a great idea! You need to be aware of (and even familiar with) how data pipelines work, what role Hadoop, Spark and Dask have to play, etc.

Sounds daunting? Then check out this repository. Pypeline is a simple yet very effective Python library for creating concurrent data pipelines. The aim of this library is to handle small to medium-sized data tasks (that involve concurrency or parallelism) where the use of Spark might feel unnecessary.

This repository contains code, benchmarks, documentation and other resources to help you become a data pipeline expert!
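If you have never written a concurrent pipeline, the idea can be sketched with nothing but Python's standard library. Note that this does not use Pypeline's own API; it is a rough illustration built on `concurrent.futures`, and the `fetch` and `transform` stages are hypothetical stand-ins for real work.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fetch(record_id):
    """Stage 1 (hypothetical): simulate a slow, I/O-bound lookup."""
    time.sleep(0.01)
    return {"id": record_id, "value": record_id * 10}


def transform(record):
    """Stage 2 (hypothetical): a cheap per-record transformation."""
    return {**record, "value_squared": record["value"] ** 2}


def run_pipeline(record_ids, workers=4):
    """Run the fetch stage concurrently, then transform each result."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map yields results in the same order as the inputs
        fetched = pool.map(fetch, record_ids)
        return [transform(record) for record in fetched]


results = run_pipeline(range(5))
for row in results:
    print(row)
```

Threads suit the I/O-bound stage here; for CPU-heavy stages a process pool would likely be the better fit.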

Everybody Dance Now – Pose Estimation

This one is a personal favourite. I covered the release of the research paper back in August and have continued to be in awe of this technique. It is capable of transferring motion between human subjects in different videos. I highly recommend checking out the video available in the above link; it will blow your mind!

This repository contains a PyTorch implementation of this approach. The sheer amount of detail this algorithm can pick up and replicate is staggering. I can’t wait to try this on my own machine!

Reddit Discussions

Beginner Friendly AI Papers you can Implement

This thread continues our theme of implementing research papers. It’s an ideal spot for beginners in AI looking for a new challenge. There’s a twofold advantage to checking out this thread:

- Quite a few links to easy-to-read AI research papers

- Advice from experienced data scientists and machine learning experts on how to go about converting these papers into code

Don’t you love the open source community?

What Happens when an Already Accepted Research Paper is Found to have Flaws?

A keen-eyed Redditor recently found a flaw in one of the CVPR (Computer Vision and Pattern Recognition) 2018 research papers. This is quite a big thing since the paper had already been accepted by the conference committee and successfully presented to the community.

The original author of the paper took time out to respond to this mistake. It led to a very civil and thought-provoking discussion between the top ML folks on what should be done when a mistake like this is unearthed. Should the paper be retracted or amended with the corrections? There are over 100 comments in this thread, which makes it a must-read for everyone.

Having Trouble Understanding a Research Paper? This Thread has All the Answers

We all get stuck at some point while going through a research paper. The math can often be difficult to understand, and the approach used can bamboozle the best of us. So why not reach out to the community and ask for help?

That’s exactly what this thread aims to do. Make sure you follow the format mentioned in the original post and your queries will be answered. There are plenty of Q&As already there so you can browse through them to get a feel for how the process works.

How can you Prepare for a Research Oriented Role?

A pertinent question. A lot of people I speak to are interested in getting into the research side of ML, without having a clue of what to expect. Is a background in mathematics and statistics enough? Or should you be capable of cracking open any research paper and making sense of it on your own?

The answer lies more towards the latter. Research is a field where the experts can guide you, but no one really knows the right answer until someone figures it out. There’s no single book or course that can prepare you for such a role. Needless to say, this thread is an enlightening one with different takes on what the prerequisites are.

Researchers who Claim they will Release Code Mentioned in a Paper but Never Do

This is a controversial topic, but one I feel everyone should be aware of. Researchers release the paper and mention that the code will follow soon in order to make the reviewers happy. But sometimes this just doesn’t happen. The paper gets accepted to the conference, but the code is never released to the community.

It’s more a question of ethics than anything else. Why not mention that the data is private and can only be shared with a select few? If your code cannot be validated, what’s the point of presenting it to the audience? This is a microcosm of the questions asked in this thread.

End Notes

Curating this list and writing about each repository and discussion thread was quite a thrill. It filled me with a sense of wonder and purpose: there is so much knowledge out there and most of it is open-source. It would be highly negligent of us to not learn from it and put it to good use.

If there are any other links you feel the community should know about, feel free to let us know in the comments section below.
