Introduction

Databricks simplify and accelerate data management and data analysis in the rapidly evolving world of big data and machine learning. Developed by Apache Spark, it offers tools for data storage, processing, and data visualization, all integrated with major cloud providers like AWS, Microsoft Azure, and Google Cloud Platform. This article provides a detailed Databricks tutorial for beginners and acts as a practical guide for data enthusiasts and professionals.

Learning Outcomes

- Understand Databricks’ core functionalities and their integration with cloud platforms.

- Learn to manage and analyze large datasets using Databricks efficiently.

- Explore the capabilities of Databricks for building and deploying machine learning models.

- Gain hands-on experience with Databricks notebooks, SQL, and visualization tools.

- Discover best practices for leveraging Databricks in data engineering and data science workflows.

This article was published as a part of the Data Science Blogathon.

Table of contents

- Introduction

- What is Databricks?

- Important Key Points of Databricks

- About Databricks Community Edition

- What is Meant by Data Lake?

- Role-based Databricks adoption

- Advantages of Databricks

- Step-by-step guide to Databricks

- Visualize SQL Output on Databricks Notebook

- End-to-End Machine Learning Classification on Databricks

- Experiment with XGBoost and Hyperopt

- Databricks Certification

- Are Databricks Easy to Learn?

- Conclusion

- Frequently Asked Questions

What is Databricks?

In simple terms, Databricks is a data warehousing and machine learning web-based platform developed by the creators of Spark. But Databricks is much more than that. It’s a one-stop product for all data needs, from data storage to data analysis. It derives insights using SparkSQL, builds predictive models using SparkML, and provides active connections to visualization tools such as PowerBI, Tableau, Qlikview, etc. It can be viewed as Facebook’s big data.

Amazon generates a large amount of data from operational sources like app clicks, Amazon Pay transactions, and Echo voice information. The data engineering team handles ETL to ensure data quality and sourcing. Spark simplifies ETL tasks, saving time and providing a competitive edge to stakeholders. It speeds up tasks like storing POS data in an SQL table, saving valuable time.

Databricks offers a solution for loan approval batch pipelines with 100M applications, allowing faster ETLs and easier decision-making. Conventional models take days to evaluate input data, leading to operational delays and unhappy customers. Fintech apps like Databricks provide various products and solutions, reducing the need for 24-48 hours to load weekly operations data and preventing workflow delays and potential lost opportunities.

Databricks integrates with Amazon Web Services, Microsoft Azure, and Google Cloud Platform, making connecting with these major cloud computing infrastructures easy. Some of the major firms, such as Starbucks,

Important Key Points of Databricks

Databricks can be thought of as a big toolbox for data folks. It allows data analysts, data engineers, and data scientists to work together on one platform. Here are some key things Databricks helps with:

- Managing and storing large amounts of data: Imagine a giant warehouse for all your company’s information. Databricks helps organize and protect it.

- Working with data together: Databricks provide a space where everyone can access and analyze the data, like a shared workspace for data projects.

- Making sense of data: Databricks has tools to clean, transform, and analyze information to uncover patterns and trends.

- Building data-driven applications: You can use Databricks to create programs that predict outcomes or automate tasks using data.

Overall, Databricks helps businesses unlock the power of their data by making it easier to store, analyze, and collaborate on data projects.pen_sparktunesharemore_vert

About Databricks Community Edition

- Databricks community version is hosted on AWS and is free of cost.

- Ipython notebooks can be imported onto the platform and used as usual.

- 15GB clusters, a cluster manager, and the notebook environment are provided, and usage is not time-limited.

- Supports SQL, scala, python, and pyspark.

- Provides interactive notebook environment.

The Databricks paid version has a 14-day trial period but must be used alongside AWS, Azure, or GCP.

What is Meant by Data Lake?

Lakehouse or data lake is a marketing term used in Databricks for a storage layer that can accommodate structured or unstructured, streaming or batch information. It’s a simple platform for storing all data. The data lake from Databricks is called Delta Lake. Below are a few features of Delta Lake.

- It’s based on the Parque file format.

- Compatible with Apache Spark.

- Versioning of data.

- ACID transactions (atomicity, consistency, isolation, durability) ensure data durability and consistency.

- Batch and streaming data sink.

- Supports deleting and upgrading into tables using API’s.

- Able to query millions of files using Spark.

| Data Lake Grid |

Data lake

| Data lakehouse |

Data warehouse

|

|

Types of data

| All types: Structured data, semi-structured data, unstructured (raw) data | All types: Structured data, semi-structured data, unstructured (raw) data | Structured data only |

| Cost | $ | $ | $$$ |

| Format | Open format | Open format | The closed, proprietary format |

| Scalability | Scales to hold any amount of data at low cost, regardless of the type | Scales to hold any amount of data at low cost, regardless of the type | Scaling up becomes exponentially more expensive due to vendor costs |

|

Intended users

| Limited: Data scientists | Unified: Data analysts, data scientists, machine learning engineers | Limited: Data analysts |

|

Ease of use

| Difficult: Exploring large amounts of raw data can be difficult without tools to organize and catalog the data | Simple: Provides simplicity and structure of a data warehouse with the broader use cases of a data lake | Simple: The structure of a data warehouse enables users to quickly and easily access data for reporting and analytics |

Role-based Databricks adoption

Data Analyst/Business Analyst: Analysis, RACs, and visualizations are the bread and butter of analysts, so the focus must be BI integration and Databricks SQL. Read about the Tableau visualization tool here.

Data Scientist: Data scientist have well-defined roles in larger organizations but in smaller organizations, data scientist wears various hats, one can own all the 3 roles, of an analyst, data engineer, bi visualizer etc. In a well-defined role, data scientists are responsible for sourcing data, a skill grossly neglected in the face of modern ML algorithms. Build predictive models and manage model deployment and monitor data drift,

Important skills:

- Sourcing data

- Build predictive models

- Model lifecycle

- Model deployment

Data Engineer: Largely responsible for building ETLs and managing the constant flow of ever-increasing data. Process, clean, and quality check the data before pushing it to operational tables. Model deployment and platform support are other responsibilities entrusted to data engineers.

Databricks must combine with Azure, AWS, or GCP, and its relatively higher costs lead to low adoption among small and medium startups in India.

Advantages of Databricks

- Support for the frameworks (scikit-learn, TensorFlow, Keras), libraries (matplotlib, pandas, numpy), scripting languages (e.g.R, Python, Scala, or SQL), tools, and IDEs (JupyterLab, RStudio).

- Databricks delivers a Unified Data Analytics Platform. Data engineers, data scientists, data analysts, and business analysts can all work in tandem on the same notebook.

- Flexibility across different ecosystems – AWS, GCP, Azure.

- Data reliability and scalability through Delta Lake.

- Basic built-in visualizations.

- AutoML and model lifecycle management through MLFLOW.

- Hyperparameter tuning support through HYPEROPT.

- Github and bitbucket integration

- 10X Faster ETL’s.

Apache Spark

Spark is a tool for coordinating tasks/jobs across a cluster of computers. A cluster manager, such as YARN (Yet Another Resource Negotiator), Mesos, or Spark’s own Stand-Alone cluster manager, manages these clusters of machines. It supports Scala, Python, SQL, Java, and R. A Spark application consists of one driver and executors.

The driver node is responsible for three things:

- Maintaining information about the Spark application;

- Responding to a user’s program.

- Analyzing, distributing, and scheduling work across the executors.

The executors are responsible for two things:

- Executing code assigned to it by the driver.

- Reporting the computation state on that executor back to the driver node.

The cluster manager is responsible for the following:

- Controlling physical machines and allocating resources to Spark applications.

Also Read: Understand The Internal Working of Apache Spark.

Step-by-step guide to Databricks

The Databricks community edition is free to use, and it has two main Roles:

- Data Science and Engineering

- Machine learning.

The machine learning path has an added model registry and experiment registry, where experiments can be tracked using MLFLOW. Databricks offers Jupyter notebooks to share across teams, making collaboration easy.

Create a cluster

For the notebooks to work, they have to be deployed on a cluster. Databricks provides 1 Driver: 15.3 GB Memory, 2 Cores, and 1 DBU for free.

- Select Create, then click on Cluster.

- Provide a cluster name.

- Select Databricks Runtime Version 9.1 (Scala 2.12, Spark 3.1.2) or other runtimes; GPU isn’t available for the free version.

- Select the availability zone as AUTO, which will configure the nearest available zone.

- It might take a few minutes before the cluster is up and running.

-

The cluster will automatically terminate after an idle period of two hours.

- To close the cluster, there are two options: one is to terminate and then restart later, and the other is to delete the cluster entirely. Deleting the cluster will not delete the notebook, as notebooks can be mounted on any available cluster relevant to the job at hand.

Alternatively,

- Select Compute

- Select Create Cluster and then follow step 2 onwards, given above.

Create a notebook

- Select the Create option and then click on a Notebook.

- Provide a relevant name to the notebook.

- Select the language of preference – SQL, Scale, Python, R.

- Select a cluster for the notebook to run on.

Publish Workbook

Once the analysis is complete, Databricks notebooks can be published(publicly available) and the links will be available for 6 months.

Import Published Notebook

Databricks notebooks, which are published, can be imported using URLs and physical files. To import using URL.

- Select Workspace and move to the folder to which the file needs to be saved.

- Click on import, and a new dialogue box will appear.

- Paste the URL and click on import.

- Use the link and import a sparkSQL tutorial to the workspace.

Run SQL on Databricks

Create a new notebook and select SQL as the language. In the notebook, select Upload Data and upload the csv.

Write the data to events002:

%python

df1 = spark.read.format("csv").load("dbfs:/FileStore/shared_uploads/[email protected]/test-3.csv",header="true", inferSchema="true")

df1.write.format("delta").mode("overwrite").save("/mnt/delta/events002")Create a SQL table using the below code:

DROP TABLE IF EXISTS diamonds; CREATE TABLE diamonds USING DELTA LOCATION '/mnt/delta/events002/'

Run SQL commands to query data:

select * from diamonds limit 10

select manufacturer, count(*) as freq from diamonds group by 1 order by 2 descVisualize SQL Output on Databricks Notebook

The output data-frames can be visualized directly in the notebook. Select the bar icon below and choose the appropriate chart. A total of 11 chart types are available.

- Bar chart

- Scatter chart

- Maps

- Line chart

- Area chart

- Pie chart

- Quantile chart

- Histogram

- Box plot

- Q-Q plot

- Pivot (Excel-like pivot chart interface. )

End-to-End Machine Learning Classification on Databricks

Databricks machine learning support is growing daily, and MLlib is Spark’s machine learning (ML) library, which was developed for machine learning activities on Spark. Below is a classification example to predict the quality of Portuguese “Vinho Verde” wine based on the wine’s physicochemical properties.

Use the link to download the data. Then, download both winequality-red.csv and winequality-white.csv to your local machine. Finally, upload the CSV using the Upload Data command in the toolbar.

import pandas as pd

red_wine = pd.read_csv("/dbfs/FileStore/shared_uploads/[email protected]/winequality_red.csv")

white_wine = pd.read_csv("/dbfs/FileStore/shared_uploads/[email protected]/winequality_white.csv")white_wine['is_red'] = 0.0

red_wine['is_red'] = 1.0

data_df = pd.concat([white_wine, red_wine], axis=0)Plotting

Plot a histogram of the Y label:

import seaborn as sns

sns.distplot(data.quality, kde=False)

Box plots to compare features and Y label:

import matplotlib.pyplot as plt

dims = (3, 4)

f, axes = plt.subplots(dims[0], dims[1], figsize=(25, 15))

axis_i, axis_j = 0, 0

for col in data.columns:

if col == 'is_red' or col == 'quality':

continue # Box plots cannot be used on indicator variables

sns.boxplot(x=high_quality, y=data[col], ax=axes[axis_i, axis_j])

axis_j += 1

if axis_j == dims[1]:

axis_i += 1

axis_j = 0

Split train test data

from sklearn.model_selection import train_test_split

train, test = train_test_split(data, random_state=123)

X_train = train.drop(["quality"], axis=1)

X_test = test.drop(["quality"], axis=1)

y_train = train.quality

y_test = test.qualityBuild a baseline Model

import mlflow

import mlflow.pyfunc

import mlflow.sklearn

import numpy as np

import sklearn

from sklearn.ensemble import RandomForestClassifier

from sklearn.metrics import roc_auc_score

from mlflow.models.signature import infer_signature

from mlflow.utils.environment import _mlflow_conda_env

import cloudpickle

import time

# The predict method of sklearn's RandomForestClassifier returns a binary classification (0 or 1).

# The following code creates a wrapper function, SklearnModelWrapper, that uses

# the predict_proba method to return the probability that the observation belongs to each class.

class SklearnModelWrapper(mlflow.pyfunc.PythonModel):

def __init__(self, model):

self.model = model

def predict(self, context, model_input):

return self.model.predict_proba(model_input)[:,1]

# mlflow.start_run creates a new MLflow run to track the performance of this model.

# Within the context, you call mlflow.log_param to keep track of the parameters used, and

# mlflow.log_metric to record metrics like accuracy.

with mlflow.start_run(run_name='untuned_random_forest'):

n_estimators = 10

model = RandomForestClassifier(n_estimators=n_estimators, random_state=np.random.RandomState(123))

model.fit(X_train, y_train)

# predict_proba returns [prob_negative, prob_positive], so slice the output with [:, 1]

predictions_test = model.predict_proba(X_test)[:,1]

auc_score = roc_auc_score(y_test, predictions_test)

mlflow.log_param('n_estimators', n_estimators)

# Use the area under the ROC curve as a metric.

mlflow.log_metric('auc', auc_score)

wrappedModel = SklearnModelWrapper(model)

# Log the model with a signature that defines the schema of the model's inputs and outputs.

# When the model is deployed, this signature will be used to validate inputs.

signature = infer_signature(X_train, wrappedModel.predict(None, X_train))

# MLflow contains utilities to create a conda environment used to serve models.

# The necessary dependencies are added to a conda.yaml file which is logged along with the model.

conda_env = _mlflow_conda_env(

additional_conda_deps=None,

additional_pip_deps=["cloudpickle=={}".format(cloudpickle.__version__), "scikit-learn=={}".format(sklearn.__version__)],

additional_conda_channels=None,

)

mlflow.pyfunc.log_model("random_forest_model", python_model=wrappedModel, conda_env=conda_env, signature=signature)Derive feature importance

feature_importances = pd.DataFrame(model.feature_importances_, index=X_train.columns.tolist(), columns=['importance'])

feature_importances.sort_values('importance', ascending=False)

Experiment with XGBoost and Hyperopt

Hyperopt is a hyperparameter tuning framework based on Bayesian optimization. Grid search is time-consuming, and Random search, while better than grid search, fails to provide optimum results.

from hyperopt import fmin, tpe, hp, SparkTrials, Trials, STATUS_OK

from hyperopt.pyll import scope

from math import exp

import mlflow.xgboost

import numpy as np

import xgboost as xgb

search_space = {

'max_depth': scope.int(hp.quniform('max_depth', 4, 100, 1)),

'learning_rate': hp.loguniform('learning_rate', -3, 0),

'reg_alpha': hp.loguniform('reg_alpha', -5, -1),

'reg_lambda': hp.loguniform('reg_lambda', -6, -1),

'min_child_weight': hp.loguniform('min_child_weight', -1, 3),

'objective': 'binary:logistic',

'seed': 123, # Set a seed for deterministic training

}

def train_model(params):

# With MLflow autologging, hyperparameters and the trained model are automatically logged to MLflow.

mlflow.xgboost.autolog()

with mlflow.start_run(nested=True):

train = xgb.DMatrix(data=X_train, label=y_train)

test = xgb.DMatrix(data=X_test, label=y_test)

# Pass in the test set so xgb can track an evaluation metric. XGBoost terminates training when the evaluation metric

# is no longer improving.

booster = xgb.train(params=params, dtrain=train, num_boost_round=1000,

evals=[(test, "test")], early_stopping_rounds=50)

predictions_test = booster.predict(test)

auc_score = roc_auc_score(y_test, predictions_test)

mlflow.log_metric('auc', auc_score)

signature = infer_signature(X_train, booster.predict(train))

mlflow.xgboost.log_model(booster, "model", signature=signature)

# Set the loss to -1*auc_score so fmin maximizes the auc_score

return {'status': STATUS_OK, 'loss': -1*auc_score, 'booster': booster.attributes()}

# Greater parallelism will lead to speedups, but a less optimal hyperparameter sweep.

# A reasonable value for parallelism is the square root of max_evals.

spark_trials = SparkTrials(parallelism=10)

# Run fmin within an MLflow run context so that each hyperparameter configuration is logged as a child run of a parent

# run called "xgboost_models" .

with mlflow.start_run(run_name='xgboost_models'):

best_params = fmin(

fn=train_model,

space=search_space,

algo=tpe.suggest,

max_evals=96,

trials=spark_trials,

rstate=np.random.RandomState(123)

)

Finally, retrieve the best model from the MLFLOW run :

best_run = mlflow.search_runs(order_by=['metrics.auc DESC']).iloc[0]

print(f'AUC of Best Run: {best_run["metrics.auc"]}')Practice – Market Basket Analysis on Databricks

Use the online notebook to analyse InstaKart grocery data and recommend upselling/cross-selling opportunities using market basket analysis.

Dashboarding on Databricks

Databricks has a feature that allows users to create an interactive dashboard using existing codes, images, and output.

- Move to the View menu and select + New Dashboard

- Provide a name to the dashboard.

- Click on the tiny Bar Graph image on each cell’s top right corner.

- It will show the available dashboard for the notebook.

- If the code block or image needs to be on the dashboard, tick the box.

- All ticked cells will appear on the dashboard.

- The cell can be organised easily with the drag and drop feature.

Useful resources and references

- Forecasting at Starbucks using Databricks.

- Databricks community for discussion on various topics.

- Databricks university alliance

- Webinars and Ebooks

- Ebook on Delta Lake.

- Ebook on ML lifecycle.

- Data lake Databricks explanation

- Dashboarding on Databricks.

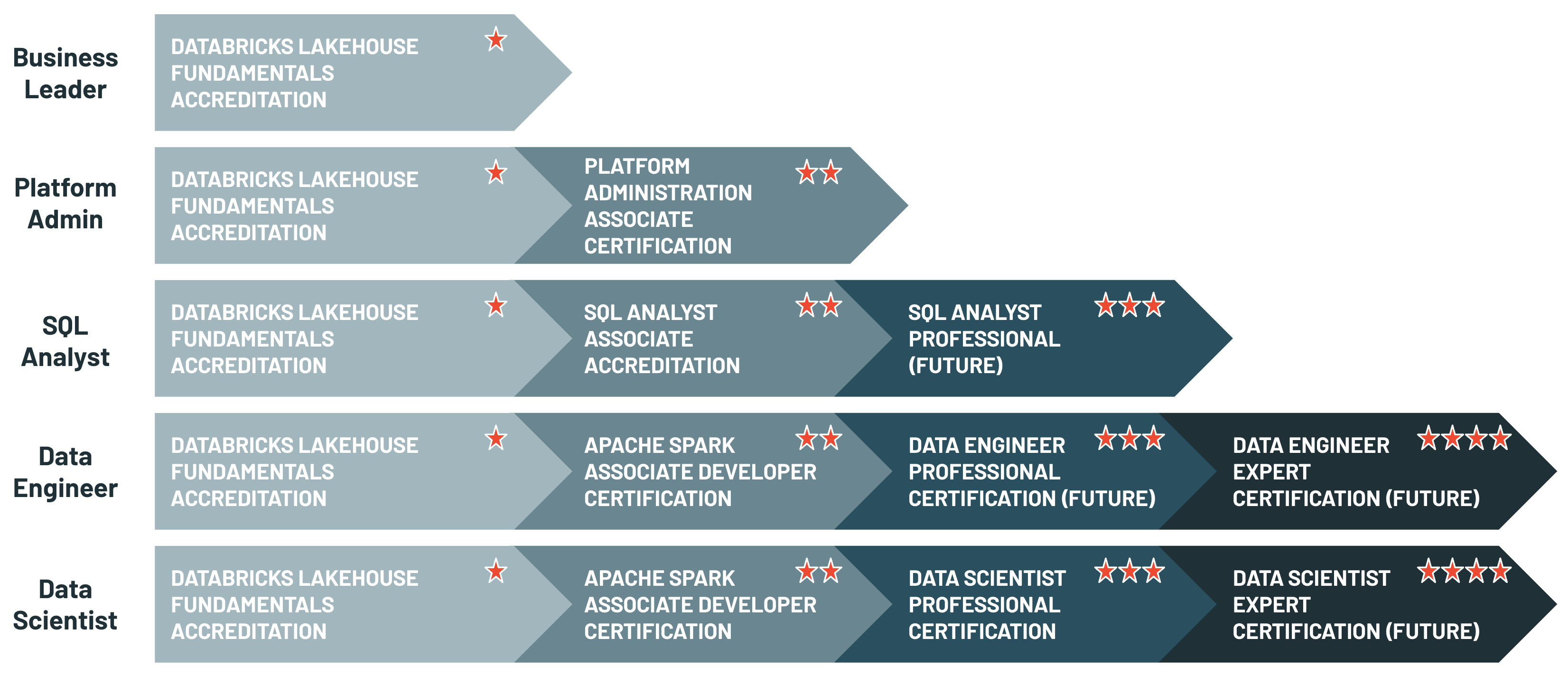

Databricks Certification

Databricks provides live instructor-led training and self-paced programs to help individuals better understand the platform. The self-paced course is priced at $2000. It also provides certification based on role fitment. The common career tracks are Business leader, platform admin, SQL analyst, data engineer, and data scientist.

There are four certifications, namely:

- Databricks is a certified associate developer for Apache Spark, which is for spark developers.

- Databricks Certified Professional Data Scientist is for all things ML.

- Azure Databricks Certified Associate Platform Administrator is an exam that assesses understanding the basics of network infrastructure and security, identity and access, cluster usage, and automation with the Azure Databricks platform.

- Databricks Certified Professional Data Engineer asses ETL, pipelines, and deployment.

Are Databricks Easy to Learn?

Databricks are relatively easy to learn, especially if you have some experience with related technologies. Here’s a breakdown:

- Straightforward Interface: Databricks offers a user-friendly interface with notebooks, making working with data intuitive.

- Leverages Existing Skills: If you’re familiar with SQL or Python, you’re well on your way. Databricks utilize these languages for data manipulation and analysis.

- Learning Resources: Databricks provides a comprehensive set of learning resources, including tutorials, documentation, and even hands-on labs to get you started. To access them, visit https://www.databricks.com/learn.

However, there are some factors to consider that might influence the learning curve:

- Apache Spark: Databricks is built on Apache Spark, a powerful big data processing engine. While Databricks simplifies Spark functionalities, understanding core Spark concepts can be beneficial in the long run.

- Data Engineering/Science Background: If you have a data engineering or data science background, grasping Databricks’ functionalities for data pipelines and machine learning will be easier.

Conclusion

This Databricks tutorial for beginners scratches the surface of what Databricks is capable of. Databricks is capable of a lot more, which are not explored in this article, and for data enthusiasts, it is quite a treasure trove. So practice and always keep learning. At the End of this article, you will fully understand Databricks and what they are for beginners.

Key Takeaways

- Databricks accelerates big data management and machine learning by integrating major cloud platforms and unified data analytics tools.

- It simplifies data storage, processing, and visualization while supporting various frameworks and libraries for data science.

- Databricks’ Delta Lake enhances data reliability and scalability, making it suitable for large-scale data operations.

- The platform’s collaborative features, such as shared notebooks and interactive dashboards, streamline teamwork and data analysis.

- Databricks provides comprehensive learning resources and certification programs to support users in mastering its features.

Frequently Asked Questions

A. Databricks is a unified analytics platform that simplifies data management and machine learning by integrating with major cloud providers like AWS, Microsoft Azure, and Google Cloud Platform.

A. Databricks leverages Apache Spark to efficiently handle large-scale data processing, including ETL tasks, data analysis, and machine learning model development.

A. Yes, Databricks is relatively easy to learn, especially if you have experience with SQL or Python. It offers a user-friendly interface and extensive learning resources.

A. Delta Lake is a storage layer that provides reliability and scalability by supporting ACID transactions, versioning, and the ability to handle both batch and streaming data.

A. Yes, Databricks facilitates collaboration through shared notebooks and interactive dashboards, allowing teams to work together seamlessly on data projects.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.