Introduction

Quora is one of the most popular online question-and-answer platforms, with millions of users asking and answering questions on various topics. As the platform has grown in popularity, it has become an important source of information and knowledge for individuals and organizations. However, answering questions on Quora can be time-consuming, especially for organizations that must respond to many questions daily. To address this problem, this article proposes an innovative solution – using OpenAI’s ChatGPT to automate the process of answering questions on Quora.

ChatGPT is a state-of-the-art language generation model developed by OpenAI. Trained on a massive dataset of text, it can generate human-like text in response to a given prompt, which makes it a natural fit for answering questions on Quora. By integrating ChatGPT into a Quora answering tool, organizations can save time and effort while still producing relevant answers. The same approach could also be applied to other question-and-answer platforms, such as Stack Overflow. This can be extremely beneficial for organizations that must maintain a strong online presence and engage with their customers and clients.

This article will discuss the technical details of implementing such a tool, including using Selenium to extract questions from Quora and integrating ChatGPT to generate answers. We will also explore some potential challenges and limitations of this approach. By automating the process of answering questions on Quora, organizations can improve their efficiency, reduce costs, and enhance their reputation as reliable sources of information.

Learning Objectives

In this article, you will learn:

1. The technical details of implementing a Quora answering tool using OpenAI’s ChatGPT and Selenium.

2. The use cases and applications of ChatGPT across industries and domains.

3. The impact of AI-based tools on organizations’ efficiency and cost-effectiveness.

This article was published as a part of the Data Science Blogathon.

Table of Contents

- Project Description
  - Problem Statement
- Pre-Project Requirements
- Approach to the Project
  - Warning
- Data Collection from Quora
  - Step 1
  - Step 2
  - Step 3
  - Step 4
  - Step 5
- Integrating ChatGPT’s API
- Posting the Answers Back to Quora
- Evaluating the Performance of the Tool
- How Does the Tool Help in Time & Cost Savings?
- Challenges & Limitations of Using Automated Tools
- Conclusion

Project Description

This project aims to develop a tool that utilizes the capabilities of OpenAI’s ChatGPT to automate the process of answering questions on Quora. The tool will use Selenium to extract questions from Quora based on a specified topic and time frame and then use ChatGPT to generate answers to these questions. The answers will then be posted back to Quora.

The tool will be helpful for organizations that need to maintain a strong online presence and engage with customers and clients on a regular basis, particularly in industries such as customer service, marketing, and e-commerce.

Problem Statement

Manually answering questions on Quora is inefficient and resource-intensive for organizations. This project addresses that problem by developing an automated tool that extracts questions from Quora, generates answers using the ChatGPT API, and posts the answers back on Quora.

Pre-Project Requirements

To undertake this project, the following prerequisites should be met:

1. Experience with Selenium: Selenium will be used to extract questions from Quora, so experience with it is needed to navigate the Quora website and extract the required information reliably.

2. Familiarity with OpenAI’s API: The tool will utilize the ChatGPT API to generate answers to questions. It is important to understand how to interact with the API and use it to generate answers.

3. Understanding of web scraping: The project relies on web scraping to extract questions from Quora, so an understanding of web scraping techniques is important.

4. Familiarity with web development: The tool will interact with the Quora website, so knowledge of web development concepts is important to understand how the website works and how to navigate it.

5. Familiarity with Quora: Understanding how Quora works and what kinds of questions are asked there will help in developing the tool.

6. Understanding of machine learning and natural language processing: As this project utilizes a machine learning model, it would be beneficial to understand machine learning and natural language processing concepts and techniques.

Approach to the Project

The approach for this project is to utilize the capabilities of OpenAI’s ChatGPT to automate the process of answering questions on Quora. The steps that will be taken to achieve this are:

1. Use Selenium to extract questions from Quora based on a specified topic and time frame.

2. Use the ChatGPT API to generate answers to the extracted questions.

3. Post the generated answers back to Quora.

4. Evaluate the tool’s performance by measuring its ability to extract questions from Quora, generate accurate answers, and post them back on Quora.

The choice of Selenium is based on its ability to automate browser actions, which allows for easy navigation of the Quora website and extraction of the necessary information. ChatGPT is chosen for its state-of-the-art language generation capabilities and ability to generate human-like text in response to a given prompt.

Warning!

Source: Pexels

Before we move any further, I would like to state explicitly that this project is executed for academic purposes only and should not be used in a commercial setting without proper permission. Web scraping without permission violates Quora’s terms of service and can result in your IP address being blocked from accessing Quora’s website. Before considering using this tool in a production environment, make sure to understand and abide by Quora’s web scraping policies.

Data Collection from Quora

Step 1:

We will start by importing the necessary libraries, such as Selenium, pandas, time, and datetime, and setting up the Chrome driver. Selenium lets you automate web browsers, which is helpful for collecting data from websites. pandas lets you work with data in a tabular format, and the standard-library time module lets you pause the program (with time.sleep()) so that pages have time to load. Finally, ChromeDriver is a piece of software that allows Selenium to control Google Chrome.

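```python
# Importing the necessary libraries
import datetime
import time

import pandas as pd
from selenium import webdriver as wb
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By
from selenium.webdriver.common.keys import Keys
from selenium.webdriver.support import expected_conditions as EC
from selenium.webdriver.support.ui import WebDriverWait

# Selenium 4 passes the ChromeDriver path through a Service object
# (from Selenium 4.6 on, wb.Chrome() can usually locate a driver by itself)
driver = wb.Chrome(service=Service(r"YOUR PATH\chromedriver.exe"))
```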

Step 2:

The next step is to log into Quora by visiting the login page and providing the credentials. The code uses Selenium to automate this process by locating the elements on the page where the username and password need to be entered and simulating the keystrokes to enter the information, followed by clicking on the login button to complete the login process. This is demonstrated using a Google account login method, where the Google account credentials are used for logging into Quora.

```python
# Logging into Quora
driver.get('https://www.quora.com/')

# Finding and clicking the login button on the Quora page
driver.find_element(By.XPATH, '//*[@id="root"]/div/div[2]/div[1]/div[2]/div/div[2]/div/button/div/div/div').click()
time.sleep(5)

# Finding and clicking the "Continue with Google" button on the login page
driver.find_element(By.XPATH, '//*[@id="root"]/div/div[2]/div[2]/div/div/div[1]/div[2]/div/div[2]/div[1]/div/div/div/div/div').click()

# Finding the email input field and entering the email address
driver.find_element(By.XPATH, '//*[@id="identifierId"]').send_keys('YOUR EMAIL')

# Finding and clicking the "Next" button to proceed to the password page
driver.find_element(By.XPATH, '//*[@id="identifierNext"]/div/button/span').click()
time.sleep(2)

# Finding and clicking the "Next" button on the password page
driver.find_element(By.XPATH, '//*[@id="view_container"]/div/div/div[2]/div/div[1]/div/form/span/section/div/div/div/ul/li[1]/div/div[2]/div').click()
time.sleep(2)

# Finding the password input field and entering the password
driver.find_element(By.XPATH, '//*[@id="password"]/div[1]/div/div[1]/input').send_keys('YOUR PASSWORD')

# Finding and clicking the "Next" button to log in to the account
driver.find_element(By.XPATH, '//*[@id="passwordNext"]/div/button/span').click()
```
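The login code above relies on fixed time.sleep() pauses, which are too long on a fast connection and too short on a slow one. Selenium's own remedy is an explicit wait such as WebDriverWait(driver, 10).until(EC.element_to_be_clickable((By.XPATH, xpath))); Step 1 imports WebDriverWait and EC but never uses them. The minimal, standard-library-only sketch below shows the polling idea behind such waits: check a condition repeatedly until it returns something truthy or a timeout expires. The wait_until helper is hypothetical and is not part of Selenium.

```python
import time


def wait_until(condition, timeout=10.0, poll_interval=0.5):
    """Poll `condition` until it returns a truthy value or `timeout` seconds pass.

    Hypothetical helper illustrating the idea behind Selenium's WebDriverWait.
    Returns the truthy value; raises TimeoutError once the deadline has passed.
    """
    deadline = time.monotonic() + timeout
    while True:
        try:
            result = condition()
            if result:
                return result
        except Exception:
            # Treat errors (e.g., "element not found yet") as "not ready"
            pass
        if time.monotonic() >= deadline:
            raise TimeoutError(f"condition not met within {timeout} seconds")
        time.sleep(poll_interval)


# Usage: a stand-in condition that becomes true on the third check
attempts = {"count": 0}


def button_is_ready():
    attempts["count"] += 1
    return attempts["count"] >= 3


print(wait_until(button_is_ready, timeout=5, poll_interval=0.01))
```

In the real script, the condition could be something like lambda: driver.find_element(By.XPATH, xpath), which raises until the element exists and then returns it, although WebDriverWait is the more idiomatic choice there.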

Step 3:

Once logged in, let’s search for a topic whose questions we want to answer. For our purposes, let’s assume that we are an organization that provides training in data science and wants to build its online presence by answering questions related to the field. We therefore set the topic to search for on Quora to “data science” and the time frame to “day.” The time frame restricts the results to questions posted within that window, which can be an hour, day, week, month, or year. The code uses the .format() method to combine the topic and the time frame into a search URL and navigates to the search page with the driver.get() method.

Step 4:

The next step is to scroll down the page to load more questions, using elem.send_keys(Keys.PAGE_DOWN) to simulate pressing the “Page Down” key. The key press can be repeated as many times as needed to load enough questions; here we press it once.

```python
from urllib.parse import quote

# Defining the topic to search for as "data science"
query = 'data science'

# Defining the time frame as "day"
t = 'day'

# Navigating to the Quora search page for the defined topic and time frame
# (quote() percent-encodes the topic, so topics of any length work)
driver.get('https://www.quora.com/search?q={}&time={}'.format(quote(query), t))
time.sleep(5)

driver.set_window_size(1024, 600)
driver.maximize_window()

# Finding the body element of the HTML document
elem = driver.find_element(By.TAG_NAME, "body")

# Defining the number of times to press the "Page Down" key
no_of_pagedowns = 1

# Pressing the "Page Down" key, pausing after each press so new questions can load
while no_of_pagedowns:
    elem.send_keys(Keys.PAGE_DOWN)
    time.sleep(5)
    no_of_pagedowns -= 1
```
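To run the search for other topics, the URL construction from Steps 3 and 4 can be wrapped in a reusable function. The sketch below is a hypothetical helper (build_search_url is our own name, not part of Selenium or Quora) that percent-encodes the topic with urllib.parse.quote and rejects time frames other than the five listed in Step 3. Treating those five words as the exact values Quora's time parameter accepts is an assumption.

```python
from urllib.parse import quote

# The five time frames listed in Step 3; assumed (not confirmed) to be the
# literal values accepted by Quora's "time" URL parameter
ALLOWED_TIMEFRAMES = {"hour", "day", "week", "month", "year"}


def build_search_url(topic, timeframe="day"):
    """Return a Quora search URL for `topic`, limited to questions from `timeframe`."""
    if timeframe not in ALLOWED_TIMEFRAMES:
        raise ValueError(f"timeframe must be one of {sorted(ALLOWED_TIMEFRAMES)}")
    # quote() percent-encodes spaces and special characters, so single-word
    # and multi-word topics are handled the same way
    return "https://www.quora.com/search?q={}&time={}".format(quote(topic.strip()), timeframe)


print(build_search_url("data science", "day"))
# https://www.quora.com/search?q=data%20science&time=day
```

With this helper, driver.get(build_search_url(query, t)) would replace the hand-built URL, and a typo such as "dya" fails immediately instead of silently returning unfiltered results.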

Step 5:

The next step is to extract all the loaded questions from the Quora page and store them in a dataframe. We wrap the extraction in try-except blocks so that when an XPath matches no element (for example, because fewer questions loaded than expected), the script prints a message and continues instead of crashing.

The code locates each question with Selenium’s find_element() method and a By.XPATH locator (older Selenium versions offered find_element_by_xpath(), which was removed in Selenium 4.3) and reads the element’s text. The method takes the XPath of the element we want in the page’s HTML. XPath is a query language for selecting nodes in XML documents; browsers also apply it to the document tree (DOM) of an HTML page. The code loops over numbered positions in the search results, extracts the question at each position, and collects the results in a dataframe.

```python
# Collect the question texts in a list and build the dataframe once at the end
# (DataFrame.append was removed in pandas 2.0)
questions = []

# XPath template for the question at position n in the search results
xpath = '//*[@id="mainContent"]/div/div/div[2]/div[{}]/span/a/div/div/div/div/span'

try:
    # Iterate over result positions 1 to 4
    for i in range(1, 5):
        # Finding and extracting the question text using XPath
        questions.append(driver.find_element(By.XPATH, xpath.format(i)).text)
except Exception:
    print('No more from this section')

try:
    # Resume two positions after where the first loop stopped (div[i+1] is skipped)
    for j in range(i + 2, 10):
        questions.append(driver.find_element(By.XPATH, xpath.format(j)).text)
except Exception:
    print('No more from this section')

df = pd.DataFrame({'Questions': questions})

# Creating a variable "date_today" that stores the current date in the format "YYYYMMDD"
date_today = datetime.date.today().strftime("%Y%m%d")

# Inserting a new column "S.No" with a sequence number from 1 to the end of the dataframe
df.insert(0, 'S.No', range(1, 1 + len(df)))

df
```

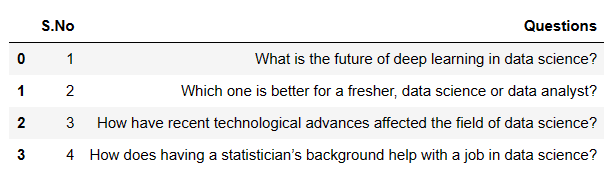

As the output above shows, we managed to extract four questions.
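The positional index in the XPaths used above (div[1], div[2], and so on) is what lets the loop walk through the search results one by one. To experiment with that indexing without a live browser, Python's standard xml.etree.ElementTree module supports a limited XPath subset, including [@attr='value'] filters and 1-based [n] position predicates. The sketch below queries a toy, hand-written stand-in for the results markup; it is not Quora's real HTML, and unlike a browser, ElementTree only parses well-formed XML. The question_at and collect_questions helpers are hypothetical.

```python
import xml.etree.ElementTree as ET

# Toy, well-formed stand-in for a search-results page (hypothetical markup)
page = """
<html><body>
  <div id="mainContent">
    <div>
      <div><span>What is data science?</span></div>
      <div><span>Is Python required for data science?</span></div>
      <div><span>How do I start a career in data science?</span></div>
    </div>
  </div>
</body></html>
"""

root = ET.fromstring(page.strip())


def question_at(root, i):
    """Return the text at result position i (1-based), or None if there is none."""
    node = root.find(f".//div[@id='mainContent']/div/div[{i}]/span")
    return None if node is None else node.text


def collect_questions(root, max_positions=10):
    """Walk positions 1, 2, 3, ... and stop at the first missing one."""
    found = []
    for i in range(1, max_positions + 1):
        text = question_at(root, i)
        if text is None:
            break  # plays the role of the except branch in the Selenium loop
        found.append(text)
    return found


print(collect_questions(root))
```

As in the Selenium loop, a missing position ends the walk; the real script gets the same effect from the exception raised by find_element().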

Integrating ChatGPT’s API

Now that we have extracted some relevant questions, our next step is to answer them. For this, we call OpenAI’s API to generate the answers. Strictly speaking, the code does not call the ChatGPT model itself: it uses the openai.Completion.create() method from the legacy (pre-1.0) openai Python package with text-davinci-002, a completion model from the GPT-3.5 series that ChatGPT was fine-tuned from.

This method takes several parameters: engine, which specifies the model to use; prompt, which is the text we want the model to complete; and temperature, which controls how random (or “creative”) the generated text is. A lower temperature results in more conservative, predictable answers, while a higher temperature produces more varied, unexpected ones. In our case, we use the text-davinci-002 engine and a temperature of 0.5, and we pass each question, prefixed with a short instruction, as the prompt.
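To build intuition for what temperature does, the sketch below applies the standard temperature-scaling step used when sampling from language models: the model's raw scores (logits) for candidate next tokens are divided by the temperature before a softmax turns them into probabilities. The logits are made-up numbers, and this is a conceptual illustration rather than OpenAI's internal implementation.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities after dividing them by `temperature`."""
    if temperature <= 0:
        raise ValueError("temperature must be positive in this sketch")
    scaled = [x / temperature for x in logits]
    # Subtract the maximum before exponentiating for numerical stability
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical scores for three candidate next tokens
logits = [2.0, 1.0, 0.5]
for t in (0.2, 0.5, 1.0, 1.5):
    probs = softmax_with_temperature(logits, t)
    print(t, [round(p, 3) for p in probs])
```

Lower temperatures concentrate probability on the top-scoring token, which yields more predictable text; higher temperatures flatten the distribution, which yields more varied text. The API itself also accepts a temperature of 0, which this sketch excludes to avoid dividing by zero.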

The code then loops over the questions in the dataframe, generates an answer for each one with the OpenAI API, and collects each question and its answer in a new dataframe called answers_df. A try-except block prints any error that occurs for a question and moves on to the next one.

```python
# Requires the legacy openai Python package (version < 1.0)
import openai
import pandas as pd

# Set your OpenAI API key
openai.api_key = "YOUR API KEY"

# Collect (question, answer) rows here and build the dataframe at the end
rows = []

# Iterate over the questions and get the answers
for index, row in df.iterrows():
    question = row["Questions"]
    try:
        # Using the OpenAI API to generate an answer for the current question
        response = openai.Completion.create(
            # The model used to generate answers (OpenAI retired text-davinci-002
            # in January 2024, so substitute a currently available completion model)
            engine="text-davinci-002",
            # The prompt to be passed to the model
            prompt=f"Answer the following question: {question}",
            # The degree of randomness in the generated answer (the API accepts 0 to 2)
            temperature=0.5,
            # Completions default to 16 tokens, which truncates answers;
            # 500 is an assumed budget for a full answer
            max_tokens=500,
        )
        # Extracting the generated answer from the API response
        answer = response["choices"][0]["text"].strip()
        # Storing the question and answer pair
        rows.append({"Questions": question, "Answers": answer})
    except Exception as e:
        print(f"Error occurred while processing the question: {question}")
        print(e)

# DataFrame.append was removed in pandas 2.0, so build the dataframe from the list
answers_df = pd.DataFrame(rows, columns=["Questions", "Answers"])
answers_df
```

We get the following output: a dataframe with the extracted questions and their answers generated through ChatGPT’s API.
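Two caveats apply to the code above: it targets the legacy openai package (the openai.Completion interface was removed in version 1.0), and OpenAI has since retired text-davinci-002. The sketch below shows one way to adapt the loop, assuming openai>=1.0, an OPENAI_API_KEY environment variable, and the gpt-3.5-turbo chat model (an illustrative choice; substitute any chat model you have access to). It separates the answer loop from the API call so that the loop can be tested with a stub before spending API credits. The function names make_openai_generator and answer_questions are our own.

```python
def make_openai_generator(model="gpt-3.5-turbo", temperature=0.5, max_tokens=500):
    """Return a function that answers one question via OpenAI's Chat Completions API.

    Assumes openai>=1.0 is installed and OPENAI_API_KEY is set; the model name
    and token budget are illustrative choices, not the article's settings.
    """
    from openai import OpenAI  # imported lazily so the rest runs without the package

    client = OpenAI()  # reads OPENAI_API_KEY from the environment

    def generate(question):
        response = client.chat.completions.create(
            model=model,
            messages=[{"role": "user", "content": f"Answer the following question: {question}"}],
            temperature=temperature,
            max_tokens=max_tokens,
        )
        return (response.choices[0].message.content or "").strip()

    return generate


def answer_questions(questions, generate):
    """Call `generate` for each question; return (answered, failed) lists of dicts."""
    answered, failed = [], []
    for question in questions:
        try:
            answered.append({"Questions": question, "Answers": generate(question)})
        except Exception as exc:  # keep going if one request fails
            failed.append({"Questions": question, "Error": str(exc)})
    return answered, failed


# Dry run with a stub generator instead of the real API
def stub(question):
    if not question.strip():
        raise ValueError("empty question")
    return f"(draft answer to: {question})"


answered, failed = answer_questions(["What is data science?", " "], stub)
print(len(answered), len(failed))  # 1 1
```

With the real API, answered, failed = answer_questions(df["Questions"].tolist(), make_openai_generator()) followed by pd.DataFrame(answered) yields a dataframe with the same Questions and Answers columns as answers_df, while failed records which questions need a retry.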

Posting the Answers Back to Quora

Once we have obtained the answers, the next step is to post them back to Quora. This step is crucial for building our online presence, as it lets us engage with the community and showcase our expertise in data science. There are two ways to post answers on Quora: manually copying and pasting each answer into the answer box on the website, or using a script to automate the posting. Each has its own pros and cons, which should be weighed carefully before choosing one.

The manual method of posting answers is simple. It involves logging into Quora, navigating to the question page, and then manually copying and pasting the answer into the answer box. However, this method can be time-consuming and may not be practical for answering many questions.

The automated method of posting answers involves using a script or program to automate the process of logging into Quora, navigating to the question page, and posting the answer. This method can save a lot of time, but it also risks being detected by Quora’s anti-scraping system and getting your account suspended. Additionally, using an automated method may violate Quora’s terms of service. However, given the academic nature of this project, we will use a script for posting the answers back on Quora and demonstrate how the process can be automated entirely.

We add a loop to our script that iterates over the answers stored in the answers_df dataframe. For each answer, it navigates to the corresponding question page on Quora, clicks the “Write Answer” button, enters the answer, clicks the “Post” button, and selects the appropriate credentials for the user.

```python
# Iterate over the answers
for index, row in answers_df.iterrows():
    question = row["Questions"]
    answer = row["Answers"]
    try:
        # Go to the question page (assumes the URL slug is the question text
        # with spaces replaced by hyphens and the "?" removed)
        driver.get('https://www.quora.com/' + question.replace(' ', '-').replace('?', ''))
        time.sleep(3)

        # Click on the "Write Answer" button
        driver.find_element(By.XPATH, '//*[@id="mainContent"]/div[1]/div/div/div/div[2]/div/div/div[1]/button[1]/div/div[2]/div').click()
        time.sleep(3)

        # Enter the answer
        driver.find_element(By.XPATH, '//*[@id="root"]/div/div[2]/div/div/div/div/div[2]/div/div[2]/div[1]/div/div[1]/div[2]/div/div/div/div/div[1]/div/div').send_keys(answer)
        time.sleep(3)

        # Click on the "Post" button
        driver.find_element(By.XPATH, '//*[@id="root"]/div/div[2]/div/div/div/div/div[2]/div/div[2]/div[2]/div/div[2]/button').click()
        time.sleep(5)

        # Select your credentials
        driver.find_element(By.XPATH, '//*[@id="root"]/div/div[2]/div/div/div/div/div[2]/div/div[2]/div[2]/div/div[2]/button/div/div/div').click()
        time.sleep(5)
    except Exception as e:
        print(f"Error occurred while posting the answer for question: {question}")
        print(e)
```

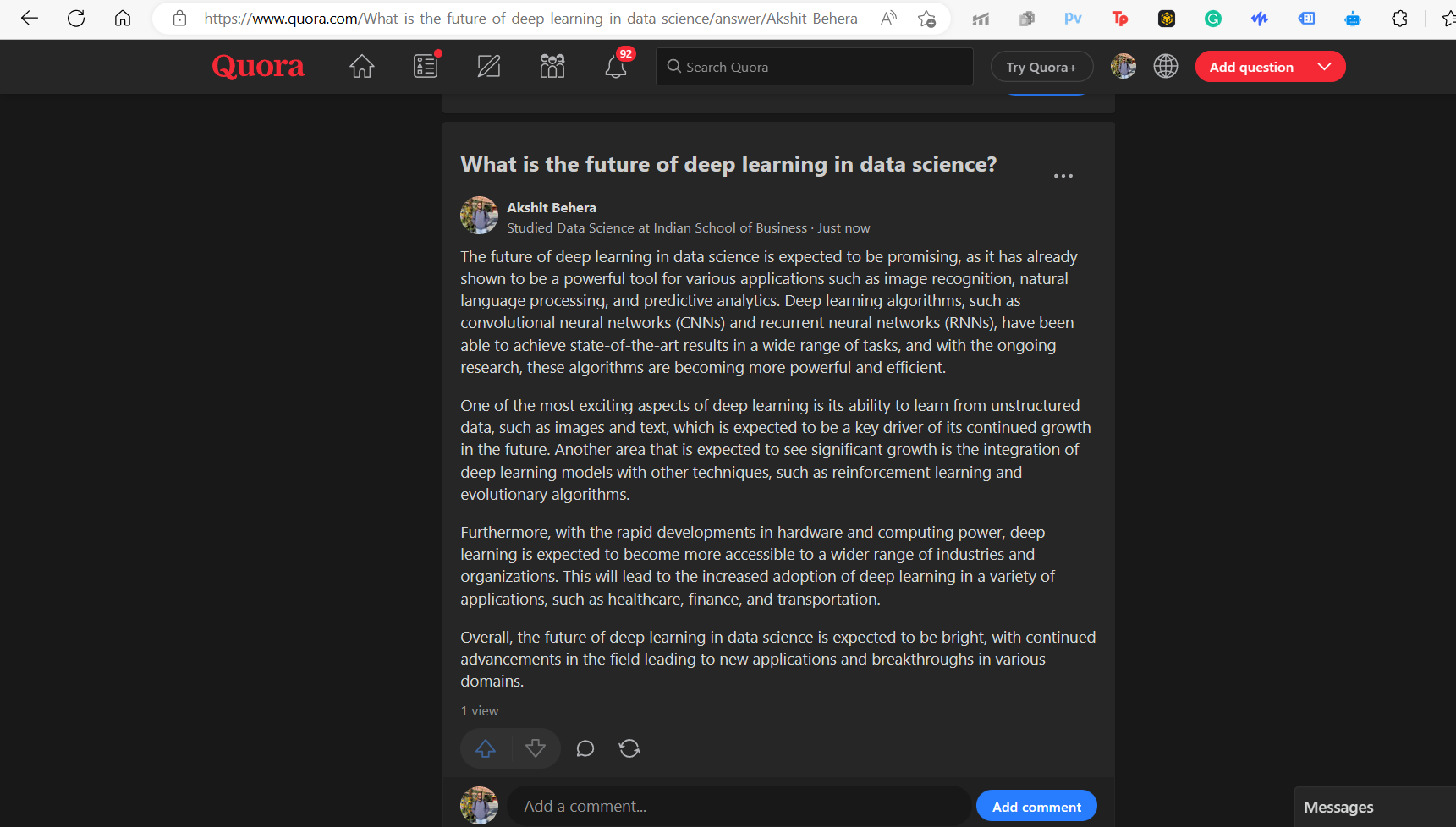

The screenshot above shows one of our answers posted on Quora.

Evaluating the Performance of the Tool

When evaluating the performance of the tool we have created, several factors should be considered. One key factor is the accuracy and relevance of the questions extracted from Quora: the tool should extract questions that are relevant to the topic and likely to interest the target audience. Additionally, the tool should generate answers that are accurate, informative, and well-written.

Another important factor to consider is the speed at which the tool can extract questions, generate answers, and post them back to Quora. With the use of web scraping and an AI language model, this tool can automate the process of answering questions, saving organizations significant time and resources. The ability of the tool to quickly and efficiently extract questions, generate answers, and post them back to Quora can be measured by the number of questions answered per unit of time.
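The throughput measure described above (questions answered per unit of time) is easy to record while the pipeline runs. The sketch below is a hypothetical timing wrapper: it times a batch of calls to a processing function with time.perf_counter() and reports successful questions per hour. Here, process is a stand-in for whatever extracts, answers, or posts one question in your script.

```python
import time


def measure_throughput(items, process):
    """Run `process` on each item and return (successes, successes_per_hour)."""
    start = time.perf_counter()
    successes = 0
    for item in items:
        try:
            process(item)
            successes += 1
        except Exception:
            pass  # failed items add elapsed time but no successes
    elapsed = time.perf_counter() - start
    per_hour = successes / elapsed * 3600 if elapsed > 0 else float("inf")
    return successes, per_hour


def fake_answer(question):
    # Stand-in for generating and posting one answer
    time.sleep(0.01)


done, rate = measure_throughput(["q1", "q2", "q3"], fake_answer)
print(done, round(rate))
```

Because failures still consume time, this figure reflects both speed and reliability, which makes it a more honest metric than the raw duration of successful calls alone.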

In our test run, the tool performed well in these areas: the questions extracted from Quora were relevant to data science, and the generated answers were informative and well-written. With only four questions, however, this is an anecdotal check rather than a systematic evaluation, and each answer still needs to be verified for factual accuracy. The tool can also automate the process of answering questions, which can save organizations a significant amount of time and resources. Further, the accuracy and relevance of the answers can be evaluated over time by monitoring the engagement they receive on Quora.

It is important to note that the tool’s performance can be improved by fine-tuning the parameters and settings used in the web scraping and AI language model.

How Does the Tool Help in Time & Cost Savings?

By using this tool, organizations can potentially save a significant amount of time and money that would otherwise be spent answering these questions manually. The exact savings are difficult to estimate, as they depend on factors such as the number of questions being answered, the complexity of the questions, and the efficiency of the automation process.

For example, if an organization answers 100 questions daily and it takes an average of 10 minutes to answer each question manually, the organization would spend 1,000 minutes, or roughly 16.7 hours, per day answering them. With this tool, the organization can automate the process and potentially reduce the time spent answering questions to a fraction of that amount.
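The arithmetic behind this example is easy to parameterize. In the sketch below, the manual figures (100 questions, 10 minutes each) come from the example above, while the 2 minutes of human review per automated answer is purely an assumption for illustration, since generated answers still need checking.

```python
def daily_hours(questions_per_day, minutes_per_question):
    """Total hours per day spent on questions at a given per-question cost in minutes."""
    return questions_per_day * minutes_per_question / 60


manual = daily_hours(100, 10)     # 1,000 minutes of manual answering
automated = daily_hours(100, 2)   # hypothetical: 2 minutes of review per answer
print(f"manual: {manual:.1f} h, with tool: {automated:.1f} h, saved: {manual - automated:.1f} h")
# manual: 16.7 h, with tool: 3.3 h, saved: 13.3 h
```

Changing the review assumption shows how quickly the savings shrink if every answer needs careful human editing.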

Similarly, organizations can save on labor costs by automating the process of answering questions. Instead of hiring a team of people to answer questions, organizations can use this tool to automate the process, reducing the need for additional staff.

Additionally, organizations can save on hiring outside agencies to build and manage their online presence.

These figures are only estimates; actual time and cost savings will depend on how each organization uses the tool.

Challenges & Limitations of Using Automated Tools

Using automated tools such as Selenium and the OpenAI API in answering questions on Quora can bring about many benefits for organizations. However, there are also certain challenges and limitations to consider when implementing this tool.

1. One major challenge is that Quora does not currently have a public API for developers. This means that the tool must rely on web scraping techniques to access the data, which can be more prone to errors and may not be as efficient as using an API. Additionally, as web scraping is against Quora’s terms of service, it could lead to an account ban if the tool is used excessively or in a way that goes against Quora’s policies.

2. The absence of an official API also makes the tool more susceptible to changes in the website’s layout and structure: if Quora changes its website, the tool’s script may need to be updated accordingly.

3. Another limitation is the cost of using the OpenAI API, which can be substantial for organizations that need to answer many questions.

4. Furthermore, as the tool is based on a predefined set of rules and instructions, it may not be able to adapt to unexpected situations or edge cases. It is therefore crucial to keep monitoring the answers after posting them on Quora to ensure that they do not break any of the platform’s rules and that they provide value to the community.
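For the API-cost point above, a rough monthly estimate needs only the token counts per question and the model's current prices, which are often quoted separately for input (prompt) and output (completion) tokens. Every number in the sketch below (token counts and prices per 1,000 tokens) is a placeholder to be replaced with figures from OpenAI's pricing page and your own usage; the function name is hypothetical.

```python
def monthly_api_cost(questions_per_day, prompt_tokens, completion_tokens,
                     price_in_per_1k, price_out_per_1k, days=30):
    """Estimate monthly spend from per-question token counts and prices per 1K tokens."""
    per_question = ((prompt_tokens / 1000) * price_in_per_1k
                    + (completion_tokens / 1000) * price_out_per_1k)
    return questions_per_day * days * per_question


# Placeholder figures only; look up current prices for the model you use
cost = monthly_api_cost(100, prompt_tokens=30, completion_tokens=400,
                        price_in_per_1k=0.001, price_out_per_1k=0.002)
print(f"Estimated monthly cost: ${cost:.2f}")
```

Since answers are much longer than questions, the completion-token price and the max_tokens budget usually dominate the estimate.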


Conclusion

In conclusion, this article demonstrated how organizations could leverage web scraping and AI to automate the process of building their presence on Quora. Using Selenium and ChatGPT, we extracted relevant questions from Quora, generated answers to them, and posted the answers back on the platform. This tool can bring significant time and cost savings for organizations, as they no longer have to manually search for questions to answer and spend time crafting responses. AI-generated answers can also be relevant and well-written, provided they are reviewed for accuracy.

However, it’s important to note that this approach has challenges and limitations. First, its success depends on the quality of the AI model used. Second, as Quora’s website structure and XPaths may change, the script may need to be updated accordingly. Finally, the lack of a public Quora API makes the tool harder to implement and maintain.

In summary, this tool provides organizations with a powerful way to build their online presence on Quora efficiently, and the key takeaways are:

1. One can increase their online presence and brand awareness on Quora and automate the process of finding and answering relevant questions

2. AI can be leveraged to produce relevant, well-written answers at scale, although they still need to be checked for accuracy

3. This can result in potential time and cost savings for the organization

4. One needs to be aware of the limitations and challenges of the approach and update the script accordingly if needed.

5. Monitoring and tracking the tool’s performance is required to ensure it’s meeting the organization’s goals.

6. The tool can be improved by using machine learning techniques such as fine-tuning the AI model with more data and monitoring the performance of the answers on Quora.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.