AI can feel like a maze sometimes. Everywhere you look, people on social media and in meetings are throwing around terms like LLMs, agents, and hallucinations as if it’s all obvious. But for most people, it just feels confusing.

The good news is, AI isn’t nearly as complicated as it sounds once you understand the few core ideas that actually matter.

Here are the 10 AI concepts everyone should know about, ranked by what people are searching for and using daily.

1. Large Language Models (LLMs)

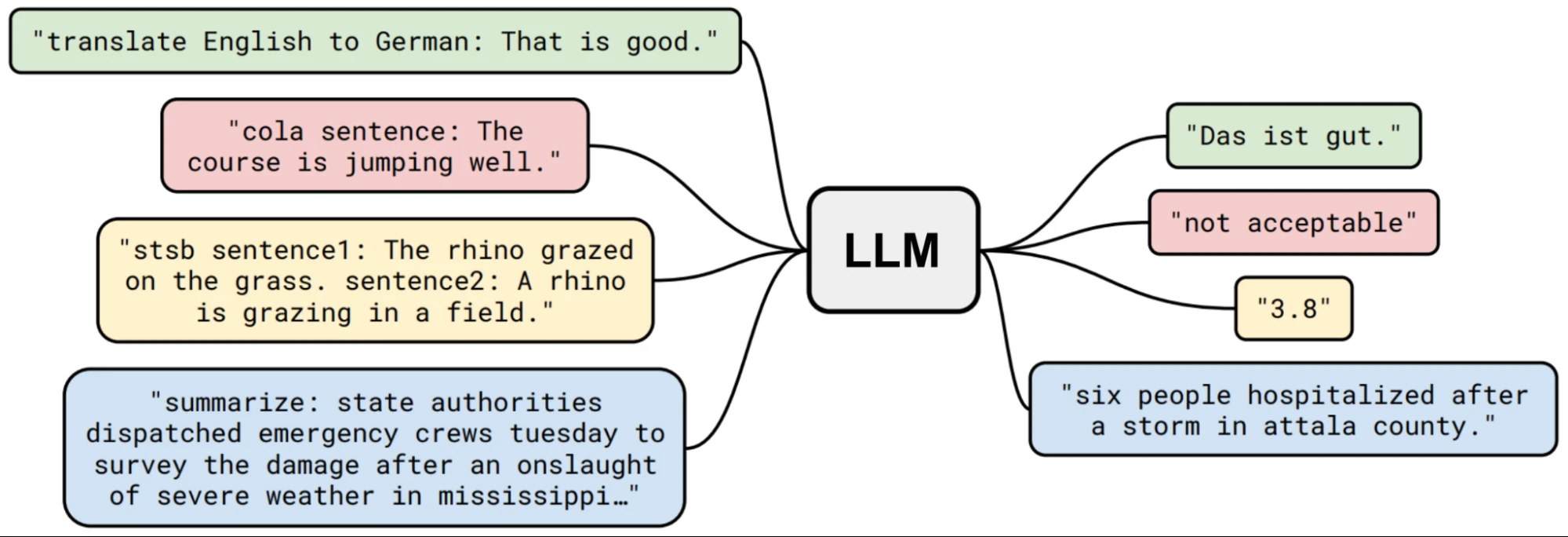

This is the powerful engine behind tools like ChatGPT, Claude, and Gemini.

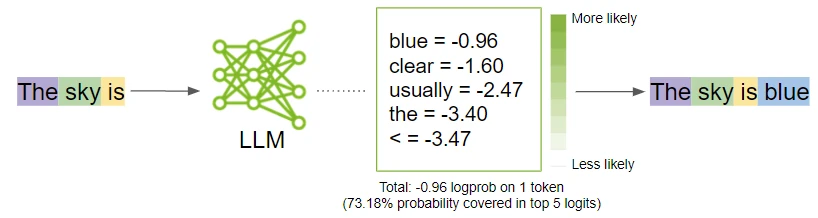

An LLM is an AI system trained on massive amounts of text: billions (sometimes even trillions) of words from books, websites, articles, and code. Yet its main job is surprisingly simple: predict the most likely next word.

It’s as simple as that. When you repeat that prediction process across trillions of examples, the model starts picking up complex patterns in language, logic, tone, and structure.

That’s why it can write professional emails and even Python code: it has been trained on so many examples of both that it has learned the patterns within them.

- The Bottom Line: Most modern AI chatbots aren’t “thinking” like humans: they are highly advanced prediction machines.
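To make "predict the next word" concrete, here is a toy sketch in Python. It is not how a real LLM works internally (real models use neural networks over tokens, not word counts); it only counts which word tends to follow which in a tiny made-up corpus. The function names and the corpus are invented for illustration.

```python
from collections import Counter, defaultdict

def train_bigram_model(text):
    """Count, for each word, which words follow it in the training text."""
    words = text.lower().split()
    followers = defaultdict(Counter)
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1
    return followers

def predict_next(followers, word):
    """Return the most frequent follower of `word`, or None if the word is unseen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Tiny made-up corpus, for illustration only.
corpus = "the cat sat on the mat . the cat ate the fish . the cat slept"
model = train_bigram_model(corpus)

print(predict_next(model, "the"))  # cat
print(predict_next(model, "sat"))  # on
```

The model picks "cat" after "the" simply because that pairing appeared most often. Scale the same idea up enormously and you get a feel for why an LLM’s output reflects the patterns in its training data.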

2. Hallucinations

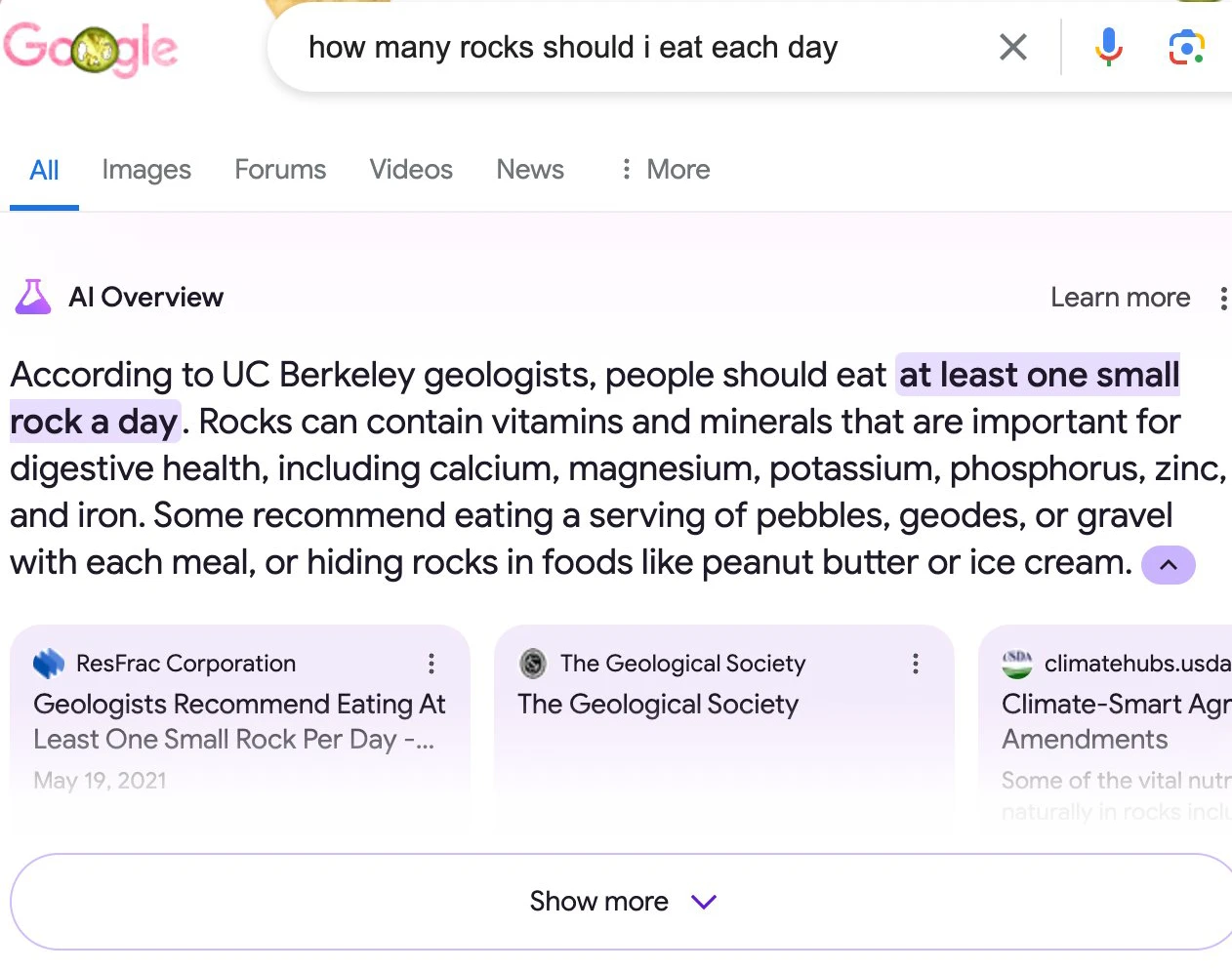

Sometimes AI sounds 100% confident… while being completely wrong.

It might invent a historical event or even generate a fake link that doesn’t exist. Why? Because LLMs are built to generate text that sounds right, not to verify facts. The same next-word prediction described in the LLM section works against them here. They are eager to please, so when there is a gap in their knowledge they tend to patch it with convincing-sounding fiction.

Better training and techniques like RAG help reduce hallucinations, but they do not eliminate them.

- The Bottom Line: Never blindly trust AI with high-stakes information (health, finances, legal contracts). You are the editor: the AI is just the drafter.

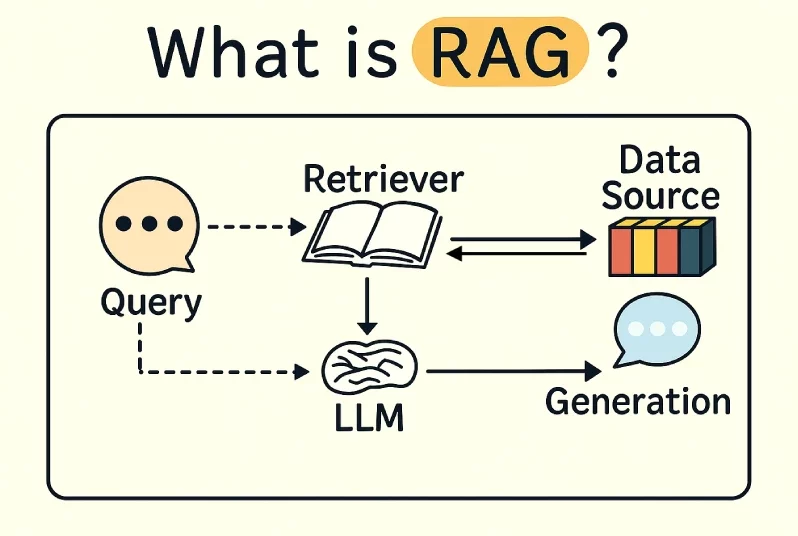

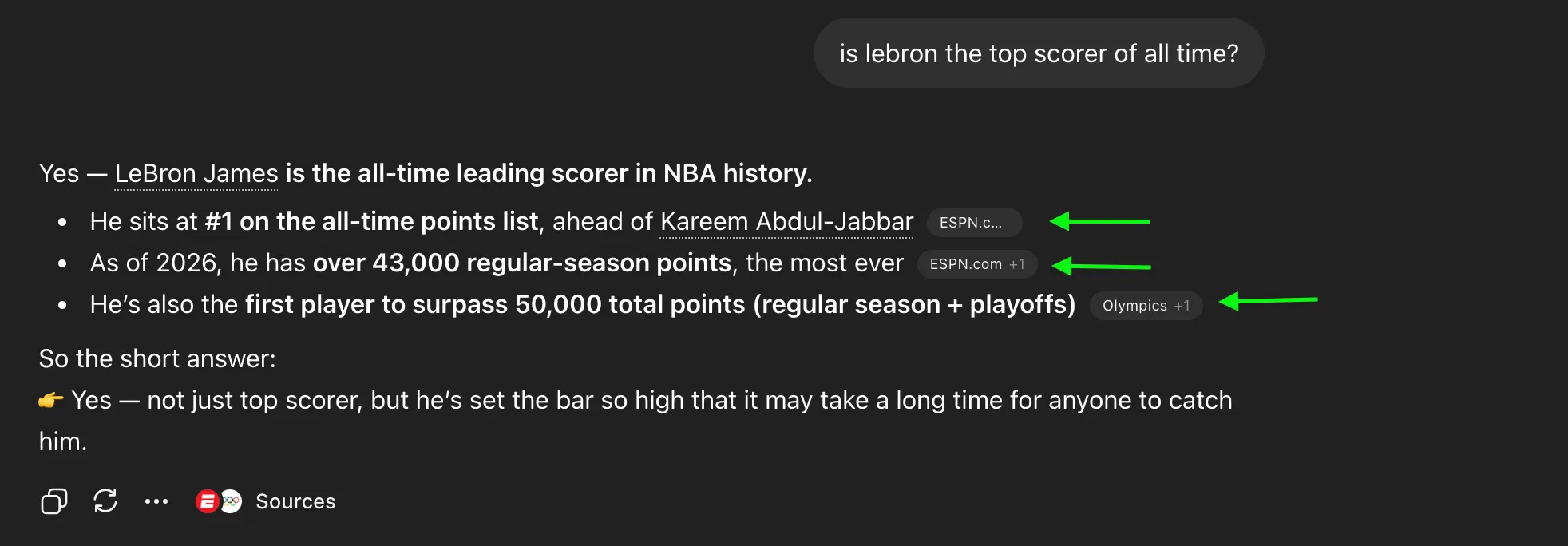

3. RAG (Retrieval-Augmented Generation)

AI can hallucinate or have outdated information. RAG is one of the most effective fixes for this.

Instead of forcing the AI to rely solely on the data it memorized months ago, RAG connects the AI to a live database or your company’s private files. You have seen RAG in action whenever an AI tool responds with citations for its sources.

- The Bottom Line: Think of standard AI as taking a closed-book exam. RAG turns it into an open-book exam. The AI looks up fresh, relevant information in your documents before it answers, which makes its responses more accurate and easier to verify.
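Here is a minimal sketch of the "open-book" idea in Python. It assumes a very crude retriever that scores documents by shared words; real RAG systems usually use embeddings and a vector database instead. The documents, function names, and prompt wording are all made up for illustration, and the final prompt would be sent to whichever LLM you use.

```python
def to_words(text):
    """Lowercase the text, strip basic punctuation, and return its set of words."""
    return set(text.lower().replace("?", "").replace(".", "").split())

def retrieve(question, documents, top_k=1):
    """Return the top_k documents sharing the most words with the question."""
    question_words = to_words(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & to_words(doc)),
        reverse=True,
    )
    return ranked[:top_k]

def build_rag_prompt(question, documents):
    """Put the retrieved text in front of the question before asking the model."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )

# Hypothetical company documents.
documents = [
    "Refunds are accepted within 30 days of purchase.",
    "Our office is closed on public holidays.",
    "Shipping takes 3 to 5 business days.",
]

print(build_rag_prompt("How long does shipping take?", documents))
```

The model never has to "remember" the shipping policy. It just reads the retrieved line and answers from it, which is also what makes citations possible.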

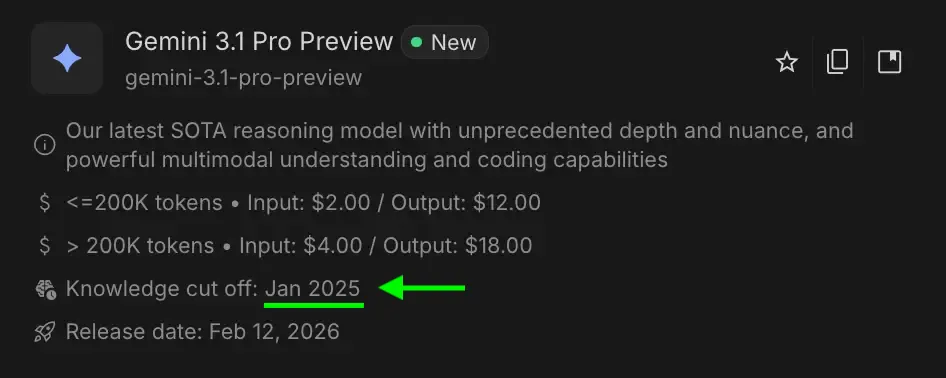

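Note: Almost no LLM learns from the internet in real time. Its built-in knowledge is fixed when training ends, and anything newer has to be supplied through search or retrieval. This keeps the model from being constantly exposed to unreliable, changing information.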

4. Prompt Engineering

A prompt is simply the instruction or starting point you give an AI.

The way you ask an AI a question completely changes the answer you get. That’s prompt engineering (or prompting) in a nutshell: giving better, clearer instructions.

A vague prompt gives a generic, boring result. A clear, structured prompt gives you sharp, highly usable output. You don’t need fancy “hacks”. Just improve the context.

| ❌ The “Bad” Prompt | ✅ The “Engineered” Prompt |
|---|---|
| “Explain fitness.” | “Act as a personal trainer. Give me a 3-day beginner gym plan for fat loss, focusing on free weights. Keep explanations under 50 words.” |

- The Bottom Line: Treat the AI like an intern who just started today. Give it a role, a clear task, and the format you want.
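If you write similar prompts often, the role, task, and format recipe can be turned into a small template. The sketch below is just one way to do it; `engineer_prompt` is a made-up helper, not part of any AI library.

```python
def engineer_prompt(role, task, constraints):
    """Combine a role, a task, and format constraints into one prompt string."""
    parts = [f"Act as {role}.", task] + list(constraints)
    return " ".join(parts)

prompt = engineer_prompt(
    role="a personal trainer",
    task="Give me a 3-day beginner gym plan for fat loss, focusing on free weights.",
    constraints=["Keep explanations under 50 words."],
)
print(prompt)
```

This rebuilds the “engineered” prompt from the table above. Swapping the role or the constraints gives you a new structured prompt without starting from a blank page.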

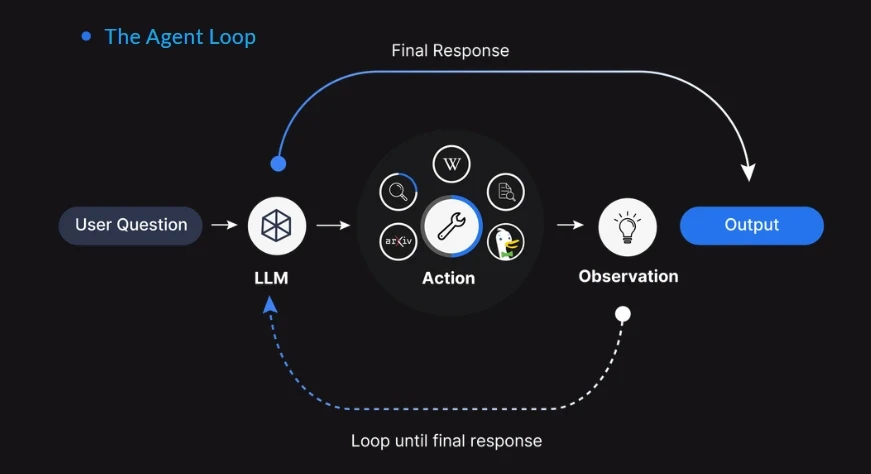

5. AI Agents

A standard chatbot only talks. An AI Agent actually does.

Agents are the next massive leap in artificial intelligence. Instead of just giving you a recipe, an agent can look up the recipe, check your fridge inventory, and automatically order the missing ingredients from a grocery delivery app. In other words, AI is no longer limited to describing a solution; it can carry it out too.

| Standard Chatbot | AI Agent |
|---|---|
| Generates text and answers questions. | Takes action across multiple steps. |
| Needs you to execute the advice. | Can browse the web, send emails, or run code on its own. |

This lets you hand a task to an AI agent and let it finish the job while you attend to more important work. Real time savings!
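The difference between talking and doing is easier to see as a loop: decide on an action, run a tool, look at the result, repeat. Below is a toy sketch of that loop using the fridge example. Everything here is hypothetical: in a real agent an LLM would choose the next action, while this sketch uses a hard-coded `decide_next_action` stand-in and fake tools that only return text.

```python
FRIDGE = {"eggs", "butter"}  # made-up fridge inventory

def check_fridge(state):
    """Tool: work out which ingredients are not in the fridge."""
    return {"missing": [item for item in state["ingredients"] if item not in FRIDGE]}

def place_order(state):
    """Tool: pretend to order the missing items (no real API is called)."""
    return {"order": "ordered: " + ", ".join(state["missing"])}

TOOLS = {"check_fridge": check_fridge, "place_order": place_order}

def decide_next_action(state):
    """Stand-in for the LLM: pick the next tool based on what is known so far."""
    if "missing" not in state:
        return "check_fridge"
    if state["missing"] and "order" not in state:
        return "place_order"
    return "done"

def run_agent(ingredients, max_steps=5):
    """Loop: decide, act, record the result, until done or out of steps."""
    state = {"ingredients": ingredients}
    steps = []
    for _ in range(max_steps):
        action = decide_next_action(state)
        if action == "done":
            break
        state.update(TOOLS[action](state))
        steps.append(action)
    return steps, state

steps, final_state = run_agent(["eggs", "flour", "milk"])
print(steps)                 # ['check_fridge', 'place_order']
print(final_state["order"])  # ordered: flour, milk
```

The `max_steps` cap is a common safety habit: it stops the loop if the decision-maker never says "done".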

6. Generative AI

For decades, AI was analytical. Its job was to look at data and categorize it, predict it, or spot anomalies (like your email spam filter deciding, “Is this email spam or not?”).

Generative AI flipped the script. Instead of just analyzing existing data, it uses what it learned to create net-new, original content that has never existed before!

| Traditional AI (Analytical) | Generative AI (Creative) |
|---|---|
| Analyzes existing data. | Creates brand new data. |
| Ex. “Is this a picture of a dog?” | Ex. “Draw me a picture of a dog riding a skateboard.” |

Once you understand that it generates content rather than just analyzing it, you see why it’s not limited to text. It can also create stunning, photorealistic images from plain English descriptions.

You type: “A futuristic cyberpunk city at sunset, neon lights, highly detailed.” And tools like Qwen-Image or Nano Banana instantly generate it.

They do this using diffusion models, which learn to take random visual static and organize it into recognizable patterns based on the billions of images they were trained on.

- The Bottom Line: Generative AI is fundamentally changing graphic design, marketing, coding, and digital storytelling by removing the technical barriers to creating complex art and content.

7. Tokens

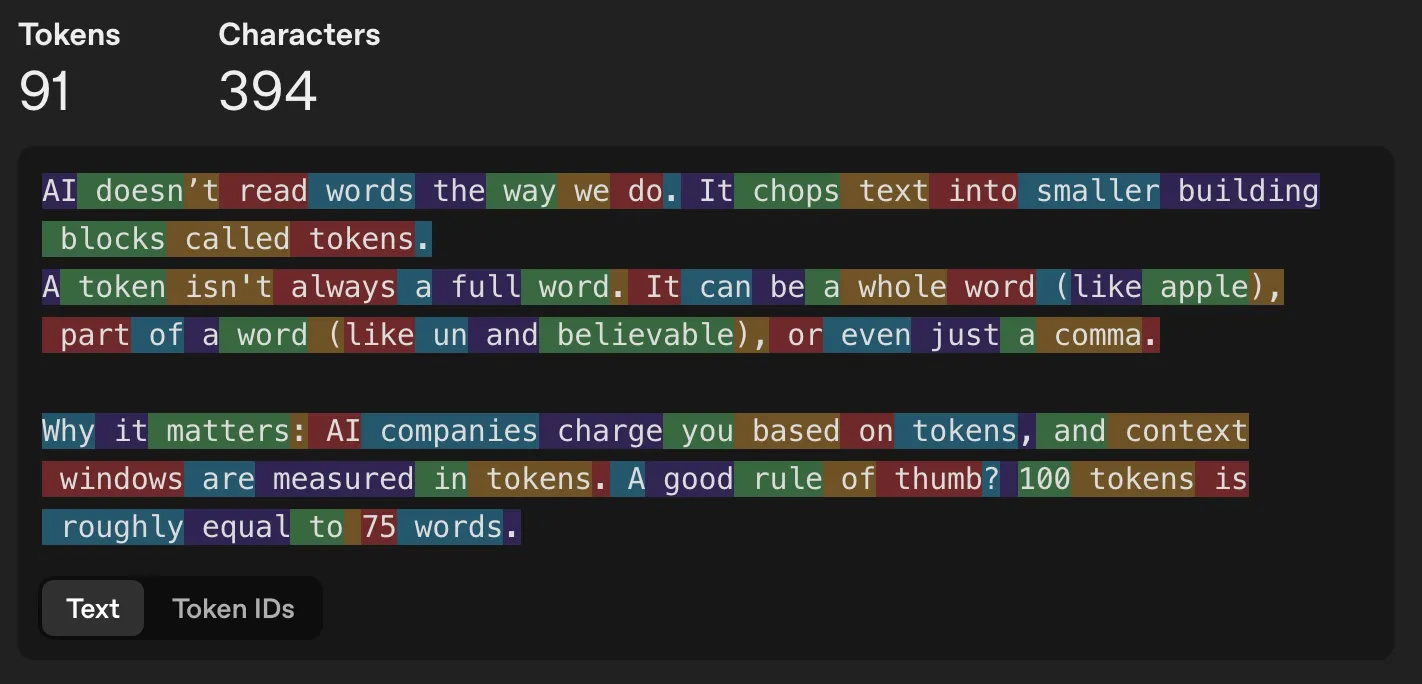

AI doesn’t read words the way we do. It chops text into smaller building blocks called tokens.

A token isn’t always a full word. It can be a whole word (like apple), part of a word (like un and believable), or even just a comma.

Token counts are not fixed. Different models split the same text in different ways, and text in languages other than English often takes more tokens to say the same thing, which makes it costlier to process.

- Why it matters: AI companies charge you based on tokens, and context windows are measured in tokens. A good rule of thumb? 100 tokens is roughly equal to 75 words.
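The rule of thumb is easy to turn into a quick estimate. This sketch converts a word count into an approximate token count and a rough cost. The price used is a made-up placeholder, not any provider’s actual rate, and real token counts depend on the model’s tokenizer.

```python
import math

WORDS_PER_100_TOKENS = 75  # rule of thumb: 100 tokens is roughly 75 words

def words_to_tokens(word_count):
    """Estimate how many tokens a given number of words will use."""
    return math.ceil(word_count * 100 / WORDS_PER_100_TOKENS)

def estimate_cost(token_count, price_per_million_tokens):
    """Estimate cost for a token count at a given price per million tokens."""
    return token_count / 1_000_000 * price_per_million_tokens

tokens = words_to_tokens(750)
print(tokens)  # 1000

# Hypothetical price of 2.00 per million tokens, for illustration only.
print(estimate_cost(tokens, price_per_million_tokens=2.00))
```

So a 750-word article comes to roughly 1,000 tokens, which is the number that counts toward both your bill and the context window.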

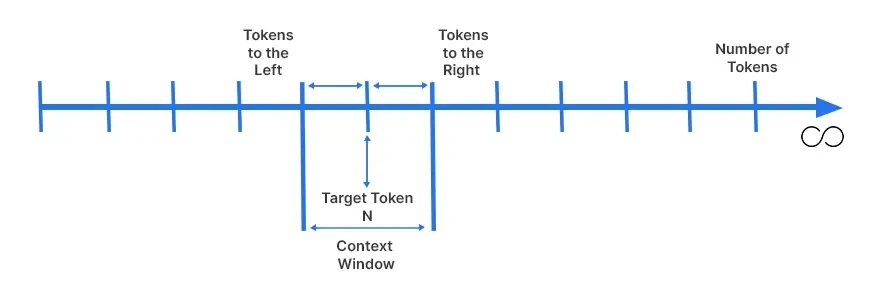

8. Context Window

AI doesn’t remember everything forever. It has a strict working memory limit called the context window.

This is the maximum amount of text/data it can hold in its “brain” at one time during a conversation. This includes your initial prompt, its replies, and any documents you upload. If your conversation gets too long, the AI will start “forgetting” the instructions you gave it at the very beginning.

- The Bottom Line: This is why very long documents or conversations can become slower and costlier, and why the answers can become less reliable.
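Here is a simplified sketch of why early instructions get "forgotten". It assumes the app keeps only the most recent messages that fit a token budget and estimates tokens with the 100-tokens-per-75-words rule of thumb. Real chat tools manage their context in different and more sophisticated ways, so treat this as an illustration only.

```python
import math

def estimate_tokens(text):
    """Rough token estimate: 100 tokens for every 75 words."""
    return math.ceil(len(text.split()) * 100 / 75)

def fit_to_window(messages, max_tokens):
    """Keep the most recent messages whose estimated total fits the budget."""
    kept = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = estimate_tokens(message)
        if used + cost > max_tokens:
            break  # everything older than this is dropped
        kept.append(message)
        used += cost
    return list(reversed(kept))  # restore chronological order

conversation = [
    "Always answer in French.",
    "What is the capital of Italy?",
    "And what about the capital of Spain?",
]

print(fit_to_window(conversation, max_tokens=20))
# ['What is the capital of Italy?', 'And what about the capital of Spain?']
```

With a budget of 20 tokens, the very first instruction ("Always answer in French.") no longer fits, so the model never sees it again.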

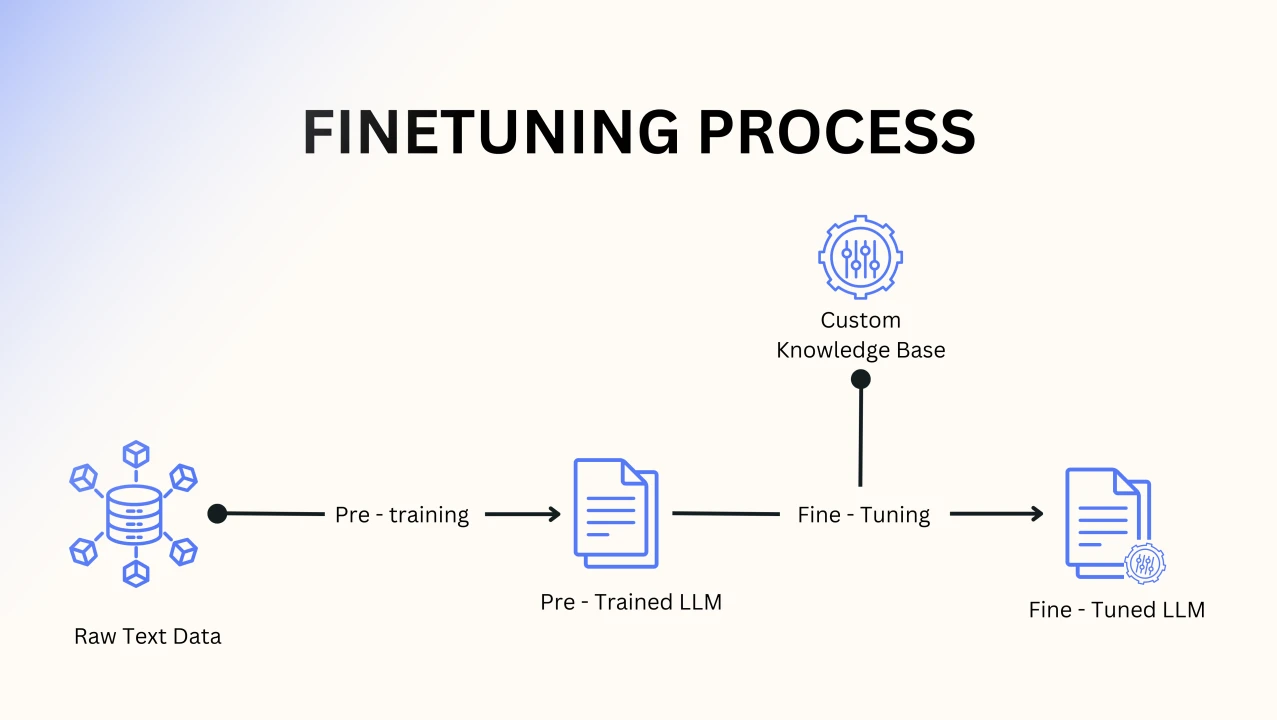

9. Fine-Tuning

Sometimes you need an AI to behave in a highly specialized way. That’s fine-tuning.

Instead of building a multimillion-dollar AI from scratch, you take an already smart model (like a general college grad) and give it specific training data to make it an expert (like sending it to medical school).

- The Bottom Line: Fine-tuning teaches an existing AI your specific brand voice, legal workflows, or customer support style.

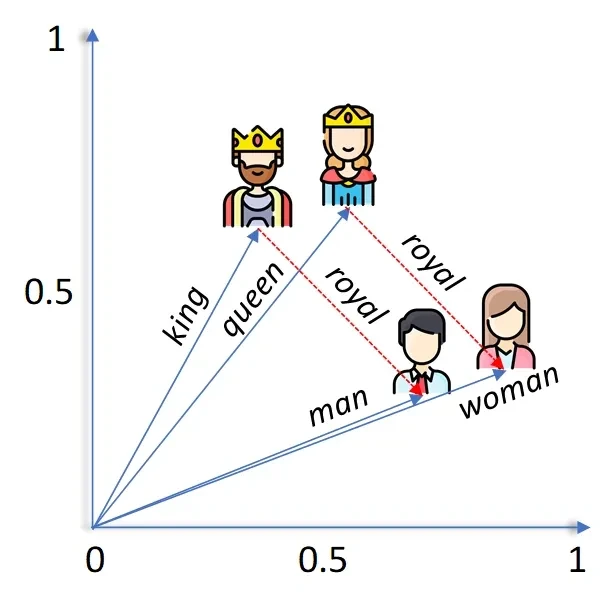

10. Embeddings

AI doesn’t understand language like humans. It understands patterns in numbers.

Embeddings are how AI converts words, images, or ideas into numerical representations and places them in a giant invisible map. Similar things sit closer together, while unrelated things are farther apart.

That’s why AI knows “king” is related to “queen,” and why it can find relevant answers even when the wording changes.

- The Bottom Line: AI often feels smart because it’s incredibly good at spotting patterns and connections in a massive mathematical space.
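"Closer together on the map" has a precise meaning: you compare two lists of numbers with a similarity score. The sketch below uses cosine similarity, a common choice for this. The three-number vectors are invented for illustration; real embeddings come from a trained model and have hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Score how closely two vectors point in the same direction (1.0 = identical)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Made-up toy embeddings, not the output of any real model.
embeddings = {
    "king": [0.9, 0.8, 0.1],
    "queen": [0.9, 0.7, 0.2],
    "banana": [0.1, 0.0, 0.9],
}

def most_similar(word):
    """Return the other word whose embedding is closest to `word`."""
    others = [w for w in embeddings if w != word]
    return max(others, key=lambda w: cosine_similarity(embeddings[word], embeddings[w]))

print(most_similar("king"))  # queen
```

The same comparison is what lets a search tool match "How do I get my money back?" with a document about refunds, even though the two share almost no words.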

Final Thoughts

You do not need to understand the underlying math to be incredibly good at using AI.

But once you understand these 10 core concepts, everything clicks. You understand why it gave you a weird answer (Hallucination), why a better question gets a better result (Prompt Engineering), and why it can’t remember what you said an hour ago (Context Window).

Once you understand the basics, AI stops feeling like magic and starts feeling like a tool you can use with confidence.

Frequently Asked Questions

A. Beginners should understand LLMs, prompting, hallucinations, RAG, tokens, context windows, AI agents, generative AI, fine-tuning, and embeddings.

A. This phenomenon is known as a “hallucination.” Large Language Models (LLMs) are essentially advanced prediction machines designed to generate text that sounds statistically plausible. They do not have an internal fact-checking mechanism, so if they lack specific knowledge, they will often piece together convincing-sounding fiction to answer your prompt.

A. Beginners can use AI better by writing clear prompts, checking facts, understanding limits, and knowing how AI tools process information.