Most AI tools forget you as soon as you close the browser window, so every interaction starts as if you were a new user. Persistent AI agents address this problem by keeping context and running entire workflows on their own infrastructure. MaxClaw is one of the best in this category. Developed by MiniMax, it runs entirely in the cloud: there is nothing to download, configure, or maintain.

MaxClaw is powered by the MiniMax M2.5 foundation model and built on the open-source OpenClaw framework. It runs as an always-on AI agent that remembers previous interactions and talks to users through messaging platforms such as Telegram, Discord, and Slack. In this technical article, we’ll examine MaxClaw’s architecture, core features, real-world use cases, and how it compares to alternatives in the growing Claw AI agent ecosystem.

What is MaxClaw?

Released on February 25, 2026, MaxClaw by MiniMax is an all-in-one AI agent built on three components:

- A wrapper around the open-source OpenClaw framework, which serves as its operational backbone.

- The M2.5 foundation model from MiniMax, which provides natural-language understanding.

- MiniMax’s cloud infrastructure, which handles management and deployment around the clock.

Together, these three components make MaxClaw both technically advanced and accessible. It is easy for non-technical users to set up and use as an assistant, and it also serves as a development platform for building and automating complex agent workflows.

Core Features of MaxClaw

Here are the core features of MaxClaw:

1. Easy Application Deployment

MaxClaw’s deployment speed stands out among SaaS applications: deploying an agent takes about 10 seconds and a single click on the Deploy Now button in the MiniMax Agent Platform. There is no server provisioning, binary compilation, or dependency management.

With this zero-friction setup, users without DevOps or cloud infrastructure experience get the same access to enterprise-level AI agents as experienced engineers, which is a significant improvement in AI accessibility.

2. Persistent Memory

MaxClaw provides persistent long-term memory of more than 200,000 tokens and runs entirely in the MiniMax cloud environment. Most chatbots are stateless, meaning they do not retain context across sessions. In contrast, MaxClaw retains previous conversations, learns user preferences, and builds a better understanding of the user’s working style with each interaction.

This memory makes the agent more useful with every interaction, which is a differentiator for productivity-focused use cases.
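To make the stateless-versus-persistent distinction concrete, here is a minimal Python sketch. It is not MaxClaw’s storage layer, which the article does not describe; the `SessionMemory` class, the JSON file, and the `report_format` key are all hypothetical names chosen for illustration.

```python
import json
import tempfile
from pathlib import Path


class SessionMemory:
    """Hypothetical file-backed memory; not MaxClaw's actual storage layer."""

    def __init__(self, path):
        self.path = Path(path)
        # Reload whatever an earlier session saved, or start empty.
        if self.path.exists():
            self.facts = json.loads(self.path.read_text(encoding="utf-8"))
        else:
            self.facts = {}

    def remember(self, key, value):
        # Persist on every write so the fact outlives this process.
        self.facts[key] = value
        self.path.write_text(json.dumps(self.facts), encoding="utf-8")

    def recall(self, key, default=None):
        return self.facts.get(key, default)


def demo():
    """Simulate two separate sessions that share one memory file."""
    with tempfile.TemporaryDirectory() as tmp:
        store = Path(tmp) / "memory.json"
        first_session = SessionMemory(store)
        first_session.remember("report_format", "bullet summary")

        # A new object stands in for a fresh session after a restart.
        second_session = SessionMemory(store)
        return second_session.recall("report_format")
```

A stateless chatbot corresponds to creating the second object without the file: the preference would simply be gone.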

3. Multi-Platform Native Integration

MaxClaw connects directly to Telegram, Discord, and Slack through one-click native integrations, so users can reach the agent from the communication platforms they already use instead of switching between separate AI tools.

This integration keeps users in their current tasks without interruption, which helps both teams and power users work more efficiently. By contrast, the self-hosted OpenClaw requires users to set up webhooks and manage bot tokens themselves before these integrations work.
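As a rough idea of the manual work a self-hosted setup involves, the sketch below builds the request URL for the public Telegram Bot API `setWebhook` method. It only constructs the URL and makes no network call. The token and callback address in the usage note are placeholders, and how OpenClaw itself wires up its webhooks is not covered in this article, so treat this as an illustration of the general pattern rather than OpenClaw’s procedure.

```python
from urllib.parse import urlencode

TELEGRAM_API_BASE = "https://api.telegram.org"


def build_set_webhook_url(bot_token, public_url):
    """Return the Telegram Bot API setWebhook request URL (no network call)."""
    # The callback address must be URL-encoded when passed as a query parameter.
    query = urlencode({"url": public_url})
    return f"{TELEGRAM_API_BASE}/bot{bot_token}/setWebhook?{query}"
```

Calling `build_set_webhook_url("123:ABC", "https://example.com/hook")` with those placeholder values yields a URL that an operator would then request once per bot, in addition to storing the token securely and keeping the callback server reachable.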

4. Powerful Toolchain and Agentic Capabilities

MaxClaw bundles the full OpenClaw toolset, giving users access to a wide range of agentic functions, including:

- Web browsing and real-time research

- Code execution and generation

- File analysis and document processing

- Automation scripts and scheduled task management

- Multi-step reasoning and workflow orchestration

With these functions, MaxClaw can carry out advanced multi-step processes autonomously, such as researching a topic, writing a report, executing code, and delivering the final output, without human input at any stage.
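The shape of such a multi-step workflow can be sketched in a few lines of Python. The three tool functions below are hypothetical stand-ins that return canned data; they are not OpenClaw or MaxClaw APIs, and a real agent would let the model decide which tool to call next instead of following a fixed order.

```python
def research(topic):
    # Stand-in for a web-browsing tool; returns canned notes.
    return [f"note about {topic} #{i}" for i in (1, 2)]


def write_report(notes):
    # Stand-in for a report-generation step.
    return "\n".join(f"- {note}" for note in notes)


def deliver(report, channel):
    # Stand-in for posting the result to a chat platform.
    return {"channel": channel, "body": report}


def run_workflow(topic, channel):
    """Run each step in order and record a trace of what ran."""
    trace = []
    notes = research(topic)
    trace.append("research")
    report = write_report(notes)
    trace.append("write_report")
    message = deliver(report, channel)
    trace.append("deliver")
    return message, trace
```

The point of the sketch is the chaining: each step consumes the previous step’s output, and no human approval sits between them.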

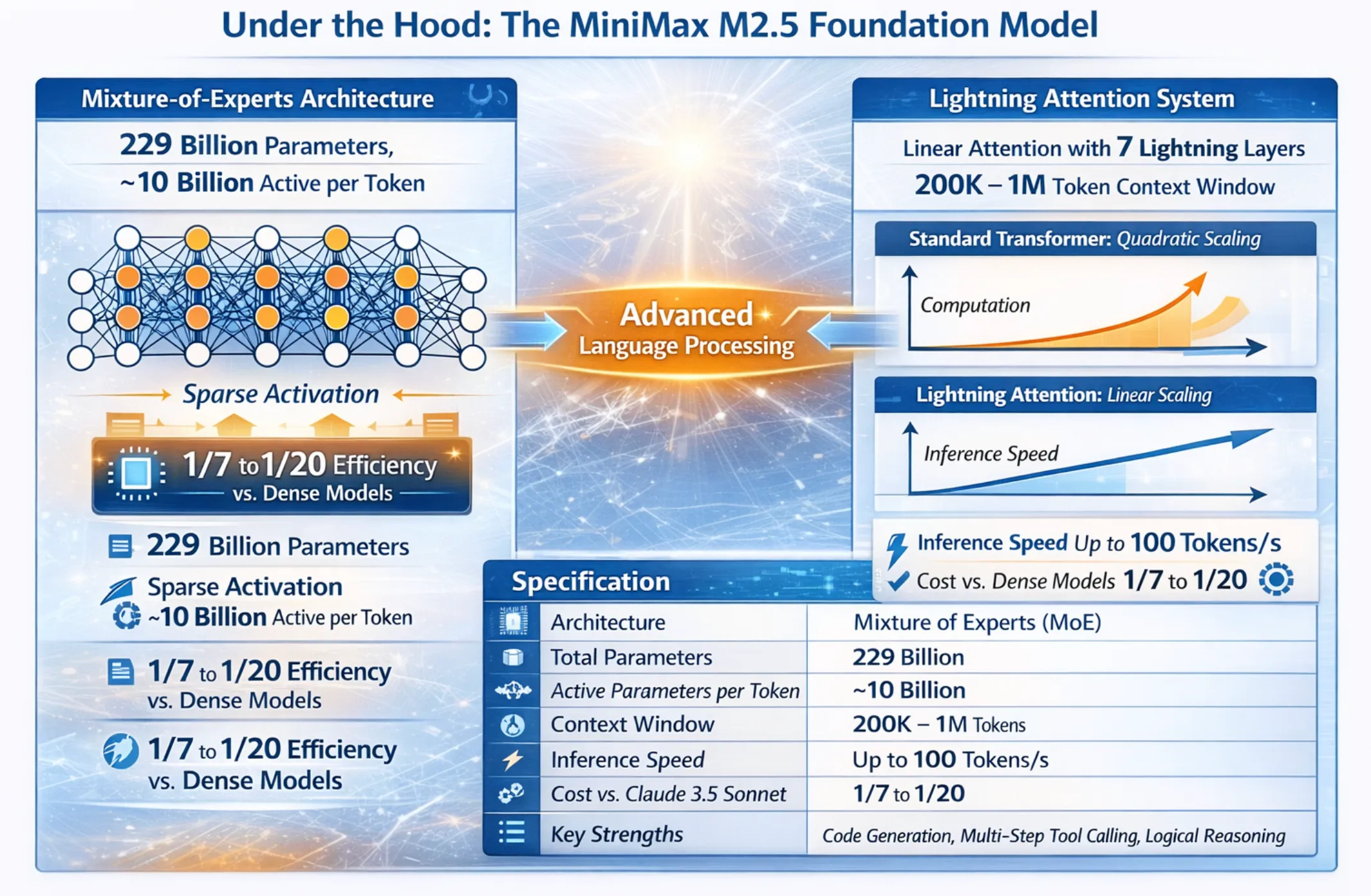

Under the Hood: The MiniMax M2.5 Foundation Model

MaxClaw’s intelligence comes from MiniMax M2.5, a foundation model that combines a Mixture-of-Experts (MoE) design with MiniMax’s Lightning Attention mechanism.

Mixture-of-Experts Architecture

The M2.5 model contains 229 billion total parameters, but sparse activation means only about 10 billion of them are active per token. With this MoE design, the model achieves reasoning performance comparable to dense frontier models while requiring only one-seventh to one-twentieth of the processing power. In practice, MaxClaw users get frontier-level intelligence from a system that runs 80 to 90 percent more efficiently than standard dense systems, depending on the workload.
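Sparse activation is easier to see in code. The sketch below shows generic top-k expert routing, the common MoE pattern, in plain Python. It is an assumption-laden illustration, not M2.5’s actual router: the gate scores, the number of experts, and the value of `k` are made up. The `active_fraction` helper only restates the arithmetic from the figures above (about 10 billion of 229 billion parameters, roughly 4.4 percent).

```python
import math


def top_k_route(gate_logits, k):
    """Pick the k highest-scoring experts and renormalize their weights."""
    # Softmax over all experts (shifted by the max for numerical stability).
    peak = max(gate_logits)
    exps = [math.exp(x - peak) for x in gate_logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    # Keep only the top-k experts; the others do no work for this token.
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    weight_sum = sum(probs[i] for i in chosen)
    return {i: probs[i] / weight_sum for i in chosen}


def active_fraction(active_params, total_params):
    # Share of the model's parameters that participate in one token.
    return active_params / total_params
```

For example, `top_k_route([0.1, 2.0, -1.0, 1.5], 2)` selects experts 1 and 3 and gives them weights that sum to 1, while experts 0 and 2 are skipped entirely for that token.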

Lightning Attention: Eliminating Quadratic Complexity

The standard Transformer architecture has a fundamental scaling limitation: the compute required by softmax attention grows quadratically with context length, so doubling the context roughly quadruples the attention cost. MiniMax addresses this with Lightning Attention, a linear attention mechanism that stays efficient as the context grows. The M2.5 model pairs seven Lightning Attention layers with a single SoftMax attention layer to process context windows of 200,000 to 1 million tokens at inference speeds of up to 100 tokens per second.
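To illustrate the scaling difference, here is a back-of-the-envelope cost model in Python. It uses the textbook operation counts for softmax attention and for linear attention in general, not measurements of Lightning Attention itself. The `hybrid_layout` helper assumes the single softmax layer comes last in each group of eight; the text above gives the 7:1 ratio but not the ordering.

```python
def softmax_attention_cost(seq_len, head_dim):
    # Every token attends to every other token: O(n^2 * d).
    return seq_len * seq_len * head_dim


def linear_attention_cost(seq_len, head_dim):
    # Each token updates and reads a d x d running state: O(n * d^2).
    return seq_len * head_dim * head_dim


def hybrid_layout(num_blocks, linear_per_block=7):
    """Layer types for blocks of 7 linear layers followed by 1 softmax layer."""
    # Assumption: the softmax layer is placed last in each block.
    block = ["lightning"] * linear_per_block + ["softmax"]
    return block * num_blocks
```

Under this model, doubling `seq_len` multiplies the softmax cost by four but the linear cost by only two, which is why the gap widens quickly at context lengths in the hundreds of thousands of tokens.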

| Specification | Value |
|---|---|
| Architecture | Mixture of Experts (MoE) |
| Total Parameters | 229 Billion |
| Active Parameters per Token | ~10 Billion |
| Context Window | 200K – 1M Tokens |
| Inference Speed | Up to 100 Tokens/s |
| Cost vs. Claude 3.5 Sonnet | 1/7 to 1/20 |
| Key Strengths | Code generation, multi-step tool calling, logical reasoning |

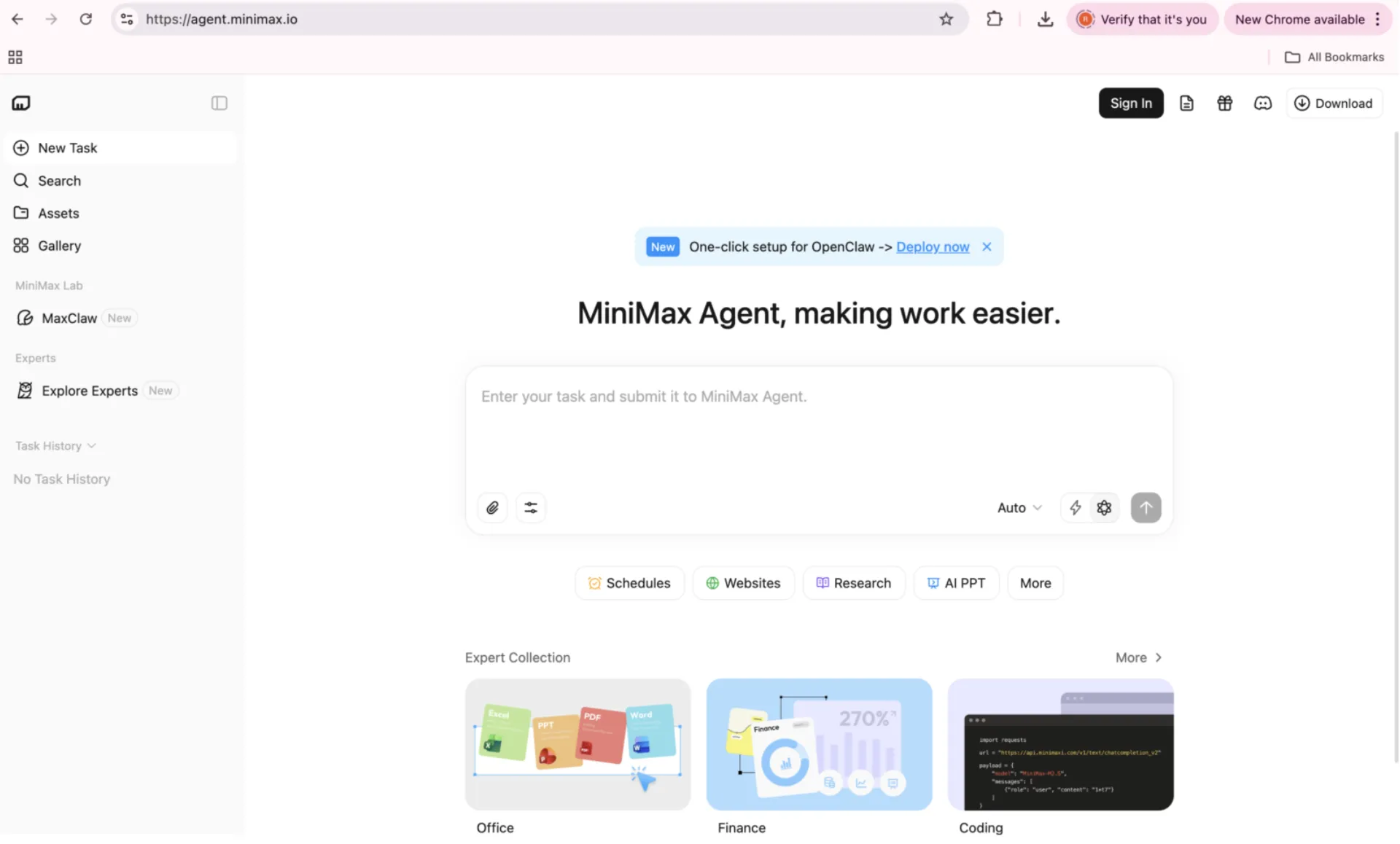

Getting Started with MaxClaw

Deploying MaxClaw requires no technical skills and takes less than a minute:

1. Open the MiniMax Agent platform at agent.minimax.io.

2. Choose MaxClaw from the left navigation menu on the dashboard.

3. Click the “Deploy Now” button to start the agent deployment, which completes within about 10 seconds.

4. Follow the on-screen instructions to connect Telegram, Discord, and Slack.

MiniMax Agent provides a free tier for initial exploration, with usage-based pricing for production workloads.

Who is MaxClaw for?

MaxClaw serves a diverse user base across technical and non-technical profiles:

- General Users: Individuals who want powerful AI agent capabilities without any technical setup. One-click deployment and native integrations make the platform accessible to everyone.

- Developers and Researchers: Engineers who need advanced automation, long-text evaluation, and coding tools that support multi-step agentic operations. The M2.5 model’s coding and reasoning performance makes it a capable development partner.

- Teams and Individuals: Users who rely on Telegram, Discord, and Slack for work and want AI that operates inside their existing workflow without forcing them to switch tasks.

- Organizations: Businesses that want an affordable, maintenance-free cloud AI assistant to automate operations such as scheduled content processing, monitoring, and analysis without incurring high costs.

MaxClaw vs. OpenClaw vs. KimiClaw: Detailed Comparison

The Claw ecosystem spans managed cloud services, self-hosted platforms, and ultra-lightweight runtimes. Here is a side-by-side comparison of the primary variants that we came across in the last few days:

| Feature | MaxClaw | OpenClaw | KimiClaw | ZeroClaw | PicoClaw |
|---|---|---|---|---|---|
| Developer | MiniMax | Community (OS) | Moonshot AI | Independent (OS) | Sipeed (OS) |
| Foundation Model | MiniMax M2.5 | Bring Your Own | Kimi K2.5 | Bring Your Own | Bring Your Own |
| Runtime | Node.js (Cloud) | Node.js | Node.js (Cloud) | Rust | Go |
| Memory | 200K+ Tokens | 1.5 GB+ RAM | ~40 GB Storage | ~7.8 MB RAM | <10 MB RAM |
| Deployment | 10s Cloud Setup | Local / Docker | Browser / Cloud | System Daemon | Embedded / IoT |
| Cost vs. Claude 3.5 | ~1/10 | API + Server costs | Platform Credits | API + Hardware | API + Hardware |
| Setup Complexity | None (1-click) | High | Low–Medium | High | Very High |
| Long-Term Memory | Yes (managed) | Manual config | Yes (cloud storage) | Limited | No |
| Platform Integrations | Telegram, Discord, Slack | Manual setup required | Browser-native | CLI-based | Hardware-specific |
| Best For | Productivity, complex workflows | Full privacy, self-host | Browser-centric tasks | Edge, high performance | IoT, embedded systems |

Conclusion

If you want to introduce an AI agent into your workflow, MaxClaw makes deploying autonomous agents more accessible and economical than before. It combines the MiniMax M2.5 model’s Mixture-of-Experts architecture and Lightning Attention with OpenClaw’s flexible ecosystem and a fully managed cloud service, which makes it a compelling offering across user types.

For non-technical users, MaxClaw removes the setup barriers to deploying an AI agent. For developers, it delivers a powerful, cost-effective agentic platform without infrastructure overhead. For organizations that run high-volume automated workflows, its low cost opens the door to uses of AI agents previously considered too expensive to implement.