This article was published as a part of the Data Science Blogathon

Pre-requisites

- Basic Knowledge of Natural Language Processing

- Hands-on practice of Python

Introduction

Text data carries meaning, and it is being generated every moment in different formats such as SMS, reviews, emails, and so on. The main purpose of this article is to understand the basic ideas of NLP using the SpaCy library. So let’s go ahead.

In this article, we are going to see how to perform natural language processing tasks in a simple way using a popular library named “SpaCy”. Natural Language Processing (NLP) is a subfield of Artificial Intelligence concerned with the interaction between machines and human languages. It is the practice of having computers analyze, understand, and extract meaning from human language.

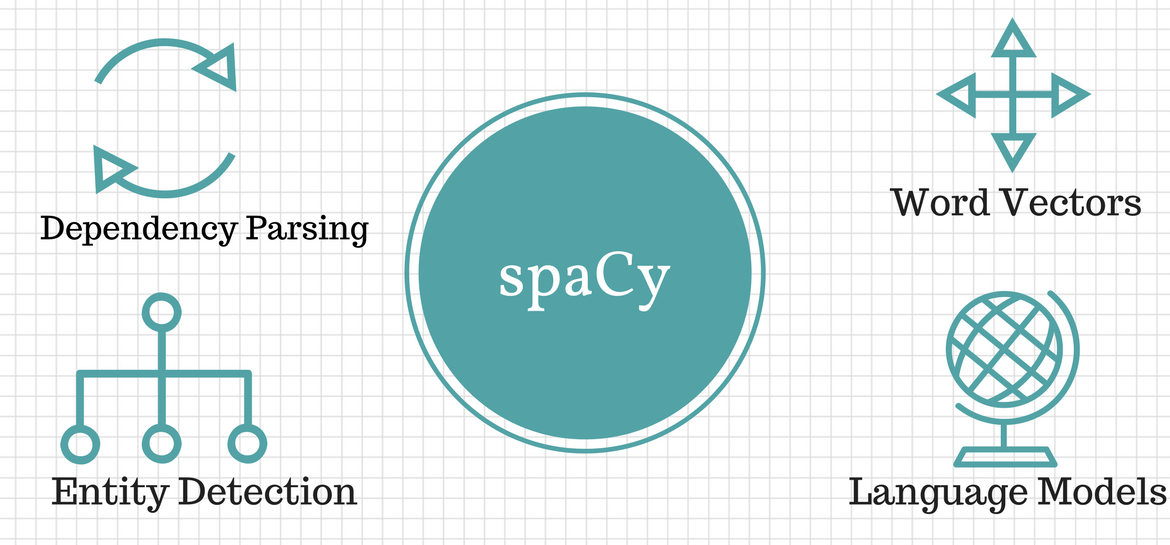

SpaCy is a free, open-source library for Natural Language Processing (NLP) in Python with a lot of built-in functionality. It is becoming popular for processing and analyzing text data. Unstructured text is produced in large quantities, and it is important to process it and extract insights from it. To do this, we need to represent the data in a format that machines can understand. NLP helps us do that.

There are also alternative libraries for performing NLP tasks, such as NLTK, Gensim, and flair.

Some Applications of NLP are:

1. Chatbots: Customer service and customer experience are among the most important things for any organization. They help companies improve their products and keep customers satisfied. But interacting with every customer manually is a tedious job, so chatbots come into the picture: they help companies give customers a better experience.

2. Autocorrection and Autocompletion in Search: When we search on Google by typing a couple of letters, it shows us related terms. And if we type a word incorrectly, NLP corrects it automatically.

Let’s begin with actual implementation:

Implementation

Installation of necessary libraries on the machine:

SpaCy can be installed using the pip command. We can use a virtual environment to avoid depending on system-wide packages. Let’s see:

    python3 -m venv env
    source ./env/bin/activate
    pip install spacy

We need to download the language model and data by using the following command:

    python -m spacy download en_core_web_sm

Now we will import spacy and load the model, and then give it a string as input. Here ‘nlp’ is the loaded model object, so we are going to use it in the rest of the code as well:

    import spacy

    nlp = spacy.load('en_core_web_sm')

Now we will perform sentence detection, i.e. extraction of sentences.
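To make the idea of sentence detection concrete before using the library, here is a minimal plain-Python sketch. The helper name `naive_sentences` and its regex rule are assumptions made for illustration; this is not how SpaCy finds sentence boundaries.

```python
import re

def naive_sentences(text):
    """Split text after '.', '!' or '?' when followed by whitespace.

    A deliberately naive rule for illustration only; it is not the
    method SpaCy uses to detect sentences.
    """
    parts = re.split(r'(?<=[.!?])\s+', text.strip())
    return [part for part in parts if part]

sample = ('I am a Python developer currently working for a London-based '
          'Fintech company. I am interested in learning Natural Language '
          'Processing.')

for sentence in naive_sentences(sample):
    print(sentence)
```

A rule this simple breaks on abbreviations such as "Dr. Smith", which is one reason to let the library do the work, as in the SpaCy code that follows.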

    about_text = ('I am a Python developer currently'
                  ' working for a London-based Fintech'
                  ' company. I am interested in learning'
                  ' Natural Language Processing.')
    about_doc = nlp(about_text)
    sentences = list(about_doc.sents)
    len(sentences)

    for sentence in sentences:
        print(sentence)

    Out: 'I am a Python developer currently working for a London-based Fintech company.'
    'I am interested in learning Natural Language Processing.'

Next, we will move ahead with tokenization, which breaks the text into meaningful tokens and also gives the index (character offset) of each token.
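As a rough sketch of what a token index means, the plain-Python snippet below pairs each token with the position of its first character. The helper `simple_tokens` and its regex are assumptions for illustration and are much cruder than SpaCy's tokenization rules.

```python
import re

def simple_tokens(text):
    """Return (token, start_offset) pairs using a simple regex.

    Words and single punctuation marks become tokens; this is an
    illustrative stand-in, not SpaCy's tokenizer.
    """
    return [(m.group(), m.start()) for m in re.finditer(r"\w+|[^\w\s]", text)]

text = 'I am a Python developer'
pairs = simple_tokens(text)
print(pairs)

# Each offset points back at the token's first character in the string.
for token, start in pairs:
    assert text[start:start + len(token)] == token
```

In the SpaCy code that follows, `token.idx` plays the role of this start offset.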

    for token in about_doc:
        print(token, token.idx)

    Out: I 0
    am 2
    a 5
    Python 7
    developer 14
    currently 24
    working 34
    for 42
    a 46
    London 48
    - 54
    based 55
    Fintech 61
    .....

We can customize tokenization by building our own tokenizer function.
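An infix pattern decides where a token may be split in the middle. The sketch below uses the same `[-~]` pattern as the custom tokenizer code that follows, and only shows what that pattern matches. The helper `infix_positions` is a hypothetical name introduced here, not part of SpaCy.

```python
import re

# Same pattern as in the custom tokenizer: a hyphen or a tilde.
infix_re = re.compile(r'''[-~]''')

def infix_positions(word):
    """Return (start, end, matched_text) for every infix match in `word`."""
    return [(m.start(), m.end(), m.group()) for m in infix_re.finditer(word)]

print(infix_positions('London-based'))
print(infix_positions('Fintech'))
```

The tokenizer is handed `infix_re.finditer` and uses matches like these as split points, which is why 'London-based' comes out as three tokens.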

    import re
    from spacy.tokenizer import Tokenizer

    custom_nlp = spacy.load('en_core_web_sm')
    prefix_re = spacy.util.compile_prefix_regex(custom_nlp.Defaults.prefixes)
    suffix_re = spacy.util.compile_suffix_regex(custom_nlp.Defaults.suffixes)
    infix_re = re.compile(r'''[-~]''')

    def customize_tokenizer(nlp):
        # Adds support to use `-` as the delimiter for tokenization
        return Tokenizer(nlp.vocab, prefix_search=prefix_re.search,
                         suffix_search=suffix_re.search,
                         infix_finditer=infix_re.finditer,
                         token_match=None)

    custom_nlp.tokenizer = customize_tokenizer(custom_nlp)
    custom_tokenizer_about_doc = custom_nlp(about_text)
    print([token.text for token in custom_tokenizer_about_doc])

    Out: ['I', 'am', 'a', 'Python', 'developer', 'currently',
    'working', 'for', 'a', 'London', '-', 'based', 'Fintech',
    'company', '.', 'I', 'am', 'interested', 'in', 'learning',
    'Natural', 'Language', 'Processing', '.']

Let us understand stopwords in SpaCy. Stopwords are the most common words in a language. We remove them from our text because they carry little meaning.
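Stop-word removal itself is just a membership test. Here is a minimal sketch with a tiny hand-picked set (`TOY_STOP_WORDS` is an assumption for this example; SpaCy's English list is far longer):

```python
# A tiny, hand-picked stop-word set used only for this sketch.
TOY_STOP_WORDS = {'i', 'am', 'a', 'for', 'in'}

def drop_stop_words(words, stop_words=TOY_STOP_WORDS):
    """Keep only the words whose lowercase form is not a stop word."""
    return [word for word in words if word.lower() not in stop_words]

words = ['I', 'am', 'a', 'Python', 'developer', 'working', 'for', 'a', 'company']
print(drop_stop_words(words))
```

The SpaCy code that follows works the same way in spirit, with the library's own list and the `token.is_stop` flag.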

    spacy_stopwords = spacy.lang.en.stop_words.STOP_WORDS
    len(spacy_stopwords)

    Out: 326

    for stop_word in list(spacy_stopwords)[:10]:
        print(stop_word)

    Out: using
    becomes
    had
    itself
    once
    often
    is
    herein
    who
    too

Let’s remove these stopwords from the given text:

    for token in about_doc:
        if not token.is_stop:
            print(token)

    Out: Python
    developer
    currently
    working
    London
    -
    based
    Fintech
    company
    .
    interested
    learning
    Natural
    Language
    Processing
    .

Now let us understand how we can use lemmatization. Lemmatization is the process of reducing the inflected forms of a word to a common base form. This base form, or root word, is called a lemma. For example, organizes, organizing, and organized all reduce to organize. Lemmatization helps us treat such forms as one word instead of counting them separately within a text.
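To illustrate why this matters for counting, here is a small sketch that maps words to lemmas with a hand-written table. `TOY_LEMMAS` and `to_lemma` are assumptions made for this example; a real lemmatizer such as SpaCy's covers the whole vocabulary.

```python
from collections import Counter

# Hand-written word -> lemma table, assumed for illustration only.
TOY_LEMMAS = {
    'organizes': 'organize',
    'organizing': 'organize',
    'organized': 'organize',
    'talks': 'talk',
}

def to_lemma(word):
    """Look the word up in the toy table; unknown words are returned lowercased."""
    return TOY_LEMMAS.get(word.lower(), word.lower())

words = ['organizes', 'organizing', 'organized', 'talk', 'talks']
surface_counts = Counter(words)
lemma_counts = Counter(to_lemma(word) for word in words)

# Five distinct surface forms collapse into two lemmas.
print(len(surface_counts), len(lemma_counts))
print(lemma_counts)
```

In the SpaCy code that follows, the lemma comes from `token.lemma_` rather than a lookup table like this one.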

    conference_help_text = ('Raj is helping organize a developer'
                            ' conference on Applications of Natural Language'
                            ' Processing. He keeps organizing local Python meetups'
                            ' and several internal talks at his workplace.')
    conference_help_doc = nlp(conference_help_text)
    for token in conference_help_doc:
        print(token, token.lemma_)

    Out: Raj Raj
    is be
    helping help
    organize organize
    a a
    developer developer
    conference conference
    on on
    Applications Applications
    of of
    Natural Natural
    Language Language
    Processing Processing
    .....
    He -PRON-
    keeps keep
    organizing organize
    local local
    Python Python
    meetups meetup
    and and
    several several
    internal internal
    talks talk
    at at
    his -PRON-
    workplace workplace
    .....

The -PRON- lemma shown for pronouns is what SpaCy v2 prints. SpaCy v3 no longer uses it and returns the pronoun itself.

Next, we will look at word frequency.
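Word frequencies in this article are counted with `collections.Counter`. As a small sketch on a made-up word list (the list is an assumption, not the article's text), this shows how `most_common` orders ties and how words that occur once can be picked out:

```python
from collections import Counter

# Made-up word list for illustration.
words = ['J', 'London', 'Python', 'J', 'London', 'Python', 'J', 'Piano']
word_freq = Counter(words)

# Words with equal counts come back in the order they were first seen.
top_two = word_freq.most_common(2)
print(top_two)

# Words that occur exactly once.
singletons = [word for word, freq in word_freq.items() if freq == 1]
print(singletons)
```

The same two steps, applied to SpaCy tokens after dropping stop words and punctuation, are what the code that follows does.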

    from collections import Counter

    complete_text = ('J K is a Python developer currently'
                     ' working for a London-based Fintech company. He is'
                     ' interested in learning Natural Language Processing.'
                     ' There is a conference happening on 21 June'
                     ' 2019 in London. It is titled "Applications of Natural'
                     ' Language Processing". There is a helpline number '
                     ' available . J is helping organize it.'
                     ' He keeps organizing local Python meetups and several'
                     ' internal talks at his workplace. J is also presenting'
                     ' a talk. The talk will introduce the reader about "Use'
                     ' cases of Natural Language Processing in Fintech".'
                     ' Apart from his work, he is very passionate about music.'
                     ' J is learning to play the Piano. He has enrolled '
                     ' himself in the weekend batch of Great Piano Academy.'
                     ' Great Piano Academy is situated in Mayfair or the City'
                     ' of London and has world-class piano instructors.')
    complete_doc = nlp(complete_text)

    # Remove stop words and punctuation symbols
    words = [token.text for token in complete_doc
             if not token.is_stop and not token.is_punct]
    word_freq = Counter(words)

    # 5 commonly occurring words with their frequencies
    common_words = word_freq.most_common(5)
    print(common_words)

    Out: [('J', 4), ('London', 3), ('Natural', 3), ('Language', 3), ('Processing', 3)]

    # Unique words
    unique_words = [word for (word, freq) in word_freq.items() if freq == 1]
    print(unique_words)

    Out: ['K', 'currently', 'working', 'based', 'company',
    'interested', 'conference', 'happening', '21', 'June',
    '2019', 'titled', 'Applications', 'helpline', 'number',
    'available', 'helping', 'organize',
    'keeps', 'organizing', 'local', 'meetups', 'internal',
    'talks', 'workplace', 'presenting', 'introduce', 'reader',
    'Use', 'cases', 'Apart', 'work', 'passionate', 'music', 'play',
    'enrolled', 'weekend', 'batch', 'situated', 'Mayfair', 'City',
    'world', 'class', 'piano', 'instructors']

Now let us understand named entity recognition (NER). NER is the process of locating named entities in unstructured text and then classifying them into pre-defined categories, such as person names, organizations, locations, percentages, and so on.
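An entity can be thought of as a labeled character span in the original string. The sketch below uses a sample sentence and spans written by hand (both are assumptions for illustration; a model would predict the spans) to show how start and end offsets recover the entity text and how entities can be grouped by label.

```python
from collections import namedtuple

Entity = namedtuple('Entity', ['start_char', 'end_char', 'label'])

text = ('Great Piano Academy is situated in Mayfair or the City of London'
        ' and has world-class piano instructors.')

# Spans written by hand for this sketch; a model would predict them.
entities = [
    Entity(0, 19, 'ORG'),
    Entity(35, 42, 'GPE'),
    Entity(46, 64, 'GPE'),
]

# Slicing the text with the offsets gives back the entity string.
for ent in entities:
    print(text[ent.start_char:ent.end_char], ent.label)

# Group the entity strings by their label.
by_label = {}
for ent in entities:
    by_label.setdefault(ent.label, []).append(text[ent.start_char:ent.end_char])
print(by_label)
```

In the SpaCy code that follows, `ent.start_char`, `ent.end_char`, and `ent.label_` carry the same information for entities found by the model.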

    piano_text = ('Great Piano Academy is situated'
                  ' in Mayfair or the City of London and has'
                  ' world-class piano instructors.')
    piano_doc = nlp(piano_text)
    for ent in piano_doc.ents:
        print(ent.text, ent.start_char, ent.end_char,
              ent.label_, spacy.explain(ent.label_))

    Out: Great Piano Academy 0 19 ORG Companies, agencies, institutions, etc.
    Mayfair 35 42 GPE Countries, cities, states
    the City of London 46 64 GPE Countries, cities, states

Conclusion

SpaCy is an advanced and powerful library that is gaining huge popularity for NLP applications because of its speed, ease of use, and accuracy. So finally, you got to know the following points:

– Concepts of NLP

– Implementation using SpaCy

– Understanding of customization and built-in functionalities

– Extracting meaningful insights from text


Image source- https://ruelfpepa.files.wordpress.com/2019/10/understanding.jpg

Hope you like this article. Thank You!