This article was published as a part of the Data Science Blogathon

Introduction

Without data, no one can complete a data science project; you cannot even say "data science" without "data". In many projects, the data we analyze and use to build ML models is stored in a database. We may also collect data from web pages about a particular product, or from social media, to discover patterns or perform sentiment analysis. Regardless of why we collect data or how we intend to use it, collecting information from the web (web scraping) can be quite tedious, but we need that data to achieve our project goals.

As a data scientist, you need to master web scraping as one of your vital skills: you have to find useful data, then collect and preprocess it so that your results are meaningful and accurate.

Before we dive into tools that help with data extraction, let us confirm that this activity is legal, since web scraping has long been a legal grey area. In the US, courts have ruled that scraping publicly available data is generally legal. This means that if you find information publicly online (such as Wikipedia articles), it is generally legal to scrape it.

Still, when you do it, make sure:

- That you do not reuse or republish the data in a way that violates copyright.

- That you adhere to the terms of service of the website you’re scraping.

- That you keep a fair crawl rate.

- That you do not try to extract private parts of the website.

As long as you do not violate the above terms, your web scraping activity should stay on the legal side.
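The crawl-rate rule is the easiest one to automate if you write your own scraper. The sketch below is a minimal example, assuming you have already downloaded a site's robots.txt: it uses Python's standard `urllib.robotparser` to skip disallowed paths and waits between requests, using the site's `Crawl-delay` if one is declared. The user-agent string and the one-second default delay are arbitrary assumptions, and robots.txt is only a machine-readable hint; it does not replace reading the site's terms of service.

```python
import time
from urllib.robotparser import RobotFileParser


def build_robot_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text that has already been downloaded."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


def polite_urls(urls, parser, user_agent="my-research-bot", default_delay=1.0, sleep=time.sleep):
    """Yield only the URLs robots.txt allows, pausing between consecutive ones."""
    # Honor the site's Crawl-delay if it declares one, otherwise use our default
    delay = parser.crawl_delay(user_agent) or default_delay
    first = True
    for url in urls:
        if not parser.can_fetch(user_agent, url):
            continue  # skip paths the site asks crawlers to avoid
        if not first:
            sleep(delay)  # throttle: wait before handing out the next URL
        first = False
        yield url  # the caller fetches the page at this point
```

In practice you would load the real file with `RobotFileParser.set_url()` and `read()`, then fetch each yielded URL with requests. The `sleep` parameter exists only so the throttling can be tested without actually waiting.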

Many of you have probably used BeautifulSoup and requests to collect data, and pandas to analyze it, in your projects. This post introduces five web scraping tools beyond BeautifulSoup that are free or offer a free option, so you can use them to collect data for your next project.

1. Common Crawl

The creators of Common Crawl built it because they believe that everyone should have the opportunity to explore and analyze the data around them and discover useful insights. In line with their open-source beliefs, they make high-quality data, once available only to large organizations and research institutes, available free of charge to any curious mind.

You can use this tool without worrying about cost. If you are a student, a newcomer to data science, or simply a curious person who loves exploring insights and discovering new trends, it can be very helpful. Common Crawl makes raw web page data and extracted text available as open datasets. It also offers resources for instructors teaching data analysis and support for non-coding use cases.

Visit the Common Crawl website for more information on using the datasets and accessing the data.
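Common Crawl data is usually accessed through its public URL index rather than by scraping sites yourself. The sketch below is a minimal example using only Python's standard library: it queries the index server for captures of one URL, then downloads just that capture's bytes with an HTTP Range header. The host names (`index.commoncrawl.org`, `data.commoncrawl.org`), the `{crawl_id}-index` path, and the `filename`/`offset`/`length` record fields are based on Common Crawl's public CDX index; treat them as assumptions and verify them on the website before relying on them.

```python
import gzip
import json
import urllib.request
from urllib.parse import urlencode

# Assumed endpoints; check Common Crawl's documentation for the current ones
INDEX_SERVER = "https://index.commoncrawl.org"
DATA_SERVER = "https://data.commoncrawl.org"


def build_index_query(crawl_id: str, target_url: str) -> str:
    """URL that asks one crawl's index which captures exist for target_url."""
    query = urlencode({"url": target_url, "output": "json"})
    return f"{INDEX_SERVER}/{crawl_id}-index?{query}"


def parse_index_lines(body: str) -> list:
    """The index answers with one JSON object per line; blank lines are skipped."""
    return [json.loads(line) for line in body.splitlines() if line.strip()]


def warc_range_header(record: dict) -> dict:
    """Byte range of a single capture inside its (very large) WARC file."""
    start = int(record["offset"])
    end = start + int(record["length"]) - 1  # HTTP Range end is inclusive
    return {"Range": f"bytes={start}-{end}"}


def fetch_first_capture(crawl_id: str, target_url: str) -> str:
    """Network call: download and decompress the first capture's WARC record."""
    with urllib.request.urlopen(build_index_query(crawl_id, target_url)) as resp:
        records = parse_index_lines(resp.read().decode("utf-8"))
    first = records[0]
    request = urllib.request.Request(
        f"{DATA_SERVER}/{first['filename']}", headers=warc_range_header(first)
    )
    with urllib.request.urlopen(request) as resp:
        # Each record in a .warc.gz file is compressed as its own gzip member
        return gzip.decompress(resp.read()).decode("utf-8", errors="replace")
```

Usage might look like `print(fetch_first_capture("CC-MAIN-2021-10", "example.com")[:500])`, where the crawl ID is only an illustration; pick one from the list published on the index server. Downloading a byte range avoids pulling a multi-gigabyte WARC file just to read one page.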

2. Crawly

Crawly is another choice, particularly if you only need to extract simple data from a website, or if you want the data in CSV format so you can examine it without writing any code. You enter a URL, the email address that should receive the extracted data, and the output format (CSV or JSON), and voilà, the scraped data arrives in your inbox.

You can then analyze the JSON data with pandas and Matplotlib, or with any other programming language. It is a good option if you are new to data science and web scraping and are not a programmer, but it has its limitations: only a limited set of HTML tags, such as Title, Author, Image URL, and Publisher, can be extracted.
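As a minimal sketch of that first analysis step, assume the JSON export is an array with one object per page; the key names below (`title`, `author`, `image_url`, `publisher`) are hypothetical and chosen to match the tags Crawly is described as extracting, so adjust them to your actual file. The example sticks to the standard library.

```python
import json
from collections import Counter

# Hypothetical Crawly JSON export; the real key names may differ
SAMPLE_EXPORT = """
[
  {"title": "Post A", "author": "Ann", "image_url": "https://example.com/a.png", "publisher": "Blog X"},
  {"title": "Post B", "author": "Ben", "image_url": "https://example.com/b.png", "publisher": "Blog X"},
  {"title": "Post C", "author": "Ann", "image_url": null, "publisher": "Blog Y"}
]
"""


def load_export(text: str) -> list:
    """Parse the exported JSON text into a list of dicts, one per page."""
    return json.loads(text)


def count_by(records: list, field: str) -> Counter:
    """Count how many pages share each value of `field`, ignoring missing values."""
    return Counter(r[field] for r in records if r.get(field))


records = load_export(SAMPLE_EXPORT)
print(count_by(records, "author"))  # Counter({'Ann': 2, 'Ben': 1})
```

With pandas installed, `pandas.DataFrame(records)` turns the same list into a table you can group, filter, and plot with Matplotlib.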

Once you have opened the Crawly website, enter the URL to scrape, select the data format, and enter the email address where you want to receive the data. Then check your inbox for the data.

3. Content Grabber

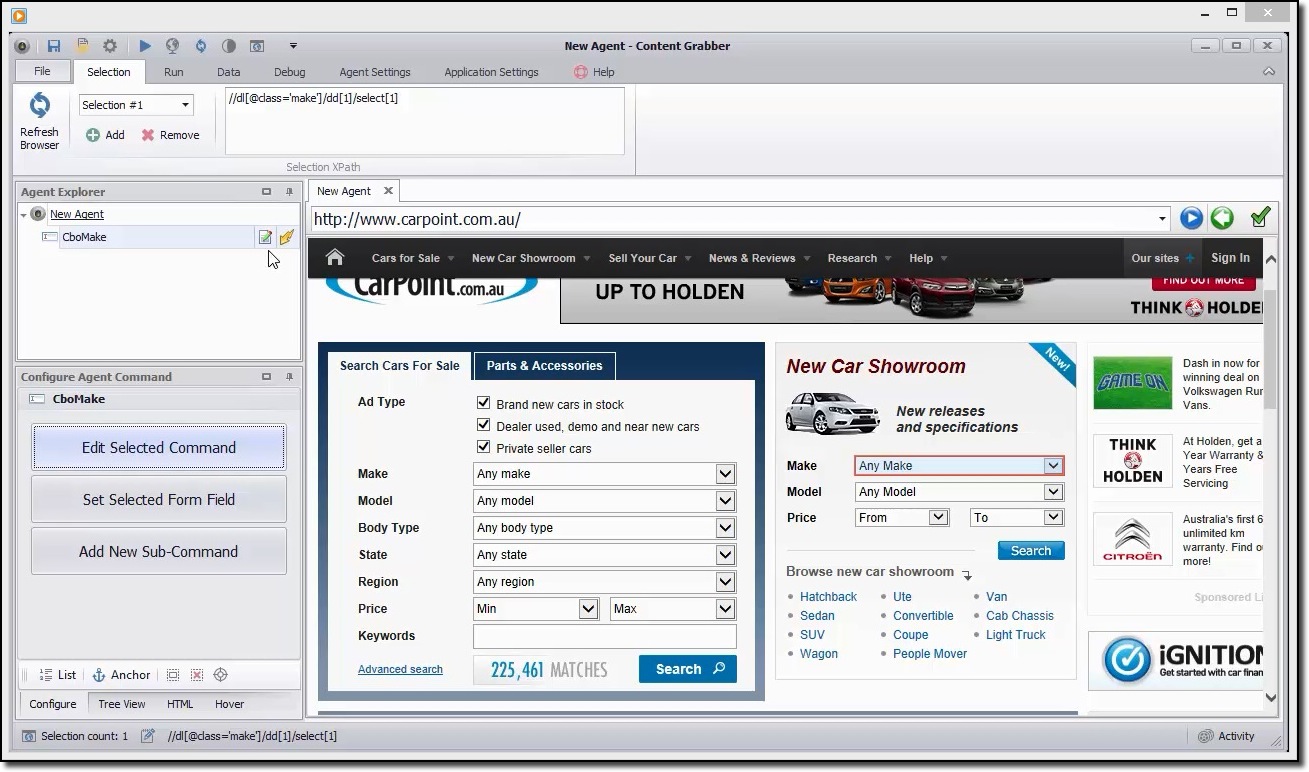

Content Grabber is a flexible tool: if you want to scrape a web page without specifying extra parameters, you can do it through its simple GUI, yet it also gives you full control over the extraction parameters when you need to customize them.

One of its advantages is that you can schedule scraping to run automatically. Web pages are updated regularly, so frequent extraction keeps your data current.
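Content Grabber handles scheduling through its GUI. If you write your own scraper instead, a comparable effect can be sketched with Python's standard `sched` module. The helper below is a hypothetical illustration, not a Content Grabber feature: it runs an extraction job a fixed number of times, a fixed interval apart, and collects the results.

```python
import sched
import time


def run_periodically(job, interval_s, runs, timefunc=time.monotonic, delayfunc=time.sleep):
    """Run `job` `runs` times, `interval_s` seconds apart, and collect its results."""
    scheduler = sched.scheduler(timefunc, delayfunc)
    results = []

    def tick(remaining):
        results.append(job())
        if remaining > 1:
            # Queue the next extraction relative to the current time
            scheduler.enter(interval_s, 1, tick, argument=(remaining - 1,))

    scheduler.enter(0, 1, tick, argument=(runs,))  # first run happens immediately
    scheduler.run()  # blocks until no events are left
    return results
```

For example, `run_periodically(lambda: fetch_page("https://example.com/"), interval_s=3600, runs=24)` would scrape once an hour for a day, where `fetch_page` stands for your own (hypothetical) scraping function. For long-running schedules, an OS scheduler such as cron is usually more robust than a Python process that must stay alive.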

It can export the extracted data in various formats and to various destinations, such as CSV and JSON files or SQL Server and MySQL databases.

A quick example of scraping data

You can use this tool to browse the website visually and click the data elements in the order you want to collect them. As it creates the agent for you from the content items you specify, it automatically detects the correct action type and supplies a default name for each command.

The agent is a collection of commands that are executed in order until completion. The order of execution is updated in the Agent Explorer panel. You can use the Configure Agent Command panel to customize a command for the particular data you need, and you can also add new commands.

4. ParseHub

ParseHub is a powerful web scraping tool that anyone can use for free. It offers accurate data extraction with just a few clicks. You can also schedule scraping runs to keep your data up to date.

One of its strengths is that it can scrape even complex web pages without hassle. You can give it instructions such as filling in search forms, logging in to websites, and clicking on maps or images to collect the data behind them.

You can also supply a list of links and keywords, and it extracts the relevant information within seconds. Finally, you can use its REST API to download the extracted data in CSV or JSON format for analysis, or export the collected information to Google Sheets or Tableau.
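A minimal sketch of the REST API download, using only the standard library. The base URL and the `projects/{token}/last_ready_run/data` path with `api_key` and `format` query parameters are assumptions based on ParseHub's v2 API; confirm them in ParseHub's API documentation. The project token and API key come from your ParseHub account.

```python
import gzip
import urllib.request
from urllib.parse import quote, urlencode

# Assumed base URL for ParseHub's v2 REST API; verify before use
API_BASE = "https://www.parsehub.com/api/v2"


def last_run_data_url(project_token: str, api_key: str, fmt: str = "json") -> str:
    """URL for the data of a project's most recent completed run."""
    if fmt not in ("json", "csv"):
        raise ValueError("fmt must be 'json' or 'csv'")
    query = urlencode({"api_key": api_key, "format": fmt})
    return f"{API_BASE}/projects/{quote(project_token)}/last_ready_run/data?{query}"


def download_last_run(project_token: str, api_key: str, fmt: str = "json") -> str:
    """Network call: fetch the data, decompressing it if it arrives gzipped."""
    with urllib.request.urlopen(last_run_data_url(project_token, api_key, fmt)) as resp:
        raw = resp.read()
    if raw[:2] == b"\x1f\x8b":  # gzip magic bytes
        raw = gzip.decompress(raw)
    return raw.decode("utf-8")
```

Checking for the gzip magic bytes makes the helper work whether or not the response body is compressed, so it does not depend on how the server labels the payload.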

Example: scraping an e-commerce website

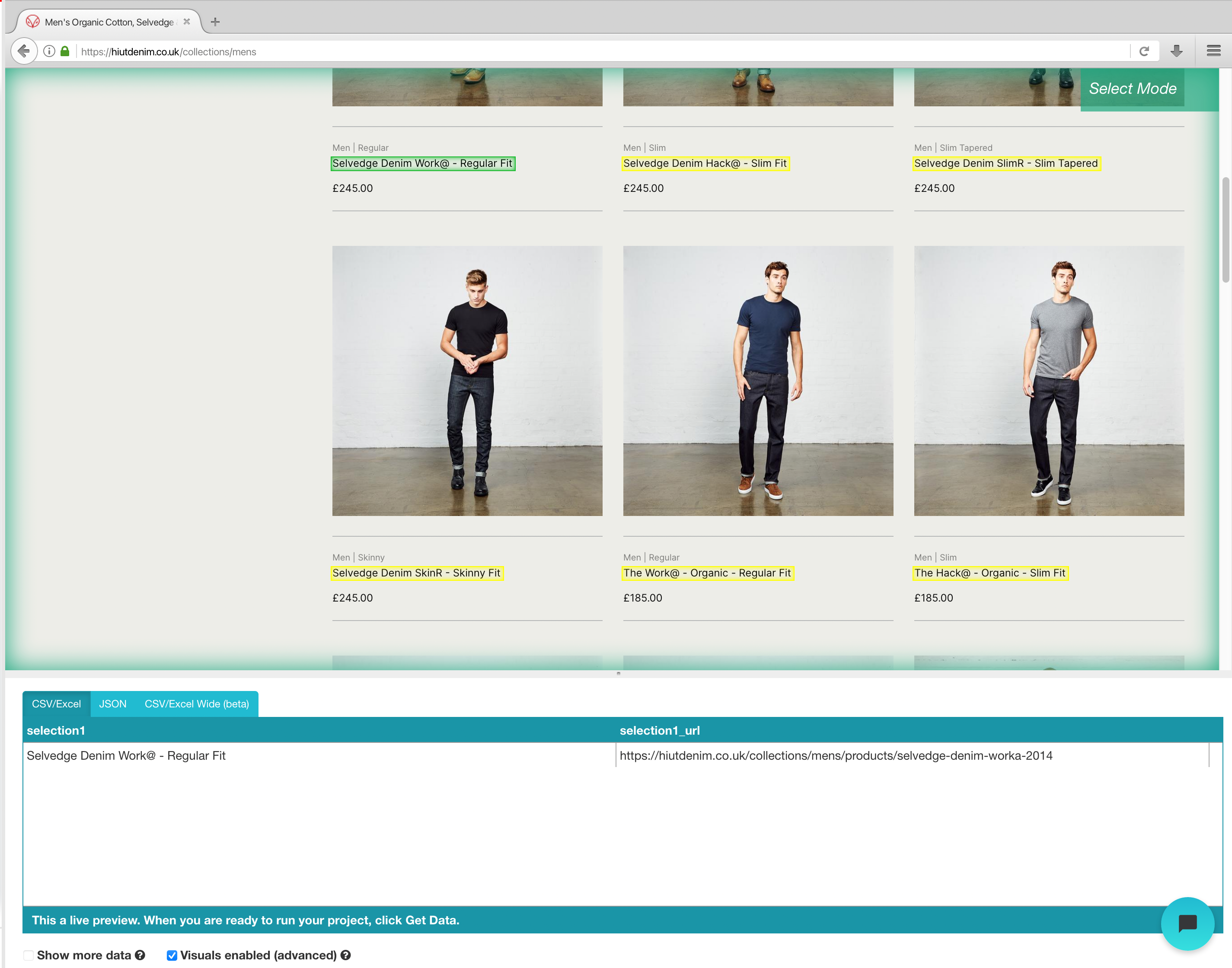

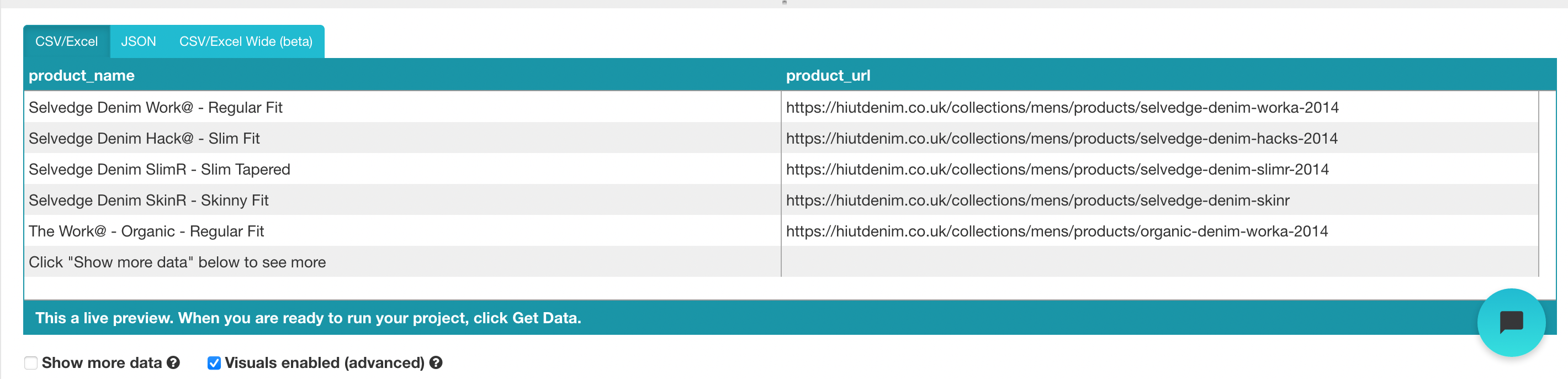

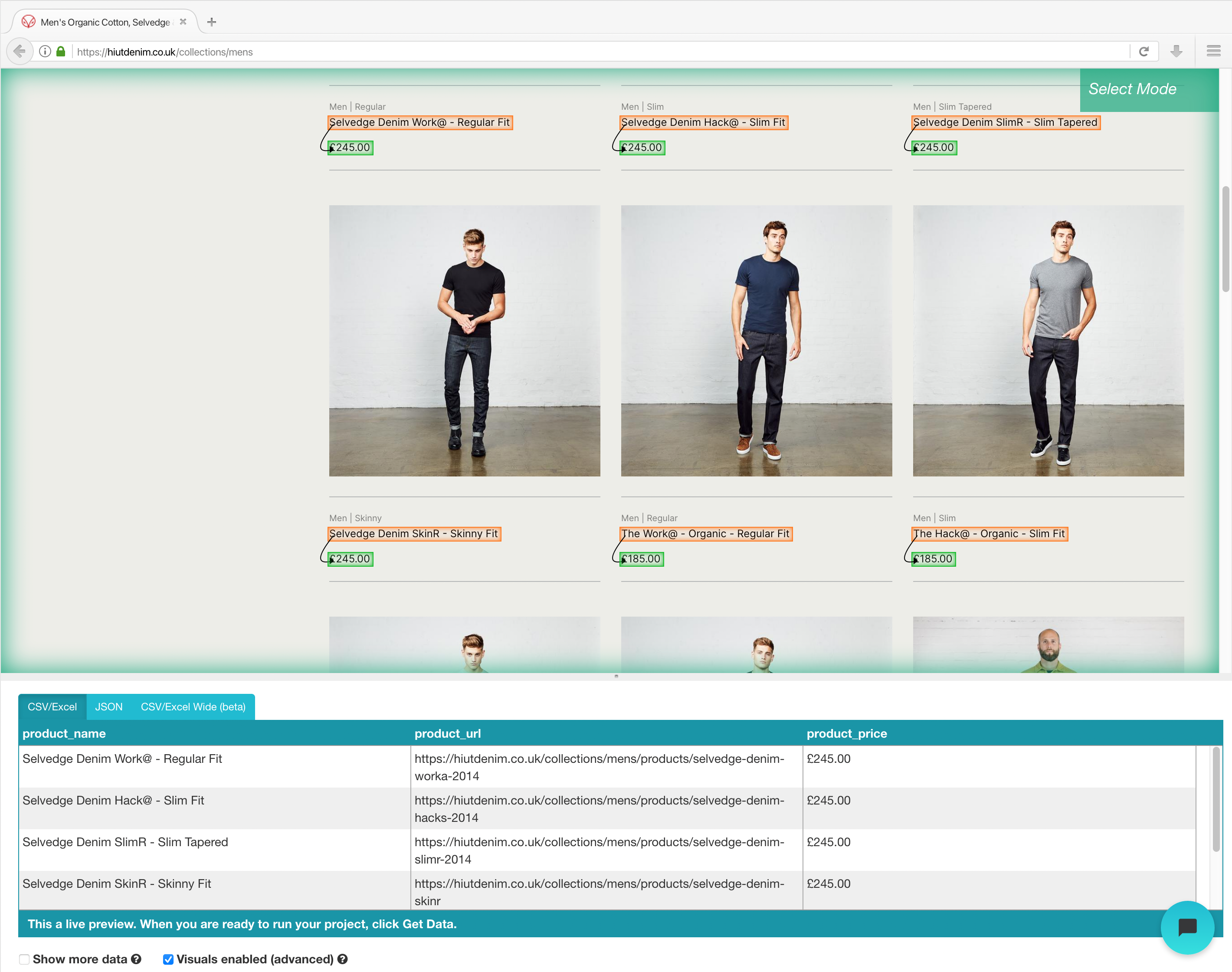

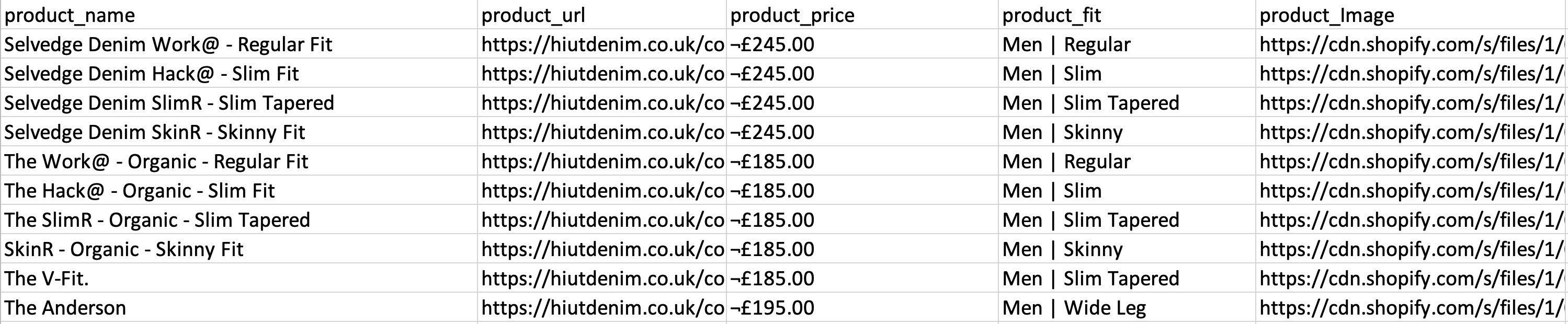

Once you have finished the installation, open a new project in ParseHub and enter the e-commerce site's URL; the page is rendered inside the app.

- Once the site has loaded, click the product name of the first result on the page. The selected product turns green to show that it has been chosen.

- The remaining product names are highlighted in yellow. Click the second product name in the list; all of the product names are now highlighted in green.

- In the left sidebar, rename your selection to “product”. You can now see the product names and URLs extracted by ParseHub.

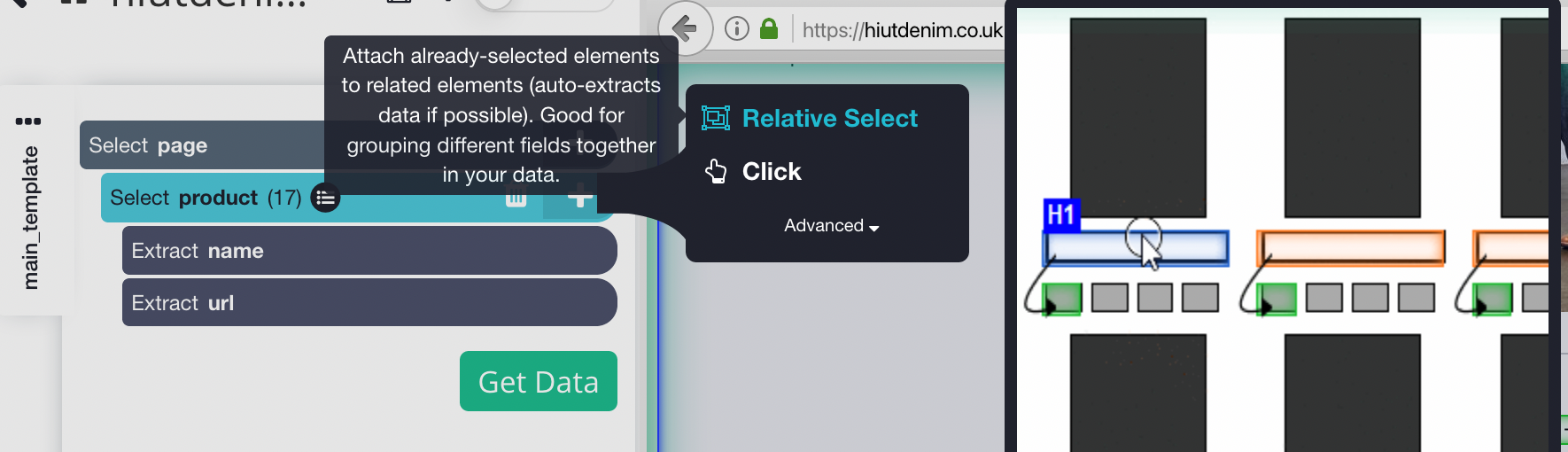

- Click the plus (+) sign next to the product selection in the left sidebar and choose the Relative Select command.

- With Relative Select active, click the first product name on the page and then that product's price. An arrow appears connecting the two selections. Repeat this step several times to train ParseHub on what you want to extract.

- Repeat the previous step to extract the fit style and the product image as well. Make sure to rename your new selections appropriately.

Running and Exporting Your Project

Now that we’ve finished configuring the project, it’s time to run our scrape job.

To run your scrape, click the Get Data button in the left sidebar and then the Run button. For larger projects, we recommend running a Test Run to ensure that your data is formatted correctly.
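After the run finishes, you can download the results as JSON. Assuming the export nests your selections under the names you gave them (here a hypothetical `product` list whose items carry `name`, `url`, and the relative `price` selection; the exact shape depends on your project), the sketch below flattens it into rows and turns price strings into numbers.

```python
import json
import re

# Hypothetical export shaped after the selections configured above
SAMPLE_RESULT = """
{"product": [
  {"name": "Slim Fit Jeans", "url": "https://example.com/p/1", "price": "$49.99"},
  {"name": "Designer Jacket", "url": "https://example.com/p/2", "price": "$1,299.00"},
  {"name": "Mystery Item", "url": "https://example.com/p/3"}
]}
"""

# First number in the string, allowing thousands separators and decimals
PRICE_RE = re.compile(r"\d[\d,]*(?:\.\d+)?")


def parse_price(text):
    """Extract a float from a price string like '$1,299.00'; None if absent."""
    if not text:
        return None
    match = PRICE_RE.search(text)
    return float(match.group().replace(",", "")) if match else None


def to_rows(result_json):
    """Flatten the 'product' selection into (name, url, price) tuples."""
    data = json.loads(result_json)
    return [
        (item.get("name"), item.get("url"), parse_price(item.get("price")))
        for item in data.get("product", [])
    ]


for row in to_rows(SAMPLE_RESULT):
    print(row)
```

Missing prices become `None` rather than raising, since a relative selection can fail on some listings and you usually want to keep the rest of the row.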

5. ScrapingBee

ScrapingBee is the last tool on the list. Its web scraping API can handle even the most complex JavaScript pages and return them to you as raw HTML. It also offers a dedicated API for scraping Google search results.

We can use this tool in three main ways:

- General web scraping, for example, extracting customer reviews or stock prices.

- Search engine result page (SERP) scraping, used for keyword monitoring or SEO.

- Growth hacking, such as extracting contact information or social media data.

This tool offers a free plan that includes 1,000 credits, as well as paid plans for heavier use.

Walkthrough of using the ScrapingBee API

Sign up for a free plan on the ScrapingBee website, and you’ll get 1,000 free API credits, which should be enough to learn and test the API.

Next, go to the dashboard and copy your API key; we’ll need it later in this guide. ScrapingBee supports multiple programming languages, so you can use the API key directly in your applications.
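Rather than pasting the key into every script, a common practice is to keep it in an environment variable. A minimal sketch, where the variable name `SCRAPINGBEE_API_KEY` is our own hypothetical choice, not something ScrapingBee requires:

```python
import os


def get_api_key(var_name="SCRAPINGBEE_API_KEY"):
    """Read the API key from an environment variable instead of hard-coding it."""
    key = os.environ.get(var_name)
    if not key:
        # Fail early with a clear message rather than sending an empty key
        raise RuntimeError(f"Set the {var_name} environment variable first")
    return key
```

You could then pass `"api_key": get_api_key()` in the request parameters, which keeps the key out of code you share or commit to version control.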

Because ScrapingBee exposes a REST API, you can call it from any language or tool, including cURL, Python, Node.js, Java, PHP, and Go. In this guide, we’ll use Python with the Requests library, plus BeautifulSoup for parsing. Install them with pip as follows:

```shell
# To install the Python Requests library:
pip install requests
# Additional module we need (BeautifulSoup 4 is published as beautifulsoup4):
pip install beautifulsoup4
```

Use the code below to call the ScrapingBee API. We make a GET request with two parameters, the target URL and the API key, and the API responds with the HTML content of the target URL.

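```python
import requests


def get_data():
    # Ask ScrapingBee to fetch the target page on our behalf
    response = requests.get(
        url="https://app.scrapingbee.com/api/v1/",
        params={
            "api_key": "INSERT-YOUR-API-KEY",  # key copied from your dashboard
            "url": "https://example.com/",  # website to scrape
        },
        timeout=60,  # give up if no response arrives within 60 seconds
    )
    print('HTTP Status Code: ', response.status_code)
    # response.content holds the page's raw HTML as bytes
    print('HTTP Response Body: ', response.content)


get_data()
```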

Run the script: it prints the HTTP status code, followed by the raw HTML of the target page.


To make this output more readable, we can parse the HTML with BeautifulSoup and print it with its prettify() method.
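A minimal sketch of that step, assuming BeautifulSoup 4 is installed (`pip install beautifulsoup4`). The helper name `prettify_html` is our own; it accepts either a string or the bytes found in `response.content`.

```python
from bs4 import BeautifulSoup


def prettify_html(html) -> str:
    """Re-indent raw HTML (str or bytes) so each tag sits on its own line."""
    soup = BeautifulSoup(html, "html.parser")  # Python's built-in parser, no extra install
    return soup.prettify()


sample = b"<html><head><title>Example</title></head><body><p>Hello</p></body></html>"
print(prettify_html(sample))
```

Inside `get_data()`, you would replace the second `print` with `print(prettify_html(response.content))`.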

Encoding

You can also use urllib.parse to URL-encode (percent-encode) the URL you want to scrape, as shown below:

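```python
import urllib.parse

# Percent-encode the URL; safe="" also encodes "/" and ":" so the result
# can be placed safely inside another URL's query string
encoded_url = urllib.parse.quote("URL to scrape", safe="")
```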
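Encoding matters when you assemble the API request URL by hand, for example to paste into cURL. The sketch below, with a hypothetical helper `build_request_url`, builds the full GET URL with `urllib.parse.quote` and checks it against `urllib.parse.urlencode`, which encodes query values the same way for this input.

```python
from urllib.parse import quote, urlencode

API_ENDPOINT = "https://app.scrapingbee.com/api/v1/"


def build_request_url(api_key: str, target_url: str) -> str:
    """Build the full GET URL by hand, percent-encoding each query value."""
    # safe="" encodes "?", "&", "=" and "/" so the target cannot break the query string
    encoded_target = quote(target_url, safe="")
    return f"{API_ENDPOINT}?api_key={quote(api_key, safe='')}&url={encoded_target}"


manual = build_request_url("KEY", "https://example.com/?a=1&b=2")
auto = API_ENDPOINT + "?" + urlencode({"api_key": "KEY", "url": "https://example.com/?a=1&b=2"})
print(manual == auto)  # True
```

When you pass `params=` to `requests.get`, as in `get_data()`, requests performs this encoding for you; encoding the target URL yourself as well would double-encode it.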

Conclusion

Collecting data for your projects is often the most tedious and least fun step. It can be time-consuming, and whether you work for a company or as a freelancer, you know that time is money: if there is a more efficient way to do a task, you had better use it. The good news is that web scraping does not have to be tedious; the right tool can save you a lot of time, money, and effort. These tools can be especially useful for analysts and for people without coding knowledge. Before selecting a scraping tool, consider a few factors, such as API integration and how well it scales to large scraping jobs. This article presented several useful tools for different data collection tasks so that you can choose the one that makes your data collection easiest.

I hope this article is useful. Thank you.

