This article was published as a part of the Data Science Blogathon.

Introduction

Have you ever wondered how Snapchat’s filters engage so many people? Snapchat offers filters of all kinds, from funny effects to makeup applied to people’s faces; you simply swipe through the filters and pick one before taking a photo. Let’s find out how pose estimation powers filters like these, shall we?

You don’t need any prior knowledge of pose estimation to read this article. It covers the key points and important topics of pose estimation from start to finish. It begins with what pose estimation is and why it matters, then describes the types of pose estimation: head, hand, human, 2D, 3D, and more. After that, we look at the public datasets available for pose estimation and at popular algorithms.

After reading this article, you will have an overview of 2D and 3D pose estimation and will have built a mini project for 2D human pose estimation with the OpenPose algorithm.

What is Pose Estimation?

Pose estimation is a computer vision technique that detects and tracks the position and orientation of a person or object in an image or video. It works by looking at the combination of orientations of the pose for a given person or object. Based on the orientation of these points, we can compare different movements and postures of a person or object and draw insights from them.

Pose estimation is mostly done by identifying key points on an object or person and locating them.

- For objects: the key points are the corners or edges of the object.

- For humans: in images containing people, the key points can be elbows, wrists, fingers, knees, etc.

Pose estimation, in its various forms, is one of the most exciting areas of computer vision research and has attracted a lot of attention. Pose estimation techniques offer many benefits.

Applications of Pose Estimation

A plethora of apps on the market today use computer vision technology. Pose estimation in particular has attracted enormous interest thanks to its efficient tracking and measurement capabilities. Let’s look at some applications of pose estimation, with examples.

1) Augmented Reality Metaverse

The metaverse has made a breakthrough in the science and technology community and attracts attention from people of all ages. Augmented reality pins 3D elements to objects or people in the real world to make them look real. The metaverse creates an environment in which people can enter another universe and experience amazing things. Technologies suited to metaverse solutions include pose estimation, eye tracking, and voice and face tracking.

One use case of pose estimation is in the US Army, where it helps differentiate between enemy and friendly troops.

2) Healthcare and Fitness Industry

The fitness industry grew rapidly during the COVID-19 pandemic, and many consumers joined the fitness craze. Fitness apps provide health-monitoring charts and plans for getting in shape. Some apps also detect errors in a user’s form and give feedback. These apps use pose estimation to minimize the risk of injury while working out.
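Form feedback of this kind usually starts from joint angles computed from three key points. The sketch below is a minimal example that assumes the pose model has already returned pixel coordinates for the hip, knee, and ankle. The `squat_feedback` rule and its 100° threshold are hypothetical and only illustrate the idea; they are not taken from any particular app.

```python
import numpy as np

def joint_angle(a, b, c):
    """Angle in degrees at key point b, between the segments b->a and b->c."""
    a, b, c = (np.asarray(p, dtype=float) for p in (a, b, c))
    ba, bc = a - b, c - b
    cos_angle = np.dot(ba, bc) / (np.linalg.norm(ba) * np.linalg.norm(bc))
    # clip guards against values such as 1.0000000002 caused by rounding
    return float(np.degrees(np.arccos(np.clip(cos_angle, -1.0, 1.0))))

def squat_feedback(hip, knee, ankle, max_knee_angle=100.0):
    """Hypothetical rule: the squat is deep enough once the knee angle is small."""
    angle = joint_angle(hip, knee, ankle)
    return angle, ("good depth" if angle <= max_knee_angle else "go lower")

# Pixel coordinates (x, y) of hip, knee, and ankle from a pose model
print(squat_feedback((100, 100), (100, 200), (200, 200)))  # bent knee
print(squat_feedback((100, 100), (100, 200), (100, 300)))  # straight leg
```

Because the angle depends only on relative positions, the same rule works regardless of where the person stands in the frame.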

3) Robotics

Pose estimation is integrated into robotics, where it is used to train robots by letting them learn from human movements.

Why do we Use Pose Estimation?

Detecting people is a central task in computer vision. With recent developments in machine learning (ML) algorithms, pose detection and pose tracking have become much easier to use.

In traditional methods such as object detection, a person can only be perceived as a bounding box. With advances in pose detection and pose tracking, machines can learn human body language, and we can track a person or object at a much more granular level. These powerful techniques open up a wide range of real-world applications.

Pose estimation is used to track human movement and activity in applications such as augmented reality, healthcare, and robotics. For example, human pose estimation can be combined with distance projection heuristics to maintain social distance in bank queues. This helps people follow hygiene rules and regulations in banks and keep a physical distance in overcrowded places.
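A minimal sketch of such a distance heuristic is shown below. It assumes each person’s key points are already available as a dictionary using the `RAnkle`/`LAnkle` names from the OpenPose code later in this article. It also assumes a single, fixed pixels-per-metre scale, which only roughly holds for a flat floor viewed head-on; a real system would project points onto the ground plane first. The helper names and the 2 m threshold are hypothetical.

```python
from itertools import combinations

import numpy as np

def ground_point(keypoints):
    """Midpoint of the detected ankles, used as the person's position on the floor."""
    ankles = [keypoints[name] for name in ("RAnkle", "LAnkle") if name in keypoints]
    if not ankles:
        return None
    return np.mean(np.asarray(ankles, dtype=float), axis=0)

def too_close(people, pixels_per_metre, min_distance_m=2.0):
    """Return index pairs of people whose ground points are closer than min_distance_m."""
    points = [ground_point(person) for person in people]
    flagged = []
    for i, j in combinations(range(len(points)), 2):
        if points[i] is None or points[j] is None:
            continue  # skip people whose ankles were not detected
        distance_m = np.linalg.norm(points[i] - points[j]) / pixels_per_metre
        if distance_m < min_distance_m:
            flagged.append((i, j))
    return flagged

# Hypothetical key points for three people in a queue
people = [
    {"RAnkle": (100, 400), "LAnkle": (120, 400)},
    {"RAnkle": (200, 400), "LAnkle": (220, 400)},
    {"RAnkle": (600, 400)},
]
print(too_close(people, pixels_per_metre=100.0))
```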

Self-driving cars are another area where pose tracking and pose estimation help. Self-driving cars can cause accidents when they fail to understand pedestrian behaviour, and pose estimation can help train models to interpret what pedestrians are doing.

Methods for Multi-Person Pose Estimation

Two common methods are used for multi-person pose estimation:

1) Top-Down Approach:

First, we detect each person and draw a bounding box around them. Then we estimate the body parts inside each box. Because each box contains one person, every joint can be assigned to the correct person. This method is known as the top-down approach.

2) Bottom-up Approach:

First, we detect all body parts in the image. Then we associate (group) the parts that belong to distinct persons. This method is known as the bottom-up approach.

In general, the top-down approach takes much more time than the bottom-up approach, especially as the number of people grows, because the pose estimator runs once for every detected person.
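To make the grouping step of the bottom-up approach concrete, here is a toy sketch. It assumes that key points have already been detected for two body parts (necks and noses), and it pairs them greedily, closest first. The function name and the `max_dist` cut-off are hypothetical. Real bottom-up systems such as OpenPose score candidate pairs with part affinity fields rather than plain distance. A top-down pipeline does not need this step, because each bounding box already holds one person.

```python
import numpy as np

def group_parts_bottom_up(necks, noses, max_dist=80.0):
    """Toy bottom-up grouping: pair each detected neck with one detected nose.

    Hypothetical helper. Candidate pairs are accepted greedily, closest first;
    OpenPose instead scores pairs with part affinity fields.
    """
    necks = np.asarray(necks, dtype=float)
    noses = np.asarray(noses, dtype=float)
    # dists[i, j] = pixel distance between neck i and nose j
    dists = np.linalg.norm(necks[:, None, :] - noses[None, :, :], axis=-1)
    used_necks, used_noses, people = set(), set(), []
    for flat_index in np.argsort(dists, axis=None):
        i, j = (int(k) for k in np.unravel_index(flat_index, dists.shape))
        if i in used_necks or j in used_noses or dists[i, j] > max_dist:
            continue
        used_necks.add(i)
        used_noses.add(j)
        people.append({"Neck": tuple(necks[i].tolist()), "Nose": tuple(noses[j].tolist())})
    return people

# Two people in one image: all parts are detected first, then grouped.
people = group_parts_bottom_up(necks=[(100, 200), (300, 210)], noses=[(305, 150), (98, 140)])
print(people)
```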

Models for Pose Estimation

With the rapid advancement of deep learning in recent years, it has outperformed classical computer vision methods in multiple tasks, including pose estimation, image segmentation, and object detection.

There are several models for pose estimation. The choice of model depends on the requirements of the problem, and there are many factors to consider, such as running time and model size.

Here are the most popular pose estimation libraries available online. Most of them can easily be customized for a particular use case.

- OpenPose

- High-Resolution Net (HRNet)

- BlazePose

- Regional Multi-Person Pose Estimation (AlphaPose)

- DeepPose

- PoseNet

- DensePose

- DeepCut

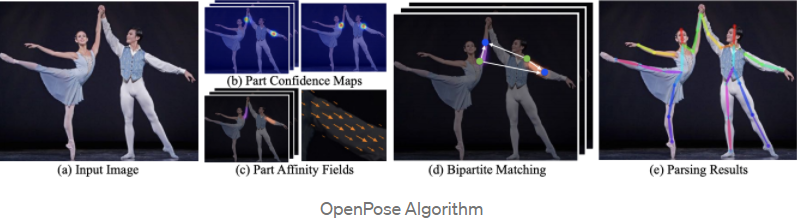

1) OpenPose

OpenPose is an open-source, real-time system for multi-person human pose estimation in images and video. It is one of the most popular architectures for multi-person pose estimation, and it uses a bottom-up approach.

OpenPose accurately estimates body, foot, hand, and facial key points, 135 key points in total, in a single image. Its main advantage is an API that gives the user control over the image source, with multiple options: images can come from camera feeds, webcams, and embedded systems (such as CCTV cameras and systems).

One of the best things about OpenPose is its high accuracy without a large sacrifice in execution performance. There is still a slight trade-off between speed and accuracy (for example, R-CNN-based models run faster).

You can find the OpenPose research paper here, the documentation here, and the code on GitHub here.

Image Source: Vanity.com

2) HRNet

HRNet (High-Resolution Net) is a neural network for human pose estimation and a state-of-the-art algorithm in the field. HRNet is widely used to detect human posture in televised sports.

HRNet uses Convolutional Neural Networks (CNNs). CNNs are similar to ordinary (fully connected) neural networks, but they differ in architecture. Ordinary neural networks do not scale well to image datasets, whereas CNNs handle them well.

Ordinary neural networks are also slow on images, because every neuron in a layer is connected to every neuron in the previous layer. For example, an image of size 32×32×3 needs 3072 weights for a single neuron in the first hidden layer, and larger images need far more. Feeding such data through the network slows it down.
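The arithmetic behind this example, and the reason convolutions scale better, can be checked in a few lines. A fully connected neuron needs one weight per input value. A convolutional filter only covers a small window (3×3 here, an illustrative choice) and reuses the same weights at every position, so its weight count does not depend on image size. The function names are hypothetical, and biases are ignored.

```python
def dense_weights_per_neuron(height, width, channels):
    # A fully connected neuron is wired to every input value.
    return height * width * channels

def conv_weights_per_filter(kernel_size, channels):
    # A convolutional filter sees only a kernel_size x kernel_size window
    # and shares those weights across all positions in the image.
    return kernel_size * kernel_size * channels

print(dense_weights_per_neuron(32, 32, 3))    # the 32x32x3 example above
print(dense_weights_per_neuron(256, 256, 3))  # a larger image
print(conv_weights_per_filter(3, 3))          # same cost for any image size
```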

You can find the HRNet research paper here and the code on GitHub here.

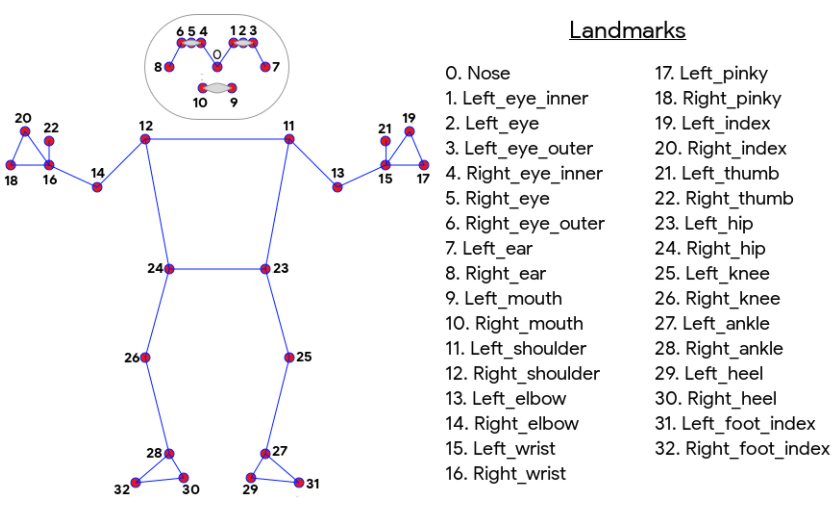

3) BlazePose

BlazePose is a machine learning (ML) model developed by Google. It computes up to 33 skeleton key points and is mainly used in fitness applications. The model outputs the 33 key points in the ordering convention shown in the chart below. This lets us determine body semantics from pose prediction alone, in a way that is consistent with face and hand models. BlazePose can also be used with the ailia SDK, alongside many other ready-to-use ailia MODELS, to create AI applications.

You can find the BlazePose research paper here, the documentation here, and the code on GitHub here. To read more about BlazePose, follow this blog.

Image Source: BlazePose

4) Regional Multi-Person Pose Estimation (AlphaPose)

AlphaPose is a real-time multi-person human pose estimation system. It follows the popular top-down approach and remains accurate even when the human bounding boxes are inaccurate.

With AlphaPose, we can detect a single person or multiple people in images, videos, or image lists. It also includes an open-source pose tracker called PoseFlow, which achieves both 60+ mAP (66.5 mAP) and 50+ MOTA (58.3 MOTA) on the PoseTrack Challenge dataset.

Image Source: AlphaPose

Categorization of Pose Estimation

Generally, pose estimation is divided into two groups:

- Single Pose Estimation: detecting a single object or person in an image or video

- Multi Pose Estimation: detecting multiple objects or people in an image or video

There are various types of pose estimation:

- Human pose estimation

- Rigid pose estimation

- 2D pose estimation

- 3D pose estimation

- Head Pose Estimation

- Hand Pose Estimation

1) Human Pose Estimation

Human pose estimation estimates key points such as elbows, fingers, and knees in images or videos of people.

2) Rigid Pose Estimation

Objects that are not flexible are called rigid objects. For instance, the corners of a brick are always the same distance apart regardless of the brick’s orientation. Predicting the position of such objects is known as rigid pose estimation.

3) 2D Pose Estimation

2D pose estimation predicts key point locations in the image at the pixel level. We simply estimate where each key point lies in 2D space relative to the input image, and each location is represented by its X and Y coordinates.
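Many 2D models, including the OpenPose model used later in this article, output one heatmap per key point rather than coordinates directly. The key point’s (X, Y) is then the location of the heatmap’s maximum, rescaled from heatmap cells to image pixels. Below is a minimal numpy sketch of that decoding step; the 46×46 heatmap and its single peak are illustrative values, not real model output.

```python
import numpy as np

def heatmap_to_pixel(heatmap, image_width, image_height):
    """Return (x, y, confidence) of the strongest response in one key-point heatmap."""
    rows, cols = heatmap.shape
    row, col = np.unravel_index(np.argmax(heatmap), heatmap.shape)
    confidence = float(heatmap[row, col])
    # Rescale from heatmap cells to image pixels.
    x = image_width * col / cols
    y = image_height * row / rows
    return float(x), float(y), confidence

# Illustrative 46x46 heatmap with a single peak at row 10, column 20
heatmap = np.zeros((46, 46))
heatmap[10, 20] = 0.9
print(heatmap_to_pixel(heatmap, image_width=368, image_height=368))
```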

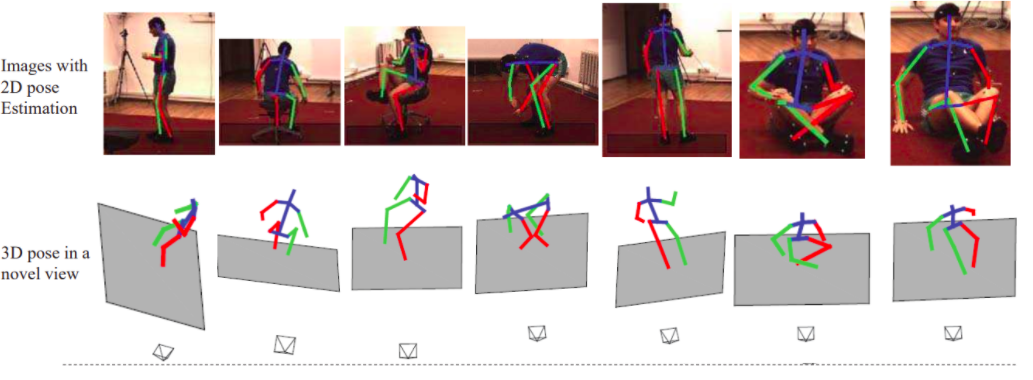

4) 3D Pose Estimation

3D pose estimation predicts the three-dimensional spatial arrangement of objects or persons as its final output. It extends the 2D prediction with an additional z-axis (depth) coordinate for each key point.
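The extra coordinate is hard to recover from a single image. Under a standard pinhole camera model, a 3D point (X, Y, Z) lands at pixel (f·X/Z + cx, f·Y/Z + cy). Every point along the same viewing ray therefore projects to the same 2D location, and the depth is lost. A minimal numpy sketch is shown below; the focal length `f` and principal point `(cx, cy)` are illustrative values, not a real camera calibration.

```python
import numpy as np

def project(points_3d, f, cx, cy):
    """Pinhole projection of (N, 3) camera-space points to (N, 2) pixel coordinates."""
    pts = np.asarray(points_3d, dtype=float)
    X, Y, Z = pts[:, 0], pts[:, 1], pts[:, 2]
    u = f * X / Z + cx
    v = f * Y / Z + cy
    return np.stack([u, v], axis=1)

# Two different 3D points on the same ray: twice as far away and twice as large
pixels = project([(0.2, -0.1, 2.0), (0.4, -0.2, 4.0)], f=500.0, cx=320.0, cy=240.0)
print(pixels)  # both rows are the same pixel, so depth cannot be read off the image
```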

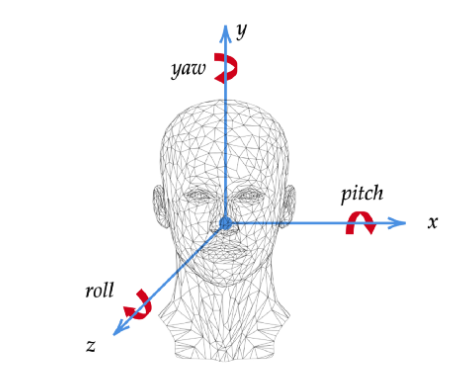

5) Head Pose Estimation

Estimating a person’s head pose is becoming a popular use case in computer vision. One of the best-known applications is Snapchat, where filters are placed on our faces to make us look funny. Head pose estimation is also used in Instagram filters, and in self-driving cars to monitor drivers’ activities while driving.

Head pose estimation has other applications too, such as modelling attention in 3D games and performing face alignment. To estimate the head pose in 3D, we first find the facial key points in the 2D image with the help of deep learning models.
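The head’s rotation is often recovered by passing the 2D facial key points and a generic 3D face model to OpenCV’s `cv2.solvePnP`, then turning its rotation vector into a matrix with `cv2.Rodrigues`. That rotation is usually reported as pitch (nodding), yaw (shaking), and roll (tilting). The numpy sketch below covers only that last conversion. It assumes the convention R = Rz(roll) · Ry(yaw) · Rx(pitch) with camera axes x right, y down, and z forward; other libraries may use a different order.

```python
import numpy as np

def rotation_matrix(pitch, yaw, roll):
    """Build R = Rz(roll) @ Ry(yaw) @ Rx(pitch) from angles in degrees."""
    a, b, g = np.radians([pitch, yaw, roll])
    rx = np.array([[1, 0, 0], [0, np.cos(a), -np.sin(a)], [0, np.sin(a), np.cos(a)]])
    ry = np.array([[np.cos(b), 0, np.sin(b)], [0, 1, 0], [-np.sin(b), 0, np.cos(b)]])
    rz = np.array([[np.cos(g), -np.sin(g), 0], [np.sin(g), np.cos(g), 0], [0, 0, 1]])
    return rz @ ry @ rx

def rotation_to_pitch_yaw_roll(r):
    """Invert rotation_matrix(); valid away from yaw = +/-90 degrees (gimbal lock)."""
    pitch = np.arctan2(r[2, 1], r[2, 2])
    yaw = np.arctan2(-r[2, 0], np.hypot(r[0, 0], r[1, 0]))
    roll = np.arctan2(r[1, 0], r[0, 0])
    return tuple(float(v) for v in np.degrees([pitch, yaw, roll]))

# Round trip: a head nodded 10 degrees, turned 20 degrees, and tilted 30 degrees
print(rotation_to_pitch_yaw_roll(rotation_matrix(10, 20, 30)))
```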

You can read more about head pose estimation in this blog.

Image Source: Medium.com

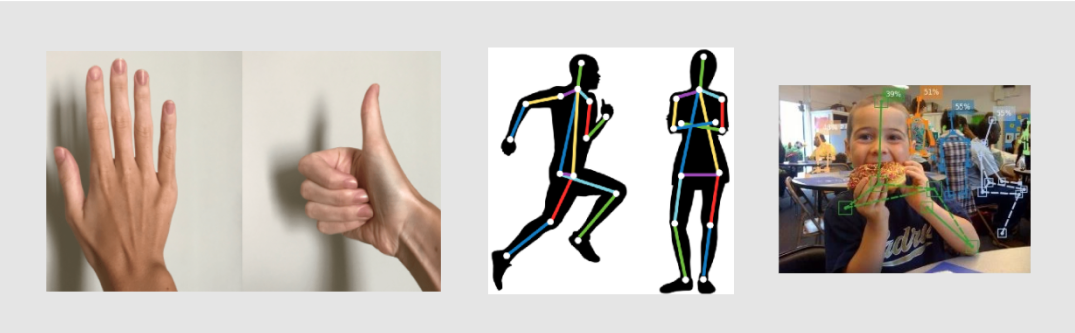

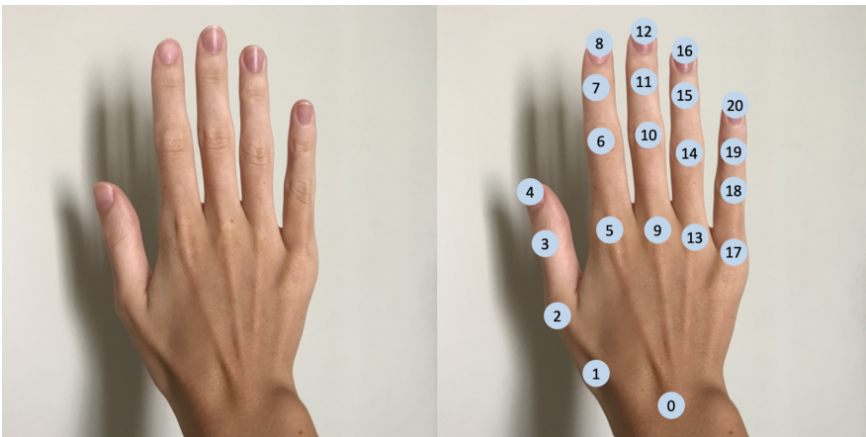

6) Hand Pose Estimation

Hand pose estimation aims to predict the positions of the joints of a hand from an image. It has become popular with the emergence of VR/AR/MR technology. The key points are the locations of the joints in the hand: 21 in total, made up of the wrist plus four points on each of the five fingers.

You can read more about hand pose estimation in this blog.
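One common way to index these 21 key points is wrist first, then four joints per finger from base to tip. MediaPipe Hands uses this layout, although other datasets may order the points differently. The sketch below builds that layout and the 20 bone connections between the points. The joint names such as `index_1` are hypothetical labels.

```python
FINGERS = ["thumb", "index", "middle", "ring", "pinky"]

def build_hand_skeleton():
    """Return (names, edges) for a 21-key-point hand: wrist + 4 joints per finger."""
    names = ["wrist"]
    edges = []
    for finger in FINGERS:
        previous = 0  # every finger chain starts at the wrist (index 0)
        for joint in range(1, 5):
            names.append(f"{finger}_{joint}")
            current = len(names) - 1
            edges.append((previous, current))  # bone from the previous joint to this one
            previous = current
    return names, edges

names, edges = build_hand_skeleton()
print(len(names), len(edges))
print(names[:6])
```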

Human Pose Estimation Dataset

Various datasets are available for pose estimation in images and videos of humans. Below are some you can use to build and explore models, with a description of the images and videos each one contains and some basic information about the dataset.

- MPII

- COCO

- HumanEva

- Human3.6M

- SURREAL – Synthetic hUmans foR REAL tasks

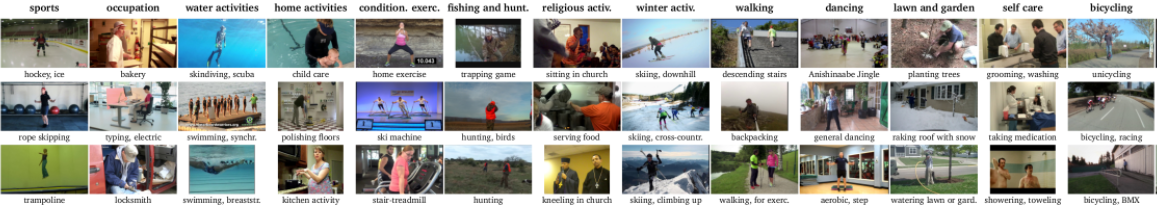

1) MPII

The MPII Human Pose dataset covers numerous human poses, with around 25K images containing over 40K people with annotated body joints. It is a state-of-the-art benchmark for evaluating articulated human pose estimation.

MPII Human Pose was the first dataset to launch a 2D pose estimation challenge (in 2014), and it was also the first to contain such a diverse range of poses. The images of human activities were collected from YouTube videos and cover 410 activities, each labelled with its activity. Each image was extracted from a YouTube video and provided with the preceding and following un-annotated frames. In addition, the test set has richer annotations, including body part occlusions and 3D torso and head orientations.

You can find MPII human pose dataset here.

Image Source: MPII Human Pose Dataset

2) COCO

COCO is a large-scale dataset for object detection, segmentation, and captioning. It is also a multi-person 2D pose estimation dataset, with images collected from Flickr. COCO has 80 object categories and 91 stuff categories.

COCO is one of the largest 2D pose estimation datasets to date and is considered a benchmark for testing 2D pose estimation algorithms. You can find the COCO dataset here.
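COCO stores each person’s key points as one flat list [x1, y1, v1, x2, y2, v2, ...] over 17 body key points. The visibility flag v is 0 for "not labelled", 1 for "labelled but not visible", and 2 for "labelled and visible". The sketch below turns such a list into a dictionary; the sample annotation values are hypothetical. Note that the 18-point model used later in this article adds a Neck point, so its indices differ from this list.

```python
COCO_KEYPOINTS = [
    "nose", "left_eye", "right_eye", "left_ear", "right_ear",
    "left_shoulder", "right_shoulder", "left_elbow", "right_elbow",
    "left_wrist", "right_wrist", "left_hip", "right_hip",
    "left_knee", "right_knee", "left_ankle", "right_ankle",
]

def parse_coco_keypoints(flat):
    """Turn COCO's flat [x1, y1, v1, ...] key-point list into a dict keyed by name.

    Unlabelled key points (v == 0) are left out of the result.
    """
    if len(flat) != 3 * len(COCO_KEYPOINTS):
        raise ValueError(f"expected {3 * len(COCO_KEYPOINTS)} values, got {len(flat)}")
    parsed = {}
    for i, name in enumerate(COCO_KEYPOINTS):
        x, y, v = flat[3 * i: 3 * i + 3]
        if v > 0:
            parsed[name] = {"x": x, "y": y, "visible": v == 2}
    return parsed

# Hypothetical annotation: only the nose (visible) and left eye (occluded) are labelled
keypoints = [0] * 51
keypoints[0:3] = [120, 80, 2]
keypoints[3:6] = [125, 75, 1]
print(parse_coco_keypoints(keypoints))
```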

Image Source: COCO

3) HumanEva

The HumanEva dataset consists of video sequences for 3D human pose estimation, recorded with several cameras, including multiple RGB and grayscale cameras. HumanEva was the first 3D pose estimation dataset of substantial size.

The HumanEva-I dataset contains 7 video sequences (4 grayscale and 3 colour), which are synchronized with 3D body poses obtained from a motion capture system. The database contains 4 subjects performing 6 common actions (e.g. walking, jogging, gesturing). Error metrics for computing the error in 2D and 3D poses are provided to participants. The dataset contains training, validation, and testing (with withheld ground truth) sets.
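A widely used error metric for 3D pose estimation is the mean per-joint position error (MPJPE). It takes the Euclidean distance between each predicted joint and its ground-truth position and averages it over the joints, usually in millimetres. The sketch below assumes this definition. HumanEva’s own evaluation code may differ in details such as which joints are included or how poses are aligned before comparison.

```python
import numpy as np

def mpjpe(predicted, ground_truth):
    """Mean per-joint position error between two (num_joints, 3) arrays."""
    predicted = np.asarray(predicted, dtype=float)
    ground_truth = np.asarray(ground_truth, dtype=float)
    if predicted.shape != ground_truth.shape:
        raise ValueError("predicted and ground-truth poses must have the same shape")
    # Euclidean distance per joint, then the mean over joints
    return float(np.mean(np.linalg.norm(predicted - ground_truth, axis=-1)))

# Two joints (illustrative millimetre values): one off by a 3-4-5 triangle, one exact
print(mpjpe([[3, 4, 0], [0, 0, 0]], [[0, 0, 0], [0, 0, 0]]))
```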

You can find the HumanEva dataset here.

4) Surreal

The name SURREAL is derived from Synthetic hUmans foR REAL tasks. SURREAL contains synthetic video animations of single people with 2D/3D pose estimation data, created from motion capture (MoCap) data recorded in the lab. It is the first large-scale person dataset to provide ground truth for depth, body parts, optical flow, 2D/3D pose, and surface normals for RGB video input.

The dataset contains 6M frames of synthetic humans. The images are photo-realistic renderings of people under large variations in shape, texture, viewpoint, and pose. To ensure realism, the synthetic bodies are created using the SMPL body model, whose parameters are fit by the MoSh method given raw 3D MoCap marker data.

You can find the SURREAL 3D human pose estimation dataset here.

Code for Human Pose Estimation in ML

Let’s start implementing pose estimation.

The first step is to create a new folder for the project. It’s better to create a virtual environment and install everything the OpenPose code needs there, rather than in your base environment.

Create an Anaconda environment for pose estimation with Python 3.9. Replace ‘myenv’ with any name you like for the environment.

```bash
conda create --name myenv python=3.9
```

Now activate the environment so that we work inside it:

```bash
conda activate myenv
```

Then install OpenCV and Matplotlib, which the code below uses:

```bash
pip install opencv-python matplotlib
```

The code for human pose estimation is inspired by the OpenCV example for the OpenPose algorithm.

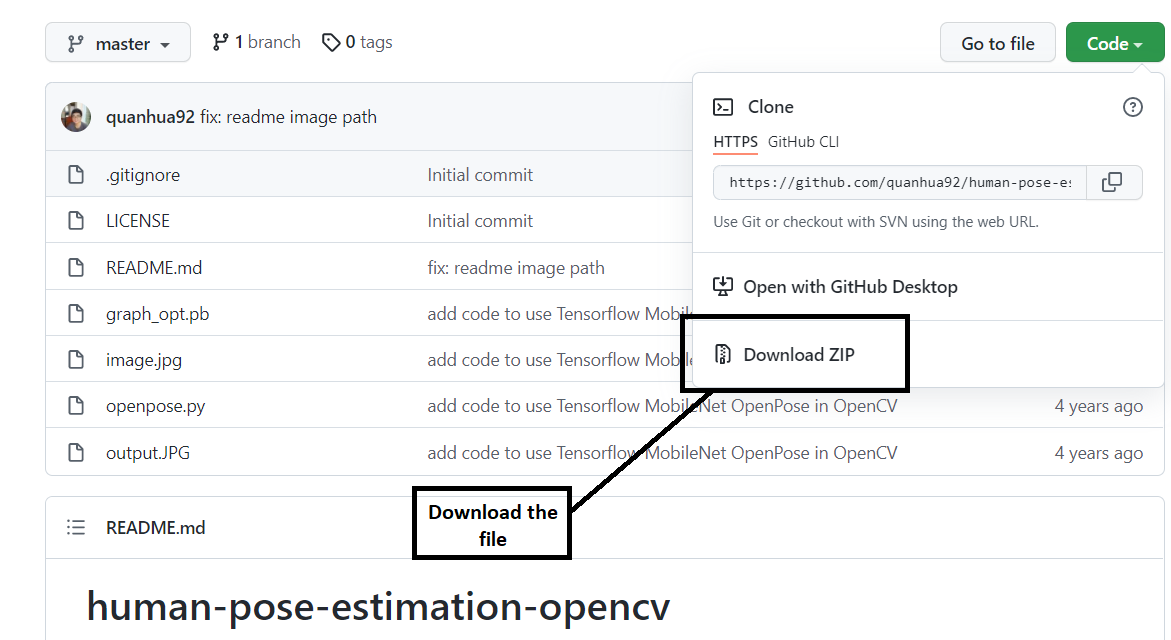

Now we will write the code for human pose estimation. Let’s download the zip file from this link.

First, let’s import all the libraries.

```python
import cv2 as cv
import matplotlib.pyplot as plt
```

Next, we load the pretrained weights, which are stored in the graph_opt.pb file, into the net variable.

```python
net = cv.dnn.readNetFromTensorflow(r"graph_opt.pb")
```

Initialize the input width and height for the network, and the confidence threshold below which a key point is discarded.

```python
inWidth = 368
inHeight = 368
thr = 0.2
```

We will use an 18-key-point model for human pose estimation. The model is trained on the COCO dataset, and its key points are numbered in the format below (entry 18 is the background).

```python
BODY_PARTS = { "Nose": 0, "Neck": 1, "RShoulder": 2, "RElbow": 3, "RWrist": 4,
               "LShoulder": 5, "LElbow": 6, "LWrist": 7, "RHip": 8, "RKnee": 9,
               "RAnkle": 10, "LHip": 11, "LKnee": 12, "LAnkle": 13, "REye": 14,
               "LEye": 15, "REar": 16, "LEar": 17, "Background": 18 }
```

Define the pose pairs: the pairs of key points joined by limbs, which together connect all the key points into a skeleton.

```python
POSE_PAIRS = [ ["Neck", "RShoulder"], ["Neck", "LShoulder"], ["RShoulder", "RElbow"],
               ["RElbow", "RWrist"], ["LShoulder", "LElbow"], ["LElbow", "LWrist"],
               ["Neck", "RHip"], ["RHip", "RKnee"], ["RKnee", "RAnkle"], ["Neck", "LHip"],
               ["LHip", "LKnee"], ["LKnee", "LAnkle"], ["Neck", "Nose"], ["Nose", "REye"],
               ["REye", "REar"], ["Nose", "LEye"], ["LEye", "LEar"] ]
```

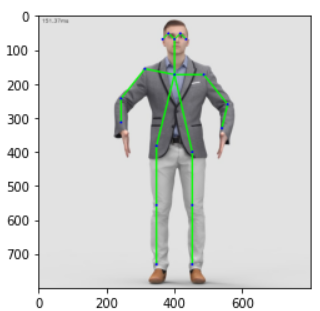

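Let’s load the original picture and see what it looks like. OpenCV reads images in BGR channel order, which is what the network call below expects with swapRB=True, so we convert to RGB only for display.

```python
img = cv.imread("image.jpg")
plt.imshow(cv.cvtColor(img, cv.COLOR_BGR2RGB))
```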

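To generate the confidence map (heatmap) for each key point, the input image is converted into a blob and passed through the network with the forward function. The following line, which also appears inside the pose_estimation function below, performs three steps. It resizes the frame to inWidth × inHeight, subtracts a mean of 127.5 from each channel, and swaps the BGR channels to RGB:

```python
net.setInput(cv.dnn.blobFromImage(frame, 1.0, (inWidth, inHeight), (127.5, 127.5, 127.5), swapRB=True, crop=False))
```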

This is the main function, where the human pose estimation is performed. It keeps only the single highest-confidence location for each body part, so it draws one skeleton per image.

```python
def pose_estimation(frame):
    frameWidth = frame.shape[1]
    frameHeight = frame.shape[0]
    net.setInput(cv.dnn.blobFromImage(frame, 1.0, (inWidth, inHeight), (127.5, 127.5, 127.5), swapRB=True, crop=False))
    out = net.forward()
    # The first 19 output channels are the key-point heatmaps (18 parts + background);
    # the remaining channels are part affinity fields, which this simple version ignores.
    out = out[:, :19, :, :]
    assert(len(BODY_PARTS) == out.shape[1])

    points = []
    for i in range(len(BODY_PARTS)):
        # Slice the heatmap of the corresponding body part.
        heatMap = out[0, i, :, :]
        # The location of the heatmap maximum is taken as the key point.
        _, conf, _, point = cv.minMaxLoc(heatMap)
        # Scale from heatmap coordinates back to the original image size.
        x = (frameWidth * point[0]) / out.shape[3]
        y = (frameHeight * point[1]) / out.shape[2]
        # Add the point only if its confidence is higher than the threshold.
        points.append((int(x), int(y)) if conf > thr else None)

    # Draw a limb for every pose pair whose two key points were both found.
    for pair in POSE_PAIRS:
        partFrom = pair[0]
        partTo = pair[1]
        assert(partFrom in BODY_PARTS)
        assert(partTo in BODY_PARTS)
        idFrom = BODY_PARTS[partFrom]
        idTo = BODY_PARTS[partTo]
        if points[idFrom] and points[idTo]:
            cv.line(frame, points[idFrom], points[idTo], (0, 255, 0), 3)
            cv.ellipse(frame, points[idFrom], (3, 3), 0, 0, 360, (0, 0, 255), cv.FILLED)
            cv.ellipse(frame, points[idTo], (3, 3), 0, 0, 360, (0, 0, 255), cv.FILLED)

    # Write the network's inference time (in milliseconds) onto the image.
    t, _ = net.getPerfProfile()
    freq = cv.getTickFrequency() / 1000
    cv.putText(frame, '%.2fms' % (t / freq), (10, 20), cv.FONT_HERSHEY_SIMPLEX, 0.5, (0, 0, 0))
    return frame
```

Call the main function to generate the key points on the original image.

```python
estimated_image = pose_estimation(img)
```

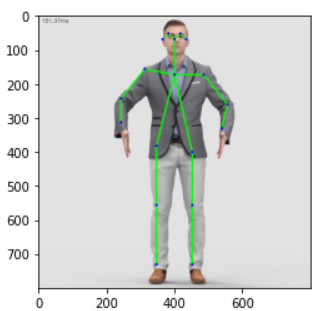

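Let’s see how the picture looks now. Because pose_estimation draws on the frame in place, img itself now contains the skeleton.

```python
plt.imshow(img)
```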

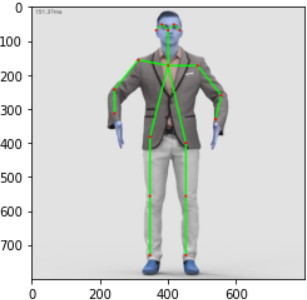

OpenCV stores images in BGR channel order, so to see the key points in the correct RGB colours, convert the result before displaying it.

```python
plt.imshow(cv.cvtColor(estimated_image, cv.COLOR_BGR2RGB))
```

Conclusion

After going through this article, you should understand the concept of pose estimation, why the demand for it is growing so quickly, and where it is already being applied.

We covered the main types of pose estimation (human, rigid, 2D, 3D, head, and hand pose estimation) and popular algorithms for 2D and 3D pose estimation.

Lastly, we performed pose estimation on full-body human images using OpenPose.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.
