This article was published as a part of the Data Science Blogathon.

Introduction

Today, Data Lakes is most commonly used to describe an ecosystem of IT tools and processes (infrastructure as a service, software as a service, etc.) that work together to make processing and storing large volumes of data easy. An ecosystem consists of several key components, including software tools and processes that store and process data; IoT (Internet of Things) connected devices that store, and process data about users and products; storage system providers, data integrator partners (Microsoft Azure Data Lakes Software Gateway), (software tools like Greenplum Realtime Report and hardware platforms like VMware vRealize Automation)

.jpg)

Table of contents

What is a Data Lakes?

It is a data storage and analysis platform that stores and analyzes large amounts of data. These are typically used to store, analyze, and visualize large amounts of data from various sources, such as weblogs, email archives, social media feeds, etc. The purpose of a data lake is to store and analyze large amounts of data in a centralized location..Various technologies, such as databases, NoSQL databases, and cloud storage, can be employed to establish a data lake. This reservoir serves as a repository for all the data produced by an organization’s systems, encompassing everything from sales transactions to evaluations of employee performance. By consolidating all data into a single location, a data lake facilitates analysis and seamless accessibility

While creating a data lake, it is important to remember that the data lake should only be used to store the most important data. This is because the more data is stored in a data lake, the more likely it is to be deleted or lost.

Why Data Lakes?

It is a data storage system that stores all the data generated by an organization. A data lakes is usually a collection of databases, but it can also include other data types, such as images, videos, and other file types. Apart from storing all the data an organization generates, a data lake can also be used to analyze data and predict future trends.

The purpose of a data lakes is to store all the data generated by an organization. This allows the organization to access all data anytime and make decisions based on the data. The benefits of a data lake include quick access to all data and data-driven decision-making. A data lake allows a large amount of storage to store data from data sources.

The following reasons for building a Data Lakes are:

- Company data is stored in various systems, including ERP platforms, CRM applications, marketing applications, etc. It helps to organize the data on these platforms. However, there are times when it is necessary to consolidate all the data into one place to analyze all the attribution and data journey. Using Data Architecture, a single organization can gain a general view of data and generate insights.

- It allows businesses to store data and use it directly from BI tools without having to worry about accessing transactional APIs. Enterprises use enterprise platforms to run daily tasks that provide transactional API access to data. It allows businesses to store and use data directly from BI tools. With ELT, you can quickly load data into the Data Lake and use it with other software tools using a flexible, reliable, and fast method.

- The performance of a particular application may be affected by data sources that do not offer faster query processing. The data aggregation process requires a higher query speed, which depends on the data’s nature and database type. The Architecture enables fast querying by providing a Data Lake infrastructure that supports fast query processing. Data Lake can be scaled up and down quickly, making them easy to query.

- Before moving on to the next stages, having the data in one place is important because loading data from one source makes it easier to work with BI tools. This makes your data cleaner and error-free, reducing the possibility of data duplication.

The Architecture of Data Lakes

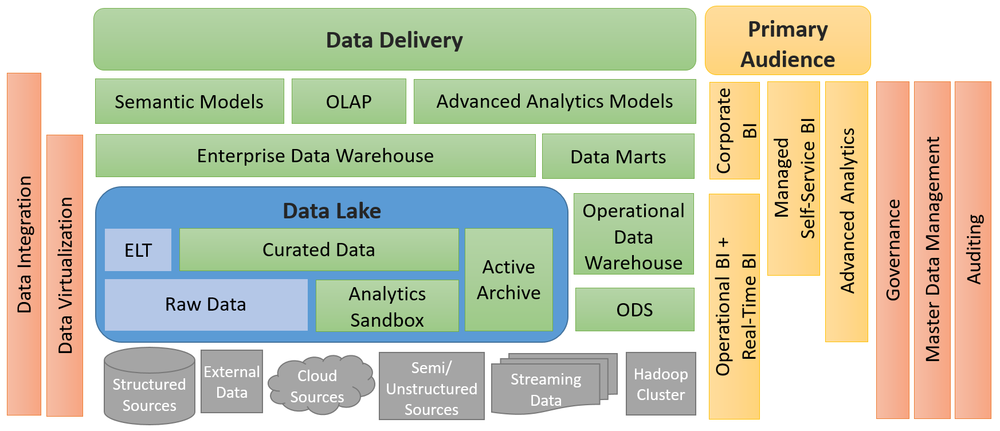

The main components of a data lake architecture are shown in the figure below. All key technologies are part of the ecosystem. All ETL tools transform the data into a structured or unstructured form, the data warehouse stores the data for long-term storage, and the expert solves queries against the data warehouse to get the final result.

Source: learn.microsoft.com

This Architecture is a step-by-step process that guides an organization in designing and maintaining a data lake. Data lake allow organizations to retain much of the work typically invested in creating the data structure. These are some of the primary aspects of a robust and effective Data Lake Architectural model:

- It is important to monitor and oversee data lake operations to measure performance and improve the data lake through monitoring and oversight.

- Security must be a key consideration when approaching the initial phase of architecture. This is different from relational database security.

- Data that is associated with metadata is referred to as metadata. For example, reload intervals, structures, etc.

- One organization can have multiple admin roles. Individuals who hold these roles are called administrators.

- It is important to monitor and control ELT processes to perform raw data transformations before they reach the clean space and application layer.

Key Components of the Architecture

It is an ecosystem where key elements work together to make storing and analyzing large volumes of structured data Easy. There are different types, including hybrid, public, and private. The public data lake is open to anyone to use. The private data lake is only available to those with the necessary security credentials. A hybrid data lake contains data from the organization. It is most likely owned by the marketing team, although it will be accessible to all business units in their corporate copy. An organization should define its data lake structure based on the following concept.

A data lake typically includes five divisions:

Ingest Layer: The ingest layer of the Data Lakes architecture is responsible for capturing raw data and transforming it into data inside the data lake. Raw data is not changed in this layer. The receiving layer is the first and foremost in the data pipeline, where data is captured and processed. Depending on the application’s requirements, a layer can be either front-end or back-end. When data is processed, the information must be transformed into something the application requires. For example, social media platforms must transform raw social media data into marketing content, and wearables must transform data into sensor data so that it can be used to improve the user experience.

Distillation Layer: This layer of the Data Lake architecture is responsible for transforming structured data into an ingestible form at the ingest layer. The process of data transformation is also known as cleansing or cleaning data to meet certain compliance, regulatory, or business needs. The data can be easily processed. It is formatted and made ready for business users to work with. The data transformation process must be able to transform data meaningfully for business users. Data transformation is an iterative process; the first stage is data collection.

Processing Layer: The Data Architect starts by designing the data stores and analytics tools’ architecture. Next, they identify the sections of the information system for complex analytical queries and establish a logical data structure. Query and analysis tools convert structured data into actionable insights. Data management oversees the data, while analysis delves into it. Data is extracted, transformed, and loaded for consumption, checked, and loaded into relevant tables. The audit process verifies and logs changes. Analytical processes use validated data to achieve goals. Finally, data is permanently deleted, and systems are rebooted as required for maintenance.

Insights Layer: Data is stored in a database and made available through various data sources. This query interface retrieves data from the Data Lake. SQL and NoSQL queries are used to retrieve data from the Data Lake. Business users are normally allowed to use the data if they wish. Once the data is retrieved from the Data Lakes, it is the same layer that displays it to the user. When presented in this flat analytical format, it can also be difficult to understand the data. The Visualizations and graphs allow users to understand data more visually and can be useful in conveying complex data trends and facts. Dashboards and reports can provide users with an overview of the state of a company’s data architecture and the efficiency with which queries are being processed. They can also monitor service or application usage and identify bottlenecks.

The Unified Operations Layer: The workflow management layer oversees system performance within the data lake, collecting and storing results. It also includes an audit layer that monitors the data lake’s health and system performance, analyzing data and generating reports for decision-making. Alongside data management, this layer handles system and data profiling, as well as data quality assurance. Sandboxes offer a flexible data analysis environment for scientists to experiment, explore data relationships, and validate predictions. They can model complex phenomena like climate change or disease epidemics, aiding in solving business problems and testing new models.

Challenges of Data Lakes

With so many players in today’s market, it is hard to make informed decisions while building a data lake ecosystem. This will lead to a data lake with unfinished features and limited scalability. Additionally, dependencies and interoperability between parts are challenging with so many different technologies and tools being used in the data lake ecosystem. This can lead to inconsistencies and inaccuracies in the data.

The following are issues that affect the design, development, and use of Data Lakes:

- They are great for data storage. However, they are not so great at data management. As data sets grow, keeping track of data security and privacy becomes difficult. Data governance, a set of best practices for managing the data we collect and how it is used, is often ignored. It is the process of planning, creating, and maintaining a set of practices for managing your data. Data management is important, but it’s not something you can do on the fly. It’s something you have to plan and execute. Data management is a process, not an event.

- New members may not be familiar with tools and services and require an explanation. The company will need to train new members to use the tools and services as the process progresses.

- If you are using a third-party data source and want to integrate it into your Data, you will need to get the data from the source and then convert it into a format that your Data Lakes Engine can handle. This can create a problem if your source does not support receiving large amounts of data simultaneously. If the source doesn’t support importing large amounts of data into your Data Lake, it’s a good idea to consider using tools to help with this task, such as Google Cloud Dataflow.

- There is not a one-time solution. They require ongoing investment in data management systems and personnel. A Data Lake business must also have a process to identify and eliminate duplicate data. Monitoring the Data Lake regularly is also essential to ensure it is not drying up. Finally, a Data Lake enterprise must have a process to scale the platform as needed to ensure that the company’s data is not stored in an underutilized or unsecured solution. They require the business to invest in the process.

Conclusion

A data lakes is a great way for organizations to collect and store structured data. It’s a way to centralize all your data and make it available across your organization. It can be used as a host device for other types of data, a work area for data analysis, or to house non-technical personnel who might assist with data analysis. A data lake is not only a good way to collect structured data but can also be used to store unstructured data such as images, videos, financial data, etc. It is important to remember that data lake are not just about data; they are about an ecosystem of technologies and processes which work together.

- The Architecture enables fast querying by providing a Data Lake infrastructure that supports fast query processing. Data Lake can be scaled up and down quickly, making them easy to query.

- A hybrid data lake contains data from the organization. It is most likely owned by the marketing team, although it will be accessible to all business units in their corporate copy.

- These are great for data storage. However, they are not so great at data management. As data sets grow, keeping track of data security and privacy becomes difficult. Data governance is a set of best practices for managing our data.

- Depending on the application’s requirements, a layer can be either front-end or back-end. When data is processed, the information must be transformed into something the application requires.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.