Image source: https://openaccess.thecvf.com/content_ICCVW_2019/papers/RLQ/Nguyen_State-of-the-Art_in_Action_Unconstrained_Text_Detection_ICCVW_2019_paper.pdf

Introduction

In this article, we study the “Character region Awareness for Text Detection” model by Clova AI research, Naver Corp. The deep learning-based model provides scene text detection or bounding boxes of text in an image. These bounding boxes can then be passed to an Optical character recognition (OCR) engine for enhanced text recognition accuracy.

Background

Optical character recognition is widely used in the Intelligent Document Processing industry. The technology has widespread application in the healthcare, fintech, shipping, logistics and insurance industries. An image containing text is uploaded by a client which is then passed through the OCR pipeline to get digitized output from the image.

A typical OCR pipeline consists of boundary detection, image deskewing, image preprocessing, text detection, text recognition and a post-processing module. Boundary detection is carried out to remove the background from the main image. Image deskewing and dewarping are done to improve text detection and recognition accuracy. Image preprocessing involves various steps like thresholding or binarizing, denoising, edge enhancement etc to improve the quality of the text in the image.

Text detection is one of the most important steps in the optical character recognition (OCR) pipeline as OCR engines work best with word-level bounding boxes as compared against the entire image. Various technologies like MSER, CRAFT, EAST, CTPN etc are available for this step.

Text recognition is usually carried out using OCR engines like Pytesseract, Google Vision API, AWS API and Azure API which are followed by a rule-based or statistically sound post-processing step where OCR errors are rectified. The mentioned OCR engines also provide text detection capabilities. However, they are mainly used as OCR text recognition engines.

Optical Character Recognition (OCR) Technology

OCR technology is typically used to study text in photos, scanned copies of documents, text on billboards, text in landscape etc. The text can be handwritten or machine-printed or typed by a typewriter. The text from the image is converted to a machine format and is typically used for tasks like digitization of financial documents, digitization of insurance documents, digitization of medical documents, enhancement of robotic vision etc. The digitization of text can also be used to detect fraudulent documents.

Traditionally, OCR was implemented by detecting text at the character level and then digitizing them. In one of the approaches, groups of characters can be detected as a blob on an image and their skew can be detected using splines. After deskewing, the blob can be digitized by considering the geometrical features of each character.

However, the training of such models is difficult due to the character level annotations required. These methods can traditionally learn one font at a time. Recent advancements in deep learning have made it possible to implement OCR across a variety of fonts. These advanced models are capable of digitizing multiple textual languages too.

Applications of the Optical Character Recognition (OCR) Technology

- The OCR technology can be used to read passports and ID documents – Various industries like the financial industry and the travel industry need to check passports and ID documents to verify the identity of an individual. OCR technology can enable fast reading and cross-verification of textual data present in these documents.

- The OCR technology can be used to digitize healthcare and business documents – healthcare services can be improved by OCR technology to speed up

data entry for treatment processes and insurance claim processes. Similarly, banks, investors and other lending institutions can benefit from the speed of reading text in images followed by data analytics or NLP, made possible by the OCR technology. - The OCR technology can be used in text to speech systems for the blind – Blind people can benefit from the commercial off-the-shelf OCR and text-to-speech technologies by the upload and digitization of various document images like bank statements, salary slips, bills, invoices etc.

- The OCR technology can be used for fraud detection -Words and fields detected by the OCR technology can be analyzed against

algorithmic rules and personalisation features specific to a document to check for possible fraud. E.g. National ID cards of various countries have rules for generating ID numbers. Similarly, bank statements can be analyzed for fraudulent transactions. - The OCR technology can be used for reading CAPTCHA – Captcha is a security keyword to be entered for authenticating a user

and detecting fraudulent bots. OCR technology can be used to read Captcha and design anti-bot systems for user authentication. - The OCR technology can be used in traffic systems – Traffic cameras can use OCR technology to read vehicle registration numbers. Similarly, self-driving cars can use OCR technology to read signs, billboards and vehicle registration numbers to navigate efficiently.

- The OCR technology can be used in robotic vision applications – Robots can be equipped with OCR technology to help humans with data

entry; students with taking notes; analyzing bank statements, bills and salary slips for humans; analyzing invoices for companies etc.

CRAFT Text Detection Model

Many deep learning models in computer vision have been used for the scene text detection task over the past few years. However, the performance of these models is not up to the mark when the text in the image is skewed or curved. The CRAFT model has been shown to outperform state-of-the-art models on various benchmark datasets like TotalText, CTW-1500 etc. The model performs well on even curved, long and deformed texts in addition to normal text.

Traditional character level bounding box detection techniques are not adequate to address these areas. In addition, the ground truth generation for character-level text processing is a tedious and costly task. The CRAFT text detection model uses a convolutional neural network model to calculate region scores and affinity scores. The region score is used to localize character regions while the affinity score is used to group the characters into text regions.

CRAFT uses a fully convolutional neural network based on the VGG16 model. The inference is done by providing word-level bounding boxes. The CRAFT text detection model works well at various scales, from large to small texts and is shown to be effective on unseen datasets as well. However, on Arabic and Bangla text, which have continuous characters, the text detection performance of the model is not up to the mark.

The time taken by CTPN, EAST and MSER text detection methods is lower compared to the CRAFT text detection engine. However, CRAFT is more accurate and the bounding boxes are more precise when the text is long, curved, rotated or deformed.

Implementation

We study the Pytorch implementation of the CRAFT text detection model provided by Clova AI research. Pytorch is an open-source deep-learning library maintained by Meta which provides fast tensor computations and integration with GPU. Pytorch is used by many famous organizations including Tesla, Uber etc. Pytorch works with tensors, which are multidimensional arrays of numbers.

First, we clone the GitHub repository from the official Pytorch CRAFT text detection implementation provided by Clova AI research.

git clone https://github.com/clovaai/CRAFT-pytorch.git

Next cd into the cloned directory and install the dependencies using

pip install -r requirements.txt

The pre-trained models can be used to get a high text detection accuracy. The company has not provided the full training code due to IP reasons. The pre-trained model can be downloaded from the links provided in the official GitHub repository https://github.com/clovaai/CRAFT-pytorch

We can use the pre-trained model by

python test.py --trained_model=[weightfile] --test_folder=[folder path to test images]The resulting images with text bounding boxes will be stored to ./result. Results will be similar to the bounding boxes presented in the title image.

Code Example

We start by cloning the official CRAFT GitHub repository and installing the required dependencies.

git clone https://github.com/clovaai/CRAFT-pytorch.git pip install -r requirements.txt

Image source: https://printjourney.com/product/id-cards/

Next, we cd into the cloned repository and create a ‘test’ folder with the above image copied into it. Rename the copied image to ‘id_craft_av.jpg’.

cd CRAFT-pytorch/

Next, we download the pre-trained model file “craft_mlt_25k.pth” from the link mentioned and copy it to CRAFT-pytorch/

sudo wget --load-cookies /tmp/cookies.txt "https://docs.google.com/uc?export=download&confirm=$(wget --quiet --save-cookies /tmp/cookies.txt --keep-session-cookies --no-check-certificate 'https://docs.google.com/uc?export=download&id=1Jk4eGD7crsqCCg9C9VjCLkMN3ze8kutZ' -O- | sed -rn 's/.*confirm=([0-9A-Za-z_]+).*/1n/p')&id=1Jk4eGD7crsqCCg9C9VjCLkMN3ze8kutZ" -O craft_mlt_25k.pth && rm -rf /tmp/cookies.txt

The above command is used to download large files from Google drive using their file id. Now, we can run our inference by using the below command:

python3 test.py --trained_model=craft_mlt_25k.pth --test_folder=test/

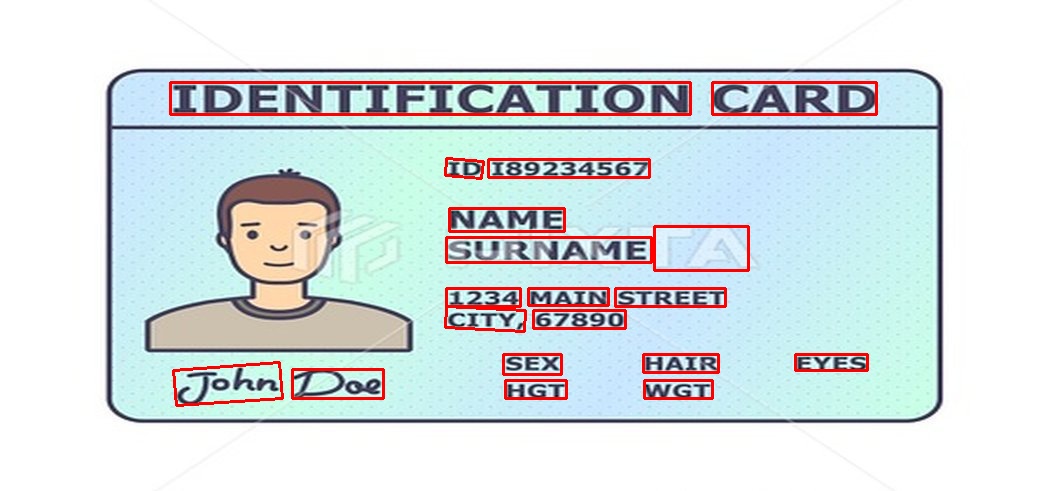

The results are stored in the result folder. Three files – ‘res_id_craft_av.jpg’, ‘res_id_craft_av.txt’ and ‘res_id_craft_av_mask.jpg’ are added to the result folder by the inference code. ‘res_id_craft_av.txt’ contains the coordinates of the detected words. The coordinates of the four corners of the detected words, in the format – x1,y1,x2,y2,x3,y3,x4,y4 – are given in clockwise order in the ‘res_id_craft_av.txt’ file.

‘res_id_craft_av.jpg’ gives the visualization of the bounding boxes for the detected words. The bounding box visualization generated by the model is shown below:

Conclusion

This brings us to the end of the article. In this article, we started by providing an introduction to the CRAFT text detection model developed by Clova AI research. Then we studied the various steps involved in the optical character recognition pipeline like text detection, text recognition and image preprocessing.

We proceeded to the theory behind Optical character recognition and how deep learning has produced advanced models and improvised upon the limitations of traditional models. Various applications of the OCR technology across industry segments were also provided.

We explored the background of the CRAFT text detection model in detail and we also implemented the CRAFT text detection model using a pre-trained model through Pytorch.

Please write comments and reviews on the article as applicable. Help and feedback are always welcome.

My LinkedIn profile can be found at https://www.linkedin.com/in/arvind-yetirajan-narayanan-iyengar-2b0632167/

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.