Introduction

This is a comprehensive guide to Neural Networks, a cornerstone of modern Data Science. We’ll start by defining what a Neural Network is and then delve into its structure, including the Input, Hidden, and Output layers that form its architecture. We’ll also discuss the essential ingredients of a Neural Network, such as the Objective Function (Loss Function or Reward Function) and the Optimization Algorithm. To show how versatile Neural Networks are, we’ll explore various types and their real-life applications, including Adaptive Battery and Live Caption in Android OS, Face Unlock, Google Camera Portrait Mode, and Google Assistant. So, whether you’re a beginner or an experienced data scientist, this blog will strengthen your understanding of Neural Networks and where they fit in the Data Science Universe.

Neural Network Definition

There are several definitions of neural networks. A few of them include the following:

A neural network is a series of algorithms that endeavors to recognize underlying relationships in a set of data through a process that mimics the way the human brain operates – investopedia.com

A neural network is a network or circuit of neurons, or in a modern sense, an artificial neural network, composed of artificial neurons or nodes – Wikipedia

Neural networks or also known as Artificial Neural Networks (ANN) are networks that utilize complex mathematical models for information processing. They are based on the model of the functioning of neurons and synapses in the brain of human beings. Similar to the human brain, a neural network connects simple nodes, also known as neurons or units. And a collection of such nodes forms a network of nodes, hence the name “neural network.” – hackr.io

Well, that’s a lot to digest.

For anyone just starting out with neural networks, let’s create our own simple definition. Let’s split the term into its two words.

What is a Neural Network?

Network means an interconnection of some sort between some things. What those things are, we will see further down the road.

Neural refers to neurons. What are neurons? Let me explain briefly.

Put simply, neurons are the fundamental building blocks of the human brain. All your life experiences, feelings, and emotions, basically your entire personality, are defined by those neurons. Every decision you make in your daily life, no matter how small or big, is driven by those neurons.

So a neural network is a network of neurons. That’s it. You might ask, “Why are we discussing biology in a post about neural networks?” Well, in the data science realm, neural networks are inspired by the structure of the human brain, hence the name.

Another important thing to consider is that an individual neuron cannot do much on its own. It is in the collection of neurons where the real magic happens.

Neural Network in Data Science Universe

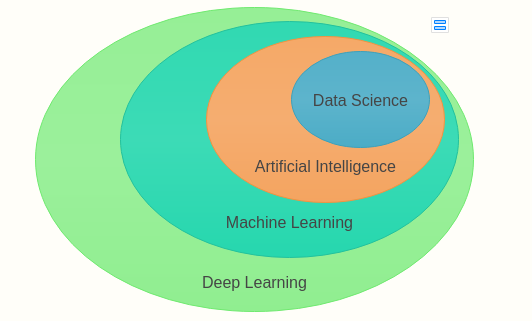

You might ask, “Where do neural networks stand in the vast Data Science Universe?” Let’s find out with the help of a diagram.

What do you see in this diagram? Under Data Science, we have Artificial Intelligence. Think of it as an umbrella. Under this umbrella, we have Machine Learning (a sub-field of AI). Under that, we have yet another umbrella named “Deep Learning”, and this is where neural networks live. (A dream inside another dream 🙂 classic Inception stuff.)

Basically, deep learning is the sub-field of machine learning that deals with the study of neural networks. Why is the study of neural networks called “Deep Learning”? Well, read on to find out 🙂

Structure of Neural Networks

Since we have already said that neural networks are inspired by the human brain, let’s first understand the structure of the human brain.

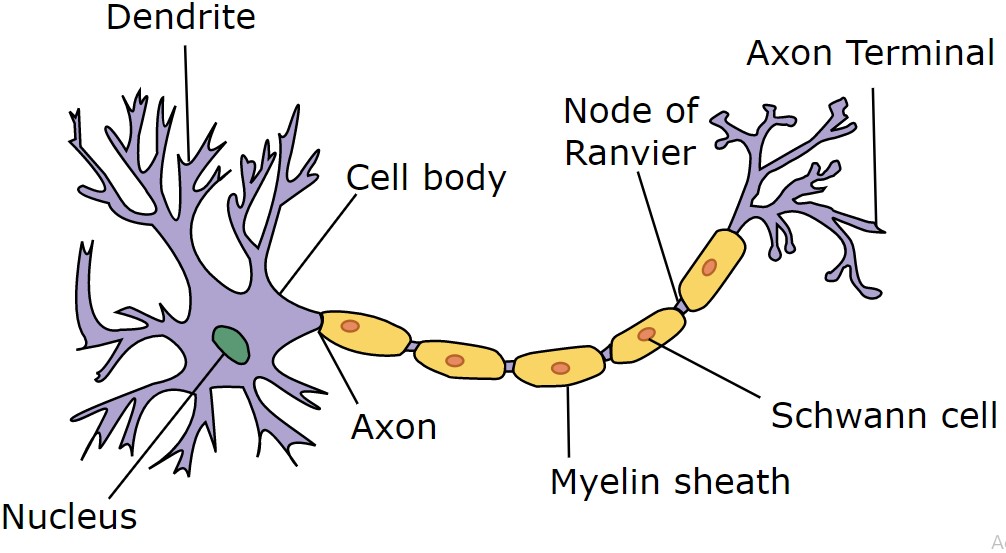

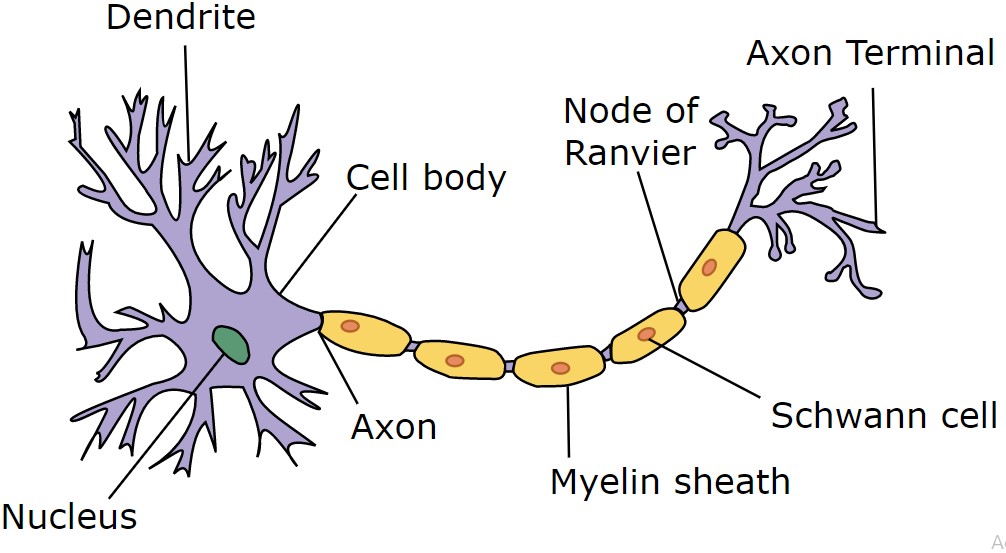

Each neuron is composed of three parts:

1. Axon

2. Dendrites

3. Body

As I explained earlier, neurons work in association with each other. Each neuron receives signals from other neurons through its dendrites. The axon is responsible for transmitting the output to another neuron. The dendrites and the axon are connected by the cell body (a simplified term). Now let’s see how this relates to the neural networks used in the data science realm.

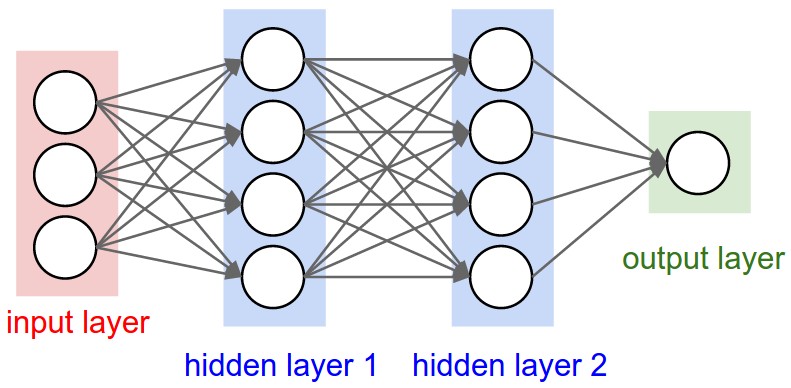

Neural Network Layers

1. Input Layer

It functions similarly to dendrites. The purpose of this layer is to accept input, much as dendrites accept signals from other neurons.

2. Hidden Layer

These are the layers that perform the actual operations.

3. Output Layer

It functions similarly to an axon. The purpose of this layer is to transmit the generated output, much as an axon passes a signal on to other neurons.

One thing to note here is that the above diagram has 2 hidden layers, but there is no limit on how many hidden layers a network can have. It can be as few as 1 or as many as 100, or maybe even 1000!

Now it’s time to answer our question: “Why is the study of neural networks called Deep Learning?” Well, the answer is right in the figure itself 🙂

The presence of multiple hidden layers in the neural network gives us the name “Deep”. Also, after creating the neural network, we have to train it in order to solve the problem, hence the name “Learning”. Together these two constitute “Deep Learning”.
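To make the layer structure concrete, here is a minimal sketch of data flowing through an input layer, two hidden layers, and an output layer. The layer sizes, the weights, the helper names (`dense_layer`, `forward`), and the sigmoid activation are all assumptions chosen for illustration; none of them are taken from the diagram.

```python
import math

def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def dense_layer(inputs, weights, biases):
    """Compute one layer: each neuron takes a weighted sum of all inputs,
    adds its bias, and applies the activation function."""
    outputs = []
    for neuron_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(neuron_weights, inputs)) + bias
        outputs.append(sigmoid(total))
    return outputs

def forward(inputs, layers):
    """Pass the inputs through every layer in order."""
    activations = inputs
    for weights, biases in layers:
        activations = dense_layer(activations, weights, biases)
    return activations

# Made-up weights: 2 inputs -> 3 hidden -> 2 hidden -> 1 output.
layers = [
    ([[0.5, -0.2], [0.1, 0.4], [-0.3, 0.8]], [0.0, 0.1, -0.1]),  # hidden layer 1
    ([[0.2, -0.5, 0.3], [0.7, 0.1, -0.4]], [0.05, -0.05]),       # hidden layer 2
    ([[0.6, -0.9]], [0.2]),                                      # output layer
]

prediction = forward([1.0, 2.0], layers)
print(prediction)
```

Making the network "deeper" in this sketch only means adding more entries to the `layers` list. The weights here are fixed by hand; in a real network they are what training adjusts.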

Ingredients of Neural Network

As Deep Learning is a sub-field of Machine Learning, the core ingredients will be the same. These ingredients include the following:

- Data: The information needed by the neural network

- Model: The neural network itself

- Objective Function: Computes how close or far our model’s output is from the expected one

- Optimization Algorithm: Improves the performance of the model through a loop of trial and error
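The four ingredients can be seen working together in a minimal sketch. Everything here is an assumption chosen for illustration: the toy data follows y = 2x, the "model" is a single weight rather than a full neural network, the objective is mean squared error, and the optimizer is plain gradient descent with a hand-picked learning rate.

```python
# Data: inputs paired with expected outputs (toy data following y = 2x).
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]

# Model: a single adjustable weight standing in for a whole network.
def model(x, weight):
    return weight * x

# Objective function: mean squared error between outputs and expected values.
def objective(weight):
    return sum((model(x, weight) - y) ** 2 for x, y in data) / len(data)

# Optimization algorithm: repeatedly nudge the weight against the slope
# of the objective so that the error shrinks a little on every step.
def train(weight=0.0, learning_rate=0.01, steps=200):
    for _ in range(steps):
        gradient = sum(2 * (model(x, weight) - y) * x for x, y in data) / len(data)
        weight -= learning_rate * gradient
    return weight

learned_weight = train()
print(f"learned weight: {learned_weight:.3f}, loss: {objective(learned_weight):.6f}")
```

Starting from a weight of 0, the loop should settle close to 2, the value that makes the model reproduce the toy data.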

The first two ingredients are quite self-explanatory. Let’s get familiar with objective functions.

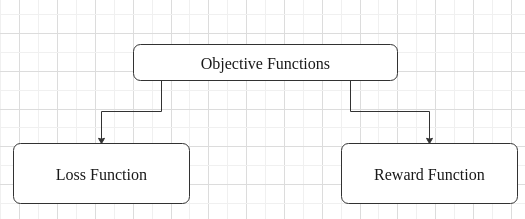

Objective Function

The purpose of the objective function is to calculate how close the model’s output is to the expected output. In short, it measures how well our neural network is performing. In this regard, there are basically two types of objective functions.

Loss Function

Let me explain the loss function with the help of an example. Imagine you have a Roomba (a robot vacuum that cleans your house). For those who do not know what a Roomba is, well, this is a Roomba.

Let’s call our Roomba “Mr. Robot”. Mr. Robot’s job is to clean the floor when it senses any dirt. Since Mr. Robot is battery-operated, it consumes battery power each time it runs. So what is the ideal condition in which Mr. Robot should operate? It should consume the minimum possible energy while still doing its job efficiently. That is the idea behind the loss function.

The lower the value of the loss function, the better our neural network is performing.
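As a minimal sketch of a loss function, the snippet below uses mean squared error, one common choice. The article does not name a specific loss, so this choice and the sample numbers are assumptions. Predictions closer to the expected values give a smaller loss.

```python
def mean_squared_error(predicted, expected):
    """Average of the squared differences between predictions and targets."""
    if len(predicted) != len(expected):
        raise ValueError("predicted and expected must have the same length")
    return sum((p - e) ** 2 for p, e in zip(predicted, expected)) / len(expected)

expected = [1.0, 0.0, 1.0]      # made-up target values
good_guess = [0.9, 0.1, 0.8]    # close to the targets
bad_guess = [0.2, 0.9, 0.3]     # far from the targets

good_loss = mean_squared_error(good_guess, expected)
bad_loss = mean_squared_error(bad_guess, expected)
print(f"good guess loss: {good_loss:.4f}, bad guess loss: {bad_loss:.4f}")
```

A perfect prediction would give a loss of exactly zero, which is the floor this particular loss can reach.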

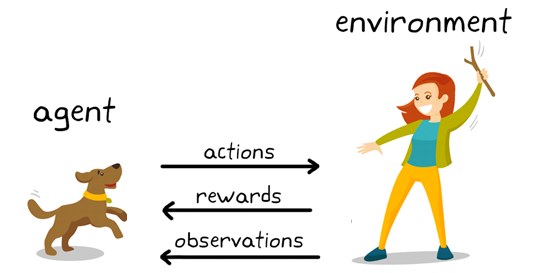

Reward Function

Let me explain this with the help of another example.

Let’s say you are teaching your dog to fetch a stick. Every time your dog fetches the stick, you reward it with, let’s say, a bone. That is the concept behind the reward function.

The higher the value of the reward function, the better our neural network is performing.

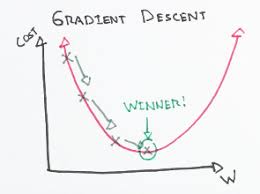

Optimization Algorithm

Any machine learning algorithm is incomplete without an optimization algorithm. The main goal of an optimization algorithm is to put our ML model (in this case, a neural network) through a series of trial-and-error steps that eventually result in a more accurate model.

In the context of neural networks, we use a specific optimization algorithm called gradient descent. Let’s understand this with the help of an example.

Let’s imagine that we are climbing down a hill. With each step, we can feel that we are getting closer to a flat surface. Once we reach the flat surface, we no longer feel that strain on our feet. Gradient descent works on a similar idea.

In gradient descent, there are a few terms that we need to understand. In our previous example, we climb down the hill until we reach a flat surface. In gradient descent, we call this point the global minimum. What does the global minimum mean? If you are using a loss function, it is the point at which the loss is at its lowest, and it is the point we want to reach.
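The hill analogy can be sketched in a few lines. This is an illustration under stated assumptions, not a production optimizer: the "hill" is the made-up function (x - 3)², whose global minimum sits at x = 3, and the starting point, learning rate, and step count are hand-picked.

```python
def loss(x):
    """A bowl-shaped hill whose lowest point (global minimum) is at x = 3."""
    return (x - 3.0) ** 2

def loss_gradient(x):
    """Slope of the hill at x; positive means the ground rises to the right."""
    return 2.0 * (x - 3.0)

def gradient_descent(start, learning_rate=0.1, steps=100):
    """Repeatedly take a small step downhill, against the slope."""
    x = start
    for _ in range(steps):
        x -= learning_rate * loss_gradient(x)
    return x

minimum = gradient_descent(start=10.0)
print(f"reached x = {minimum:.4f} with loss {loss(minimum):.6f}")
```

Near the bottom the slope approaches zero, so the steps shrink on their own. That is the "flat surface" from the analogy.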

Alternatively, if you are using a reward function, the goal is to reach the point where the reward is at its maximum (that is, the global maximum). In that case, we use something called gradient ascent. Think of it as the opposite of gradient descent: now we need to climb up the hill in order to reach its peak 🙂
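Gradient ascent is the same loop with the sign of the step flipped. In this small sketch the reward function, 5 - (x - 2)², is made up for illustration, with its peak at x = 2; the starting point and learning rate are likewise assumptions.

```python
def reward(x):
    """A single hill whose peak (global maximum) is at x = 2."""
    return 5.0 - (x - 2.0) ** 2

def reward_gradient(x):
    """Slope of the hill at x; positive means the ground rises to the right."""
    return -2.0 * (x - 2.0)

def gradient_ascent(start, learning_rate=0.1, steps=100):
    """Repeatedly take a small step uphill, along the slope."""
    x = start
    for _ in range(steps):
        x += learning_rate * reward_gradient(x)
    return x

peak = gradient_ascent(start=-4.0)
print(f"reached x = {peak:.4f} with reward {reward(peak):.6f}")
```

The only difference from a descent loop is `+=` in place of `-=`: the step follows the slope instead of opposing it.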

Types of Neural Networks

There are many different types of neural networks. A few of the popular ones include the following:

| Type of Neural Network | Typical Use |
| --- | --- |
| Convolutional Neural Network (CNN) | Used in image recognition and classification |
| Artificial Neural Network (ANN) | Used in image compression |
| Restricted Boltzmann Machine (RBM) | Used for a variety of tasks including classification, regression, and dimensionality reduction |
| Generative Adversarial Network (GAN) | Used for fake news detection, face detection, etc. |
| Recurrent Neural Network (RNN) | Used in speech recognition |
| Self-Organizing Map (SOM) | Used for topology analysis |

Applications of Neural Network in Real Life

In this part, let’s get familiar with some applications of neural networks.

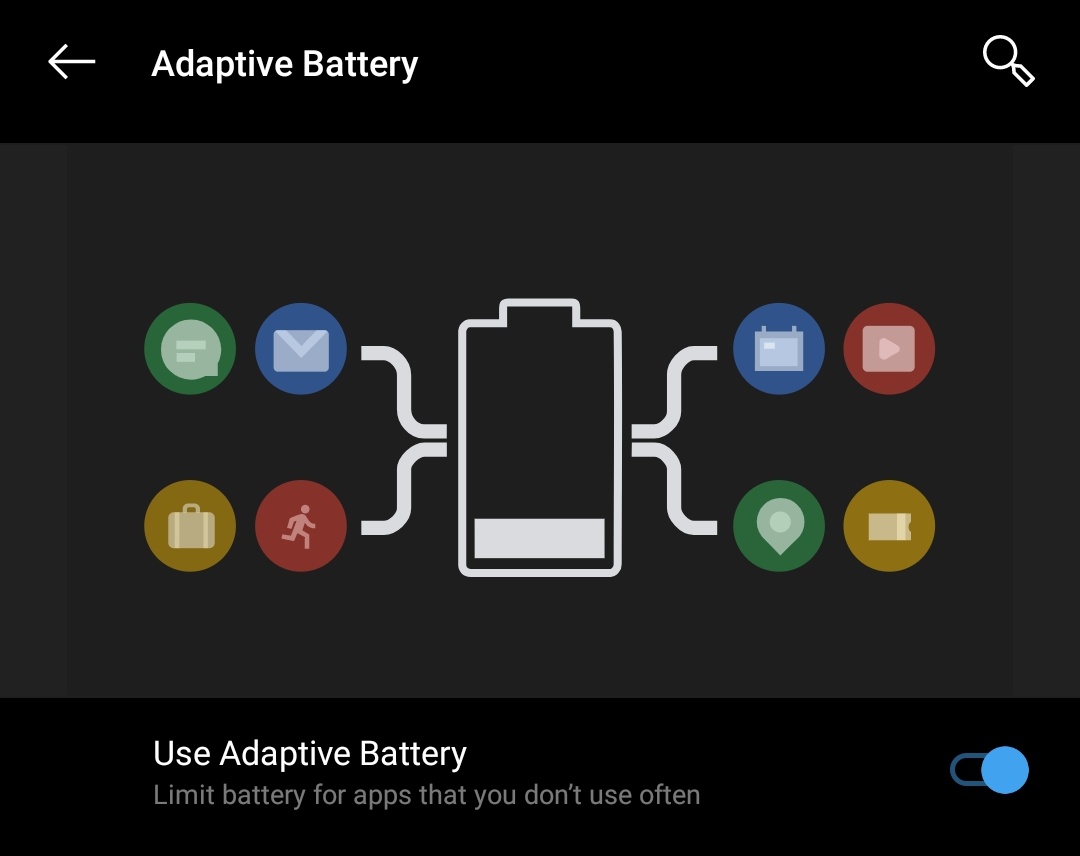

1. Adaptive Battery in Android OS

If you have an Android phone running Android 9.0 or above, you will find an option called Adaptive Battery in the battery section of the settings menu. What this feature does is pretty remarkable. It uses Convolutional Neural Networks (CNN) to identify which apps on your phone are consuming more power and restricts those apps accordingly.

2. Live Caption in Android OS

As part of Android 10.0, Google introduced a feature called Live Caption. When enabled, this feature uses a combination of CNN and RNN to recognize the speech in whatever media is playing and generate captions for it in real time.

3. Face Unlock

Today, almost every newly launched Android phone uses some sort of face unlock to speed up the unlocking process. Here, CNNs are used to help identify your face. That’s why you may notice that the more you use face unlock, the better it becomes over time.

4. Google Camera Portrait Mode

Do you have a Google Pixel? Wondering why it takes industry-leading bokeh shots? Well, you can thank the integration of CNNs into Google Camera for that 🙂

5. Google Assistant

Ever wondered how Google Assistant wakes up when you say “Ok Google”? (Don’t say it too loudly. You might invoke someone’s Google Assistant 🙂) It uses an RNN for this wake-word detection.

Well, this is it. This is all you need to know about neural networks as a starter.

Conclusion

In conclusion, we’ve toured the world of Neural Networks, understanding them as networks inspired by the structure of the human brain. We explored their architecture, comprising input, hidden, and output layers, akin to the workings of neurons in our brains. We also delved into the core ingredients of Neural Networks: data, the model itself, objective functions, and optimization algorithms.

Furthermore, we clarified the terminology surrounding Neural Networks, breaking down concepts like objective functions, loss and reward functions, and optimization algorithms in simple terms. We also examined various types of Neural Networks, from Convolutional Neural Networks (CNN) for image recognition to Recurrent Neural Networks (RNN) for speech recognition, and explored real-life applications like Adaptive Battery and Live Caption in Android OS, Face Unlock, Google Camera Portrait Mode, and Google Assistant.

With this comprehensive guide, whether you’re a novice or an experienced data scientist, you now have a deeper understanding and practical insights into the universe of Neural Networks, paving the way for further exploration and application in the realm of Data Science.